Verification and Validation in Computational Biomechanics: A Guide for Biomedical Researchers

Abstract

This article provides a comprehensive guide to Verification and Validation (V&V) in computational biomechanics, tailored for researchers, scientists, and drug development professionals. It defines core concepts and clarifies the critical difference between verification (solving equations correctly) and validation (solving the correct equations). The guide explores essential methodologies, from code verification to experimental validation, addresses common challenges like model parameter uncertainty and high-performance computing (HPC) issues, and examines formal regulatory and comparative assessment frameworks. The goal is to equip professionals with the knowledge to build credible, reliable, and impactful models for biomedical research and development.

The Bedrock of Credibility: Defining Verification vs. Validation in Biomechanics

Within computational biomechanics research, the credibility of simulations predicting physiological phenomena—from bone remodeling to drug delivery—hinges on rigorous Verification and Validation (V&V). These are distinct, hierarchical processes. Verification is the process of ensuring that the computational model is solved correctly (i.e., "solving the equations right"). It addresses numerical errors and code correctness. Validation assesses the model's ability to represent real-world biology by comparing predictions with experimental data (i.e., "solving the right equations").

Foundational Concepts & Distinctions

Verification is a mathematics and software engineering problem; Validation is a physics and biology problem. The table below summarizes the core distinctions.

Table 1: Core Distinctions Between Verification and Validation

| Aspect | Verification | Validation |

|---|---|---|

| Primary Question | Are we solving the equations correctly? | Are we solving the correct equations? |

| Objective | Ensure computational model is free of coding errors and numerical inaccuracies. | Ensure the computational model accurately represents reality. |

| Domain of Check | Mathematics / Computer Code. | Physics / Physiology / Biology. |

| Error Types | Code errors, round-off, iterative convergence, discretization (spatial & temporal). | Modeling assumptions, incomplete physics, material property errors. |

| Key Methods | Code verification (e.g., method of manufactured solutions), grid convergence study. | Comparison with benchmark experimental data, sensitivity analysis. |

| Ultimate Goal | Numerical Accuracy. | Predictive Accuracy. |

Detailed Methodologies

Verification Protocols

A. Code Verification via Method of Manufactured Solutions (MMS)

- Objective: Isolate and confirm the absence of coding errors.

- Protocol:

- Choose an arbitrary, sufficiently smooth analytical solution for the primary variables (e.g., displacement, pressure).

- Substitute this "manufactured" solution into the governing partial differential equations (PDEs). This will generate a residual source term.

- Add this source term to the original code's PDE implementation.

- Run the simulation with initial and boundary conditions taken from the manufactured solution.

- Compute the error between the numerical solution and the known manufactured solution.

- A correct code will show the expected order of accuracy (e.g., second-order convergence) as the mesh is refined.
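The protocol above can be sketched on a deliberately small problem. The example below is a minimal illustration and is not taken from any particular solver: it assumes the 1D Poisson equation -u'' = f on (0, 1), a second-order central-difference discretization, and the manufactured solution u_m(x) = sin(πx) + x, all chosen here for illustration. The function names are hypothetical.

```python
import numpy as np

def solve_poisson(n, source, u_left, u_right):
    """Solve -u'' = source on (0, 1) with Dirichlet BCs, using n interior nodes."""
    h = 1.0 / (n + 1)
    x = np.linspace(h, 1.0 - h, n)  # interior nodes only
    # Second-order central-difference operator for -d2/dx2
    A = (2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)) / h**2
    b = source(x)
    b[0] += u_left / h**2    # boundary values enter the right-hand side
    b[-1] += u_right / h**2
    return x, np.linalg.solve(A, b)

def manufactured(x):
    """Manufactured solution (an assumption made for this sketch)."""
    return np.sin(np.pi * x) + x

def mms_source(x):
    """Source term obtained by substituting the manufactured solution into -u''."""
    return np.pi**2 * np.sin(np.pi * x)

def observed_orders(node_counts):
    errors, sizes = [], []
    for n in node_counts:
        x, u = solve_poisson(n, mms_source, manufactured(0.0), manufactured(1.0))
        errors.append(np.sqrt(np.mean((u - manufactured(x)) ** 2)))  # discrete L2 norm
        sizes.append(1.0 / (n + 1))
    errors, sizes = np.array(errors), np.array(sizes)
    # Observed order between each pair of successive meshes
    orders = np.log(errors[:-1] / errors[1:]) / np.log(sizes[:-1] / sizes[1:])
    return errors, orders

errors, orders = observed_orders([15, 31, 63, 127])
print("L2 errors:", errors)
print("observed orders:", orders)
```

For this second-order scheme the observed orders should approach 2 as the mesh is refined. An order that stalls well below the theoretical value usually points to an error in the discretization or in the boundary-condition handling.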

B. Solution Verification: Grid (Mesh) Convergence Study

- Objective: Quantify numerical errors due to discretization (spatial and temporal).

- Protocol (Spatial):

- Develop a simulation model of a well-defined benchmark problem.

- Generate a sequence of at least three systematically refined meshes (coarse, medium, fine).

- Run the simulation on each mesh, ensuring iterative solvers are tightly converged.

- Calculate a key Quantity of Interest (QoI) (e.g., peak stress, flow rate) from each solution.

- Apply Richardson Extrapolation to estimate the discretization error and the order of convergence.
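For reference, the last step can be written out explicitly. The expressions below are the standard three-mesh Richardson formulas, under the assumptions of a constant refinement ratio r, monotonic convergence, and solutions in the asymptotic range. The labels f_1 (fine), f_2 (medium), and f_3 (coarse) are notation introduced here.

```latex
p = \frac{\ln\!\left( \dfrac{f_3 - f_2}{f_2 - f_1} \right)}{\ln r},
\qquad
f_{exact} \approx f_1 + \frac{f_1 - f_2}{r^{p} - 1}
```

The estimated relative discretization error on the fine mesh is then |f_1 - f_exact| / |f_exact|.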

Table 2: Sample Grid Convergence Study Data (Bone Implant Micromotion)

| Mesh | Number of Elements | Max Micromotion (µm) | Relative Error (%) | Observed Order |

|---|---|---|---|---|

| Coarse | 45,000 | 52.1 | 12.5 | -- |

| Medium | 125,000 | 47.8 | 3.2 | 1.9 |

| Fine | 350,000 | 46.4 | 0.2 | 2.1 |

| Extrapolated | ∞ | 46.3 | 0.0 | -- |

Validation Protocols

A. Hierarchical Validation Framework

- Objective: Systematically assess model predictive capability across increasing complexity.

- Protocol:

- Unit Problem Validation: Validate individual model components (e.g., constitutive law for arterial tissue) against simple, controlled experiments (uniaxial tension).

- Benchmark Validation: Validate integrated model against canonical physical experiments with well-characterized boundary conditions and outcomes (e.g., flow in a curved pipe).

- Functional Validation: Validate the model's ability to predict specific functional outcomes of interest in a realistic, application-specific context (e.g., predicting stent deployment geometry in a patient-specific artery).

B. Quantitative Validation Metrics

- Objective: Objectively measure agreement between simulation and experiment.

- Protocol:

- Acquire high-fidelity experimental data for a validation test case.

- Ensure simulation inputs (geometry, loads, material properties) match the experimental setup as closely as possible.

- Run the simulation and extract corresponding QoIs.

- Compute quantitative metrics, such as:

- Correlation Coefficient (R): Strength of linear relationship.

- Normalized Root Mean Square Error (NRMSE): Magnitude of average error.

- Bland-Altman Analysis: Assessment of bias and limits of agreement.
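The three metrics listed above can be computed in a few lines. The sketch below uses NumPy and hypothetical paired values; the function name and the choice to normalize RMSE by the experimental data range are assumptions made for illustration (other normalizations, such as the mean, are also in use).

```python
import numpy as np

def validation_metrics(sim, exp):
    """Pearson R, range-normalized RMSE, and Bland-Altman bias with limits of agreement."""
    sim = np.asarray(sim, dtype=float)
    exp = np.asarray(exp, dtype=float)
    r = np.corrcoef(sim, exp)[0, 1]
    rmse = np.sqrt(np.mean((sim - exp) ** 2))
    nrmse = rmse / (exp.max() - exp.min())
    diff = sim - exp
    bias = diff.mean()                      # mean difference (simulation minus experiment)
    sd = diff.std(ddof=1)                   # sample standard deviation of the differences
    limits = (bias - 1.96 * sd, bias + 1.96 * sd)
    return {"R": r, "NRMSE": nrmse, "bias": bias, "limits_of_agreement": limits}

# Hypothetical paired measurements (e.g., stress in kPa at matched locations)
exp_values = [10.0, 20.0, 30.0, 40.0, 50.0]
sim_values = [11.0, 19.0, 32.0, 41.0, 52.0]
metrics = validation_metrics(sim_values, exp_values)
print(metrics)
```

Reporting the bias and limits of agreement alongside R is useful because a high correlation can coexist with a systematic offset between simulation and experiment.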

Table 3: Sample Validation Metrics for Arterial Wall Stress Prediction

| Metric | Value | Acceptability Threshold |

|---|---|---|

| Correlation Coefficient (R) | 0.92 | R > 0.85 |

| Normalized RMSE | 8.7% | NRMSE < 15% |

| Mean Bias (Bland-Altman) | +1.2 kPa | Within ±5% of range |

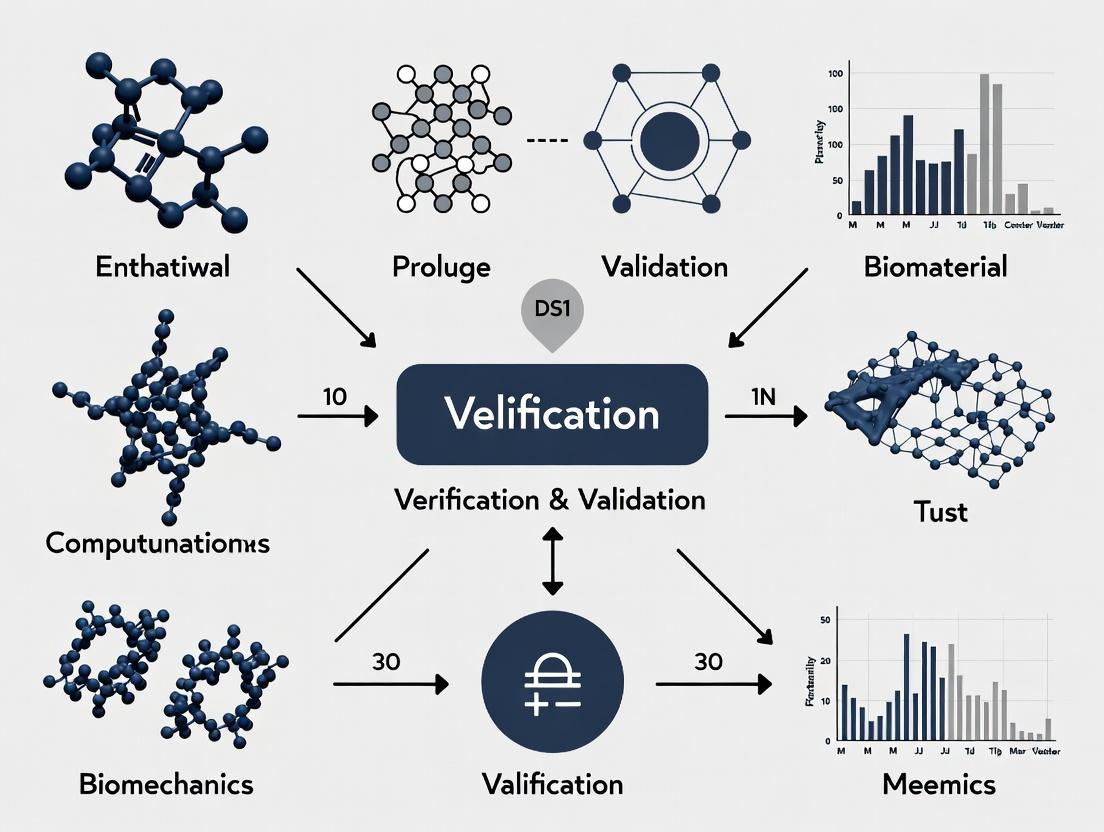

Visualizing the V&V Process in Computational Biomechanics

Diagram: V&V Process in Computational Biomechanics

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Research Reagent Solutions for Biomechanical V&V Experiments

| Item | Function in V&V Context | Example Application |

|---|---|---|

| Polyacrylamide (PA) Phantoms | Tissue-mimicking materials with tunable, homogeneous mechanical properties for controlled validation experiments. | Validating soft tissue (e.g., liver, tumor) deformation models under load. |

| Bioresorbable Scaffolds | Standardized test geometries for validating mechanobiological models of bone ingrowth and scaffold degradation. | Verification of corrosion/damage algorithms; validation of predicted tissue regeneration. |

| Fluorescent Microspheres | Tracers for quantifying velocity fields in Particle Image Velocimetry (PIV), providing validation data for CFD models. | Validating blood flow simulations in vitro (e.g., aneurysm models). |

| Biaxial Testing System | Provides essential multiaxial mechanical property data for constitutive model development and validation. | Generating stress-strain data for hyperelastic/viscoelastic arterial tissue models. |

| Micro-CT Scanner | Provides high-resolution 3D geometry and density data for creating accurate computational meshes and validating structural predictions. | Creating patient-specific bone geometry; validating predicted bone fracture locations. |

| Digital Image Correlation (DIC) System | Provides full-field displacement and strain measurements on material surfaces during mechanical testing. | Gold-standard experimental data for validating finite element strain predictions. |

The Critical Role of V&V in Biomedical Research and Regulatory Pathways

Verification and Validation (V&V) form the cornerstone of credible scientific discovery and regulatory acceptance in computational biomechanics. Verification asks, "Are we building the model right?" and ensures that the computational model solves its equations correctly. Validation asks, "Are we building the right model?" and determines whether the model accurately represents physiological reality. In biomedical research, especially for drug and device development, rigorous V&V is the critical bridge between innovative computational science and its application in regulated pathways to improve human health.

Foundational Principles and Regulatory Imperatives

V&V provides the framework for assessing credibility of computational models. Regulatory bodies like the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA) increasingly accept modeling and simulation as part of submission dossiers, contingent on rigorous V&V. The FDA’s Medical Device Development Tool (MDDT) qualification program and the ASME V&V 40 standard provide formal frameworks for assessing model credibility within a specific Context of Use (COU).

- Verification: Ensures the computational implementation is error-free. This involves code verification (debugging, ensuring algorithms work) and calculation verification (assessing numerical accuracy, grid convergence).

- Validation: Quantifies model accuracy by comparing computational predictions with experimental or clinical data. A key outcome is the validation domain: the range of conditions under which the model is deemed sufficiently accurate for its COU.

Quantitative Data on V&V Impact

Structured V&V is reported to improve research efficiency and regulatory outcomes. The figures in the table below are hypothetical illustrations of those trends, not measured results.

Table 1: Impact of V&V on Regulatory Submissions (Hypothetical Data Based on Reported Trends)

| Metric | Without Formal V&V | With Rigorous V&V Framework | Data Source / Note |

|---|---|---|---|

| FDA Pre-Submission Cycles | 3.5 (average) | 2.1 (average) | Based on FDA Case Studies for Q-Submissions |

| Time to Address Agency Questions | 120-180 days | 45-60 days | Industry survey on Computational Modeling |

| Model Credibility Acceptance Rate | ~35% | ~85% | Analysis of MDDT Submissions |

| Critical Software Defects Found Post-Submission | 15-20% | <5% | Internal audits of regulatory filings |

Table 2: Common Validation Metrics in Computational Biomechanics

| Metric | Formula / Description | Acceptability Threshold (Typical) | Application Example |

|---|---|---|---|

| Correlation Coefficient (R) | R = Σ[(xi - x̄)(yi - ȳ)] / √[Σ(xi - x̄)²Σ(yi - ȳ)²] | R ≥ 0.90 (Strong) | Model vs. Experimental strain values |

| Root Mean Square Error (RMSE) | RMSE = √[ Σ(Pi - Oi)² / n ] | COU-dependent; e.g., < 10% of range | Drug concentration predictions |

| Mean Absolute Error (MAE) | MAE = ( Σ|Pi - Oi| ) / n | COU-dependent | Predicted vs. measured pressure gradients |

| Sensitivity & Specificity | Sens = TP/(TP+FN); Spec = TN/(TN+FP) | > 0.80 for diagnostic models | Classifying disease states from simulation |
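As a brief illustration of the error and classification formulas in the table, the sketch below computes RMSE, MAE, sensitivity, and specificity with NumPy. All input values and function names are hypothetical and chosen only to make the arithmetic easy to follow.

```python
import numpy as np

def rmse(pred, obs):
    pred, obs = np.asarray(pred, float), np.asarray(obs, float)
    return np.sqrt(np.mean((pred - obs) ** 2))

def mae(pred, obs):
    pred, obs = np.asarray(pred, float), np.asarray(obs, float)
    return np.mean(np.abs(pred - obs))

def sensitivity_specificity(pred_positive, true_positive):
    """Sens = TP/(TP+FN), Spec = TN/(TN+FP) from binary labels."""
    pred = np.asarray(pred_positive, dtype=bool)
    true = np.asarray(true_positive, dtype=bool)
    tp = np.sum(pred & true)
    fn = np.sum(~pred & true)
    tn = np.sum(~pred & ~true)
    fp = np.sum(pred & ~true)
    return tp / (tp + fn), tn / (tn + fp)

# Hypothetical predicted vs. observed values
predicted = [1.0, 2.5, 4.0]
observed = [1.5, 2.0, 4.0]
print("RMSE:", rmse(predicted, observed), "MAE:", mae(predicted, observed))

# Hypothetical binary classification of disease state (1 = diseased)
truth = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
model = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]
sens, spec = sensitivity_specificity(model, truth)
print("sensitivity:", sens, "specificity:", spec)
```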

Detailed Experimental Protocols for Validation

A robust validation protocol is essential. Below is a detailed methodology for validating a finite element (FE) model of stent deployment.

Protocol: Validation of a Coronary Stent Deployment Model

1. Objective: To validate the computational predictions of an FE stent model against in vitro benchtop measurements of strain and final deployed geometry.

2. Materials & Reagents:

- Polyurethane Arterial Model: Simulates mechanical properties of diseased coronary artery.

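- Balloon-Expandable Stent (Test Article): Commercially available or prototype (e.g., cobalt-chromium or stainless steel; nitinol stents are typically self-expanding rather than balloon-deployed).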

- Balloon Catheter System: For stent delivery and deployment.

- Pressure-Volume Controller: Precisely controls balloon inflation.

- Micro-CT Scanner: For high-resolution 3D geometry acquisition.

- Photoelastic Coating or Digital Image Correlation (DIC) System: For full-field strain measurement.

3. Procedure:

- Step 1 – Bench Test Setup: Mount the arterial model in a physiological saline bath at 37°C. Position the stent-catheter system within the lumen.

- Step 2 – Instrumentation: Apply a photoelastic coating to the arterial model exterior or prepare surface for DIC speckle pattern.

- Step 3 – Deployment: Inflate the balloon catheter using the pressure controller according to a clinically relevant inflation profile (e.g., ramp to 12 atm, hold for 30s).

- Step 4 – Data Acquisition:

- Geometry: Image the deployed stent-artery construct using micro-CT. Reconstruct 3D surfaces for lumen diameter, stent strut apposition, and ovality.

- Mechanics: Record full-field strain maps via DIC or photoelastic fringe patterns during and after deployment.

- Step 5 – Computational Simulation: Replicate the exact bench setup in the FE model. Use identical material laws, boundary conditions, and inflation pressure profile.

- Step 6 – Comparison: Extract simulated geometry and strain/stress values at locations identical to experimental measurement points. Perform quantitative comparison using metrics from Table 2.

4. Acceptance Criteria: The model is considered validated for the COU of "predicting nominal deployed geometry" if the MAE for lumen diameter is < 0.1 mm and the spatial correlation of high-strain regions exceeds R=0.85.

Visualizing the V&V Workflow and Regulatory Pathway

Diagram: V&V in the Regulatory Pathway

Diagram: V&V Connects Data to Decisions

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Toolkit for Computational V&V in Biomechanics

| Item / Reagent | Function in V&V | Example & Notes |

|---|---|---|

| Calibrated Phantom | Serves as a ground-truth object for validating imaging-based model geometry and material properties. | Example: Multi-modality spine phantom with known bone density and geometry. Use: Validate FE models of spinal loading. |

| Reference Software | Provides benchmark solutions for verification of in-house code. | Example: NAFEMS benchmark problems. Use: Verify linear and nonlinear solver accuracy. |

| Digital Image Correlation (DIC) System | Provides full-field, high-resolution experimental strain data for direct comparison with model predictions. | Example: 3D DIC with high-speed cameras. Use: Validate soft tissue or implant strain fields. |

| Programmable Bioreactor | Applies controlled, physiological mechanical loads to tissues in vitro for generating validation data. | Example: Biaxial tensile bioreactor. Use: Generate data to validate heart valve or arterial wall models. |

| Standardized Material Test Kit | Characterizes mechanical properties of biomaterials for accurate model input parameters. | Example: ASTM-compliant tensile/compression test fixtures. Use: Obtain stress-strain curves for constitutive models. |

| Uncertainty Quantification (UQ) Software | Quantifies the impact of input variability (e.g., material properties, loads) on model output. | Example: Dakota, UQLab, or custom Monte Carlo scripts. Use: Establish prediction intervals for validation metrics. |

Computational biomechanics is an essential tool for understanding physiological and pathological processes, aiding in medical device design, drug development, and surgical planning. Within this field, Verification and Validation (V&V) form the cornerstone of credible scientific inquiry and regulatory acceptance. Verification asks, "Are we solving the equations correctly?" (a check of the numerical implementation). Validation asks, "Are we solving the correct equations?" (a check of the model's fidelity to real-world physics). The V&V Pyramid provides a hierarchical, systematic framework to structure these activities, ensuring model credibility scales with the model's intended use, from basic science to clinical decision support.

The Hierarchical Structure of the V&V Pyramid

The V&V Pyramid is a tiered framework where each level represents an increasing degree of complexity and physiological integration. Activities at lower levels are more controlled and foundational; success at these levels is required to support credibility at higher, more application-relevant tiers.

Diagram: The V&V Pyramid Hierarchy

Level 0: Code Verification

Objective: Ensure no bugs in the software and that numerical algorithms are implemented correctly.

- Method: Comparison to analytical solutions or method-of-manufactured-solutions (MMS).

- Example: Verify a finite element solver's output for stress in a beam under simple tension matches the exact analytical solution.
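A check of this kind can be sketched with a hand-written 1D finite element model of an axially loaded bar, for which the exact stress is P/A and the exact tip displacement is PL/(EA). The code below is a minimal illustration rather than a production solver; the geometry, modulus, and load are hypothetical values.

```python
import numpy as np

def bar_fe(n_el, length, area, modulus, load):
    """Linear 2-node bar elements, fixed at x = 0, axial load applied at x = length."""
    n_nodes = n_el + 1
    le = length / n_el
    K = np.zeros((n_nodes, n_nodes))
    ke = modulus * area / le * np.array([[1.0, -1.0], [-1.0, 1.0]])
    for e in range(n_el):
        K[e:e + 2, e:e + 2] += ke          # assemble element stiffness
    F = np.zeros(n_nodes)
    F[-1] = load
    u = np.zeros(n_nodes)
    u[1:] = np.linalg.solve(K[1:, 1:], F[1:])  # node 0 is constrained
    stress = modulus * np.diff(u) / le         # constant stress per element
    return u, stress

# Hypothetical inputs: 0.1 m bar, 1e-4 m^2 cross-section, 17 GPa modulus, 1 kN load
LENGTH, AREA, MODULUS, LOAD = 0.1, 1e-4, 17e9, 1000.0
u, stress = bar_fe(8, LENGTH, AREA, MODULUS, LOAD)

exact_stress = LOAD / AREA
exact_tip = LOAD * LENGTH / (MODULUS * AREA)
print("max stress error:", np.max(np.abs(stress - exact_stress)))
print("tip displacement error:", abs(u[-1] - exact_tip))
```

Because linear elements reproduce this solution exactly, any discrepancy beyond round-off would indicate an implementation error rather than discretization error.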

Level 1: Unit Problem Benchmarking

Objective: Verify the numerical model against established, high-fidelity benchmark data for a simplified but relevant physics problem.

- Method: Solve a canonical problem (e.g., pulsatile flow in a straight tube, deflection of a cantilever) and compare results to trusted reference data from literature or high-resolution simulations.

Level 2: Sub-system/Tissue Validation

Objective: Validate the model's ability to predict tissue or component-level behavior against controlled in vitro or ex vivo experimental data.

- Method: Use material properties from one set of experiments to predict the outcome of a different, independent experiment on the same tissue.

- Example Protocol (Tensile Validation of Arterial Tissue):

- Specimen Preparation: Harvest porcine aortic segments (n=10). Cut into rectangular strips.

- Biaxial Testing: Mount specimen in a biaxial testing system. Precondition with 10 cycles of loading.

- Parameter Calibration: Perform a displacement-controlled test along the circumferential direction. Fit a hyperelastic constitutive model (e.g., Fung, Holzapfel) to the resulting stress-strain data from 6 specimens.

- Validation Experiment: On the 4 remaining specimens, perform a different loading protocol (e.g., a different biaxial stretch ratio or loading along the axial direction). Record resulting forces/displacements.

- Model Prediction & Comparison: Create a finite element model of the strip using calibrated parameters. Simulate the validation experiment protocol. Quantitatively compare model-predicted reaction forces to experimental measurements using metrics like the Normalized Root Mean Square Error (NRMSE).
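The calibrate-then-predict logic of this protocol can be sketched as follows. This is a simplified stand-in, not the protocol itself: it swaps in an incompressible neo-Hookean model under uniaxial stretch (one parameter, fitted by linear least squares) instead of a Fung or Holzapfel law and a finite element model, and it uses synthetic data generated with a hypothetical shear modulus and noise level.

```python
import numpy as np

def nh_shape(stretch):
    """Stretch-dependent factor of the incompressible neo-Hookean uniaxial Cauchy stress."""
    return stretch**2 - 1.0 / stretch

def calibrate_mu(stretch, stress):
    """Closed-form least-squares fit of stress = mu * nh_shape(stretch)."""
    g = nh_shape(stretch)
    return float(np.sum(g * stress) / np.sum(g * g))

def nrmse(pred, meas):
    return float(np.sqrt(np.mean((pred - meas) ** 2)) / (meas.max() - meas.min()))

rng = np.random.default_rng(0)
MU_TRUE = 50.0  # kPa, hypothetical value used only to generate synthetic data

def synthetic_specimen(stretch):
    """Synthetic 'measurement': model response plus 1 kPa Gaussian noise."""
    return MU_TRUE * nh_shape(stretch) + rng.normal(0.0, 1.0, stretch.size)

# Calibration set: 6 specimens loaded to a stretch of 1.3
cal_stretch = np.linspace(1.0, 1.3, 20)
cal_stress = np.concatenate([synthetic_specimen(cal_stretch) for _ in range(6)])
mu_fit = calibrate_mu(np.tile(cal_stretch, 6), cal_stress)

# Validation set: 4 independent specimens under a different protocol (stretch to 1.5)
val_stretch = np.linspace(1.0, 1.5, 20)
val_errors = [nrmse(mu_fit * nh_shape(val_stretch), synthetic_specimen(val_stretch))
              for _ in range(4)]

print("calibrated mu (kPa):", mu_fit)
print("validation NRMSE per specimen:", val_errors)
```

The key design point is that the validation specimens and loading range play no part in the fit, so the reported NRMSE measures prediction rather than goodness of fit.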

Quantitative Comparison Example:

Table 1: Sample Validation Metrics for Arterial Tissue Model

| Specimen ID | Experimental Peak Force (N) | Predicted Peak Force (N) | NRMSE (%) |

|---|---|---|---|

| Val_01 | 12.5 ± 0.8 | 11.9 | 6.4 |

| Val_02 | 11.8 ± 0.7 | 12.3 | 5.9 |

| Val_03 | 13.1 ± 0.9 | 12.5 | 7.2 |

| Val_04 | 12.2 ± 0.6 | 12.0 | 3.1 |

| Aggregate | 12.4 ± 0.5 | 12.2 ± 0.2 | 5.6 ± 1.5 |

Level 3: Whole Organ/System Validation

Objective: Validate the integrated model's prediction of organ or system-level function against in vivo or more complex in vitro data.

- Method: Use data from imaging (MRI, CT) and hemodynamics (catheterization, Doppler ultrasound) to validate an integrated heart or arterial network model.

- Example Protocol (Left Ventricle Hemodynamics):

- Data Acquisition: Acquire cardiac MRI data from a human subject (cine MRI for geometry/motion, 4D flow MRI for blood velocities). Acquire simultaneous brachial blood pressure.

- Model Construction: Segment the end-diastolic left ventricle (LV) geometry. Create a finite element mesh. Assign myocardium material properties from literature or from the Level 2 calibration. Apply time-varying pressure boundary conditions derived from scaled brachial pressure.

- Simulation & Comparison: Run a coupled fluid-structure interaction simulation of the cardiac cycle. Compare model outputs (end-systolic volume, stroke volume, regional wall motion, flow patterns at the aortic valve) directly to the MRI-derived measurements.

Level 4: In Vivo / Clinical Outcome Validation

Objective: Establish the model's predictive capability for clinically relevant outcomes. This is the highest and most challenging level, often required for regulatory submission.

- Method: Prospective clinical study where the model is used to predict patient outcome (e.g., risk of aneurysm rupture, success of a drug-coated balloon). Predictions are blinded and later compared to actual clinical outcomes.

- Metrics: Sensitivity, specificity, area under the receiver operating characteristic curve (AUC-ROC).
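As an illustration of the last metric, the AUC-ROC can be computed directly as the fraction of (event, non-event) patient pairs that the model ranks correctly, with ties counted as half. The sketch below uses hypothetical risk scores and outcomes; the function name is an assumption.

```python
import numpy as np

def auc_roc(scores, outcomes):
    """AUC as the probability that an event case receives a higher score than a non-event case."""
    scores = np.asarray(scores, dtype=float)
    outcomes = np.asarray(outcomes, dtype=bool)
    pos = scores[outcomes]
    neg = scores[~outcomes]
    greater = np.sum(pos[:, None] > neg[None, :])   # correctly ranked pairs
    ties = np.sum(pos[:, None] == neg[None, :])     # tied pairs count as half
    return (greater + 0.5 * ties) / (pos.size * neg.size)

# Hypothetical blinded model predictions (risk scores) and later observed outcomes
risk_scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2, 0.6, 0.1]
observed_event = [1, 1, 1, 0, 0, 0, 0, 0]
auc = auc_roc(risk_scores, observed_event)
print("AUC-ROC:", auc)
```

An AUC of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation of outcomes.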

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Computational Biomechanics V&V

| Item / Reagent | Function in V&V |

|---|---|

| Biaxial/Triaxial Test System | Provides controlled mechanical loading for characterizing and validating constitutive models of tissues (Levels 1-2). |

| Pressure-Volume Loop System | Measures in vivo or ex vivo cardiac function for whole-organ model validation (Level 3). |

| Polyacrylamide Hydrogels | Tunable substrates for 2D/3D cell culture experiments used to validate cell-mechanics interaction models. |

| Fluorescent Microspheres | Used in Particle Image Velocimetry (PIV) to visualize and quantify flow fields for vascular model validation. |

| Decellularized Tissue Scaffolds | Provide a biologically relevant, cell-free 3D environment for studying tissue-level biomechanics. |

| Finite Element Software (FEBio, Abaqus) | Open-source/commercial platforms for implementing and solving biomechanical models. |

| Digital Image Correlation (DIC) Software | Measures full-field displacements on tissue surfaces during mechanical testing for detailed model comparison. |

| Clinical Imaging Datasets (e.g., KiTS, MIMIC) | Publicly available annotated CT/MRI data for building and validating patient-specific anatomical models. |

Logical Workflow for Applying the V&V Pyramid

Diagram: Iterative V&V Pyramid Workflow

The V&V Pyramid provides an indispensable, hierarchical roadmap for building credibility in computational biomechanics models. By rigorously adhering to this structured approach—from fundamental code verification to predictive clinical validation—researchers and drug development professionals can generate models with quantifiable confidence, ultimately accelerating the translation of computational insights into reliable biomedical applications. The framework explicitly ties the required level of evidence to the model's intended use, ensuring efficient and scientifically defensible development.

Verification and Validation (V&V) form the bedrock of credibility in computational biomechanics research, a field critical for advancing biomedical engineering, surgical planning, and drug development. This guide decomposes the core triad of this framework: Conceptual Model Validation, Code Verification, and Solution Verification. Together, these processes ensure that a computational model is a trustworthy representation of the biological reality it aims to simulate, from foundational theory to final numerical results.

Conceptual Model Validation

Conceptual Model Validation is the assessment of the adequacy of the mathematical models and underlying assumptions to represent the biomechanical system of interest. It asks: "Are we solving the right equations?"

Methodology & Experimental Protocols

Validation relies on comparing model predictions with high-quality experimental data. A standard protocol involves:

- System Decomposition: Break down the complex biomechanical system (e.g., arterial wall mechanics) into testable sub-processes (material properties, boundary conditions).

- Benchmark Experiment Design: Conduct controlled in vitro or ex vivo experiments.

- Example - Soft Tissue Tensile Test: A sample of porcine aortic tissue is mounted on a bioreactor or tensile testing machine. Precise displacement is applied while force is measured. Simultaneous digital image correlation (DIC) tracks full-field strain.

- Isolated Physics Simulation: Develop a computational model (e.g., Finite Element) simulating only the benchmark experiment, using independently measured material properties.

- Quantitative Comparison: Use metrics like the coefficient of determination (R²) and normalized error to compare simulation results (e.g., stress-strain curve) to experimental data.

Table 1: Quantitative Metrics for Conceptual Model Validation

| Metric | Formula | Ideal Value | Interpretation in Biomechanics |

|---|---|---|---|

| Coefficient of Determination (R²) | \( R^2 = 1 - \frac{SS_{res}}{SS_{tot}} \) | 1.0 | Measures proportion of variance in experimental data captured by the model. An R² > 0.9 is often sought. |

| Normalized Root Mean Square Error (NRMSE) | \( NRMSE = \frac{\sqrt{\frac{1}{n}\sum_{i=1}^{n} (S_i - E_i)^2}}{E_{max} - E_{min}} \) | 0.0 | Expresses the average error as a percentage of the experimental data range. <10% is often acceptable. |

| Fraction of Predictions within ±X% | \( F_{X\%} = \frac{\mathrm{count}\left( \lvert S_i - E_i \rvert / \lvert E_i \rvert \le X\% \right)}{n} \) | 1.0 | The fraction of simulation (S) data points within a specified error band (e.g., ±15%) of experimental (E) data. |
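Two of these metrics, R² and the fraction of predictions within a relative error band, can be sketched as follows. The data are hypothetical and the function names are assumptions; S and E denote simulation and experimental values as in the table.

```python
import numpy as np

def r_squared(sim, exp):
    """Coefficient of determination: 1 - SS_res / SS_tot, with experiment as reference."""
    sim, exp = np.asarray(sim, float), np.asarray(exp, float)
    ss_res = np.sum((exp - sim) ** 2)
    ss_tot = np.sum((exp - exp.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

def fraction_within(sim, exp, band):
    """Fraction of points whose relative error |S - E| / |E| is at most `band`."""
    sim, exp = np.asarray(sim, float), np.asarray(exp, float)
    return float(np.mean(np.abs(sim - exp) / np.abs(exp) <= band))

# Hypothetical stress values (kPa) at matched strain levels
E = [2.0, 4.0, 6.0, 8.0]
S = [2.5, 4.2, 5.7, 8.4]
r2 = r_squared(S, E)
f15 = fraction_within(S, E, 0.15)
print("R^2:", r2, "fraction within 15%:", f15)
```

Note that the relative-error band is undefined where the experimental value is zero, so such points need separate handling in practice.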

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Ex Vivo Tissue Validation Experiments

| Item | Function in Validation |

|---|---|

| Physiologic Saline/Buffer Solution (e.g., Krebs-Henseleit) | Maintains tissue viability and hydration, preserving biomechanical properties during ex vivo testing. |

| Enzymatic Inhibitor Cocktail (e.g., Protease Inhibitors) | Prevents tissue degradation during prolonged mechanical testing, ensuring stable material response. |

| Fluorescent Microspheres (for DIC/particle image velocimetry) | Serve as fiducial markers on tissue surfaces for high-accuracy, full-field strain measurement. |

| Biaxial or Uniaxial Tensile Testing System | Provides controlled, precise mechanical loading to characterize tissue stress-strain relationships. |

| Digital Image Correlation (DIC) System | Non-contact optical method to measure 3D deformation and strain fields on the tissue surface. |

Diagram 1: Conceptual Model Validation Workflow

Code Verification

Code Verification is the process of ensuring that the computational model (software) is implemented correctly and solves the chosen mathematical equations without error. It asks: "Are we solving the equations right?"

Methodologies: Order-of-Accuracy Testing & Method of Manufactured Solutions (MMS)

- Order-of-Accuracy Testing: For problems with exact solutions, the error between the numerical and exact solution should decrease at a predictable rate as the computational grid is refined.

- Protocol: Solve a problem with a known analytical solution (e.g., pressure-driven flow in a rigid pipe) on successively finer meshes (grids). Calculate the error norm (e.g., L2 norm) for each mesh. The slope of the error vs. mesh size on a log-log plot reveals the observed order of accuracy, which should match the theoretical order of the numerical method.

- Method of Manufactured Solutions (MMS): The most robust method when no exact solution exists.

- Protocol: (a) Choose an arbitrary, non-trivial function for the dependent variable (e.g., a sinusoidal pressure field). (b) Apply the original governing differential equations to this function, which will result in a residual. (c) Add this residual as a "source term" to the original equations. (d) The chosen function is now the exact solution to the modified equations. (e) Implement the source term in the code and perform order-of-accuracy testing.
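A short worked example may make steps (a) to (d) concrete. It assumes, purely for illustration, the 1D heat equation with diffusivity α on 0 ≤ x ≤ 1 and a manufactured solution chosen here; neither comes from a specific code.

```latex
% Governing equation:      u_t - \alpha u_{xx} = 0
% (a) Manufactured solution (an arbitrary smooth choice):
u_m(x,t) = e^{-t} \sin(\pi x)
% (b) Apply the operator to u_m to obtain the residual:
\frac{\partial u_m}{\partial t} = -e^{-t}\sin(\pi x), \qquad
\frac{\partial^2 u_m}{\partial x^2} = -\pi^2 e^{-t}\sin(\pi x)
s(x,t) = \frac{\partial u_m}{\partial t} - \alpha \frac{\partial^2 u_m}{\partial x^2}
       = (\alpha \pi^2 - 1)\, e^{-t} \sin(\pi x)
% (c)-(d) Modified problem whose exact solution is u_m:
u_t - \alpha u_{xx} = s(x,t), \qquad
u(x,0) = \sin(\pi x), \qquad u(0,t) = u(1,t) = 0
```

The code is then run on the modified problem, and the error against u_m is measured on successively refined meshes and time steps.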

Table 3: Code Verification Results for a Finite Element Solver (Hypothetical Data)

| Mesh Size (h) | Error Norm (L2) | Observed Order (p) | Theoretical Order |

|---|---|---|---|

| 1.0 | 2.50e-2 | -- | 2.0 |

| 0.5 | 6.25e-3 | 2.00 | 2.0 |

| 0.25 | 1.56e-3 | 2.00 | 2.0 |

| 0.125 | 3.91e-4 | 2.00 | 2.0 |
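The observed order in the table can be reproduced from the mesh sizes and error norms alone. The sketch below computes the order between successive mesh pairs and, as a cross-check, the least-squares slope of log(error) versus log(h); the variable names are illustrative.

```python
import numpy as np

# Mesh sizes and L2 error norms from the table above
h = np.array([1.0, 0.5, 0.25, 0.125])
err = np.array([2.50e-2, 6.25e-3, 1.56e-3, 3.91e-4])

# Observed order between each pair of successive meshes
pairwise_order = np.log(err[:-1] / err[1:]) / np.log(h[:-1] / h[1:])

# Slope of the log-log error plot (least-squares fit over all four meshes)
slope, intercept = np.polyfit(np.log(h), np.log(err), 1)

print("pairwise observed order:", np.round(pairwise_order, 2))
print("log-log slope:", round(slope, 2))
```

Both estimates agree with the theoretical order of 2.0 to within rounding of the tabulated error norms.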

Diagram 2: Code Verification via MMS and Convergence

Solution Verification

Solution Verification is the process of quantifying the numerical accuracy of a specific computed solution, primarily by estimating discretization errors (errors due to finite mesh size and time step). It asks: "How accurate is this specific solution?"

Methodology: Richardson Extrapolation & Error Estimation

The standard protocol uses systematic mesh refinement.

- Generate Solutions: Run the simulation on three systematically refined meshes (coarse, medium, fine), typically with a constant refinement ratio \( r \) (e.g., \( r = 2 \)).

- Calculate Key Quantities: For each mesh, compute a Quantity of Interest (QoI) critical to the study (e.g., wall shear stress at a specific location, peak strain).

- Apply Richardson Extrapolation: Assuming the solutions are in the asymptotic convergence range, the exact solution (\( f_{exact} \)) and observed order of convergence (\( p \)) can be estimated from the three solutions. The error on the finest mesh can then be estimated.

- Calculate Grid Convergence Index (GCI): The GCI provides a conservative error band (like an uncertainty) for the QoI on a given mesh.
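The four steps above can be sketched in a few lines. The example assumes monotonic convergence, a constant refinement ratio, and a safety factor of 1.25 (the value commonly recommended for three-mesh studies). The QoI values are synthetic, constructed to converge at second order toward 15.0, and the function name is hypothetical.

```python
import math

def richardson_gci(f_coarse, f_medium, f_fine, r, safety_factor=1.25):
    """Observed order, Richardson-extrapolated value, and fine-mesh GCI (as a fraction)."""
    eps_coarse = f_medium - f_coarse   # change from coarse to medium mesh
    eps_fine = f_fine - f_medium       # change from medium to fine mesh
    p = math.log(abs(eps_coarse / eps_fine)) / math.log(r)
    f_exact = f_fine + eps_fine / (r**p - 1.0)
    gci_fine = safety_factor * abs(eps_fine / f_fine) / (r**p - 1.0)
    return p, f_exact, gci_fine

# Synthetic QoI values on coarse, medium, and fine meshes with r = 2
p, f_exact, gci_fine = richardson_gci(13.4, 14.6, 14.9, r=2.0)
print(f"observed order p = {p:.3f}")
print(f"extrapolated value = {f_exact:.3f}")
print(f"GCI on fine mesh = {100.0 * gci_fine:.2f}%")
```

If the successive differences change sign (oscillatory convergence) or the observed order is far from the theoretical one, the solutions are probably not in the asymptotic range and the estimate should not be trusted.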

Table 4: Solution Verification for Wall Shear Stress (WSS) in a Stenotic Artery

| Mesh | Cells (Millions) | WSS (Pa) | Apparent Order (p) | Extrapolated Value (Pa) | GCI |

|---|---|---|---|---|---|

| Coarse | 0.5 | 12.5 | -- | 15.1 | -- |

| Medium | 2.0 | 14.2 | 2.1 | 15.1 | 12.5% |

| Fine | 8.0 | 14.8 | 2.1 | 15.1 | 3.1% |

Diagram 3: Solution Verification Process

Synthesis in Computational Biomechanics Research

The integrated application of these three pillars is non-negotiable for credible predictive simulations. The activities build on one another: the conceptual model must be judged adequate for the system of interest, the code implementing it must be verified, and every new simulation needs solution verification to quantify numerical error. Comparisons with benchmark experiments are only meaningful once numerical error is known to be small, so code and solution verification should precede the final validation comparison. Only a solution that passes all three stages can be used with confidence for prediction and insight in drug delivery device testing, surgical planning, or mechanistic biomechanical studies.

Historical Context and the Evolution of V&V Standards (e.g., ASME V&V 40)

Understanding how formalized V&V standards evolved is critical to applying them well in computational biomechanics research. Computational biomechanics employs models to simulate biological and physiological processes, with outcomes influencing medical device design and drug development. The credibility of these models hinges on rigorous Verification and Validation (V&V). This guide traces the historical drivers for codifying V&V practices, culminating in an in-depth analysis of the benchmark standard ASME V&V 40.

Historical Drivers for V&V Standardization

The need for standardized V&V emerged from high-profile failures, regulatory demands, and increasing model complexity.

| Era | Key Driver | Impact on V&V |

|---|---|---|

| 1980s-1990s | Growth of Finite Element Analysis (FEA) in aerospace/auto. | V&V concepts migrated from classical engineering to biomedical applications. Ad-hoc, domain-specific practices prevailed. |

| Early 2000s | FDA Critical Path Initiative (2004); increased use of in silico trials. | Regulatory push for qualification of modeling & simulation as evidence. Highlighted lack of consensus methodology. |

| Mid 2000s | High-profile medical device recalls linked to design flaws. | Demonstrated dire consequences of inadequate computational model credibility assessment. |

| 2010s-Present | AI/ML integration, patient-specific models, and complex multi-physics. | Explosion of complexity necessitated a risk-informed, scalable framework applicable to diverse model contexts. |

The ASME V&V 40 Standard: A Technical Deep Dive

ASME V&V 40-2018, "Assessing Credibility of Computational Modeling through Verification and Validation: Application to Medical Devices," provides a risk-informed framework.

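Core Principle: The rigor of V&V should be commensurate with model risk, which is assessed for a stated Context of Use from two factors: Model Influence and Decision Consequence.

- Context of Use: The specific role and scope of the model in informing a decision (e.g., exploratory research vs. regulatory submission).

- Model Influence: The weight given to the model relative to other information sources.

- Decision Consequence: The severity of the adverse outcome if a decision informed by the model turns out to be wrong.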

Credibility Factors: The standard groups its credibility factors under verification, validation, and applicability. Each factor is addressed through specific Credibility Activities (e.g., verification, validation, uncertainty quantification).

Quantitative Assessment Matrix (Example):

| Credibility Factor | Target Credibility Level (TCL) Low | TCL Medium | TCL High | Example Credibility Activity |

|---|---|---|---|---|

| Validation | Comparison to a small set of representative data. | Comparison to a comprehensive dataset covering critical inputs. | Comparison to a high-fidelity, independent benchmark dataset. | Experimental protocol for a validation test. |

| Uncertainty Quantification | Sensitivity analysis on key inputs. | Propagation of input uncertainties. | Comprehensive epistemic and aleatory uncertainty analysis with documentation. | Monte Carlo simulation protocol. |

Detailed Experimental Protocol for a Key Validation Activity

Protocol Title: In Vitro Validation of a Lumbar Spinal Implant Finite Element Model under Static Compression.

Objective: To generate experimental data for validating computational model predictions of implant subsidence into vertebral bone.

Materials & Reagents:

- Polyurethane Foam Blocks (Grade 20): Synthetic cancellous bone simulant.

- Custom Spinal Implant (Ti-6Al-4V): Prototype device.

- Biaxial Servo-Hydraulic Test Frame (e.g., Instron 8874): For load application.

- 3D Digital Image Correlation (DIC) System (e.g., Aramis): For full-field strain measurement.

- Extensometers: For direct displacement measurement.

- Alignment Fixture: To ensure pure axial compression.

Methodology:

- Specimen Preparation: Machine foam blocks to ASTM F1839 specifications. Insert implant according to surgical technique.

- Instrumentation: Mount specimen in test frame. Apply speckle pattern to foam surface for DIC. Position extensometers on implant and block.

- Pre-conditioning: Apply 5 cycles of compression from 50 N to 500 N at 1 Hz.

- Static Test: Apply compressive load at 5 mm/min until 5000 N or 5 mm displacement is reached, whichever occurs first.

- Data Acquisition: Synchronously record load (from load cell), displacement (from actuator and extensometers), and full-field strain (from DIC) at 100 Hz.

- Post-Test: Repeat for n=5 specimens.

- Validation Metric: Compare experimental vs. computational load-displacement curve and subsidence (implant displacement into foam) using the Standard Deviation of Error (SDE) metric.
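The curve comparison in the last step can be sketched as follows. This is a minimal illustration, not the protocol's prescribed procedure: the function name `curve_error_stats` is hypothetical, the data are synthetic placeholders rather than measurements, and the SDE is assumed here to be the sample standard deviation of the pointwise load error, since the protocol does not define it further.

```python
import numpy as np

def curve_error_stats(disp_exp, load_exp, disp_model, load_model):
    """Pointwise load error (model minus experiment) on the experimental grid."""
    disp_exp = np.asarray(disp_exp, dtype=float)
    load_exp = np.asarray(load_exp, dtype=float)
    # Interpolate the model curve onto the experimental displacement points.
    # np.interp assumes disp_model is monotonically increasing.
    load_model_on_exp = np.interp(disp_exp, disp_model, load_model)
    error = load_model_on_exp - load_exp
    return {
        "mean_error": float(np.mean(error)),          # systematic offset
        "sde": float(np.std(error, ddof=1)),          # scatter about the offset
        "rmse": float(np.sqrt(np.mean(error ** 2))),  # combined magnitude
    }

# Synthetic placeholder curves: the "model" is offset by a constant 50 N.
disp_exp = np.linspace(0.0, 5.0, 11)      # mm
load_exp = 900.0 * disp_exp               # N
disp_model = np.linspace(0.0, 5.0, 51)    # mm
load_model = 900.0 * disp_model + 50.0    # N

stats = curve_error_stats(disp_exp, load_exp, disp_model, load_model)
print(stats)
```

Reporting the mean error alongside the SDE separates a systematic bias from scatter, which a single RMSE value cannot do.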

Visualizing the ASME V&V 40 Framework

Diagram: ASME V&V 40 Credibility Assessment Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function in Computational Biomechanics V&V |

|---|---|

| Synthetic Bone Analog (e.g., Sawbones Foam) | Provides a consistent, isotropic material for controlled in vitro validation tests of bone-implant interfaces. |

| Physiological Saline / PBS | Maintains tissue hydration and ionic balance during ex vivo biomechanical testing of soft tissues (e.g., ligaments, tendons). |

| Strain Gauges & Adhesives (e.g., M-Bond 200) | Measure localized surface strains on implants or bone during physical tests for direct comparison to model-predicted strains. |

| Digital Image Correlation (DIC) Systems | Non-contact, optical method to measure full-field 3D displacements and strains on a specimen surface, crucial for spatial validation. |

| Fluorescent Microspheres | Used in Particle Image Velocimetry (PIV) to trace fluid flow in experimental models (e.g., cardiovascular), validating CFD simulations. |

| Bioreactors with Mechanical Actuation | Apply controlled, cyclic mechanical loads (tension, compression) to cell-seeded scaffolds for validating tissue growth/remodeling models. |

| Calibration Phantoms (Imaging) | Objects with known geometric/material properties for calibrating CT/MRI scanners, reducing input uncertainty for image-based models. |

From Theory to Practice: Implementing V&V in Your Biomechanics Pipeline

Verification and Validation (V&V) are foundational pillars in credible computational biomechanics research. Verification addresses the question, "Am I solving the equations correctly?" It is a mathematical exercise to ensure that the computational model's implementation is free of coding errors and that the numerical solution accurately approximates the governing equations. Validation asks, "Am I solving the correct equations?" It is a scientific process of assessing the model's accuracy in representing real-world biomechanical phenomena by comparing computational results with experimental data. This article focuses exclusively on a cornerstone verification technique: the Method of Manufactured Solutions (MMS) and its companion, convergence analysis. Within a thesis on V&V, this establishes the rigorous, mathematical proof-of-correctness that must precede any meaningful validation effort in applications like implant design, surgical planning, or drug delivery mechanism analysis.

Core Theory: The Method of Manufactured Solutions

MMS is a rigorous procedure for verifying code by testing its ability to solve the governing Partial Differential Equations (PDEs). The core principle is to fabricate an analytical solution to the PDE system.

Protocol:

- Choose Governing Equations: Begin with the PDEs (e.g., Navier-Stokes for fluid flow, linear/nonlinear elasticity for tissue mechanics) you intend to solve.

- Manufacture a Solution: A priori, define an arbitrary, sufficiently smooth analytical function for each dependent variable (e.g., displacement u(x,y,z,t), pressure p(x,y,z,t)). This function does not need to be physically realistic.

- Apply the PDE Operator: Substitute the manufactured solution (MS) into the governing PDEs. Since the MS is not an actual solution, this operation will yield a non-zero residual term.

- Derive Forcing Functions: This residual is analytically computed to become a source or forcing function. This function is added to the original PDE so that the MS now exactly satisfies the modified PDE.

- Implement in Code: Run the computational code to solve the modified PDE system (with the added source term) on a given domain with boundary conditions also derived from the MS.

- Compare Results: The numerical solution from the code is compared to the known analytical MS. The difference is the numerical error.
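The derivation steps above can be sketched with a symbolic engine. The example below is a deliberately simple assumption: a 1D steady diffusion operator, -d²u/dx² = f on (0, 1), with an arbitrarily chosen manufactured solution. It is not tied to any particular solver, and real biomechanics operators will be more involved.

```python
import sympy as sp

x = sp.symbols("x", real=True)

# Manufactured solution: arbitrary and smooth, not physically meaningful.
u_ms = sp.sin(sp.pi * x) + x**2

# Assumed governing operator for this sketch: L(u) = -d2u/dx2.
# Applying it to the MS gives the source term that makes the MS exact.
source = sp.simplify(-sp.diff(u_ms, x, 2))

# Dirichlet boundary values are read directly off the MS.
bc_left = u_ms.subs(x, 0)
bc_right = u_ms.subs(x, 1)

# Sanity check: the MS satisfies the modified equation exactly.
residual = sp.simplify(-sp.diff(u_ms, x, 2) - source)

# Callable version of the source term for use inside a numerical code.
source_fn = sp.lambdify(x, source)

print("f(x) =", source)
print("u(0) =", bc_left, " u(1) =", bc_right, " residual =", residual)
```

The numerical code is then run with `source_fn` as the forcing term and the two boundary values, and its output is compared against `u_ms`.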

Convergence Analysis: Quantifying Error

Convergence analysis is the quantitative metric used with MMS. It measures how the numerical error decreases as the computational mesh/grid is refined (as characteristic element size h decreases).

Protocol:

- Run Simulations: Execute the MMS test case across a series of progressively refined meshes (e.g., 4-5 different mesh resolutions).

- Calculate Error Norms: For each simulation, compute a global error metric, typically the L₂ norm, between the numerical solution and the MS: ||e||₂ = √( ∫_Ω (u_numerical - u_MS)² dΩ ).

- Determine Observed Order of Accuracy (OOA): Plot the error norm against the element size h on a log-log scale. The slope of the best-fit line is the OOA. For finite elements of polynomial degree p with a consistent numerical scheme, the theoretical order of convergence is typically p+1 for the L₂ error in the solution (e.g., O(h²) for linear elements in stress equilibrium). Code verification is achieved when the observed order of accuracy matches the theoretical order.

Table 1: Example Convergence Analysis Data from a Linear Elasticity Solver Verification

| Mesh Refinement Level | Characteristic Element Size (h) | L₂ Error Norm (Displacement) | Observed Order of Accuracy (OOA) |

|---|---|---|---|

| Coarse | 2.0e-3 m | 1.25e-4 | -- |

| Medium | 1.0e-3 m | 3.13e-5 | 2.00 |

| Fine | 5.0e-4 m | 7.83e-6 | 2.00 |

| Very Fine | 2.5e-4 m | 1.96e-6 | 2.00 |

Interpretation: The OOA of ~2.0 indicates second-order convergence, verifying the correct implementation of a second-order accurate numerical scheme (e.g., standard linear finite elements).
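As a minimal sketch, the observed order can be recomputed from the Table 1 values in two ways: pairwise between successive meshes, and as the slope of a log-log least-squares fit. Only NumPy is assumed.

```python
import numpy as np

# Element sizes and L2 displacement error norms from Table 1.
h = np.array([2.0e-3, 1.0e-3, 5.0e-4, 2.5e-4])
err = np.array([1.25e-4, 3.13e-5, 7.83e-6, 1.96e-6])

# Pairwise observed order between successive refinement levels:
# OOA = ln(e_coarse / e_fine) / ln(h_coarse / h_fine)
pairwise_ooa = np.log(err[:-1] / err[1:]) / np.log(h[:-1] / h[1:])

# Global estimate: slope of the best-fit line in log-log space.
slope, intercept = np.polyfit(np.log(h), np.log(err), 1)

print("pairwise OOA:", np.round(pairwise_ooa, 2))
print("fitted slope:", round(float(slope), 2))
```

The pairwise values show whether the order is still drifting between levels, while the fitted slope gives one summary number for reporting.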

Diagram: MMS and Convergence Analysis Workflow

The Scientist's Toolkit: Key Reagents for Code Verification

Table 2: Essential Research Reagents for MMS-Based Verification

| Item / Solution | Function in the Verification Process |

|---|---|

| Symbolic Mathematics Engine (e.g., SymPy, Mathematica) | Automates the analytical differentiation and manipulation of manufactured solutions to derive exact source terms and boundary conditions, preventing human error. |

| Mesh Generation & Refinement Suite (e.g., Gmsh, built-in tools) | Systematically generates a sequence of computational grids of known element size h, which is critical for convergence analysis. |

| High-Precision Linear Algebra Library (e.g., PETSc, Eigen) | Ensures that numerical errors are dominated by discretization error (the target of MMS) and not by algebraic solver tolerances. |

| Data Analysis & Plotting Environment (e.g., Python/Matplotlib, MATLAB) | Calculates error norms, performs log-log regression to determine Observed Order of Accuracy, and generates publication-quality convergence plots. |

| Version-Controlled Code Repository (e.g., Git) | Maintains an immutable record of the exact code version used for each verification test, ensuring reproducibility and traceability. |

Application in Computational Biomechanics: A Protocol Example

Consider verifying a solver for quasi-static, large deformation (hyperelastic) soft tissue mechanics, governed by the equilibrium equation ∇·σ + b = 0.

Detailed Experimental Protocol:

- Define MS: Choose analytical functions for the displacement field. For 2D: u_x = 0.01 * sin(2πx) * cos(2πy), u_y = 0.01 * cos(πx) * sin(πy).

- Constitutive Model: Assume a Neo-Hookean material (ψ = (μ/2)(I_C - 3) - μ ln(J) + (λ/2)ln²(J)). Define material parameters λ, μ.

- Derive Quantities: Using a symbolic tool:

a. Compute deformation gradient F = I + ∇u_MS.

b. Compute Cauchy stress σ_MS analytically from F and the constitutive law.

c. Substitute σ_MS into equilibrium: b_MS = -∇·σ_MS. This is the manufactured body force.

d. Compute traction t_MS = σ_MS · n on boundaries for Neumann conditions.

- Implement in FEM Code: Modify the code to include the source term b_MS as a body force. Apply Dirichlet (u = u_MS) or Neumann (t = t_MS) boundaries as derived.

- Execute Convergence Study: Solve on 4+ meshes. For each, compute L₂ error in displacement and stress.

- Analyze: Plot error vs. h. Expect an OOA of ~3.0 for u with quadratic elements (~2.0 with linear elements), consistent with Table 3.
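The symbolic derivation can be sketched as below. One assumption differs from the steps above and is worth stating plainly: the sketch works in the reference configuration, using the first Piola-Kirchhoff stress P and the body force b0 = -Div(P), which avoids differentiating with respect to deformed coordinates. The function name `manufactured_body_force` is hypothetical, and the kinematics are taken as 2D plane strain.

```python
import sympy as sp

X, Y = sp.symbols("X Y", real=True)
mu, lam = sp.symbols("mu lambda", positive=True)
coords = sp.Matrix([X, Y])

def manufactured_body_force(u):
    """Reference body force b0 = -Div(P) for a compressible Neo-Hookean solid."""
    F = sp.eye(2) + u.jacobian(coords)   # deformation gradient F = I + grad(u)
    J = F.det()
    F_inv_T = F.inv().T
    # P = d(psi)/dF for psi = (mu/2)(I_C - 3) - mu ln J + (lam/2) ln^2 J
    P = mu * (F - F_inv_T) + lam * sp.log(J) * F_inv_T
    # Row-wise divergence with respect to reference coordinates.
    div_P = sp.Matrix([
        sum(sp.diff(P[i, j], coords[j]) for j in range(2)) for i in range(2)
    ])
    return -div_P

# Manufactured displacement field from the protocol (exact rationals).
u_ms = sp.Matrix([
    sp.Rational(1, 100) * sp.sin(2 * sp.pi * X) * sp.cos(2 * sp.pi * Y),
    sp.Rational(1, 100) * sp.cos(sp.pi * X) * sp.sin(sp.pi * Y),
])
b0 = manufactured_body_force(u_ms)

# Evaluate at one point with illustrative (not measured) parameters.
print(b0.subs({X: 0.3, Y: 0.2, mu: 1, lam: 2}).evalf(6))
```

A quick self-check is to pass a homogeneous deformation (displacement linear in X and Y): the stress is then uniform and the returned body force should vanish identically.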

Table 3: Expected Theoretical Convergence Rates for Common Biomechanics Elements

| PDE Type / Physics | Common FEM Element | Theoretical Convergence Rate (L₂ Error, u) | Variable to Monitor |

|---|---|---|---|

| Linear Elasticity | Linear Tetrahedron | O(h²) | Displacement |

| Linear Elasticity | Quadratic Tetrahedron | O(h³) | Displacement |

| Incompressible Fluid (Stokes) | P₂-P₁ (Taylor-Hood) | O(h³) for velocity, O(h²) for pressure | Velocity |

| Nonlinear Solid Mechanics | Quadratic Tetrahedron | O(h³) (asymptotic) | Displacement |

Diagram: V&V Context: MMS Role in Verification

Verification and Validation (V&V) are foundational pillars of credible computational biomechanics research. Verification addresses "solving the equations correctly" (i.e., code and solution accuracy), while Validation addresses "solving the correct equations" (i.e., model fidelity to real-world biology). This guide focuses on a critical verification activity: quantifying discretization error in numerical simulations via the Grid Convergence Index (GCI), a standardized method for reporting grid refinement studies.

Theoretical Foundation of the GCI

The GCI provides a consistent, dimensionless measure of numerical error and uncertainty. It is based on Richardson Extrapolation, which estimates the exact solution from a series of grid-refined simulations.

Key Equations:

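- Apparent Order p of convergence: \( p = \frac{1}{\ln(r_{21})} \left| \ln \left| \epsilon_{32}/\epsilon_{21} \right| + q(p) \right| \) where \( \epsilon_{32} = \phi_3 - \phi_2 \), \( \epsilon_{21} = \phi_2 - \phi_1 \), \( r_{21} = h_2 / h_1 \) (grid refinement ratio, with grid 1 the finest), and \( q(p) \) is a correction term that vanishes for a constant refinement ratio and is otherwise found iteratively.

- Grid Convergence Index (fine grid): \( GCI_{fine} = F_s \frac{|e_{21}|}{r_{21}^p - 1} \) where \( e_{21} = \epsilon_{21}/\phi_1 \) is the relative difference between the medium and fine solutions and \( F_s \) is a factor of safety (1.25 for three-grid studies).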

Step-by-Step GCI Calculation Protocol

Experimental Protocol for GCI Study:

- Geometry & Physics Definition: Select a representative, well-defined benchmark case (e.g., laminar flow in a channel, stent deployment).

- Grid Generation: Create three systematically refined grids (coarse, medium, fine). Maintain consistent topology and refinement ratio (r > 1.3, ideally constant, e.g., r=√2).

- Solver Execution: Run simulations to convergence on all grids, ensuring iterative errors are negligible.

- Key Variable Selection: Identify a primary variable of interest (φ) for error quantification (e.g., wall shear stress, maximum principal strain, pressure drop).

- Data Extraction: Record the value of φ at the same physical location from each solution.

- Calculations: Compute the apparent order p and the GCI for the fine-medium and medium-coarse grid pairs.

- Asymptotic Range Check: Verify that \( GCI_{32} / (r_{21}^p \, GCI_{21}) \) ≈ 1, indicating solutions are in the asymptotic convergence range.
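The calculation steps can be sketched as a small function. This is an illustrative implementation under stated assumptions, not a reference one: the name `gci_three_grid` is hypothetical, grid 1 is the finest, the differences are normalized by the finer-grid value so that the GCI is relative, the correction term is taken as q(p) = ln[(r21^p - s)/(r32^p - s)] with s the sign of ε32/ε21, and the asymptotic check uses GCI_32 / (r21^p GCI_21). The usage data are synthetic, generated from φ(h) = 10 + 0.5h², so the expected order is 2.

```python
import math

def gci_three_grid(phi1, phi2, phi3, h1, h2, h3, fs=1.25, tol=1e-10, max_iter=100):
    """Apparent order and relative GCI from three grids (index 1 = finest)."""
    r21, r32 = h2 / h1, h3 / h2
    e21, e32 = phi2 - phi1, phi3 - phi2
    if e21 == 0.0 or e32 == 0.0:
        raise ValueError("Solutions on two grids are identical; GCI is undefined.")
    s = math.copysign(1.0, e32 / e21)
    log_ratio = math.log(abs(e32 / e21))
    p = abs(log_ratio) / math.log(r21)   # initial guess with q(p) = 0
    if p <= 0.0:
        raise ValueError("No observable convergence between grids.")
    for _ in range(max_iter):
        # Correction for non-constant refinement ratio (zero when r21 == r32).
        q = math.log((r21**p - s) / (r32**p - s))
        p_new = abs(log_ratio + q) / math.log(r21)
        converged = abs(p_new - p) < tol
        p = p_new
        if converged:
            break
    gci_21 = fs * abs(e21 / phi1) / (r21**p - 1.0)
    gci_32 = fs * abs(e32 / phi2) / (r32**p - 1.0)
    asym_ratio = gci_32 / (r21**p * gci_21)
    return p, gci_21, gci_32, asym_ratio

# Synthetic data with a known second-order error term (not simulation output).
hs = (0.1, 0.2, 0.4)
phis = [10.0 + 0.5 * h**2 for h in hs]
p_obs, gci_21, gci_32, asym_ratio = gci_three_grid(*phis, *hs)
print(f"p = {p_obs:.3f}, GCI_21 = {100 * gci_21:.4f}%, "
      f"GCI_32 = {100 * gci_32:.4f}%, asymptotic ratio = {asym_ratio:.4f}")
```

Testing the routine on manufactured data with a known order, as here, is a cheap way to verify the script itself before applying it to solver output.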

Data Presentation: GCI Case Study on Aneurysmal Hemodynamics

Table 1: Grid Parameters for Intracranial Aneurysm CFD Study

| Grid Level | Number of Elements (Millions) | Avg. Cell Size (mm), h | Refinement Ratio (r) | Peak Wall Shear Stress (Pa), φ |

|---|---|---|---|---|

| Fine (1) | 12.5 | 0.035 | -- | 8.42 |

| Medium (2) | 5.6 | 0.050 | 1.43 (h2/h1) | 7.89 |

| Coarse (3) | 2.8 | 0.071 | 1.42 (h3/h2) | 6.97 |

Table 2: GCI Calculation Results

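| Grid Pair Comparison | Absolute Difference in φ Between Grids | Apparent Order p | Relative GCI (%) (F_s=1.25) |

|---|---|---|---|

| Fine-Medium (21) | 0.53 Pa | 1.61 | 10.2% |

| Medium-Coarse (32) | 0.92 Pa | 1.61 | 19.2% |

Asymptotic check: \( GCI_{32} / (r_{21}^p \, GCI_{21}) \) = 1.07, close to 1.0, so the solutions are approximately in the asymptotic range. The apparent order p is a single value obtained from all three grids in Table 1, and each GCI is normalized by the finer-grid value of φ.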

Workflow and Conceptual Diagrams

Diagram: GCI Calculation and Verification Workflow

Diagram: Role of GCI in Computational Biomechanics V&V

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents & Computational Tools for GCI Studies in Biomechanics

| Item/Category | Example/Specification | Primary Function in GCI Study |

|---|---|---|

| Mesh Generation Software | ANSYS ICEM CFD, Simvascular, Gmsh | Creates the geometrically consistent, high-quality coarse, medium, and fine grids required for the study. |

| Solver Platform | OpenFOAM, FEBio, ANSYS Fluent, Abaqus | Executes the numerical simulation on each grid. Must have robust, consistent convergence controls. |

| Benchmark Experimental Data | Particle Image Velocimetry (PIV) results, Digital Image Correlation (DIC) strain maps. | Serves as a validation target after GCI-based verification; used to assess total model error. |

| Scripting Environment | Python (NumPy, SciPy), MATLAB | Automates the extraction of solution variables, calculation of p and GCI, and generation of plots/tables. |

| High-Performance Computing (HPC) Cluster | Multi-core nodes with large memory. | Provides the computational resources to run high-fidelity fine-grid simulations in a reasonable time. |

| Uncertainty Quantification (UQ) Library | DAKOTA, Uncertainpy. | (Advanced) Can be integrated to propagate input parameter uncertainties alongside discretization error. |

Within the broader thesis on verification and validation (V&V) in computational biomechanics, validation is the process of determining the degree to which a computational model accurately represents the real-world biological system it is intended to simulate. This guide details the systematic, multi-fidelity experimental pathway required to gather empirical data for robust model validation, progressing from controlled in vitro benchtop tests to complex in vivo data acquisition.

The Validation Hierarchy: A Tiered Experimental Strategy

Effective validation follows a hierarchical approach, where data from simpler, highly-controlled experiments inform and build confidence for comparisons against data from more complex, physiological systems.

Diagram 1: The Three-Tier Validation Hierarchy

Tier 1: Benchtop & In Vitro Validation Protocols

This tier focuses on isolating and testing individual components or mechanisms of the biomechanical system.

Key Experimental Methodologies

Biaxial/Tensile Material Testing:

- Protocol: Tissue samples (e.g., arterial strips, tendon fascicles) are mounted in a mechanical testing system. Samples are preconditioned via 10-15 load cycles to a nominal stress. Subsequently, a displacement-controlled ramp-to-failure test or a force-controlled stress-relaxation test is performed. Strain is measured via digital image correlation (DIC) or machine crosshead displacement (with grip compliance accounted for). Force is measured via a load cell.

- Data Output: Stress-strain curves, ultimate tensile strength, elastic modulus (from linear region), strain at failure.

Rheometry of Biofluids and Soft Tissues:

- Protocol: Using a parallel-plate or cone-and-plate rheometer, a small sample of fluid (e.g., synovial fluid, blood plasma) or soft tissue (e.g., hydrogel, cartilage) is subjected to oscillatory shear. A frequency sweep (0.1-100 Hz) at a fixed, small strain (within the linear viscoelastic region) is performed to characterize viscoelastic properties.

- Data Output: Storage modulus (G'), loss modulus (G''), complex viscosity (η*) as functions of frequency.
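For a single frequency in the linear viscoelastic region, the reported moduli follow from the stress amplitude, strain amplitude, and phase lag: G' = (σ₀/γ₀) cos δ, G'' = (σ₀/γ₀) sin δ, and |η*| = |G*|/ω. The sketch below applies these standard relations; the function name and the input values are illustrative assumptions, not measurements.

```python
import math

def viscoelastic_moduli(stress_amp_pa, strain_amp, phase_lag_rad, freq_hz):
    """Storage modulus, loss modulus, and complex viscosity magnitude."""
    g_star = stress_amp_pa / strain_amp           # |G*| in Pa
    g_storage = g_star * math.cos(phase_lag_rad)  # in-phase (elastic) part
    g_loss = g_star * math.sin(phase_lag_rad)     # out-of-phase (viscous) part
    omega = 2.0 * math.pi * freq_hz               # angular frequency, rad/s
    eta_star = g_star / omega                     # |eta*| in Pa.s
    return g_storage, g_loss, eta_star

# Illustrative inputs: 0.5 Pa stress amplitude, 5% strain, 30 degree lag, 1 Hz.
g_storage, g_loss, eta_star = viscoelastic_moduli(0.5, 0.05, math.radians(30.0), 1.0)
print(f"G' = {g_storage:.3f} Pa, G'' = {g_loss:.3f} Pa, |eta*| = {eta_star:.3f} Pa.s")
```

Repeating the calculation at each frequency of the sweep yields the G'(f) and G''(f) curves against which a viscoelastic constitutive model can be compared.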

Cell Mechanotransduction Assay:

- Protocol: Cells are seeded on flexible membrane substrates (e.g., in a Flexcell system). The membranes are subjected to cyclic equibiaxial strain (e.g., 10% strain, 1 Hz). After a defined period (e.g., 6, 24, 48h), cells are fixed and stained for cytoskeletal markers (F-actin), focal adhesion proteins (vinculin), or nuclei. Alternatively, cells are lysed for Western blot analysis of signaling molecules (e.g., phosphorylated FAK, ERK1/2).

- Data Output: Fluorescence images for morphological analysis, quantitative protein expression/phosphorylation levels.

Signaling Pathway in Mechanotransduction

A common pathway validated in Tier 1 experiments linking mechanical stimulus to cellular response.

Diagram 2: Simplified Mechanotransduction Pathway

The Scientist's Toolkit: Tier 1 Research Reagents & Materials

| Item | Function & Explanation |

|---|---|

| Polyacrylamide Hydrogels | Tunable-stiffness 2D or 3D substrates for cell culture that mimic tissue mechanical properties. Coated with ECM proteins (e.g., collagen, fibronectin) for cell adhesion. |

| Fluorescent Beads (µm & nm) | Used as tracers for Digital Image Correlation (DIC) in material testing or for particle image velocimetry (PIV) in fluid flow studies. |

| Phospho-Specific Antibodies | Essential for Western blotting or immunofluorescence to detect activated (phosphorylated) signaling proteins in mechanotransduction pathways (e.g., p-FAK, p-ERK). |

| Silicone-based Elastomers (PDMS) | Used to fabricate microfluidic devices for shear stress studies or to create substrates with micropatterned geometry to control cell shape and adhesion. |

| Fluorescently-labeled Phalloidin | Binds specifically to filamentous actin (F-actin), allowing visualization of the cytoskeletal architecture in response to mechanical cues. |

Tier 2: Ex Vivo & Simple Organism Validation

This tier introduces higher biological complexity while retaining significant experimental control.

Key Experimental Methodologies

Isolated Organ Perfusion (Langendorff System for Heart):

- Protocol: An animal heart is excised and the aorta is cannulated. Retrograde perfusion with oxygenated, buffered physiological solution (Krebs-Henseleit) at constant pressure (e.g., 80 mmHg) is initiated. A fluid-filled balloon is inserted into the left ventricle and connected to a pressure transducer. The balloon volume is incrementally increased (preload), and corresponding ventricular pressure is recorded to generate pressure-volume loops. A force transducer can be attached to the apex to measure contractile force.

- Data Output: Left ventricular pressure, dP/dt_max (contractility), end-systolic pressure-volume relationship (ESPVR), stroke work.

Organ-on-a-Chip (OoC) Microphysiological System:

- Protocol: A microfluidic device containing patterned human endothelial and parenchymal cell layers (e.g., lung alveolar barrier, liver sinusoid) is connected to perfusion pumps. Defined mechanical cues (cyclic stretch, fluid shear) are applied. Permeability is assessed by measuring the transport of fluorescent dextran across the endothelial barrier. Effluent can be sampled for metabolic markers (e.g., albumin, urea for liver chips).

- Data Output: Real-time trans-endothelial electrical resistance (TEER), analyte permeability coefficients, metabolic product concentration over time.

Table 1: Representative Quantitative Data from Validation Tiers

| Tier | Experiment Type | Key Measurable Parameters | Typical Values (Example) |

|---|---|---|---|

| Tier 1 | Tensile Test (Arterial Tissue) | Ultimate Tensile Strength, Elastic Modulus, Failure Strain | 1.5 - 4.0 MPa, 1.0 - 10.0 MPa, 50 - 150% |

| Tier 1 | Oscillatory Rheology (Synovial Fluid) | Storage Modulus G' (at 1 Hz), Loss Modulus G'' (at 1 Hz) | 2 - 10 Pa, 1 - 5 Pa |

| Tier 1 | Cyclic Strain on Fibroblasts | p-ERK/Total ERK Ratio Increase | 2.5 - 5.0 fold over static control |

| Tier 2 | Isolated Heart (Rat, Langendorff) | Left Ventricular Developed Pressure, +dP/dt_max | 80-120 mmHg, 2000-4000 mmHg/s |

| Tier 2 | Lung-on-a-Chip (with breathing) | TEER (Ω*cm²), Albumin Permeability (P_app) | >1000 Ω*cm², < 1 x 10⁻⁶ cm/s |

Tier 3: In Vivo & Clinical Data Acquisition

This tier provides the most physiologically relevant data for final model validation but is subject to biological variability.

Key Data Acquisition Methodologies

Medical Imaging for Geometry & Motion:

- Protocol (Cardiac MRI): Human or animal subject is scanned using a cine MRI sequence (e.g., balanced Steady-State Free Precession). Multiple short-axis slices from base to apex and long-axis slices are acquired throughout the cardiac cycle. For tissue tagging, spatial modulation of magnetization (SPAMM) sequences create a grid on the myocardium that deforms with motion.

- Data for Validation: 3D time-resolved geometry of ventricles, wall thickening, ejection fraction, and (from tagging) regional strain tensors (circumferential, radial, longitudinal).

In Vivo Pressure-Volume Loop Catheterization:

- Protocol (Rodent): Under anesthesia, a pressure-volume catheter (e.g., Millar) is inserted into the left ventricle via the carotid artery or apical puncture. Data is acquired at steady-state and during transient preload reduction (inferior vena cava occlusion) to derive load-independent indices of contractility.

- Data for Validation: Real-time pressure and volume, stroke volume, cardiac output, end-systolic elastance (Ees), arterial elastance (Ea).

Telemetric Biopotential & Pressure Monitoring:

- Protocol: An implantable telemetry device is surgically placed in a large animal (e.g., dog, pig). Electrodes are positioned for ECG, and a pressure catheter is placed in a target artery or ventricle. The device transmits data continuously to an external receiver.

- Data for Validation: Long-term, ambulatory hemodynamic pressure waveforms (e.g., arterial, pulmonary), heart rate, activity, and their dynamic changes in response to drugs or stressors.

Integrated Validation Workflow

Diagram 3: Multi-Tier Validation Data Integration

A rigorous validation campaign for computational biomechanics models requires a strategic, multi-tiered experimental plan. Data acquired from benchtop material tests (Tier 1) provides fundamental constitutive properties. Tier 2 experiments on isolated organs or microphysiological systems offer insights into integrated tissue and organ-level responses under controlled conditions. Finally, in vivo and clinical data (Tier 3) serve as the gold standard for assessing the model's predictive capability in the full physiological context. This hierarchical approach, systematically comparing model outputs against quantitative experimental data at each level, is essential for establishing a model's credibility and defining its domain of applicability within the broader V&V framework.

Verification and Validation (V&V) constitute the foundational pillars of credibility in computational biomechanics research. Verification asks, "Are we solving the equations correctly?"—a process of checking the numerical solution against benchmarks. Validation asks, "Are we solving the correct equations?"—assessing the model's ability to predict real-world biomechanical phenomena. This guide details the quantitative metrics essential for both phases, providing the rigorous, objective measures needed to transition a computational model from a conceptual tool to a trusted asset in scientific discovery and drug development.

Core Quantitative Metrics

The evaluation of computational models against experimental or clinical data relies on three primary classes of metrics.

Correlation Metrics

These assess the strength and direction of a linear relationship between model predictions (P) and reference/experimental data (E).

| Metric | Formula | Interpretation | Ideal Value | Use Case in Biomechanics |

|---|---|---|---|---|

| Pearson's r | $$ r = \frac{\sum_{i=1}^n (P_i - \bar{P})(E_i - \bar{E})}{\sqrt{\sum_{i=1}^n (P_i - \bar{P})^2}\sqrt{\sum_{i=1}^n (E_i - \bar{E})^2}} $$ | Linear correlation strength | ±1 | Comparing strain fields from FEA vs. Digital Image Correlation. |

| Coefficient of Determination (R²) | $$ R^2 = 1 - \frac{SS_{res}}{SS_{tot}} $$ | Proportion of variance explained | 1 | Evaluating predictive power of a pharmacokinetic model for drug concentration. |

| Spearman's ρ | $$ \rho = 1 - \frac{6 \sum d_i^2}{n(n^2 - 1)} $$ | Monotonic relationship (rank-based) | ±1 | Comparing ordinal data (e.g., tissue damage scores). |
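The three correlation metrics can be sketched directly from the formulas in the table. Assumptions in this sketch: NumPy only, R² computed with the model predictions as the "fit" (so it can be negative), and the Spearman shortcut formula applied without tie handling. The arrays are made-up illustrative values.

```python
import numpy as np

def pearson_r(p, e):
    p, e = np.asarray(p, dtype=float), np.asarray(e, dtype=float)
    dp, de = p - p.mean(), e - e.mean()
    return float(np.sum(dp * de) / np.sqrt(np.sum(dp**2) * np.sum(de**2)))

def r_squared(p, e):
    p, e = np.asarray(p, dtype=float), np.asarray(e, dtype=float)
    ss_res = np.sum((e - p) ** 2)          # residual sum of squares
    ss_tot = np.sum((e - e.mean()) ** 2)   # total sum of squares of the data
    return float(1.0 - ss_res / ss_tot)

def spearman_rho(p, e):
    # Rank via double argsort; valid only when there are no tied values.
    rank_p = np.argsort(np.argsort(p))
    rank_e = np.argsort(np.argsort(e))
    d = rank_p - rank_e
    n = len(d)
    return float(1.0 - 6.0 * np.sum(d**2) / (n * (n**2 - 1)))

e = np.array([1.0, 2.0, 3.0, 4.0, 5.0])   # experimental values (illustrative)
p = np.array([1.1, 1.9, 3.2, 3.9, 5.1])   # model predictions (illustrative)
print(pearson_r(p, e), r_squared(p, e), spearman_rho(p, e))

# A prediction that is perfectly correlated but twice too large:
print(pearson_r(2 * e, e), r_squared(2 * e, e))
```

The second call illustrates why correlation alone is insufficient for validation: a model that overpredicts by a factor of two still has r = 1, while R² against the identity line is strongly negative.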

Error Norms

These quantify the magnitude of difference between prediction and observation vectors, providing direct measures of accuracy.

| Metric (Norm) | Formula | Description & Sensitivity | Units |

|---|---|---|---|

| Mean Absolute Error (MAE) / L1 Norm | $$ MAE = \frac{1}{n} \sum_{i=1}^n \lvert P_i - E_i \rvert $$ | Average magnitude of error, robust to outliers. | Same as data. |

| Root Mean Square Error (RMSE) / L2 Norm | $$ RMSE = \sqrt{ \frac{1}{n} \sum_{i=1}^n (P_i - E_i)^2 } $$ | Root of average squared error, sensitive to large errors. | Same as data. |

| Normalized RMSE (NRMSE) | $$ NRMSE = \frac{RMSE}{E_{max} - E_{min}} $$ | RMSE normalized by data range, enables cross-study comparison. | Dimensionless or %. |

| Maximum Absolute Error (MaxAE) / L∞ Norm | $$ MaxAE = \max_i \lvert P_i - E_i \rvert $$ | Worst-case error in the dataset. Critical for safety-critical applications. | Same as data. |
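A minimal sketch of the four error norms, assuming paired prediction and experimental arrays of equal length; the function name `error_norms` and the sample values are illustrative only.

```python
import numpy as np

def error_norms(p, e):
    """MAE, RMSE, range-normalized RMSE, and maximum absolute error."""
    p, e = np.asarray(p, dtype=float), np.asarray(e, dtype=float)
    diff = p - e
    rmse = float(np.sqrt(np.mean(diff**2)))
    return {
        "mae": float(np.mean(np.abs(diff))),
        "rmse": rmse,
        "nrmse": rmse / float(e.max() - e.min()),  # normalized by data range
        "max_ae": float(np.max(np.abs(diff))),
    }

e = np.array([1.0, 2.0, 3.0, 4.0, 5.0])   # experimental values (illustrative)
p = np.array([1.1, 1.9, 3.2, 3.9, 5.1])   # model predictions (illustrative)
norms = error_norms(p, e)
print(norms)
```

Because RMSE squares the differences, it is always at least as large as MAE, and a large gap between the two indicates that a few points dominate the error.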

Validation Metrics

Advanced metrics that combine aspects of error and agreement, often used for formal model validation.

| Metric | Formula | Threshold for Validation | Application Example |

|---|---|---|---|

| Mean Absolute Percentage Error (MAPE) | $$ MAPE = \frac{100\%}{n} \sum_{i=1}^n \left| \frac{E_i - P_i}{E_i} \right| $$ | Case-dependent (e.g., < 20%). | Validating predicted joint reaction forces in gait analysis. |

| Bland-Altman Limits of Agreement | $$ Bias = \mu_{P-E}; \ LoA = Bias \pm 1.96\,\sigma_{P-E} $$ | Agreement interval must be within clinically acceptable difference. | Assessing agreement between simulated and measured blood flow velocities. |

| Correlation-Error Score (C-ES) | $$ C\text{-}ES = \frac{NRMSE}{1 + r} $$ | Lower is better. Composite score balancing error and correlation. | Holistic model performance ranking in multi-model studies. |
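MAPE and the Bland-Altman limits can be sketched as follows. The sketch assumes all experimental values are non-zero (MAPE is undefined otherwise) and uses the sample standard deviation of the differences; function names and data are illustrative.

```python
import numpy as np

def mape(p, e):
    """Mean absolute percentage error; requires non-zero experimental values."""
    p, e = np.asarray(p, dtype=float), np.asarray(e, dtype=float)
    if np.any(e == 0.0):
        raise ValueError("MAPE is undefined when an experimental value is zero.")
    return float(100.0 * np.mean(np.abs((e - p) / e)))

def bland_altman(p, e):
    """Bias and 95% limits of agreement of the differences P - E."""
    d = np.asarray(p, dtype=float) - np.asarray(e, dtype=float)
    bias = float(np.mean(d))
    sd = float(np.std(d, ddof=1))
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

e = np.array([1.0, 2.0, 3.0, 4.0, 5.0])   # experimental values (illustrative)
p = np.array([1.1, 1.9, 3.2, 3.9, 5.1])   # model predictions (illustrative)
mape_pct = mape(p, e)
bias, loa_low, loa_high = bland_altman(p, e)
print(f"MAPE = {mape_pct:.2f}%, bias = {bias:.3f}, LoA = [{loa_low:.3f}, {loa_high:.3f}]")
```

The limits of agreement are then compared against the clinically acceptable difference chosen for the application, which has to be set independently of the data.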

Experimental Protocols for Metric Computation

A standardized workflow ensures reproducibility and fair comparison.

Protocol 1: Comparative Analysis of Soft Tissue Stress-Strain Predictions

- Objective: Validate a hyperelastic tissue model against biaxial tensile test data.

- Materials: See "The Scientist's Toolkit" (Section 5.0).

- Methodology:

- Data Acquisition: Perform biaxial tests on porcine heart valve leaflet (n=10). Record engineering stress (σ_exp) and strain (ε_exp) fields via digital image correlation.

- Simulation: Replicate test boundary conditions in an FEA model using the proposed constitutive law.

- Spatial Registration: Map simulation nodes to corresponding DIC measurement points using nearest-neighbor interpolation.

- Metric Computation: Calculate metrics for the stress field at peak strain.

- Compute r and R² for full-field stress values.

- Compute NRMSE for stress values, normalized by the experimental range.

- Generate a Bland-Altman plot: X-axis = average of simulated and experimental stress; Y-axis = difference.

- Validation Decision: If NRMSE < 15% AND r > 0.9 AND Bland-Altman bias is not statistically significant (p>0.05), the model is considered validated for this loading mode.

Protocol 2: Time-Series Validation of Drug Concentration in a Compartmental PK/PD Model

- Objective: Validate a pharmacokinetic model predicting plasma drug concentration.

- Methodology:

- In Vivo Experiment: Administer drug to animal model (n=8). Collect plasma samples at t=[0, 5, 15, 30, 60, 120, 240, 480] minutes. Measure concentration via LC-MS.

- Model Execution: Run simulation with identical initial conditions and physiological parameters.

- Temporal Alignment: Align simulation time-zero with administration time.

- Metric Computation:

- Calculate MAE and MAPE across all time points for each subject.

- Compute subject-specific R².

- Calculate the Normalized Cross-Correlation (for temporal phase alignment) where τ is time lag: $$ NCC(\tau) = \frac{\sum_t P(t) \cdot E(t-\tau)}{\sqrt{\sum_t P(t)^2 \cdot \sum_t E(t-\tau)^2}} $$

- Validation Decision: Model passes if group mean MAPE < 25% and mean R² > 0.85 across all subjects.
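The normalized cross-correlation step can be sketched for integer sample lags. This assumes both series have first been resampled onto a common, uniformly spaced time grid (the sampling times in the protocol are not uniform), and it sums only over the overlapping samples at each lag. The concentration curve is synthetic, and the "prediction" is the same curve delayed by two samples, so the peak is expected at a lag of 2.

```python
import numpy as np

def ncc(p, e, lag):
    """Normalized cross-correlation of P(t) with E(t - lag), lag in samples."""
    p, e = np.asarray(p, dtype=float), np.asarray(e, dtype=float)
    n = len(p)
    if lag >= 0:
        p_seg, e_seg = p[lag:], e[:n - lag]
    else:
        p_seg, e_seg = p[:n + lag], e[-lag:]
    return float(np.sum(p_seg * e_seg)
                 / np.sqrt(np.sum(p_seg**2) * np.sum(e_seg**2)))

# Synthetic concentration-time curve on a uniform 5-minute grid.
t = np.linspace(0.0, 480.0, 97)
e = t * np.exp(-t / 60.0)

# Synthetic prediction: identical shape, delayed by two samples (10 minutes).
p = np.zeros_like(e)
p[2:] = e[:-2]

lags = list(range(-5, 6))
scores = [ncc(p, e, k) for k in lags]
best_lag = lags[int(np.argmax(scores))]
print("best lag (samples):", best_lag, " NCC at best lag:", max(scores))
```

A non-zero best lag signals a timing offset between model and experiment that pointwise metrics such as MAE would otherwise report as amplitude error.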

Visualization of Methodologies

Diagram: V&V Metric Computation Workflow

Diagram: Metric Categories & Synthesis

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Biomechanics V&V |

|---|---|

| Biaxial/Triaxial Testing System | Applies controlled multi-axial loads to biological tissue specimens to generate mechanical property data for model calibration and validation. |

| Digital Image Correlation (DIC) System | Non-contact optical method to measure full-field 3D displacements and strains on tissue or implant surfaces during experimentation. |

| Micro-CT/MRI Scanner | Provides high-resolution 3D geometry and, in some cases, material property data (e.g., bone density) for generating anatomically accurate computational meshes. |

| Force Plates & Motion Capture | Gold standard for acquiring in vivo kinetic and kinematic data (e.g., gait analysis) used to drive and validate musculoskeletal simulations. |

| Polyacrylamide Gel Substrates | Tunable-stiffness substrates for cell mechanobiology studies, validating models of cellular force transduction and migration. |

| Fluorescent Microspheres & µPIV | Enables Particle Image Velocimetry in microfluidic devices or transparent tissues to map flow fields for validating CFD models of blood or interstitial flow. |

| LC-MS/MS Platform | Quantifies drug and metabolite concentrations in biological fluids with high sensitivity for pharmacokinetic/pharmacodynamic (PK/PD) model validation. |

| Finite Element Software (FEBio, Abaqus) | Open-source and commercial platforms for implementing and solving biomechanics boundary value problems. |

In computational biomechanics research, Verification and Validation (V&V) provide the foundational framework for establishing model credibility. Verification asks, "Are we solving the equations correctly?" while Validation asks, "Are we solving the correct equations?" Uncertainty Quantification (UQ) is the critical bridge between these two pillars. It systematically characterizes and propagates the effects of various uncertainties—input, parametric, and model-form—on model predictions. This rigorous process transforms a deterministic simulation into a probabilistic statement, which is essential for risk-informed decision-making in applications like implant design, surgical planning, and drug delivery system development.

Core Components of Uncertainty in Computational Biomechanics

Uncertainty is an inherent feature of computational models. A model prediction Y can be written as Y = M(X, θ, δ), where M is the computational model and:

- X represents Input Uncertainty.

- θ represents Parametric Uncertainty.

- δ represents Model-Form/Structural Uncertainty.

Input Uncertainty

Input uncertainty arises from variability and errors in the model's boundary conditions, initial conditions, and geometric representations.

- Examples in Biomechanics: Inter-subject anatomical variability (bone geometry, tissue dimensions), in-vivo loading conditions (gait, blood pressure cycles), and imaging-derived inputs (spatial resolution, segmentation thresholds from CT/MRI).

- Quantification: Often characterized empirically using population studies or measurement error distributions.

Parametric Uncertainty

Parametric uncertainty stems from imperfect knowledge of the model's physical or constitutive parameters.

- Examples in Biomechanics: Material properties (Young's modulus of bone, permeability of cartilage, viscosity of blood), physiological parameters (arterial wall thickness, muscle activation coefficients), and kinetic constants in drug transport models.

- Quantification: Characterized via Bayesian inference from experimental data or through literature reviews reporting mean ± standard deviation.

Model-Form Uncertainty

Model-form (or structural) uncertainty is the most challenging type, arising from the inevitable simplifications, approximations, and missing physics in the mathematical model itself.

- Examples in Biomechanics: Choosing a linear elastic vs. hyperelastic material model for soft tissue, using a porous media assumption for bone, neglecting micro-scale interactions in blood flow, or using a compartmental vs. a spatially resolved model for drug distribution.

- Quantification: Addressed by comparing predictions from multiple competing model structures (multi-model inference) or using discrepancy functions (model error emulators).

Methodologies for Uncertainty Integration and Propagation

A robust UQ workflow integrates all three uncertainty types to produce a probabilistic prediction.

Experimental Protocols for Data-Driven UQ

Protocol 1: Bayesian Calibration for Parametric Uncertainty

- Define Prior Distributions: Assign probability distributions (e.g., normal, log-normal, uniform) to uncertain parameters θ based on literature or expert knowledge.

- Acquire Observational Data: Perform controlled biomechanical experiments (e.g., tensile testing of tissue samples, pressure-flow measurements in segmented arteries) to collect calibration data D.

- Construct Likelihood Function: Formulate a function P(D | θ) that describes the probability of observing the data given specific parameter values, incorporating measurement error.

- Apply Bayes' Theorem: Compute the posterior distribution P(θ | D) ∝ P(D | θ) P(θ) by sampling with Markov chain Monte Carlo (MCMC), e.g., Metropolis-Hastings or Hamiltonian Monte Carlo.

- Validate Posterior: Perform posterior predictive checks by comparing new model simulations using sampled θ against a separate set of validation data.
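The calibration steps above can be sketched with a random-walk Metropolis-Hastings sampler. This is a minimal illustration under stated assumptions, not a production workflow: the data are synthetic, the forward model is a linear law σ = E·ε with a single parameter E, and the prior, noise level, and step size are hypothetical choices.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical calibration data D: stress (MPa) at prescribed strains,
# generated from sigma = E * strain with E_true = 1.2 MPa plus Gaussian noise.
strain = np.linspace(0.02, 0.20, 10)
E_true, noise_sd = 1.2, 0.01
stress_obs = E_true * strain + rng.normal(0.0, noise_sd, strain.size)

def log_prior(E):
    # Assumed prior: E ~ Normal(1.0, 0.5^2), restricted to positive values.
    if E <= 0.0:
        return -np.inf
    return -0.5 * ((E - 1.0) / 0.5) ** 2

def log_likelihood(E):
    # Gaussian measurement error with known standard deviation.
    resid = stress_obs - E * strain
    return -0.5 * np.sum((resid / noise_sd) ** 2)

def log_posterior(E):
    lp = log_prior(E)
    return lp + log_likelihood(E) if np.isfinite(lp) else -np.inf

def metropolis(n_steps=20000, step_sd=0.05, E0=1.0):
    chain = np.empty(n_steps)
    current, current_lp = E0, log_posterior(E0)
    for i in range(n_steps):
        proposal = current + rng.normal(0.0, step_sd)
        proposal_lp = log_posterior(proposal)
        # Accept with probability min(1, posterior ratio).
        if np.log(rng.uniform()) < proposal_lp - current_lp:
            current, current_lp = proposal, proposal_lp
        chain[i] = current
    return chain

chain = metropolis()
posterior = chain[5000:]  # discard burn-in samples
E_mean = posterior.mean()
E_lo, E_hi = np.percentile(posterior, [2.5, 97.5])
print(f"E posterior mean = {E_mean:.3f} MPa, 95% CI = [{E_lo:.3f}, {E_hi:.3f}] MPa")
```

For models with many parameters or expensive forward solves, a dedicated package such as Stan or PyMC would normally replace this hand-written sampler.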

Protocol 2: Model Discrepancy Emulation for Model-Form Uncertainty

- Define Model Ensemble: Develop a set of K candidate models {M₁, M₂, ..., M_K} representing different structural hypotheses.

- Gather High-Fidelity Data: Obtain benchmark data from highly controlled experiments or from higher-fidelity models (e.g., from a detailed FSI simulation or a small-scale in-vivo study).

- Train Gaussian Process (GP) Emulators: For each model Mₖ, run a designed set of simulations over its input/parameter space. Fit a GP surrogate model to interpolate the model output and a separate GP to model the systematic discrepancy between Mₖ's predictions and the high-fidelity data.

- Bayesian Model Averaging (BMA): Compute the posterior probability (weight) wₖ = P(Mₖ | D) for each model given the data. The integrated predictive distribution is the weighted average P(Y | D) = Σₖ wₖ P(Y | Mₖ, D), where the sum runs over k = 1, ..., K and the weights sum to one.
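The averaging step can be illustrated with a short sketch. It assumes that a log marginal likelihood (log evidence) and a predictive mean and variance are already available for each candidate model. All numbers below are hypothetical placeholders, and the function names are illustrative only.

```python
import numpy as np

def bma_weights(log_evidence, prior=None):
    """Posterior model probabilities, w_k proportional to P(D | M_k) P(M_k)."""
    log_evidence = np.asarray(log_evidence, dtype=float)
    if prior is None:
        # Assumed default: equal prior probability for every model.
        prior = np.full(log_evidence.size, 1.0 / log_evidence.size)
    log_w = log_evidence + np.log(prior)
    log_w -= log_w.max()  # shift to avoid underflow in exp
    w = np.exp(log_w)
    return w / w.sum()

def bma_moments(weights, means, variances):
    """Mean and variance of the mixture sum_k w_k P(Y | M_k, D)."""
    weights, means, variances = map(np.asarray, (weights, means, variances))
    mean = np.sum(weights * means)
    # Total variance = within-model variance + between-model spread.
    var = np.sum(weights * (variances + (means - mean) ** 2))
    return mean, var

# Hypothetical log evidences and per-model predictions of a scalar QoI.
log_ev = [-52.1, -50.3, -55.8]
pred_mean = [140.0, 145.0, 130.0]
pred_var = [9.0, 16.0, 25.0]

w = bma_weights(log_ev)
mean, var = bma_moments(w, pred_mean, pred_var)
print("model weights:", np.round(w, 3))
print(f"BMA mean = {mean:.1f}, BMA std = {np.sqrt(var):.1f}")
```

The between-model term in the variance is what carries the model-form uncertainty: it vanishes only when all candidate models agree on the prediction.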

Propagation and Sensitivity Analysis

- Sampling Methods: Use Latin Hypercube Sampling (LHS) or Sobol sequences for efficient propagation of combined uncertain inputs (X) and parameters (θ) through the model.

- Global Sensitivity Analysis: Employ variance-based methods (Sobol indices) to apportion the total variance in the output Y to the different uncertain sources. This identifies which input/parameter uncertainties are most influential and which model components contribute most to model-form uncertainty.
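Both steps can be sketched on a deliberately simple stand-in model. The example below assumes a thin-walled (Laplace) hoop stress σ = P·r/t, treats blood pressure and wall thickness as uniform over assumed ranges, and fixes a hypothetical radius. The Latin Hypercube sampler and the Saltelli-type Sobol estimators are written out in NumPy for transparency; a library such as SALib or Chaospy would usually be used instead.

```python
import numpy as np

rng = np.random.default_rng(42)
MMHG_TO_KPA = 0.133322
RADIUS_MM = 4.0  # hypothetical lumen radius, chosen only for illustration

# Assumed uniform ranges for [pressure (mmHg), wall thickness (mm)].
lower = np.array([100.0, 0.8])
upper = np.array([140.0, 1.2])

def hoop_stress(x):
    """Thin-walled hoop stress in kPa; x has columns [pressure, thickness]."""
    return x[:, 0] * MMHG_TO_KPA * RADIUS_MM / x[:, 1]

def latin_hypercube(n, d):
    """One sample per stratum [k/n, (k+1)/n) in each dimension."""
    u = rng.uniform(size=(n, d))
    perms = np.column_stack([rng.permutation(n) for _ in range(d)])
    return (perms + u) / n

def scale(u):
    """Map unit-cube samples to the physical input ranges."""
    return lower + u * (upper - lower)

# Propagation: push a space-filling sample through the model.
y = hoop_stress(scale(latin_hypercube(2000, 2)))
y_lo, y_hi = np.percentile(y, [2.5, 97.5])
print(f"stress mean = {y.mean():.1f} kPa, 95% interval = [{y_lo:.1f}, {y_hi:.1f}] kPa")

def sobol_indices(model, n, d):
    """First-order and total Sobol indices from two independent sample matrices."""
    a = scale(rng.uniform(size=(n, d)))
    b = scale(rng.uniform(size=(n, d)))
    ya, yb = model(a), model(b)
    var_y = np.var(np.concatenate([ya, yb]))
    first, total = np.empty(d), np.empty(d)
    for i in range(d):
        ab = a.copy()
        ab[:, i] = b[:, i]  # replace only column i with values from b
        yab = model(ab)
        first[i] = np.mean(yb * (yab - ya)) / var_y
        total[i] = 0.5 * np.mean((ya - yab) ** 2) / var_y
    return first, total

S1, ST = sobol_indices(hoop_stress, 2 ** 14, 2)
for name, s1, st in zip(["pressure", "thickness"], S1, ST):
    print(f"{name}: first-order = {s1:.2f}, total = {st:.2f}")
```

Because this toy model is close to multiplicative with modest input ranges, the first-order indices should sum to nearly one; a large gap between first-order and total indices would indicate strong interactions.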

Table 1: Representative Uncertain Parameters in Arterial Wall Biomechanics

| Parameter | Typical Value (Mean) | Uncertainty (Std. Dev. or Range) | Source of Uncertainty | Primary Type |
|---|---|---|---|---|
| Young's Modulus (Artery) | 1.2 MPa | ± 0.4 MPa | Inter-subject variability, measurement technique | Parametric |
| Wall Thickness | 1.0 mm | ± 0.2 mm | Imaging resolution, anatomical location | Input |
| Blood Pressure (Systolic) | 120 mmHg | ± 20 mmHg (physiological range) | Physiological state, measurement | Input |
| Material Model Constant (c₁) | 0.15 MPa | 95% CI: [0.12, 0.18] MPa | Bayesian calibration from ex-vivo tests | Parametric |

Table 2: Comparison of UQ Methodologies

| Methodology | Best For | Computational Cost | Key Output |
|---|---|---|---|
| Monte Carlo Sampling | General propagation, non-linear models | Very High (requires 10³–10⁶ runs) | Full output distribution, statistics |
| Polynomial Chaos Expansion | Smooth models, moderate dimensions | Medium (requires ~10² runs for setup) | Analytic surrogate for statistics/Sobol indices |
| Bayesian Calibration (MCMC) | Inferring parameters from data | High (10⁴–10⁶ iterations) | Posterior parameter distributions |
| Gaussian Process Surrogates | Emulating expensive simulations | Low post-training (train on ~10² runs) | Fast prediction with uncertainty at new inputs |

Visualizing the Integrated UQ Workflow

[Figure: Integrated UQ Workflow for Computational Models]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for UQ in Computational Biomechanics

| Item | Function & Relevance | Example Product/Specification |
|---|---|---|
| High-Fidelity Experimental Data | Provides the "ground truth" for calibrating parametric uncertainty and assessing model-form discrepancy. | Biaxial tensile tester for soft tissues; Digital Image Correlation (DIC) systems for full-field strain measurement. |
| Bayesian Inference Software | Enables probabilistic calibration and model comparison, quantifying uncertainty in parameters. | Stan, PyMC3/PyMC, TensorFlow Probability (for custom MCMC/HMC sampling). |
| Surrogate Modeling Toolbox | Creates fast-running emulators of expensive simulations for efficient propagation and analysis. | GPy (Gaussian Processes in Python), scikit-learn, UQLab (MATLAB). |
| Global Sensitivity Analysis Library | Computes variance-based sensitivity indices to rank influence of uncertain inputs. | SALib (Python library for Sobol, Morris methods), UQLab. |
| Uncertainty Propagation Sampler | Generates efficient, space-filling samples from multivariate probability distributions. | Custom LHS/Sobol sequences via SciPy or Chaospy. |
| Multi-Model Framework | Manages ensembles of competing model structures for formal model-form UQ. | Custom scripting in Python/MATLAB implementing Bayesian Model Averaging (BMA). |

Overcoming Common Hurdles: Troubleshooting and Refining Your V&V Process

Within the framework of verification and validation (V&V) in computational biomechanics research, the credibility of simulations hinges on quantifying and controlling numerical errors. Verification ensures the mathematical model is solved correctly, while validation assesses the model's accuracy against physical reality. This section details the three core sources of numerical error (discretization, iteration, and round-off) and provides methodologies for identifying and mitigating each, which is essential for researchers and drug development professionals relying on in silico models.

Discretization Error

Discretization error arises from approximating continuous mathematical models (PDEs, ODEs) by a discrete numerical system.

Identification via Convergence Analysis

Perform systematic grid and time-step refinement.

Protocol: Spatial Convergence Study

- Define a key Quantity of Interest (QoI) (e.g., peak wall stress, fluid shear stress).

- Generate a sequence of at least three meshes with increasing refinement (e.g., by globally halving element size h).

- Run simulations on each mesh with iterative and round-off errors reduced to negligible levels.

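- Calculate the observed order of convergence, p = ln[(f_medium − f_coarse) / (f_fine − f_medium)] / ln(r) for a constant refinement ratio r, and extrapolate to h = 0 (Richardson extrapolation): f₀ ≈ f_fine + (f_fine − f_medium) / (rᵖ − 1).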

- The difference between the finest mesh solution and the extrapolated value estimates discretization error.

Table 1: Example Spatial Convergence Study for Arterial Wall Stress

| Mesh | Element Size h (mm) | QoI: Peak Stress (kPa) | Relative Error vs. Extrapolated Value (%) |
|---|---|---|---|
| Coarse | 0.80 | 125.6 | 12.4 |
| Medium | 0.40 | 138.2 | 3.6 |
| Fine | 0.20 | 142.1 | 0.9 |
| Extrapolated (h→0) | 0.00 | 143.4 | – |
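The convergence calculation can be reproduced from the three mesh results in the table. The sketch below assumes a constant refinement ratio r = 2 and monotone convergence; the function names are illustrative. With the tabulated values, the observed order comes out at roughly 1.7, while assuming the formal order p = 2 reproduces the tabulated extrapolate of 143.4 kPa.

```python
import math

def observed_order(f_coarse, f_medium, f_fine, r):
    """Observed order of convergence p for a constant refinement ratio r."""
    return math.log((f_medium - f_coarse) / (f_fine - f_medium)) / math.log(r)

def richardson(f_medium, f_fine, r, p):
    """Richardson estimate of the h -> 0 limit from the two finest meshes."""
    return f_fine + (f_fine - f_medium) / (r ** p - 1.0)

# Peak stress (kPa) on the coarse, medium, and fine meshes from the table.
f_coarse, f_medium, f_fine = 125.6, 138.2, 142.1
r = 2.0  # element size halved at each refinement level

p_obs = observed_order(f_coarse, f_medium, f_fine, r)
f0_obs = richardson(f_medium, f_fine, r, p_obs)  # uses the observed order
f0_formal = richardson(f_medium, f_fine, r, 2.0)  # assumes formal order p = 2

# Estimated relative discretization error of the fine-mesh solution.
err_fine_pct = 100.0 * abs(f0_formal - f_fine) / abs(f0_formal)

print(f"observed order p = {p_obs:.2f}")
print(f"extrapolated QoI (observed p) = {f0_obs:.1f} kPa")
print(f"extrapolated QoI (p = 2)      = {f0_formal:.1f} kPa")
print(f"fine-mesh error estimate      = {err_fine_pct:.1f} %")
```

The gap between the two extrapolates is itself informative: when the observed order falls short of the formal order, the meshes may not yet be fully in the asymptotic range, and the error estimate should be reported with that caveat.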

Mitigation Strategies

- Adaptive Mesh Refinement (AMR): Automatically refine mesh in regions of high solution gradient.

- Higher-Order Elements: Use quadratic or spectral elements to increase formal order of accuracy p.

- Time Integration Schemes: Select implicit/explicit schemes (e.g., Newmark-β, Generalized-α) balancing stability and accuracy for dynamic problems.

Iterative Error

Iterative error is the difference between the exact solution of the discretized system and the approximate solution obtained after a finite number of solver iterations.

Identification via Residual Monitoring

Monitor the normalized residual norm of the linear/nonlinear system.

Protocol: Iterative Solver Tolerance Setting

- For a linear system Ax = b, define the relative residual norm at iteration k: ‖b − Ax⁽ᵏ⁾‖ / ‖b‖.

- Run the solver (e.g., Conjugate Gradient, GMRES) and log the residual per iteration.

- Set the solver tolerance (τ_iter) significantly smaller than the estimated relative discretization error (ε_disc) to ensure iterative error does not dominate. A common rule is τ_iter ≤ 0.01 × ε_disc.
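The tolerance-setting protocol can be sketched with a hand-written Conjugate Gradient loop that logs the relative residual at every iteration. The test matrix (a small 1D Laplacian-type stiffness matrix) and the discretization error estimate ε_disc are hypothetical stand-ins for a real finite element system.

```python
import numpy as np

def conjugate_gradient(A, b, tol, max_iter=1000):
    """Unpreconditioned CG for SPD systems; returns x and the residual history."""
    x = np.zeros_like(b)
    r = b - A @ x
    p = r.copy()
    rs_old = r @ r
    b_norm = np.linalg.norm(b)
    history = [np.sqrt(rs_old) / b_norm]  # relative residual per iteration
    for _ in range(max_iter):
        if history[-1] <= tol:
            break
        Ap = A @ p
        alpha = rs_old / (p @ Ap)
        x += alpha * p
        r -= alpha * Ap
        rs_new = r @ r
        history.append(np.sqrt(rs_new) / b_norm)
        p = r + (rs_new / rs_old) * p
        rs_old = rs_new
    return x, history

# Hypothetical SPD test system: tridiagonal (-1, 2, -1) matrix.
n = 50
A = 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)
b = np.ones(n)

eps_disc = 1e-3  # assumed relative discretization error from a mesh study
tau_iter = 0.01 * eps_disc  # rule of thumb from the protocol

x, history = conjugate_gradient(A, b, tau_iter)
true_rel_res = np.linalg.norm(b - A @ x) / np.linalg.norm(b)
print(f"iterations = {len(history) - 1}, final relative residual = {history[-1]:.2e}")
print(f"recomputed relative residual = {true_rel_res:.2e}")
```

Recomputing the residual from b − Ax at the end is a cheap check that the recursively updated residual has not drifted from the true one.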

Table 2: Iterative Solver Performance for a Large-Scale FE Model

| Solver Type | Preconditioner | Target Tolerance | Iterations to Converge | Solve Time (s) |
|---|---|---|---|---|
| CG | Jacobi | 1E-3 | 2450 | 42.1 |
| CG | Incomplete Cholesky (IC) | 1E-5 | 650 | 15.7 |
| GMRES | ILU(2) | 1E-7 | 185 | 8.3 |

Mitigation Strategies

- Advanced Preconditioners: Use Incomplete Cholesky (IC), Algebraic Multigrid (AMG), or physics-based preconditioning.

- Tightening Tolerances: Systematically reduce τ_iter until the QoI change is below a target threshold.

- Nonlinear Solver Monitoring: For Newton-Raphson methods, ensure both residual and solution increment norms converge.
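The dual convergence check for Newton-Raphson can be sketched on a scalar problem. The example assumes a hypothetical stiffening spring with residual R(u) = k·u + c·u³ − F; the tolerances and parameter values are illustrative only.

```python
def newton(residual, jacobian, u0, rtol_res=1e-10, rtol_inc=1e-10, max_iter=50):
    """Newton-Raphson that requires BOTH residual and increment to converge."""
    u = float(u0)
    r0 = abs(residual(u)) or 1.0  # reference residual for normalisation
    for iteration in range(1, max_iter + 1):
        du = -residual(u) / jacobian(u)
        u += du
        res_ratio = abs(residual(u)) / r0
        inc_ratio = abs(du) / max(abs(u), 1e-300)
        print(f"iter {iteration}: |R|/|R0| = {res_ratio:.2e}, |du|/|u| = {inc_ratio:.2e}")
        if res_ratio < rtol_res and inc_ratio < rtol_inc:
            return u, iteration
    raise RuntimeError("Newton-Raphson did not converge")

# Hypothetical stiffening spring: k*u + c*u**3 = F.
k, c, F = 1.0, 0.5, 2.0
u_sol, n_iter = newton(
    residual=lambda u: k * u + c * u ** 3 - F,
    jacobian=lambda u: k + 3.0 * c * u ** 2,
    u0=0.0,
)
print(f"converged displacement u = {u_sol:.6f} after {n_iter} iterations")
```

Checking only the residual can declare convergence while the solution is still moving on a flat part of the response; checking only the increment can stall-stop far from equilibrium, which is why both norms are monitored.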

Round-off Error

Round-off error stems from the finite precision of floating-point arithmetic (typically IEEE 754 double-precision, ~16 decimal digits).

Identification via Precision Increment Studies

Protocol: Variable Precision Arithmetic Test

- Re-run identical simulations using single (32-bit), double (64-bit), and extended (80-bit or software-emulated) precision.

- Compare the resulting QoIs. A significant change indicates high sensitivity to round-off.

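- Analyze condition numbers of critical system matrices; a high condition number κ (>1E10) amplifies round-off. As a rule of thumb, about log₁₀(κ) significant digits are lost in a linear solve, so κ ≈ 1E10 leaves roughly six reliable digits in double precision.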
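A precision study of this kind can be sketched with NumPy by solving the same ill-conditioned system in single and double precision. Hilbert matrices are used here only as a convenient, well-known ill-conditioned test case; they are an assumption of this sketch and not a biomechanics model. Extended precision is omitted because its availability is platform-dependent.

```python
import numpy as np

def hilbert(n):
    """Hilbert matrix H[i, j] = 1 / (i + j + 1), a classic ill-conditioned case."""
    i = np.arange(1, n + 1)
    return 1.0 / (i[:, None] + i[None, :] - 1.0)

def solve_error(n, dtype):
    """Max error when solving H x = b in the given precision, with x_true = 1."""
    A = hilbert(n).astype(dtype)
    x_true = np.ones(n, dtype=dtype)
    b = A @ x_true
    x = np.linalg.solve(A, b)
    return float(np.max(np.abs(x - x_true)))

errors = {}
for n in (4, 6):
    cond = np.linalg.cond(hilbert(n))  # 2-norm condition number in float64
    err32 = solve_error(n, np.float32)
    err64 = solve_error(n, np.float64)
    errors[n] = (err32, err64)
    print(f"n = {n}: cond = {cond:.1e}, error(float32) = {err32:.1e}, "
          f"error(float64) = {err64:.1e}")
```

A QoI that changes visibly between the two precisions, as the solution does here for the larger matrix, signals that round-off is not negligible at the lower precision.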

Mitigation Strategies

- Algorithmic Stabilization: Use compensated summation (Kahan algorithm) for critical summations, and avoid subtracting nearly equal numbers.

- Well-Conditioned Formulations: Choose formulations with lower condition numbers (e.g., mixed formulations for incompressibility).

- Quadruple Precision: Reserve for ill-conditioned but small-scale critical calculations.
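Compensated (Kahan) summation, mentioned above, can be sketched in a few lines. The example sums one million copies of 0.1 in double precision and compares a naive running sum and the compensated sum against Python's correctly rounded `math.fsum`; the input data are purely illustrative.

```python
import math

def kahan_sum(values):
    """Compensated summation: carries the low-order bits lost at each addition."""
    total = 0.0
    comp = 0.0  # running compensation for lost low-order bits
    for v in values:
        y = v - comp
        t = total + y
        comp = (t - total) - y  # what was lost when forming t
        total = t
    return total

values = [0.1] * 1_000_000

naive = 0.0
for v in values:
    naive += v

kahan = kahan_sum(values)
reference = math.fsum(values)  # correctly rounded reference sum

print(f"naive error = {abs(naive - reference):.3e}")
print(f"Kahan error = {abs(kahan - reference):.3e}")
```

In a simulation code the same pattern applies to long accumulations such as energy balances or global force sums, where millions of small contributions are added to a large running total.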

Integrated V&V Workflow in Computational Biomechanics

The following diagram integrates error control within a broader V&V workflow, contextualizing it within computational biomechanics research.

Diagram 1: V&V & Error Sources Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Numerical Error Analysis