The Definitive V&V Framework for Biomechanical Models: A Step-by-Step Guide for Researchers and Drug Developers

This comprehensive guide demystifies the Verification and Validation (V&V) process essential for building credible biomechanical models in biomedical research and drug development.

Abstract

This comprehensive guide demystifies the Verification and Validation (V&V) process essential for building credible biomechanical models in biomedical research and drug development. It provides a structured framework, beginning with foundational principles and definitions, progressing through practical methodologies and implementation strategies. The article addresses common troubleshooting scenarios and optimization techniques to enhance model robustness. Finally, it details formal validation protocols and comparative analysis against benchmarks and clinical data. Designed for researchers, scientists, and drug development professionals, this guide aims to establish rigorous, reproducible, and regulatory-ready modeling practices.

Building a Solid Foundation: Core Principles and Critical Definitions in Biomechanical Model V&V

What is V&V? Demystifying Verification vs. Validation (ASME V&V 40)

In biomechanical modeling, which spans orthopedic implant design, cardiovascular device testing, and drug delivery system evaluation, the credibility of computational models is paramount. The Verification and Validation (V&V) framework provides a rigorous, standardized methodology for establishing that credibility. This guide, framed within a broader thesis on V&V processes for biomechanical models, explains the core principles as codified in the ASME V&V 40 standard, Assessing Credibility of Computational Modeling through Verification and Validation: Application to Medical Devices.

The primary objective of V&V is to establish credibility for a computational model within a specific context of use (COU). The COU defines the specific question the model is intended to answer and the associated decision-making risk, directly influencing the necessary level of V&V effort.

Core Definitions: Verification vs. Validation

Verification: The process of determining that a computational model accurately represents the underlying mathematical model and its solution. It answers the question: "Are we solving the equations correctly?"

- Code Verification: Ensuring the simulation software is free of coding errors.

- Calculation Verification: Assessing the numerical accuracy of the solution (e.g., mesh convergence, time-step independence).

Validation: The process of determining the degree to which a model is an accurate representation of the real world from the perspective of the intended uses. It answers the question: "Are we solving the correct equations?"

- This involves comparing model predictions with experimentally measured outcomes from physical systems.

The ASME V&V 40 Risk-Informed Credibility Assessment Framework

ASME V&V 40 introduces a risk-informed framework where the required rigor of V&V activities is scaled to the Model Influence and Decision Consequence associated with the COU.

Table 1: Risk-Informed Credibility Factor Assessment (Based on ASME V&V 40)

| Credibility Factor | Low Risk / Influence Scenario | High Risk / Influence Scenario |

|---|---|---|

| Verification | Basic mesh convergence study. | Comprehensive code and calculation verification, including uncertainty quantification (UQ). |

| Validation | Comparison to a limited set of bench test data. | Extensive validation across a wide range of conditions, including in vivo or clinical data where feasible. |

| Model Fidelity | Simplified 2D or linear model. | High-fidelity 3D, non-linear, multi-physics model. |

| Uncertainty Quantification | Qualitative discussion of uncertainties. | Quantitative UQ for both input parameters (aleatory/epistemic) and output results. |

The framework identifies Credibility Factors (e.g., Conceptual Model Adequacy, Verification, Validation, Input Data) that must be evaluated. The sufficiency of evidence for each factor is judged against a set of Credibility Assessment Scale metrics.

Detailed Methodologies for Key V&V Experiments

Experimental Protocol for Validation Bench Testing (Example: Stent Fatigue)

- Objective: Validate a computational fatigue damage model of a coronary stent against physical bench test data.

- Materials: Stent specimens (n=6), pulse duplicator test system, pressure sensors, high-cycle fatigue test machine.

- Methodology:

- Computational Simulation: Apply physiological pressure waveforms (80-120 mmHg, 1 Hz) to a finite element (FE) stent model. Predict stress cycles and fatigue safety factor.

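- Physical Experiment: Mount stents in a pulse duplicator simulating coronary anatomy. Subject them to the same pressure range for approximately 400 million cycles, the count commonly used to represent ~10 years in vivo at roughly 72 beats per minute. Bench tests are typically run at an accelerated frequency to reach this count in a practical time.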

- Comparison Metrics: Primary metrics are stent fracture location (qualitative) and number of cycles to failure (quantitative).

- Acceptance Criterion: The FE model must predict the fracture location correctly, and the predicted cycles to failure must fall within the 95% confidence interval of the experimental mean.
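
The quantitative half of this acceptance criterion can be sketched in a few lines. This is a minimal illustration, assuming a two-sided Student's t interval on the mean of the n=6 specimens. The cycle counts and the predicted value are hypothetical placeholders, not data from this guide, and the tabulated t critical values are hard-coded so the sketch needs only the standard library.

```python
import math
import statistics

# Two-sided 95% critical values of Student's t, keyed by degrees of freedom.
# Hard-coded from standard tables to avoid external dependencies.
T_CRIT_95 = {4: 2.776, 5: 2.571, 6: 2.447, 7: 2.365}


def mean_confidence_interval(samples):
    """Return (low, high) bounds of the 95% CI on the sample mean."""
    n = len(samples)
    mean = statistics.mean(samples)
    sem = statistics.stdev(samples) / math.sqrt(n)  # standard error of the mean
    half_width = T_CRIT_95[n - 1] * sem
    return mean - half_width, mean + half_width


def passes_acceptance(predicted, samples):
    """True if the model prediction lies inside the experimental 95% CI."""
    low, high = mean_confidence_interval(samples)
    return low <= predicted <= high


# Hypothetical cycles to failure, in millions of cycles (illustrative only).
experimental_cycles = [6.8, 7.4, 7.1, 8.0, 6.5, 7.6]
predicted_cycles = 7.3  # hypothetical FE prediction

ci_low, ci_high = mean_confidence_interval(experimental_cycles)
print(f"95% CI on mean: [{ci_low:.2f}, {ci_high:.2f}] million cycles")
print("Prediction accepted:", passes_acceptance(predicted_cycles, experimental_cycles))
```

The fracture-location comparison remains a qualitative check and is not covered by this sketch.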

Protocol for Verification (Mesh Convergence Study)

- Objective: Ensure numerical accuracy of a computational fluid dynamics (CFD) model of blood flow through an aneurysm.

- Methodology:

- Create 4 successive meshes with increasing element density (e.g., 1M, 2M, 4M, 8M elements).

- Solve for a key Quantity of Interest (QoI), such as Wall Shear Stress (WSS) at a specific location.

- Calculate the relative difference in the QoI between successive mesh refinements.

- Apply the Grid Convergence Index (GCI) method to estimate discretization error.

- Acceptance Criterion: The relative difference between the two finest meshes for all QoIs is <2%.

Table 2: Sample Mesh Convergence Study Data

| Mesh Refinement Level | Number of Elements | Peak Wall Shear Stress (Pa) | Relative Difference to Previous Mesh |

|---|---|---|---|

| Coarse | 1,000,000 | 8.5 | - |

| Medium | 2,000,000 | 9.8 | 15.3% |

| Fine | 4,000,000 | 10.1 | 3.1% |

| Extra Fine | 8,000,000 | 10.2 | 1.0% |
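
The relative differences in Table 2 and a fine-grid GCI estimate can be reproduced with a short script. This is a sketch under stated assumptions: the refinement ratio is taken as constant and equal to the cube root of the element-count ratio (a 3D mesh whose element count doubles at each level), the safety factor of 1.25 is the value commonly recommended for three-grid studies, and the function names are illustrative.

```python
import math


def relative_differences(values):
    """Percent change of each value relative to the previous (coarser) mesh."""
    return [abs(b - a) / abs(a) * 100.0 for a, b in zip(values, values[1:])]


def grid_convergence_index(f_coarse, f_medium, f_fine, r, safety_factor=1.25):
    """Observed order p and fine-grid GCI for three grids with constant ratio r."""
    eps_coarse = f_coarse - f_medium
    eps_fine = f_medium - f_fine
    ratio = eps_coarse / eps_fine
    if ratio <= 1.0:
        raise ValueError("Solutions are not converging monotonically")
    p = math.log(ratio) / math.log(r)          # observed order of accuracy
    rel_err = abs(eps_fine / f_fine)           # relative change on the finest pair
    gci = safety_factor * rel_err / (r ** p - 1.0)
    return p, gci


# Peak wall shear stress (Pa) from Table 2, coarse to extra fine.
wss = [8.5, 9.8, 10.1, 10.2]
r = 2.0 ** (1.0 / 3.0)  # assumed: element count doubles per level in 3D

diffs = relative_differences(wss)
p_obs, gci_fine = grid_convergence_index(wss[1], wss[2], wss[3], r)

print("Relative differences (%):", [round(d, 1) for d in diffs])
print(f"Observed order p = {p_obs:.2f}, fine-grid GCI = {gci_fine * 100:.2f}%")
```

For the Table 2 data, the observed order comes out well above the second order typical of common discretization schemes, which suggests the coarser meshes may not yet be in the asymptotic range. In that situation the GCI value should be read with caution rather than taken as a firm error bound.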

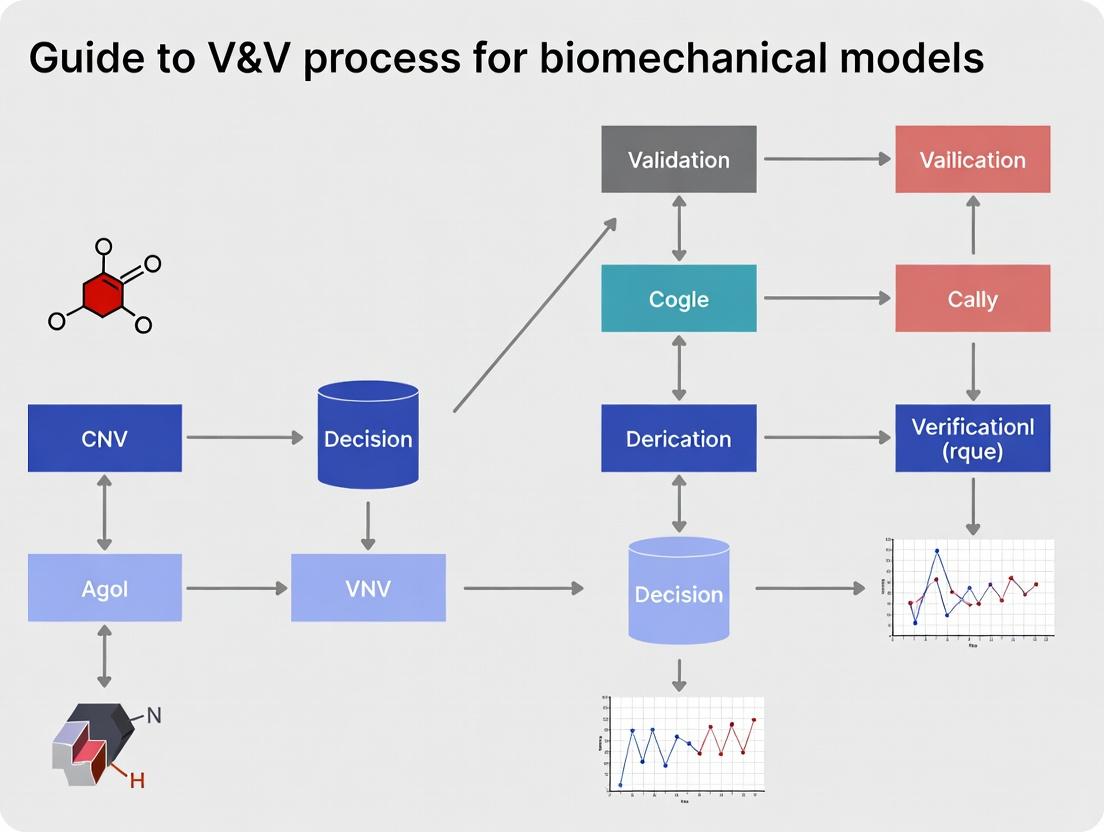

Visualization of the V&V 40 Process for Biomechanical Models

Figure: ASME V&V 40 Credibility Assessment Workflow

Figure: Logical Relationship: Verification vs. Validation

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Materials for Biomechanical Model V&V Experiments

| Item / Reagent | Function in V&V | Example Application |

|---|---|---|

| Polyurethane Vascular Phantoms | Mimic mechanical properties (compliance, modulus) of human blood vessels for in vitro validation. | Flow visualization and pressure measurement in CFD validation. |

| Silicone Heart Simulants | Provide anatomically accurate, compliant models for cardiac device testing. | Validating left atrial appendage occlusion device deployment simulations. |

| Biorelevant Test Fluids | Aqueous-glycerol or blood-mimicking fluids with matched viscosity and density. | Particle image velocimetry (PIV) experiments for hemodynamics model validation. |

| Strain-Gauge Rosettes | Measure multi-axial surface strains on physical specimens. | Validating finite element-predicted strain fields in bone-implant constructs. |

| Digital Image Correlation (DIC) Systems | Provide full-field, non-contact 3D deformation and strain mapping. | Core validation tool for soft tissue or complex structural mechanics models. |

| Micro-CT Imaging Contrast Agents | Enhance tissue contrast for high-resolution 3D imaging to create geometric models. | Generating anatomically accurate CAD models for simulation (input geometry). |

| Programmable Pneumatic Actuators | Deliver physiologically realistic loading profiles (force, pressure, displacement). | Cyclic loading of orthopaedic implants for fatigue model validation. |

Verification and Validation (V&V) is the formal and rigorous process that determines whether a computational model accurately represents the underlying theory (verification) and reliably predicts real-world phenomena (validation). In biomechanical modeling for drug development and biomedical research, V&V transitions models from research curiosities to credible tools for decision-making. This guide outlines its indispensable role in establishing scientific credibility, ensuring regulatory compliance, and enabling successful clinical translation.

The Pillars of V&V: Definitions and Distinctions

Verification: "Are we solving the equations correctly?" This process ensures the computational model is implemented correctly without bugs or numerical errors. It is a check of the mathematical and coding framework.

Validation: "Are we solving the correct equations?" This process assesses the model's accuracy by comparing its predictions with independent, high-quality experimental or clinical data.

Without both pillars, a model's predictive power is merely speculative.

Quantifying the Impact: The V&V Imperative in Data

Recent analyses underscore the critical gap V&V addresses. The following table summarizes quantitative findings on model reproducibility and regulatory trends.

Table 1: Quantitative Evidence for the V&V Imperative

| Metric | Value / Finding | Source / Context |

|---|---|---|

| Preclinical Research Reproducibility | Estimated < 50% of published biomedical research is reproducible, costing ~$28B/year in the US alone. | Survey of industry and academic literature (2015-2023). |

| FDA Submissions with Modeling | > 100% increase in submissions containing in silico modeling (2010-2020). | FDA Center for Devices and Radiological Health (CDRH) Reports. |

| Regulatory Acceptance (ASME V&V 40) | Adoption of risk-informed V&V framework (ASME V&V 40) is now a de facto requirement for high-consequence in silico medical device trials. | FDA Guidance Documents & 510(k)/PMA clearances. |

| Model Credibility Threshold | For regulatory use, validation acceptance criteria are defined in advance and scaled to model risk. Illustrative examples are a 95% confidence level and agreement within 15% of the experimental mean; the standard itself does not prescribe numeric thresholds. | ASME V&V 40-2018: Assessing Credibility of Computational Modeling. |

Core Experimental Protocols for V&V in Biomechanics

A robust V&V plan requires structured experimental data for validation. Below are detailed protocols for generating gold-standard validation data.

Protocol 4.1: Ex Vivo Mechanical Testing for Soft Tissue Model Validation

- Objective: Generate stress-strain data to validate constitutive material models (e.g., for arterial wall, cartilage, tendon).

- Materials: Fresh or properly preserved tissue specimen, biaxial or uniaxial tensile tester, environmental bath (PBS, 37°C), digital image correlation (DIC) system.

- Methodology:

- Specimen Preparation: Cut tissue into standardized dog-bone or rectangular coupons (e.g., with a die punch). Measure reference dimensions precisely.

- Mounting: Secure specimen in grips, ensuring minimal pre-strain. Submerge in temperature-controlled bath.

- Pre-conditioning: Apply 10-15 cycles of low-load cyclic strain to achieve a repeatable mechanical response.

- Testing: Apply displacement-controlled loading at a physiological strain rate (e.g., 1-10% per second). Record force (load cell) and full-field strain (DIC) synchronously.

- Data Output: Convert force-displacement data to engineering or Cauchy stress vs. Green-Lagrange strain. Repeat for n≥5 specimens.
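
The data-output conversion can be written out explicitly for the uniaxial case. The sketch below assumes a homogeneous gauge region and an incompressible specimen (so the current cross-section shrinks as the inverse of the stretch); both are simplifying assumptions, and the function and argument names are illustrative.

```python
def uniaxial_measures(force_n, length_mm, ref_length_mm, ref_area_mm2):
    """Convert one force/length reading into common stress and strain measures.

    Units: N and mm, so stresses are in MPa (N/mm^2).
    """
    stretch = length_mm / ref_length_mm              # lambda = L / L0
    eng_stress = force_n / ref_area_mm2              # force over reference area
    cauchy_stress = eng_stress * stretch             # assumes incompressibility
    green_lagrange = 0.5 * (stretch ** 2 - 1.0)      # E = (lambda^2 - 1) / 2
    return {
        "stretch": stretch,
        "engineering_stress_mpa": eng_stress,
        "cauchy_stress_mpa": cauchy_stress,
        "green_lagrange_strain": green_lagrange,
    }


# Hypothetical reading: 2 N on a 4 mm^2 coupon stretched from 10 mm to 11 mm.
result = uniaxial_measures(force_n=2.0, length_mm=11.0,
                           ref_length_mm=10.0, ref_area_mm2=4.0)
print(result)
```

When DIC is available, the stretch would normally be taken from the measured strain field in the gauge region rather than from grip displacement.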

Protocol 4.2: In Vivo Medical Imaging for Kinematics Validation

- Objective: Obtain in vivo kinematic or morphological data to validate joint or organ-scale models (e.g., knee joint contact, cardiac wall motion).

- Materials: MRI or dynamic biplanar X-ray (e.g., EOS system), motion capture system, skin-mounted markers (for mocap), anatomic phantoms for calibration.

- Methodology:

- Subject Preparation: For mocap, place reflective markers on defined anatomical landmarks. For imaging, position subject in scanner/field of view.

- Calibration: Perform imaging system calibration using known phantoms to minimize distortion and define world coordinates.

- Data Acquisition: Acquire image data during dynamic activity (gait, respiration) or static pose. For mocap, synchronize with force plates.

- Segmentation & Reconstruction: Segment target anatomy (bones, organs) from image stacks to create 3D models and kinematic trajectories.

- Data Output: 3D pose (position + orientation) of bones/organ over time, joint angles, contact patterns, strain maps from tagged MRI.

The V&V Workflow: From Concept to Credible Model

The following diagram illustrates the iterative, hierarchical process of building model credibility, as framed by the ASME V&V 40 standard.

Key Signaling Pathways in Mechanobiology: A V&V Target

Biomechanical models often predict cellular responses to mechanical stimuli. Validating these outputs requires understanding key pathways. The diagram below maps a core mechanotransduction pathway relevant to bone remodeling or cardiovascular disease.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents & Materials for Biomechanical V&V Experiments

| Item | Function in V&V | Example / Specification |

|---|---|---|

| Polyacrylamide Hydrogels | Tunable-stiffness 2D/3D cell culture substrates to validate cell-mechanics models (e.g., traction force microscopy). | Cylinder gels (1-50 kPa stiffness), functionalized with collagen/fibronectin. |

| Fluorescent Beads (Microspheres) | Serve as fiducial markers for Digital Image Correlation (DIC) and particle image velocimetry (PIV) in experimental mechanics. | Polystyrene beads, 0.5-1.0 µm diameter, fluorescent (e.g., red/green). |

| Biaxial Tensile Testing System | Applies controlled, independent loads in two orthogonal directions to characterize anisotropic soft tissues. | Systems with bio-bath, optical force sensors, and video extensometry. |

| Primary Human Cells (Cryopreserved) | Provide physiologically relevant in vitro validation data compared to immortalized cell lines. | Human aortic smooth muscle cells, osteoblasts, chondrocytes. |

| Phospho-Specific Antibodies | Detect activation states of signaling proteins (e.g., p-FAK, p-ERK) to validate model predictions of cellular response. | Validated for Western Blot or immunofluorescence; species-specific. |

| Silicone Polymer (PDMS) | For fabricating microfluidic organ-on-chip or cell-stretching devices to apply controlled cyclic strain. | SYLGARD 184, mixed for desired elastic modulus. |

| Calcein-AM / Propidium Iodide | Live/Dead viability assay kit to quantify cell health in response to modeled mechanical stimuli (e.g., shear stress). | Standardized fluorescence assay for high-throughput validation. |

V&V is not the final step in model development; it is the foundational process that integrates throughout. It transforms a biomechanical model from an interesting academic exercise into a credible asset that can withstand scientific scrutiny, meet regulatory evidence standards, and ultimately inform clinical decisions—from drug delivery system design to patient-specific treatment planning. In an era of increasing computational sophistication, rigorous V&V is the definitive factor separating predictive insight from digital speculation.

Within the broader thesis on the Guide to Verification & Validation (V&V) for biomechanical models, clarifying foundational terminology is paramount. This technical guide delineates the hierarchical relationship between conceptual and computational models and establishes Uncertainty Quantification (UQ) as the critical bridge connecting model development to rigorous V&V assessment, ultimately supporting regulatory-grade decision-making in drug development and biomedical research.

Foundational Terminology

Conceptual Models

A conceptual model is a non-software-specific, often diagrammatic, representation of a system. It articulates the key components, processes, relationships, and underlying assumptions based on established theory and empirical observations. In biomechanics, this may describe the hypothesized relationships between tissue morphology, material properties, applied loads, and physiological response.

Computational Models

A computational model is the executable instantiation of a conceptual model, implemented via mathematical formulations (e.g., PDEs, ODEs) and numerical algorithms (e.g., Finite Element Method, Agent-Based Modeling). It is the tool for performing simulations to generate quantitative predictions.

Uncertainty Quantification (UQ)

UQ is the systematic analysis of the origins, magnitude, and impact of uncertainties in computational model predictions. It quantifies how uncertainties in model inputs, parameters, and structure propagate to uncertainty in outputs, directly informing model credibility and the interpretation of V&V results.

The Interplay in Biomechanical V&V

The V&V process rigorously connects these elements. Verification asks, "Are we solving the computational model equations correctly?" Validation asks, "Is the computational model an accurate representation of the physical world, given its intended use?" UQ is essential for both, providing metrics for numerical error (verification) and quantifying the mismatch between simulations and experimental validation data.

Table 1: Role of Each Component in the Biomechanical Model V&V Pipeline

| Component | Primary Role in V&V | Key Questions Addressed |

|---|---|---|

| Conceptual Model | Foundation for V&V planning. Defines the system boundaries and assumptions to be tested. | What are the critical hypotheses? What physics/biology must be included for the intended use? |

| Computational Model | Subject of the V&V process. The object being verified and validated. | Is the implementation correct? Does its output match reality within acceptable uncertainty? |

| Uncertainty Quantification | Provides the quantitative framework for V&V. Informs acceptability criteria. | How precise are the predictions? What is the confidence in the validation result? Is model discrepancy significant? |

Methodologies for Uncertainty Quantification

UQ methodologies must be tailored to the computational cost and uncertainty sources of the biomechanical model.

Experimental Protocol for Parameter Uncertainty Characterization

- Objective: To obtain empirical data for defining probability distributions of model input parameters (e.g., Young's modulus, permeability).

- Protocol:

- Sample Preparation: Harvest target tissue (e.g., articular cartilage) from a representative population (n≥X) of animal or human donors, ensuring ethical compliance.

- Mechanical Testing: Using a calibrated biaxial or indentation test system, apply controlled displacement/strain rates.

- Data Acquisition: Record force and displacement at high frequency (≥1 kHz). Simultaneously, image strain fields via Digital Image Correlation (DIC).

- Inverse Analysis: Fit constitutive model equations to the force-displacement-field data using nonlinear regression to estimate parameter values for each sample.

- Statistical Analysis: Fit candidate probability distributions to the estimated parameter values and use Anderson-Darling goodness-of-fit tests to select among them. Construct joint probability distributions, accounting for correlations between parameters (e.g., modulus vs. strength).

Protocol for Sensitivity Analysis (Global Variance-Based)

- Objective: To rank the contribution of uncertain input parameters to the variance of key model outputs.

- Protocol (Using Sobol' Indices):

- Input Sampling: Generate a quasi-random (Sobol') sequence of N samples across the input parameter space defined by the probability distributions from the parameter characterization protocol.

- Model Execution: Run the computational model for each sample input set. For FE models, employ a surrogate model (e.g., Gaussian Process) to reduce computational cost.

- Index Calculation: Compute first-order (S_i) and total-order (S_Ti) Sobol' indices using the Monte Carlo-based estimator of Saltelli et al.

- Interpretation: S_i quantifies the direct effect of parameter i on the output variance. S_Ti additionally includes interaction effects with all other parameters. Parameters with high S_Ti are priority targets for further experimental refinement.
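
The index calculation can be illustrated with a compact, dependency-free sketch. Several assumptions apply: pseudo-random sampling from the standard library stands in for a Sobol' sequence, inputs are independent and uniform on [0, 1], the first-order estimator is the Saltelli-type form with centered outputs, the total-order estimator is the Jansen form, and the toy linear model is a stand-in for an FE model or its surrogate.

```python
import random


def sobol_indices(model, n_inputs, n_samples=20000, seed=0):
    """Estimate first-order and total-order Sobol' indices by Monte Carlo."""
    rng = random.Random(seed)
    # Two independent sample matrices A and B on the unit hypercube.
    A = [[rng.random() for _ in range(n_inputs)] for _ in range(n_samples)]
    B = [[rng.random() for _ in range(n_inputs)] for _ in range(n_samples)]
    f_A = [model(x) for x in A]
    f_B = [model(x) for x in B]

    pooled = f_A + f_B
    mean = sum(pooled) / len(pooled)
    var = sum((y - mean) ** 2 for y in pooled) / (len(pooled) - 1)

    first, total = [], []
    for i in range(n_inputs):
        # A with its i-th column replaced by the i-th column of B.
        f_AB = [model(a[:i] + [b[i]] + a[i + 1:]) for a, b in zip(A, B)]
        # First-order: centering f_B reduces estimator variance without bias.
        v_i = sum((fb - mean) * (fab - fa)
                  for fb, fab, fa in zip(f_B, f_AB, f_A)) / n_samples
        # Total-order (Jansen form).
        v_ti = sum((fa - fab) ** 2 for fa, fab in zip(f_A, f_AB)) / (2 * n_samples)
        first.append(v_i / var)
        total.append(v_ti / var)
    return first, total


def toy_model(x):
    """Hypothetical additive model; analytic indices are 0.8 and 0.2."""
    return 2.0 * x[0] + 1.0 * x[1]


S_first, S_total = sobol_indices(toy_model, n_inputs=2)
print("First-order:", [round(s, 2) for s in S_first])
print("Total-order:", [round(s, 2) for s in S_total])
```

Because the toy model is additive, first-order and total-order indices coincide; a gap between them in a real model indicates interaction effects. Non-uniform inputs would be handled by mapping the unit-hypercube samples through each parameter's inverse cumulative distribution before calling the model.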

Quantitative Data in Biomechanical UQ

Table 2: Example UQ Results from a Tibial Cartilage FE Model

| Uncertain Parameter | Distribution (Mean ± SD) | Output of Interest | Sobol' Total-Order Index (S_Ti) | Propagated Uncertainty (95% CI) |

|---|---|---|---|---|

| Cartilage Young's Modulus | LogNormal(12.0 ± 3.6 MPa) | Peak Contact Stress | 0.72 | ± 4.2 MPa |

| Cartilage Permeability | Normal(1.5e-15 ± 0.3e-15 m⁴/(N·s)) | Time to Peak Load | 0.41 | ± 12% |

| Subchondral Bone Stiffness | Uniform(500, 1500 MPa) | Peak Contact Stress | 0.11 | ± 0.8 MPa |

| Load Magnitude | Normal(750 ± 75 N) | Peak Contact Stress | 0.85 | ± 5.1 MPa |
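
To sample from a distribution such as the LogNormal entry in Table 2, the reported mean and standard deviation must be converted to the parameters of the underlying normal distribution. The sketch below assumes that "12.0 ± 3.6 MPa" denotes the arithmetic mean and standard deviation of the modulus itself (not of its logarithm); that reading is an interpretation of the table, and the function name is illustrative.

```python
import math
import random
import statistics


def lognormal_params(mean, sd):
    """Return (mu, sigma) of ln(X) for a lognormal X with the given mean and SD."""
    sigma_sq = math.log(1.0 + (sd / mean) ** 2)
    mu = math.log(mean) - 0.5 * sigma_sq
    return mu, math.sqrt(sigma_sq)


mu, sigma = lognormal_params(mean=12.0, sd=3.6)

# Draw samples for uncertainty propagation and check the mean is recovered.
rng = random.Random(42)
samples = [rng.lognormvariate(mu, sigma) for _ in range(50000)]
sample_mean = statistics.fmean(samples)

# Central 95% interval of the input distribution itself.
low = math.exp(mu - 1.96 * sigma)
high = math.exp(mu + 1.96 * sigma)

print(f"mu = {mu:.4f}, sigma = {sigma:.4f}")
print(f"sample mean = {sample_mean:.2f} MPa, 95% input range = [{low:.1f}, {high:.1f}] MPa")
```

Each sampled modulus would then be passed to the FE model (or its surrogate) to build the output distribution from which a propagated 95% interval is read.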

Visualization of Core Concepts

Figure: The V&V Process Linking Models, Reality, and UQ

Figure: Uncertainty Quantification Framework Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for Biomechanical UQ Studies

| Item / Reagent | Function in UQ & V&V | Example Product / Standard |

|---|---|---|

| Biaxial/Indentation Test System | Generates empirical data for parameter distribution fitting and validation. | Instron BioPuls, CellScale BioTester |

| Digital Image Correlation (DIC) System | Provides full-field strain measurements for detailed model validation. | LaVision DaVis, Correlated Solutions VIC-3D |

| High-Fidelity FE Software | Solves complex biomechanical boundary value problems for uncertainty propagation. | Abaqus (Dassault), FEBio (open-source) |

| UQ/Surrogate Modeling Toolkit | Performs sensitivity analysis and propagates uncertainties efficiently. | Dakota (Sandia), SciPy (Python), UQLab (ETH Zurich) |

| Calibrated Load Cells & Displacement Sensors | Ensures traceable, low-uncertainty input for experiments. | HBM, Interface force sensors (ISO 376 calibrated) |

| Standardized Tissue Phantoms | Provides a known, reproducible material for verification of testing and imaging protocols. | Elastomer phantoms (e.g., Smooth-On), Sawbones composites |

| Statistical Software | Fits probability distributions to data and analyzes V&V metrics. | R, JMP, Python (scikit-learn, statsmodels) |

1.0 Introduction: The Foundational Role of Context of Use

Within biomechanical model research for drug development, Verification & Validation (V&V) is the critical process for establishing model credibility. However, without a precisely defined Context of Use (COU), V&V activities lack direction and purpose. The COU is the formal statement that details how the model will be used, the specific questions it will answer, and the associated scenarios and conditions. This document establishes the COU as the central, governing artifact—the "North Star"—that informs every subsequent V&V activity, ensuring efficiency, relevance, and regulatory alignment.

2.0 Defining the Model Context of Use: Core Components

A comprehensive COU specification must address the following interconnected components, summarized in Table 1.

Table 1: Core Components of a Biomechanical Model Context of Use

| Component | Description | Example for a Spinal Implant Efficacy Model |

|---|---|---|

| 1. Intended Purpose | The primary objective and decision the model informs. | To predict range of motion (ROM) and facet joint forces in the L4-L5 spinal segment post-fusion, supporting preclinical efficacy claims for a novel interspinous device. |

| 2. Modeled System & Boundaries | Explicit description of the anatomical, physiological, and mechanical systems included and excluded. | Included: L3-L5 vertebrae, intervertebral discs (L3-L4, L4-L5), ligaments, facet joints. Excluded: Muscular active forces, viscoelastic tissue properties beyond quasi-static simulation. |

| 3. Operating Conditions & Inputs | The environmental, loading, and biological conditions under which the model is applied. | Quasi-static pure moments of 7.5 Nm in flexion, extension, lateral bending; bone density within 1 SD of a 65-75 y/o osteopenic population. |

| 4. Outputs of Interest & Acceptable Accuracy | The key model predictions and their required level of accuracy, defined against validation benchmarks. | Primary: L4-L5 ROM (≤15% error vs. in vitro cadaveric data). Secondary: Facet contact force at L4-L5 (≤20% error). |

| 5. Risk of an Incorrect Decision | The potential impact of model error on the downstream decision (e.g., trial design, safety). | High: Model over-predicting ROM could lead to underestimation of adjacent segment disease risk. Mitigation: Conservative validation thresholds and sensitivity analysis. |
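
One way to keep the COU actionable is to record it as a small data structure whose accuracy requirements can be checked mechanically. The sketch below is only an illustration: the class and field names are hypothetical, the thresholds are the ≤15% and ≤20% values from the spinal implant example in Table 1, and the predicted and measured numbers are placeholders rather than real data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutputCriterion:
    name: str
    max_relative_error: float  # as a fraction, e.g. 0.15 for 15%


@dataclass(frozen=True)
class ContextOfUse:
    intended_purpose: str
    operating_conditions: str
    criteria: tuple

    def evaluate(self, predicted, measured):
        """Return {output name: True/False} for each accuracy requirement."""
        results = {}
        for criterion in self.criteria:
            ref = measured[criterion.name]
            rel_err = abs(predicted[criterion.name] - ref) / abs(ref)
            results[criterion.name] = rel_err <= criterion.max_relative_error
        return results


cou = ContextOfUse(
    intended_purpose="Predict L4-L5 ROM and facet joint forces post-fusion",
    operating_conditions="Quasi-static pure moments of 7.5 Nm",
    criteria=(
        OutputCriterion("l4_l5_rom_deg", 0.15),
        OutputCriterion("facet_force_n", 0.20),
    ),
)

# Hypothetical model predictions and cadaveric measurements.
predicted = {"l4_l5_rom_deg": 5.4, "facet_force_n": 65.0}
measured = {"l4_l5_rom_deg": 5.0, "facet_force_n": 50.0}

outcome = cou.evaluate(predicted, measured)
print(outcome)
```

Storing the thresholds alongside the purpose statement makes it harder for validation criteria to drift away from the decision the model is meant to support.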

3.0 From COU to V&V Planning: A Structured Workflow

The COU directly dictates the scope, rigor, and acceptance criteria for all V&V tasks. The logical relationship is defined in the following workflow.

Diagram 1: COU Informs the V&V Plan Components

4.0 Experimental Protocols for COU-Driven Validation

Validation is the process of determining how well the computational model represents the real world, as defined by the COU. The following protocol is central to biomechanical model validation.

Protocol: In Vitro Biomechanical Testing for Model Validation Data

1. Objective: To generate high-fidelity, experimental biomechanical data under conditions specified in the COU for direct quantitative comparison with computational model predictions.

2. Materials & Specimen Preparation:

- Human Cadaveric Spinal Segments (L1-S1): Fresh-frozen, screened for pathology.

- Custom 6-DOF Spine Testing Apparatus: Equipped with a force-moment sensor and hydraulic actuators.

- Optical Motion Capture System: (e.g., Vicon) with reflective markers.

- Digital Load Cells: For applied pure moment verification.

- Specimen Preparation: Pot L1 and S1 vertebrae in dental plaster within mounting fixtures. Carefully dissect to preserve ligaments and facet joints. Hydrate with 0.9% saline solution throughout.

3. Methodology:

- Mounting & Alignment: Secure specimen pots to testing frames, aligning the L3-L4 disc horizontally.

- Marker Placement: Affix rigid marker clusters to each vertebral body (L3, L4, L5).

- Loading Protocol: Apply pure moments in flexion-extension, lateral bending, and axial rotation to a maximum of 7.5 Nm using a stepwise loading protocol (0, 1.5, 3.0, 4.5, 6.0, 7.5 Nm). Hold each step for 30 s to allow for viscoelastic creep.

- Data Acquisition: At each load step, record the applied load cell forces/moments, the 3D marker positions from motion capture (sampled at 100 Hz), and the actuator displacement.

- Post-Test Imaging: Perform CT scans of the specimen to inform geometric reconstruction in the computational model.

- Data Reduction: Calculate intervertebral range of motion (ROM) and neutral zone from marker kinematics. Calculate facet contact forces via inverse dynamics or measured strain at instrumented facets.

4. Output: A dataset of mechanical input (applied moment) vs. kinematic output (ROM) and kinetic output (facet forces) for direct comparison with model predictions.

5.0 The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Biomechanical V&V Experiments

| Item / Solution | Function in V&V Context |

|---|---|

| Polyurethane Foam Blocks | Used for potting vertebrae into testing fixtures. Provides a rigid, repeatable interface without damaging bone. |

| Physiological Saline Solution (0.9% NaCl) | Maintains tissue hydration during in vitro testing to preserve biomechanical properties of ligaments and discs. |

| Radio-Opaque Barium Sulfate Suspension | Injected into disc space or facet joints post-test for enhanced contrast in CT imaging, aiding model geometry creation. |

| Strain Gauges & Telemetry Systems | For direct measurement of bone strain in in vitro or in vivo models to validate finite element model stress predictions. |

| Fluoroscopic Imaging Systems | Provides dynamic, 2D radiographic data for validating kinematic outputs of motion segment models under load. |

| Standardized CAD Implant Models | Digital models of standard implants (e.g., ASTM pedicle screws) used to ensure consistency between computational and experimental implant geometry. |

6.0 Quantitative Validation Metrics & Reporting

The COU-specified "acceptable accuracy" must be translated into quantitative metrics. Table 3 summarizes common metrics derived from the validation protocol data.

Table 3: Common Quantitative Validation Metrics for Biomechanical Models

| Metric | Calculation | Interpretation | COU-Driven Threshold Example |

|---|---|---|---|

| Coefficient of Determination (R²) | Square of the Pearson correlation between model-predicted and experimental values. | R² > 0.9 indicates strong linear agreement in trend, but not necessarily in magnitude. | R² ≥ 0.85 for load-displacement curve shape. |

| Root Mean Square Error (RMSE) | √[ Σ(Predᵢ - Expᵢ)² / n ] | Absolute measure of average error magnitude, in units of the output. | RMSE ≤ 1.5° for segmental rotation. |

| Normalized RMSE (NRMSE) | RMSE / (Exp_max - Exp_min) | Expresses RMSE as a percentage of the experimental data range. | NRMSE ≤ 10% for normalized output comparisons. |

| Mean Absolute Error (MAE) | Σ \|Predᵢ - Expᵢ\| / n | Similar to RMSE but less sensitive to large outliers. | MAE ≤ 0.3 mm for vertebral displacement. |
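
The metrics in Table 3 are straightforward to compute from paired model and experimental values. The sketch below follows the table's formulas, taking R² as the squared Pearson correlation; the paired values are hypothetical and the function name is illustrative.

```python
import math


def validation_metrics(predicted, experimental):
    """Compute R^2 (squared Pearson r), RMSE, NRMSE, and MAE for paired data."""
    n = len(predicted)
    errors = [p - e for p, e in zip(predicted, experimental)]

    rmse = math.sqrt(sum(err ** 2 for err in errors) / n)
    mae = sum(abs(err) for err in errors) / n
    nrmse = rmse / (max(experimental) - min(experimental))

    mean_p = sum(predicted) / n
    mean_e = sum(experimental) / n
    cov = sum((p - mean_p) * (e - mean_e) for p, e in zip(predicted, experimental))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_e = sum((e - mean_e) ** 2 for e in experimental)
    r_squared = cov ** 2 / (var_p * var_e)

    return {"r2": r_squared, "rmse": rmse, "nrmse": nrmse, "mae": mae}


# Hypothetical segmental rotations (degrees) at four load steps.
model_rom = [1.0, 2.0, 3.0, 4.0]
bench_rom = [1.1, 1.9, 3.2, 3.8]

metrics = validation_metrics(model_rom, bench_rom)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

A high R² alone does not establish agreement, since a model that is consistently offset or scaled can still correlate perfectly; reporting it alongside RMSE or MAE guards against that.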

7.0 Conclusion

Establishing a detailed, unambiguous Context of Use is the single most critical step in the V&V process for biomechanical models in drug and device development. It transforms V&V from a generic checklist into a targeted, risk-informed, and decision-focused campaign. By serving as the North Star, the COU ensures that all verification activities, validation experiments, and uncertainty analyses are purpose-built to answer the specific question at hand, thereby building the credibility necessary for regulatory submission and scientific impact.

Within the critical framework of Verification and Validation (V&V) for biomechanical models, selecting the appropriate modeling paradigm is foundational. This guide provides an in-depth technical analysis of two dominant computational approaches: Finite Element Analysis (FEA) and Multibody Dynamics (MBD). Each serves distinct purposes in simulating the mechanical behavior of biological systems, from tissue-level stresses to whole-body movement, and each presents unique challenges and protocols within the V&V pipeline.

Core Model Types: Technical Foundations

Finite Element Analysis (FEA)

FEA is a numerical technique for predicting how complex geometries respond to physical forces, heat, fluid flow, and other physical phenomena. It subdivides a large system into smaller, simpler parts called finite elements, connected at nodes. This method is ideal for analyzing stress, strain, and deformation in continuous media like bone, cartilage, and soft tissues.

Key Applications:

- Stress analysis in bone implants and prosthetics.

- Simulation of soft tissue deformation (e.g., skin, muscles, arteries).

- Trauma and injury mechanics (e.g., fracture prediction).

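Governing Equations: For linear static problems, discretizing the weak form of the equilibrium equations yields the algebraic system \[ \mathbf{K}\mathbf{u} = \mathbf{F} \] where \(\mathbf{K}\) is the global stiffness matrix, \(\mathbf{u}\) is the vector of nodal displacements, and \(\mathbf{F}\) is the vector of applied nodal forces. Nonlinear materials, contact, and large deformations lead to a nonlinear system that is solved iteratively.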
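
A two-element bar is about the smallest problem that shows how element stiffness matrices are assembled into the global system and solved. The sketch below is illustrative only: it assumes a linear elastic 1D bar fixed at one end with a point load at the free end, and the material and geometry values are hypothetical.

```python
def solve_two_element_bar(youngs_modulus, area, element_length, tip_force):
    """Assemble K for a 3-node, 2-element bar, fix node 0, and solve K u = F."""
    k = youngs_modulus * area / element_length  # axial stiffness of one element

    # Assemble the 3x3 global stiffness matrix from 2x2 element matrices.
    K = [[0.0] * 3 for _ in range(3)]
    for first_node in (0, 1):
        for a, b, sign in ((0, 0, 1), (0, 1, -1), (1, 0, -1), (1, 1, 1)):
            K[first_node + a][first_node + b] += sign * k

    # Apply the boundary condition u0 = 0 by removing row and column 0.
    k11, k12 = K[1][1], K[1][2]
    k21, k22 = K[2][1], K[2][2]
    f1, f2 = 0.0, tip_force

    # Solve the reduced 2x2 system with Cramer's rule.
    det = k11 * k22 - k12 * k21
    u1 = (f1 * k22 - k12 * f2) / det
    u2 = (k11 * f2 - k21 * f1) / det
    return [0.0, u1, u2]


# Hypothetical bar: E = 100 MPa, A = 10 mm^2, two 50 mm elements, 40 N tip load.
displacements = solve_two_element_bar(100.0, 10.0, 50.0, 40.0)
print("Nodal displacements (mm):", displacements)
```

The result matches the closed-form answer for a uniform bar (tip displacement equal to the load times total length over EA), which is the kind of analytical benchmark used in code verification.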

Multibody Dynamics (MBD)

MBD models a mechanical system as an assembly of rigid and/or flexible bodies connected by kinematic joints (e.g., hinges, ball-and-socket) and force elements (e.g., ligaments, muscles). It is optimized for analyzing the large-scale motion and joint forces of articulated systems.

Key Applications:

- Gait analysis and human movement simulation.

- Joint contact force estimation.

- Sports biomechanics and ergonomics.

Governing Equations: Typically formulated using Lagrange's equations or Newton-Euler methods, resulting in differential-algebraic equations (DAEs): \[ \mathbf{M}(\mathbf{q})\ddot{\mathbf{q}} + \mathbf{C}_{\mathbf{q}}^{T}\boldsymbol{\lambda} = \mathbf{Q}(\mathbf{q}, \dot{\mathbf{q}}, t) \] \[ \mathbf{C}(\mathbf{q}, t) = \mathbf{0} \] where \(\mathbf{M}\) is the mass matrix, \(\mathbf{q}\) are the generalized coordinates, \(\mathbf{C}\) are the constraint equations, \(\mathbf{C}_{\mathbf{q}}\) is the Jacobian of the constraints with respect to \(\mathbf{q}\), \(\boldsymbol{\lambda}\) are the Lagrange multipliers (which determine the joint constraint forces), and \(\mathbf{Q}\) are the generalized forces.
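
A planar pendulum modeled as a point mass with a fixed-length constraint gives a minimal instance of these equations. The sketch below makes one step that the equations above do not state: the position-level constraint is differentiated twice so that accelerations and the multiplier can be solved together from a single linear system. The constraint form, function names, and numerical values are all illustrative assumptions.

```python
def solve_linear(matrix, rhs):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(rhs)
    m = [row[:] + [b] for row, b in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def pendulum_accelerations(mass, x, y, vx, vy, g=9.81):
    """Solve [M, Cq^T; Cq, 0] [qdd; lambda] = [Q; gamma] for a point-mass pendulum.

    Constraint: C = x^2 + y^2 - L^2 = 0, so Cq = [2x, 2y] and the
    acceleration-level right-hand side is gamma = -2 (vx^2 + vy^2).
    """
    augmented = [
        [mass, 0.0, 2.0 * x],
        [0.0, mass, 2.0 * y],
        [2.0 * x, 2.0 * y, 0.0],
    ]
    rhs = [0.0, -mass * g, -2.0 * (vx ** 2 + vy ** 2)]
    ax, ay, lam = solve_linear(augmented, rhs)
    return ax, ay, lam


# Hanging at rest below the pivot (L = 1 m, m = 2 kg): no acceleration expected.
ax, ay, lam = pendulum_accelerations(mass=2.0, x=0.0, y=-1.0, vx=0.0, vy=0.0)
constraint_force_y = -2.0 * (-1.0) * lam  # -Cq^T * lambda, y-component
print(f"ax = {ax:.3f}, ay = {ay:.3f}, lambda = {lam:.3f}, rod force = {constraint_force_y:.2f} N")
```

In the hanging configuration the constraint force recovered from the multiplier equals the weight of the mass, which illustrates how the multipliers map to joint reaction forces. Production MBD solvers add constraint stabilization or dedicated DAE integrators, which this sketch omits.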

Comparative Analysis: FEA vs. MBD

The table below summarizes the defining characteristics, strengths, and limitations of each modeling type.

| Characteristic | Finite Element Analysis (FEA) | Multibody Dynamics (MBD) |

|---|---|---|

| Primary Domain | Continuum mechanics | Rigid-body & articulated system dynamics |

| Spatial Resolution | High (local stress/strain within a component) | Low to Medium (system-level kinematics/kinetics) |

| Typical Outputs | Stress, strain, deformation, failure points | Kinematics (position, velocity), kinetics (joint forces/torques) |

| Computational Cost | Very High (nonlinear, contact, large DOFs) | Relatively Low (reduced coordinates, constraints) |

| Model Construction | Geometry meshing, material property assignment | Body definition, joint topology, force field parameterization |

| Common V&V Challenges | Material property validation, mesh convergence, boundary conditions | Muscle force estimation, contact modeling, parameter identification |

| Ideal Use Case | Design/analysis of a knee implant under load | Predicting hip contact forces during walking |

Experimental Protocols for Model Input & Validation

Protocol for Material Property Characterization (FEA Input)

Objective: To determine anisotropic, hyperelastic material properties of soft tissue (e.g., tendon) for constitutive models in FEA.

- Specimen Preparation: Harvest fresh tissue samples and machine into standardized dumbbell or rectangular shapes. Keep hydrated in phosphate-buffered saline (PBS).

- Mechanical Testing: Perform uniaxial/biaxial tensile tests using a materials testing system (e.g., Instron) with an environmental chamber.

- Data Acquisition: Apply displacement-controlled loading at a physiological strain rate. Simultaneously record force (via load cell) and full-field strain (via digital image correlation - DIC).

- Parameter Identification: Fit experimental stress-strain data to a constitutive model (e.g., isotropic Neo-Hookean or Ogden, or a fiber-reinforced model such as Holzapfel-Gasser-Ogden when anisotropy must be captured) using a nonlinear least-squares optimization algorithm to derive material parameters (e.g., shear modulus μ, bulk modulus κ).
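
The parameter identification step can be sketched as follows, under the simplifying assumption of an incompressible Neo-Hookean material in uniaxial tension, for which the nominal stress is P = μ(λ − λ⁻²) and the bulk modulus drops out. The data are synthetic, and the function names, noise level, and "true" modulus of 0.5 MPa are hypothetical.

```python
import numpy as np
from scipy.optimize import least_squares

def neo_hookean_nominal_stress(stretch, mu):
    """Uniaxial nominal stress of an incompressible Neo-Hookean solid."""
    return mu * (stretch - stretch**-2)

def fit_shear_modulus(stretch, measured_stress, mu_initial=1.0):
    """Nonlinear least-squares fit of mu, constrained to be non-negative."""
    def residuals(params):
        return neo_hookean_nominal_stress(stretch, params[0]) - measured_stress
    result = least_squares(residuals, x0=[mu_initial], bounds=(0.0, np.inf))
    return result.x[0]

# Synthetic "experiment": stretch up to 1.2 with small measurement noise (MPa).
rng = np.random.default_rng(0)
stretch = np.linspace(1.0, 1.2, 20)
true_mu = 0.5
measured = neo_hookean_nominal_stress(stretch, true_mu) + rng.normal(0.0, 0.002, stretch.size)
mu_fit = fit_shear_modulus(stretch, measured)
```

Fitting synthetic data generated from a known parameter is a useful check on the fitting routine before it is applied to real test data.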

Protocol for Kinematic Data Capture (MBD Input/Validation)

Objective: To obtain accurate segmental kinematics for driving or validating an MBD model of human gait.

- Motion Capture Setup: Use an optoelectronic system (e.g., Vicon, Qualisys) with 8+ infrared cameras. Calibrate volume to sub-millimeter accuracy.

- Marker Placement: Apply a retro-reflective marker set (e.g., Plug-in Gait, CAST) on anatomical landmarks and tracking clusters on body segments.

- Data Collection: Subject performs walking trials at a self-selected speed. Synchronously collect ground reaction forces using embedded force plates (e.g., from AMTI or Kistler).

- Data Processing: Filter marker trajectories (low-pass Butterworth, 6 Hz cutoff). Use a kinematic model within the MBD software (e.g., OpenSim, AnyBody) to solve inverse kinematics, minimizing the error between virtual and experimental marker positions to compute joint angles.
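
The filtering step might look like the sketch below, which uses SciPy's `butter` and `filtfilt` to apply a zero-lag low-pass filter with a 6 Hz cutoff. The 100 Hz sampling rate, the filter order, and the synthetic trajectory are assumptions; note that the forward-backward pass of `filtfilt` doubles the effective order and slightly lowers the effective cutoff.

```python
import numpy as np
from scipy.signal import butter, filtfilt

def lowpass_markers(trajectories, sample_rate_hz, cutoff_hz=6.0, order=2):
    """Zero-lag Butterworth low-pass filter applied along the time axis (axis 0)."""
    b, a = butter(order, cutoff_hz, btype="low", fs=sample_rate_hz)
    return filtfilt(b, a, trajectories, axis=0)

# Synthetic marker coordinate (mm): 1 Hz movement plus 30 Hz noise.
sample_rate_hz = 100.0  # assumed capture rate
t = np.arange(0.0, 5.0, 1.0 / sample_rate_hz)
clean = 10.0 * np.sin(2.0 * np.pi * 1.0 * t)
noisy = clean + 1.0 * np.sin(2.0 * np.pi * 30.0 * t)

filtered = lowpass_markers(noisy[:, None], sample_rate_hz)[:, 0]
rms_before = np.sqrt(np.mean((noisy - clean) ** 2))
rms_after = np.sqrt(np.mean((filtered - clean) ** 2))
```

The cutoff should be justified for the movement being studied; faster tasks such as running or jumping usually call for a higher cutoff than walking.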

Visualization of Model Development & V&V Workflow

Diagram 1: Biomechanical Model V&V Workflow

Diagram 2: Modeling Paradigms & Data Integration

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Biomechanical Modeling |

|---|---|

| Polyurethane Bone Analogs | Synthetic bones with consistent mechanical properties for in vitro validation of implant FEA models. |

| Silicone Elastomers | Used to fabricate tissue-mimicking phantoms for validating soft tissue FEA simulations (e.g., breast, liver). |

| Retro-reflective Markers | Passive markers tracked by motion capture systems to provide kinematic input data for MBD models. |

| Force Plates | Measure 3D ground reaction forces and moments, essential for inverse dynamics analysis in MBD. |

| Digital Image Correlation (DIC) Systems | Non-contact optical method to measure full-field surface strain during mechanical testing for FEA validation. |

| Biaxial Testing Systems | Characterize anisotropic, nonlinear material properties of tissues (e.g., skin, heart valve) for FEA. |

| OpenSim / AnyBody Software | Open-source (OpenSim) and commercial (AnyBody) platforms for developing, simulating, and analyzing MBD models. |

| Abaqus / FEBio Software | Industry-standard FEA solvers with capabilities for complex nonlinear, hyperelastic, and contact problems in biomechanics. |

From Theory to Practice: Implementing a Robust V&V Pipeline for Your Biomechanical Model

Within the broader thesis on the Guide to Verification and Validation (V&V) for biomechanical models research, Step 1, Verification, is the foundational process of ensuring that the computational model is solved correctly. This technical guide details the methodologies for checking the accuracy of code implementations and numerical calculations, a critical precursor to validation against experimental data. For researchers, scientists, and drug development professionals, rigorous verification is essential for establishing credibility in simulations of biomechanical systems, from joint mechanics to cellular force generation.

Verification answers the question: "Are we solving the equations correctly?" It is a purely mathematical exercise, distinct from validation ("Are we solving the correct equations?"). In biomechanics, where models often involve complex nonlinear partial differential equations (PDEs) for tissue mechanics, coupled with biochemical signaling, verification is a multi-faceted challenge encompassing code verification, calculation checks, and solution verification.

Core Verification Methodologies: Protocols and Applications

Code Verification: The Method of Manufactured Solutions (MMS)

Experimental Protocol:

- Postulate a Solution: Choose a smooth, non-trivial analytical function for each dependent variable (e.g., displacement, concentration) that satisfies the model's boundary conditions.

- Operate the PDE: Substitute the manufactured solution into the governing PDE. This will generally result in a residual term (a source term).

- Modify Code Input: Implement this source term in the simulation code.

- Run Simulation: Execute the code with the derived source term, taking the initial and boundary conditions from the manufactured solution.

- Error Quantification: Compute the difference between the numerical solution and the manufactured analytical solution.

- Convergence Analysis: Systematically refine the spatial and temporal discretization (mesh size Δx, time step Δt). Calculate the observed order of convergence $p$ from the error norms on two successive discretizations: $p = \log(e_{coarse} / e_{fine}) / \log(r)$, where $r > 1$ is the refinement ratio (coarse spacing divided by fine spacing). The code is verified if the observed convergence rate matches the theoretical order of the numerical method (e.g., p≈2 for second-order finite elements).
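
The steps above can be sketched on a deliberately simple problem: the one-dimensional Poisson equation −u″ = f on (0, 1) with zero boundary values, solved by second-order central differences. The manufactured solution u = sin(πx) is an assumption chosen because it satisfies the boundary conditions and yields the source term f = π² sin(πx); a real biomechanical code would use its own governing equations and a richer manufactured solution.

```python
import numpy as np

def manufactured_solution(x):
    return np.sin(np.pi * x)

def manufactured_source(x):
    # Source term obtained by substituting u = sin(pi x) into -u'' = f.
    return np.pi**2 * np.sin(np.pi * x)

def solve_poisson_fd(n_intervals):
    """Second-order central-difference solve of -u'' = f with u(0) = u(1) = 0."""
    h = 1.0 / n_intervals
    x = np.linspace(0.0, 1.0, n_intervals + 1)
    n_interior = n_intervals - 1
    A = (2.0 * np.eye(n_interior)
         - np.eye(n_interior, k=1)
         - np.eye(n_interior, k=-1)) / h**2
    u = np.zeros_like(x)
    u[1:-1] = np.linalg.solve(A, manufactured_source(x[1:-1]))
    return x, u, h

def l2_error(n_intervals):
    """Discrete L2 norm of the difference to the manufactured solution."""
    x, u, h = solve_poisson_fd(n_intervals)
    return np.sqrt(h * np.sum((u - manufactured_solution(x)) ** 2))

grids = [16, 32, 64]  # refinement ratio r = 2 between successive grids
errors = [l2_error(n) for n in grids]
observed_orders = [np.log(errors[i] / errors[i + 1]) / np.log(2.0)
                   for i in range(len(errors) - 1)]
```

An observed order that stays close to 2 under refinement is the expected outcome for this scheme; a lower value would point to an implementation error or to a solution that has not yet reached the asymptotic range.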

Calculation Checks: Benchmarking and Cross-Validation

Protocol for Benchmark Comparisons:

- Identify Benchmark: Select a well-established, community-vetted problem with published high-fidelity results (e.g., FDA's CFD challenges, SilicoBone benchmark).

- Replicate Conditions: Precisely implement the benchmark's geometry, material properties, boundary conditions, and loading.

- Execute and Compare: Run the simulation and quantitatively compare key output metrics (stress, strain, flow rate) against benchmark data.

- Statistical Analysis: Use metrics like the Normalized Root Mean Square Error (NRMSE) or Correlation Coefficient to quantify agreement.
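
A small sketch of the agreement metrics named above follows. NRMSE has several definitions in the literature; this version normalizes the RMSE by the range of the reference data, which is an assumption that should be stated whenever the metric is reported. The arrays are placeholder values, not benchmark data.

```python
import numpy as np

def nrmse_percent(reference, prediction):
    """RMSE normalized by the range of the reference data, in percent."""
    reference = np.asarray(reference, dtype=float)
    prediction = np.asarray(prediction, dtype=float)
    rmse = np.sqrt(np.mean((prediction - reference) ** 2))
    return 100.0 * rmse / (reference.max() - reference.min())

def pearson_r(reference, prediction):
    """Pearson correlation coefficient between reference and prediction."""
    return np.corrcoef(reference, prediction)[0, 1]

# Placeholder data: a model with a constant offset of 0.1 from the benchmark.
benchmark = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
model = benchmark + 0.1
agreement = {
    "nrmse_percent": nrmse_percent(benchmark, model),
    "pearson_r": pearson_r(benchmark, model),
}
```

The example also shows why the two metrics should be read together: a constant offset leaves the correlation at 1 while the NRMSE is non-zero.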

Protocol for Cross-Code Verification:

- Independent Implementation: Develop or utilize two independent computational solvers (e.g., a custom FEA script and a commercial package like FEBio or Abaqus) for the same model.

- Identical Input: Ensure all model inputs are numerically identical.

- Output Comparison: Compare results from both codes. Discrepancies indicate potential bugs in one or both implementations.

Solution Verification: Estimating Numerical Error

Protocol for Grid Convergence Index (GCI) Study:

- Generate Three Meshes: Create systematically refined spatial grids (fine, medium, coarse) with a constant refinement ratio $r > 1.3$.

- Run Simulations: Compute a key quantity of interest (QoI), $f$, on each mesh.

- Calculate Apparent Order: Solve for the observed convergence order $p$ using the results from the three grids.

- Compute GCI: $GCI_{fine} = F_s \left| \frac{f_{fine} - f_{medium}}{f_{fine}} \right| / (r^p - 1)$, where $F_s$ is a safety factor (typically 1.25).

- Report Uncertainty: The GCI provides an error band for the numerical solution on the finest grid.
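
The GCI procedure can be sketched as below, assuming a constant refinement ratio and monotonic convergence, so that the observed order follows directly from the ratio of successive solution differences. The function name and the three QoI values are hypothetical; the values were constructed so that the error scales with the square of the grid spacing.

```python
import math

def grid_convergence_index(f_fine, f_medium, f_coarse, refinement_ratio,
                           safety_factor=1.25):
    """Return (observed order p, GCI on the fine grid in %, extrapolated value)."""
    eps_fine = f_fine - f_medium
    eps_coarse = f_medium - f_coarse
    ratio = eps_coarse / eps_fine
    if ratio <= 0.0:
        raise ValueError("Oscillatory convergence: the observed order is undefined.")
    p = math.log(ratio) / math.log(refinement_ratio)
    denominator = refinement_ratio**p - 1.0
    gci_fine_percent = 100.0 * safety_factor * abs(eps_fine / f_fine) / denominator
    # Richardson extrapolation toward zero grid spacing.
    f_extrapolated = f_fine + eps_fine / denominator
    return p, gci_fine_percent, f_extrapolated

# Hypothetical QoI values following f(h) = 10 + 0.1 h^2 for h = 1, 2, 4.
p, gci_percent, f_extrap = grid_convergence_index(10.1, 10.4, 11.6, refinement_ratio=2.0)
```

When the refinement ratio differs between grid pairs, the observed order has to be found iteratively instead, so this closed-form version applies only to the constant-ratio case described in the protocol.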

Table 1: Convergence Analysis for a Finite Element Bone Mechanics Model (MMS)

| Mesh Size (mm) | L2 Norm Error (Displacement) | Observed Convergence Rate (p) | Theoretical Rate |

|---|---|---|---|

| 2.0 | 4.82e-3 | -- | 2.0 |

| 1.0 | 1.21e-3 | 1.99 | 2.0 |

| 0.5 | 3.02e-4 | 2.00 | 2.0 |

Table 2: Benchmark Comparison for Knee Joint Contact Pressure

| Output Metric | Benchmark Result (MPa) | Our Model Result (MPa) | NRMSE (%) |

|---|---|---|---|

| Peak Contact Pressure (Medial) | 5.67 ± 0.15 | 5.71 | 1.2 |

| Peak Contact Pressure (Lateral) | 3.24 ± 0.12 | 3.19 | 1.8 |

| Contact Area (cm²) | 3.85 ± 0.10 | 3.81 | 1.5 |

Table 3: Grid Convergence Index (GCI) for Wall Shear Stress in an Arterial Model

| Grid Level | Elements (millions) | Wall Shear Stress (Pa) | GCI (%) (vs. Finer Grid) |

|---|---|---|---|

| Coarse | 0.8 | 2.45 | 9.7 |

| Medium | 2.5 | 2.67 | 3.1 |

| Fine | 7.1 | 2.73 | -- |

The Verification Workflow

Title: The Iterative Verification Process for Biomechanical Models

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Tools and Resources for Model Verification

| Item / Solution | Function in Verification | Example / Specification |

|---|---|---|

| Code Verification Suite | Automates MMS and convergence testing. | Custom Python/Matlab scripts with numpy/scipy; CEED library for high-order FEM. |

| Benchmark Database | Provides gold-standard data for calculation checks. | SilicoBone (bone biomechanics), FDA Nozzle (CFD), IMPLANT (joint contact). |

| Multi-Physics Solver | Enables cross-code verification and complex model solving. | FEBio (biomechanics-specific), COMSOL Multiphysics, Abaqus Unified FEA. |

| Mesh Generation & Refinement Tool | Creates structured grids for solution verification studies. | Gmsh, ANSYS Meshing, MeshLab; scriptable for batch refinement. |

| Convergence Metric Calculator | Computes error norms and GCI. | VerifAgent (Python), NPARC Alliance GCI tools. |

| Version Control System | Tracks code changes, ensuring reproducibility of verification steps. | Git with GitHub or GitLab. |

| Containerization Platform | Packages the software environment for consistent, repeatable execution. | Docker or Singularity containers. |

Signaling Pathway for Integrative V&V

This diagram contextualizes verification within the broader model credibility assessment.

Title: Verification's Role in the Overall V&V Pathway

Verification is the non-negotiable first step in establishing trust in biomechanical models. By systematically applying MMS, benchmark comparisons, and solution error quantification, researchers can ensure their computational implementation is free of coding errors and numerical inaccuracies. This rigorous foundation, documented with clear convergence metrics and benchmarks, is a prerequisite for meaningful validation against biological experiments, ultimately leading to credible models for drug development and biomedical research.

Within the Verification and Validation (V&V) process for biomechanical models, Validation Planning is the critical phase that determines the model's credibility for its intended use. This step translates the context of use (COU) into a concrete, actionable plan. It defines what must be tested experimentally, how it will be tested, and the quantitative criteria for success, ensuring the model's predictions are sufficiently accurate for decision-making in biomedical research and drug development.

Defining Relevant Validation Experiments

Validation experiments must be designed to challenge the model's predictive capability under conditions mirroring its COU. They are not simply replications of calibration data.

Hierarchy of Validation Evidence

A tiered approach is recommended, moving from simple to complex.

Title: Hierarchy of Validation Evidence for Biomechanical Models

Key Experiment Categories

| Category | Description | Example (Bone Fracture Healing Model) |

|---|---|---|

| Component-Level | Validation of individual sub-models or assumptions. | Validating the osteoblast differentiation rate equation against in vitro cell culture data. |

| Process-Level | Validation of intermediate, integrative model behaviors. | Validating predicted spatial-temporal pattern of callus stiffness against micro-CT/mechanical testing in a rodent segmental defect. |

| System-Level | Validation of overall model output against independent, holistic outcomes. | Validating the predicted time to full mechanical recovery against radiographic & biomechanical data in a large animal study. |

Establishing Quantitative Success Criteria

Success criteria are pre-defined, quantitative metrics that define acceptable agreement between model predictions and experimental data.

Common Validation Metrics

| Metric | Formula / Description | Interpretation & Threshold |

|---|---|---|

| Mean Absolute Error (MAE) | $MAE = \frac{1}{n}\sum_{i=1}^{n} \lvert y_i - \hat{y}_i \rvert$ | Average error magnitude. Threshold is COU-dependent (e.g., < 15% of data range). |

| Root Mean Square Error (RMSE) | $RMSE = \sqrt{\frac{1}{n}\sum_{i=1}^{n} (y_i - \hat{y}_i)^2}$ | Sensitive to larger errors. Useful when outliers are critical. |

| Coefficient of Determination (R²) | $R^2 = 1 - \frac{\sum (y_i - \hat{y}_i)^2}{\sum (y_i - \bar{y})^2}$ | Proportion of variance explained. Common target: R² > 0.75. |

| Bland-Altman Limits of Agreement | Mean difference ± 1.96 SD of differences. | Assess bias and precision. Acceptable range defined by clinical/biological relevance. |

| Sensitivity & Specificity (for categorical outcomes) | Sensitivity = TP/(TP+FN); Specificity = TN/(TN+FP) | For diagnostic or risk stratification models. Targets based on clinical need. |
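
The continuous metrics in the table can be computed in a few lines, as in the sketch below. Here `measured` plays the role of y and `predicted` the role of ŷ; the function name and the four data points are illustrative assumptions, and the Bland-Altman differences are taken as prediction minus measurement.

```python
import numpy as np

def validation_metrics(measured, predicted):
    """MAE, RMSE, R^2, and Bland-Altman bias with 95% limits of agreement."""
    y = np.asarray(measured, dtype=float)
    y_hat = np.asarray(predicted, dtype=float)
    residual = y - y_hat
    difference = y_hat - y                       # Bland-Altman differences
    bias = difference.mean()
    half_width = 1.96 * difference.std(ddof=1)   # sample standard deviation
    return {
        "mae": np.mean(np.abs(residual)),
        "rmse": np.sqrt(np.mean(residual**2)),
        "r2": 1.0 - np.sum(residual**2) / np.sum((y - y.mean()) ** 2),
        "bias": bias,
        "limits_of_agreement": (bias - half_width, bias + half_width),
    }

# Placeholder data for illustration only.
metrics = validation_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
```

Computing all metrics from one function helps keep the pre-defined success criteria and the reported values consistent across validation experiments.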

Detailed Experimental Protocols

Protocol: In Vivo Validation of a Bone Adaptation Model

Objective: Validate a finite element (FE) bone remodeling model's prediction of trabecular architecture changes under controlled loading.

1. Experimental Design:

- Subjects: 12 female C57BL/6 mice (n=6 control, n=6 loaded).

- Intervention: Controlled dynamic axial loading applied to the right tibia via an in vivo loading device (e.g., ElectroForce 5200). Left tibia serves as internal control.

- Loading Regime: 9N peak force, 2Hz, 60 cycles/day, 5 days/week for 3 weeks.

2. Data Acquisition:

- Pre- & Post-Experiment Imaging: In vivo micro-CT scans at 10.5µm isotropic voxel size at day 0 and day 21.

- Outcome Measures: BV/TV (Bone Volume/Total Volume), Trabecular Thickness (Tb.Th), Trabecular Separation (Tb.Sp) quantified in the proximal tibial metaphysis.

3. Model Simulation:

- Input: FE mesh generated from Day 0 scan. Applied experimental loading conditions.

- Output: Predicted spatial changes in bone density (apparent density) mapped over 21 days.

4. Comparison & Analysis:

- Spatial Registration: Align simulated density map with Day 21 scan data.

- Quantitative Comparison: Calculate RMSE and R² between predicted and measured BV/TV in defined VOIs.

- Success Criterion: R² > 0.70 and RMSE < 15% of the mean measured BV/TV change.

Protocol: In Vitro Validation of a Cartilage Mechanobiology Model

Objective: Validate a chondrocyte metabolic network model predicting gene expression under cytokine stimulation.

1. Experimental Design:

- Cell Culture: Human primary chondrocytes, passage 2, seeded in 3D alginate beads.

- Stimuli: Treatment with IL-1β (10 ng/mL) ± dynamic compressive strain (15%, 1Hz, 2h/day) for 48h. Control: unloaded, no IL-1β.

2. Data Acquisition:

- Gene Expression: qPCR for COL2A1, ACAN, MMP13, ADAMTS5 at 24h and 48h. Normalized to GAPDH. Reported as fold change relative to control using the 2^(-ΔΔCt) method.

- Protein Synthesis: Sulphated Glycosaminoglycan (sGAG) release measured via DMMB assay in media at 48h.

3. Model Simulation:

- Input: Model initialized with baseline metabolic rates. Simulate IL-1β receptor binding and mechanical signal transduction pathways.

- Output: Predicted fold-changes in gene expression and sGAG release for all conditions.

4. Comparison & Analysis:

- Temporal Correlation: Compare predicted vs. measured fold-change time-courses for each gene.

- Multi-Output Metric: Calculate a combined error metric across all outputs.

- Success Criterion: Model predictions must fall within the 95% confidence intervals of the experimental mean for at least 80% of the measured time points/outputs.
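
One way to evaluate a coverage-type criterion like this is sketched below. It assumes the 95% confidence interval of each experimental mean is built from the sample standard deviation and a Student's t quantile, which is a common choice but not one specified in the protocol; the number of replicates and all data values are hypothetical.

```python
import numpy as np
from scipy import stats

def fraction_within_ci(predicted, exp_mean, exp_sd, n_replicates, confidence=0.95):
    """Fraction of predictions inside the t-based CI of each experimental mean."""
    predicted = np.asarray(predicted, dtype=float)
    exp_mean = np.asarray(exp_mean, dtype=float)
    exp_sd = np.asarray(exp_sd, dtype=float)
    t_crit = stats.t.ppf(0.5 + confidence / 2.0, df=n_replicates - 1)
    half_width = t_crit * exp_sd / np.sqrt(n_replicates)
    inside = np.abs(predicted - exp_mean) <= half_width
    return inside.mean()

# Hypothetical fold-change data for five outputs, three replicates each.
predicted = [1.1, 2.3, 0.6, 3.9, 4.2]
exp_mean = [1.0, 2.0, 0.5, 3.0, 4.0]
exp_sd = [0.2, 0.2, 0.2, 0.2, 0.2]
fraction = fraction_within_ci(predicted, exp_mean, exp_sd, n_replicates=3)
meets_criterion = fraction >= 0.80
```

With few replicates the intervals are wide, so a criterion of this kind becomes easier to satisfy as experimental precision drops; reporting the interval widths alongside the pass/fail result guards against that.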

Title: Signaling Pathways in Cartilage Mechanobiology Validation

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Validation | Example Product / Specification |

|---|---|---|

| 3D Bioreactor Systems | Apply controlled mechanical stimuli (compression, shear, tension) to cell-seeded constructs in vitro. | Bose ElectroForce BioDynamic, Flexcell systems. |

| In Vivo Loading Devices | Apply precise, non-invasive mechanical loads to rodent limbs for bone/cartilage adaptation studies. | |

| Ultrasound Bone Density Phantoms | Calibration standards for BMD measurement validation. | |

| Micro-CT Imaging System | High-resolution 3D imaging for quantifying bone morphology, tissue mineralization, and scaffold integration. | Scanco Medical µCT 50, Bruker Skyscan 1272. |

| Biomechanical Tester | Measure structural and material properties of tissues (e.g., tensile strength, compressive modulus). | Instron 5944, TA Instruments ElectroForce. |

| ELISA & Multiplex Assay Kits | Quantify specific protein biomarkers (cytokines, matrix proteins, enzymes) in serum, media, or tissue lysates. | R&D Systems DuoSet ELISA, Luminex multiplex panels. |

| qPCR Master Mix & Probes | Quantify gene expression changes with high sensitivity and specificity for pathway validation. | TaqMan Gene Expression Assays, SYBR Green master mixes. |

| Primary Cells & Culture Media | Biologically relevant cell sources with optimized media for maintaining phenotype during experiments. | Lonza primary chondrocytes/osteoblasts, STEMCELL Technologies differentiation kits. |

| Finite Element Analysis Software | Create and solve biomechanical models to generate comparative predictions. | ANSYS Mechanical, FEBio, Abaqus. |

| Statistical Analysis Software | Perform rigorous comparison of model predictions vs. experimental data and compute validation metrics. | R, Python (SciPy/NumPy), GraphPad Prism. |

Within the broader framework of Verification & Validation (V&V) for biomechanical models, Step 3 is the critical empirical phase where model predictions are confronted with physical reality. This stage transforms a theoretically sound, verified model into a validated tool for research or clinical decision-making. For biomechanical models—spanning tissue-scale, organ-scale, or full-body simulations—validation involves sourcing high-quality, contextually relevant experimental or clinical benchmark data and executing a structured, quantitative comparison. This guide details the protocols, data handling, and analytical frameworks necessary to robustly execute this step, ensuring model credibility for researchers, scientists, and drug development professionals.

Sourcing Experimental & Benchmark Data

The quality of validation is intrinsically linked to the quality of the data used. Sourcing requires a strategic approach to identify datasets that are relevant, reliable, and sufficiently detailed.

Data Source Categories

- Primary Experimental Data: Data generated in-house through dedicated validation experiments. This offers maximum control over protocols and measurement modalities.

- Public Repositories & Literature Data: Sourced from published studies or curated databases. Requires careful assessment of methodological reporting.

- Clinical Benchmark Data: Includes imaging (MRI, CT), motion capture, gait analysis, and intra-operative measurements. Often sourced from collaborations or open-access biobanks.

Key Considerations for Data Selection

- Relevance: The experimental conditions (loading, boundary conditions, rate, tissue state) must match the model's intended use case.

- Uncertainty Quantification: Prefer datasets that report measurement error, standard deviations, or confidence intervals.

- Completeness: Data should include full geometry, material properties, boundary conditions, and outcome measures as reported in the original study.

Experimental Protocols for Key Validation Studies

Detailed methodologies for common experiments used to validate biomechanical models are outlined below.

Uniaxial/Biaxial Tensile Testing of Soft Tissues

Purpose: To validate constitutive material models (e.g., hyperelastic, viscoelastic) for ligaments, tendons, and engineered tissues.

Protocol:

- Sample Preparation: Harvest tissue specimens to standardized dimensions (e.g., 10mm x 5mm x 2mm). Hydrate in physiological saline.

- Mounting: Secure ends of the specimen in mechanical grips, ensuring alignment to prevent shear.

- Preconditioning: Apply 10-20 cycles of low-load cyclic strain (1-2%) to achieve a repeatable mechanical response.

- Testing: Apply displacement-controlled stretching at a constant strain rate (e.g., 0.1% s⁻¹) until failure or a target strain.

- Data Acquisition: Record force (via load cell) and displacement (via actuator or video extensometer). Calculate engineering stress and strain.

- Output: Stress-strain curves for model input and validation targets (failure stress, strain, tangent modulus).
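
The conversion from raw force-displacement records to engineering stress, strain, and a tangent modulus can be sketched as follows. The specimen dimensions reuse the example values from the sample preparation step, interpreted here as a 10 mm gauge length, 5 mm width, and 2 mm thickness; that interpretation, the strain window, and the synthetic linear data are assumptions.

```python
import numpy as np

def engineering_stress_strain(force_n, displacement_mm, gauge_length_mm,
                              width_mm, thickness_mm):
    """Engineering stress (MPa) and strain (-) from force and displacement."""
    area_mm2 = width_mm * thickness_mm                        # initial cross-section
    stress_mpa = np.asarray(force_n, dtype=float) / area_mm2  # N/mm^2 = MPa
    strain = np.asarray(displacement_mm, dtype=float) / gauge_length_mm
    return stress_mpa, strain

def tangent_modulus(stress_mpa, strain, strain_window):
    """Slope of a straight-line fit to the stress-strain curve inside a window."""
    lower, upper = strain_window
    mask = (strain >= lower) & (strain <= upper)
    slope, _intercept = np.polyfit(strain[mask], stress_mpa[mask], 1)
    return slope

# Synthetic linear specimen with a modulus of 100 MPa.
displacement_mm = np.linspace(0.0, 1.0, 51)
force_n = 100.0 * (5.0 * 2.0) * (displacement_mm / 10.0)
stress, strain = engineering_stress_strain(force_n, displacement_mm, 10.0, 5.0, 2.0)
modulus_mpa = tangent_modulus(stress, strain, strain_window=(0.04, 0.08))
```

For real soft-tissue data the curve has a nonlinear toe region, so the strain window used for the tangent modulus should be fixed in the validation plan rather than chosen after the fact.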

Indentation Testing for Articular Cartilage

Purpose: To validate contact mechanics and localized deformation predictions in osteochondral models.

Protocol:

- Sample Preparation: Mount osteochondral explant or whole joint in a bath of phosphate-buffered saline (PBS).

- Probe Calibration: Use a spherical or flat-ended indenter tip. Calibrate with known weights.

- Site Mapping: Perform a grid of indentations across the articular surface.

- Testing: At each site, apply a ramp-hold displacement (e.g., 10% of tissue thickness). Hold for 60s to observe stress relaxation.

- Data Acquisition: Record force and displacement at high frequency. Calculate the equilibrium elastic modulus from the relaxed (equilibrium) force using Hayes' solution for indentation of a thin elastic layer.

- Output: Force-displacement curves and spatial maps of elastic/viscoelastic properties.

In-Vivo Motion Capture & Kinetics

Purpose: To validate musculoskeletal (MSK) model predictions of joint kinematics, kinetics, and muscle forces.

Protocol:

- Marker Placement: Apply reflective markers to anatomical landmarks per a defined model (e.g., Plug-in Gait, CAST).

- Motion Capture: Record subject performing activities (gait, jumping) using a 3D optoelectronic system (e.g., Vicon, Qualisys). Synchronize with force plates.

- EMG Acquisition: Place surface electrodes on relevant muscles. Record electromyography (EMG) activity.

- Processing: Filter marker trajectories and force data. Calculate joint angles, moments, and powers using inverse kinematics and dynamics.

- Output: Time-series data for joint angles, moments, ground reaction forces, and EMG envelopes for comparison with MSK simulation outputs.

Quantitative Comparison & Validation Metrics

Model outputs (e.g., stress, strain, displacement, joint angle) must be quantitatively compared to experimental benchmarks. Data should be summarized in structured tables.

Table 1: Example Validation Metrics for Different Model Outputs

| Model Output Type | Recommended Validation Metric | Formula / Description | Acceptability Threshold (Example) |

|---|---|---|---|

| Time-Series Data (e.g., Joint Angle, Force) | Root Mean Square Error (RMSE) | $RMSE = \sqrt{\frac{1}{n}\sum_{i=1}^{n}(y_i - \hat{y}_i)^2}$ | < 2 x Experimental SD |

| Time-Series Data (e.g., Joint Angle, Force) | Coefficient of Determination (R²) | $R^2 = 1 - \frac{\sum (y_i - \hat{y}_i)^2}{\sum (y_i - \bar{y})^2}$ | > 0.75 |

| Scalar Values (e.g., Failure Load, Stiffness) | Relative Error (%) | $RE = \frac{\lvert \hat{y} - y \rvert}{y} \times 100\%$ | < 15% |

| Spatial Field Data (e.g., Strain Map) | Field Correlation (e.g., CORR) | Spatial correlation coefficient between predicted and measured fields. | > 0.80 |

| Spatial Field Data (e.g., Strain Map) | Relative Error Map | Pixel/voxel-wise relative error, presented as a distribution. | Mean < 20% |

Table 2: Example Validation Table for a Femoral Implant Micromotion Model

| Benchmark Source (Experimental Study) | Measured Mean Micromotion (µm) | Model-Predicted Micromotion (µm) | Relative Error (%) | Validation Metric (R²) |

|---|---|---|---|---|

| Viceconti et al., 2020 (in vitro) | 125 ± 18 | 138 | +10.4% | 0.89 (kinematics) |

| Pancanti et al., 2003 (in vitro) | 89 ± 22 | 81 | -9.0% | N/A |

| Composite Benchmark | Range: 50-200 | Range: 55-190 | Mean: 9.7% | Aggregate > 0.8 |
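
The relative-error column of Table 2 can be reproduced from the measured and predicted values in its first two rows, as in this short sketch. The additional check of whether each prediction lies within one experimental standard deviation is an illustrative extra, not a criterion stated in the table.

```python
import numpy as np

# Values taken from the first two rows of Table 2 (micromotion in micrometres).
measured_mean = np.array([125.0, 89.0])
measured_sd = np.array([18.0, 22.0])
predicted = np.array([138.0, 81.0])

# Signed relative error: positive means the model over-predicts.
signed_relative_error = 100.0 * (predicted - measured_mean) / measured_mean
mean_abs_relative_error = np.mean(np.abs(signed_relative_error))

# Illustrative extra check: is each prediction within one experimental SD?
within_one_sd = np.abs(predicted - measured_mean) <= measured_sd
```

Keeping the sign of the error, as the table does, shows whether a model is systematically over- or under-predicting across benchmarks, which a mean absolute value alone would hide.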

Visualization of Core Concepts

Diagram 1: Biomechanical Model Validation Workflow

Diagram 2: Key Signaling Pathways in Mechanobiology Validation

The Scientist's Toolkit: Research Reagent & Material Solutions

Table 3: Essential Materials for Biomechanical Validation Experiments

| Item / Reagent | Function in Validation | Example Product / Specification |

|---|---|---|

| Phosphate-Buffered Saline (PBS) | Maintains physiological pH and osmolarity for ex-vivo tissue testing, preventing tissue degradation. | Thermo Fisher Scientific #10010023 |

| Protease Inhibitor Cocktail | Prevents tissue degradation during preparation and testing by inhibiting endogenous proteases. | Sigma-Aldrich P8340 |

| Biaxial/Tensile Testing System | Applies controlled multi-axial loads to tissue specimens; essential for constitutive model validation. | Instron BioPuls, CellScale Biotester |

| Digital Image Correlation (DIC) System | Non-contact optical method to measure full-field 2D/3D strain maps on tissue surfaces. | Correlated Solutions VIC-3D, Dantec Dynamics Q-450 |

| Micro-CT Scanner | Provides high-resolution 3D geometry and bone microstructure for model geometry reconstruction and validation. | Scanco Medical μCT 50, Bruker Skyscan 1272 |

| Fluorescent Microspheres (for μPIV) | Tracers for micro-scale Particle Image Velocimetry (μPIV) to measure fluid flow in bioreactors or porous media. | Thermo Fisher Scientific FluoSpheres (0.5-2.0 μm) |

| Motion Capture System | Gold standard for capturing high-accuracy kinematic data for musculoskeletal model validation. | Vicon Vero, Qualisys Miqus M3 |

| Telemeterized Orthopedic Implant | Provides in-vivo, direct measurement of load or strain in implants; the ultimate benchmark for in-silico models. | Instrumented femoral stems (e.g., from OrthoLoad dataset) |

The verification and validation (V&V) of biomechanical models is a critical component in modern drug development, particularly for therapeutics targeting musculoskeletal, cardiovascular, and pulmonary systems. This whitepaper details the application of rigorously validated models to assess the biomechanical efficacy and safety of novel drug candidates, ensuring robust predictions of in vivo performance and reducing late-stage attrition.

Core Principles of Model V&V in Biomechanics

Model Credibility is built upon the ASME V&V 40 framework, which establishes a risk-informed credibility assessment. For drug development, the Question of Interest (e.g., "Does Drug X reduce femoral fracture risk by 30% under compressive load?") dictates the required Credibility Goals. Key activities include:

- Verification: Confirming that the numerical algorithms are implemented correctly in the software (Code Verification) and estimating the numerical error, such as discretization error, in a specific simulation (Calculation Verification).

- Validation: Quantifying model accuracy by comparing computational results to experimental data from relevant physical systems.

Quantitative Data from Recent Studies

Table 1: Validation Metrics for Representative Biomechanical Models in Drug Testing

| Model Type (Application) | Reference Data Source | Key Comparison Metric | Model Prediction Error | Acceptable Threshold (per V&V Plan) | Status |

|---|---|---|---|---|---|

| Finite Element (FE) Bone Model (Osteoporosis drug: fracture risk) | Ex vivo mechanical testing of human trabecular bone (n=12 specimens) | Apparent Elastic Modulus (MPa) | Mean Error: 8.7% | ≤15% | Pass |

| Computational Fluid Dynamics (CFD) Airway Model (Bronchodilator: wall shear stress) | In vitro 3D-printed airway replica with PIV flow measurement | Wall Shear Stress (Pa) at Generation 3 | RMS Error: 0.12 Pa | ≤0.2 Pa | Pass |

| Multibody Dynamics Muscle Model (Myopathy drug: muscle force) | Isokinetic dynamometer data from clinical trial (n=20 patients) | Peak Isometric Force (N) | R² = 0.89 | R² ≥ 0.85 | Pass |

| FE Arterial Wall Model (Anti-hypertensive: plaque stress) | MRI-based wall strain in animal model (n=6 subjects) | Peak Circumferential Stress (kPa) | Max Local Error: 18.3% | ≤20% | Pass |

Table 2: Impact of Model-Based Assessment on Preclinical Program Efficiency

| Development Phase | Traditional Approach (Months) | Model-Informed Approach (Months) | Time Saving | Key Model Contribution |

|---|---|---|---|---|

| Lead Optimization | 7-9 | 4-5 | ~40% | High-throughput screening of compound effects on tissue-level mechanics. |

| Preclinical Safety | 10-12 | 6-8 | ~35% | Predicting off-target biomechanical effects (e.g., valve stress, cartilage load). |

| Phase I/II Bridging | 6-8 | 3-4 | ~50% | Extrapolating biomechanical response across dosages and populations. |

Detailed Experimental Protocols for Validation

Protocol: Ex Vivo Validation of a Bone Finite Element Model for Osteoanabolic Drugs

Objective: To validate a micro-FE model of trabecular bone against mechanical testing for predicting changes in bone strength.

Materials: Human trabecular bone cores (from femoral head), µCT scanner, mechanical testing system, FE software (e.g., FEBio, Abaqus).

Procedure:

- Imaging: Scan bone cores (8mm diameter) using µCT at isotropic resolution (16µm).

- Mesh Generation: Convert segmented images directly to a linear tetrahedral FE mesh.

- Material Properties: Assign bone tissue a linear elastic, isotropic material model (E=15 GPa, ν=0.3).

- Boundary Conditions: Apply a uniaxial compressive displacement to the top surface, fixing the bottom.

- Simulation: Solve for reaction forces and apparent modulus.

- Physical Test: Perform uniaxial compression test on the same core at 0.01%/s strain rate.

- Comparison: Calculate error between simulated and experimental apparent modulus. Perform mesh convergence study.
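
The comparison step reduces to computing an apparent modulus from each force-displacement pair and then a percent error, as sketched below. The 8 mm diameter comes from the imaging step; the core height, forces, and displacement are hypothetical, and the 15% limit mirrors the example threshold listed for the bone model in Table 1.

```python
import math

def apparent_modulus_mpa(reaction_force_n, displacement_mm, diameter_mm, height_mm):
    """Apparent modulus of a cylindrical core: (F / A) / (delta / L)."""
    area_mm2 = math.pi * (diameter_mm / 2.0) ** 2
    stress_mpa = reaction_force_n / area_mm2   # N/mm^2 = MPa
    strain = displacement_mm / height_mm
    return stress_mpa / strain

def percent_error(simulated, experimental):
    """Unsigned error of the simulation relative to the experiment, in percent."""
    return 100.0 * abs(simulated - experimental) / abs(experimental)

# Hypothetical values: 10 mm tall core compressed by 0.05 mm.
e_sim = apparent_modulus_mpa(100.0, 0.05, diameter_mm=8.0, height_mm=10.0)
e_exp = apparent_modulus_mpa(92.0, 0.05, diameter_mm=8.0, height_mm=10.0)
error = percent_error(e_sim, e_exp)
passes = error <= 15.0  # example threshold from the V&V plan
```

Because both moduli share the same geometry here, the error depends only on the force ratio; with real data, uncertainty in the measured specimen dimensions also feeds into the experimental modulus.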

Protocol: In Vitro-to-In Silico Validation of an Airway CFD Model for Inhalation Therapeutics

Objective: To validate a CFD model of particle deposition and wall shear stress in a human airway bifurcation.

Materials: 3D-printed idealized airway (G3-G5), particle image velocimetry (PIV) system, nebulizer, CFD software (e.g., OpenFOAM, STAR-CCM+).

Procedure:

- Experimental Flow Mapping: Perfuse the airway replica with glycerin-water solution matching kinematic viscosity of air. Seed flow with tracer particles. Use PIV to capture 2D velocity fields at multiple planes under steady inhalation (Re=1500).

- CFD Model Setup: Recreate identical geometry. Apply measured inflow velocity profile. Use k-ω SST turbulence model.

- Sensitivity Analysis: Assess impact of mesh density (boundary layer refinement) and turbulence parameters.

- Validation Comparison: Extract velocity magnitude and wall shear stress from both PIV data and CFD results at matched locations. Quantify using normalized root mean square error (NRMSE).

- Drug Deposition Simulation: Introduce a discrete phase of drug aerosol particles (1-5 µm) into the validated flow field to predict regional deposition fractions.

Diagrams of Key Processes

Diagram 1: Model V&V Workflow for Drug Development

Diagram 2: Biomechanical Efficacy Pathway from Drug to Outcome

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Biomechanical Model Validation Experiments

| Item | Category | Function in Validation | Example Product/Model |
|---|---|---|---|
| 3D Bioprinted Tissue Constructs | Biological Scaffold | Provides anatomically accurate, living tissue analogs for direct mechanical testing and model calibration. | Cellink Bio X6, Allevi 3 |
| Tissue-Mimicking Phantoms | Synthetic Material | Simulates mechanical properties (elasticity, viscosity) of soft tissues for controlled in vitro validation. | Synbone, Simulab Tissue Mimics |
| Fluorescent Microspheres | Tracer Particles | Enable visualization and quantification of flow patterns (PIV) or drug deposition in vascular/airway models. | Thermo Fisher FluoSpheres |
| Polyacrylamide Hydrogels | Tunable Substrate | Allows precise control of substrate stiffness to study cellular mechanotransduction in drug response. | Matrigen Softwell Plates |
| Miniaturized Force Sensors | Measurement Device | Measures contractile forces in engineered muscle tissues or small animal models for functional validation. | Aurora Scientific 1200A, Futek LSB200 |
| Stable Cell Lines with Fluorescent Reporters (e.g., GFP-actin) | Cell Culture | Visualizes cytoskeletal dynamics and morphological changes in response to drug-induced mechanical stimuli. | ATCC, Sigma-Aldrich |
| μCT Contrast Agents (e.g., Hexabrix) | Imaging Agent | Enhances soft tissue contrast in μCT for detailed 3D geometry reconstruction for FE modeling. | Guerbet |
| Biaxial Mechanical Testing System | Testing Equipment | Characterizes anisotropic, nonlinear material properties of tissues for constitutive model fitting. | Bose ElectroForce, CellScale BioTester |

Within the broader thesis of establishing a robust Guide to Verification & Validation (V&V) for biomechanical models in drug and medical device development, the creation of an immutable, comprehensive audit trail is paramount. Regulatory submissions to agencies like the U.S. Food and Drug Administration (FDA) demand not just results, but a transparent, traceable narrative of the entire research lifecycle. This technical guide details the principles and methodologies for constructing an audit trail that meets regulatory scrutiny, ensuring the credibility and reproducibility of biomechanical models used in safety and efficacy assessments.

Regulatory Framework and Core Principles

An audit trail is a secure, computer-generated, time-stamped electronic record that allows for reconstruction of the course of events relating to the creation, modification, and deletion of an electronic record. It is a core requirement under regulations such as 21 CFR Part 11 for electronic records, and it is expected under quality management system standards such as ISO 13485:2016 for medical devices.

Core Principles (commonly summarized as ALCOA+):

- Attributable: Clearly records who performed an action.

- Legible: Human and machine-readable.

- Contemporaneous: Recorded at the time of the action.

- Original: The first capture of the data.

- Accurate: Free from errors, with amendment procedures.

- Complete: All data, including repeat or reanalysis attempts.

- Consistent: Chronological, with date/time stamps following a protocol.

- Enduring: Preserved for the required retention period.

- Available: Accessible for review and inspection over its lifetime.

Audit Trail Architecture for Biomechanical Model V&V

The audit trail must encapsulate the entire V&V workflow, from conceptual model to finalized submission asset. The following diagram outlines the core logical flow and key documentation touchpoints.

Diagram Title: Audit Trail Data Flow in Model V&V Process

Key Documentation & Quantitative Data Tables

All experimental and computational data must be summarized clearly. Below are example tables for validation activities.

Table 1: Validation Experiment Protocol Summary

| Protocol ID | Objective | Test Article (Biomechanical Model) | Experimental System | Key Measured Outputs | Acceptance Criterion Reference |
|---|---|---|---|---|---|
| VAL-EXP-2023-01 | Quantify strain fields in bone-implant construct | CAD model of femoral stem implant | Servohydraulic tester with Digital Image Correlation (DIC) | Principal Strain (με), Strain Location | ASTM F2996-13, Model Prediction ±15% |
| VAL-EXP-2023-02 | Measure pressure distribution in knee joint | 3D Finite Element Knee Model | Pressure-sensitive film in cadaveric joint | Contact Pressure (MPa), Contact Area (mm²) | Peer-reviewed literature data, Correlation R² > 0.85 |

Table 2: Sample Validation Results & Discrepancy Log

| Data Point | Experimental Mean (SD) | Model Prediction | Percent Difference | Within Acceptance? | Discrepancy Log ID (If No) |
|---|---|---|---|---|---|
| Peak Principal Strain (με) | 2450 (112) | 2610 | +6.5% | Yes | N/A |
| Medial Contact Pressure (MPa) | 4.1 (0.3) | 3.5 | -14.6% | Yes | N/A |
| Lateral Contact Area (mm²) | 225 (18) | 190 | -15.6% | No | DISC-2023-001 |
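The percent differences and acceptance decisions in Table 2 can be reproduced with a short script such as the one below. Applying a ±15% tolerance to all three rows is an assumption inferred from the table's Yes/No column; Table 1 states ±15% explicitly only for the strain protocol.

```python
def percent_difference(model, experiment):
    """Signed percent difference of the model prediction relative to the experimental mean."""
    return 100.0 * (model - experiment) / experiment


TOLERANCE_PCT = 15.0  # assumed acceptance band, applied to every row for illustration

# (data point, experimental mean, model prediction) taken from Table 2
rows = [
    ("Peak Principal Strain (microstrain)", 2450.0, 2610.0),
    ("Medial Contact Pressure (MPa)", 4.1, 3.5),
    ("Lateral Contact Area (mm^2)", 225.0, 190.0),
]

results = []
for name, exp_mean, prediction in rows:
    diff = percent_difference(prediction, exp_mean)
    within = abs(diff) <= TOLERANCE_PCT
    results.append((name, round(diff, 1), within))
    print(f"{name}: {diff:+.1f}% -> {'within' if within else 'outside'} acceptance")
```

Generating the table from a script rather than by hand means the calculation itself becomes part of the traceable record.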

Detailed Methodologies for Cited Experiments

Protocol VAL-EXP-2023-01: Strain Measurement via DIC

Objective: Validate finite element model predictions of bone strain in a composite femur with an implanted hip stem. Materials: See "Scientist's Toolkit" below. Procedure:

- Specimen Preparation: A composite femoral bone is prepared according to manufacturer specifications. The hip stem is implanted by a certified orthopaedic surgeon using surgical cement.

- DIC Setup: The bone surface is painted with a stochastic black-on-white speckle pattern. A calibrated 3D DIC system (two cameras) is positioned to capture the full field of view of the proximal femur.

- Loading & Data Acquisition: The construct is mounted in a servo-hydraulic testing machine under axial compressive load per ASTM F2996-13. A pre-load of 100 N is applied. The system is then loaded to 2000 N at a rate of 10 N/s. DIC images are captured at 5 Hz throughout loading.

- Data Processing: DIC software computes full-field 3D displacements and strains. Principal strains (ε1, ε2) are extracted from six regions of interest (ROIs) corresponding to model output nodes.

- Comparison: Strain values from the ROIs at peak load are directly compared to the corresponding FEA-predicted strains. Percent difference and spatial correlation are calculated.

Protocol VAL-EXP-2023-02: Joint Contact Pressure Measurement

Objective: Validate a finite element knee model's prediction of contact mechanics under static load. Procedure:

- Specimen Preparation: A fresh-frozen cadaveric knee joint is thawed and dissected to preserve ligaments and cartilage. Pressure-sensitive film is cut to size for the medial and lateral compartments.

- Film Calibration: Film batches are calibrated using a materials tester with known pressures, creating a density-to-pressure calibration curve.

- Testing: The joint is aligned in a custom fixture and loaded axially to 1500 N (simulating single-leg stance) for 60 seconds, allowing the film to develop.

- Image & Data Analysis: The film is scanned at high resolution. Using calibration software, pixel density is converted to pressure. Peak pressure, mean pressure, and contact area are computed for each compartment.

- Comparison: Results are compared to model outputs for the same loading condition. A linear regression analysis is performed to assess correlation.
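The regression in the comparison step can be sketched as an ordinary least-squares fit of model predictions against measurements. The paired values below are hypothetical; the R² threshold of 0.85 is the acceptance criterion listed for this protocol in Table 1.

```python
def linear_fit_r2(x, y):
    """Ordinary least-squares fit y = slope * x + intercept; returns (slope, intercept, r_squared)."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have equal length of at least 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


R2_THRESHOLD = 0.85  # from Table 1, protocol VAL-EXP-2023-02

# Hypothetical paired contact pressures (MPa): film measurement vs. model prediction.
measured = [4.1, 2.6, 3.4, 1.9, 2.2, 3.0]
predicted = [3.5, 2.4, 3.1, 1.8, 2.3, 2.7]

slope, intercept, r2 = linear_fit_r2(measured, predicted)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r2:.3f}, pass={r2 > R2_THRESHOLD}")
```

A high R² alone does not show agreement in magnitude, so the slope and intercept are worth reporting alongside it: a slope well below 1 indicates systematic under-prediction even when the correlation is strong.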

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Biomechanical Validation Experiments

| Item | Function in Validation | Example Product/Category |
|---|---|---|
| Composite Biomechanical Bones | Provides a standardized, reproducible surrogate for human bone, eliminating biologic variability in initial validation. | Sawbones (Pacific Research Laboratories) |
| Pressure-Sensitive Film | Quantitatively measures contact pressure magnitude and distribution between articulating surfaces. | Fujifilm Prescale Super Low Pressure |
| Digital Image Correlation (DIC) System | Provides full-field, non-contact 3D measurements of surface deformation and strain during mechanical testing. | Correlated Solutions VIC-3D, Dantec Dynamics Q-400 |
| Servo-Hydraulic Mechanical Tester | Applies precise, programmable loads and displacements to test specimens. | Instron 8500, MTS Bionix |
| Optical Motion Capture System | Captures high-accuracy kinematic data from cadaveric or simulated joint experiments for model input/validation. | Vicon, OptiTrack |
| Standardized Test Fixtures | Ensures consistent, repeatable loading alignment and boundary conditions across experiments. | Custom or ASTM-standard fixtures (e.g., for femoral fatigue) |
| Electronic Lab Notebook (ELN) | Serves as the primary, timestamped record for experimental protocols, raw observations, and initial data capture. | LabArchives, Benchling |
| Metadata Management Software | Links raw data files (DIC, film scans) with their experimental context (protocol, specimen ID, parameters). | Custom scripts, LabKey Server |

Signaling Pathway for Audit Trail Generation

The following diagram illustrates the logical "pathway" or process from a scientific action to its indelible record in the audit trail.

Diagram Title: Data Flow for Automated Audit Trail Entry Creation
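One way to make log entries tamper-evident is to chain them with cryptographic hashes, so that each entry commits to the one before it. The sketch below illustrates that idea only; the field names and the `append_entry` and `verify_chain` helpers are hypothetical, and a hash chain on its own is not a complete 21 CFR Part 11 implementation (access control, electronic signatures, and retention are out of scope here).

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry):
    """SHA-256 over every field except the stored hash itself."""
    payload = {k: v for k, v in entry.items() if k != "entry_hash"}
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, user, action, record_id, details):
    """Append an attributable, timestamped entry linked to the previous one."""
    entry = {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,
        "record_id": record_id,
        "details": details,
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS_HASH,
    }
    entry["entry_hash"] = _digest(entry)
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _digest(entry):
            return False
        prev_hash = entry["entry_hash"]
    return True


audit_log = []
append_entry(audit_log, "jdoe", "create", "VAL-EXP-2023-01", {"file": "dic_raw.h5"})
append_entry(audit_log, "asmith", "modify", "VAL-EXP-2023-01", {"reason": "ROI redefined"})
print(verify_chain(audit_log))
```

Because each hash covers the previous entry's hash, editing any earlier record invalidates every later link, which is what allows a reviewer to detect retrospective changes.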

Integrating rigorous documentation and traceability practices into the V&V workflow for biomechanical models is non-negotiable for regulatory acceptance. By implementing a structured architecture that captures data from both computational and experimental streams, timestamped and linked with immutable logs, researchers build a defensible evidence package. This audit trail not only satisfies regulatory requirements but fundamentally strengthens the scientific rigor and reproducibility of the research, a core tenet of any comprehensive Guide to V&V for biomechanical models.

Overcoming Hurdles: Troubleshooting Common V&V Issues and Optimizing Model Performance

Within the broader thesis on establishing robust Verification and Validation (V&V) processes for biomechanical models, the occurrence of a failed validation represents a critical inflection point. It is not merely a setback but a rich source of information regarding model fidelity, experimental design, and underlying assumptions. This guide provides a systematic, root-cause analysis (RCA) framework to diagnose and resolve such failures, ensuring models progress toward predictive reliability in applications ranging from orthopedic device design to drug delivery system development.

The Root-Cause Analysis Framework: A Systematic Approach

The proposed framework moves beyond ad-hoc troubleshooting, structuring the investigation into a phased process. The objective is to isolate the source of discrepancy between model predictions and experimental observations.

Phase I: Discrepancy Characterization & Triage

The first step is to quantitatively and qualitatively characterize the nature of the validation failure.

Table 1: Validation Discrepancy Characterization Matrix

| Discrepancy Metric | Description | Quantification Example | Potential Implication |
|---|---|---|---|
| Spatial Error Pattern | Localized vs. global mismatch. | >50% error concentrated at bone-implant interface. | Boundary condition or local material property error. |
| Temporal Dynamics | Phase shift, amplitude mismatch, transient vs. steady-state. | Predicted strain peak leads experimental data by 0.1s. | Damping or viscoelastic parameters incorrect. |
| Sensitivity to Inputs | How error changes with varying inputs (load, rate). | Error increases non-linearly with load magnitude. | Non-linear material model inadequacy. |
| Statistical Significance | Is the mismatch outside experimental uncertainty? | Model mean is 4.2 SDs from experimental mean (p < 0.001). | Systematic error, not random noise. |
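The "Statistical Significance" row can be checked with a simple standardized distance, as sketched below. This assumes the experimental scatter is approximately normal and uses the experimental standard deviation as the yardstick, as the table's example does; the numeric values are hypothetical.

```python
import math


def z_and_p(model_value, exp_mean, exp_sd):
    """Distance of the model value from the experimental mean in SDs, with a two-sided normal p-value."""
    z = (model_value - exp_mean) / exp_sd
    p_two_sided = math.erfc(abs(z) / math.sqrt(2.0))
    return z, p_two_sided


# Hypothetical values chosen so the model lies 4.2 SDs from the experimental mean.
z, p = z_and_p(model_value=121.0, exp_mean=100.0, exp_sd=5.0)
print(f"z = {z:.1f}, two-sided p = {p:.2e}")
```

With few replicate specimens, a t-based test on the standard error of the mean may be more appropriate than this normal approximation.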

Diagram Title: RCA Framework Phased Workflow

Phase II: Targeted Investigation of Causal Categories

Hypotheses from Phase I guide investigation into four primary causal categories.

A. Input & Boundary Condition Error

- Protocol for Sensitivity Analysis: Conduct a global sensitivity analysis (e.g., Sobol indices) using a designed computational experiment (e.g., 500 Latin Hypercube samples). Perturb all uncertain inputs (material properties, loading magnitudes/directions, constraint definitions) within physiologically plausible ranges. Rank inputs by their contribution to output variance at the validation point.
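The sensitivity protocol can be sketched with a hand-written first-order Sobol estimator driven by two Latin Hypercube designs. Everything here is illustrative: `toy_model` is a hypothetical stand-in for the biomechanical model, inputs are scaled to [0, 1), and the estimator is the pick-and-freeze form commonly attributed to Saltelli, which needs n × (d + 2) model runs rather than a single design of n samples. The sample size is chosen to give stable estimates for the toy model and is not a recommendation.

```python
import random


def latin_hypercube(n, d, rng):
    """n points in [0, 1)^d with exactly one point per stratum in each dimension."""
    columns = []
    for _ in range(d):
        strata = list(range(n))
        rng.shuffle(strata)
        columns.append([(s + rng.random()) / n for s in strata])
    return [[columns[j][i] for j in range(d)] for i in range(n)]


def toy_model(x):
    """Hypothetical surrogate: output depends most strongly on x[0], least on x[2]."""
    return 4.0 * x[0] + 2.0 * x[1] + 1.0 * x[2]


def first_order_sobol(model, n, d, seed=0):
    """Estimate first-order Sobol indices with the pick-and-freeze scheme."""
    rng = random.Random(seed)
    a = latin_hypercube(n, d, rng)
    b = latin_hypercube(n, d, rng)
    y_a = [model(row) for row in a]
    y_b = [model(row) for row in b]
    pooled = y_a + y_b
    mean = sum(pooled) / len(pooled)
    variance = sum((y - mean) ** 2 for y in pooled) / (len(pooled) - 1)
    indices = []
    for i in range(d):
        # Rows of A with only column i replaced by the value from B.
        y_ab = [model(ra[:i] + [rb[i]] + ra[i + 1:]) for ra, rb in zip(a, b)]
        # Centering y_b does not change the expectation but reduces estimator noise.
        v_i = sum((yb - mean) * (yab - ya) for yb, yab, ya in zip(y_b, y_ab, y_a)) / n
        indices.append(v_i / variance)
    return indices


sobol = first_order_sobol(toy_model, n=4000, d=3, seed=0)
ranking = sorted(range(3), key=lambda i: sobol[i], reverse=True)
print("First-order indices:", [round(s, 2) for s in sobol], "ranking:", ranking)
```

For this additive toy model the analytical indices are 16/21, 4/21, and 1/21, which gives a quick sanity check on the estimator before it is pointed at an expensive FE or CFD model, where a surrogate model is often fitted first to keep the number of full simulations manageable.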