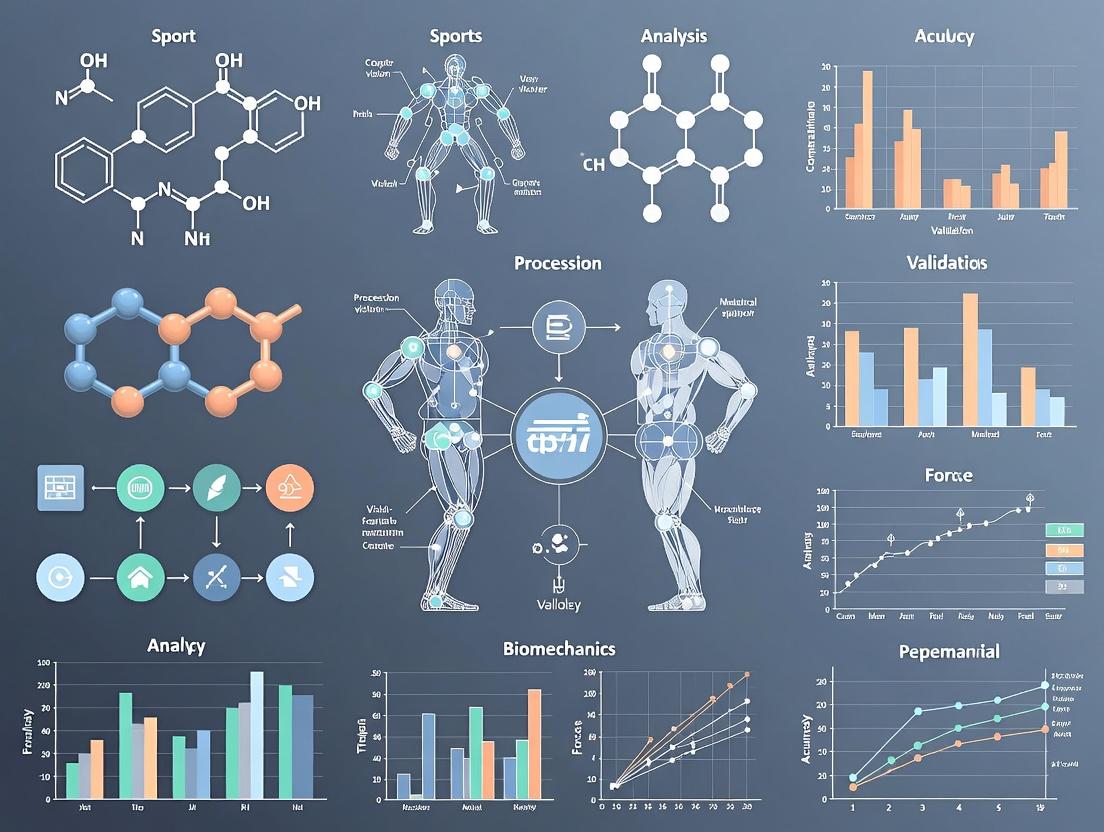

Revolutionizing Sports Biomechanics: How AI and Computer Vision Validate Human Movement for Research and Drug Development


Abstract

This article explores the transformative integration of computer vision (CV) and artificial intelligence (AI) in sports biomechanics validation. Aimed at researchers, scientists, and drug development professionals, it details the shift from traditional lab-based motion capture to markerless, data-rich analysis. We cover foundational principles, current methodological applications for quantifying athlete kinematics and kinetics, strategies for optimizing and troubleshooting AI models in real-world scenarios, and rigorous validation protocols comparing CV/AI to gold-standard systems. The synthesis provides a critical framework for adopting these technologies to enhance objectivity, scalability, and precision in human movement analysis for biomedical research and therapeutic development.

From Lab to Field: The Foundational Shift to AI-Powered Biomechanics

Application Notes

Computer vision (CV) for biomechanics validation represents a paradigm shift from traditional marker-based motion capture (MOCAP). It is defined as the use of algorithmic techniques to automatically extract, analyze, and validate quantitative measures of human movement from digital imagery, without the need for physical markers or sensors attached to the subject. Within sports biomechanics and drug development research, its core function is to provide a scalable, ecologically valid ground truth or comparative dataset for validating both AI-driven movement models and the biomechanical efficacy of therapeutic interventions.

The validation operates on two primary axes:

- Method Validation: CV systems (e.g., markerless pose estimation, 3D reconstruction from video) are validated against gold-standard laboratory systems (e.g., Vicon, Qualisys). Metrics include joint angle error (degrees), positional error (mm), and temporal parameters (s).

- Biomechanical Outcome Validation: CV-derived biomechanical variables (e.g., knee adduction moment, stride variability) are used as sensitive, objective endpoints to validate the physiological impact of sports training regimens or pharmacological treatments in clinical trials.

Table 1: Quantitative Performance Summary of Markerless CV vs. Marker-Based MOCAP

| Biomechanical Variable | Typical CV Error (vs. MOCAP) | Key Influencing Factors | Suitability for Drug Trial Endpoint |
|---|---|---|---|
| Joint Angles (Sagittal) | 2° - 5° RMSE | Camera view, motion speed | High (for gross motor tasks) |
| Joint Angles (Frontal/Transverse) | 5° - 10° RMSE | Camera number, occlusion | Moderate (requires multi-view setup) |
| Spatiotemporal (Stride Time) | < 2% error | Frame rate, algorithm | Very High |
| Keypoint Position (2D) | < 5 pixels error | Resolution, lighting | Low (primarily for validation) |
| Keypoint Position (3D) | 20 - 40 mm RMSE | Camera calibration, setup | Moderate to High (for movement volumes) |
| Peak Vertical Ground Reaction Force | 10% - 20% error (via ML models) | Model training data quality | High (emerging, needs trial-specific validation) |

Experimental Protocols

Protocol 1: Validation of Markerless 3D Pose Estimation for Gait Analysis in a Clinical Trial Setting

Objective: To establish the concurrent validity of a multi-camera markerless CV system for measuring knee flexion-extension range of motion during walking, for use as a primary endpoint in a knee osteoarthritis drug trial.

Materials & Reagents:

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Protocol |
|---|---|
| Gold-Standard MOCAP System (e.g., Vicon) | Provides reference 3D biomechanical data for validation. |
| Synchronized Multi-Camera CV Array (≥4 cameras) | Records video data for markerless 3D reconstruction. |
| Calibration Object (e.g., Wand, L-Frame) | Enables geometric calibration of all cameras to a common 3D coordinate system. |
| Pose Estimation Algorithm (e.g., OpenPose, HRNet, DeepLabCut) | Software to extract 2D human keypoints from video frames. |
| 3D Triangulation & Biomechanics Software (e.g., Anipose, Theia3D, custom pipeline) | Converts 2D keypoints from multiple views into 3D coordinates and computes joint angles. |
| Standardized Walkway | Controls for environment and ensures consistent task execution. |
| Statistical Analysis Package (e.g., R, Python with SciPy) | For calculating RMSE, Pearson's r, and Bland-Altman limits of agreement. |

Methodology:

- Setup & Calibration: Position the gold-standard MOCAP system per manufacturer guidelines. Within the same capture volume, position the CV cameras (≥4, 100+ Hz) to maximize multi-view coverage. Perform a static calibration of both systems. Synchronize systems via a hardware trigger or post-hoc timestamp alignment.

- Participant Preparation: Fit participants with both retro-reflective markers for MOCAP and neutral-colored, form-fitting clothing for CV.

- Data Acquisition: Record participants performing 10 walking trials at a self-selected speed. Capture data simultaneously from both systems.

- Data Processing: a. Process MOCAP data using standard biomechanical modeling (e.g., Plug-in-Gait) to compute 3D knee angles. b. Process synchronized videos through the 2D pose estimation algorithm. c. Triangulate 2D keypoints to 3D using calibrated camera parameters. d. Apply the same biomechanical model to the CV-derived 3D keypoints to compute knee angles.

- Validation Analysis: For the primary outcome (peak knee flexion during stance), calculate Root Mean Square Error (RMSE), Pearson correlation coefficient (r), and 95% Bland-Altman limits of agreement between the CV and MOCAP systems across all trials and participants.
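The validation analysis step can be sketched in a few lines of standard-library Python. This is a minimal illustration, assuming one paired value per trial (for example, peak knee flexion in degrees); the function name `agreement_metrics` and the sample numbers are hypothetical and not taken from any study.

```python
import math

def agreement_metrics(cv, mocap):
    """Return RMSE, Pearson r, bias, and 95% Bland-Altman limits for paired samples."""
    if len(cv) != len(mocap) or len(cv) < 3:
        raise ValueError("need two equal-length series with at least 3 pairs")
    n = len(cv)
    diffs = [c - m for c, m in zip(cv, mocap)]

    # Root mean square error of the paired differences
    rmse = math.sqrt(sum(d * d for d in diffs) / n)

    # Pearson correlation coefficient
    mean_cv = sum(cv) / n
    mean_mo = sum(mocap) / n
    cov = sum((c - mean_cv) * (m - mean_mo) for c, m in zip(cv, mocap))
    var_cv = sum((c - mean_cv) ** 2 for c in cv)
    var_mo = sum((m - mean_mo) ** 2 for m in mocap)
    r = cov / math.sqrt(var_cv * var_mo)

    # Bland-Altman: mean difference (bias) +/- 1.96 * SD of the differences
    bias = sum(diffs) / n
    sd = math.sqrt(sum((d - bias) ** 2 for d in diffs) / (n - 1))
    loa = (bias - 1.96 * sd, bias + 1.96 * sd)
    return {"rmse": rmse, "r": r, "bias": bias, "loa": loa}

# Hypothetical peak knee flexion values (degrees), one per trial
mocap_peak = [18.2, 20.1, 17.5, 19.8, 21.0, 18.9]
cv_peak = [19.0, 21.4, 18.1, 21.2, 22.5, 19.6]
result = agreement_metrics(cv_peak, mocap_peak)
print(result)
```

Pooling all trials and participants as above ignores the repeated-measures structure; a trial-level analysis nested within participants may be more appropriate for a real study.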

Visualization 1: CV Biomechanics Validation Workflow

Protocol 2: Validation of a Single-View CV Algorithm for Assessing Movement Quality in Sport

Objective: To validate a single-view, AI-based CV algorithm's ability to classify "proper" vs. "improper" squat technique against expert human rater consensus.

Methodology:

- Dataset Creation: Record frontal and sagittal video of athletes performing squats under varied conditions. A panel of 3 expert biomechanists provides a consensus label for each repetition (Proper/Improper).

- Algorithm Training: Train a deep learning model (e.g., a convolutional neural network with a temporal component) on the sagittal view videos and expert labels. Reserve a separate, participant-independent set for testing.

- Validation Protocol: Apply the trained model to the held-out test set videos.

- Analysis: Compute confusion matrices, accuracy, precision, recall, F1-score, and Cohen's Kappa between the algorithm's classification and the expert consensus.
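As a rough sketch of the analysis step, the metrics listed above can be computed directly from the four confusion-matrix counts. The function name `binary_metrics`, the choice of "Improper" as the positive class, and the example labels are illustrative assumptions.

```python
def binary_metrics(expert, predicted, positive="Improper"):
    """Confusion counts and agreement metrics for a two-class labelling task."""
    tp = fp = fn = tn = 0
    for e, p in zip(expert, predicted):
        if p == positive and e == positive:
            tp += 1
        elif p == positive:
            fp += 1
        elif e == positive:
            fn += 1
        else:
            tn += 1
    n = tp + fp + fn + tn
    accuracy = (tp + tn) / n
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)

    # Cohen's kappa: observed agreement corrected for chance agreement
    p_observed = accuracy
    p_expected = ((tp + fp) * (tp + fn) + (tn + fn) * (tn + fp)) / (n * n)
    kappa = ((p_observed - p_expected) / (1 - p_expected)
             if p_expected != 1 else 0.0)
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn, "accuracy": accuracy,
            "precision": precision, "recall": recall, "f1": f1, "kappa": kappa}

# Hypothetical held-out repetitions: expert consensus vs. model output
expert = ["Proper"] * 6 + ["Improper"] * 4
model = ["Proper"] * 5 + ["Improper"] + ["Improper"] * 3 + ["Proper"]
metrics = binary_metrics(expert, model)
print(metrics)
```

Kappa is worth reporting alongside accuracy because a dataset dominated by one class can yield high accuracy from chance agreement alone.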

Visualization 2: Movement Quality Validation Logic

This document details the application of core AI architectures for validating human movement in sports biomechanics research. The quantitative analysis of athletic motion—from gait and form to injury risk and intervention efficacy—requires precise, non-invasive, and scalable measurement tools. Convolutional Neural Networks (CNNs), 2D/3D pose estimation models, and 3D reconstruction techniques form an integrated pipeline to transform standard video data into clinically relevant, three-dimensional biomechanical parameters. This validation is critical for developing and assessing therapeutic interventions, including pharmacological agents aimed at enhancing recovery or performance.

Core Architectures: Technical Specifications & Comparative Performance

Convolutional Neural Networks (CNNs) for Feature Extraction

CNNs serve as the foundational feature extractors from raw image data. Architectures have evolved for efficiency and accuracy.

Table 1: Performance Comparison of Key CNN Backbones for Pose Estimation

| Model | Input Size | Params (M) | GFLOPs | Top-1 Acc (%) (ImageNet) | Common Use in Pose |
|---|---|---|---|---|---|
| ResNet-50 | 224x224 | 25.6 | 4.1 | 76.2 | Backbone for HRNet, SimpleBaseline |
| ResNet-152 | 224x224 | 60.2 | 11.6 | 78.3 | High-accuracy backbone |
| HRNet-W32 | 256x192 | 28.5 | 7.1 | 75.9 | Maintains high-res feature maps |
| EfficientNet-B0 | 224x224 | 5.3 | 0.39 | 77.1 | Mobile/edge deployment |
| MobileNetV3 | 224x224 | 5.4 | 0.22 | 75.2 | Real-time applications |

Protocol 1.1: CNN Feature Map Visualization for Biomechanical Cues

Objective: To verify that the CNN backbone is activating on relevant biomechanical landmarks.

Procedure:

- Input Preparation: Extract video frames from a high-speed camera capturing a sprint start.

- Forward Pass & Activation: Pass a frame through the chosen CNN (e.g., ResNet-50). Use a forward hook to extract the activation maps from the final convolutional layer.

- Grad-CAM Application: Compute gradients of the target output (e.g., classification score for "sprinting") relative to the activation maps. Generate a heatmap highlighting important regions.

- Validation: Overlay the heatmap on the original image. Confirm that high activation aligns with anatomically critical zones (knee, ankle, hip joints, major muscle groups).

Materials: Pre-trained CNN model, high-speed video dataset, PyTorch/TensorFlow with Grad-CAM library.

2D & 3D Human Pose Estimation Models

These models detect skeletal keypoints from monocular or multi-view video.

Table 2: Quantitative Performance of Pose Estimation Models on Standard Benchmarks

| Model (2D) | MPJPE (mm) | PCK@0.2 | Inference Time (ms) | Notes |
|---|---|---|---|---|
| HRNet | ~89.0 (Human3.6M) | ~92.7 (COCO) | 30 (GPU) | State-of-the-art accuracy |
| HigherHRNet | N/A | 90.6 (COCO) | 40 (GPU) | Excels at scale variation |

| Model (3D) | MPJPE (mm) | Protocol #1 | Input | Notes |
|---|---|---|---|---|
| VideoPose3D | 46.8 | 37.2 | 2D sequence | Temporal convolutions |
| PoseFormer | 44.3 | 35.2 | 2D sequence | Transformer-based |
| METRO (Mesh) | 54.0 (PA-MPJPE) | 50.9 | Image | Direct mesh regression |

Protocol 1.2: Multi-Camera 3D Pose Reconstruction for Biomechanics

Objective: To reconstruct accurate 3D joint kinematics for inverse dynamics analysis.

Procedure:

- System Setup: Synchronize ≥4 genlocked high-speed cameras (≥200 Hz) around a calibration volume encompassing the movement (e.g., a force plate).

- Calibration: Perform a dynamic wand calibration using Direct Linear Transform (DLT) to obtain intrinsic and extrinsic camera parameters.

- 2D Pose Estimation: Run a robust 2D pose estimator (e.g., HRNet) on synchronized frames from all cameras.

- Triangulation: Use the calibrated camera parameters and the 2D keypoints from multiple views to triangulate the 3D position of each joint per time frame.

- Filtering & Smoothing: Apply a low-pass Butterworth filter (cutoff frequency 10-15 Hz) to the 3D trajectory data to remove high-frequency noise.

Materials: Synchronized multi-camera system, calibration wand, force plates, pose estimation software (e.g., Anipose, DeepLabCut).
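The filtering step can be illustrated with a small, dependency-free sketch. It assumes a second-order Butterworth low-pass designed with the bilinear transform and applied forward then backward to cancel phase lag; the protocol does not specify the filter order or the number of passes, so both are assumptions here, as are the function names. In practice a signal-processing library would normally be used instead.

```python
import math

def butter2_lowpass_coeffs(cutoff_hz, fs_hz):
    """Second-order Butterworth low-pass coefficients (bilinear transform)."""
    if not 0 < cutoff_hz < fs_hz / 2:
        raise ValueError("cutoff must lie between 0 and the Nyquist frequency")
    k = math.tan(math.pi * cutoff_hz / fs_hz)
    norm = 1.0 / (1.0 + math.sqrt(2.0) * k + k * k)
    b0 = k * k * norm
    b = (b0, 2.0 * b0, b0)                      # feed-forward terms
    a = (2.0 * (k * k - 1.0) * norm,            # feedback terms a1, a2
         (1.0 - math.sqrt(2.0) * k + k * k) * norm)
    return b, a

def _filter_once(x, b, a):
    # Start from the steady state for a constant x[0] to limit start-up transients
    x1 = x2 = y1 = y2 = x[0]
    out = []
    for xn in x:
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
        out.append(yn)
        x2, x1 = x1, xn
        y2, y1 = y1, yn
    return out

def zero_phase_lowpass(x, cutoff_hz, fs_hz):
    """Forward-backward filtering; note the double pass lowers the effective cutoff."""
    b, a = butter2_lowpass_coeffs(cutoff_hz, fs_hz)
    forward = _filter_once(list(x), b, a)
    backward = _filter_once(forward[::-1], b, a)
    return backward[::-1]

# Hypothetical 200 Hz joint trajectory (one coordinate, in mm): slow motion plus jitter
fs = 200.0
raw = [100.0 * math.sin(2 * math.pi * 1.0 * i / fs) + 2.0 * (-1) ** i
       for i in range(400)]
smooth = zero_phase_lowpass(raw, cutoff_hz=12.0, fs_hz=fs)
```

Each coordinate of each 3D keypoint would be filtered independently in this way. The nominal cutoff is not corrected for the second pass, which is a deliberate simplification.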

3D Reconstruction & Human Mesh Recovery (HMR)

These models generate a full 3D mesh of the human body, providing surface geometry for detailed analysis.

Table 3: 3D Human Shape & Pose Estimation Models

| Model | Output | MPJPE (mm) | PVE (mm) | Key Feature |
|---|---|---|---|---|
| HMR (2018) | SMPL parameters | 88.0 | 139.3 | End-to-end regression |
| SPIN | SMPL parameters | 62.5 | 116.4 | Optimization loop within network |
| PARE | SMPL parameters | 57.9 | 103.2 | Part attention to occlusions |
| SPEC | SMPL parameters | 52.5 | 94.4 | Camera-aware regression using estimated perspective camera parameters |

Protocol 1.3: Volumetric & Surface Analysis from Reconstructed Mesh

Objective: To estimate segmental volumes and surface strain for muscle dynamics analysis.

Procedure:

- Mesh Generation: Input multi-view video of an athlete into an HMR pipeline (e.g., SPIN or multi-view optimization) to generate a temporally coherent SMPL mesh sequence.

- Segmentation: Map the SMPL mesh vertices to predefined body segments (thigh, shank, torso).

- Volume Calculation: For each segment and time point, compute the volume enclosed by its mesh vertices using a convex hull or tetrahedralization algorithm.

- Surface Strain Analysis: Track the displacement of a grid of virtual markers on the mesh surface. Calculate Lagrangian strain between markers to estimate skin-surface deformation patterns during movement.

Materials: Multi-view video data, SMPL model, 3D processing library (Trimesh, PyTorch3D).
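For the volume calculation step, one tetrahedralization-style approach is to sum the signed volumes of the tetrahedra formed by each triangle and the origin. The sketch below assumes the segment mesh is closed (watertight) and consistently oriented, which a raw SMPL segment cut from the full body is not unless the open ends are capped. The function name `mesh_volume` and the unit-cube test mesh are illustrative.

```python
def mesh_volume(vertices, faces):
    """Volume of a closed, consistently oriented triangle mesh.

    vertices: list of (x, y, z) tuples; faces: list of (i, j, k) vertex indices.
    Each face contributes the signed volume of the tetrahedron (origin, a, b, c).
    """
    total = 0.0
    for i, j, k in faces:
        ax, ay, az = vertices[i]
        bx, by, bz = vertices[j]
        cx, cy, cz = vertices[k]
        # Scalar triple product a . (b x c) equals 6x the signed tetrahedron volume
        total += (ax * (by * cz - bz * cy)
                  - ay * (bx * cz - bz * cx)
                  + az * (bx * cy - by * cx))
    return abs(total) / 6.0

# Unit cube as a stand-in for a capped body segment (outward-facing triangles)
cube_vertices = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0),
                 (0, 0, 1), (1, 0, 1), (1, 1, 1), (0, 1, 1)]
cube_faces = [(0, 2, 1), (0, 3, 2),   # bottom
              (4, 5, 6), (4, 6, 7),   # top
              (0, 1, 5), (0, 5, 4),   # front
              (3, 7, 6), (3, 6, 2),   # back
              (0, 4, 7), (0, 7, 3),   # left
              (1, 2, 6), (1, 6, 5)]   # right
volume = mesh_volume(cube_vertices, cube_faces)
```

Unlike a convex hull, this sum follows concave surfaces, so it does not overestimate the volume of segments with indentations.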

Integrated Experimental Workflow for Drug Trial Validation

Diagram 1: AI Biomechanics Pipeline for Intervention Studies

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials & Computational Tools

| Item/Category | Example/Product | Function in Biomechanics AI Research |
|---|---|---|
| Motion Capture (Gold Standard) | Vicon, OptiTrack | Provides ground-truth 3D kinematics for training and validating AI models. |
| Force Measurement | AMTI, Kistler Force Plates | Measures ground reaction forces for inverse dynamics, validating AI-derived kinetics. |
| High-Speed Cameras | Phantom, GoPro (High Frame Rate) | Captures input video with minimal motion blur for accurate pose estimation. |
| Pose Estimation Framework | OpenPose, MMPose, DeepLabCut | Open-source libraries for training and deploying 2D/3D pose models. |
| 3D Human Model | SMPL, SMPL-X | Parametric body models enabling 3D mesh reconstruction from images. |
| Biomechanics Software | OpenSim, Visual3D | Used to compute joint kinetics (moments, powers) from AI-derived kinematics and force data. |
| Deep Learning Framework | PyTorch, TensorFlow | Core platforms for developing, training, and testing custom CNN and HMR architectures. |
| Synthetic Data Engine | NVIDIA Omniverse, Blender | Generates photorealistic, annotated training data for rare movements or pathology. |

Detailed Experimental Protocol: Gait Analysis for Intervention Assessment

Protocol 4.1: Quantifying Changes in Knee Kinematics Post-Therapeutic Intervention

Objective: To detect statistically significant changes in knee adduction moment (KAM) and flexion angle following administration of a hypothetical osteoarthritis therapeutic.

Materials:

- Patients/athletes from clinical trial cohort.

- 8+ synchronized infrared cameras (Vicon) with retroreflective markers (gold standard).

- 4+ synchronized high-speed RGB cameras (validation & AI input).

- Force plates embedded in walkway.

- AI Pipeline: Custom HRNet -> Triangulation -> OpenSim pipeline.

Procedure:

- Baseline & Follow-up Data Acquisition: Record each subject walking at a self-selected speed. Collect synchronized marker data (Vicon), multi-view RGB video, and force plate data pre-intervention (Day 0) and post-intervention (Week 12).

- Gold Standard Processing: Process marker and force data in Visual3D to compute 3D knee angles and inverse dynamics KAM.

- AI-Derived Processing: a. 2D Pose: Run HRNet on all RGB camera views. b. 3D Triangulation: Use calibrated camera parameters to reconstruct 3D keypoints. c. Kinematic Filtering: Apply a 6 Hz low-pass Butterworth filter to the 3D keypoint trajectories. d. Kinetic Computation: Input filtered AI kinematics and force plate data into a scaled OpenSim model to compute KAM via inverse dynamics.

- Validation & Analysis: a. Calculate Pearson correlation (r) and Root Mean Square Error (RMSE) between AI-derived and Vicon-derived knee flexion angle and KAM for all baseline trials. b. For the primary endpoint, perform a paired-sample t-test (or non-parametric equivalent) on the AI-derived peak KAM and stance-phase knee flexion ROM between Day 0 and Week 12. c. Report effect size (Cohen's d).
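The primary-endpoint comparison can be sketched as a paired analysis of per-subject values. The snippet below computes the paired t statistic and a paired-samples effect size (here taken as the mean difference divided by the SD of the differences, often written d_z, which is only one of several conventions for Cohen's d). The function name and KAM values are hypothetical; obtaining a p-value additionally requires the t distribution with n - 1 degrees of freedom from a statistics package.

```python
import math
import statistics

def paired_change_summary(baseline, followup):
    """Paired t statistic and effect size for follow-up minus baseline."""
    if len(baseline) != len(followup) or len(baseline) < 2:
        raise ValueError("need two equal-length series with at least 2 subjects")
    diffs = [f - b for b, f in zip(baseline, followup)]
    n = len(diffs)
    mean_diff = statistics.fmean(diffs)
    sd_diff = statistics.stdev(diffs)          # sample SD of the differences
    t_stat = mean_diff / (sd_diff / math.sqrt(n))
    cohen_dz = mean_diff / sd_diff
    return {"n": n, "df": n - 1, "mean_diff": mean_diff,
            "t": t_stat, "cohen_dz": cohen_dz}

# Hypothetical AI-derived peak KAM per subject (normalized units)
kam_day0 = [3.1, 2.8, 3.4, 3.0, 2.9]
kam_week12 = [2.8, 2.6, 3.0, 2.9, 2.5]
summary = paired_change_summary(kam_day0, kam_week12)
print(summary)
```

A negative mean difference here indicates a reduction in peak KAM at follow-up.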

Statistical Endpoints:

- Primary: Change in AI-derived peak KAM.

- Secondary: Correlation (r > 0.85) between AI and Vicon kinematics/kinetics; Change in knee flexion ROM.

Diagram 2: Gait Analysis Validation Workflow

1. Application Notes

The integration of computer vision (CV) and artificial intelligence (AI) is changing how sports biomechanics research is validated and is expanding its scope. This shift moves measurement beyond constrained laboratory settings, enabling the quantification of human movement in real-world athletic environments. Three advantages drive the transformation: markerless motion capture, enhanced ecological validity, and high-throughput data generation. Together they create a framework for performance optimization and injury prevention research, with applications extending to the development of movement-related drugs and therapeutics.

- Markerless Systems: Traditional optical motion capture relies on physical markers and calibrated camera systems, creating subject preparation burden and laboratory confinement. Modern deep learning-based pose estimation algorithms (e.g., OpenPose, MediaPipe, AlphaPose, and customized CNN architectures) extract 2D or 3D keypoints directly from video streams. This eliminates the need for markers, reduces data collection barriers, and allows retrospective analysis of existing video footage.

- Ecological Validity: By operating in natural training and competition environments (fields, courts, gyms), markerless CV systems capture "in-situ" biomechanics. This yields data that more accurately reflects the cognitive loads, environmental constraints, and tactical decisions inherent to sport, leading to more generalizable and actionable findings for coaches and athletes.

- High-Throughput Data: The automation of motion analysis allows for the processing of thousands of movement trials across hundreds of athletes over entire seasons. This big-data approach facilitates longitudinal monitoring, the identification of subtle movement signatures associated with performance or injury risk, and the development of robust, population-level biomechanical models.

Table 1: Quantitative Comparison of Motion Capture Methodologies

| Feature | Traditional Marker-Based Systems | AI-Driven Markerless Systems |
|---|---|---|
| Setup Time | 30-60 minutes per subject | Minimal (camera setup only) |
| Capture Volume | Limited to lab space (e.g., 10m x 10m) | Scalable (entire field/court) |
| Output Data Rate | ~100-500 Hz | ~30-240 Hz (standard video) |
| Typical Accuracy (RMSE) | <1-2 mm (3D joint center) | 20-40 mm (3D, in-the-wild)* |
| Throughput (Subjects/Day) | Low (5-10) | Very High (50+) |
| Ecological Validity | Low | High |

*Accuracy continuously improving with advanced models and multi-view setups.

2. Experimental Protocols

Protocol 1: Validation of Markerless 3D Pose Estimation Against Gold-Standard Vicon System

Objective: To determine the concurrent validity of a multi-camera AI markerless system for measuring lower-extremity joint kinematics during dynamic sporting tasks.

Materials:

- 8-camera Vicon MX motion capture system (gold standard).

- 6 synchronized high-definition (1080p, 60Hz) consumer video cameras.

- Calibration object (checkerboard or L-frame).

- Informed consent from participant athletes.

Method:

- Setup & Calibration: Position Vicon reflective markers on participant per Plug-in-Gait model. Arrange 6 video cameras around a 10m x 5m capture volume, ensuring overlapping fields of view. Perform a static volume calibration for both systems.

- Task Performance: Participants perform 5 trials each of: a) treadmill running at 4.0 m/s, b) side-step cutting, c) vertical jump.

- Synchronization: Use an audible/visual trigger event recorded by all systems for temporal synchronization.

- Data Processing: Process Vicon data using Nexus software to obtain 3D joint angles. Process synchronized video through a trained deep learning model (e.g., MotionBERT or SPIN) for 3D pose estimation, followed by biomechanical modeling to compute joint angles.

- Analysis: Calculate Root Mean Square Error (RMSE), Pearson's correlation coefficient (r), and Bland-Altman limits of agreement for key joint angles (knee flexion/abduction, hip rotation) across the movement cycle.
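Before the two systems can be compared, joint angles must be derived from the markerless 3D keypoints. A simplified sketch is shown below: it treats knee flexion as 180° minus the angle between the thigh and shank vectors. This is a planar-style approximation, not the segment coordinate system and Euler decomposition used by Plug-in-Gait, and it cannot separate flexion from abduction or rotation. Function names and coordinates are illustrative.

```python
import math

def included_angle_deg(proximal, joint, distal):
    """Angle (degrees) at `joint` between the vectors to `proximal` and `distal`."""
    u = [p - j for p, j in zip(proximal, joint)]
    v = [d - j for d, j in zip(distal, joint)]
    norm_u = math.sqrt(sum(c * c for c in u))
    norm_v = math.sqrt(sum(c * c for c in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("keypoints must not coincide with the joint centre")
    cos_angle = sum(a * b for a, b in zip(u, v)) / (norm_u * norm_v)
    cos_angle = max(-1.0, min(1.0, cos_angle))   # guard against rounding error
    return math.degrees(math.acos(cos_angle))

def knee_flexion_deg(hip, knee, ankle):
    """0 degrees for a fully extended leg, increasing with flexion."""
    return 180.0 - included_angle_deg(hip, knee, ankle)

# Hypothetical 3D keypoints in metres (x forward, y lateral, z up)
hip, knee = (0.0, 0.0, 1.0), (0.0, 0.0, 0.5)
straight = knee_flexion_deg(hip, knee, (0.0, 0.0, 0.0))
right_angle = knee_flexion_deg(hip, knee, (0.5, 0.0, 0.5))
```

Applying the function frame by frame yields an angle time series that can then be compared against the Vicon output across the movement cycle.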

Protocol 2: High-Throughput Screening for Movement Asymmetries in a Collegiate Athletics Program

Objective: To longitudinally screen an entire team for lower-limb asymmetries using markerless video analysis to identify athletes at elevated risk of overuse injury.

Materials:

- 2 stationary IP cameras (4K, 60Hz) placed at sagittal and frontal planes.

- Cloud-based or local computing server for video processing.

- Standardized testing station (designated area on field).

Method:

- Baseline Testing: At pre-season, each athlete performs 5 single-leg squats per leg in front of the cameras following a standardized protocol (stance, pace).

- Automated Processing: Videos are automatically uploaded and processed via a pose estimation pipeline (e.g., using OpenPose for 2D keypoints, then 3D reconstruction). The pipeline calculates three key metrics: knee valgus angle, hip drop (pelvic obliquity), and a squat-depth symmetry index.

- Longitudinal Monitoring: The test is repeated monthly. Data is aggregated in a team dashboard.

- Risk Stratification: Athletes are flagged if their asymmetry index exceeds 15% or shows a negative trend exceeding 10% from baseline. Flagged athletes are referred for detailed biomechanical assessment.
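The risk stratification rule can be written as a short function. The protocol does not define the asymmetry index, so this sketch assumes a common form, the absolute left-right difference divided by the left-right mean, and it interprets the "negative trend exceeding 10%" as the index worsening by more than 10 percentage points relative to baseline. Both choices, and the function names, are assumptions.

```python
def asymmetry_index(left, right):
    """Percent asymmetry: |L - R| relative to the mean of L and R (assumed definition)."""
    mean = (left + right) / 2.0
    if mean == 0:
        raise ValueError("left and right values must not average to zero")
    return abs(left - right) / mean * 100.0

def flag_athlete(baseline_index, current_index,
                 abs_threshold=15.0, trend_threshold=10.0):
    """Flag if asymmetry exceeds 15% or has worsened by more than 10 points."""
    worsening = current_index - baseline_index
    return current_index > abs_threshold or worsening > trend_threshold

# Hypothetical squat depth (cm) for left and right single-leg squats
preseason = asymmetry_index(left=42.0, right=40.0)
month_three = asymmetry_index(left=44.0, right=36.0)
needs_referral = flag_athlete(preseason, month_three)
```

In a team dashboard, the same rule would be applied to each monitored metric at every monthly test.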

3. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Markerless Sports Biomechanics Research

| Item | Function & Specification |
|---|---|
| Multi-Camera Sync System | Synchronizes frames from multiple cameras (µs precision) for accurate 3D reconstruction. (e.g., genlock cameras or hardware sync boxes). |
| Calibration Object (Charuco Board) | Provides known 3D-2D point correspondences for calibrating intrinsic/extrinsic camera parameters, essential for 3D triangulation. |
| High-Performance GPU Workstation | Accelerates the training and inference of deep learning models for pose estimation (e.g., NVIDIA RTX A6000 or comparable). |
| Pose Estimation Software (OpenPose/MediaPipe) | Open-source libraries providing pre-trained models for real-time 2D human pose estimation from video. |
| 3D Pose Estimation Model (e.g., VideoPose3D) | A PyTorch-based model for lifting 2D keypoints sequences to accurate 3D poses using temporal convolutional networks. |
| Biomechanical Analysis Software (OpenSim) | Open-source platform for modeling musculoskeletal structures and analyzing dynamics from 3D motion data. |
| Dedicated Data Pipeline (e.g., APE)* | An open-source Automated Pose Estimation pipeline for batch processing, model integration, and feature extraction. |
| Secure Data Storage (RAID/Cloud) | High-capacity, secure storage for large volumes of raw video and processed time-series biomechanical data. |

*Acquisition, Processing, and Exploration pipeline.

4. Visualizations

Within the thesis on "Computer Vision and AI for Sports Biomechanics Validation Research," the validation of novel athlete monitoring and drug efficacy protocols demands precise, real-time motion capture and analysis. This necessitates a hardware ecosystem comprising advanced cameras, depth sensors, and edge computing devices. These components are critical for generating high-fidelity biomechanical data (kinematics, kinetics) in ecologically valid environments, moving beyond constrained lab settings to real-world training grounds.

Hardware Specifications and Quantitative Comparison

Table 1: Camera System Comparison for Biomechanics Capture

| Parameter | High-Speed Optical (e.g., Vicon Bonita) | Rolling Shutter CMOS (e.g., GoPro Hero 12) | Global Shutter CMOS (e.g., Intel RealSense D455) |
|---|---|---|---|
| Max Frame Rate (fps) | 1,000 (at reduced resolution) | 240 (1080p) | 90 (1280x720) |
| Resolution | Up to 4MP | 5.3K (5312x2988) | 2MP (1280x800 per imager) |
| Shutter Type | Global | Rolling | Global |
| Key Strength | Gold-standard accuracy for marker-based 3D | Portable, high-resolution for field use | Synchronized depth + RGB, minimal motion blur |
| Primary Application | Lab-based validation of other systems | On-field qualitative and 2D quantitative analysis | Integrated 3D point cloud generation in dynamic scenes |

Table 2: Depth Sensor Technologies

| Technology | Time-of-Flight (ToF) (e.g., Microsoft Azure Kinect) | Structured Light (e.g., older Intel RealSense) | Stereo Vision (e.g., Intel RealSense D455) |
|---|---|---|---|
| Operating Range | 0.5 - 5.5 m | 0.2 - 1.2 m | 0.4 - 6 m |
| Depth Accuracy | ±1% of distance (e.g., ±1cm at 1m) | <2% at 1m | <2% at 2m |
| FPS (Depth Stream) | 30 | 90 | 90 |
| Sensitivity to Light | Moderate (can be affected by sunlight) | High (requires controlled light) | Low (uses ambient light) |
| Best For | Indoor court volumetric capture | Short-range, high-precision static scans | Outdoor and dynamic scene reconstruction |

Table 3: Edge Computing Platforms for On-Site AI

| Platform | NVIDIA Jetson AGX Orin | Intel NUC with Movidius Myriad X | Google Coral Dev Board |
|---|---|---|---|
| AI Performance (TOPS) | 275 (INT8) | ~4 (INT8) | 4 (INT8) |
| Power Draw (Typical) | 15-60W | 10-25W | ~5W |
| Memory | 32GB LPDDR5 | Up to 64GB DDR4 | 4GB LPDDR4 |
| Key Feature | Full AI pipeline training/inference | x86 compatibility with VPU | Low power, TPU co-processor |
| Use Case | Real-time multi-camera 3D pose estimation | Portable lab-grade analysis station | Wearable sensor data fusion |

Application Notes

AN-01: Synchronized Multi-Modal Data Capture Protocol

Purpose: To acquire temporally aligned RGB video, depth maps, and inertial measurement unit (IMU) data from athletes performing a standardized movement (e.g., a vertical jump or pitch) for validating biomechanical models.

Procedure:

- Hardware Setup:

- Deploy a calibrated array of 3-6 global shutter cameras (e.g., FLIR Blackfly S) around the performance volume.

- Position one depth sensor (e.g., Azure Kinect DK) centrally for volumetric reference.

- Attach a wireless IMU (e.g., Xsens MTw Awinda) to the athlete's pelvis.

- Connect all devices to a master trigger box or use network-based synchronization (PTP).

- Software Configuration:

- Use a platform like Robot Operating System (ROS) or NI LabVIEW to create a synchronized recording node.

- Set all cameras to record at 120 Hz, depth sensor at 30 Hz, and IMU at 400 Hz.

- Calibration:

- Record a static calibration frame with a checkerboard and L-frame visible to all sensors.

- Perform dynamic wand calibration for motion capture space.

- Data Acquisition:

- Record athlete performing 10 trials of the target movement.

- Save all streams with a common global timestamp.
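Because the three streams run at different rates (120 Hz, 30 Hz, 400 Hz), analysis usually starts by pairing each camera frame with the nearest sample from the other sensors on the shared timestamp. The sketch below shows one simple way to do this with a binary search; the function names and the 2 ms tolerance are illustrative assumptions, and interpolation may be preferable to nearest-neighbour matching for some signals.

```python
from bisect import bisect_left

def nearest_index(timestamps, t):
    """Index of the entry in sorted `timestamps` closest to time t."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Choose whichever neighbour is closer; ties go to the earlier sample
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1

def align_to_reference(ref_ts, other_ts, max_gap_s):
    """Pair each reference sample with the nearest other-stream sample.

    Returns (ref_index, other_index) tuples; other_index is None when the
    nearest sample is further away than max_gap_s (e.g. a dropped packet).
    """
    pairs = []
    for k, t in enumerate(ref_ts):
        j = nearest_index(other_ts, t)
        pairs.append((k, j if abs(other_ts[j] - t) <= max_gap_s else None))
    return pairs

# Hypothetical global timestamps (seconds): 120 Hz camera and 400 Hz IMU
camera_ts = [k / 120 for k in range(5)]
imu_ts = [k / 400 for k in range(20)]
pairs = align_to_reference(camera_ts, imu_ts, max_gap_s=0.002)
```

The same function can pair camera frames with the 30 Hz depth stream, using a wider tolerance to account for the lower rate.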

AN-02: On-Edge Pose Estimation & Anomaly Detection Workflow

Purpose: To process video feeds in real-time on the edge to compute biomechanical joint angles and flag deviations from normative patterns, potentially indicating fatigue or intervention effects.

Procedure:

- Model Deployment:

- Quantize a pre-trained 3D human pose model (e.g., MediaPipe BlazePose or a custom PyTorch model) to INT8 format for the target edge device (e.g., NVIDIA Jetson).

- Edge Pipeline:

- Ingest RTSP video stream from a fixed-angle camera.

- Run the pose estimation model on every fifth frame to conserve compute (20 Hz effective for a 100 Hz stream).

- Calculate key kinematic parameters (e.g., knee valgus angle, shoulder external rotation).

- Anomaly Detection:

- Compare real-time angles against a stored normative profile (mean ± 2SD) for the movement phase.

- If values exceed threshold for three consecutive analyses, flag an anomaly and save a 10-second video clip with overlaid angles.

- Output:

- Transmit summary statistics (mean max angle, anomaly count) and flagged clips to a central research database via 5G/Wi-Fi.
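The anomaly detection rule in this workflow reduces to a run-length check against a normative band. The sketch below assumes a single normative mean and SD for simplicity, whereas the workflow describes a profile per movement phase; the function name and the example angles are hypothetical.

```python
def detect_anomalies(angles, mean, sd, n_sd=2.0, consecutive=3):
    """Return indices at which an out-of-band run reaches `consecutive` samples.

    A sample is out of band when it lies outside mean +/- n_sd * sd. Each run
    is reported once, at the sample where the run length hits the threshold.
    """
    lower, upper = mean - n_sd * sd, mean + n_sd * sd
    flagged = []
    run = 0
    for i, angle in enumerate(angles):
        if angle < lower or angle > upper:
            run += 1
            if run == consecutive:
                flagged.append(i)
        else:
            run = 0   # any in-band sample resets the run
    return flagged

# Hypothetical knee valgus angles (degrees), one per analysed frame
valgus = [10, 11, 19, 20, 21, 22, 10, 19, 19, 10]
events = detect_anomalies(valgus, mean=10.0, sd=2.0)
print(events)
```

In the edge pipeline, each returned index would trigger saving the 10-second clip with overlaid angles.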

Experimental Protocols

EP-01: Validation of Depth Sensor against Force Plates for Ground Contact Time (GCT)

Objective: To validate depth sensor-derived temporal gait metrics against the gold standard (force plates) in a controlled sprint start.

Materials: Force plate (e.g., AMTI), depth sensor (Azure Kinect DK), synchronization unit, calibration tools.

Protocol:

- Place the force plate flush with the ground surface. Position the depth sensor 3m ahead of the plate's center, elevated 1m, pointing at the plate.

- Synchronize force plate and depth sensor data streams via an analog trigger.

- Record 20 sprint starts from a standardized block position. Athlete's lead foot must strike the force plate.

- Force Plate GCT: Calculate GCT as the duration for which the vertical ground reaction force exceeds 10 N.

- Depth Sensor GCT:

- Use background subtraction to isolate the athlete.

- Apply a skeleton tracking algorithm (e.g., Kinect SDK) to identify the foot.

- Calculate GCT as the duration the foot centroid's vertical velocity is near zero (<0.5 m/s) and the foot is within a defined volume above the plate.

- Analysis: Perform Bland-Altman analysis to assess agreement between the two methods for GCT.
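The force plate reference value in this protocol follows from a simple threshold rule. A minimal sketch, assuming a uniformly sampled vertical force signal in newtons and a hypothetical 1000 Hz sampling rate (the protocol does not state one):

```python
def ground_contact_times(fz, fs_hz, threshold_n=10.0):
    """Durations (s) of each period where vertical force exceeds the threshold."""
    contacts = []
    start = None
    for i, force in enumerate(fz):
        if force > threshold_n and start is None:
            start = i                                  # touchdown sample
        elif force <= threshold_n and start is not None:
            contacts.append((i - start) / fs_hz)       # toe-off sample reached
            start = None
    if start is not None:
        # Recording ended while the foot was still on the plate
        contacts.append((len(fz) - start) / fs_hz)
    return contacts

# Hypothetical trace: 100 ms unloaded, 180 ms of contact, 50 ms unloaded
fz_trace = [0.0] * 100 + [800.0] * 180 + [0.0] * 50
gct = ground_contact_times(fz_trace, fs_hz=1000.0)
```

Real force traces are noisy around the threshold, so some debouncing (for example, a minimum contact duration) is typically needed before the values are compared with the depth sensor estimates.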

EP-02: Protocol for Assessing Edge Computing Latency in Real-Time Biofeedback

Objective: To quantify the end-to-end latency of a real-time biomechanical feedback system using an edge computing device.

Materials: High-speed camera (1000 fps reference), edge device (Jetson Orin), LED indicator, video display.

Protocol:

- Set up a high-speed camera pointing at both the video display and the LED indicator connected to the edge device's GPIO pin.

- On the edge device, run a simple OpenCV program that:

- Captures video from its own USB camera.

- Detects a specific color blob (e.g., bright green on the athlete's shoe).

- Triggers the GPIO pin to light the LED when the blob's vertical position exceeds a predefined pixel row.

- Start the high-speed camera recording. Have an operator move the green marker through the trigger point.

- Latency Calculation: From the high-speed footage, count the frames between the actual crossing of the pixel row and the illumination of the LED. Latency = (Frame Count) / (1000 fps).

Diagrams

Title: Data Flow for Biomechanics Hardware Ecosystem

Title: Real-Time Edge AI Analysis Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Hardware and Software for CV Biomechanics Research

| Item | Function / Purpose |
|---|---|
| Calibrated Checkerboard | For intrinsic (lens distortion) and extrinsic (position/orientation) calibration of 2D cameras. |
| L-Frame (Wand) | Provides known scale and 3D reference points for multi-camera motion capture system calibration. |
| Retroreflective Markers | Used as high-contrast tracking points for gold-standard optical motion capture (e.g., Vicon). |
| Synchronization Pulse Generator | Sends simultaneous triggers to all cameras, sensors, and data acquisition devices to ensure temporal alignment. |
| OpenPose / MediaPipe / DeepLabCut | Open-source software libraries for 2D and 3D human pose estimation from video, used for prototyping. |
| ROS 2 (Robot Operating System) | Middleware framework for managing synchronized data streams from heterogeneous sensors. |
| Docker Containers | To package and deploy consistent AI inference environments across different edge computing devices. |
| Force Plates & Pressure Mats | Gold-standard ground truth for kinetic variables (force, pressure) used to validate vision-based estimates. |

Application Notes

Gait Analysis

Quantitative gait analysis using computer vision (CV) and AI provides a non-invasive, markerless method for assessing human locomotion. Current research focuses on extracting spatiotemporal parameters (e.g., stride length, cadence, stance/swing phase ratio) and kinematic variables (joint angles) from 2D or 3D video feeds. Deep learning models, particularly pose estimation algorithms (e.g., OpenPose, MediaPipe, HRNet), form the backbone of this analysis, enabling high-throughput processing in ecological settings (e.g., clinics, training fields). Validation against gold-standard motion capture (MoCap) systems remains a core research theme, with recent studies reporting high correlation coefficients (>0.9) for key parameters under constrained conditions.

Key Quantitative Data:

| Parameter | CV/AI Method (2023-24) | Mean Error vs. MoCap | Correlation (r) | Typical Sample Rate | Research Context |

|---|---|---|---|---|---|

| Stride Length (m) | 3D Pose Estimation (Multi-view) | 0.02 m | 0.97 | 30-100 Hz | Neurological disorder progression |

| Knee Flexion Angle (deg) | 2D-to-3D Lifting Models | 3.5 deg | 0.94 | 60 Hz | Osteoarthritis monitoring |

| Cadence (steps/min) | Single-view 2D Pose Estimation | 2.1 steps/min | 0.99 | 30 Hz | Remote patient assessment |

| Ground Contact Time (ms) | Temporal CNN on Foot Keypoints | 12 ms | 0.96 | 120+ Hz | Running economy studies |
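As a rough illustration of how the temporal parameters in the table can be derived once foot-strike events have been detected, the sketch below computes stride times and cadence from lists of event timestamps. The function names and the example timestamps are hypothetical, and the event detection itself (e.g., from foot keypoints) is assumed to have been done upstream.

```python
from statistics import mean

def stride_times(strikes_s):
    """Intervals (s) between consecutive strikes of the same foot."""
    return [b - a for a, b in zip(strikes_s, strikes_s[1:])]

def cadence_steps_per_min(left_strikes_s, right_strikes_s):
    """Cadence from the mean time between successive steps of either foot."""
    events = sorted(left_strikes_s + right_strikes_s)
    step_times = [b - a for a, b in zip(events, events[1:])]
    return 60.0 / mean(step_times)

# Hypothetical foot-strike timestamps in seconds
left = [0.0, 1.0, 2.0, 3.0]
right = [0.5, 1.5, 2.5]
print(stride_times(left))
print(cadence_steps_per_min(left, right))
```

Because event timing is quantized to the video frame interval, the achievable resolution of these parameters depends directly on the camera sample rate.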

Injury Risk Assessment

AI-driven injury risk assessment leverages biomechanical data to identify aberrant movement patterns predictive of future injury. Machine learning classifiers (e.g., Support Vector Machines, Random Forests, and more recently, temporal convolutional networks) are trained on kinematic and kinetic time-series data to flag high-risk profiles. Primary foci include anterior cruciate ligament (ACL) injury risk from jump-landing mechanics and hamstring strain risk during sprinting. Research validates these models against prospective injury datasets, with area-under-curve (AUC) metrics for prediction being a critical performance indicator.

Key Quantitative Data:

| Injury Target | Predictive Biomechanical Features | AI Model Type | Reported AUC | Sensitivity/Specificity | Validation Cohort Size |

|---|---|---|---|---|---|

| Non-contact ACL | Knee valgus angle, trunk lateral flexion | Ensemble Learning | 0.88 | 0.82 / 0.81 | 250 athletes |

| Hamstring Strain | Peak thigh angular velocity, stride imbalance | Temporal CNN | 0.79 | 0.75 / 0.72 | 180 sprinters |

| Patellofemoral Pain | Hip adduction, contralateral pelvic drop | SVM with RBF kernel | 0.84 | 0.78 / 0.77 | 300 recreational runners |

| Shoulder (Labral) | Humeral rotation velocity, scapular dyskinesia | LSTM Network | 0.81 | 0.76 / 0.79 | 150 overhead athletes |

Performance Profiling

Performance profiling utilizes CV to deconstruct sport-specific movements for talent identification and training optimization. This involves creating athlete-specific digital biomechanical models to analyze efficiency, power generation, and technique. Dimensionality reduction (PCA, t-SNE) and clustering algorithms segment athletes into distinct performance phenotypes. Research validates these profiles against competitive outcomes or objective performance metrics (e.g., velocity, power output).

Key Quantitative Data:

| Sport | Profiled Movement | Key Profiling Metrics | AI/CV Technique | Correlation w/ Performance | Use Case Example |

|---|---|---|---|---|---|

| Sprinting | Block Start & Acceleration | Horizontal velocity, shin angle, reaction time | Optical Flow + Pose Estimation | r=0.91 w/ 10m time | Talent ID in youth academies |

| Swimming | Starts & Turns | Wall push-off force (proxy), glide efficiency | Underwater 3D Reconstruction | r=0.89 w/ turn time | Training intervention focus |

| Weightlifting | Clean & Jerk | Barbell path, joint velocity synergy, bar stability | Barbell tracking + Multi-person pose | r=0.85 w/ 1RM | Technique flaw diagnosis |

| Basketball | Jump Shot | Release angle, knee/elbow sync, shot arc | Video sequence classification (CNN) | r=0.78 w/ shooting % | Personalized coaching feedback |

Experimental Protocols

Protocol 1: Validation of Markerless Gait Analysis Against Reference MoCap

Objective: To validate the accuracy of a multi-view CNN-based 3D pose estimation system for gait parameter extraction.

- Participant Setup: Recruit cohort (e.g., n=20). Affix reflective markers for MoCap (e.g., Vicon system) per Plug-in-Gait model.

- System Synchronization: Genlock and synchronize MoCap cameras (120 Hz) with 4+ synchronized RGB video cameras (≥60 Hz, 4K resolution).

- Calibration: Perform static calibration of both systems using an L-frame for spatial alignment.

- Data Collection: Participants walk at self-selected speed on a 10m walkway. Collect 10 valid trials per participant.

- CV Processing: Process RGB feeds through 3D human pose estimator (e.g., VoxelPose, multi-view MediaPipe).

- Data Extraction: Extract 3D keypoints. Calculate spatiotemporal (stride length, cadence) and kinematic (sagittal plane joint angles) parameters.

- Statistical Analysis: Compute Pearson's r, root mean square error (RMSE), and Bland-Altman limits of agreement between CV and MoCap-derived time-series data for each parameter.
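The statistical analysis step can be sketched as follows. This is a minimal example assuming the CV and MoCap series are already time-aligned and resampled to the same length; the function name `agreement_stats` is hypothetical, and the 1.96 multiplier assumes approximately normally distributed differences.

```python
import numpy as np

def agreement_stats(cv, mocap):
    """RMSE, Pearson's r, and Bland-Altman bias / 95% limits of agreement."""
    cv = np.asarray(cv, dtype=float)
    mocap = np.asarray(mocap, dtype=float)
    diff = cv - mocap
    rmse = float(np.sqrt(np.mean(diff ** 2)))
    r = float(np.corrcoef(cv, mocap)[0, 1])
    bias = float(np.mean(diff))            # systematic offset (CV minus MoCap)
    sd = float(np.std(diff, ddof=1))       # sample SD of the differences
    loa = (bias - 1.96 * sd, bias + 1.96 * sd)
    return {"rmse": rmse, "r": r, "bias": bias, "loa": loa}

# Hypothetical knee flexion samples in degrees
mocap = [10.0, 20.0, 30.0, 40.0]
cv = [11.0, 19.0, 31.0, 39.0]
print(agreement_stats(cv, mocap))
```

When several trials per participant are pooled, the samples are not independent, so limits of agreement computed this way should be read as descriptive rather than inferential.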

Protocol 2: Prospective Injury Risk Assessment Study

Objective: To develop and validate an AI model for predicting lower-limb injury from preseason movement screening.

- Cohort & Baseline Testing: Conduct a preseason biomechanical assessment of the athlete cohort (e.g., n=500). Have each athlete perform standardized movement tasks (drop-jump, single-leg squat) in a CV lab (3D pose estimation).

- Feature Engineering: Extract time-series features (peak angles, angular velocities, asymmetries, coordination metrics).

- Ground Truth Labeling: Track all athletes prospectively for one competitive season. Record medically diagnosed injuries (e.g., ACL rupture, hamstring strain). Create binary labels (injured/not injured).

- Model Development: Split data (70/30 training/test). Train multiple classifiers (e.g., XGBoost, Temporal CNN) using features to predict injury label.

- Validation: Evaluate model on held-out test set. Report AUC, sensitivity, specificity, precision. Perform SHAP analysis for feature importance.

- External Validation: Where possible, test model on an independent cohort from a different institution.
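The evaluation metrics named in the validation step can be computed directly from held-out labels and predicted risk scores. The sketch below uses only NumPy and computes AUC through its pairwise (Mann-Whitney) interpretation; the function names and the 0.5 decision threshold are assumptions for illustration, and in practice a library routine would normally be used.

```python
import numpy as np

def roc_auc(y_true, scores):
    """AUC as the probability that an injured athlete outscores an uninjured one."""
    y = np.asarray(y_true).astype(bool)
    s = np.asarray(scores, dtype=float)
    pos, neg = s[y], s[~y]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (pos.size * neg.size))

def sens_spec(y_true, scores, threshold=0.5):
    """Sensitivity and specificity at a fixed decision threshold."""
    y = np.asarray(y_true).astype(bool)
    pred = np.asarray(scores, dtype=float) >= threshold
    tp = np.sum(pred & y)
    fn = np.sum(~pred & y)
    tn = np.sum(~pred & ~y)
    fp = np.sum(pred & ~y)
    return float(tp / (tp + fn)), float(tn / (tn + fp))

# Hypothetical test-set labels (1 = injured) and model scores
labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
print(roc_auc(labels, scores), sens_spec(labels, scores))
```

Injuries are usually rare relative to the cohort size, so sensitivity and specificity at a single threshold can shift substantially with small changes in the test set; reporting them alongside AUC, as the protocol specifies, gives a fuller picture.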

Protocol 3: High-Throughput Performance Phenotyping Protocol

Objective: To create performance clusters for a specific sport action using unsupervised learning on CV-derived data.

- Data Acquisition: Record a large sample of athletes (n>200) performing the target skill (e.g., basketball free throw) under standardized conditions using a single calibrated high-speed camera.

- 2D/3D Pose Estimation: Process all videos with a consistent pose estimation framework (e.g., MediaPipe Pose).

- Kinematic Time-Series Extraction: Derive relevant time-normalized kinematic curves (e.g., shoulder-elbow-wrist angles for shooting).

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) to the high-dimensional kinematic data to reduce to 5-10 principal components (PCs) explaining >90% variance.

- Clustering: Apply clustering algorithm (e.g., k-means, DBSCAN) to the PCs to identify distinct technique phenotypes.

- Profile Interpretation & Validation: Statistically compare performance outcome (e.g., shot accuracy, ball velocity) across clusters using ANOVA. Validate profiles with expert coach qualitative assessment.
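The dimensionality reduction and clustering steps can be sketched with NumPy alone. This is an illustrative implementation under stated assumptions: each row of `X` is one athlete's flattened, time-normalized kinematic curves; the k-means routine uses a simple deterministic farthest-point seeding (our choice, not part of the protocol); and all function names are hypothetical. A production pipeline would more likely use an established library.

```python
import numpy as np

def pca_reduce(X, var_threshold=0.90):
    """Project X onto the fewest principal components reaching the variance threshold."""
    Xc = X - X.mean(axis=0)
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = S ** 2 / np.sum(S ** 2)
    k = int(np.searchsorted(np.cumsum(explained), var_threshold) + 1)
    k = min(k, len(S))
    return Xc @ Vt[:k].T, explained[:k]

def kmeans(Z, n_clusters, n_iter=100):
    """Lloyd's algorithm with deterministic farthest-point initialization."""
    centers = [Z[0]]
    for _ in range(1, n_clusters):
        d = np.min([np.linalg.norm(Z - c, axis=1) for c in centers], axis=0)
        centers.append(Z[d.argmax()])
    centers = np.array(centers)
    for _ in range(n_iter):
        dist = np.linalg.norm(Z[:, None, :] - centers[None, :, :], axis=2)
        labels = dist.argmin(axis=1)
        new_centers = np.array([
            Z[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(n_clusters)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers

# Synthetic stand-in for two technique phenotypes (40 athletes, 6 features)
rng = np.random.default_rng(1)
X = np.vstack([rng.normal(0.0, 0.5, (20, 6)), rng.normal(10.0, 0.5, (20, 6))])
Z, explained = pca_reduce(X)
labels, _ = kmeans(Z, n_clusters=2)
print(Z.shape, explained.sum(), labels)
```

The number of clusters is an input here; in practice it would be chosen with a criterion such as silhouette score before the ANOVA comparison across clusters.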

Visualization

Title: Markerless Gait Analysis Validation Workflow

Title: Injury Risk AI Model Development Pipeline

Title: Unsupervised Performance Phenotyping Process

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in CV/AI Biomechanics Research | Example Product/Software |

|---|---|---|

| Multi-camera Synchronization System | Precisely synchronizes multiple high-speed RGB or IR cameras for 3D reconstruction. | OptiTrack Sync, Arduino-based custom triggers, Genlock-enabled cameras. |

| Calibration Object (L-Frame/Checkerboard) | Enables spatial calibration and scaling for 3D coordinate system alignment between cameras and MoCap. | Custom L-frame with reflective markers, large printed checkerboard (e.g., Charuco board). |

| High-Performance Pose Estimation Model | Pre-trained deep learning model for accurate 2D or 3D human pose estimation from video. | MediaPipe Pose, OpenPose, HRNet, AlphaPose, DeepLabCut. |

| Motion Capture (MoCap) System (Gold Standard) | Provides ground-truth 3D kinematic data for validation of CV/AI methods. | Vicon Nexus, Qualisys, OptiTrack. |

| Biomechanical Analysis Software | For processing motion data, calculating derivatives (velocity, acceleration), and generating standard metrics. | Visual3D, OpenSim, custom Python scripts (SciPy, NumPy). |

| Machine Learning Framework | Environment for developing, training, and validating custom injury risk or profiling models. | Python with Scikit-learn, TensorFlow, PyTorch, XGBoost. |

| Statistical Analysis Suite | For comprehensive validation statistics, including correlation, error analysis, and Bland-Altman plots. | R, Python (Statsmodels, Pingouin), GraphPad Prism. |

| High-Throughput Computing Cluster/GPU | Enables processing of large video datasets and training complex neural networks. | NVIDIA GPUs (e.g., A100, V100), Google Colab Pro, AWS EC2 instances. |

Methodologies in Action: Applying AI to Quantify Kinematics and Kinetics

This document details the end-to-end experimental pipeline for deriving validated biomechanical metrics from video data, framed within a thesis on computer vision (CV) and artificial intelligence (AI) validation for sports biomechanics. The protocols are designed to support rigorous research, including applications in performance optimization, injury prevention, and the assessment of therapeutic interventions in drug development. The integration of AI necessitates stringent validation against gold-standard measurement systems.

Core Pipeline: Workflow & Protocols

Diagram Title: Main CV Biomechanics Pipeline with Validation Loop

Protocol: Synchronized Multi-Modal Data Acquisition

Objective: To capture high-quality, synchronized video and ground-truth sensor data for AI model training and validation.

Equipment Setup:

- Cameras: Arrange multiple high-speed cameras (e.g., 120+ Hz) around the capture volume. Calibrate the 3D system using a wand with markers of known length.

- Ground-Truth Systems: Position force plates flush with the floor. Apply skin-mounted inertial measurement units (IMUs) and/or retro-reflective markers for optoelectronic motion capture (MoCap) on anatomical landmarks.

- Synchronization: Connect all devices (cameras, force plates, IMU receiver, MoCap system) to a common digital sync pulse generator.

Procedure:

- Record a static calibration trial of the subject in a known posture.

- For each dynamic trial (e.g., running jump, cutting maneuver), simultaneously trigger all systems.

- Record a minimum of N=20 trials per movement condition from ≥15 subjects to ensure statistical power for validation studies.

- Include a calibration object (e.g., checkerboard) in the first frame of video for intrinsic camera parameter verification.

Protocol: AI-Based 2D/3D Human Pose Estimation

Objective: To extract accurate 2D and 3D anatomical keypoints from video sequences.

Model Selection & Training:

- Select a state-of-the-art model (e.g., HRNet, ViTPose for 2D; VideoPose3D, MHFormer for 3D).

- Fine-tuning: If using domain-specific data (e.g., unusual camera angles, sports gear), fine-tune the model on a manually annotated dataset from your calibrated cameras. Use transfer learning, freezing early layers.

Inference & Post-Processing:

- Process video through the model frame-by-frame to generate keypoint coordinates (x, y, [z]) and confidence scores.

- Apply temporal filters (e.g., a Butterworth low-pass filter with a 6-10 Hz cutoff, or a Savitzky-Golay filter) to smooth keypoint trajectories. The exact cutoff frequency should be determined by residual analysis.
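The smoothing step might look like the following sketch, which applies a zero-lag Butterworth low-pass filter with SciPy. The 6 Hz default and the function name `lowpass` are assumptions for illustration; the cutoff should still be chosen by residual analysis, which is not implemented here.

```python
import numpy as np
from scipy.signal import butter, filtfilt

def lowpass(trajectory, fs_hz, cutoff_hz=6.0, order=2):
    """Zero-lag Butterworth low-pass filter applied along the time axis.

    filtfilt runs the filter forward and backward, which removes phase lag
    and doubles the effective filter order.
    """
    wn = cutoff_hz / (0.5 * fs_hz)          # cutoff normalized to Nyquist
    b, a = butter(order, wn, btype="low")
    return filtfilt(b, a, np.asarray(trajectory, dtype=float), axis=0)

# Hypothetical keypoint coordinate sampled at 120 Hz with high-frequency jitter
fs = 120.0
t = np.arange(0, 2, 1 / fs)
clean = np.sin(2 * np.pi * 1.0 * t)
noisy = clean + 0.2 * np.sin(2 * np.pi * 40.0 * t)
smoothed = lowpass(noisy, fs)
print(np.sqrt(np.mean((smoothed - clean) ** 2)))
```

Low-confidence keypoints are often better handled by gap-filling before filtering, since a low-pass filter will smear a single large outlier across neighboring frames.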

Protocol: Biomechanical Model Computation & Validation

Objective: To compute clinical/biomechanical metrics and validate them against gold-standard systems.

Kinematic Computation:

- Define a biomechanical skeleton model (e.g., a 15-segment, 6-degree-of-freedom model) using the tracked keypoints.

- Calculate joint angles (flexion/extension, abduction/adduction, rotation) using Cardan/Euler angle sequences (e.g., Y-X-Z for knee).

- Compute center of mass (COM) trajectory using anthropometric tables and segmental analysis.
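As a simplified illustration of the joint angle step, the sketch below computes the included angle at a joint from three keypoints and converts it to a flexion value. This is a single-plane approximation from keypoints alone, not the full Cardan/Euler decomposition described above, which requires segment coordinate frames; the function names are hypothetical.

```python
import numpy as np

def included_angle_deg(proximal, joint, distal):
    """Angle (deg) at `joint` between the vectors to the proximal and distal keypoints."""
    u = np.asarray(proximal, dtype=float) - np.asarray(joint, dtype=float)
    v = np.asarray(distal, dtype=float) - np.asarray(joint, dtype=float)
    cosang = np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v))
    return float(np.degrees(np.arccos(np.clip(cosang, -1.0, 1.0))))

def knee_flexion_deg(hip, knee, ankle):
    """Flexion defined as 0 deg for a straight leg (included angle of 180 deg)."""
    return 180.0 - included_angle_deg(hip, knee, ankle)

# Hypothetical 3D keypoints in meters
print(knee_flexion_deg([0, 1, 0], [0, 0, 0], [0, -1, 0]))   # straight leg
print(knee_flexion_deg([0, 1, 0], [0, 0, 0], [1, 0, 0]))    # right angle at the knee
```

The same function works for 2D keypoints, in which case the result is the projected angle in the image plane and is sensitive to out-of-plane motion.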

Kinetic Computation (Inverse Dynamics):

- Input: Kinematic data + ground reaction force (GRF) data from force plates.

- Process: Use Newton-Euler equations of motion within software (e.g., OpenSim, custom Python/R scripts) to calculate net joint moments and powers.

Validation Analysis:

- Temporal Alignment: Synchronize AI-derived time series with MoCap/IMU data using cross-correlation on a known event (e.g., foot strike).

- Statistical Comparison: For key metrics (e.g., peak knee flexion angle, internal rotation moment), calculate:

- Root Mean Square Error (RMSE)

- Pearson's Correlation Coefficient (r)

- Bland-Altman Limits of Agreement (LoA)
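The temporal alignment step can be sketched as a lag estimate from the peak of the cross-correlation between two signals. The example assumes both series have already been resampled to a common rate; the function name is hypothetical, and a positive result means the second signal lags the first.

```python
import numpy as np

def estimate_lag_samples(reference, delayed):
    """Number of samples by which `delayed` lags `reference` (negative if it leads)."""
    a = np.asarray(reference, dtype=float)
    b = np.asarray(delayed, dtype=float)
    a = a - a.mean()
    b = b - b.mean()
    xcorr = np.correlate(b, a, mode="full")
    # In "full" mode, index len(a) - 1 corresponds to zero lag
    return int(np.argmax(xcorr) - (len(a) - 1))

# Hypothetical event-like signal (e.g., vertical heel velocity around foot strike)
ref = np.exp(-0.5 * ((np.arange(200) - 50) / 3.0) ** 2)
shifted = np.roll(ref, 7)
lag = estimate_lag_samples(ref, shifted)
print(lag, lag / 120.0)  # lag in samples and in seconds at an assumed 120 Hz
```

Where a hardware sync pulse is available it should take precedence; cross-correlation is best treated as a check or a fallback, since its resolution is limited to one sample unless the peak is interpolated.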

Table 1: Representative Validation Metrics for AI vs. Optoelectronic MoCap

| Biomechanical Metric | Typical RMSE (AI vs. MoCap) | Typical Correlation (r) | Key Influencing Factors |

|---|---|---|---|

| Knee Flexion Angle (deg) | 3.5 - 6.0° | 0.97 - 0.99 | Camera viewpoint, clothing, movement speed, AI model architecture |

| Hip Abduction Angle (deg) | 4.0 - 8.0° | 0.90 - 0.98 | Soft tissue artifact, sensor placement error for validation data |

| Ankle Joint Moment (Nm/kg) | 0.15 - 0.30 Nm/kg | 0.85 - 0.95 | Accuracy of GRF measurement, segment inertial parameter estimation, filter choices |

| Stride Time (s) | 0.01 - 0.03 s | >0.99 | Frame rate of video, accuracy of event detection algorithm (e.g., foot contact from keypoints) |

Table 2: Key Experimental Parameters for Pipeline Validation Studies

| Parameter | Recommended Specification | Rationale |

|---|---|---|

| Camera Sample Rate | ≥120 Hz (≥200 Hz for high-speed movements) | To avoid aliasing and accurately capture rapid joint motions. |

| Number of Camera Views | Minimum of 2 for 3D reconstruction; 4-8 recommended for occlusion resilience | Ensures robust 3D triangulation and handles self-occlusion. |

| Video Resolution | ≥1920 x 1080 pixels (Full HD) | Higher resolution improves keypoint detection accuracy. |

| Validation Cohort Size | N ≥ 15 participants, with diverse movement patterns | Ensures generalizability of validation results and statistical power. |

| Gold-Standard Reference | 3D Optoelectronic MoCap (e.g., Vicon, Qualisys) sampled at ≥200 Hz, synchronized with force plates. | Provides the most accurate kinematic and kinetic ground truth for laboratory validation. |

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item Category / Name | Function & Application in Pipeline |

|---|---|

| High-Speed Video Cameras (e.g., GoPro Hero, Sony RX) | Provide the raw 2D video data. Must support external triggering and high frame rates for dynamic sports movements. |

| Calibration Wand & Checkerboard | Essential for determining the intrinsic (focal length, lens distortion) and extrinsic (position, orientation) parameters of cameras for 3D reconstruction. |

| OpenPose, HRNet, MediaPipe, ViTPose | Open-source and state-of-the-art AI libraries for 2D human pose estimation from video frames. The foundation for keypoint extraction. |

| OpenSim, Pyomeca, Biomechanics ToolKit (BTK) | Software toolkits for implementing biomechanical models, calculating inverse dynamics, and analyzing kinematic/kinetic data. |

| Force Platforms (e.g., AMTI, Kistler) | Measure ground reaction forces and moments. Critical for calculating joint kinetics (moments, powers) and validating AI-predicted loading events. |

| Inertial Measurement Units (IMUs) (e.g., Xsens, Noraxon) | Provide wearable ground-truth orientation data for segments. Used for field-based validation of AI-derived kinematics where MoCap is impossible. |

| Digital Sync Pulse Generator | Ensures sample-accurate temporal synchronization between all independent data streams (video, MoCap, force plates, IMUs). |

| Python/R with SciPy, NumPy, pandas | Core programming environments and libraries for data processing, filtering, statistical analysis (Bland-Altman, RMSE), and custom metric computation. |

Diagram Title: Statistical Validation Pathway for Biomechanical Metrics

The quantitative analysis of human movement is fundamental to sports biomechanics validation research. The extraction of 2D and 3D anatomical keypoints from video data provides a non-invasive, scalable method for kinematic assessment, enabling the evaluation of athletic performance, injury risk, and rehabilitation efficacy. Within the broader thesis on computer vision and AI for sports biomechanics, this document details application notes and protocols for prevalent keypoint extraction solutions: the open-source libraries OpenPose and MediaPipe, and select proprietary systems. Accurate validation of these tools against gold-standard motion capture is critical for their adoption in rigorous scientific and drug development research, where they may be used to quantify the biomechanical impact of therapeutic interventions.

OpenPose: A bottom-up, multi-person 2D pose estimation system. It first detects all body parts in an image using part confidence maps, then associates them into individual skeletons using Part Affinity Fields (PAFs). It is renowned for its robustness in multi-person scenarios and its detailed model (25 body keypoints, plus hand and face models).

MediaPipe Pose: A BlazePose-based, top-down, lightweight solution optimized for real-time performance. It employs a two-step detector-tracker approach and is designed to run efficiently on a wide range of hardware. It offers 33 body keypoints, including specific torso and face landmarks for enhanced stability.

Proprietary Solutions (e.g., Theia3D, DARI Motion, Vicon Vue): These are often commercial, turn-key systems. They may use multi-view triangulation for 3D reconstruction, advanced deep learning models, or a fusion of sensor data. They typically provide direct 3D output, comprehensive support, and validation documentation, but at a significant cost and with less algorithmic transparency.

Quantitative Performance Comparison

Table 1: Comparative Summary of Keypoint Extraction Solutions (Data from recent benchmarks and published validations, 2023-2024).

| Feature / Metric | OpenPose | MediaPipe Pose | Proprietary (e.g., Theia3D) |

|---|---|---|---|

| Primary Output | 2D (3D via triangulation) | 2D (3D via lifter models) | Direct 3D |

| Inference Speed (FPS on CPU) | ~5-10 FPS | ~30-50+ FPS | Varies (often GPU-accelerated) |

| Keypoint Count (Body) | 25 (BODY_25 model) | 33 | Often 20-30+ |

| Multi-Person | Native, bottom-up | Requires separate detection | Typically native |

| Validation in Biomechanics | Moderate (extensive research use, variable accuracy) | Growing (good for gross motion) | High (often validated against optoelectronic systems) |

| Typical Accuracy (PCK@0.2 on COCO) | ~85% | ~88% | N/A (uses proprietary datasets) |

| 3D Root Mean Square Error (vs. Vicon) | ~30-50mm (from triangulated 2D) | ~25-40mm (from 3D lifter) | ~10-25mm |

| Cost | Free, Open-Source | Free, Open-Source | High (Licensing + Hardware) |

| Key Strength | Robust multi-person, customizable | Speed, accessibility, ease of use | Accuracy, direct 3D, support, validation |

Experimental Protocols for Biomechanical Validation

Protocol A: Single-Camera 2D Accuracy Validation

Objective: To validate the 2D keypoint accuracy of OpenPose and MediaPipe against manually annotated ground truth in a controlled lab setting.

Materials:

- High-speed camera (e.g., 120 Hz).

- Calibration board (checkerboard).

- A subject in athletic attire.

- A computer with OpenPose and MediaPipe installed.

Procedure:

- Camera Setup & Calibration: Mount the camera perpendicular to the plane of motion. Record a video of the checkerboard in various orientations to perform camera calibration and correct for lens distortion.

- Data Acquisition: Record the subject performing standardized movements (e.g., squats, gait cycles, overhead throws). Ensure the subject remains within the calibrated plane.

- Ground Truth Generation: Manually annotate keypoints (e.g., hip, knee, ankle) for a representative subset of frames (e.g., 100 frames) using a tool like LabelMe or COCO Annotator.

- Algorithm Processing: Process the same video sequence with both OpenPose and MediaPipe, extracting 2D keypoint coordinates.

- Analysis: Compute the Percentage of Correct Keypoints at a threshold of 0.2 of the torso diameter (PCK@0.2); if normalizing by head segment length instead, the conventional threshold is 0.5 (PCKh@0.5). Calculate the Mean Per Joint Position Error (MPJPE) in pixels between the algorithm output and manual annotations.
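The two accuracy metrics in the analysis step can be sketched as follows. The arrays are assumed to have shape (frames, joints, 2) in pixels, and `ref_lengths` holds one per-frame normalization length in pixels (whichever reference segment the study adopts); the function names are hypothetical.

```python
import numpy as np

def mpjpe(pred, gt):
    """Mean Per Joint Position Error: mean Euclidean distance over frames and joints."""
    return float(np.mean(np.linalg.norm(pred - gt, axis=-1)))

def pck(pred, gt, ref_lengths, alpha=0.2):
    """Fraction of keypoints within alpha * reference length of the annotation."""
    dist = np.linalg.norm(pred - gt, axis=-1)                  # (frames, joints)
    thresh = alpha * np.asarray(ref_lengths, dtype=float)[:, None]
    return float(np.mean(dist <= thresh))

# Hypothetical annotations for 2 frames x 3 joints (hip, knee, ankle)
gt = np.zeros((2, 3, 2))
pred = np.array([[[3.0, 4.0], [0.0, 0.0], [0.0, 1.0]],
                 [[0.0, 0.0], [6.0, 8.0], [0.0, 2.0]]])
print(mpjpe(pred, gt), pck(pred, gt, ref_lengths=[10.0, 10.0]))
```

Reporting both metrics is useful because PCK is scale-normalized while pixel MPJPE depends on subject distance and image resolution.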

Protocol B: Multi-Camera 3D Reconstruction & Kinematic Error Assessment

Objective: To assess the error in 3D joint angles derived from triangulated OpenPose/MediaPipe keypoints compared to a synchronized gold-standard optoelectronic system (e.g., Vicon).

Materials:

- Test System: Minimum of 2 synchronized, calibrated high-definition video cameras (≥4 recommended).

- Gold Standard: Synchronized optoelectronic motion capture system (e.g., Vicon with ≥8 cameras).

- Subjects fitted with reflective markers placed according to a biomechanical model (e.g., Plug-in Gait).

- A common synchronization trigger (e.g., LED light).

Procedure:

- System Synchronization & Calibration:

- Calibrate the Vicon system according to manufacturer specifications.

- Simultaneously calibrate the video camera network using a wand or checkerboard for 3D reconstruction. Establish a common global coordinate system with the Vicon system using a calibration frame.

- Set up a synchronization trigger visible to all systems.

- Data Collection: Record the subject performing dynamic sports tasks (e.g., cutting maneuver, jump landing). Ensure all reflective markers and body segments are visible.

- Data Processing:

- Vicon: Process marker trajectories, label markers, and compute 3D joint centers and angles using the defined biomechanical model.

- Video: Extract 2D keypoints from each camera view using OpenPose/MediaPipe. Use direct linear transform (DLT) or bundle adjustment to triangulate 3D keypoint coordinates.

- Temporal Alignment: Use synchronization signals to align data streams.

- Spatial Alignment: Perform a Procrustes analysis to align the two 3D coordinate systems.

- Outcome Measures: Calculate for key joints (knee, hip, ankle):

- Root Mean Square Error (RMSE) of 3D joint positions.

- RMSE and correlation (r) of joint angle time-series (e.g., knee flexion/extension).

- Bland-Altman plots to assess agreement and systematic bias.
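The triangulation step in the video processing path can be sketched with a linear DLT solve. This minimal example assumes each camera's 3x4 projection matrix is known from calibration and that lens distortion has already been corrected in the 2D keypoints; the function name is hypothetical, and no confidence weighting or outlier rejection is included.

```python
import numpy as np

def triangulate_dlt(proj_mats, points_2d):
    """Linear (DLT) triangulation of one 3D point from two or more camera views.

    proj_mats: list of 3x4 projection matrices
    points_2d: list of (u, v) pixel coordinates, one per camera
    """
    rows = []
    for P, (u, v) in zip(proj_mats, points_2d):
        rows.append(u * P[2] - P[0])   # each view contributes two linear constraints
        rows.append(v * P[2] - P[1])
    A = np.stack(rows)
    _, _, Vt = np.linalg.svd(A)
    X = Vt[-1]                          # homogeneous least-squares solution
    return X[:3] / X[3]

# Two hypothetical cameras sharing intrinsics K, the second shifted 1 m along x
K = np.array([[1000.0, 0.0, 960.0], [0.0, 1000.0, 540.0], [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])
X_true = np.array([0.2, -0.1, 5.0])
uv = [(P @ np.append(X_true, 1.0))[:2] / (P @ np.append(X_true, 1.0))[2] for P in (P1, P2)]
print(triangulate_dlt([P1, P2], uv))
```

With more than two views, the same function applies unchanged; views in which the keypoint is occluded or has low detector confidence are usually dropped before the solve.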

(Title: 3D Keypoint Validation Protocol Workflow)

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key "Research Reagent Solutions" for Keypoint Extraction Experiments.

| Item / Solution | Category | Function in Research |

|---|---|---|

| Vicon Motion Capture System | Gold-Standard Hardware | Provides ground truth 3D kinematic data for validation of vision-based methods. |

| Synchronization Trigger Box (e.g., LED) | Laboratory Equipment | Ensures temporal alignment between video cameras and other data acquisition systems. |

| Calibration Wand & Checkerboard | Calibration Tools | Enables geometric calibration of camera intrinsic/extrinsic parameters for accurate 2D/3D mapping. |

| OpenPose (BODY_25 Model) | Open-Source Software | Provides detailed, multi-person 2D pose estimation for custom analysis pipelines. |

| MediaPipe Pose (BlazePose) | Open-Source Software | Enables real-time, efficient 2D/3D pose estimation on commodity hardware for pilot studies. |

| Theia3D Markerless System | Proprietary Software | Offers a validated, commercial solution for direct 3D biomechanics from multi-view video. |

| Python (NumPy, SciPy, OpenCV) | Programming Environment | The core platform for data processing, triangulation, statistical analysis, and custom scripting. |

| COCO or MPII Annotator | Annotation Software | Creates manual 2D keypoint ground truth data for training or validating models. |

(Title: Solution Selection Logic for Biomechanics Research)

1. Introduction within Thesis Context

This document serves as an application note within a doctoral thesis focused on validating computer vision (CV) and artificial intelligence (AI) models for sports biomechanics. The accurate derivation of foundational biomechanical variables, namely joint angles, angular velocities, and whole-body center of mass (COM), is critical. The thesis posits that robust, markerless CV/AI systems must match or exceed the precision of traditional laboratory methods to be viable for real-world sports performance analysis and injury risk assessment, with downstream implications for pharmaceutical interventions targeting mobility and recovery.

2. Core Biomechanical Variables: Definitions and Quantitative Benchmarks

The following variables are primary outputs for validation studies comparing CV/AI pipelines against gold-standard motion capture.

Table 1: Core Derived Biomechanical Variables

| Variable | Definition | Typical Gold-Standard Precision | Key Sports Application |

|---|---|---|---|

| Joint Angle | The relative orientation between two adjacent body segments in degrees (°). | < 1.0° RMSE for sagittal plane (e.g., knee flexion). | Gait analysis, technique optimization, identifying pathological movement. |

| Angular Velocity | The rate of change of a joint angle, in degrees per second (°/s). | < 10 °/s RMSE for dynamic tasks. | Quantifying explosive power (e.g., knee extension in sprinting), assessing joint control. |

| Whole-Body COM | The 3D spatial coordinate of the body's weighted average mass position. | < 15 mm RMSE for trajectory. | Balance assessment, calculation of derived measures (e.g., momentum, mechanical load). |
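Angular velocity, as defined in the table, is the time derivative of the joint angle. A minimal sketch of that computation using central differences is shown below; the function name is hypothetical, and the input is assumed to be a uniformly sampled, already-filtered angle series, since differentiation amplifies high-frequency noise.

```python
import numpy as np

def angular_velocity_deg_s(angle_deg, fs_hz):
    """Numerical derivative of a joint angle series (deg) sampled at fs_hz."""
    return np.gradient(np.asarray(angle_deg, dtype=float), 1.0 / fs_hz)

# Hypothetical knee angle rising at a constant 100 deg/s, sampled at 120 Hz
fs = 120.0
t = np.arange(0, 1, 1 / fs)
omega = angular_velocity_deg_s(100.0 * t, fs)
print(omega[:3])
```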

3. Experimental Protocols for Validation Research

These protocols are designed to generate ground-truth data for validating CV/AI models.

Protocol 3.1: Concurrent Validation of Joint Kinematics

Objective: To quantify the agreement between a markerless CV/AI system and synchronized marker-based motion capture during dynamic sports tasks.

Materials:

- Optical motion capture system (e.g., Vicon, Qualisys) with >8 cameras.

- Synchronized high-speed video cameras (≥120 Hz) for CV system input.

- Calibrated force plates.

- Standardized athletic equipment (e.g., running treadmill, soccer ball, jump platform).

Procedure:

- System Synchronization: Genlock or use analog/digital signals to synchronize motion capture and video cameras at a matched sampling frequency (≥120 Hz).

- Participant Preparation: Fit participants with retroreflective markers per a full-body model (e.g., Plug-in Gait, CAST). Record participant height, weight, and segment lengths.

- Static Calibration: Capture a 3-second static trial in a neutral T-pose to define participant-specific biomechanical models.

- Dynamic Task Execution: Participants perform a battery of sport-specific movements:

- Running: Steady-state running at 3.5 m/s, 5.0 m/s.

- Cutting: 45-degree and 90-degree side-step cuts at approach speed.

- Jumping: Countermovement jumps, drop jumps.

- Sport-Skill: Kicking, throwing, swinging.

- Data Processing:

- Gold Standard: Process marker trajectories, apply biomechanical model, filter data (low-pass Butterworth, 6-12 Hz cutoff), compute 3D joint angles and angular velocities.

- CV/AI System: Input video to the AI model (e.g., OpenPose, HRNet, DeepLabCut) to obtain 2D/3D keypoints. Apply same biomechanical model and filtering.

- Validation Analysis: Calculate Root Mean Square Error (RMSE), Pearson's correlation coefficient (r), and Bland-Altman limits of agreement for key joint angles (knee flexion, hip abduction) and angular velocities.

Protocol 3.2: Center of Mass Trajectory Estimation

Objective: To validate the COM trajectory derived from a segmental method using CV/AI keypoints against the gold standard derived from force plate data (via the impulse-momentum theorem).

Materials: As per Protocol 3.1, with critical emphasis on calibrated force plates.

Procedure:

- Execute synchronized motion capture, video, and ground reaction force (GRF) collection during a task involving clear ground contact (e.g., running stride over force plate, landing from a jump).

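- Gold Standard COM (Kinetic Method): Subtract body weight from the vertical GRF and divide the net force by body mass to obtain COM acceleration (the anteroposterior GRF needs no body-weight correction). Integrate once to obtain velocity and a second time to obtain displacement of the COM in the vertical and anteroposterior directions, using known initial conditions.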

- Gold Standard COM (Kinematic Method): Calculate COM from the marker-based biomechanical model using segmental masses and positions.

- CV/AI COM Estimation: Apply a regression-based or geometric segmental model using the keypoints and participant mass to estimate segmental COM positions, then compute the whole-body COM.

- Validation Analysis: Compare the vertical displacement time series of the COM from the CV/AI method against both gold-standard methods using RMSE and cross-correlation.
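The kinetic method in this protocol can be sketched as a double numerical integration of the net vertical force. The example below uses trapezoidal integration with NumPy; the function names are hypothetical, and the initial velocity and position are assumed to be known (for instance, zero during quiet standing before the movement). Drift from small force offsets accumulates over time, so this approach is best suited to short trials.

```python
import numpy as np

G = 9.81  # gravitational acceleration, m/s^2

def cumtrapz0(y, dt):
    """Cumulative trapezoidal integral starting at zero."""
    out = np.zeros_like(y, dtype=float)
    out[1:] = np.cumsum(0.5 * (y[1:] + y[:-1]) * dt)
    return out

def com_vertical_from_grf(fz_n, mass_kg, fs_hz, v0=0.0, z0=0.0):
    """Vertical COM acceleration, velocity, and displacement from vertical GRF (N)."""
    fz = np.asarray(fz_n, dtype=float)
    dt = 1.0 / fs_hz
    acc = (fz - mass_kg * G) / mass_kg      # net force divided by mass
    vel = v0 + cumtrapz0(acc, dt)
    disp = z0 + cumtrapz0(vel, dt)
    return acc, vel, disp

# Hypothetical 1 s of force data at 1000 Hz: constant 1 m/s^2 upward acceleration
mass = 70.0
fz = np.full(1001, mass * (G + 1.0))
acc, vel, disp = com_vertical_from_grf(fz, mass, fs_hz=1000.0)
print(vel[-1], disp[-1])
```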

4. Visualizing the Validation Workflow

Diagram Title: Validation Workflow for CV/AI Biomechanics Model

5. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Biomechanics Validation Studies

| Item / Solution | Function & Relevance to Validation |

|---|---|

| Retroreflective Markers & Sets | Create the optical "ground truth" for motion capture. Specific sets (e.g., Helen Hayes, IOR) define the biomechanical model. |

| Calibrated Force Plates | Provide kinetic gold standard for COM calculation and kinetic cross-validation (e.g., via inverse dynamics). |

| Synchronization Hub/Module | Critical for temporal alignment of multi-modal data streams (MoCap, video, force, EMG). |

| OpenPose, HRNet, DeepLabCut | Open-source AI pose estimation models serving as the experimental "reagent" under validation. |

| Biomechanical Modeling Software (OpenSim, Visual3D) | Software "reagents" used to apply segmental parameters and compute variables from both marker and keypoint data. |

| Standardized Athletic Turf & Equipment | Controlled environment ensures repeatability of sport-specific tasks across participants and sessions. |

| Statistical Package (R, Python SciPy) | For performing RMSE, correlation, and Bland-Altman analysis to generate validation metrics. |

Application Notes

Within the broader thesis on Computer Vision and AI for Sports Biomechanics Validation Research, the accurate prediction of Ground Reaction Forces (GRF) via inverse dynamics and AI models is a transformative capability. It enables the validation of biomechanical models against gold-standard force plates, providing a non-invasive, scalable, and ecologically valid method for human movement analysis. This is critical for sports performance optimization, injury mechanism elucidation, and quantitative assessment in clinical trials for musculoskeletal therapies.

Key Applications:

- Sports Science: In-field gait and jump analysis for load monitoring and technique refinement.

- Drug Development: Providing objective, quantitative biomechanical endpoints (e.g., gait symmetry, peak force) for Phase II/III trials of therapeutics for osteoarthritis (OA) or Duchenne muscular dystrophy (DMD).

- Rehabilitation: Continuous assessment of functional recovery outside the lab.

- Prosthetics & Orthotics: Real-time evaluation of device efficacy and user adaptation.

Current AI Model Paradigms: The field has evolved from traditional biomechanical models to data-driven AI approaches. The following table summarizes key quantitative performance metrics from recent seminal studies.

Table 1: Performance Metrics of Selected AI Models for GRF/GRM Prediction

| Study (Year) | Input Data | Model Architecture | Output | Key Metric (Mean ± SD or RMSE) |

|---|---|---|---|---|

| Kidziński et al. (2020) | 2D Video (OpenPose keypoints) | Temporal Convolutional Network (TCN) | 3D GRF & Moment (GRM) | Correlation (ρ): 0.84-0.97 (GRF), 0.71-0.89 (GRM) |

| Johnson et al. (2021) | Markerless 3D Pose (Theia3D) | Feedforward Neural Network | Vertical GRF (vGRF) | Peak vGRF Error: 0.10 ± 0.07 BW (body weights) |

| Zhang et al. (2022) | IMU (7 sensors, 52 features) | Bidirectional LSTM (BiLSTM) | 3D GRF | RMSE: 0.069-0.126 N/kg |

| Kanko et al. (2021) | 3D Kinematics (Motion Capture) | Ensemble of Dense Neural Networks | 3D GRF | Total GRF Error: 6.3% ± 2.1% (Walking), 7.9% ± 3.4% (Running) |

| This Thesis (Proposed) | Multi-view 2D Video → 3D Pose (CV Pipeline) | Attention-based Spatio-Temporal Graph ConvNet | 3D GRF & Center of Pressure | Target: RMSE < 0.1 N/kg, ρ > 0.95 |
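
The metrics in Table 1 are reported in different normalizations (body weights, N/kg, percent), so comparing rows requires a common unit. The following is a minimal sketch of the conversions, assuming standard gravity; the function names and the 70 kg example mass are illustrative and do not come from the cited studies.

```python
# Sketch: converting GRF values between common normalizations.
# Assumption: standard gravity; function names are hypothetical helpers.
G = 9.80665  # m/s^2


def newtons_to_n_per_kg(force_n: float, mass_kg: float) -> float:
    """Normalize a force in newtons by body mass (N/kg)."""
    return force_n / mass_kg


def newtons_to_bw(force_n: float, mass_kg: float) -> float:
    """Normalize a force in newtons by body weight (dimensionless BW)."""
    return force_n / (mass_kg * G)


def n_per_kg_to_bw(value_n_per_kg: float) -> float:
    """Convert a mass-normalized force or error (N/kg) to body weights."""
    return value_n_per_kg / G


# Illustrative values only: a 1500 N peak for a 70 kg athlete,
# and the 0.1 N/kg RMSE target expressed in body weights.
peak_n_per_kg = newtons_to_n_per_kg(1500.0, 70.0)
peak_bw = newtons_to_bw(1500.0, 70.0)
target_rmse_bw = n_per_kg_to_bw(0.1)
```

Under this conversion, an RMSE target of 0.1 N/kg corresponds to roughly 0.01 BW, which is a much tighter tolerance than a peak error of 0.10 BW.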

Experimental Protocols

Protocol 1: Benchmarking AI-GRF against Force Plate Data

Objective: To validate the accuracy of a video-based AI model in predicting 3D GRF during dynamic tasks.

Materials: Synchronized system: 4+ high-speed cameras (120 Hz), calibrated force plates (1000 Hz), standard marker set.

Participants: N=20 athletes performing walking, running, and cutting maneuvers.

Procedure:

- Calibration: Perform static and dynamic volume calibration for motion capture. Calibrate force plates to zero.

- Data Collection: Record synchronized 3D marker trajectories and raw GRF data for 10 successful trials per activity.

- Data Processing:

  - Filter kinematic (low-pass 12 Hz) and kinetic (low-pass 50 Hz) data.

  - Compute 3D joint centers and angles via inverse kinematics in OpenSim.

  - Downsample and synchronize all data to 100 Hz.

- AI Model Input Preparation:

  - Path A (Gold Standard): Use processed 3D kinematics + anthropometrics as input to a biomechanically-informed neural network.

  - Path B (Vision-Only): Render 2D video from 3D pose, run through a 2D-to-3D pose estimator (e.g., VideoPose3D), use the estimated 3D kinematics as model input.

- Training/Testing: Split data 70/15/15 (train/validation/test). Train model to minimize loss between predicted and measured GRF.

- Validation: Calculate RMSE, Pearson's r, and Bland-Altman limits of agreement on the test set.
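
The validation step above can be sketched in plain Python. This is a minimal illustration, assuming one predicted and one measured value per trial (for example, peak vertical GRF); the function names and sample numbers are hypothetical, and repeated trials per participant would call for a repeated-measures variant of the Bland-Altman limits.

```python
import math


def rmse(pred, meas):
    """Root-mean-square error between predicted and measured values."""
    return math.sqrt(sum((p - m) ** 2 for p, m in zip(pred, meas)) / len(meas))


def pearson_r(x, y):
    """Pearson correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def bland_altman(pred, meas):
    """Return (bias, lower limit, upper limit) using bias +/- 1.96 SD."""
    diffs = [p - m for p, m in zip(pred, meas)]
    n = len(diffs)
    bias = sum(diffs) / n
    sd = math.sqrt(sum((d - bias) ** 2 for d in diffs) / (n - 1))
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Made-up numbers for illustration only (peak vGRF in N/kg).
measured = [10.2, 11.5, 9.8, 12.1, 10.9]
predicted = [10.0, 11.9, 9.9, 11.8, 11.2]

error = rmse(predicted, measured)
r = pearson_r(predicted, measured)
bias, loa_low, loa_high = bland_altman(predicted, measured)
```

Reporting all three together is useful because a high correlation can coexist with a systematic bias, which only the Bland-Altman limits expose.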

Protocol 2: Pharmacological Intervention Assessment

Objective: To detect GRF-derived biomechanical changes pre- and post-administration of an analgesic in an OA population.

Materials: As in Protocol 1. Add VAS pain scales and serum biomarker kits.

Participants: N=15 patients with knee OA in a crossover design.

Procedure:

- Baseline (Day 0): Collect GRF (force plate) and kinematic (video) data during level walking. Draw blood for biomarker (e.g., CTX-II) analysis.

- Intervention (Day 30): Post-treatment, repeat biomechanical and biomarker data collection.

- AI-Powered Analysis: Process all video through the validated AI-GRF model from Protocol 1.

- Endpoint Calculation: From both force plate and AI-predicted GRF, derive: Loading Rate (kN/s), Impulse (N·s), and Knee Adduction Moment Impulse (KAMi, via inverse dynamics).

- Statistical Validation: Paired t-tests between force plate and AI-predicted changes in endpoints. Correlate biomechanical changes with biomarker and VAS changes.
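
The endpoint and statistics steps can be illustrated as follows. This is a sketch under stated assumptions: the protocol does not define loading rate precisely, so it is taken here as the steepest sample-to-sample slope before the force peak, and impulse as the trapezoidal integral over the stance phase. All function names are hypothetical.

```python
import math


def impulse_ns(force_n, fs_hz):
    """Trapezoidal integral of force over time (N*s)."""
    dt = 1.0 / fs_hz
    return sum(0.5 * (a + b) * dt for a, b in zip(force_n, force_n[1:]))


def peak_loading_rate_kn_s(force_n, fs_hz):
    """Steepest rise in force before the global peak (kN/s).

    Assumption: definitions of loading rate vary between studies;
    this one uses the maximum finite-difference slope.
    """
    peak_idx = max(range(len(force_n)), key=force_n.__getitem__)
    slopes = [(force_n[i + 1] - force_n[i]) * fs_hz for i in range(peak_idx)]
    return max(slopes) / 1000.0 if slopes else 0.0


def paired_t(a, b):
    """Paired t statistic and degrees of freedom for samples a and b.

    Requires at least two pairs with non-identical differences.
    """
    d = [x - y for x, y in zip(a, b)]
    n = len(d)
    mean = sum(d) / n
    sd = math.sqrt(sum((v - mean) ** 2 for v in d) / (n - 1))
    return mean / (sd / math.sqrt(n)), n - 1


# Toy triangular stance-phase force (N) sampled at 100 Hz.
toy_force = [0.0, 500.0, 1000.0, 500.0, 0.0]
toy_impulse = impulse_ns(toy_force, 100.0)
toy_rate = peak_loading_rate_kn_s(toy_force, 100.0)
```

A non-significant paired t-test does not by itself demonstrate agreement between force plate and AI-derived changes, so the result is best read alongside the Bland-Altman limits from Protocol 1.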

Visualizations

Diagram 1: AI-GRF Model Development & Validation Workflow

Diagram 2: Spatio-Temporal Graph Neural Net for GRF

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for AI-GRF Research

| Item / Solution | Function / Relevance | Example Vendor/Software |

|---|---|---|

| Multi-camera Motion Capture System | Provides synchronized, high-fidelity 2D video streams for 3D pose reconstruction. The input source for vision-based models. | Qualisys, Vicon, or custom multi-view RGB (e.g., Azure Kinect) |

| Force Platform | Provides the ground truth GRF data required for supervised training and validation of AI models. | AMTI, Kistler, Bertec |

| 3D Biomechanical Software | Performs inverse kinematics/dynamics to generate complementary features and validate model biomechanical plausibility. | OpenSim, Visual3D, Nexus |

| Deep Learning Framework | Enables the development, training, and deployment of custom spatio-temporal neural network architectures. | PyTorch, TensorFlow with Keras |

| Pose Estimation Library | Extracts 2D (and, in some configurations, 3D) skeletal keypoints from video; a critical pre-processing module. | OpenPose, MediaPipe, DeepLabCut, AlphaPose |

| Biomechanical Feature Extractor (Custom Code) | Calculates derived kinematic features (angles, angular velocities, etc.) from raw pose data to enhance model input. | Custom Python scripts (NumPy, SciPy) |

| Synchronization Hardware/Software | Ensures temporal alignment of video and force data (µs precision), critical for accurate model training. | TTL pulse systems, LabStreamingLayer (LSL) |

| Standardized Anthropometric Kit | For measuring subject mass, height, segment lengths; required for scaling generic models and inverse dynamics. | Stadiometer, scale, calipers |
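
To illustrate the resampling step in Protocol 1, the sketch below brings two uniformly sampled streams onto a common 100 Hz timebase by linear interpolation. It assumes both streams start at the same instant (for example, via a shared TTL trigger) and have already been low-pass filtered; `resample_linear` is a hypothetical helper, not part of any listed tool.

```python
import math


def resample_linear(samples, fs_in, fs_out):
    """Linearly interpolate a uniformly sampled signal onto a new rate.

    Assumes sample 0 of every stream corresponds to the same instant
    and that len(samples) >= 2.
    """
    duration = (len(samples) - 1) / fs_in
    n_out = int(math.floor(duration * fs_out + 1e-9)) + 1
    out = []
    for k in range(n_out):
        pos = (k / fs_out) * fs_in           # fractional index in the input
        i = min(int(pos), len(samples) - 2)  # left neighbour, clamped at the end
        frac = pos - i
        out.append(samples[i] * (1.0 - frac) + samples[i + 1] * frac)
    return out


# One second of toy data: a force ramp at 1000 Hz and a pose ramp at 120 Hz.
force_1000 = [i / 1000.0 for i in range(1001)]
pose_120 = [i / 120.0 for i in range(121)]

force_100 = resample_linear(force_1000, 1000.0, 100.0)
pose_100 = resample_linear(pose_120, 120.0, 100.0)
```

After resampling, both streams have one sample per 10 ms and can be paired index by index for model training.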

Within the broader thesis on computer vision (CV) and AI for sports biomechanics validation, the application of these technologies to drug development represents a critical translational frontier. The validation of movement algorithms in athletic performance provides a robust foundation for creating sensitive, objective, and continuous digital endpoints for clinical trials in mobility and neuromuscular disorders. This document outlines specific application notes and protocols for implementing CV/AI-driven biomechanical analysis as primary or secondary endpoints in therapeutic development.

Core Quantitative Metrics & Data Tables

The following tables summarize key quantitative biomechanical metrics derived from CV/AI analysis that serve as candidate endpoints.

Table 1: Gait & Mobility Endpoints for Neuromuscular Diseases (e.g., DMD, SMA)

| Metric Category | Specific Parameter | Measurement Tool | Typical Baseline in Pathology (Mean ± SD) | Minimal Clinically Important Difference (MCID) | Phase Trial Relevance |

|---|---|---|---|---|---|

| Temporal-Spatial | Stride Length (m) | 3D Pose Estimation (Video) | DMD (6-9 yrs): 0.81 ± 0.15 | ≥0.08 m | Phase II/III |

| Temporal-Spatial | Gait Speed (m/s) | Wearable IMU/CV Fusion | SMA Type 3: 0.92 ± 0.22 | ≥0.10 m/s | Phase II/III |

| Temporal-Spatial | Stance Time Variability (CV%) | Floor Sensors/CV | Early Parkinson's: 3.5 ± 1.2% | ≥1.0% reduction | Phase II |

| Kinematic | Knee Flexion Range of Motion (Degrees) | Markerless Motion Capture | DMD (Ambulatory): 45 ± 8 | ≥5 degrees | Phase II |

| Kinematic | Trunk Sway Amplitude (cm) | 2D Video (Sagittal) | ALS (Early): 4.2 ± 1.5 | ≥1.0 cm reduction | Phase I/II |

| Functional Task | 4-Stair Climb Power (W/kg) | Video + Timestamp | DMD: 2.1 ± 0.7 | ≥0.4 W/kg | Phase III |

| Functional Task | Sit-to-Stand Velocity (stands/s) | Monocular RGB Camera | Sarcopenia: 0.45 ± 0.12 | ≥0.05 stands/s | Phase II |
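
Two computations implied by Table 1 are the variability metric (CV%) and the comparison of an observed change against the MCID. The sketch below shows both, assuming per-stride stance times are already available; the helper names and sample values are illustrative only.

```python
import statistics


def coefficient_of_variation_pct(values):
    """Sample standard deviation as a percentage of the mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def exceeds_mcid(baseline, follow_up, mcid, higher_is_better=True):
    """True if the change in the beneficial direction reaches the MCID."""
    change = follow_up - baseline
    if not higher_is_better:
        change = -change
    return change >= mcid


# Made-up stance times (s) for five consecutive strides.
stance_times = [0.60, 0.62, 0.58, 0.61, 0.59]
stance_cv = coefficient_of_variation_pct(stance_times)

# Stride length rising from 0.81 m to 0.90 m against a 0.08 m MCID.
stride_improved = exceeds_mcid(0.81, 0.90, 0.08)

# Stance-time variability falling from 3.5% to 2.4% against a 1.0% MCID.
variability_improved = exceeds_mcid(3.5, 2.4, 1.0, higher_is_better=False)
```

The `higher_is_better` flag matters because several rows in the table define the MCID as a reduction rather than an increase.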

Table 2: AI-Derived Composite Endpoints for Clinical Trials

| Composite Endpoint Name | Component Metrics | AI Fusion Method | Validation Cohort (Correlation to Gold Standard) | Sensitivity to Change |

|---|---|---|---|---|

| Digital Mobility Outcome (DMO) | Gait Speed, Stride Length, Sit-to-Stand Power | Weighted Linear Regression (Supervised) | n=120 DMD (r=0.91 vs. 6MWT) | Effect Size (ES) = 0.78 in 12-mo study |

| Neuromuscular Fatigue Index (NFI) | Stride Time CV, Knee ROM Decline, Trunk Sway Increase over 2-min walk | LSTM Neural Network | n=85 Myasthenia Gravis (r=0.87 with Pseudo-fatigue score) | ES = 0.85 post-intervention |

| Movement Smoothness Score (MSS) | Jerk Norm of Upper Limb Reach, Wrist Path Curvature | Unsupervised Clustering (k-means) | n=70 Huntington's Disease (r=0.93 with UHDRS) | ES = 0.65 in 6-mo study |
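
Table 2 reports sensitivity to change as an effect size without stating which definition was used. As a hedged illustration, the sketch below computes two common candidates: the standardized response mean for within-subject change and Cohen's d with a pooled standard deviation for between-group comparison. Neither is confirmed as the method behind the tabulated values.

```python
import math
import statistics


def standardized_response_mean(baseline, follow_up):
    """Mean within-subject change divided by the SD of the change."""
    changes = [f - b for b, f in zip(baseline, follow_up)]
    return statistics.mean(changes) / statistics.stdev(changes)


def cohens_d(group_a, group_b):
    """Difference in group means divided by the pooled SD."""
    na, nb = len(group_a), len(group_b)
    va, vb = statistics.variance(group_a), statistics.variance(group_b)
    pooled_sd = math.sqrt(((na - 1) * va + (nb - 1) * vb) / (na + nb - 2))
    return (statistics.mean(group_b) - statistics.mean(group_a)) / pooled_sd


# Toy composite scores, for illustration only.
srm = standardized_response_mean([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
d = cohens_d([1.0, 2.0, 3.0], [3.0, 4.0, 5.0])
```

Because the two definitions can give different values for the same data, stating which one is used makes effect sizes comparable across endpoints.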

Experimental Protocols

Protocol 1: Video-Based Gait Analysis for Multicenter Trial

Objective: To quantify changes in temporal-spatial gait parameters in a decentralized clinical trial setting.

Materials: Smartphone/tablet with ≥1080p @ 60 fps capability, standardized tripod, calibration chessboard (1 m × 1 m), color fiducial marker (for scale), secure upload portal.

Procedure:

- Setup: Place camera laterally to capture 5m walkway. Position calibration chessboard in plane of walking. Place fiducial marker at start and end lines.

- Calibration: Record 10-second video of stationary chessboard. Upload with trial ID.

- Task Instruction: Participant walks at self-selected speed from start to end line (performed twice).

- Data Acquisition: Record entire task. Video metadata (device model, resolution) is auto-captured.

- CV Processing Pipeline (Centralized):

  a. Pose Estimation: Input video to pre-trained human pose model (e.g., HRNet, MediaPipe).

  b. 2D-to-3D Lifting: Use calibrated camera parameters and biomechanical constraints to estimate 3D joint centers.

  c. Gait Event Detection: Heel-strike and toe-off detected via algorithm based on ankle velocity and foot orientation.

  d. Parameter Calculation: Automatically compute stride length, gait speed, cadence, stance/swing ratio.

- Quality Control: AI flags poor lighting, occlusion, or out-of-frame events for manual review.

Output: Per-walk trial data table and longitudinal trend visualization for site and sponsor.
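
The gait event detection and parameter calculation steps can be sketched as below. This is a deliberately simplified illustration: it uses only the forward velocity of one ankle (foot orientation is ignored), assumes positions are already scaled to meters, and relies on an arbitrary 0.3 m/s threshold. The function names are hypothetical.

```python
def detect_heel_strikes(ankle_x_m, fs_hz, vel_threshold=0.3):
    """Sample indices where ankle forward speed drops below the threshold.

    Assumption: a swing-to-stance transition approximates heel-strike.
    """
    vel = [(b - a) * fs_hz for a, b in zip(ankle_x_m, ankle_x_m[1:])]
    return [i for i in range(1, len(vel))
            if vel[i - 1] >= vel_threshold and vel[i] < vel_threshold]


def gait_parameters(ankle_x_m, fs_hz, events):
    """Mean stride length, stride time, speed, and cadence from heel-strikes."""
    if len(events) < 2:
        raise ValueError("need at least two heel-strikes of the same foot")
    lengths = [abs(ankle_x_m[b] - ankle_x_m[a]) for a, b in zip(events, events[1:])]
    times = [(b - a) / fs_hz for a, b in zip(events, events[1:])]
    mean_len = sum(lengths) / len(lengths)
    mean_time = sum(times) / len(times)
    return {
        "stride_length_m": mean_len,
        "stride_time_s": mean_time,
        "gait_speed_m_s": mean_len / mean_time,
        "cadence_steps_min": 120.0 / mean_time,  # two steps per stride
    }


# Synthetic 60 fps ankle track: 0.5 s stance, then 0.5 s swing covering 1.2 m.
FS = 60.0
track, pos = [], 0.0
for _ in range(3):
    track += [pos] * 30
    track += [pos + 1.2 * k / 30 for k in range(1, 31)]
    pos += 1.2
track += [pos] * 30

strikes = detect_heel_strikes(track, FS)
params = gait_parameters(track, FS, strikes)
```

On real pose-estimation output the ankle trajectory is noisy, so smoothing before differentiation and a check on minimum stride duration would likely be needed.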

Protocol 2: AI-Driven Assessment of Upper Limb Function in Home Environment

Objective: To monitor disease progression in amyotrophic lateral sclerosis (ALS) via daily upper limb movement.

Materials: Consumer RGB-D camera (e.g., Intel RealSense), dedicated tablet with app, wall mount.

Procedure:

- Installation: Patient mounts camera in living area (e.g., facing chair). Tablet runs continuous passive monitoring app.

- Calibration: Once weekly, patient performs a 30-second calibration sequence moving arms through full range.

- Passive Monitoring: CV system detects when patient is in frame and segments activities of daily living (ADL) like drinking, reaching.

- Active Task (Daily): Patient prompted to perform a standardized 4-point reach task twice daily.

- AI Analysis:

  a. Skeleton Tracking: RGB-D data feeds a 3D pose estimation model.

  b. Kinematic Extraction: Calculate joint angles (shoulder flexion, elbow extension), movement speed, and trajectory smoothness (jerk metric).

  c. Composite Score Generation: A neural network (trained on ALSFRS-R scores) integrates kinematic features into a daily "Limb Function Score."

- Data Privacy: All video processed locally on tablet; only de-identified kinematic data and scores are uploaded.

Output: Daily time series of Limb Function Score and raw kinematics, aggregated weekly reports.
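
The protocol names a "jerk metric" without specifying its form. One common choice is a dimensionless jerk: the time integral of squared jerk, scaled by duration to the fifth power over squared movement amplitude. The sketch below assumes that definition and a one-dimensional wrist trajectory. It is an illustration, not the protocol's confirmed method, and `dimensionless_jerk` is a hypothetical name.

```python
import math


def dimensionless_jerk(position_m, fs_hz):
    """Integrated squared jerk, scaled by duration^5 / amplitude^2.

    Lower values indicate smoother movement. Jerk is estimated by
    applying a finite difference three times.
    """
    dt = 1.0 / fs_hz

    def diff(seq):
        return [(b - a) / dt for a, b in zip(seq, seq[1:])]

    jerk = diff(diff(diff(position_m)))
    duration = (len(position_m) - 1) * dt
    amplitude = max(position_m) - min(position_m)
    integral = sum(j * j for j in jerk) * dt
    return integral * duration ** 5 / amplitude ** 2


# Synthetic 1 s reach of 0.3 m at 100 Hz following a minimum-jerk profile.
FS_HZ = 100.0
taus = [i / 100.0 for i in range(101)]
smooth_reach = [0.3 * (10 * t**3 - 15 * t**4 + 6 * t**5) for t in taus]

# Same reach with an added 8 Hz, 5 mm oscillation standing in for tremor.
tremor_reach = [p + 0.005 * math.sin(2 * math.pi * 8.0 * t)
                for p, t in zip(smooth_reach, taus)]

smooth_score = dimensionless_jerk(smooth_reach, FS_HZ)
tremor_score = dimensionless_jerk(tremor_reach, FS_HZ)
```

Because triple differentiation amplifies noise, pose-derived trajectories would normally be low-pass filtered before this metric is computed.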

Diagrams

Title: Workflow from CV Validation to Clinical Endpoint

Title: AI Pipeline for Gait Analysis from Video

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for CV/AI Mobility Endpoint Research

| Item / Solution | Provider Examples | Function in Research |

|---|---|---|

| Markerless Motion Capture Software | Vicon (Blaze), Theia3D, Kinematix | Provides gold-standard or validated benchmark data for training and testing AI pose estimation models in clinical settings. |

| Open-Source Pose Estimation Models | MediaPipe (Google), MMPose (OpenMMLab), DeepLabCut | Pre-trained neural networks for 2D/3D human pose estimation; can be fine-tuned on pathological movement data. |

| Biomechanical Analysis Suites | OpenSim, Biomechanics ToolKit (BTK) | Open-source platforms for modeling musculoskeletal dynamics and calculating derived parameters (joint moments, powers) from pose data. |

| Standardized Clinical Motion Datasets | PhysioNet (Gait in Neuro Disease), HuMoD Database | Publicly available datasets of pathological movement for algorithm validation and cohort comparison. |

| Digital Trial Platforms | Clario (MotionWatch), ActiGraph, Fitbit | Integrated hardware/software platforms for decentralized data capture, compliance monitoring, and secure data flow in trials. |

| Synthetic Data Generation Tools | NVIDIA Omniverse, Siemens Simcenter | Generates synthetic video of pathological gait for augmenting training datasets where patient data is scarce. |

| Edge Processing Devices | NVIDIA Jetson, Intel NUC | Compact computing devices for deploying AI models locally at home or clinic, enabling real-time processing and privacy. |

Optimizing AI Models: Troubleshooting Accuracy and Real-World Deployment

Within the rigorous validation of sports biomechanics for computer vision (CV) and AI research, three persistent technical pitfalls critically impact data fidelity: occlusion, lighting variability, and camera calibration errors. These factors directly influence the accuracy of kinematic and kinetic outputs, which are foundational for downstream analyses in athletic performance assessment and injury prevention—areas of significant interest to pharmaceutical development for musculoskeletal therapies. This document details application notes and experimental protocols to identify, quantify, and mitigate these pitfalls.

Recent studies (2023-2024) quantify the error introduced by these pitfalls in markerless motion capture systems.

Table 1: Quantified Error from Common Pitfalls in Markerless Biomechanics

| Pitfall Category | Experimental Condition | Resulting Error in Key Metric | Impact on Biomechanical Analysis |

|---|---|---|---|

| Occlusion | Self-occlusion during golf swing (lead arm) | Knee flexion angle RMSE: 8.7° vs. 3.2° (unoccluded) | Misestimation of joint loading, flawed kinetic chain analysis. |