Maximizing Value, Minimizing Cost: A 2024 Guide to Computational Efficiency in Multiscale Biomechanical Modeling



Abstract

This article provides a comprehensive framework for researchers and drug development professionals to optimize the computational cost of multiscale biomechanical models. We explore the foundational challenges of balancing physical fidelity with computational expense, detail current methodological approaches and software tools for efficient simulation, present targeted troubleshooting and optimization strategies for common bottlenecks, and discuss rigorous validation and comparative analysis techniques. By integrating these four pillars, the guide empowers scientists to achieve predictive, high-fidelity simulations that are both scientifically robust and computationally feasible, accelerating innovation in biomedical research and therapeutic development.

The Core Challenge: Understanding the Cost-Quality Trade-off in Multiscale Biomechanics

Technical Support Center

Troubleshooting Guide: Common Computational Cost Overruns

Q1: My multiscale simulation (organ + tissue) is consuming far more CPU hours than budgeted. The solver seems to be running slowly. What are the primary areas to investigate? A: This is a common bottleneck. Follow this systematic checklist:

- Mesh Convergence: An overly fine mesh at the macro-scale is the most frequent cause. Re-run a convergence study on a key output metric (e.g., peak stress) using coarser meshes. Use the results to justify the coarsest acceptable mesh.

- Time-Step Stability: For explicit dynamics solvers, a tiny time-step (dictated by the smallest element) can explode step counts. Check for very small or distorted elements in your mesh. For implicit solvers, verify that the iterative solver convergence is not requiring an excessive number of iterations per step.

- Solver Configuration: Are you using a direct solver (e.g., for linear static problems)? For large models, iterative solvers with appropriate preconditioners (e.g., Conjugate Gradient for symmetric positive-definite systems) typically need far less memory and time than a direct factorization. Consult your software documentation.

- Code Profiling: Use profiling tools (e.g., `gprof`, `vtune`, or built-in HPC job profiling) to identify if a specific subroutine (e.g., a custom constitutive model or cell mechanics function) is using >90% of the runtime.
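To make the time-step item concrete, the sketch below estimates a stable explicit time step from the smallest element edge using a CFL-type bound, dt ≤ safety × L_min / c. It assumes a simple bar wave speed c = sqrt(E/ρ); the function names, the safety factor, and the material values are illustrative assumptions, and a real solver will apply its own stability estimate.

```python
import math

def stable_time_step(min_edge_m, youngs_modulus_pa, density_kg_m3, safety=0.9):
    """Rough CFL-type bound: dt <= safety * L_min / c, with c = sqrt(E / rho)."""
    wave_speed = math.sqrt(youngs_modulus_pa / density_kg_m3)
    return safety * min_edge_m / wave_speed

def step_count(total_time_s, dt_s):
    """Number of explicit steps needed to cover total_time_s."""
    return math.ceil(total_time_s / dt_s)

# Illustrative soft-tissue-like values (assumed, not taken from any specific model).
E, rho = 1.0e6, 1000.0              # Pa, kg/m^3
for edge in (1e-3, 1e-5):           # typical element vs. a single sliver element
    dt = stable_time_step(edge, E, rho)
    print(f"L_min={edge:g} m  dt={dt:.3e} s  steps for 1 s: {step_count(1.0, dt)}")
```

Because the bound is linear in L_min, one element that is 100 times smaller than the rest multiplies the step count by roughly 100, which is why distorted or sliver elements are worth hunting down first.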

Q2: My agent-based model (ABM) of cell population dynamics is slowing down sharply as cell count increases. How can I improve scaling? A: This indicates an algorithmic complexity issue, often O(n²) scaling (quadratic rather than truly exponential growth) caused by naive neighbor searching.

- Root Cause: Each cell checking every other cell for proximity.

- Solution: Implement spatial partitioning data structures.

- Fixed Grid: Divide the simulation space into bins. Cells only interact with others in the same or adjacent bins.

- kd-tree or Octree: More efficient for non-uniform cell distributions. Libraries like CHASTE or BioDynaMo have these built-in.

- Protocol: Profile your code to confirm neighbor search is the bottleneck. Replace the brute-force loop with a call to a library function for spatial queries. Benchmark performance at 1k, 10k, and 50k cells.
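The fixed-grid idea can be sketched in a few lines of plain Python. This is a minimal 2D illustration, not the implementation used by CHASTE or BioDynaMo; the function names are hypothetical, and the bin size is assumed equal to the interaction radius so that only the same and adjacent bins need checking.

```python
from collections import defaultdict
from itertools import product
import random

def neighbor_pairs_grid(positions, radius):
    """Index pairs (i, j), i < j, closer than `radius`, found via a fixed grid."""
    grid = defaultdict(list)
    for idx, (x, y) in enumerate(positions):
        grid[(int(x // radius), int(y // radius))].append(idx)

    r2 = radius * radius
    pairs = set()
    for (cx, cy), members in grid.items():
        # Only the same bin and its 8 neighbors can hold points within `radius`.
        for dx, dy in product((-1, 0, 1), repeat=2):
            for j in grid.get((cx + dx, cy + dy), ()):
                for i in members:
                    if i < j:
                        xi, yi = positions[i]
                        xj, yj = positions[j]
                        if (xi - xj) ** 2 + (yi - yj) ** 2 < r2:
                            pairs.add((i, j))
    return pairs

def neighbor_pairs_brute(positions, radius):
    """Reference O(n^2) search, useful for checking the grid version."""
    r2 = radius * radius
    n = len(positions)
    return {(i, j) for i in range(n) for j in range(i + 1, n)
            if (positions[i][0] - positions[j][0]) ** 2
            + (positions[i][1] - positions[j][1]) ** 2 < r2}

rng = random.Random(1)
cells = [(rng.uniform(0, 10), rng.uniform(0, 10)) for _ in range(300)]
print(len(neighbor_pairs_grid(cells, 0.5)), "interacting pairs")
```

Keeping the brute-force version around as a correctness check is cheap, and timing both at 1k, 10k, and 50k cells gives the benchmark the protocol asks for.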

Q3: I am getting unexpected cloud billing spikes when running parameter sweeps on AWS/GCP/Azure. How can I control costs? A: This is typically due to uncontrolled resource auto-scaling or data egress fees.

- Set Budget Alerts: Immediately configure billing alerts at 50%, 90%, and 100% of your allocated budget.

- Use Spot/Preemptible Instances: For fault-tolerant parameter sweeps, use spot instances (AWS), preemptible VMs (GCP), or low-priority VMs (Azure). They can reduce compute cost by 60-90%.

- Contain Data Locality: Ensure your computation cluster and output storage are in the same cloud region. Transferring data between regions or out of the cloud ("egress") incurs high, often unexpected, costs.

- Implement Auto-Termination Tags: Use instance tags with a maximum runtime (e.g., `max-runtime: 8h`) and employ cloud functions to shut down resources after this period.

FAQs: Optimizing for Your Research Stage

Q4: What are the key computational cost metrics, and how do they translate? A: The table below summarizes core metrics and their conversions.

| Metric | Definition | Typical Context | Cloud Cost Equivalent (Estimate) |

|---|---|---|---|

| CPU Core-Hour | 1 physical/virtual CPU core running for 1 hour. | Local HPC cluster, traditional budgeting. | ~$0.02 - $0.10 per core-hour (varies by instance type). |

| Node-Hour | 1 compute node (e.g., with 32-64 cores) running for 1 hour. | HPC cluster allocations. | ~$1.00 - $4.00 per node-hour (for comparable VMs). |

| GPU-Hour | 1 GPU (e.g., NVIDIA A100) running for 1 hour. | ML training, CUDA-accelerated solvers. | ~$2.00 - $4.00 per GPU-hour (spot pricing can be ~70% less). |

| Cloud Credit | Monetary unit ($1) of spending on cloud resources. | AWS, GCP, Azure grants. | Directly pays for compute, storage, and networking. |

Q5: For a new multiscale biomechanics project, what is a step-by-step protocol to minimize cost from the start? A: Follow this cost-aware development protocol:

Phase 1: Proof-of-Concept (Local/Laptop)

- Objective: Validate model logic and get initial results.

- Scale: Drastically reduced scale (e.g., 1/10th mesh resolution, 100 cells in ABM).

- Hardware: Local workstation.

- Action: Perform mesh/time-step convergence studies on this small scale to establish scaling laws.

Phase 2: Pilot Scaling (University HPC)

- Objective: Test full-scale model and identify bottlenecks.

- Scale: Full model resolution, but limited parameter variations (≤ 5 runs).

- Hardware: Institutional HPC cluster (using allocated CPU hours).

- Action: Run full-scale simulation. Use profiling data to optimize code. Document exact resource usage (node-hours).

Phase 3: Production Runs (Cloud/HPC)

- Objective: Execute large parameter sweeps or population studies.

- Scale: 100s to 1000s of simulations.

- Hardware: Cloud (Spot Instances) for massive parallelism and quick turnaround. HPC if queue times are acceptable and cost is lower.

- Action: Containerize your workflow (Docker/Singularity). Use orchestration tools (AWS Batch, Nextflow) to manage the sweep. Budget based on Pilot Scaling data.
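Budgeting a production sweep from pilot data is simple arithmetic, sketched below. The pilot figure of 6 node-hours per run, the $2.50 node-hour price, and the 10% retry overhead for interrupted spot jobs are assumptions chosen for illustration; the 70% spot discount sits inside the 60-90% range quoted earlier.

```python
def sweep_budget(node_hours_per_run, n_runs, price_per_node_hour,
                 spot_discount=0.0, retry_overhead=0.0):
    """Estimated cost in dollars for a sweep, scaled from a measured pilot run.

    spot_discount:  fractional price reduction (0.70 means 70% cheaper).
    retry_overhead: extra fraction of compute lost to preempted, re-run jobs.
    """
    billed_hours = node_hours_per_run * n_runs * (1.0 + retry_overhead)
    return billed_hours * price_per_node_hour * (1.0 - spot_discount)

on_demand = sweep_budget(6.0, 500, 2.50)
spot = sweep_budget(6.0, 500, 2.50, spot_discount=0.70, retry_overhead=0.10)
print(f"on-demand: ${on_demand:,.0f}   spot with retries: ${spot:,.0f}")
```

Storage and egress charges are not included here; they need to be added separately when outputs leave the compute region.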

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Computational Experiments |

|---|---|

| FE Software (FEBio, Abaqus) | Provides solvers for continuum-level biomechanics (organs, tissues). Core platform for macro-scale simulations. |

| Agent-Based Framework (CHASTE, CompuCell3D) | Pre-built environment for modeling cell populations, adhesion, and signaling. Avoids rebuilding spatial query algorithms. |

| Multiscale Coupling Library (preCICE) | Specialized library to handle data exchange and coupling between different solvers (e.g., CFD + FE). |

| Container (Docker/Singularity) | Packages software, dependencies, and model code into a single, portable, and reproducible unit. Essential for cloud/HPC. |

| Orchestrator (Nextflow, Snakemake) | Manages complex computational workflows, handles job submission, failure recovery, and is cloud-aware. |

| Profiler (gprof, Vampir) | Measures where a program spends its time (CPU) or communicates (MPI). Critical for identifying optimization targets. |

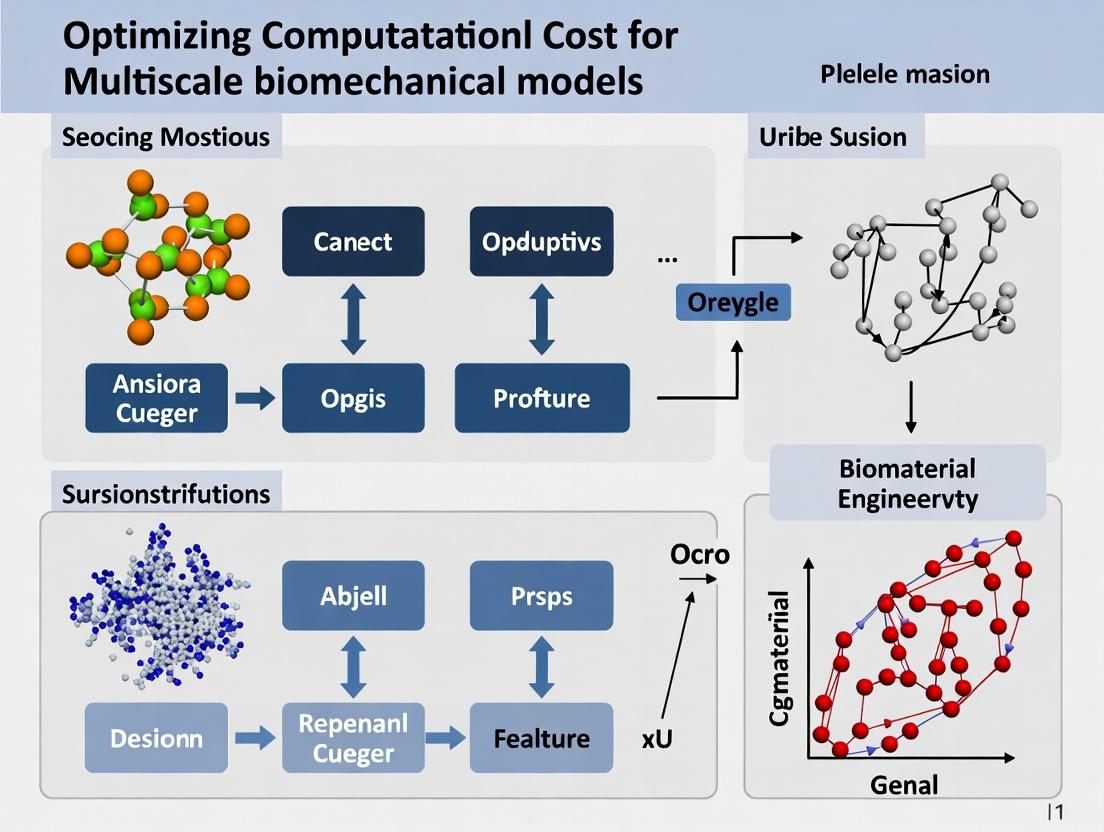

Visualization: Workflow & Cost Relationship

Diagram 1: Multiscale Simulation Optimization Workflow

Diagram 2: Components of Total Computational Cost

Technical Support Center

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My agent-based model (ABM) of tissue remodeling is becoming computationally intractable when scaling to physiologically relevant cell counts. What are my primary optimization strategies?

A: The cost grows non-linearly due to inter-agent force calculations and state checks. Focus on:

- Spatial Partitioning: Implement a spatial hash grid or quadtree/octree to reduce neighbor search complexity from O(N²) to ~O(N).

- Conditional Updating: Update agent states on event-triggered schedules rather than every universal timestep.

- Hybridization: Replace sub-regions reaching homeostasis with continuum approximations (e.g., PDEs).

Q2: When coupling a Finite Element (FE) organ model with a sub-cellular signaling network, how do I manage vastly different time steps without exploding simulation wall time?

A: This is a classic multirate problem. Implement a scheduler-based temporal coupling protocol.

Experimental Protocol: Multirate Temporal Coupling

- Define Time Scales: FE mechanical solver (Δt_mech = 1-10 ms), signaling ODE solver (Δt_sig = 0.001-0.01 ms).

- Establish Master Clock: Use the slower FE solver clock as the master.

- Interpolate Mechanical Input: For each FE timestep, interpolate strain/stress values to the Gauss points and hold them constant as inputs to the signaling model for the duration of that step.

- Sub-cycle Signaling: Advance the signaling network over n sub-cycles (n = Δt_mech / Δt_sig) using its native solver.

- Average & Return: Average key signaling outputs (e.g., active RhoGTPase concentration) over the n sub-cycles. Map this averaged value back to the FE model to modulate material properties for the next mechanical step.

- Repeat.
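The protocol above can be sketched with toy stand-ins for both solvers. Everything model-specific here is an assumption for illustration: the "FE solve" is a one-line strain = stress / stiffness, the signaling network is a single activation ODE integrated with forward Euler, and the rate constants and the stiffness feedback law are hypothetical.

```python
def subcycle_signaling(a, strain, dt_sig, n_sub, k_on=50.0, k_off=5.0):
    """Advance da/dt = k_on*strain*(1 - a) - k_off*a with strain held constant.

    Returns the final activation and its average over the sub-cycles.
    """
    total = 0.0
    for _ in range(n_sub):
        a += dt_sig * (k_on * strain * (1.0 - a) - k_off * a)
        total += a
    return a, total / n_sub

def run_coupled(t_end=100.0, dt_mech=1.0, dt_sig=0.01,
                e0=10.0, alpha=0.5, stress=1.0):
    """Master loop on the mechanical clock (times in ms)."""
    n_sub = round(dt_mech / dt_sig)          # n = dt_mech / dt_sig
    stiffness, a = e0, 0.0
    history = []
    for _ in range(round(t_end / dt_mech)):
        strain = stress / stiffness          # stand-in for the FE solve
        a, a_mean = subcycle_signaling(a, strain, dt_sig, n_sub)
        stiffness = e0 * (1.0 + alpha * a_mean)   # averaged signal modulates material
        history.append((strain, a_mean, stiffness))
    return n_sub, history

n_sub, history = run_coupled()
print(f"{n_sub} signaling sub-cycles per mechanical step")
print("final strain, mean activation, stiffness:", history[-1])
```

The expensive mechanical solve runs once per master step while the cheap ODE absorbs all the small steps, which is where the wall-time saving comes from.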

Q3: My parameter sweep for calibrating a molecular-scale kinetic model against in vitro data is consuming weeks of HPC time. How can I make this more efficient?

A: Move from brute-force sweeps to intelligent search and surrogate modeling.

- Step 1: Perform a limited, space-filling design-of-experiments (DoE) sweep (e.g., 100-500 runs).

- Step 2: Train a Gaussian Process (GP) or Polynomial Chaos Expansion (PCE) surrogate model on this data.

- Step 3: Use an optimization algorithm (e.g., Bayesian optimization, genetic algorithm) to find the optimal parameter set by querying the cheap surrogate instead of the full model.

Table 1: Computational Cost Comparison for a Sample Parameter Calibration (50 parameters)

| Method | Approx. Model Evaluations | Estimated Wall Time (on HPC Cluster) | Key Advantage |

|---|---|---|---|

| Full Factorial Sweep (5 points/param) | 5⁵⁰ (infeasible) | N/A (infeasible) | Exhaustive |

| Random Sampling (100,000 runs) | 100,000 | ~480 hours | Feasible coverage |

| Latin Hypercube DoE + Surrogate Model | 500 (for training) | ~2.4 hours | Enables efficient global optimization |
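The evaluation counts in the table, and the space-filling design itself, can be reproduced with the standard library alone. The sampler below is a minimal Latin hypercube on the unit cube (the function name and seed handling are assumptions); fitting the GP or PCE surrogate would be done afterwards with a dedicated library and is not shown.

```python
import random

def latin_hypercube(n_samples, n_params, seed=0):
    """Unit-cube Latin hypercube: each parameter's n_samples strata are hit once."""
    rng = random.Random(seed)
    columns = []
    for _ in range(n_params):
        strata = list(range(n_samples))
        rng.shuffle(strata)
        columns.append([(s + rng.random()) / n_samples for s in strata])
    # Transpose so that each row is one parameter set.
    return [[columns[p][i] for p in range(n_params)] for i in range(n_samples)]

design = latin_hypercube(500, 50)

full_factorial_runs = 5 ** 50            # 5 points per parameter, 50 parameters
hours_per_run = 480 / 100_000            # implied by the random-sampling row
print(f"full factorial: {full_factorial_runs:.2e} runs")
print(f"500 training runs: {500 * hours_per_run:.1f} h of wall time")
```

Each row of `design` would be rescaled from [0, 1) to the physical range of the corresponding parameter before being passed to the full model.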

Q4: How can I validate a multiscale model spanning from protein binding to tissue function when I cannot get comprehensive experimental data at all scales?

A: Employ a "chain-of-validation" strategy, which is inherently resource-intensive but necessary.

Experimental Protocol: Chain-of-Validation for a Drug Effect Model

- Scale 1 (Molecular): Validate kinetic rate constants of drug-target binding using Isothermal Titration Calorimetry (ITC) or Surface Plasmon Resonance (SPR) data.

- Scale 2 (Cellular): Validate model predictions of downstream phosphorylation (e.g., pERK/STAT) against flow cytometry or Western blot data from treated cell cultures.

- Scale 3 (Tissue): Validate predicted changes in contractility or stiffness using Atomic Force Microscopy (AFM) on engineered tissue treated with the drug.

- Scale 4 (Organ): Compare simulated pressure-volume loop alterations to those measured in an ex vivo perfused heart model under drug influence.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Multiscale Cardiac Electromechanics Validation

| Item | Function in Multiscale Context |

|---|---|

| Human Induced Pluripotent Stem Cell-Derived Cardiomyocytes (hiPSC-CMs) | Provides a human-relevant cellular substrate for calibrating sub-cellular ionic and force-generation models. |

| Engineered Heart Tissue (EHT) Constructs | 3D tissue platform for validating coupled cell-cell mechanics and electrophysiology at the tissue scale. |

| Voltage-Sensitive Dyes (e.g., FluoVolt) | Enables optical mapping of action potential propagation for validating tissue-scale electrophysiological model outputs. |

| Traction Force Microscopy (TFM) Substrate | Polyacrylamide gels with fluorescent beads to measure single-cell and monolayer contraction forces for model calibration. |

| Ex Vivo Langendorff-Perfused Heart Setup | Gold-standard organ-level experimental system for validating integrated hemodynamic and electrophysiological simulations. |

Visualization: Key Workflows & Pathways

Diagram 1: Multiscale Cardiac Model Coupling Workflow

Diagram 2: Surrogate Model-Assisted Calibration Logic

Technical Support Center

Troubleshooting Guide

Issue: Simulation fails to complete, running out of memory (OOM).

- Q1: My high-resolution 3D finite element model of a tissue scaffold crashes with an OOM error. How can I proceed?

- A1: This directly relates to the Spatial Resolution driver. The number of mesh elements (and thus degrees of freedom) scales non-linearly with increased resolution.

- Protocol: Implement Adaptive Mesh Refinement (AMR):

- Run an initial, coarse-resolution simulation to identify regions of high stress, strain, or biochemical gradient.

- Define refinement criteria based on these field variables (e.g., refine where gradient > threshold).

- Use a library like `libMesh` or `FEniCS` to dynamically refine the mesh only in critical regions during the solver loop.

- Compare results and memory usage against the uniform high-resolution mesh.
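The refinement criterion in the protocol can be illustrated on a 1D mesh without any FE library. This is only a sketch of the flag-and-split logic; the function names, the tanh test field, and the threshold are assumptions, and libMesh or FEniCS provide their own error estimators and refinement calls.

```python
import math

def flag_cells(nodes, values, threshold):
    """Flag each 1D cell whose |d(value)/dx| exceeds `threshold`."""
    flags = []
    for i in range(len(nodes) - 1):
        grad = abs(values[i + 1] - values[i]) / (nodes[i + 1] - nodes[i])
        flags.append(grad > threshold)
    return flags

def refine(nodes, flags):
    """Bisect every flagged cell; leave the others untouched."""
    new_nodes = [nodes[0]]
    for i, flagged in enumerate(flags):
        if flagged:
            new_nodes.append(0.5 * (nodes[i] + nodes[i + 1]))
        new_nodes.append(nodes[i + 1])
    return new_nodes

# Coarse mesh and a field with a sharp front at x = 0.5 (illustrative).
nodes = [i / 10 for i in range(11)]
values = [math.tanh(20.0 * (x - 0.5)) for x in nodes]

flags = flag_cells(nodes, values, threshold=1.0)
refined = refine(nodes, flags)
print(f"{sum(flags)} of {len(flags)} cells flagged; {len(refined)} nodes after refinement")
```

Only the cells around the front are split, so the node count grows far more slowly than it would under uniform refinement.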

Issue: Simulation time is impractically long for capturing a biological process.

- Q2: My agent-based model of cell migration needs to run for 72 hours of biological time, but one simulation already takes a week. What strategies exist?

- A2: This is a Temporal Scales challenge. The computational cost scales with the number of time steps.

- Protocol: Employ Multi-scale Time Stepping:

- Identify "fast" and "slow" processes in your system (e.g., fast: ligand-receptor binding; slow: cell movement).

- Implement multiple time integrators: use a small time step (`Δt_fast = 0.1 sec`) for fast processes and a larger one (`Δt_slow = 60 sec`) for slow processes.

- Establish a synchronization schedule (e.g., every 600 fast steps, update the slow subsystem).

- Validate by comparing key outputs (cell trajectories) against a benchmark run with a uniform small time step.

Issue: Adding a new physical phenomenon drastically increases computational cost.

- Q3: Adding fluid-structure interaction (FSI) to my solid tumor growth model increased solve time by 10x. How can I optimize this?

- A3: This is core Physical Complexity. Adding coupled physics requires solving additional equation sets.

- Protocol: Use a Loose/Modular Coupling Scheme:

- Instead of a monolithic (fully implicit) solver, use a partitioned (staggered) approach.

- Solve the solid mechanics equations for the tumor with fixed fluid pressures.

- Solve the fluid dynamics (e.g., Navier-Stokes) in the surrounding vasculature with fixed solid boundaries.

- Pass boundary data (displacement, pressure) between solvers at a predefined coupling interval, not every time step.

- Gradually tighten the coupling interval until solution accuracy is acceptable.
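A toy two-field problem shows how the coupling interval trades accuracy for cost. The "solid" and "fluid" here are single scalar ODEs with hypothetical coefficients, each advanced with forward Euler while seeing a frozen copy of the other field between exchanges; real FSI solvers exchange boundary fields, typically through a coupling library.

```python
def run_partitioned(coupling_interval, t_end, dt=0.01, k=1.0, c=1.0, p0=2.0):
    """Staggered solve of du/dt = p - k*u (solid) and dp/dt = p0 - p - c*u (fluid).

    Boundary data are exchanged only every `coupling_interval` steps.
    """
    u, p = 0.0, 0.0
    p_seen_by_solid, u_seen_by_fluid = p, u
    for step in range(round(t_end / dt)):
        if step % coupling_interval == 0:
            p_seen_by_solid, u_seen_by_fluid = p, u   # data exchange
        u += dt * (p_seen_by_solid - k * u)           # solid step, fluid frozen
        p += dt * (p0 - p - c * u_seen_by_fluid)      # fluid step, solid frozen
    return u, p

u_ref, _ = run_partitioned(1, t_end=1.0)              # exchange every step
for interval in (50, 10, 2):
    u, _ = run_partitioned(interval, t_end=1.0)
    print(f"interval={interval:3d}  |u - u_ref| = {abs(u - u_ref):.4f}")
```

In this toy case the transient error shrinks as the interval is tightened, while the long-time steady state (u = p0 / (k + c)) is recovered at every interval tried, which mirrors the "tighten until acceptable" step of the protocol.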

Frequently Asked Questions (FAQs)

Q4: Which driver typically has the largest impact on cost for biomechanical models?

- A4: The impact is multiplicative, but Spatial Resolution is often the primary factor due to the cubic scaling of 3D meshes. Doubling linear resolution multiplies the mesh element count by 8 (2³) and can lead to a >10x increase in memory and compute time, because solver cost usually grows faster than linearly with the number of degrees of freedom.

Q5: Are there "good enough" lower bounds for resolution or complexity to save cost?

- A5: Yes, determined by sensitivity analysis.

- Protocol: Conduct a Convergence Study:

- Run your simulation at 3-4 progressively finer spatial resolutions or temporal discretizations.

- Plot a key output metric (e.g., maximum principal stress, diffusion front position) against discretization size/time step.

- The "good enough" lower bound is where the metric changes by less than an acceptable tolerance (e.g., <2%).
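The selection rule in the last step can be written as a small helper. The function name, the element sizes, and the peak-stress values are made up for illustration; the 2% tolerance is the one quoted above.

```python
def coarsest_converged(sizes, metrics, tol=0.02):
    """Pick the coarsest level whose metric changes by less than `tol`
    (relative) when refined to the next level.

    sizes:   element sizes (or time steps), ordered coarse to fine.
    metrics: the key output computed at each level.
    Returns (size, relative_change), or None if no level meets the tolerance.
    """
    for i in range(len(sizes) - 1):
        rel_change = abs(metrics[i + 1] - metrics[i]) / abs(metrics[i + 1])
        if rel_change < tol:
            return sizes[i], rel_change
    return None

# Hypothetical study: element size in mm vs. peak principal stress in kPa.
sizes = [2.0, 1.0, 0.5, 0.25]
peak_stress = [80.0, 92.0, 97.0, 98.2]

size, change = coarsest_converged(sizes, peak_stress)
print(f"use element size {size} mm (change on refinement: {change:.1%})")
```

A `None` result means the study has not converged yet and a finer level is needed before any resolution can be called good enough.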


Q6: What hardware investments are most effective for each driver?

- A6: See the table below for targeted investments.

Data Presentation

Table 1: Computational Cost Scaling and Mitigation Strategies

| Driver | Typical Cost Scaling | Primary Impact | Mitigation Strategy | Expected Efficiency Gain |

|---|---|---|---|---|

| Spatial Resolution | O(N^d) for N elements per axis in d dimensions | Memory, Solve Time | Adaptive Mesh Refinement (AMR) | 50-80% memory reduction |

| Temporal Scales | O(1/Δt) | Total Wall-clock Time | Multi-scale / Multi-rate Time Stepping | 70-95% time reduction |

| Physical Complexity | O(C^k) for C couplings | Per-iteration Solve Time | Loose/Modular Solver Coupling | 60-90% time reduction |

Table 2: Hardware/Software Solutions for Computational Drivers

| Driver | Recommended Hardware Focus | Key Software Solutions |

|---|---|---|

| Spatial Resolution | High RAM capacity, Fast inter-node interconnect | libMesh, FEniCS, deal.II (for AMR) |

| Temporal Scales | Fast single-core CPU performance | SUNDIALS (CVODE for stiff ODEs, ARKODE MRIStep for multi-rate), Custom scheduler |

| Physical Complexity | Multi-core CPUs for parallel solver tasks | preCICE (coupling library), MOOSE (multiphysics framework) |

Mandatory Visualizations

Diagram 1: Multi-scale Time Stepping Workflow

Diagram 2: Loose Coupling for Fluid-Structure Interaction

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Computational Optimization

| Item / Software | Function in Optimization | Example Use Case |

|---|---|---|

| preCICE | Coupling library for partitioned multi-physics simulations. | Enables modular FSI coupling between a dedicated solid solver (e.g., CalculiX) and a fluid solver (e.g., OpenFOAM). |

| SUNDIALS (CVODE, ARKODE) | CVODE solves stiff and non-stiff ODE systems; ARKODE's MRIStep module provides multi-rate integration. | Efficiently handles the different time scales in biochemical signaling within a cell model. |

| libMesh / FEniCS | Finite element libraries with native AMR support. | Dynamically refines mesh around a propagating crack or diffusion front in a bone or tissue model. |

| HDF5 Format | Hierarchical data format for parallel I/O. | Manages output/restart data from high-resolution 3D simulations across many cores, reducing I/O bottleneck. |

| Sensitivity Analysis Toolkit (SAT) | Python library for variance-based sensitivity analysis (Sobol indices). | Quantifies which input parameters (material properties, rates) most affect cost-driving outputs to guide simplification. |

Technical Support Center: Troubleshooting Multiscale Biomechanical Models

This support center provides targeted guidance for common challenges encountered when developing and simulating multiscale biomechanical models in drug development, framed within the critical balance of model fidelity and computational feasibility.

FAQ & Troubleshooting Guides

Q1: My molecular dynamics (MD) simulation of a protein-ligand complex is computationally prohibitive for the timescales needed for drug discovery. What are my primary options? A: The trade-off here is between atomic fidelity and achievable simulation time. Enhanced sampling, coarse-graining, and kinetic modeling trade progressively more fidelity for feasibility; hybrid QM/MM is the exception, since it adds quantum-level fidelity at the active site while keeping the bulk cheap. Your options are:

- Enhanced Sampling: Implement methods like metadynamics or replica exchange MD to accelerate exploration of conformational space.

- Coarse-Graining (CG): Reduce system degrees of freedom by grouping atoms into beads. Use Martini or similar force fields.

- Hybrid QM/MM: Restrict high-fidelity quantum mechanics (QM) to the active site only, using molecular mechanics (MM) for the bulk.

- Kinetic Modeling: Shift to a system of differential equations based on rate constants derived from shorter MD runs or literature.

Q2: When coupling a finite element (FE) tissue-scale model with a cellular signaling pathway model, the solver fails to converge. How should I diagnose this? A: This is a classic multiscale coupling issue. Follow this protocol:

- Check Timescales: Ensure the integration timestep is appropriate for the fastest process (often the signaling model). Use a multi-rate solver if disparity is large.

- Stagger Execution: Run the FE model for a mechanical time step, then pass the strain/stress data to the signaling model, which runs multiple internal steps before returning feedback.

- Simplify the Feedback: Initially, make the mechanical-to-biological coupling one-way (mechanics affects signaling, but not vice versa) to isolate the instability source.

- Validate Sub-models: Run and stabilize each sub-model (FE and signaling) independently before attempting full coupling.

Q3: My agent-based model (ABM) of cell migration in a tumor microenvironment is too stochastic, yielding irreproducible high-level outcomes. How can I reduce noise without losing emergent behavior? A: This reflects the fidelity/stochasticity vs. feasibility/predictability balance.

- Increase Population: Run the model with a larger number of agents to allow statistical trends to dominate.

- Parameter Sensitivity Analysis (PSA): Systematically identify which stochastic parameters most influence outcome variance.

- Ensemble Averaging: Execute multiple runs with different random seeds and analyze the distribution of outcomes.

- Rule Simplification: Review if every agent needs a unique rule set. Can cells be grouped into phenotypes with shared behavioral rules?
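Ensemble averaging is straightforward to script once each run takes an explicit seed. In the sketch below, `toy_abm_run` is a hypothetical stand-in for a real ABM (biased random walkers whose mean displacement is the "outcome"); the summary reports the ensemble mean and an approximate 95% half-width based on a normal approximation.

```python
import math
import random
import statistics

def toy_abm_run(seed, n_agents=200, n_steps=100):
    """Stand-in for one ABM run: mean displacement of biased random walkers."""
    rng = random.Random(seed)            # explicit seed makes each run reproducible
    total = 0.0
    for _ in range(n_agents):
        x = 0.0
        for _ in range(n_steps):
            x += rng.gauss(0.05, 1.0)
        total += x
    return total / n_agents

def ensemble_summary(run, seeds):
    """Mean outcome and approximate 95% half-width across seeded runs."""
    outcomes = [run(s) for s in seeds]
    mean = statistics.mean(outcomes)
    sem = statistics.stdev(outcomes) / math.sqrt(len(outcomes))
    return mean, 1.96 * sem

mean, half_width = ensemble_summary(toy_abm_run, seeds=range(20))
print(f"mean displacement = {mean:.2f} +/- {half_width:.2f} (20 seeds)")
```

Recording the seed list alongside the results keeps every individual run reproducible even though the reported quantity is a distribution.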

Q4: I need to model drug perfusion and binding across vascular, tissue, and cellular scales. What is the most feasible software architecture? A: A modular, multi-physics approach is recommended. See the workflow diagram below.

Experimental Protocols & Methodologies

Protocol 1: Establishing a Coupled Organ-Cell Model for Cardiotoxicity Screening Objective: Predict drug-induced arrhythmia risk by coupling a whole-heart FE electrophysiology model to a system of ordinary differential equations (ODEs) for cardiomyocyte metabolic stress.

- Acquire Base Models: Obtain a validated human ventricular FE mesh (e.g., from the "Living Heart Project") and a curated cardiomyocyte ODE model (e.g., O'Hara-Rudy model) from public repositories.

- Define Coupling Variables: Map FE model outputs (local strain, action potential duration) as inputs to the ODE model (modulating ion channel kinetics, ATP demand). Map ODE model outputs (metabolite concentrations) back to the FE model (altering conduction velocity).

- Implement Loose Coupling: Use a master script to run the FE solver for 10ms, extract field data, update ODE parameters, solve ODEs, and then update FE tissue properties for the next increment.

- Calibrate & Validate: Calibrate coupling strengths using known positive (Dofetilide) and negative (Aspirin) controls. Validate against high-throughput in vitro data from hiPSC-derived cardiomyocytes.

Protocol 2: Coarse-Graining a Protein for Longer Timescale Binding Site Analysis Objective: Simulate domain motions of a kinase target to identify cryptic allosteric pockets over microseconds.

- All-Atom Reference: Run a short (100 ns) all-atom MD simulation of the solvated kinase. Analyze root-mean-square fluctuation (RMSF) to identify rigid and flexible domains.

- CG Mapping: Use a topology conversion tool (e.g., `martinize.py` for Martini 2, or its successor `martinize2` for Martini 3). Map 4-5 heavy atoms to a single CG bead. Define elastic network bonds within rigid domains to maintain tertiary structure.

- Force Field Parameterization: Apply the Martini 3.0 force field. Tune elastic bond constants to match fluctuation profiles from step 1.

- Production & Analysis: Run a 10-50 µs CG-MD simulation. Cluster conformations and backmap promising clusters to all-atom representation for pocket detection and docking studies.

Data Presentation

Table 1: Computational Cost & Fidelity Comparison of Common Biomechanical Modeling Methods

| Method | Spatial Scale | Temporal Scale | Key Fidelity Metric | Approx. Cost (CPU-hr) | Primary Feasibility Limit |

|---|---|---|---|---|---|

| QM/MM | Ångstroms | Femtoseconds | Electronic Structure | 10,000 - 100,000 | System size (>1000 atoms) |

| All-Atom MD | Nanometers | Nanoseconds | Atomic Interactions | 1,000 - 10,000 | Simulation time (>1µs) |

| Coarse-Grained MD | 10s of nm | Microseconds | Mesoscale Dynamics | 100 - 1,000 | Chemical specificity |

| Agent-Based Model | Micrometers | Minutes-Hours | Emergent Behavior | 10 - 100 | Stochastic noise, validation |

| Finite Element Model | mm to Organs | Milliseconds-Seconds | Continuum Mechanics | 1 - 100 | Mesh resolution, material laws |

| ODE/PDE Systems | Cellular-Organ | Milliseconds-Hours | Biochemical Concentrations | < 1 | Model complexity (stiffness) |

Table 2: Troubleshooting Guide: Symptoms, Causes, and Mitigations

| Symptom | Likely Cause | Recommended Mitigation Strategy |

|---|---|---|

| Simulation fails to start or crashes immediately. | Incorrect parameter units, missing boundary conditions, or software dependency error. | Implement a "sanity check" pre-simulation script to validate input dimensions and file paths. |

| Model output is physically impossible (e.g., negative concentrations). | Unstable numerical integration or inappropriate solver for stiff equations. | Switch to an implicit solver (e.g., CVODE for ODEs), and significantly reduce the timestep. |

| Coupled model results are path-dependent or non-reproducible. | Poorly handled data exchange between scales; order-of-operations error. | Adopt a standardized data coupler (e.g., preCICE) and enforce strict version control on all model components. |

| Model calibration requires thousands of runs, which is infeasible. | High-dimensional parameter space with naive sampling (e.g., full factorial). | Employ advanced design of experiments (DoE) and surrogate modeling (e.g., Gaussian Process). |

Mandatory Visualizations

Diagram 1: Multi-Scale Drug Perfusion & Binding Modeling Workflow

Diagram 2: Fidelity vs. Feasibility Decision Logic for Model Selection

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Multiscale Modeling | Example/Note |

|---|---|---|

| High-Performance Computing (HPC) Cluster | Provides the parallel processing power needed for MD, FE, and large-scale ABM simulations. | Essential for feasibility. Cloud-based HPC (AWS, Azure) offers scalable, cost-effective access. |

| Multi-Paradigm Simulation Software | Enables coupling of different model types within a unified framework. | preCICE: Coupler for FE/CFD. COPASI: For ODE systems. LAMMPS/NAMD: For MD/CG-MD. |

| Parameterization Datasets | Experimental data used to derive and calibrate model parameters, grounding fidelity in reality. | Protein Data Bank (PDB): Structures for MD. BioNumbers: Cell/tissue properties. ChEMBL: Drug binding data. |

| Sensitivity Analysis Toolkits | Quantifies how uncertainty in model inputs affects outputs, guiding where to invest computational effort. | SALib (Python): For global sensitivity analysis. Helps identify critical parameters for refinement. |

| Surrogate Model (Metamodel) Libraries | Creates fast, approximate models of complex simulations to enable rapid exploration of parameter space. | GPy (Gaussian Processes) or PySR (Symbolic Regression). Key for feasibility in optimization loops. |

| Visualization & Analysis Suites | Interprets high-dimensional, multi-scale output data to extract biological insight. | ParaView (for FE/CFD), VMD (for MD), Matplotlib/Plotly (for general plotting and dashboards). |

Technical Support Center: Troubleshooting for AI/ML-Enhanced Multiscale Biomechanical Modeling

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: During the training of our surrogate ML model for a cardiac tissue simulation, we encounter severe overfitting despite having a large dataset. The model performs poorly on unseen boundary conditions. What are the primary corrective steps? A: Overfitting in surrogate models for high-fidelity biomechanical simulations is common. Implement these steps:

- Architectural Regularization: Introduce dropout layers (start with 0.2 rate) and L2 weight regularization (λ=1e-4) in your neural network.

- Physics-Informed Loss: Augment your data loss (e.g., MSE) with a physics-based loss term from the underlying partial differential equations (PDEs). This constrains the model to physically plausible solutions.

- Data Augmentation via Simulation: Use your base solver to generate additional synthetic training samples by perturbing input parameters (e.g., material properties, loads) within physiologically plausible ranges.

- Simplify the Model: Reduce the number of trainable parameters. For many biomechanical fields, a moderately sized dense network often generalizes better than an excessively deep one.

Q2: Our digital twin pipeline for a liver lobule model stalls when synchronizing data between the agent-based cellular model and the continuum tissue-scale model. What could cause this handshake failure? A: Handshake failures typically arise from data format or scale mismatch.

- Check Time-Step Alignment: Ensure both sub-models are configured to exchange data at congruent temporal intervals. The macro-scale model's time step must be an integer multiple of the micro-scale model's step.

- Validate Data Schema: Confirm that the output array from the agent-based model (e.g., average cytokine concentration per zone) matches the expected input dimensions and physical units of the continuum model's boundary condition nodes.

- Inspect Middleware Logs: If using a coupling library (e.g., preCICE, MUSCLE3), check logs for MPI communication errors or buffer overflows, which may indicate insufficient memory allocation for the data exchange field.
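The first two checks are cheap to automate before any coupled run starts. The helpers below are a sketch with hypothetical names and a hypothetical payload layout (a dict with `values` and `units`); they do not correspond to any preCICE or MUSCLE3 API.

```python
import math

def substeps_per_exchange(dt_macro, dt_micro, rel_tol=1e-9):
    """Return the integer number of micro steps per macro step, or raise."""
    ratio = dt_macro / dt_micro
    n = round(ratio)
    if n < 1 or not math.isclose(ratio, n, rel_tol=rel_tol):
        raise ValueError(
            f"dt_macro={dt_macro} is not an integer multiple of dt_micro={dt_micro}")
    return n

def check_exchange(payload, expected_len, expected_units):
    """Validate array length and units of a data exchange payload."""
    if len(payload["values"]) != expected_len:
        raise ValueError(
            f"expected {expected_len} values, got {len(payload['values'])}")
    if payload["units"] != expected_units:
        raise ValueError(
            f"expected units {expected_units!r}, got {payload['units']!r}")

print(substeps_per_exchange(60.0, 0.1), "micro steps per exchange")
check_exchange({"values": [0.2, 0.4, 0.1], "units": "ng/mL"}, 3, "ng/mL")
```

The tolerance-based comparison matters because ratios such as 60.0 / 0.1 are not guaranteed to be exact in floating point.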

Q3: Integrating a new constitutive model for tumor tissue into our AI-accelerated simulation results in a "Gradient Explosion" error during the backward pass of the differentiable solver. How do we debug this? A: Gradient explosion indicates instability in the computational graph.

- Gradient Clipping: Implement gradient clipping (global norm or value clipping) as an immediate stabilization measure.

- Analytic Gradient Inspection: Disable automatic differentiation for the new constitutive model. Instead, implement and register a custom analytic gradient function. This often reveals numerical instabilities in the original implementation.

- Function Smoothing: Ensure all mathematical operations in your new model are smooth (e.g., avoid `if-else` branches, use smoothed Heaviside functions). Non-differentiable operations disrupt gradient flow.
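Two of these measures are easy to show in framework-free Python: clipping by global norm, and replacing a hard branch with a smoothed Heaviside. The stiffness law, its parameters, and the tanh-based smoothing width are assumptions for illustration; in practice the same expressions would be written with the tensor operations of your differentiable framework.

```python
import math

def clip_by_global_norm(grads, max_norm):
    """Rescale a flat list of gradients so their L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm or norm == 0.0:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]

def smooth_heaviside(x, width=0.01):
    """Differentiable step: 0.5 * (1 + tanh(x / width))."""
    return 0.5 * (1.0 + math.tanh(x / width))

def stiffness_branchy(strain, e_low=1.0, e_high=5.0, onset=0.1):
    """Hard switch: gradient is zero everywhere and undefined at the onset."""
    return e_high if strain > onset else e_low

def stiffness_smooth(strain, e_low=1.0, e_high=5.0, onset=0.1, width=0.01):
    """Same limits as the branchy version, with a smooth transition near onset."""
    return e_low + (e_high - e_low) * smooth_heaviside(strain - onset, width)

print(clip_by_global_norm([3.0, 4.0], max_norm=1.0))
for s in (0.05, 0.1, 0.15):
    print(s, stiffness_branchy(s), round(stiffness_smooth(s), 3))
```

The smoothing width sets how sharp the transition is: narrower widths track the original law more closely but produce larger gradients near the onset, so it is worth tuning alongside the clipping threshold.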

Q4: When deploying a trained model for real-time simulation in our digital twin, inference latency is too high for interactive use. What optimization strategies can we apply? A: To reduce inference latency:

- Model Quantization: Convert your model's weights from FP32 to FP16 or INT8 precision. This reduces memory bandwidth and can accelerate computation on supported hardware (e.g., NVIDIA Tensor Cores).

- Graph Optimization: Use frameworks like TensorRT or ONNX Runtime to apply graph-level optimizations (e.g., layer fusion, kernel auto-tuning) specific to your deployment GPU.

- Pruning: Remove redundant neurons/weights from the trained model using magnitude-based pruning, then fine-tune.

Experimental Protocols for Cited Key Studies

Protocol 1: Benchmarking Surrogate Model vs. Full-Order Solver for Bone Remodeling Objective: Quantify the computational cost savings and accuracy trade-off of a Physics-Informed Neural Network (PINN) surrogate against a traditional FE solver for a trabecular bone adaptation cycle.

- Dataset Generation: Use the FE solver (e.g., FEBio) to simulate 500 bone remodeling cycles under varied loading conditions. Record input parameters (load magnitude, direction, initial density field) and output fields (resultant density, strain energy density).

- Surrogate Model Training: Construct a PINN with 8 hidden layers of 256 neurons each. Loss = 0.8 × MSE(Data) + 0.2 × MSE(PDE Residual). Train for 100,000 epochs using the Adam optimizer.

- Benchmarking: On a held-out test set of 50 parameter sets, compare:

- Wall-clock time for a full simulation.

- Relative Error in final density field (L2-norm).

- Peak Memory Usage during execution.
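The accuracy and timing metrics in the benchmarking step could be computed along the following lines. This is a minimal sketch: the fields are assumed to be flattened into plain lists, and the helper names are illustrative rather than part of any cited tool.

```python
import math
import time

def relative_l2_error(predicted, reference):
    """Relative L2-norm error ||predicted - reference|| / ||reference||."""
    num = math.sqrt(sum((p - r) ** 2 for p, r in zip(predicted, reference)))
    den = math.sqrt(sum(r * r for r in reference))
    return num / den

def timed(func, *args):
    """Run func(*args) and return (result, wall-clock seconds)."""
    start = time.perf_counter()
    result = func(*args)
    return result, time.perf_counter() - start

def speedup(t_full_order_s, t_surrogate_s):
    """Wall-clock speed-up of the surrogate over the full-order solver."""
    return t_full_order_s / t_surrogate_s

# Example with the timings reported in Table 1 (4.2 hours vs. 18 seconds).
table_1_speedup = speedup(4.2 * 3600, 18.0)
```

Reporting the error distribution over all 50 held-out parameter sets, rather than only the mean, helps reveal regions of parameter space where the surrogate is unreliable.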

Protocol 2: Calibrating a Cardiovascular Digital Twin with Patient-Specific Data Objective: Update a multi-scale cardiovascular digital twin using clinical catheterization data to personalize hemodynamic predictions.

- Data Assimilation: Acquire patient-specific pressure waveforms from catheterization and aortic geometry from imaging (CT/MRI).

- Parameter Inference: Frame the calibration as an inverse problem. Use a Bayesian optimization (e.g., Gaussian Process) approach to iteratively adjust the digital twin's Windkessel model parameters and boundary conditions.

- Validation Loop: Run the updated digital twin forward to predict ventricular pressure-volume loops. Compare these predictions against clinical echocardiography data not used in calibration.
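The forward model that the optimizer evaluates repeatedly can be very cheap. The sketch below assumes the simplest two-element Windkessel form, C dP/dt = Q(t) - P/R, integrated with forward Euler; the protocol does not specify which Windkessel variant is used, and the parameter values and function names here are hypothetical.

```python
def simulate_windkessel(q_in, resistance, compliance, dt, p0=0.0):
    """Forward-Euler integration of the 2-element Windkessel model.

    q_in: inflow samples (mL/s); resistance in mmHg*s/mL;
    compliance in mL/mmHg; returns the pressure trace in mmHg.
    """
    pressures = [p0]
    for q in q_in:
        p = pressures[-1]
        dp_dt = (q - p / resistance) / compliance
        pressures.append(p + dt * dp_dt)
    return pressures

def calibration_objective(params, q_in, measured, dt):
    """Sum of squared pressure mismatches: the quantity a Bayesian
    optimizer would minimize over (resistance, compliance)."""
    resistance, compliance = params
    simulated = simulate_windkessel(q_in, resistance, compliance, dt)
    return sum((s - m) ** 2 for s, m in zip(simulated, measured))

# Constant inflow: pressure should settle at Q * R.
trace = simulate_windkessel([80.0] * 20000, resistance=1.0,
                            compliance=1.5, dt=0.001)
```

With a constant inflow the pressure relaxes to Q × R with time constant R × C, which gives a quick sanity check before the model is wrapped in an optimizer.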

Table 1: Computational Cost Comparison: Traditional vs. AI-Augmented Simulation

| Metric | High-Fidelity FE Solver | Surrogate ML Model (Inference) | Hybrid Approach (AI+DT) |

|---|---|---|---|

| Avg. Simulation Time | 4.2 hours | 18 seconds | 25 minutes |

| Hardware Requirement | HPC Cluster (CPU) | Single GPU (e.g., V100) | Workstation + Cloud GPU |

| Energy Consumption per Run | ~2.1 kWh | ~0.05 kWh | ~0.3 kWh |

| Relative Error (vs. Ground Truth) | N/A (Baseline) | 3.7% | 1.2% |

| Cost per Simulation (Compute) | $42.00 | $0.85 | $5.50 |

Note: Costs estimated based on AWS EC2/P3 instances (us-east-1). Hybrid approach uses AI for parameter pre-screening and DT for final high-fidelity validation.

Table 2: Common Failure Modes in AI/ML-DT Integration

| Failure Mode | Typical Symptoms | Root Cause Likelihood | Suggested Diagnostic Tool |

|---|---|---|---|

| Concept Drift in DT | DT predictions diverge from physical system over time. | New patient/data regime not seen in training (85%). | Monitor prediction entropy; retrain on new data batch. |

| Coupled Simulation Instability | Oscillations or crash at multiscale interface. | Incorrect scale-separation assumptions (70%). | Perform time-scale analysis of interacting subsystems. |

| High Inference Latency | Digital twin response time > operational requirement. | Unoptimized model graph or quantization failure (60%). | Profile with TensorBoard or PyTorch Profiler. |

Visualizations

Title: AI/ML and Digital Twin Integration Architecture

Title: AI-DT Workflow for Cost-Optimized Research

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital Research Tools for AI/ML-Enhanced Biomechanics

| Tool/Reagent | Category | Primary Function | Example/Provider |

|---|---|---|---|

| Differentiable Physics Solver | Core Software | Enables gradient-based optimization and seamless integration with ML frameworks. | NVIDIA Modulus, FEniCS, JAX-FEM |

| Multiscale Coupling Library | Integration Middleware | Manages data exchange and synchronization between different spatial/temporal simulation scales. | preCICE, MUSCLE3 |

| Surrogate Model Framework | AI/ML Library | Provides architectures (PINNs, GNNs) tailored for learning from physical simulation data. | PyTorch Geometric, DeepXDE, NVIDIA SimNet |

| Model Serving Engine | Deployment Tool | Optimizes and deploys trained models for low-latency inference within digital twin pipelines. | NVIDIA Triton, TensorFlow Serving |

| Biomechanics-Specific Dataset | Data Resource | Benchmarks and pre-computed simulation data for training and validation. | Living Heart Project, SPARC Datasets |

| Automated Hyperparameter Optimization | ML Ops Tool | Systematically searches for optimal model training parameters to maximize accuracy. | Optuna, Weights & Biases Sweeps |

Strategic Approaches: Methodologies and Tools for Cost-Effective Multiscale Simulation

Troubleshooting Guides & FAQs

FAQ 1: My concurrent (handshaking) coupling simulation is unstable at the interface. What are the primary causes and fixes?

Answer: Instability in concurrent coupling zones (e.g., in Atomistic-to-Continuum methods) is often due to spurious wave reflections or force mismatches.

- Cause 1: Reflection of high-frequency atomic waves at the continuum boundary.

- Fix: Implement a calibrated non-reflective boundary condition (NRBC) or a perfectly matched layer (PML) in the continuum region to dissipate fine-scale energy.

- Cause 2: Incompatible strain or energy density between the two domains.

- Fix: Refine the "handshake" region width and ensure the constitutive model in the continuum region is rigorously derived from the atomistic potential via consistent coarse-graining or the Cauchy-Born rule.

- Protocol for Diagnosing: Run a simplified 1D wave propagation test. Initialize a wave in the atomistic domain and measure the reflection coefficient at the interface. Iteratively adjust the damping constants in the overlapping zone until reflections are minimized (<5%).

FAQ 2: How do I choose between hierarchical (sequential) and concurrent coupling to optimize computational cost for a large tissue model?

Answer: The choice is dictated by the separability of scales and the need for feedback.

- Use Hierarchical (Sequential) methods when information flows one-way (e.g., from fine-scale protein mechanics to a coarse-grained tissue property). It is computationally cheaper and simpler to implement. Ideal for parameterization.

- Use Concurrent methods when there is strong two-way feedback across scales in a localized region (e.g., crack propagation in bone, where tissue failure alters protein unfolding). It is more accurate for localized phenomena but far more expensive.

- Cost-Optimization Protocol:

- Identify the critical "region of interest" (ROI) requiring high fidelity.

- Use concurrent coupling only within this evolving ROI.

- For the bulk of the domain, use a hierarchical pass of pre-computed coarse-grained properties.

- Perform a cost-benefit analysis using the table below.

FAQ 3: In hierarchical coupling, my upscaled parameters fail to predict correct macroscale behavior. How can I validate the upscaling procedure?

Answer: This indicates a loss of critical fine-scale information during homogenization.

- Cause: The Representative Volume Element (RVE) is too small or does not capture essential microstructural heterogeneity.

- Validation Protocol (Numerical Experiment):

- Full Fine-Scale Simulation: Perform a direct numerical simulation (DNS) on a small but statistically representative macro-sample. Record the stress-strain response (Ground Truth).

- RVE Testing: Extract an RVE, apply periodic boundary conditions, and compute the homogenized constitutive law.

- Upscaled Simulation: Run a macroscale simulation using the homogenized law from step 2.

- Comparison: Compare the macroscale results from step 3 with the DNS results from step 1 for the same sample. The error should be quantified (see Data Table).

- Iterate: Increase RVE size and complexity until the error converges below an acceptable threshold (e.g., 5% for strain energy).

Data Presentation

Table 1: Computational Cost & Accuracy Comparison of Coupling Strategies

| Coupling Strategy | Typical Speedup Factor (vs. Full Fine-Scale) | Key Accuracy Limitation | Best For | Thesis Cost Optimization Context |

|---|---|---|---|---|

| Pure Hierarchical (Sequential) | 100 - 10,000x | Loss of transient/local fine-scale data. Assumes scale separation. | Material property prediction, screening studies. | Pre-compute look-up tables for bulk tissue properties to reduce runtime by >95%. |

| Embedded Domain (Concurrent) | 10 - 100x | Spurious interface reflections; ghost forces. | Localized failure, crack propagation, active site analysis. | Restrict expensive fine-scale domain to <5% of total volume; use adaptive meshing. |

| Bridging Scale (Concurrent) | 50 - 500x | Complexity in projecting displacements/forces. | Dynamic wave propagation, impact mechanics. | Use coarse-scale solution everywhere, inject fine-scale details only where necessary. |

Table 2: Validation Results for a Tendon Fiber Upscaling Protocol

| RVE Size (Collagen Fibrils) | Homogenized Young's Modulus (GPa) | Error vs. DNS Ground Truth | Upscaled Macroscale Simulation Runtime | DNS Runtime (Equivalent Volume) |

|---|---|---|---|---|

| 5 x 5 | 1.2 ± 0.3 | 22% | 15 min | 2.4 days |

| 10 x 10 | 1.45 ± 0.15 | 9% | 42 min | 9.5 days |

| 20 x 20 | 1.55 ± 0.08 | 3% | 2.1 hrs | 38 days |
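The trade-off in Table 2 can be examined with a few lines of Python. The sketch below copies the table's values, converts all runtimes to minutes, and applies the 5% error threshold suggested in the validation protocol; treating that threshold as applicable to the modulus error is an assumption of this example.

```python
# (RVE size, error vs. DNS in %, upscaled runtime in min, DNS runtime in min)
TABLE_2 = [
    ("5 x 5", 22.0, 15.0, 2.4 * 24 * 60),
    ("10 x 10", 9.0, 42.0, 9.5 * 24 * 60),
    ("20 x 20", 3.0, 2.1 * 60, 38 * 24 * 60),
]

def smallest_acceptable_rve(rows, max_error_pct):
    """Return the first (smallest) RVE whose error is within tolerance."""
    for size, error, _, _ in rows:  # rows are ordered small to large
        if error <= max_error_pct:
            return size
    return None

def speedups(rows):
    """Speed-up of the upscaled simulation over DNS for each RVE size."""
    return {size: dns / upscaled for size, _, upscaled, dns in rows}

chosen = smallest_acceptable_rve(TABLE_2, max_error_pct=5.0)
factors = speedups(TABLE_2)
```

For these values the speed-up over DNS grows with RVE size (roughly 230x, 326x, and 434x), so the 20 x 20 RVE meets the error threshold without giving up the cost advantage.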

Experimental Protocols

Protocol 1: Adaptive Concurrent Coupling for a Crack-Tip Propagation Simulation Objective: Model dynamic crack growth in a bone-like composite using concurrent MD-FEA.

- Initialization: Define the macroscale Finite Element (FE) mesh of the specimen. Identify the initial crack-tip location.

- Domain Decomposition: Around the crack-tip, define a high-resolution Molecular Dynamics (MD) region (radius = 50 nm). This is the "critical domain." The surrounding bulk is FE.

- Coupling: Use the Bridging Scale Method. The MD domain informs the FE constitutive response at the crack-tip. FE provides displacement boundary conditions to the MD region.

- Adaptive Refinement: Monitor strain gradient in FE elements adjacent to the crack-tip. If it exceeds a threshold (e.g., 0.1 per nm), migrate the MD domain to follow the crack-tip, converting newly included atoms from FE to MD representation.

- Execution & Monitoring: Run the coupled simulation. Log energy exchange at the interface to ensure balance. Monitor crack propagation speed and branching patterns.

Protocol 2: Hierarchical Parameterization of a Lipid Membrane for Tissue Modeling Objective: Derive coarse-grained (CG) viscoelastic parameters for a phospholipid bilayer from all-atom MD simulations.

- Fine-Scale Simulation: Run all-atom MD of a patch of lipid membrane (e.g., POPC) in explicit solvent for 200+ ns. Replicate in triplicate.

- Property Extraction:

- Area Compressibility Modulus (Ka): From fluctuations of the lateral area of the membrane patch.

- Bending Rigidity (κ): From analysis of undulatory spectra using a Fourier analysis of membrane height fluctuations.

- Shear Viscosity: From the stress autocorrelation function.

- Upscaling: Input the measured parameters (Ka, κ) into a continuum-level material model (e.g., a Helfrich-type elastic shell model or a 2D viscoelastic continuum).

- Verification: Use the new continuum model to predict the deformation of a large vesicle under osmotic shock. Compare results with an extremely costly full all-atom simulation of the same event (if tractable) or against established experimental data.
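For the area compressibility modulus, the standard fluctuation relation is K_A = k_B T ⟨A⟩ / Var(A). A minimal sketch of that calculation follows; the area samples are hypothetical and would in practice come from the lateral box dimensions of the MD trajectory after discarding equilibration.

```python
import statistics

K_B = 1.380649e-23  # Boltzmann constant, J/K

def area_compressibility_modulus(areas_nm2, temperature_k):
    """K_A = k_B * T * <A> / Var(A), returned in N/m.

    areas_nm2: lateral patch area per frame, in nm^2.
    """
    mean_area = statistics.fmean(areas_nm2)
    var_area = statistics.variance(areas_nm2)  # sample variance, nm^4
    ka_j_per_nm2 = K_B * temperature_k * mean_area / var_area
    return ka_j_per_nm2 * 1e18  # 1 J/nm^2 = 1e18 J/m^2 = 1e18 N/m

# Hypothetical area samples for illustration only.
areas = [64.0, 65.0, 63.0, 64.5, 63.5]
ka = area_compressibility_modulus(areas, temperature_k=300.0)
```

Because the estimate depends on a variance, it converges slowly; comparing the value across the three replicate runs gives a practical error bar.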

Mandatory Visualizations

Hierarchical Multiscale Workflow for Tissue Modeling

Concurrent Coupling with Handshake Region

The Scientist's Toolkit: Research Reagent Solutions

| Item / Software | Function in Multiscale Biomechanics |

|---|---|

| LAMMPS | Open-source MD simulator for fine-scale (atomistic, CG) dynamics. Used to generate material properties and model localized phenomena. |

| FEAP / FEniCS / Abaqus | Finite Element Analysis packages for solving continuum-scale biomechanical boundary value problems. |

| MEDCoupling / preCICE | Libraries specifically designed for code coupling and data exchange between heterogeneous solvers (e.g., MD-FEA). |

| Python (NumPy/SciPy) | Essential for scripting workflows, data analysis, homogenization calculations, and automating hierarchical parameter passing. |

| ParaView / OVITO | Visualization tools for both continuum FE results and atomistic/CG simulation data, crucial for debugging coupled interfaces. |

| Consistent Coarse-Graining Tools (e.g., VOTCA, ICCG) | Software to systematically derive CG force fields from atomistic data, ensuring thermodynamic consistency for hierarchical bridging. |

| HPC Job Scheduler (Slurm, PBS) | Manages concurrent execution of multiple coupled software components across high-performance computing clusters. |

Leveraging Reduced-Order Modeling (ROM) and Surrogate Models for Speed

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions (FAQs)

Q1: My ROM for cardiac tissue electrophysiology loses accuracy after a few simulated beats. What could be the cause? A: This is often a mode interference or basis degeneration issue. In multiscale biomechanical models, the system's dynamics can drift from the subspace captured during the initial Proper Orthogonal Decomposition (POD) snapshot collection. Ensure your training data (snapshots) encompass the full range of dynamics (e.g., multiple heart rates, varying contractility states). Implementing a greedy sampling or adaptive basis update protocol can mitigate this.

Q2: When building a surrogate model for drug effect on ion channel kinetics, how do I choose between Gaussian Process Regression (GPR) and Artificial Neural Networks (ANNs)? A: The choice depends on data size and stochasticity. Use the decision table below:

| Criterion | Gaussian Process (GP) | Artificial Neural Network (ANN) |

|---|---|---|

| Training Data Size | Small to medium (< 10³ samples) | Large (> 10³ samples) |

| Inherent Uncertainty | Provides intrinsic variance (confidence intervals) | Requires modifications (e.g., Bayesian nets) |

| Computational Cost | High for large training sets (O(n³)) | Scalable prediction cost after training |

| Primary Use in ROM | Ideal for probabilistic sensitivity analysis in drug screening | Best for high-dimensional, deterministic parameter mapping |

Q3: The computational speed-up of my Hyper-Reduced Order Model (HROM) is less than expected. Where should I look? A: The bottleneck is likely in the gappy POD reconstruction or the selection of empirical interpolation points. Profile your code to check the time spent on the online data reconstruction step. Optimize by clustering interpolation points or using a collocation method tailored to your biomechanical domain's stress-strain hotspots.

Q4: How can I validate the predictive capability of my surrogate model for a novel drug compound not in the training set? A: Employ a leave-one-cluster-out cross-validation strategy. Cluster your training compounds by physicochemical properties or target profiles, then iteratively leave one cluster out for testing. This tests extrapolation capability. Key metrics should include Mean Squared Error (MSE) and Standardized Mean Error (SME).

Troubleshooting Guides

Issue: Non-Physical Oscillations in ROM Fluid-Structure Interaction (FSI) for Aortic Valve Simulation

Symptoms: Unphysical pressure spikes or valve leaflet fluttering appear in the ROM solution, not present in the high-fidelity model.

Diagnostic Steps:

- Check Basis Sufficiency: Calculate the relative projection error: `ε_proj = ||u_FOM - ΦΦ^T u_FOM|| / ||u_FOM||`. If ε_proj > 1%, your POD basis is insufficient. Collect additional snapshots during the valve's rapid closure phase.

- Examine Hyper-Reduction Residual: Ensure the gappy POD mesh samples points in the critical coaptation region. A common error is sampling only from the leaflet bodies, missing the contact edges.

- Verify Time Integrator Stability: ROMs often require stricter time-step criteria. Reduce the time step by 50% and see if oscillations dampen.
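The basis-sufficiency check can be scripted directly from its definition. The sketch below assumes the POD basis vectors are orthonormal and stored as plain Python lists (one list per mode); with a real snapshot matrix the same computation would normally be done with a linear algebra library.

```python
import math

def projection_error(u, basis):
    """Relative projection error ||u - Phi Phi^T u|| / ||u||.

    u: full-order state vector; basis: list of orthonormal mode vectors.
    """
    # Reduced coordinates: Phi^T u
    coeffs = [sum(p * x for p, x in zip(phi, u)) for phi in basis]
    # Reconstruction: Phi (Phi^T u)
    recon = [sum(c * phi[i] for c, phi in zip(coeffs, basis))
             for i in range(len(u))]
    residual = math.sqrt(sum((x - r) ** 2 for x, r in zip(u, recon)))
    return residual / math.sqrt(sum(x * x for x in u))

# Two modes spanning the x-y plane cannot represent the z-component.
basis = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
err_in_span = projection_error([3.0, 4.0, 0.0], basis)
err_out_of_span = projection_error([3.0, 4.0, 12.0], basis)
```

Evaluating this error on snapshots from the closure phase separately from the rest of the cycle shows whether the basis is failing globally or only during the fast transient.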

Resolution Protocol:

- Enrich the Basis: Perform a new high-fidelity simulation with a perturbed parameter (e.g., +5% inflow velocity). Extract snapshots and append to the original snapshot matrix before performing a new POD.

- Optimize Sample Points: Use the Empirical Interpolation Method (EIM) to algorithmically select new sample points that minimize the residual in the force term.

- Regularize: Apply Tikhonov regularization to the least-squares problem in the gappy POD reconstruction step.

Workflow for Building a Validated Cardiac ROM

Diagram Title: Workflow for Developing a Validated Cardiac ROM

Experimental Protocols

Protocol 1: Generating a ROM for Tendon Micromechanics

Objective: Create a hyper-reduced ROM to predict stress-strain response under varying proteoglycan content.

Materials: See "Research Reagent Solutions" below. Method:

- FOM Generation: Using Abaqus FEA, run 50 simulations varying proteoglycan content (0.5-5.0 wt%) and strain rate (0.1-10 %/s).

- Snapshot Assembly: For each simulation, extract the von Mises stress field for all elements at 100 time steps. Assemble into snapshot matrix S (size: [n_elements * n_time, n_simulations]).

- Basis Computation: Perform SVD on S: `[Φ, Σ, Ψ] = svd(S, 'econ')`. Retain the first k modes capturing 99.9% of the energy, where energy is measured by the squared singular values (`k = find(cumsum(diag(Σ).^2)/sum(diag(Σ).^2) >= 0.999, 1)`). Basis: `Φ_r = Φ(:,1:k)`.

- Hyper-Reduction: Apply the Discrete Empirical Interpolation Method (DEIM) to the internal force vector. Select 1500 empirical nodes.

- Online Phase: Solve the reduced system (`Φ_r^T * F(Φ_r * q)`) for new parameters. Reconstruct full-field stress: `σ ≈ Φ_r * q`.

Validation: Compare ROM-predicted stress at 3% strain against a new, high-fidelity FOM run (not in training). Acceptable error: <2% RMS.
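The mode-truncation rule in the basis computation step can be sketched without any linear algebra library once the singular values are available. This example assumes the common convention that POD "energy" is the sum of squared singular values; the function name and sample values are illustrative.

```python
def modes_for_energy(singular_values, energy_fraction=0.999):
    """Smallest k whose leading modes capture the requested energy share.

    Energy is taken as the sum of squared singular values (assumed
    convention); singular_values must be sorted in descending order.
    """
    energies = [s * s for s in singular_values]
    total = sum(energies)
    cumulative = 0.0
    for k, energy in enumerate(energies, start=1):
        cumulative += energy
        if cumulative / total >= energy_fraction:
            return k
    return len(energies)

# Hypothetical, rapidly decaying spectrum.
k = modes_for_energy([10.0, 1.0, 0.1], energy_fraction=0.999)
```

A slowly decaying spectrum (k growing into the hundreds) is an early warning that a linear POD basis may not compress the problem well, independent of any hyper-reduction choices.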

Protocol 2: Building a GP Surrogate for Drug Dose-Response

Objective: Replace a high-cost pharmacokinetic-pharmacodynamic (PK-PD) model with a fast GP surrogate for IC50 prediction.

Method:

- Training Data Generation: Run the full PK-PD model for 200 input parameter sets (e.g., drug association rate `k_on`, membrane permeability `P_m`). Record output `IC50`.

- GP Training: Use a Matérn 5/2 kernel. Optimize hyperparameters (length scales, noise variance) by maximizing the log marginal likelihood using the L-BFGS-B algorithm.

- Surrogate Evaluation: For 50 new parameter sets, predict mean `IC50_GP` and variance `σ²_GP`. Compute the coefficient of variation of the standard error (CV-SE): `mean(σ_GP / IC50_GP)`. Target CV-SE < 0.15.
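The CV-SE metric in the evaluation step is a one-line calculation once the GP predictions are available. A minimal sketch, assuming the predictive means and variances have been exported as plain lists (the sample numbers are hypothetical):

```python
import math

def cv_se(pred_means, pred_variances):
    """Mean of sigma_GP / IC50_GP over the evaluation set."""
    ratios = [math.sqrt(var) / mean
              for mean, var in zip(pred_means, pred_variances)]
    return sum(ratios) / len(ratios)

def meets_target(pred_means, pred_variances, target=0.15):
    """True if the surrogate's relative uncertainty is within the target."""
    return cv_se(pred_means, pred_variances) < target

# Hypothetical predictions (nM) and predictive variances (nM^2).
score = cv_se([10.0, 20.0], [1.0, 4.0])
```

Because the metric divides by the predicted mean, it becomes unstable for compounds with IC50 predictions near zero; those cases are better inspected individually.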

Key Performance Data Table:

| Method | Avg. Runtime per Simulation | Relative Speed-Up | Mean Absolute Error (nM) |

|---|---|---|---|

| High-Fidelity PK-PD | 45 minutes | 1x (Baseline) | - |

| Trained GP Surrogate | 0.5 seconds | ~5400x | 0.42 |

| POD-Galerkin ROM | 8 seconds | ~337x | 1.85 |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in ROM/Surrogate Context |

|---|---|

| Abaqus FEA with UMAT | Industry-standard FEA software for generating high-fidelity biomechanical snapshot data. |

| libROM or EZyRB Python Library | Open-source libraries for performing SVD/POD, Galerkin projection, and hyper-reduction. |

| GPy or GPflow (Python) | Libraries for constructing and training robust Gaussian Process surrogate models. |

| LHS Design Script (PyDOE) | Generates efficient, space-filling parameter samples for training data collection. |

| HDF5 Data Format | Manages large, hierarchical snapshot datasets for efficient I/O during basis construction. |

| Docker Container with FEniCS | Ensures reproducible FOM execution environments across different research clusters. |

Technical Support & Troubleshooting Center

Frequently Asked Questions (FAQs)

Q1: In a multiscale biomechanical model, my Finite Element Analysis (FEA) simulation of bone remodelling is failing to converge when I integrate agent-based tissue cellular activity. What are the primary causes? A: This is typically caused by a time-step mismatch or a stiffness matrix singularity. The agent-based model (ABM) likely operates on a different temporal scale (hours/days) than the FEA solver (milliseconds/seconds). This discrepancy can cause instability. Ensure you are using a stable, staggered coupling scheme where the ABM provides updated material properties to the FEA mesh at defined, synchronized intervals, not every solver iteration. Check for extreme material property values being passed from the ABM, which can create ill-conditioned FEA matrices.

Q2: When coupling Computational Fluid Dynamics (CFD) with an agent-based model of platelet adhesion in a vascular simulation, the computation becomes prohibitively expensive. How can I optimize this? A: The cost stems from resolving near-wall fluid dynamics for every agent interaction. Implement a multi-fidelity approach: Use a detailed CFD solution to train a surrogate model (e.g., a neural network or a simplified analytical flow map) that provides accurate shear stress and pressure fields to the ABM at a fraction of the cost. Alternatively, use adaptive mesh refinement (AMR) in the CFD domain to concentrate resolution only in regions where agents are active.

Q3: My agent-based tumor growth model, which receives mechanical cues from an FEA-calculated tissue strain field, shows unrealistic, grid-aligned migration patterns. What is wrong? A: This is a classic "lattice artifact." Your ABM is likely using the FEA mesh nodes or a regular grid for agent location and movement. Implement an off-lattice, continuous space approach for the agents. The FEA field (strain, stress) should be interpolated to the precise, continuous coordinates of each agent using the shape functions of the underlying FEA elements, allowing for natural, directionally unbiased migration.
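As an illustration of shape-function interpolation, the sketch below evaluates a nodal field at an arbitrary agent position inside a linear triangular element using barycentric coordinates. It is a 2D example under the assumption of linear elements; higher-order or 3D elements need their own shape functions, and locating the element that contains each agent is a separate step not shown here.

```python
def interpolate_in_triangle(point, vertices, nodal_values):
    """Interpolate a nodal field at `point` inside a linear triangle.

    The barycentric coordinates (n1, n2, n3) are the linear shape
    functions evaluated at the point.
    """
    x, y = point
    (x1, y1), (x2, y2), (x3, y3) = vertices
    det = (y2 - y3) * (x1 - x3) + (x3 - x2) * (y1 - y3)
    n1 = ((y2 - y3) * (x - x3) + (x3 - x2) * (y - y3)) / det
    n2 = ((y3 - y1) * (x - x3) + (x1 - x3) * (y - y3)) / det
    n3 = 1.0 - n1 - n2
    v1, v2, v3 = nodal_values
    return n1 * v1 + n2 * v2 + n3 * v3

# Hypothetical element and nodal strain values.
triangle = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
strain_at_agent = interpolate_in_triangle((0.25, 0.25), triangle,
                                          [1.0, 2.0, 3.0])
```

Because the interpolated value varies continuously with the agent's position, agents no longer see identical cues at mesh nodes, which removes the directional bias.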

Q4: I am experiencing memory overflow errors when exporting high-resolution, time-series CFD velocity data to my agent-based cell migration platform. What are my options? A: Avoid exporting raw field data at every time step. Implement in-situ coupling where the ABM queries the CFD solver for data only at agent locations. If offline coupling is necessary, use data compression techniques. Export data only on a coarsened spatial mesh for the ABM domain, or use efficient binary formats (e.g., HDF5) with chunking and compression enabled. The table below summarizes optimal data exchange strategies.

Table 1: Optimization Strategies for Coupled Simulation Cost

| Bottleneck | Primary Cause | Recommended Solution | Expected Cost Reduction |

|---|---|---|---|

| Time-to-Solution | Fully coupled, monolithic solving | Staggered/Weak coupling with fixed-point iteration | 40-60% |

| Memory Usage | High-resolution data exchange | Surrogate models & in-situ processing | 50-70% |

| Solver Instability | Disparate time scales | Temporal homogenization; sub-cycling | 30-50% |

| I/O Overhead | Writing all field data to disk | Selective export; efficient binary formats | 60-80% |

Experimental Protocol: Staggered Coupling for Bone Mechanobiology

Objective: To simulate bone adaptation by coupling an FEA model of mechanical loading with an ABM of osteoblast/osteoclast activity, while optimizing computational cost.

Methodology:

- Initialization: A 3D FE mesh of a bone segment is created. An ABM population of osteocyte cells is mapped onto the FE mesh nodes.

- FEA Step: A static mechanical load (e.g., 2 MPa compressive stress) is applied. The FEA solver (e.g., Abaqus, FEBio) calculates the strain energy density (SED) field.

- Field Mapping: The SED at each node is interpolated to the precise location of each osteocyte agent in the ABM.

- ABM Step: The ABM (e.g., using PhysiCell) advances in time for a biological period (e.g., 12 simulated hours). Osteocyte agents convert local SED into biochemical signals (RANKL/OPG).

- Bone Remodelling: These signals drive the differentiation and activity of osteoclast (resorption) and osteoblast (formation) agents, which modify local bone density.

- Property Update: The change in bone density is converted into an updated Young's Modulus for each FE element using a density-modulus relationship (e.g., E ∝ ρ²).

- Synchronization: The updated material properties are passed back to the FEA model. The loop (steps 2-6) repeats for the next loading cycle.

- Control: A fully coupled, concurrent simulation (if feasible) is run for a short period to validate the results of the staggered protocol.
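The control flow of the staggered loop can be sketched with stub solvers standing in for the FEA and ABM codes. Everything numerical below is an assumption for illustration: the uniaxial strain energy density formula, the linear remodelling rule with a setpoint, and all parameter values. Only the loop structure and the E ∝ ρ² update follow the protocol.

```python
def run_fea(stress_mpa, moduli_mpa):
    """Stub FEA step: uniaxial SED = sigma^2 / (2E) for each element."""
    return [stress_mpa ** 2 / (2.0 * e) for e in moduli_mpa]

def run_abm(densities, sed, sed_setpoint, rate):
    """Stub ABM step (hypothetical rule): density rises where SED exceeds
    the setpoint and falls where it is below."""
    return [max(0.01, rho + rate * (u - sed_setpoint))
            for rho, u in zip(densities, sed)]

def update_modulus(densities, e_ref, rho_ref):
    """Density-modulus power law: E = E_ref * (rho / rho_ref)^2."""
    return [e_ref * (rho / rho_ref) ** 2 for rho in densities]

def staggered_loop(densities, n_cycles, stress_mpa=2.0, e_ref=1000.0,
                   rho_ref=1.0, sed_setpoint=0.002, rate=10.0):
    """Alternate FEA and ABM steps, exchanging data once per cycle."""
    moduli = update_modulus(densities, e_ref, rho_ref)
    for _ in range(n_cycles):
        sed = run_fea(stress_mpa, moduli)                         # FEA step
        densities = run_abm(densities, sed, sed_setpoint, rate)   # ABM step
        moduli = update_modulus(densities, e_ref, rho_ref)        # property update
    return densities

final = staggered_loop([0.8, 1.0, 1.2], n_cycles=200)
```

In this toy setting all elements relax toward the density at which the SED equals the setpoint. The cost saving in a real model comes from the same structure: the expensive FEA solve runs once per biological period instead of once per ABM time step.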

Diagram 1: Staggered Multiscale Simulation Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Multiscale Biomechanical Modeling

| Tool/Reagent | Category | Primary Function in Optimization |

|---|---|---|

| FEBio Studio | FEA Platform | Open-source solver for biomechanics; enables custom plugins for ABM coupling. |

| PhysiCell | ABM Platform | Open-source framework for 3-D multicellular systems; built for external signal integration. |

| preCICE | Coupling Library | Enables partitioned multi-physics coupling (e.g., FEA-CFD, FEA-ABM) with efficient communication. |

| HDF5 Library | Data I/O | Manages large-scale data exchange between solvers with compression, reducing I/O overhead. |

| PyTorch/TensorFlow | Machine Learning | For building surrogate models (digital twins) of expensive CFD/FEA solvers. |

| Dakota | Uncertainty Quantification | Manages design-of-experiments and sensitivity analysis to identify critical model parameters. |

| Docker/Singularity | Containerization | Ensures reproducibility of complex software stacks across HPC environments. |

Diagram 2: Bone Mechanobiological Signaling Pathway

Technical Support Center

Framing Context: This support center addresses common issues encountered while using HPC and Cloud-Native architectures to optimize computational costs for multiscale biomechanical models in drug development research.

FAQs & Troubleshooting Guides

Q1: My cloud-native multiscale simulation (e.g., ligand-protein binding followed by cellular response) fails with a "Container Orchestration Timeout" error after scaling beyond 50 pods. What is the cause?

A: This typically indicates a bottleneck in the control plane of your Kubernetes cluster or a networking CNI (Container Network Interface) issue. When orchestrating many parallel biomechanical simulations, the etcd database or kube-scheduler can become overloaded.

- Troubleshooting Steps:

- Check control plane component health: `kubectl get componentstatuses` (deprecated since Kubernetes v1.19; on newer clusters, `kubectl get --raw='/readyz?verbose'` gives a comparable health summary).

- Monitor etcd performance: Look for high write latency (search the etcd metrics output for `wal_fsync_duration_seconds`).

- Consider partitioning workloads into multiple namespaces or using a dedicated node pool for the scheduler.

- Protocol: Optimize Pod Scheduling for MPI Jobs.

- Define pod affinity/anti-affinity rules in the pod spec so that `kube-scheduler` co-locates tightly coupled MPI processes.

- Implement a `Vertical Pod Autoscaler` (VPA) to right-size CPU/memory requests before horizontal scaling.

- Use a `Custom Resource Definition` (CRD) like `MPIJob` (from Kubeflow) for native handling of MPI-based biomechanics workloads.

Q2: When running finite element analysis (FEA) for bone mechanics on a burst of cloud VMs, I observe severe performance inconsistency (high jitter). How can I mitigate this?

A: "Noisy neighbor" problems in multi-tenant cloud environments and varying VM generations cause this. HPC workloads require consistent, low-latency networking and CPU performance.

- Troubleshooting Steps:

- Use cloud provider-specific HPC instances (e.g., AWS Hpc6a, Azure HBv3, Google Cloud C2) which are optimized for consistent performance.

- Enable SR-IOV (Single Root I/O Virtualization) for network interfaces to bypass the hypervisor for MPI traffic.

- Pin processes to specific CPU cores using `numactl` or `taskset` within your container.

- Protocol: Configuring for Low-Latency Inter-Node Communication.

- Deploy a `DaemonSet` to install and configure the latest HPC-focused OFED (OpenFabrics Enterprise Distribution) drivers on all worker nodes.

- Configure your MPI library (OpenMPI, Intel MPI) to use the high-performance fabric (e.g., UCX over EFA or InfiniBand).

- Validate performance consistency using the OSU Micro-Benchmarks (`osu_latency`, `osu_bw`) across your node pool.

Q3: My data pipeline for processing 10,000s of molecular dynamics (MD) trajectory files from cloud object storage (e.g., S3, GCS) into my analysis cluster is slower than expected. What architectural patterns can help?

A: The classic bottleneck is treating remote object storage like a parallel filesystem. It is optimized for throughput, not low-latency metadata operations.

- Troubleshooting Steps:

- Use `s3fs` or `gcsfuse` cautiously; they are not suitable for high metadata workloads.

- Implement a data staging pattern: use a dedicated tool (e.g., `KubeFlux` or a batch job) to pre-fetch required datasets to a local, high-performance parallel filesystem (like Lustre) or a node-local SSD cache before the main computation starts.

- Protocol: Implementing a Data Staging Workflow for Cloud MD Analysis.

- Define a `PersistentVolumeClaim` (PVC) for a high-performance, read-write-many filesystem (e.g., Google Filestore, AWS FSx for Lustre).

- Create an init container in your analysis pod spec. The init container's sole job is to use `rclone` or the cloud CLI to copy specific data from object storage to the mounted PVC.

- The main analysis container then runs, performing all I/O against the high-speed PVC.

- A final post-process container can archive results back to object storage.

Q4: How do I manage and automate hybrid deployments where my sensitive patient-derived biomechanical data resides on-premises, but I need to burst to the cloud for peak HPC capacity?

A: This requires a secure, hybrid cloud architecture focusing on identity management, network security, and data governance.

- Troubleshooting Steps:

- Ensure bidirectional network connectivity (cloud VPN or Direct Interconnect).

- Synchronize identity providers (e.g., On-prem AD with Azure AD or GCP IAM).

- Encrypt data in transit and at rest. Use cloud KMS with customer-managed keys.

- Protocol: Setting Up a Secure Hybrid HPC Burst.

- Identity: Establish cross-realm trust or use a central OIDC provider.

- Networking: Deploy a cloud VPN tunnel or use Azure ExpressRoute / AWS Direct Connect.

- Data Layer: Install a cloud cache appliance (like Avere vFXT or similar) on-premises. It serves as the primary namespace. It automatically tiers "hot" data needed for the cloud burst to the cloud, while keeping the master data on-prem.

- Orchestration: Use a single, unified Kubernetes control plane (e.g., on-prem) with worker nodes in both locations, using labels and taints to control workload placement.

Table 1: Cost & Performance Comparison of Compute Options for Multiscale Biomechanics

| Compute Option | Typical Use Case in Biomechanics | Relative Cost (Indexed) | Time to Solution (vs. On-Prem HPC) | Scaling Limitation (Typical) | Best For |

|---|---|---|---|---|---|

| Cloud VMs (General Purpose) | Pre/Post-processing, visualization | 1.0 (Baseline) | 1.5x Slower | ~32 cores due to network latency | Non-parallel, interactive work |

| Cloud HPC Instances | MD, FEA, CFD simulations | 1.8 - 2.5x | 0.7x Faster | 1000s of cores (fabric limited) | Tightly-coupled, MPI-based simulations |

| Cloud GPU Instances | AI/ML for parameter optimization, deep learning surrogates | 3.0 - 8.0x (Volatile) | 0.2x Faster (for suitable algos) | Memory bandwidth & GPU count | Embarrassingly parallel, ML-driven tasks |

| On-Premises HPC Cluster | Long-running, data-sensitive large-scale models | High CapEx | 1.0x (Baseline) | Cluster size & queue wait times | Steady-state, predictable workloads |

| Hybrid Burst (On-Prem + Cloud) | Handling peak demand for urgent drug candidate screening | Variable (Premium) | 0.9x (with good staging) | Data transfer bandwidth | Unpredictable, deadline-driven scaling |

Table 2: Common Performance Bottlenecks & Mitigations

| Bottleneck Area | Symptom | Diagnostic Tool/Metric | Mitigation Strategy |

|---|---|---|---|

| Inter-Node Communication | MPI jobs slow as node count increases. | OSU Micro-Benchmarks, `netstat`, fabric provider tools. | Use HPC instances with RDMA, optimize MPI flags (`-mca btl`), use process affinity. |

| Parallel Filesystem I/O | Simulation slows with more processes writing output. | `iostat`, `lustre_stats`, client-side monitoring. | Implement staged writing (one file per process, aggregate later), use node-local SSDs for scratch. |

| Container Overhead | Higher-than-expected runtime vs. bare metal. | `docker stats`, cAdvisor, Kubernetes metrics. | Use lightweight base images (Alpine, Distroless), assign appropriate CPU limits (not just requests). |

| Cloud API Rate Limiting | Automated job scaling fails sporadically. | Cloud provider's Operations/Logging suite. | Implement exponential backoff in scaling scripts, use queuing systems with built-in cloud integrators (e.g., Slurm with plugins). |
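
The exponential backoff mitigation in the last row of Table 2 can be sketched as follows. This is a minimal illustration, not any provider's SDK: `RateLimitError`, `call_with_backoff`, and all default values are hypothetical names and numbers chosen for the example, and `request` stands for whatever scaling call your script makes.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's 'too many requests' error."""


def call_with_backoff(request, max_attempts=6, base_delay=1.0, max_delay=60.0,
                      sleep=time.sleep, rng=random.random):
    """Call request() and retry on RateLimitError with exponential backoff.

    The delay cap doubles after every failed attempt (base_delay, 2x, 4x, ...),
    is bounded by max_delay, and the actual wait is drawn uniformly from
    [0, cap] so that many clients do not retry in lockstep.
    """
    for attempt in range(max_attempts):
        try:
            return request()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(rng() * cap)
```

The `sleep` and `rng` arguments are injectable only so the retry schedule can be tested without waiting; in a real scaling script the defaults would normally be left alone.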

Experimental Protocols

Protocol 1: Benchmarking Cloud HPC Instances for Molecular Dynamics (GROMACS)

Objective: Determine the most cost-effective cloud instance type for a standardized MD simulation.

- Environment Setup: Provision identical VPCs on two cloud providers. Deploy a managed Kubernetes cluster (GKE, EKS) or use HPC instance queues (AWS ParallelCluster, Azure CycleCloud).

- Workload Definition: Use a standardized GROMACS benchmark case (e.g., DL_POLY water or a protein-ligand system like ADH).

- Execution: For each instance type (General Purpose, HPC-optimized, GPU), run the benchmark across 4, 16, 64, and 128 cores. Use containerized GROMACS with host-specific MPI optimization.

- Data Collection: Record a) simulation throughput (ns/day), b) total job cost (instance cost * job duration), and c) cost-performance (job cost / ns/day).

- Analysis: Plot strong scaling efficiency. Use the data from Table 1 to identify the "sweet spot" for core count before communication overhead dominates.
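
The data collection and analysis steps above can be sketched as a small post-processing function. This is one possible reading of the protocol, under stated assumptions: `scaling_report` is a hypothetical helper, the price is taken per hour for the whole allocation, and strong scaling efficiency is computed relative to the smallest core count in the run set.

```python
def scaling_report(runs, target_ns=10.0):
    """Summarize benchmark runs of one instance type.

    runs: list of (cores, ns_per_day, price_per_hour) tuples, where
    price_per_hour covers the whole allocation (all nodes).
    target_ns: simulated time of the benchmark case, in nanoseconds.
    """
    runs = sorted(runs)
    base_cores, base_rate, _ = runs[0]  # smallest run is the scaling baseline
    report = []
    for cores, ns_per_day, price_per_hour in runs:
        speedup = ns_per_day / base_rate
        efficiency = speedup / (cores / base_cores)  # 1.0 = ideal scaling
        hours = 24.0 * target_ns / ns_per_day        # wall time of the job
        job_cost = price_per_hour * hours            # instance cost * duration
        report.append({
            "cores": cores,
            "efficiency": efficiency,
            "job_cost": job_cost,
            "cost_per_ns_day": job_cost / ns_per_day,
        })
    return report


# Placeholder inputs for illustration only; these are not measurements.
example = scaling_report([(4, 10.0, 0.2), (16, 36.0, 0.8)])
```

Plotting `efficiency` against `cores` for each instance type gives the strong scaling curve; the core count where efficiency drops sharply while `job_cost` keeps rising is a reasonable candidate for the "sweet spot".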

Protocol 2: Auto-scaling a Cloud-Native Ensemble for Parameter Sweeps

Objective: Automatically scale resources to complete 10,000 independent simulations of a cellular mechanics model with varying parameters.

- Architecture: Use a Job Queue (Redis), a Work Generator (Python app), and scalable Worker Pods.

- Implementation:

- The Work Generator populates the queue with parameter sets (JSON objects).

- A Kubernetes Deployment manages the worker pods. Each pod pulls a parameter set, runs the simulation (e.g., using a FEniCS or Abaqus container), and uploads results to object storage.

- A Horizontal Pod Autoscaler (HPA) scales the worker deployment based on queue length, exposed through the external metrics API (external.metrics.k8s.io).

- Metrics: Measure time to complete all jobs, average pod startup latency, and total compute cost. Compare to a static cluster of equivalent size.
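
The generator and worker roles in this protocol can be sketched in a few lines. To keep the example self-contained, an in-memory `queue.Queue` stands in for the Redis queue, and `run_simulation` is a hypothetical placeholder for the containerized solver call; the parameter names `stiffness_kpa` and `adhesion` are illustrative assumptions, not part of the protocol.

```python
import itertools
import json
import queue


def generate_parameter_sets(stiffness_kpa, adhesion):
    """Yield one JSON string per combination of the swept parameters."""
    combos = itertools.product(stiffness_kpa, adhesion)
    for job_id, (e, a) in enumerate(combos):
        yield json.dumps({"job_id": job_id, "stiffness_kpa": e, "adhesion": a})


def run_simulation(params):
    """Placeholder for the solver call made inside a worker pod (hypothetical)."""
    return {"job_id": params["job_id"], "status": "done"}


def worker(job_queue, results):
    """Pull parameter sets until the queue is empty, as one worker pod would."""
    while True:
        try:
            payload = job_queue.get_nowait()
        except queue.Empty:
            return
        results.append(run_simulation(json.loads(payload)))
        job_queue.task_done()


job_queue = queue.Queue()  # in-memory stand-in for the Redis queue
for payload in generate_parameter_sets([0.5, 1.0, 2.0], [0.1, 0.2]):
    job_queue.put(payload)

results = []
worker(job_queue, results)
```

Because each job is a self-describing JSON object and workers hold no state between jobs, pods can be added or removed by the autoscaler at any time without coordinating with one another.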

Visualizations

Title: Secure Hybrid HPC Burst Architecture for Sensitive Data

Title: Cloud-Native Ensemble Parameter Sweep Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential "Reagents" for HPC & Cloud-Native Biomechanics Research

| Item / Solution | Category | Function in the "Experiment" |

|---|---|---|

| Kubernetes | Orchestration | The foundational platform for deploying, managing, and scaling containerized simulation software (GROMACS, FEniCS, Abaqus) and workflows. |

| MPI Operator (Kubeflow) | Workload Manager | A Kubernetes custom controller that natively understands MPI jobs, simplifying the execution of tightly-coupled parallel simulations. |

| High-Performance Container Images | Software Environment | Pre-built, optimized Docker images for key scientific software, often from NGC (NVIDIA) or BioContainers, ensuring reproducibility and performance. |

| CI/CD Pipeline (GitLab CI/GitHub Actions) | Automation | Automates testing of new model code, building of updated containers, and deployment to staging clusters, accelerating research iteration. |

| InfiniBand / EFA Drivers | Hardware Abstraction | Software that enables low-latency, high-throughput network communication between nodes, critical for MPI performance in the cloud. |

| Lustre / BeeGFS Parallel Filesystem | Data Management | Provides a high-speed, shared filesystem for simulations that require concurrent access to large datasets (e.g., from multiple ensemble members). |

| Prometheus & Grafana | Monitoring | Collects and visualizes metrics from the entire stack (application performance, cluster health, cloud costs), enabling data-driven optimization. |

| Terraform / Crossplane | Provisioning | "Infrastructure as Code" tools to declaratively define and provision identical, reproducible HPC cloud environments for different research teams. |

Technical Support Center: Troubleshooting FAQs for Multiscale Biomechanics

Q1: My FE simulation of left ventricular contraction fails to converge when I integrate my active contraction model from cellular dynamics. What are the primary causes? A: This is often due to a mismatch in time scales or numerical stiffness. The cellular model (e.g., a modified Land/Hunter model) operates at sub-millisecond steps, while the FE solver for the whole organ uses larger steps. Ensure proper time-step scaling and solver coupling.

- Protocol for Debugging Coupled Electromechanics:

- Isolate: Run the cellular ionic model independently to verify stability over the full cardiac cycle duration.

- Check Inputs: Feed the generated active stress (from the cell model) into a single-element FE test. If it fails, the stress profile is likely too abrupt.

- Smooth: Apply temporal smoothing (e.g., a low-pass filter) to the active stress time-series before full 3D FE integration.

- Stagger: Implement a staggered (weak) coupling scheme instead of a monolithic one to improve convergence.
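
The Smooth step above can be illustrated with a first-order low-pass filter applied to a sampled active stress trace. This is a minimal sketch under assumptions: `low_pass` is a hypothetical helper, the samples are uniformly spaced, and the cutoff frequency is a tuning parameter you would choose for your own model.

```python
import math


def low_pass(samples, dt, cutoff_hz):
    """First-order (RC-type) low-pass filter of a uniformly sampled signal.

    samples: active stress values, one per time step
    dt: sampling interval in seconds
    cutoff_hz: frequency above which content is attenuated
    """
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    alpha = dt / (dt + rc)  # weight given to each new sample
    smoothed = [samples[0]]
    for value in samples[1:]:
        smoothed.append(smoothed[-1] + alpha * (value - smoothed[-1]))
    return smoothed
```

A causal filter of this kind delays the stress upstroke slightly, so it is worth checking that the smoothed peak timing still matches the cell model closely enough for your purposes.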

Q2: When modeling trabecular bone adaptation, my strain energy density (SED) results are noisy, leading to unrealistic bone resorption patterns. How can I stabilize this? A: Noise arises from high local strain gradients inherent in micro-FE meshes. Apply physiologically motivated spatial averaging to the SED field before it enters the remodeling rule.

- Protocol for Bone Adaptation Stabilization:

- Compute Local SED: Perform micro-FE analysis on the segmented µCT mesh.

- Apply Averaging Window: For each element, calculate the average SED over its neighborhood. Use a sphere with a radius of 2-3 times the mean element size, based on biological perception range.

- Apply Remodeling Rule: Feed the averaged SED into the adaptation rule (e.g., dDensity/dt = k*(SED - SED_ref)).

- Iterate Slowly: Use small timestep multipliers for density change (k) to prevent oscillatory behavior.
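
The averaging and remodeling steps above can be sketched as follows. This is an illustrative toy, not a production implementation: `average_sed` and `update_density` are hypothetical helpers, the neighbor search is brute force (a spatial index would be needed for meshes with millions of elements), and the density bounds `rho_min` and `rho_max` are assumptions added to keep the update physically bounded.

```python
import math


def average_sed(centroids, sed, radius):
    """Average SED over all elements whose centroid lies within `radius`.

    Each element is always its own neighbor, so the list is never empty.
    """
    averaged = []
    for ci in centroids:
        neighbors = [s for cj, s in zip(centroids, sed)
                     if math.dist(ci, cj) <= radius]
        averaged.append(sum(neighbors) / len(neighbors))
    return averaged


def update_density(density, sed_avg, sed_ref, k, dt, rho_min=0.01, rho_max=1.0):
    """One explicit Euler step of dDensity/dt = k * (SED - SED_ref), clamped."""
    return [min(rho_max, max(rho_min, rho + dt * k * (s - sed_ref)))
            for rho, s in zip(density, sed_avg)]
```

Keeping the product `k * dt` small limits how far density can move per iteration, which is the practical meaning of the Iterate Slowly step.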

Q3: My agent-based model (ABM) of tumor growth within a soft tissue FE environment is computationally prohibitive beyond 10,000 agents. How can I optimize? A: The primary cost is the search for agent-agent and agent-matrix interactions. Implement spatial hashing and switch to a continuum representation beyond a critical density.

- Protocol for Hybrid ABM Continuum Optimization:

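- Spatial Hashing: Bin agents into a 3D grid with a cell size matched to the interaction radius. Interactions are only checked within the same and adjacent bins, reducing the cost of the neighbor search from O(N²) to approximately O(N) as long as the number of agents per bin stays bounded.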

- Continuum Transition: Monitor local agent density. When density in a voxel exceeds a threshold (e.g., 80% confluent), replace that agent cluster with a continuum density field governed by a reaction-diffusion equation.

- Data Mapping: Map continuum variables (e.g., nutrient, pressure) back to remaining discrete agents at each coupling step.
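
The spatial hashing step above can be sketched with a dictionary-based grid. This is a minimal sketch assuming point-like agents and a single interaction radius; `build_grid` and `interacting_pairs` are hypothetical names, and the cell size is set equal to the interaction radius so that only the 27 surrounding cells need to be searched.

```python
import math
from collections import defaultdict
from itertools import product


def build_grid(positions, cell_size):
    """Map each integer grid cell to the indices of the agents inside it."""
    grid = defaultdict(list)
    for idx, pos in enumerate(positions):
        grid[tuple(math.floor(c / cell_size) for c in pos)].append(idx)
    return grid


def interacting_pairs(positions, radius):
    """Return all pairs (i, j), i < j, closer than `radius`.

    With cell_size equal to the interaction radius, any partner of an agent
    must sit in the agent's own cell or one of the 26 adjacent cells.
    """
    grid = build_grid(positions, radius)
    pairs = set()
    for (cx, cy, cz), members in grid.items():
        for dx, dy, dz in product((-1, 0, 1), repeat=3):
            # .get avoids creating empty cells while iterating over the grid
            for j in grid.get((cx + dx, cy + dy, cz + dz), ()):
                for i in members:
                    if i < j and math.dist(positions[i], positions[j]) < radius:
                        pairs.add((i, j))
    return pairs
```

The same grid can double as the voxel structure for the continuum transition, since the agent count per cell is already available as `len(members)`.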

Summarized Quantitative Data on Computational Costs

Table 1: Comparison of Solver Performance for Different Tissue Types

| Tissue Type | Model Scale | Typical Element Count | Explicit Solver Time Step | Implicit Solver Avg. Newton Iterations | Recommended Solver Type |

|---|---|---|---|---|---|

| Cardiac Tissue | Organ (LV) | 100,000 - 500,000 | 0.1 - 1.0 µs (stable) | 4-8 (per step) | Implicit for full cycle |

| Trabecular Bone | Micro-Architecture | 1 - 10 million | N/A (static) | 20-40 (for linear solve) | Direct (for small), Iterative (for large) |

| Soft Tissue/Tumor | Multiscale (ABM+FE) | 50,000 FE + 10^5 Agents | 1.0 ms (for FE) | N/A | Coupled Explicit (FE) & Discrete (ABM) |

Table 2: Impact of Optimization Strategies on Runtime

| Optimization Strategy | Cardiac Electromechanics | Bone Adaptation Loop | Tumor ABM-FE |

|---|---|---|---|

| Baseline Runtime | ~72 hours | ~45 hours/iteration | > 1 week |

| Strategy Applied | Staggered Coupling | SED Averaging | Spatial Hashing + Continuum Switch |

| Optimized Runtime | ~18 hours | ~10 hours/iteration | ~48 hours |

| Speed-up Factor | 4x | 4.5x | >3.5x |

Visualization of Key Workflows

Multiscale Coupling Troubleshooting Flow

Bone Adaptation Loop with Averaging

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Computational Tools & Frameworks

| Item Name | Function / Purpose | Example (Not Endorsement) |

|---|---|---|

| Multiphysics FE Solver | Solves coupled mechanical, electrical, and fluid systems. | FEBio, Abaqus, COMSOL |

| Agent-Based Modeling Library | Framework for creating discrete, rule-based cell/agent models. | Repast, NetLogo, Chaste |

| Cardiac Cell Model Library | Repository of validated ordinary differential equation (ODE) models for cardiomyocytes. | CellML Repository, PMFA |

| Micro-CT Image Segmentation Tool | Converts 3D image data (e.g., bone, tissue) into computational meshes. | 3D Slicer, Simpleware ScanIP |

| High-Performance Computing (HPC) Job Scheduler | Manages parallel computation across CPU/GPU clusters. | SLURM, PBS Pro |

| Spatial Hashing / Nearest Neighbor Search Library | Accelerates distance and interaction queries in particle/agent systems. | nanoflann, Intel oneAPI DPC++ Library |

| Scientific Visualization Software | Visualizes complex multiscale data (scalar fields, vectors, deformations). | ParaView, VisIt |

Overcoming Bottlenecks: Proven Techniques for Performance Tuning and Debugging

Troubleshooting Guides & FAQs

Q1: My multiscale biomechanical simulation is running significantly slower than expected. What is the first step I should take to diagnose the issue?

A: The first step is to perform coarse-grained profiling to identify the high-level bottleneck (CPU, Memory, I/O, Network). Use a system monitoring tool like htop (Linux/macOS) or Resource Monitor (Windows) to observe overall resource utilization. If a single CPU core is at 100% while others are idle, your code is likely single-threaded. If memory usage is continuously growing, you may have a memory leak. If CPU and memory are idle but the disk I/O is high, your simulation may be bottlenecked by reading/writing checkpoint files.

Q2: I've identified that my Python code for agent-based cell modeling is CPU-bound. Which profiling tool should I use to find the specific slow functions?

A: For Python, use cProfile for deterministic profiling and line_profiler (via @profile decorator) for line-by-line analysis. cProfile gives you the total time spent in each function, including built-ins and library calls, helping you identify if the bottleneck is in your code or a dependency (like NumPy). line_profiler is essential for pinpointing the exact slow lines within a critical function.

Q3: My C++ finite element solver for tissue mechanics is using parallelization (OpenMP/MPI), but scaling is poor beyond 8 cores. How can I analyze this? A: Poor parallel scaling requires investigating load imbalance, synchronization overhead, and false sharing. Use specialized parallel profilers:

- Intel VTune Profiler: Excellent for analyzing threading performance (load imbalance, lock contention) and memory access patterns.

- Scalasca (for MPI): Specializes in identifying communication bottlenecks and synchronization issues in MPI codes.

- gprof with the -pg flag: Can provide basic call graph data for parallel programs, though it's less detailed for threading.

Q4: My simulation periodically "hangs" or becomes unresponsive for minutes at a time. What could cause this, and how do I find it? A: This pattern often indicates an I/O bottleneck (writing large result files), garbage collection pauses (in languages like Java or Python), or waiting for a shared resource (network file system, database). Use:

- I/O Profiling: iotop (Linux) to see disk write activity.

- Garbage Collection Logging: For JVM-based languages, enable GC logging (-Xlog:gc*). For Python, use the gc module to track collections.

- System Call Tracing: Tools like strace (Linux) can show if the process is stuck on a particular system call (e.g., write, read).

Q5: The memory usage of my model grows until it crashes on a cluster node. How do I find the memory leak? A: Memory leaks require runtime memory analysis.

- For C/C++: Use valgrind --tool=memcheck. It runs your program slowly but provides precise line numbers of allocated memory that was never freed.

- For Python: Use tracemalloc to take snapshots of memory allocations and compare them to find which objects are growing unexpectedly. For object-oriented models, this often reveals unintended references keeping large data structures alive.

- For Java: Use jvisualvm or Eclipse MAT to analyze heap dumps.

Experimental Protocols for Profiling

Protocol 1: Baseline Performance Measurement with cProfile (Python)