Mastering Uncertainty: How Monte Carlo Simulations Are Revolutionizing Biomaterials Design and Drug Development

Abstract

This comprehensive guide explores the critical role of Monte Carlo simulations in quantifying and managing uncertainty within biomaterials science and drug development. We begin by establishing the core concepts of uncertainty and stochastic modeling in complex biological systems. We then detail the methodological workflow, from parameter sampling to model execution, with specific applications in scaffold design, drug release kinetics, and implant performance. The article addresses common challenges in simulation design, including computational expense and model validation, providing practical optimization strategies. Finally, we examine rigorous validation frameworks and comparative analyses with other computational methods. Aimed at researchers and industry professionals, this resource provides the foundational knowledge and practical insights needed to leverage Monte Carlo methods for more robust, predictive, and successful biomaterial innovations.

Uncertainty in Biomaterials: Why Stochastic Modeling is Non-Negotiable for Modern Research

In biomaterials research, particularly when using Monte Carlo simulations to predict outcomes like drug release kinetics or scaffold degradation, quantifying uncertainty is paramount. Uncertainty is categorized into two fundamental types:

- Aleatoric Uncertainty (Irreducible): Arises from the inherent stochasticity and variability of biological systems. Examples include cell-to-cell heterogeneity, stochastic molecular interactions, and random thermal fluctuations. It is quantified using probability distributions.

- Epistemic Uncertainty (Reducible): Stems from a lack of knowledge, model simplifications, or measurement limitations. Examples include unknown kinetic parameters, oversimplified reaction pathways, or instrument calibration errors. It can be reduced with better models, more data, or improved experiments.

A Monte Carlo simulation propagates both types of uncertainty through a computational model to produce a distribution of possible outcomes, enabling robust risk assessment and decision-making in drug and device development.
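
One common way to keep the two uncertainty types separate during propagation is a nested (two-loop) Monte Carlo. The sketch below is illustrative only: the first-order release model, the Normal spread on the mean rate constant, and the log-normal sample-to-sample factor are all assumptions chosen for the example, not values from a specific study.

```python
import math
import random
import statistics

def fraction_released(k, t_days):
    """Illustrative first-order release model: fraction released after t_days."""
    return 1.0 - math.exp(-k * t_days)

def nested_monte_carlo(n_outer=200, n_inner=200, t_days=14.0, seed=0):
    """Outer loop samples epistemic uncertainty; inner loop samples aleatoric variability."""
    rng = random.Random(seed)
    inner_means, all_outcomes = [], []
    for _ in range(n_outer):
        # Epistemic: imperfect knowledge of the mean rate constant
        # (assumed Normal, mean 0.05 and SD 0.005 per day)
        k_mean = max(rng.gauss(0.05, 0.005), 1e-6)
        outcomes = []
        for _ in range(n_inner):
            # Aleatoric: intrinsic sample-to-sample variability
            # (assumed multiplicative log-normal factor, sigma = 0.2)
            k_sample = k_mean * rng.lognormvariate(0.0, 0.2)
            outcomes.append(fraction_released(k_sample, t_days))
        inner_means.append(statistics.fmean(outcomes))
        all_outcomes.extend(outcomes)
    return {
        "total_sd": statistics.pstdev(all_outcomes),      # both sources combined
        "epistemic_sd": statistics.pstdev(inner_means),   # spread of conditional means
    }

result = nested_monte_carlo()
```

Comparing `epistemic_sd` with `total_sd` indicates how much of the output spread could, in principle, be reduced by better data, and how much is a floor set by intrinsic variability.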

Table 1: Characterizing Aleatoric and Epistemic Uncertainty in Biomaterial Systems

| Uncertainty Type | Source in Biological Systems | Typical Quantification Method | Example in Biomaterials/Drug Delivery | Potential Impact on Monte Carlo Output |

|---|---|---|---|---|

| Aleatoric (Irreducible) | Intrinsic biological noise (gene expression, protein binding). | Statistical variance, coefficient of variation (CV). | Variability in cell adhesion strength on a polymer scaffold (CV ~20-40%). | Output distribution width; defines fundamental prediction limits. |

| Aleatoric (Irreducible) | Population heterogeneity (cell size, receptor count). | Probability density functions (e.g., Log-normal, Gamma). | Distribution of nanoparticle uptake rates across a cell population. | |

| Aleatoric (Irreducible) | Random thermal-driven molecular collisions. | Stochastic simulation algorithms (e.g., Gillespie). | Stochastic binding of growth factors to surface-immobilized receptors. | |

| Epistemic (Reducible) | Imperfect or sparse experimental data for model fitting. | Confidence intervals, posterior distributions from Bayesian calibration. | Degradation rate constant (k) for a hydrogel with 95% CI: 0.05 ± 0.01 day⁻¹. | Shape and spread of output distribution; reducible with better data. |

| Epistemic (Reducible) | Model structural error (omitted pathways, simplifications). | Model comparison metrics (AIC, BIC), residual analysis. | Assuming Fickian diffusion for drug release when it is actually swelling-controlled. | Systematic bias in predictions. |

| Epistemic (Reducible) | Measurement instrument noise and calibration limits. | Error propagation formulas, instrument specification sheets. | Fluorescence plate reader with ±5% signal variability in low concentration assays. | Adds quantifiable noise to input parameters. |

Experimental Protocols for Uncertainty Quantification

Protocol 3.1: Empirical Bayesian Calibration for Parameter Estimation (Reducing Epistemic Uncertainty)

Objective: To calibrate a mathematical model of drug release from a biomaterial using sparse experimental data and quantify parameter uncertainty.

Materials: (See "Scientist's Toolkit," Section 5).

Procedure:

- Data Collection: Perform in vitro drug release assay (n=8 replicates). Measure cumulative release at times t = [1, 6, 24, 72, 168] hours.

- Define Prior Distributions: For each model parameter (e.g., diffusion coefficient D, initial load L₀), specify a prior probability distribution based on literature or expert knowledge (e.g., D ~ Uniform(1e-11, 1e-9) m²/s).

- Likelihood Function: Define a function that calculates the probability of observing the experimental data given a specific set of parameter values. Assume measurement error is normally distributed.

- MCMC Sampling: Use a Markov Chain Monte Carlo (MCMC) algorithm (e.g., Metropolis-Hastings) to sample from the posterior distribution of the parameters.

- Run 3 independent chains for 50,000 iterations each.

- Discard the first 20% as burn-in.

- Assess convergence using the Gelman-Rubin statistic (R̂ < 1.05).

- Posterior Analysis: The resulting samples represent the joint posterior distribution of all parameters. Report medians and 95% credible intervals (Table 1). This quantified epistemic uncertainty is now ready for propagation.
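
The sampling and convergence steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the protocol's full model: it uses a one-parameter first-order release model in place of the diffusion model with D and L₀, synthetic data generated from a hypothetical rate constant `K_TRUE`, an assumed measurement SD, and 5,000 iterations per chain instead of 50,000 to keep it fast.

```python
import math
import random
import statistics

TIMES_H = [1, 6, 24, 72, 168]   # sampling times from the protocol (hours)
SIGMA = 0.03                     # assumed measurement SD (fraction released)

def model(k, t):
    """Illustrative first-order release model: fraction released at time t."""
    return 1.0 - math.exp(-k * t)

def log_posterior(k, data):
    """Uniform(0, 1) prior on k plus a Gaussian log-likelihood (up to a constant)."""
    if not 0.0 < k < 1.0:
        return -math.inf
    sse = sum((y - model(k, t)) ** 2 for t, y in zip(TIMES_H, data))
    return -0.5 * sse / SIGMA ** 2

def metropolis_hastings(data, k0, n_iter, step, rng):
    """Random-walk Metropolis-Hastings with a symmetric Gaussian proposal."""
    chain, k, lp = [], k0, log_posterior(k0, data)
    for _ in range(n_iter):
        k_new = k + rng.gauss(0.0, step)
        lp_new = log_posterior(k_new, data)
        # Accept with probability min(1, posterior ratio)
        if math.log(1.0 - rng.random()) < lp_new - lp:
            k, lp = k_new, lp_new
        chain.append(k)
    return chain

def gelman_rubin(chains):
    """Potential scale reduction factor (R-hat) for equal-length chains."""
    n = len(chains[0])
    within = statistics.fmean([statistics.variance(c) for c in chains])
    between_over_n = statistics.variance([statistics.fmean(c) for c in chains])
    var_hat = (n - 1) / n * within + between_over_n
    return math.sqrt(var_hat / within)

rng = random.Random(0)
K_TRUE = 0.02   # hypothetical rate constant (1/h) used only to create synthetic data
data = [model(K_TRUE, t) + rng.gauss(0.0, SIGMA) for t in TIMES_H]

n_iter = 5000   # shortened from the protocol's 50,000 iterations
chains = [metropolis_hastings(data, k0, n_iter, 0.003, rng) for k0 in (0.005, 0.02, 0.08)]
burned = [c[n_iter // 5:] for c in chains]   # discard the first 20% as burn-in
r_hat = gelman_rubin(burned)

posterior = sorted(k for c in burned for k in c)
k_median = statistics.median(posterior)
ci_95 = (posterior[int(0.025 * len(posterior))], posterior[int(0.975 * len(posterior))])
```

In practice a dedicated package such as those listed in the toolkit would handle proposal tuning and diagnostics; the hand-written sampler is shown only to make the accept/reject logic and the R̂ calculation explicit.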

Protocol 3.2: Characterizing Cell Response Heterogeneity (Quantifying Aleatoric Uncertainty)

Objective: To measure the population-level variability (aleatoric uncertainty) in cell proliferation on a novel biomaterial coating.

Materials: (See "Scientist's Toolkit," Section 5).

Procedure:

- Cell Seeding: Seed human mesenchymal stem cells (hMSCs) at a density of 5,000 cells/cm² onto test substrates (n=12 per material).

- Time-Lapse Imaging: Place plates in a live-cell imager. Acquire phase-contrast images of 10 predefined fields-of-view per well every 6 hours for 5 days.

- Single-Cell Tracking: Use automated cell tracking software to segment and track individual cells across frames.

- Data Extraction: For each cell track (>500 per condition), calculate the doubling time from lineage histories.

- Distribution Fitting: Plot a histogram of the doubling times. Fit appropriate distributions (e.g., Log-normal, Weibull) using maximum likelihood estimation. Perform a goodness-of-fit test (e.g., Kolmogorov-Smirnov).

- Report Aleatoric Statistics: Report the distribution type and its parameters (e.g., scale, shape). Calculate the population coefficient of variation (CV). This distribution becomes a key stochastic input for Monte Carlo simulations.
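
The fitting and reporting steps can be illustrated with a short sketch. It assumes a log-normal model, for which the maximum likelihood estimates have a closed form, and uses synthetic doubling times (hypothetical median of 30 h) in place of tracked cell data. Note that a Kolmogorov-Smirnov test applied with parameters estimated from the same data is conservative, so the standard critical value 1.36/√n is only a rough screen.

```python
import math
import random
import statistics

def fit_lognormal(samples):
    """Closed-form maximum likelihood estimates (mu, sigma) on the log scale."""
    logs = [math.log(x) for x in samples]
    return statistics.fmean(logs), statistics.pstdev(logs)

def ks_statistic(samples, mu, sigma):
    """Kolmogorov-Smirnov distance between the empirical and fitted log-normal CDFs."""
    dist = statistics.NormalDist(mu, sigma)
    xs = sorted(samples)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        cdf = dist.cdf(math.log(x))
        d = max(d, (i + 1) / n - cdf, cdf - i / n)
    return d

def lognormal_cv(sigma):
    """Population coefficient of variation implied by a log-normal fit."""
    return math.sqrt(math.exp(sigma ** 2) - 1.0)

# Synthetic stand-in for >500 tracked doubling times (hours); values are hypothetical
rng = random.Random(1)
doubling_times_h = [rng.lognormvariate(math.log(30.0), 0.25) for _ in range(500)]

mu_hat, sigma_hat = fit_lognormal(doubling_times_h)
d_stat = ks_statistic(doubling_times_h, mu_hat, sigma_hat)
cv = lognormal_cv(sigma_hat)
```

The pair (`mu_hat`, `sigma_hat`) is what a downstream Monte Carlo run would sample from, for example with `random.lognormvariate(mu_hat, sigma_hat)`.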

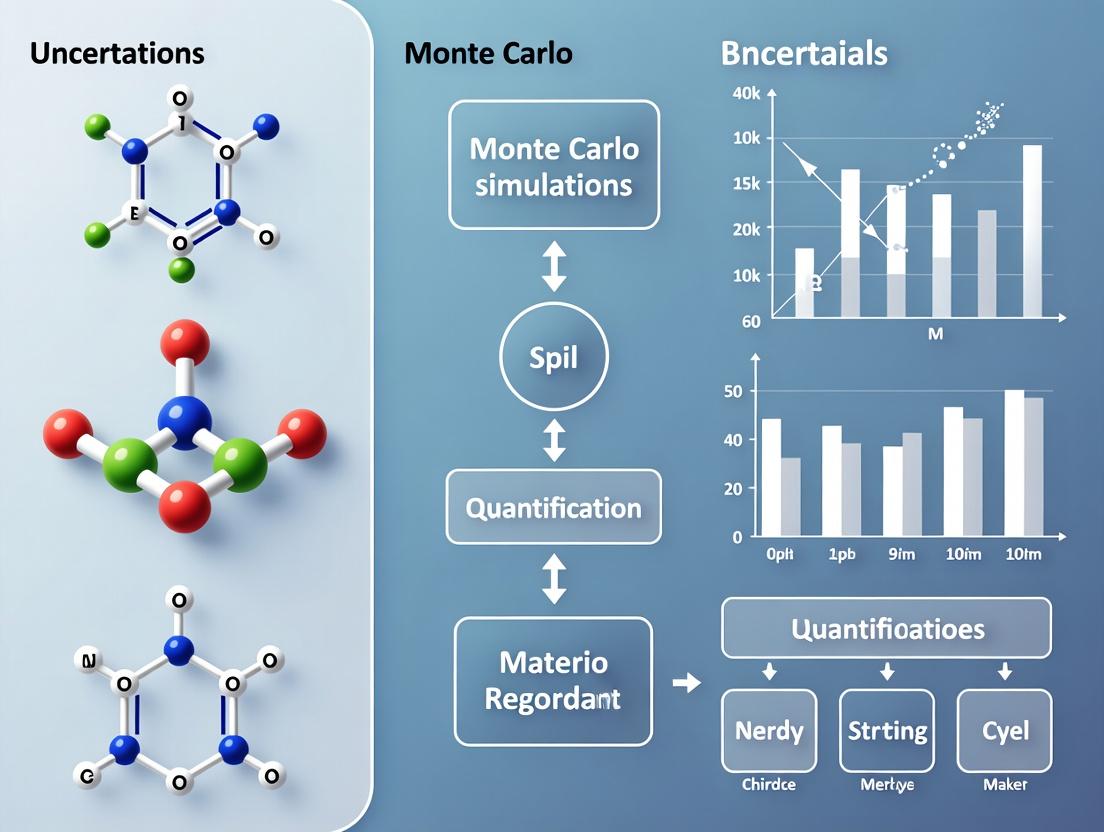

Visualization of Concepts and Workflows

Diagram 1: Modeling uncertainty propagation.

Diagram 2: Uncertainty-aware model calibration.

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Uncertainty Quantification

| Item / Reagent | Primary Function in Uncertainty Analysis | Example Product/Catalog |

|---|---|---|

| Live-Cell Analysis System | Enables longitudinal, single-cell tracking to quantify aleatoric heterogeneity (Protocol 3.2). | Incucyte SX5, BioStation CT. |

| Calibrated Reference Materials | Reduces epistemic uncertainty from instrument error via standardized calibration. | NIST-traceable fluorescent beads, protein standards. |

| High-Content Screening (HCS) Reagents | Multiplexed, cell-based assays for rich data collection to constrain model parameters. | Multiplexed viability/apoptosis kits, Cell Painting dyes. |

| MCMC Software Packages | Implements Bayesian calibration to quantify epistemic parameter uncertainty (Protocol 3.1). | PyMC3 (Python), Stan (R/Python), BayesianTools (R). |

| Stochastic Simulation Software | Directly models aleatoric uncertainty via algorithms like Gillespie's SSA. | COPASI, VCell, GillesPy2 (Python). |

| Monte Carlo Simulation Add-ins | Propagates input uncertainties through complex models in standard tools. | @RISK (for Excel), Simulia Isight. |

In biomaterials research and drug development, deterministic models that rely on average material properties or cellular responses are often insufficient. Biological systems are inherently stochastic due to cell-to-cell variability, random molecular interactions, and heterogeneous biomaterial surfaces. Monte Carlo simulations provide a critical framework for quantifying this uncertainty, enabling researchers to model the full distribution of possible outcomes rather than a single, deterministic average. This is particularly vital for predicting the in vivo performance of drug-eluting stents, tissue engineering scaffolds, and nanoparticle drug delivery systems, where extreme "tail" events (e.g., early implant failure or toxic particle accumulation) dictate clinical success.

Key Limitations of Deterministic Models: Quantitative Evidence

Table 1: Documented Discrepancies Between Deterministic Predictions and Experimental Outcomes in Biomaterials Research

| System Studied | Deterministic Model Prediction (Average) | Experimental Range / Key Stochastic Outcome | Consequence of Ignoring Variability | Primary Source of Uncertainty |

|---|---|---|---|---|

| PLGA Nanoparticle Drug Release | Zero-order release over 28 days. | Burst release 15-45% in first 24 h; full duration ranges 14-50 days. | Under/over-dosing; therapeutic failure. | Polymer degradation heterogeneity & pore network percolation. |

| Cell Adhesion on RGD-Grafted Surfaces | 70% cell adhesion by 2 hours. | Adhesion fraction 40-90%; rare (<1%) non-adherent "persister" cells. | Misguided scaffold design; incomplete tissue integration. | Stochastic receptor-ligand binding kinetics & cytoskeletal dynamics. |

| Antibiotic Release from Bone Cement | MIC sustained for 90 days. | Local concentrations sub-MIC in >30% of simulated volumes by day 30. | Biofilm formation & antimicrobial resistance. | Spatial heterogeneity in porosity and diffusivity. |

| siRNA Transfection Efficiency | 80% knockdown in target population. | Single-cell data shows a bimodal distribution: 30% of cells show <20% knockdown. | Inconsistent therapeutic gene silencing. | Random endosomal escape and cargo unpacking. |

Monte Carlo Simulation Protocols for Critical Biomaterials Scenarios

Protocol 3.1: Simulating Heterogeneous Drug Release from Polymeric Matrices

Aim: To model the stochastic burst release and variable diffusion pathways from a biodegradable polymer (e.g., PLGA).

Workflow:

- Define Micro-Architecture: Discretize the polymer matrix into a 3D lattice (100 × 100 × 100 voxels). Each voxel is assigned an initial drug load and a local degradation rate constant k_deg, drawn from a normal distribution (mean from FTIR/GPC data, CV = 25%).

- Incorporate Stochastic Porosity: Using a percolation threshold model, randomly assign a subset of voxels as "pores" based on an initial porosity probability p. This network evolves as neighboring voxels degrade.

- Monte Carlo Step: For each simulation iteration (representing a time step):

- Randomly select a fraction of voxels for degradation based on k_deg.

- If a voxel degrades, its drug content is released and its state changes to "pore".

- Drug in pore voxels can diffuse to connected pore neighbors. Perform a random walk simulation for a subset of drug molecules to model diffusion.

- Output Analysis: Run 10,000 simulations. Output the distribution of cumulative drug release at key time points (24h, 7d, 28d) and analyze the probability of extreme release profiles.
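
A stripped-down version of this workflow is sketched below. It keeps only the per-voxel stochastic degradation step: the percolation-based pore network and the random-walk diffusion are deliberately omitted, so drug is assumed to be released immediately when its voxel degrades. The voxel count, run count, and mean rate constant are placeholders chosen to keep the example fast.

```python
import math
import random

def simulate_release(n_voxels=1000, k_mean=0.05, cv=0.25, n_days=28, rng=None):
    """One realization: cumulative fraction of drug released at the end of each day."""
    rng = rng or random.Random()
    # Per-voxel degradation rate (1/day): Normal draw floored at a small positive value
    k_deg = [max(rng.gauss(k_mean, cv * k_mean), 1e-9) for _ in range(n_voxels)]
    intact = [True] * n_voxels
    released, curve = 0, []
    for _ in range(n_days):
        for i in range(n_voxels):
            # Probability that an intact voxel degrades within a one-day step
            if intact[i] and rng.random() < 1.0 - math.exp(-k_deg[i]):
                intact[i] = False
                released += 1   # voxel becomes a pore and gives up its unit drug load
        curve.append(released / n_voxels)
    return curve

def run_ensemble(n_runs=200, seed=0, **kwargs):
    """Repeat the simulation with one seeded generator for reproducibility."""
    rng = random.Random(seed)
    return [simulate_release(rng=rng, **kwargs) for _ in range(n_runs)]

def percentile(values, q):
    """Nearest-rank percentile (q between 0 and 100)."""
    ordered = sorted(values)
    idx = min(len(ordered) - 1, max(0, math.ceil(q / 100 * len(ordered)) - 1))
    return ordered[idx]
```

Calling `run_ensemble(n_runs=100, n_voxels=500)` and then `percentile([c[0] for c in curves], 95)` gives the upper tail of day-1 release across runs. Because voxels are independent in this reduced model, the run-to-run spread is smaller than a spatially coupled lattice would produce.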

Diagram Title: Monte Carlo workflow for stochastic drug release.

Protocol 3.2: Simulating Cell Fate Decision on Heterogeneous Surfaces

Aim: To model the variable adhesion and differentiation of stem cells on a biomaterial with nanoscale chemical heterogeneity.

Workflow:

- Map Surface Heterogeneity: Represent the surface as a grid of 50nm x 50nm patches. Each patch has a ligand density drawn from a gamma distribution to mimic random grafting.

- Agent-Based Cell Model: Each cell is an agent with internal states (integrin count, cytoskeletal tension, fate markers).

- Stochastic Binding Events: At each time step, the probability of an integrin forming a bond with a patch is calculated based on local ligand density and binding kinetics.

- Fate Decision Logic: A cell's cumulative signal is integrated. Differentiation probability is calculated via a stochastic Hill function. A random number determines the fate outcome at a decision checkpoint.

- Analysis: After simulating 1,000 cells, generate distributions for adhesion strength, signal activation, and final fate composition (osteogenic vs. adipogenic).
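
The binding and fate-decision logic can be sketched as a very small agent-based model. Everything numeric here is an assumption for illustration: the gamma parameters for ligand density, the saturating form of the binding probability, and the Hill coefficients are hypothetical, and each cell carries only a single cumulative signal rather than the full internal state described above.

```python
import random

def hill(signal, k_half=0.45, n=4):
    """Hill function mapping cumulative signal to a differentiation probability."""
    return signal ** n / (k_half ** n + signal ** n)

def simulate_cell(rng, n_patches=20, n_steps=50, integrins=10):
    """Return (normalized cumulative signal, fate) for one cell agent."""
    # Ligand density per patch: gamma-distributed (shape 2, scale 0.5; assumed)
    patches = [rng.gammavariate(2.0, 0.5) for _ in range(n_patches)]
    signal = 0.0
    for _ in range(n_steps):
        density = rng.choice(patches)               # patch probed at this time step
        p_bind = density / (1.0 + density)          # saturating binding probability (assumed)
        bonds = sum(rng.random() < p_bind for _ in range(integrins))
        signal += bonds / (integrins * n_steps)     # keeps the total within [0, 1]
    # Decision checkpoint: one random draw against the Hill-derived probability
    fate = "osteogenic" if rng.random() < hill(signal) else "adipogenic"
    return signal, fate

def simulate_population(n_cells=1000, seed=0):
    """Fate composition across a population of independent cells."""
    rng = random.Random(seed)
    fates = [simulate_cell(rng)[1] for _ in range(n_cells)]
    return {fate: fates.count(fate) / n_cells for fate in ("osteogenic", "adipogenic")}

composition = simulate_population()
```

Collecting the `signal` values alongside the fates would give the adhesion and activation distributions mentioned in the analysis step.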

Diagram Title: Stochastic cell fate decision pathway on a heterogeneous surface.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Materials for Validating Stochastic Models

| Item / Reagent | Function in Context | Rationale for Stochastic Analysis |

|---|---|---|

| Single-Cell RNA Sequencing (scRNA-seq) Kit (e.g., 10x Genomics) | Profiles gene expression heterogeneity in cells interacting with biomaterials. | Provides empirical distribution data for key fate markers, essential for calibrating and validating agent-based models. |

| Fluorescently-Labelled, Traceable Nanoparticles | Enables tracking of individual nanoparticle uptake and distribution in vitro/vivo. | Allows measurement of particle-cell interaction distributions, not just averages. Critical for modeling biodistribution tails. |

| High-Content Imaging System with Automated Analysis | Quantifies cell morphology, adhesion, and protein expression in thousands of individual cells. | Generates population distribution data for parameters like cell spread area or nuclear YAP intensity, feeding directly into Monte Carlo inputs. |

| Polydisperse & Monodisperse Polymer Reference Sets | Provides materials with known, controlled distributions of molecular weight or particle size. | Allows controlled experiments to isolate the effect of a single stochastic variable (e.g., chain length) on bulk outcome distributions. |

| Microfluidic Gradient Generators | Creates precisely controlled, spatially-varying concentrations of ligands or drugs. | Tests cell response across a continuum of conditions in one experiment, efficiently mapping stochastic response probabilities. |

| Stochastic Sensing Assays (e.g., for reactive oxygen species) | Detects rare or transient molecular events at the single-cell level. | Quantifies the frequency of critical stochastic events (e.g., oxidative burst) that deterministic models overlook. |

In biomaterials and drug delivery research, uncertainty is omnipresent. From the stochastic degradation rates of polymeric scaffolds to the variable binding affinities of a drug to its nanoparticle carrier, deterministic models often fail to capture the inherent randomness. Monte Carlo (MC) simulations provide a powerful, flexible framework for propagating these uncertainties through computational models, allowing scientists to quantify risk, predict performance distributions, and optimize designs probabilistically. This primer outlines the core statistical concepts and provides protocols for applying MC methods to common problems in biomaterials science.

Core Probability Distributions in Biomaterials Modeling

Key sources of uncertainty in biomaterials research can be modeled using specific probability distributions. The choice of distribution should be informed by the physical nature of the parameter.

Table 1: Common Probability Distributions for Modeling Biomaterial Uncertainty

| Distribution | Typical Use Case in Biomaterials | Key Parameters | Example Application |

|---|---|---|---|

| Normal (Gaussian) | Modeling additive measurement errors, bulk properties. | Mean (μ), Standard Deviation (σ) | Variability in the elastic modulus of a hydrogel batch. |

| Log-Normal | Modeling inherently positive quantities with large variances. | Log-mean (μ), Log-stdev (σ) | Distribution of nanoparticle diameters from a synthesis process. |

| Uniform | When only bounds are known, with no central tendency. | Minimum (a), Maximum (b) | Initial guess for an unknown degradation rate within a plausible range. |

| Beta | Modeling probabilities or proportions bounded between 0 and 1. | Shape (α), Shape (β) | Fraction of drug released in a given time window (a proportion). |

| Poisson | Modeling count-based events in a fixed interval. | Rate (λ) | Number of cell attachment events per unit area on a scaffold. |

| Weibull | Modeling failure times and time-to-event data (e.g., degradation). | Scale (λ), Shape (k) | Time to failure (dissolution) of a biodegradable stent. |
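
A frequent stumbling block with the log-normal row is that its parameters live on the log scale, while characterization data are usually reported as an arithmetic mean and standard deviation. The sketch below shows the moment-matching conversion; the 150 ± 30 nm diameters are hypothetical placeholder values.

```python
import math
import random
import statistics

def lognormal_params_from_moments(mean, sd):
    """Convert an arithmetic mean and SD into log-scale parameters (mu, sigma)."""
    sigma2 = math.log(1.0 + (sd / mean) ** 2)
    return math.log(mean) - 0.5 * sigma2, math.sqrt(sigma2)

# Hypothetical nanoparticle diameters: mean 150 nm, SD 30 nm
mu, sigma = lognormal_params_from_moments(mean=150.0, sd=30.0)

rng = random.Random(42)
diameters = [rng.lognormvariate(mu, sigma) for _ in range(20000)]
sample_mean = statistics.fmean(diameters)
sample_sd = statistics.stdev(diameters)
```

Passing the measured mean and SD directly as μ and σ would instead produce samples centered near exp(150), which is the error this conversion avoids.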

Protocol 1: MC Simulation for Drug Release Kinetics Uncertainty

Objective: To predict the confidence bounds for cumulative drug release from a polymeric microsphere, accounting for variability in diffusion coefficient (D) and degradation rate (k).

Materials & Reagent Solutions:

- Computational Environment: Python (NumPy, SciPy, Matplotlib) or R.

- Physical Model: Higuchi or diffusion-degradation coupled PDE (discretized).

- Input Distributions: Log-normal for D; Beta for k (bounded between theoretical min/max).

- Sampling Engine: A seeded pseudo-random number generator, e.g., Mersenne Twister (the default in Python's random module and in R; NumPy's default_rng uses PCG64).

Procedure:

- Parameterize Distributions: Based on experimental characterization of 3 microsphere batches, fit a log-normal distribution to measured D values and a Beta distribution to inferred k values.

- Generate Random Inputs: Sample N=10,000 independent pairs of (D, k) from their respective distributions.

- Run Deterministic Model: For each sampled pair, compute the cumulative drug release profile over 30 days using the numerical model.

- Aggregate Outputs: Collect all 10,000 release curves at each time point.

- Calculate Statistics: At each time point (e.g., day 1, 5, 10, 20, 30), compute the median (50th percentile), and the 5th and 95th percentiles to create a 90% prediction interval.

- Visualize: Plot time on the x-axis, cumulative release on the y-axis, with the median as a solid line and the prediction interval as a shaded band.

Expected Output: A probabilistic release profile showing not just a single curve, but a range of plausible outcomes, providing a more realistic forecast for in vivo performance.
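
The procedure above can be sketched end to end in a few lines. The release model here is an assumed surrogate, not a validated one: a single exponential whose rate is the sum of a diffusion term π²D/R² (the leading-order rate for diffusion out of a sphere of radius R) and the degradation rate k. The radius, the log-normal parameters for D, and the scaled Beta range for k are hypothetical.

```python
import math
import random
import statistics

RADIUS_M = 25e-6            # assumed microsphere radius (m)
SECONDS_PER_DAY = 86400.0

def release_fraction(d_m2_s, k_per_day, t_days):
    """Surrogate model: exponential release driven by diffusion plus degradation."""
    k_diff = math.pi ** 2 * d_m2_s * SECONDS_PER_DAY / RADIUS_M ** 2
    return 1.0 - math.exp(-(k_diff + k_per_day) * t_days)

def monte_carlo_release(n=10_000, days=(1, 5, 10, 20, 30), seed=0):
    """Return {day: (5th percentile, median, 95th percentile)} of cumulative release."""
    rng = random.Random(seed)
    curves = {t: [] for t in days}
    for _ in range(n):
        d = rng.lognormvariate(math.log(4e-17), 0.3)   # D in m^2/s (hypothetical fit)
        k = 0.01 + 0.04 * rng.betavariate(2.0, 5.0)    # k scaled to [0.01, 0.05] per day
        for t in days:
            curves[t].append(release_fraction(d, k, t))
    summary = {}
    for t, values in curves.items():
        cuts = statistics.quantiles(values, n=20)      # cut points at 5%, 10%, ..., 95%
        summary[t] = (cuts[0], statistics.median(values), cuts[-1])
    return summary
```

Plotting the middle element of each tuple as a line and the outer two as a shaded band gives the probabilistic release profile described in the expected output.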

Diagram: Monte Carlo Drug Release Simulation Workflow

Protocol 2: Sensitivity Analysis via Elementary Effects Method

Objective: To rank the influence of uncertain input parameters (e.g., porosity, contact angle, protein concentration) on the predicted cell adhesion density to a novel scaffold.

Materials & Reagent Solutions:

- Screening Design: Elementary Effects (Morris) Method.

- Parameter Ranges: Defined from literature and preliminary data (min, max).

- Computational Tool: SALib (Python library for sensitivity analysis) or custom script.

- Output Metric: Simulated cell adhesion density at 24 hours.

Procedure:

- Define Input Space: For each of k parameters, define a plausible range (e.g., uniform distribution).

- Generate Trajectories: Use the optimized Morris sampling strategy to generate r trajectories (typically 50-100) in the k-dimensional input space. Each trajectory varies one parameter at a time.

- Run Model: Evaluate the cell adhesion model for each input vector in the sample set.

- Compute Elementary Effects: For each parameter i in each trajectory, calculate the finite-difference quotient EE_i = [f(x + Δ·e_i) - f(x)] / Δ, where e_i is the unit vector along parameter i and Δ is the step size.

- Compute Sensitivity Metrics: For each parameter i, compute the mean of the absolute Elementary Effects (μ*) to assess overall influence, and the standard deviation (σ) to assess non-linearity or interactions.

- Rank Parameters: Sort parameters by decreasing μ*. Parameters with high μ* and high σ are prime candidates for more detailed uncertainty quantification.

Expected Output: A ranked list of input parameters by their influence on cell adhesion, guiding targeted experimental refinement.

Diagram: Global Sensitivity Analysis Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a Monte Carlo Simulation Study

| Item / Solution | Function / Role |

|---|---|

| Pseudo-Random Number Generator (PRNG) | Engine for generating reproducible, statistically random sequences of numbers (e.g., Mersenne Twister). Critical for repeatability. |

| Probability Distribution Fitting Library | Software (e.g., SciPy.stats, fitdistrplus in R) to fit theoretical distributions to empirical data from material characterization. |

| Latin Hypercube Sampler | Advanced sampling technique to efficiently cover multi-dimensional parameter spaces with fewer samples than random sampling. |

| High-Performance Computing (HPC) Cluster or Cloud Instance | Enables running thousands of computationally intensive model evaluations (e.g., finite element models) in parallel. |

| Sensitivity Analysis Toolkit (e.g., SALib) | Dedicated library to implement screening (Morris) and variance-based (Sobol) sensitivity methods. |

| Visualization Suite (Matplotlib/Seaborn, ggplot2) | For creating publication-quality plots of distributions, prediction intervals, and sensitivity indices (tornado plots). |

| Bayesian Calibration Software (e.g., PyMC3, Stan) | For the advanced integration of prior knowledge and experimental data to update input distributions (posteriors). |
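
The Latin hypercube entry in the table can be illustrated with a minimal standard-library sketch. It stratifies each dimension into as many equal bins as there are samples, draws one point per bin, and shuffles the bins independently per dimension. The parameter bounds in `scale_point` are hypothetical; production work would more likely use an established implementation.

```python
import random

def latin_hypercube(n_samples, n_dims, seed=0):
    """Return n_samples points in [0, 1)^n_dims with one point per stratum per dimension."""
    rng = random.Random(seed)
    columns = []
    for _ in range(n_dims):
        # One uniform draw inside each of the n_samples equal-width strata
        col = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(col)   # decouple the stratum order between dimensions
        columns.append(col)
    return [tuple(col[i] for col in columns) for i in range(n_samples)]

def scale_point(point, bounds):
    """Map a unit-cube point onto (min, max) parameter bounds."""
    return tuple(lo + u * (hi - lo) for u, (lo, hi) in zip(point, bounds))

unit_points = latin_hypercube(10, 3, seed=2)
# Hypothetical bounds: porosity (fraction), contact angle (degrees), protein (mg/mL)
bounds = [(0.6, 0.9), (40.0, 100.0), (0.1, 2.0)]
design = [scale_point(p, bounds) for p in unit_points]
```

Every one-dimensional projection of `unit_points` covers all ten strata exactly once, which is what lets the design cover the input space with fewer model runs than plain random sampling.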

Integrating Monte Carlo methods into biomaterials research transforms qualitative "what-ifs" into quantitative risk assessments. By formally accounting for uncertainty in material properties and biological responses, researchers can make more robust predictions about device performance, prioritize experimental variables, and ultimately design more reliable and effective biomaterials and drug delivery systems. The protocols provided serve as a foundational template, adaptable to problems ranging from scaffold design to pharmacokinetic modeling.

Within the framework of a thesis employing Monte Carlo simulations to model and quantify uncertainty in biomaterials research, it is critical to identify and characterize key sources of variability. This document details the primary contributors to uncertainty, from raw material synthesis to clinical performance, and provides protocols for their quantification. These inputs are essential for building robust probabilistic simulation models.

Table 1: Major Sources of Uncertainty in Biomaterial Development

| Uncertainty Category | Specific Source | Typical Impact Range (Quantitative) | Measurement Technique |

|---|---|---|---|

| Raw Material & Synthesis (Batch-to-Batch) | Polymer Molecular Weight (Mw) | PDI Variation: 1.1 - 2.5 | Gel Permeation Chromatography (GPC) |

| Raw Material & Synthesis (Batch-to-Batch) | Nanoparticle Size & Zeta Potential | Size: ± 5-15 nm (from mean); PDI: 0.05 - 0.3; Zeta: ± 5-10 mV | Dynamic Light Scattering (DLS) |

| Raw Material & Synthesis (Batch-to-Batch) | Chemical Functionalization Degree | Variation: ± 10-25% of target degree | NMR, FTIR, Colorimetric Assay |

| Fabrication & Characterization | Scaffold Porosity & Pore Size | Porosity: ± 5-15%; Pore Size Distribution: ± 10-30 μm | Mercury Intrusion Porosimetry, micro-CT |

| Fabrication & Characterization | Drug/Ligand Loading Efficiency | Efficiency: 60-95% with ± 5-15% batch variance | HPLC, UV-Vis Spectroscopy |

| In Vitro to In Vivo | Protein Corona Composition | >300 different proteins identified; composition varies with medium & time | LC-MS/MS Proteomics |

| In Vitro to In Vivo | Degradation Rate (in vitro vs. in vivo) | In vitro half-life may differ from in vivo by 2x - 10x | Mass Loss, GPC, Imaging |

| Host Biological Response (In Vivo Heterogeneity) | Inflammatory Response (IL-1β, TNF-α) | Cytokine levels can vary by 1-2 orders of magnitude between subjects | ELISA, Multiplex Immunoassay |

| Host Biological Response (In Vivo Heterogeneity) | Foreign Body Response (Fibrosis Thickness) | Capsule thickness: 50 - 500 μm across subjects & implantation sites | Histomorphometry |

Experimental Protocols

Protocol 1: Quantifying Batch Variability of Polymeric Nanoparticles

Objective: To characterize size, dispersity (PDI), and surface charge variability across three independent synthesis batches.

Materials: Polymer (e.g., PLGA), solvent (e.g., acetone), surfactant (e.g., PVA), DI water, dialysis tubing, DLS/Zetasizer.

Procedure:

- Synthesis: Using a standard single-emulsion solvent evaporation method, prepare three separate batches (n=1 batch each) from the same raw material stock but on different days.

- Purification: Dialyze each batch against DI water for 24h to remove solvents and free surfactant.

- Characterization:

- Dilute an aliquot from each batch 1:20 in DI water.

- Size/PDI: Perform DLS measurement in triplicate (3 runs of 60 sec each) per batch. Record Z-average diameter and PDI.

- Zeta Potential: Perform measurement in triplicate (minimum 10 runs) using a folded capillary cell. Record zeta potential.

- Analysis: Calculate mean ± standard deviation for each parameter (size, PDI, zeta) across the three batches. Input this distribution data into Monte Carlo parameters.

Protocol 2: Assessing In Vitro Degradation Kinetics for Monte Carlo Input

Objective: To generate degradation rate distributions for a polyester scaffold.

Materials: Pre-weighed polymer scaffolds (e.g., PCL), PBS (pH 7.4, with 0.02% sodium azide), incubator at 37°C, analytical balance, GPC.

Procedure:

- Baseline: Weigh each scaffold (Wi) and characterize initial molecular weight (Mw0) via GPC for a subset (n=5).

- Incubation: Immerse scaffolds (n=10 per time point) in PBS and incubate at 37°C under gentle agitation.

- Sampling: At predetermined time points (e.g., 1, 4, 8, 12 weeks), remove scaffolds.

- Analysis:

- Rinse samples, dry to constant weight, and record final weight (Wf).

- Calculate mass loss: (Wi - Wf)/Wi × 100%.

- For select time points, analyze molecular weight (Mwt) via GPC.

- Modeling: Fit degradation data (mass loss, Mw loss) to a kinetic model (e.g., first-order). Extract the rate constant (k) distribution from the n=10 replicates per time point. Use this distribution in degradation simulations.
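
The mass-loss calculation and the first-order fit can be sketched as follows. Because scaffolds are sampled destructively, grouping one scaffold per time point into a "replicate series" is an assumption of this sketch, and the data are synthetic, generated from a hypothetical rate constant near 0.02 week⁻¹.

```python
import math
import random
import statistics

def mass_loss_percent(w_initial, w_final):
    """Mass loss as a percentage of the initial dry weight."""
    return (w_initial - w_final) / w_initial * 100.0

def fit_first_order_k(times, remaining_fractions):
    """Least-squares slope through the origin for ln(remaining) = -k * t."""
    num = sum(t * math.log(r) for t, r in zip(times, remaining_fractions))
    den = sum(t * t for t in times)
    return -num / den

TIMES_WEEKS = [1, 4, 8, 12]
rng = random.Random(7)

k_estimates = []
for _ in range(10):   # one fit per replicate series (synthetic, hypothetical data)
    k_true = rng.lognormvariate(math.log(0.02), 0.15)
    remaining = [math.exp(-k_true * t) * (1.0 + rng.gauss(0.0, 0.01)) for t in TIMES_WEEKS]
    k_estimates.append(fit_first_order_k(TIMES_WEEKS, remaining))

k_mean = statistics.fmean(k_estimates)
k_sd = statistics.stdev(k_estimates)
```

The resulting `k_mean` and `k_sd` (or the full list of estimates) define the rate-constant distribution passed to the degradation simulations; remaining fractions come from the measured mass loss as `1 - mass_loss_percent(Wi, Wf) / 100`.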

Diagrams

Title: Uncertainty Propagation in Biomaterial Development

Title: Key Immune Signaling Pathways Post-Implantation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Uncertainty Characterization

| Item | Function & Relevance to Uncertainty Quantification |

|---|---|

| Gel Permeation Chromatography (GPC) System | Determines molecular weight (Mw, Mn) and dispersity (PDI) of polymers. Critical for quantifying batch-to-batch variability in raw materials. |

| Dynamic Light Scattering (DLS) / Zeta Potential Analyzer | Measures hydrodynamic diameter, size distribution (PDI), and surface zeta potential of nanoparticles. Primary tool for physical characterization variance. |

| Micro-Computed Tomography (micro-CT) Scanner | Provides 3D visualization and quantitative analysis of scaffold architecture (porosity, pore size, interconnectivity). Captures fabrication heterogeneity. |

| High-Performance Liquid Chromatography (HPLC) | Precisely quantifies drug/active agent loading and release kinetics. Essential for measuring encapsulation efficiency variance. |

| LC-MS/MS System | Identifies and quantifies proteins in the biomaterial corona. Characterizes a major source of unpredictable biological variability. |

| Multiplex Cytokine Assay Kits | Simultaneously measure concentrations of multiple inflammatory cytokines (e.g., IL-1β, IL-6, TNF-α, IL-10) from cell culture or tissue lysates. Quantifies heterogeneous host response. |

| Sterile, Endotoxin-Tested Reagents | Minimizes confounding, non-product-related variability in in vitro and in vivo studies introduced by contaminants. |

Within the broader thesis of Monte Carlo simulations in biomaterials research, this application note addresses a critical bottleneck: the propagation of uncertainty from material fabrication through to performance prediction. Stochastic variations in scaffold fabrication parameters (e.g., pore size, interconnectivity) lead to uncertainty in drug elution kinetics, which subsequently introduces significant variance in predictions of implant lifespan and therapeutic efficacy. Quantifying this uncertainty chain is essential for robust clinical translation.

Table 1: Sources of Uncertainty in Scaffold Fabrication and Their Measured Variance

| Uncertainty Source | Typical Mean Value | Reported Coefficient of Variation (CV) | Impacted Performance Parameter |

|---|---|---|---|

| Porosity (%) | 75% | 8-12% | Initial Burst Release, Permeability |

| Average Pore Diameter (µm) | 200 µm | 10-15% | Drug Diffusion Rate, Cell Infiltration |

| Interconnectivity (%) | 98% | 3-5% | Homogeneity of Drug Elution |

| Polymer Degradation Rate (k, week⁻¹) | 0.05 | 15-20% | Long-Term Release Profile, Implant Integrity |

| Drug Loading Efficiency (%) | 92% | 5-8% | Total Delivered Dose |

Table 2: Impact of Input Variance on Monte Carlo Simulation Outputs

| Simulation Output Metric | With Deterministic Inputs | With Stochastic Inputs (95% CI) | Approximate Spread Relative to Deterministic Value |

|---|---|---|---|

| Time to 50% Drug Release (days) | 28 days | 22 - 36 days | ±25% |

| Time to 10% Scaffold Mass Loss (weeks) | 20 weeks | 16 - 26 weeks | ±25% |

| Predicted Inflammatory Peak (normalized) | 1.0 | 0.7 - 1.5 | ±40% |

Experimental Protocols

Protocol 1: Quantifying Fabrication Uncertainty in Poly(L-lactide-co-ε-caprolactone) Scaffolds

- Aim: To measure the statistical distribution of key morphological parameters from a standard fabrication batch.

- Materials: See "Research Reagent Solutions" below.

- Method:

- Fabricate scaffolds (n=30) via thermally induced phase separation (TIPS) using a standardized protocol.

- Perform micro-computed tomography (µCT) on each scaffold.

- Reconstruct 3D models and use image analysis software (e.g., BoneJ, CTan) to calculate for each scaffold: total porosity, pore size distribution (mean, mode, SD), and degree of interconnectivity.

- Fit distributions (e.g., Normal, Log-Normal) to each parameter dataset to define stochastic input models for Monte Carlo simulation.

Protocol 2: Stochastic Drug Elution Testing with Uncertainty Bounds

- Aim: To generate elution profiles that account for inter-scaffold variability for model validation.

- Method:

- Load a model drug (e.g., fluorescently tagged albumin) into scaffolds (n=20) from Protocol 1 using a standardized immersion method.

- Immerse each scaffold in individual vials containing PBS (pH 7.4, 37°C) under gentle agitation.

- At predetermined time points, sample the release medium and analyze it via fluorescence spectrophotometry. Replace the withdrawn volume with fresh, pre-warmed PBS to maintain sink conditions.

- Plot the mean cumulative release and calculate the 95% confidence interval at each time point to create an "uncertainty envelope."
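
The envelope calculation in the last step is sketched below using a Student-t interval on the mean. The critical value 2.093 corresponds to n = 20 scaffolds (19 degrees of freedom) and is hard-coded because the standard library has no t-distribution; the small example dataset is hypothetical and uses the 3-degrees-of-freedom value 3.182 for its four replicates.

```python
import math
import statistics

T_CRIT_DF19 = 2.093   # two-sided 95% Student-t critical value for n = 20

def ci_envelope(release_by_time, t_crit=T_CRIT_DF19):
    """Return {time: (lower, mean, upper)} from {time: [release per scaffold]}."""
    envelope = {}
    for t, values in release_by_time.items():
        mean = statistics.fmean(values)
        sem = statistics.stdev(values) / math.sqrt(len(values))   # standard error of the mean
        envelope[t] = (mean - t_crit * sem, mean, mean + t_crit * sem)
    return envelope

# Hypothetical cumulative release fractions for four scaffolds at 24 h and 72 h
example = ci_envelope(
    {24: [0.30, 0.34, 0.28, 0.33], 72: [0.55, 0.61, 0.52, 0.58]},
    t_crit=3.182,
)
```

This interval describes uncertainty in the mean release, so it narrows as more scaffolds are tested. When comparing against Monte Carlo prediction intervals for individual scaffolds, percentiles of the raw replicate values are the more comparable quantity.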

Protocol 3: Monte Carlo Simulation for Implant Lifespan Prediction

- Aim: To propagate fabrication uncertainty through a coupled degradation-elution model.

- Method:

- Model Definition: Develop a coupled differential equation model linking stochastic porosity (φ) and degradation rate (k) to drug diffusion coefficient (D) and scaffold modulus (E).

- Input Sampling: For each of 10,000 iterations, sample values for φ, pore diameter, and k from the distributions characterized in Protocol 1.

- Simulation Run: Execute the model for each parameter set over a simulated 52-week period.

- Output Analysis: Aggregate results to generate probability distributions for key endpoints: weeks to 80% drug depletion and weeks to 50% loss of initial compressive modulus. Report median values and prediction intervals (e.g., 5th to 95th percentile).
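
A compact sketch of this propagation is given below. To stay short it replaces the coupled differential equations with closed-form surrogates, which is an assumption of the example: modulus decays as E(t) = E₀·exp(-k·t), and the drug elution rate is taken to scale linearly with porosity and pore diameter plus an erosion term equal to k. Input means and CVs are drawn from the ranges in Table 1 of this section; the baseline elution rate of 0.05 week⁻¹ is hypothetical.

```python
import math
import random
import statistics

HORIZON_WEEKS = 52.0

def positive_normal(rng, mean, cv):
    """Normal draw floored at a small positive value (assumed input model)."""
    return max(rng.gauss(mean, cv * mean), 1e-9)

def simulate_once(rng):
    """Return (weeks to 80% drug depletion, weeks to 50% modulus loss), capped at the horizon."""
    porosity = min(positive_normal(rng, 0.75, 0.10), 0.99)
    pore_um = positive_normal(rng, 200.0, 0.12)
    k = positive_normal(rng, 0.05, 0.18)            # degradation rate (1/week)
    # Assumed surrogate: elution rate scales with porosity and pore size, plus erosion
    release_rate = 0.05 * (porosity / 0.75) * (pore_um / 200.0) + k
    t_drug = math.log(5.0) / release_rate            # remaining drug falls to 20%
    t_modulus = math.log(2.0) / k                    # modulus falls to 50% of E0
    return min(t_drug, HORIZON_WEEKS), min(t_modulus, HORIZON_WEEKS)

def lifespan_distribution(n=10_000, seed=0):
    """Median and 5th-95th percentile interval for both endpoints."""
    rng = random.Random(seed)
    runs = [simulate_once(rng) for _ in range(n)]
    out = {}
    for name, values in (("drug_80pct", [r[0] for r in runs]),
                         ("modulus_50pct", [r[1] for r in runs])):
        cuts = statistics.quantiles(values, n=20)    # cut points at 5%, ..., 95%
        out[name] = {"p5": cuts[0], "median": statistics.median(values), "p95": cuts[-1]}
    return out
```

With a numerically integrated model, only `simulate_once` would change; the sampling and percentile reporting stay the same. Endpoints not reached within 52 weeks are reported at the horizon, which should be read as censored values.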

Visualizations

Uncertainty Propagation in Scaffold Performance

Monte Carlo Simulation Workflow for Lifespan

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Uncertainty-Aware Scaffold Characterization

| Item / Reagent | Function in Uncertainty Quantification | Key Consideration |

|---|---|---|

| Poly(L-lactide-co-ε-caprolactone) (PLCL) | Model biodegradable copolymer for scaffold fabrication. Lot-to-lot viscosity variance is a key uncertainty source. | Characterize inherent viscosity (IV) for each batch. |

| Micro-Computed Tomography (µCT) System | Provides 3D morphological data (porosity, interconnectivity) for statistical analysis, not just averages. | Voxel size should be 3-5x smaller than the smallest pore of interest. |

| Fluorescein Isothiocyanate-Albumin (FITC-BSA) | Model hydrophilic drug surrogate for reproducible, trackable elution studies under uncertainty. | Check for dye leaching in control experiments. |

| Phosphate Buffered Saline (PBS), pH 7.4 | Standard elution medium. Buffer capacity variation can affect degradation, adding uncertainty. | Use standardized powder formulations from a single lot. |

| BoneJ / CTan Image Analysis Plugin | Quantifies pore network metrics from µCT data across multiple samples to build statistical distributions. | Use consistent global thresholding algorithm for all samples. |

| MATLAB or Python (SciPy/NumPy) | Platform for implementing stochastic Monte Carlo simulations and probabilistic analysis. | Requires programming of custom degradation-diffusion models. |

Building Your Simulation: A Step-by-Step Guide to Monte Carlo Workflows in Biomaterial Design

In Monte Carlo (MC) simulations for biomaterials and drug development research, accurately quantifying uncertainty is paramount. The foundational step involves defining all input parameters and assigning them appropriate probability distributions. This step translates deterministic variables into stochastic ones, capturing the inherent biological variability, measurement error, and empirical uncertainty in material properties (e.g., polymer degradation rate, drug diffusivity, surface roughness) and biological responses (e.g., cell adhesion kinetics, protein adsorption rates, drug release profiles). This protocol details the methodology for parameter definition and distribution selection, framed within a thesis on advancing uncertainty quantification in biomaterials science.

Core Probability Distributions and Their Rationale

Normal (Gaussian) Distribution

- Application Context: Used for parameters that are expected to vary symmetrically around a mean value. Ideal for experimental measurement errors, biological traits following the central limit theorem (e.g., average cell diameter in a large population), or properties where the mean ± standard deviation is reported.

- Defining Parameters: Mean (μ, location) and Standard Deviation (σ, scale).

- Limitations: The Normal distribution has unbounded support, so it can generate negative (non-physical) samples for strictly positive parameters, particularly when σ is large relative to μ.

Log-Normal Distribution

- Application Context: Often the default choice in biomaterials research. Used for parameters that are strictly positive and often exhibit right-skewed variability. This includes many material properties (e.g., Young's modulus, degradation rate constant), biological half-lives, drug release coefficients, and pharmacokinetic parameters (e.g., clearance, volume of distribution).

- Defining Parameters: The mean (μ) and standard deviation (σ) of the parameter's natural logarithm. The distribution ensures values are always >0.

- Rationale: Many biological and physicochemical processes are multiplicative, leading to log-normal variance.
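
Because the Log-Normal is parameterized on the log scale, a reported arithmetic mean m and standard deviation s must be converted before sampling: σ² = ln(1 + s²/m²) and μ = ln(m) − σ²/2. A minimal sketch follows; the diffusion-coefficient values are illustrative assumptions.

```python
import numpy as np

def lognormal_params_from_moments(mean, sd):
    """Convert the arithmetic mean and SD of X into (mu, sigma) of ln(X)."""
    sigma2 = np.log(1.0 + (sd / mean) ** 2)
    mu = np.log(mean) - 0.5 * sigma2
    return mu, np.sqrt(sigma2)

# Illustrative values: a diffusion coefficient reported as 2.0e-7 ± 0.8e-7 cm²/s
mu, sigma = lognormal_params_from_moments(mean=2.0e-7, sd=0.8e-7)
samples = np.random.default_rng(0).lognormal(mu, sigma, size=200_000)

print(samples.mean(), samples.std())  # should be close to the reported moments
```

Passing the reported mean and SD directly as μ and σ is a common mistake; it yields a distribution with very different moments.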

Uniform Distribution

- Application Context: Used when only a bounded range of possible values is known, with no central tendency. Applicable for scenarios with "expert opinion" ranges (e.g., pH range in a local tissue environment), preliminary sensitivity analyses, or when no empirical data exists beyond minimum and maximum observed values.

- Defining Parameters: Lower bound (a) and Upper bound (b).

Table 1: Summary of Key Probability Distributions for Biomaterials MC Inputs

| Distribution | Key Parameters (Symbols) | Typical Use Case in Biomaterials Research | Example Parameter |

|---|---|---|---|

| Normal | Mean (μ), Std Dev (σ) | Symmetric variation around a mean; measurement error | Error in spectrophotometric absorbance reading |

| Log-Normal | Mean of ln(X) (μ), Std Dev of ln(X) (σ) | Strictly positive, skewed parameters; material properties | Diffusion coefficient (D) of a drug in a hydrogel |

| Uniform | Minimum (a), Maximum (b) | Bounded uncertainty with no central tendency | Porosity of a scaffold batch (within known limits) |

Protocol: Systematic Literature Review for Parameter Estimation

Objective: To gather empirical data for defining distribution parameters (μ, σ, a, b).

Materials:

- Scientific databases (PubMed, Scopus, Web of Science).

- Statistical software (R, Python with SciPy/NumPy, GraphPad Prism).

- Data extraction spreadsheet.

Methodology:

- Define Parameter List: List all inputs for the MC model (e.g., hydrogel cross-linking density, initial drug load, cell proliferation rate).

- Structured Search: Execute keyword searches combining the parameter and biomaterial (e.g., "PLGA degradation rate constant," "fibronectin adsorption affinity measurement").

- Data Extraction: For each parameter, extract: reported mean/median, standard deviation/error, sample size (n), and range. Note the experimental model (in vitro, in vivo).

- Calculate Pooled Estimates: Where multiple sources exist, calculate a sample-size-weighted mean and pooled standard deviation.

- Assign Distribution Type:

- If data is symmetric and parameter can be negative → Normal.

- If data is positive and right-skewed, or is a product of variables → Log-Normal. Transform data: μ = mean(ln(data)); σ = sd(ln(data)).

- If only min/max are reliably available → Uniform.
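
The pooling step can be sketched as follows, using the standard sample-size-weighted mean and the degrees-of-freedom-weighted pooled standard deviation. The three "studies" are invented numbers for illustration.

```python
import numpy as np

def pool_studies(means, sds, ns):
    """Sample-size-weighted mean and pooled (within-study) standard deviation."""
    means, sds, ns = (np.asarray(a, dtype=float) for a in (means, sds, ns))
    weighted_mean = np.sum(ns * means) / np.sum(ns)
    pooled_sd = np.sqrt(np.sum((ns - 1) * sds**2) / np.sum(ns - 1))
    return weighted_mean, pooled_sd

# Hypothetical degradation rate constants (day^-1) extracted from three sources
weighted_mean, pooled_sd = pool_studies(
    means=[0.10, 0.12, 0.08], sds=[0.02, 0.03, 0.02], ns=[6, 10, 4]
)
print(weighted_mean, pooled_sd)
```

The pooled SD reflects within-study scatter only. When study means differ substantially, between-study variance should be added (for example via a random-effects model), otherwise the input distribution will be too narrow.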

Protocol: Bayesian Updating with Expert Prior Knowledge

Objective: To formalize expert judgment when empirical data is scarce.

Materials: Elicitation tool (e.g., MATCH Uncertainty Elicitation Tool), calibration questionnaires.

Methodology:

- Elicit Beliefs: Present the parameter to 3-5 domain experts. Ask for: (a) lower/upper bounds (5th/95th percentiles): "Within what range is the value 90% likely to fall?" and (b) a best estimate (median), which the Log-Normal fit relies on.

- Fit a Distribution: Use the elicited percentiles to fit a distribution.

- For a Log-Normal, solve for μ and σ given the geometric mean (median) and multiplicative uncertainty.

- Aggregate Expert Opinions: Use linear pooling or Bayesian model averaging to combine distributions from multiple experts into a single prior distribution.

- Update with Data: As new experimental data becomes available, use Bayes' Theorem to update the prior distribution to a posterior distribution, refining the input for future MC simulations.
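
For the Log-Normal case, the elicited 5th and 95th percentiles determine μ and σ in closed form, since ln(X) is Normal: μ is the midpoint of the log-percentiles and σ is their half-width divided by z₀.₉₅ ≈ 1.645. A minimal sketch, with illustrative elicited bounds:

```python
import numpy as np
from scipy import stats

def lognormal_from_percentiles(p5, p95):
    """Solve for (mu, sigma) of ln(X) from elicited 5th and 95th percentiles."""
    z95 = stats.norm.ppf(0.95)
    mu = 0.5 * (np.log(p5) + np.log(p95))          # log of the geometric mean
    sigma = (np.log(p95) - np.log(p5)) / (2.0 * z95)
    return mu, sigma

# Hypothetical expert answer: rate constant is 90% likely within 0.01-0.10 day^-1
mu, sigma = lognormal_from_percentiles(0.01, 0.10)
prior = stats.lognorm(s=sigma, scale=np.exp(mu))

print(prior.median(), prior.ppf([0.05, 0.95]))
```

The implied median is the geometric mean of the two bounds; comparing it with the expert's stated best estimate is a quick consistency check on the elicitation.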

Visualizing the Parameter Definition Workflow

Title: Workflow for Assigning Parameter Distributions in MC Simulations

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Parameter Data Generation

| Item / Reagent | Function in Parameter Estimation |

|---|---|

| Enzyme-Linked Immunosorbent Assay (ELISA) Kits | Quantify specific protein (e.g., growth factor) concentrations released from biomaterials, providing data for release kinetics parameters. |

| Rheometer | Measures viscoelastic properties (G', G'') of hydrogels and soft biomaterials, critical for defining mechanical property distributions. |

| Quartz Crystal Microbalance with Dissipation (QCM-D) | Provides real-time, label-free data on protein adsorption mass and viscoelasticity, informing adsorption rate parameters. |

| High-Performance Liquid Chromatography (HPLC) | Quantifies drug or degradation product concentration, essential for defining drug release and polymer degradation parameters. |

| Cell Counting Kit-8 (CCK-8) / MTS Assay | Generates reproducible cell proliferation and viability data, forming the basis for cytotoxicity and growth rate input distributions. |

| Atomic Force Microscope (AFM) | Measures surface topography and nanomechanical properties, providing data for surface roughness and local modulus distributions. |

| Statistical Software (R with fitdistrplus package) | Fits probability distributions to empirical data, calculates maximum likelihood estimates for μ and σ, and assesses goodness-of-fit. |

| MATCH Uncertainty Elicitation Tool | Aids in formally capturing expert judgment as probability distributions for use as prior information in Bayesian updating. |

In Monte Carlo simulations for biomaterials research, such as predicting drug release kinetics from a polymeric scaffold or modeling cellular adhesion probability on a nano-textured surface, input parameters (e.g., degradation rate, porosity, ligand density) are subject to uncertainty. Efficient sampling of this multi-dimensional parameter space is critical for robust uncertainty quantification without prohibitive computational cost. This note compares Latin Hypercube Sampling (LHS) and Simple Random Sampling (SRS) for this purpose.

Quantitative Comparison of Sampling Techniques

Table 1: Core Characteristics and Performance Comparison

| Feature | Simple Random Sampling (SRS) | Latin Hypercube Sampling (LHS) |

|---|---|---|

| Core Principle | Pure random selection from entire parameter space. | Stratified random sampling ensuring each parameter range is evenly covered. |

| Space Filling | Poor for small sample sizes; clusters and gaps likely. | Excellent projective properties; each parameter's distribution is fully stratified. |

| Variance of Mean Estimate | Higher for small N; variance decreases as 1/N (standard error as 1/√N). | Typically lower for smooth functions, leading to faster convergence. |

| Computational Efficiency (to achieve same error) | Lower; requires more simulation runs. | Higher; achieves comparable accuracy with fewer runs. |

| Implementation Complexity | Simple to generate. | More complex; requires random permutation within stratified bins. |

| Best Suited For | Very high-dimensional problems where LHS stratification becomes less effective, or for verification. | Computationally expensive models with moderate dimensionality (<~50) and smooth responses. |

Table 2: Illustrative Efficiency Data from Recent Simulations (Biomaterial Context)

| Study Context (Simulated Outcome) | Sampling Method | Sample Size (N) Required for ±5% Error in Mean | Relative Computational Time (Normalized) |

|---|---|---|---|

| Nanoparticle Cellular Uptake Efficiency | SRS | 10,000 | 100 |

| Nanoparticle Cellular Uptake Efficiency | LHS | 1,500 | 15 |

| Polymer Degradation Time (5 params) | SRS | 5,000 | 100 |

| Polymer Degradation Time (5 params) | LHS | 800 | 16 |

| Drug Release Profile AUC (3 params) | SRS | 2,000 | 100 |

| Drug Release Profile AUC (3 params) | LHS | 400 | 20 |

Experimental Protocols for Implementation

Protocol 3.1: Generating a Simple Random Sample (SRS)

Purpose: To create a baseline set of random input parameters for a Monte Carlo simulation.

Materials:

- Pseudorandom number generator (e.g., Mersenne Twister).

- Defined probability distribution for each input parameter (e.g., Normal, Uniform).

Procedure:

- For each of the k input parameters, define its statistical distribution (e.g., pore size ~ N(mean=10µm, sd=2µm)).

- Set the total number of simulation runs, N.

- For i = 1 to N:

  a. For each parameter j = 1 to k:

     i. Generate a uniform random number u ~ U(0,1).

     ii. Transform u using the inverse cumulative distribution function (CDF) for parameter j to obtain the sample value x_ij.

  b. The vector [x_i1, x_i2, ..., x_ik] defines the input for the i-th simulation.

- Run the deterministic model (e.g., finite element analysis of drug diffusion) with each input vector.
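
The inverse-CDF procedure above can be sketched with SciPy's frozen distributions, whose `ppf` method is the inverse CDF. The two example distributions and the function name `simple_random_sample` are illustrative assumptions.

```python
import numpy as np
from scipy import stats

def simple_random_sample(distributions, n_runs, seed=None):
    """Return an (n_runs, k) input matrix drawn by inverse-CDF transformation."""
    rng = np.random.default_rng(seed)
    u = rng.random((n_runs, len(distributions)))      # u ~ U(0,1), one per entry
    return np.column_stack(
        [dist.ppf(u[:, j]) for j, dist in enumerate(distributions)]
    )

# Example inputs: pore size ~ N(10 µm, 2 µm), porosity ~ U(0.6, 0.8)
dists = [stats.norm(loc=10.0, scale=2.0), stats.uniform(loc=0.6, scale=0.2)]
X = simple_random_sample(dists, n_runs=1000, seed=0)

print(X[:3])  # each row is one input vector for the deterministic model
```

Drawing directly with `dist.rvs` is equivalent for SRS; the explicit inverse-CDF form is shown because it is the step that Latin Hypercube Sampling reuses.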

Protocol 3.2: Generating a Latin Hypercube Sample (LHS)

Purpose: To create a stratified random sample ensuring full coverage of each parameter's range.

Materials:

- Pseudorandom number generator.

- Defined probability distribution for each input parameter.

Procedure:

- For each of the k input parameters, define its statistical distribution.

- Set the total number of simulation runs, N.

- Stratification: a. Divide the cumulative probability range [0,1] for each parameter into N equal intervals: [0, 1/N), [1/N, 2/N), ..., [(N-1)/N, 1].

- Random Permutation & Sampling:

  a. For each parameter j, generate a random permutation of the interval numbers 1,...,N.

  b. For each run i (i = 1 to N):

     i. Take the interval index from the i-th position of the permutation for parameter j.

     ii. Generate a uniform random number u within the selected probability interval.

     iii. Transform u via the inverse CDF of parameter j to obtain the sample value x_ij.

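- Assemble the N k-dimensional input vectors. Because each parameter's permutation is drawn independently, strata are paired at random across parameters, and each parameter's marginal distribution is sampled exactly once in every one of its N equal-probability intervals.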
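
A minimal NumPy sketch of the stratification and permutation steps, using zero-based interval indices. The function name `latin_hypercube_sample` and the example distributions are assumptions for illustration.

```python
import numpy as np
from scipy import stats

def latin_hypercube_sample(distributions, n_runs, seed=None):
    """Return an (n_runs, k) Latin Hypercube design via inverse-CDF mapping."""
    rng = np.random.default_rng(seed)
    X = np.empty((n_runs, len(distributions)))
    for j, dist in enumerate(distributions):
        strata = rng.permutation(n_runs)              # interval index for each run
        u = (strata + rng.random(n_runs)) / n_runs    # uniform draw inside interval
        X[:, j] = dist.ppf(u)                         # map to parameter scale
    return X

dists = [stats.uniform(loc=0.0, scale=1.0), stats.norm(loc=10.0, scale=2.0)]
X = latin_hypercube_sample(dists, n_runs=50, seed=3)

# Each of the 50 equal-probability intervals of column 0 holds exactly one point
counts = np.bincount(np.floor(X[:, 0] * 50).astype(int), minlength=50)
print(counts.min(), counts.max())
```

SciPy also ships a ready-made generator, `scipy.stats.qmc.LatinHypercube`, which returns the unit-cube design and can be combined with the same `ppf` mapping.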

Protocol 3.3: Comparative Evaluation of Sampling Efficiency

Purpose: To empirically determine the efficiency gain of LHS over SRS for a specific biomaterial model.

Materials:

- A calibrated, deterministic computational model (e.g., COMSOL model of hydrogel swelling).

- Python/R scripts implementing SRS and LHS generators.

Procedure:

- Select a key scalar output metric from your model (e.g., peak stress, total drug released at t=24h).

- For a sequence of sample sizes (e.g., N = [50, 100, 200, 500, 1000, 5000]):

  a. Generate M = 100 independent SRS designs of size N.

  b. Generate M = 100 independent LHS designs of size N.

  c. For each design, run the N simulations and compute the sample mean (µ_est) of the output metric.

- Analysis:

  a. For each N and method, compute the variance of the M estimated means. This estimates the variance of the sample mean.

  b. Plot Var(µ_est) vs. N for both methods. The faster-decaying curve indicates the more efficient sampler.

  c. Calculate the relative efficiency, Var_SRS(µ_est) / Var_LHS(µ_est), at a fixed N. A ratio >1 indicates LHS is more efficient.
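
The comparison can be prototyped on a cheap analytic function before committing solver time. In this sketch the `model` function is a hypothetical smooth response on the unit cube, standing in for the real simulation; inputs are left uniform for brevity.

```python
import numpy as np

def model(x):
    # Hypothetical smooth response; replace with the real simulation call
    return np.exp(x[:, 0]) + 2.0 * x[:, 1] + x[:, 2] ** 2

def srs(n, k, rng):
    return rng.random((n, k))

def lhs(n, k, rng):
    strata = np.column_stack([rng.permutation(n) for _ in range(k)])
    return (strata + rng.random((n, k))) / n

def variance_of_mean(sampler, n, k=3, replicates=100, seed=0):
    """Variance of the estimated mean across independent designs of size n."""
    rng = np.random.default_rng(seed)
    means = [model(sampler(n, k, rng)).mean() for _ in range(replicates)]
    return np.var(means, ddof=1)

for n in (50, 200, 1000):
    v_srs = variance_of_mean(srs, n)
    v_lhs = variance_of_mean(lhs, n)
    print(f"N={n}: Var_SRS={v_srs:.2e}, Var_LHS={v_lhs:.2e}, "
          f"ratio={v_srs / v_lhs:.1f}")
```

This toy response is purely additive, which is the most favorable case for LHS; models with strong parameter interactions usually show a smaller gain, so the ratio should be measured on the actual model.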

Visualizations

Diagram Title: Workflow for Comparing SRS and LHS in Monte Carlo Simulations

Diagram Title: LHS Stratification and Random Point Selection

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Sampling in Biomaterial Simulations

| Item / Software | Function in Sampling & Uncertainty Analysis |

|---|---|

| Python (SciPy/NumPy) | Core programming environment for implementing custom SRS and LHS algorithms, and for statistical analysis of output data. |

| Chaospy / SALib | Dedicated Python libraries for advanced sensitivity analysis and sophisticated sampling designs (including LHS). |

| MATLAB Statistics & ML Toolbox | Provides built-in functions like lhsdesign and lhsnorm for rapid generation of LHS plans. |

| R (mvtnorm / lhs packages) | Statistical computing environment with packages for generating random samples from multivariate distributions and LHS. |

| OpenTURNS / DAKOTA | Advanced frameworks for coupling sampling methods with external simulation codes for industrial-scale uncertainty quantification. |

| Jupyter Notebook | Interactive environment for documenting the sampling protocol, visualizing results, and ensuring reproducibility. |

| High-Performance Computing (HPC) Cluster | Essential for running the thousands of individual simulations (often FEM/CFD) required for robust Monte Carlo analysis. |

Integrating Monte Carlo (MC) simulations with high-fidelity physics-based models is a critical step in advancing predictive biomaterials research. This step quantifies how stochastic uncertainty in input parameters (e.g., material porosity, drug diffusivity, degradation rate) propagates through deterministic Finite Element Method (FEM), Computational Fluid Dynamics (CFD), or Pharmacokinetic/Pharmacodynamic (PK/PD) models. The output provides a probabilistic range of performance outcomes, enabling robust design and risk assessment for biomedical implants, drug-eluting scaffolds, and tissue-engineered constructs.

The following table summarizes the core quantitative linkages between MC uncertainty analysis and the subsequent deterministic models.

Table 1: Mapping of MC-Propagated Uncertain Parameters to High-Fidelity Model Outputs

| Primary Model | Typical MC-Perturbed Input Parameters | Key Quantitative Outputs of Interest | Typical Uncertainty Metric (e.g., 95% CI) |

|---|---|---|---|

| FEM (Biomechanics) | Young's modulus, Poisson's ratio, scaffold porosity, degradation rate constant. | Stress distribution (MPa), strain energy, fatigue life cycles, displacement fields. | ±15-25% variation in max stress; ±20-30% in predicted life cycles. |

| CFD (Transport) | Viscosity, density, permeability, drug diffusion coefficient, inlet flow rate. | Wall shear stress (Pa), pressure drop (mmHg), drug concentration flux, mixing efficiency. | ±10-20% variation in wall shear stress; ±25-40% in localized drug concentration. |

| PK/PD (Therapeutics) | Clearance rate, volume of distribution, binding affinity (Kd), reaction rate constants. | Plasma concentration (µg/mL), time above efficacy threshold, tumor growth inhibition score. | ±30-50% variation in Cmax and AUC; ±40-60% in predicted efficacy time. |

Experimental Protocols

Protocol 3.1: Linking Monte Carlo Simulations to a Finite Element Model of a Bone Scaffold

Objective: To propagate uncertainty in material properties to the predicted stress-shielding performance of a porous titanium alloy implant.

MC Input Sampling:

- Define probability distributions for key inputs: Young's Modulus (Normal dist., mean=110 GPa, SD=11 GPa), Porosity (Beta dist., α=2, β=2, range=60-80%).

- Using a Latin Hypercube Sampling (LHS) strategy, generate 500 independent parameter sets.

Automated Model Execution:

- Script a workflow (Python/ MATLAB) where each parameter set automatically generates a modified input file for the FEM solver (e.g., Abaqus, FEniCS).

- The script must update the material property definitions and regenerate the mesh density consistent with the sampled porosity via a pre-defined scaling law.

FEM Simulation & Output Extraction:

- Execute each FEM simulation to solve a static structural analysis under a standardized gait-cycle load (700 N).

- From each run, extract the maximum von Mises stress in the scaffold and the average stress in the adjacent bone tissue.

Post-Processing & Uncertainty Quantification:

- Compile all 500 output pairs (scaffold stress, bone stress).

- Perform kernel density estimation to create joint probability distributions.

- Calculate the probability of stress shielding, defined here as the fraction of runs in which the average bone stress falls below 2 MPa, and compare it against a pre-specified acceptable risk level.
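
The sampling and post-processing steps of this protocol can be sketched as below. The function `run_fem_placeholder` is a made-up algebraic stand-in for the solver call; its formulas and constants are assumptions with no physical authority and exist only so the script runs end to end.

```python
import numpy as np
from scipy import stats
from scipy.stats import qmc

n_runs = 500

# Latin Hypercube design on the unit square, mapped through each inverse CDF
u = qmc.LatinHypercube(d=2).random(n=n_runs)
youngs_gpa = stats.norm(loc=110.0, scale=11.0).ppf(u[:, 0])
porosity = 0.60 + 0.20 * stats.beta(2, 2).ppf(u[:, 1])  # Beta(2,2) rescaled to 60-80%

def run_fem_placeholder(e_gpa, phi):
    """Stand-in for the FEM run; returns (scaffold stress, bone stress) in MPa."""
    e_eff = e_gpa * (1.0 - phi) ** 2            # assumed power-law porosity scaling
    bone_stress = 4.0 * 15.0 / (15.0 + e_eff)   # stiffer implant, less load in bone
    scaffold_stress = 30.0 / (1.0 - phi)
    return scaffold_stress, bone_stress

scaffold_stress, bone_stress = run_fem_placeholder(youngs_gpa, porosity)

# Probability of stress shielding and joint density of the two outputs
p_shielding = np.mean(bone_stress < 2.0)
joint_kde = stats.gaussian_kde(np.vstack([scaffold_stress, bone_stress]))
print(f"P(bone stress < 2 MPa) = {p_shielding:.3f}")
```

In practice the placeholder is replaced by a routine that writes the solver input file, launches the job, and parses the two stresses from the results.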

Protocol 3.2: Linking Monte Carlo to CFD for a Drug-Eluting Stent

Objective: To assess the impact of hemodynamic and release parameter uncertainty on drug uptake in arterial tissue.

Uncertain Parameter Definition:

- Assign distributions: Blood viscosity (Uniform, ±10% of 3.5 cP), Arterial Wall Permeability (Lognormal, median=1e-18 m²), Drug Release Rate Constant (Normal, mean=0.15 hr⁻¹, SD=0.03).

CFD-MC Coupling Setup:

- Use a baseline 3D stent geometry meshed in ANSYS Fluent or OpenFOAM.

- Implement a user-defined function (UDF) for the drug release boundary condition, parameterized by the release rate constant.

- Create a batch script that, for each of 300 MC samples, modifies the UDF and material properties, then runs the transient CFD simulation for 24 simulated hours.

Output Analysis:

- For each run, record the spatial drug concentration profile in the arterial wall at t=24h.

- Compute the area of tissue where concentration exceeds the therapeutic threshold (e.g., 5 µM).

Statistical Visualization:

- Generate a probability map (confidence interval contours) superimposed on the arterial geometry showing the likely drug distribution.

- Report the 5th and 95th percentile for the effective treatment area.

Visualization of Workflows

- Diagram 1 Title: MC-Driven Multi-Model Simulation Workflow

- Diagram 2 Title: Uncertainty Propagation Logic from Inputs to Output

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Computational Tools for MC-Linked Modeling

| Tool/Reagent | Category | Primary Function in Protocol | Example Vendor/Platform |

|---|---|---|---|

| Python (SciPy/NumPy) | Programming & Statistics | Core MC sampling, data management, and statistical post-processing. | Open Source |

| MATLAB | Numerical Computing | Rapid prototyping of sampling algorithms and PK/PD model coupling. | MathWorks |

| Abaqus/ ANSYS | FEM/CFD Solver | High-fidelity deterministic physics-based simulations. | Dassault Systèmes / ANSYS Inc. |

| OpenFOAM | CFD Solver | Open-source solver for customizable fluid and transport simulations. | Open Source |

| Simulink/ COPASI | PK/PD Modeling | Visual or computational framework for pharmacokinetic-pharmacodynamic systems. | MathWorks / Open Source |

| Dakota/ Chaospy | Uncertainty Quantification | Advanced toolkit for design of experiments, sensitivity analysis, and UQ. | Sandia Natl. Labs / Open Source |

| ParaView/ Tecplot | Visualization | Rendering of probabilistic output fields (e.g., confidence contours on geometry). | Open Source / Tecplot, Inc. |

| High-Performance Computing (HPC) Cluster | Hardware | Enables execution of hundreds to thousands of computationally intensive model runs. | Local University / Cloud (AWS, Azure) |

In Monte Carlo simulation for biomaterials research, Step 4 transforms raw simulation data into actionable insights. This phase quantifies uncertainty, identifies critical input parameters, and estimates reliability, which is essential for applications like drug-eluting stent design, scaffold degradation modeling, or nanoparticle biodistribution prediction.

Core Analytical Techniques: Protocols & Application Notes

Sensitivity Analysis Protocol

Objective: To rank input parameters (e.g., material degradation rate, drug diffusion coefficient, surface ligand density) based on their influence on a critical output (e.g., total drug released, time to mechanical failure).

Detailed Protocol:

- Define the Model: Let \( Y = f(X_1, X_2, \ldots, X_k) \) be the simulation output, where \( X_i \) are stochastic input parameters defined by probability distributions (e.g., log-normal for degradation rate).

- Generate Sample Matrix: Using a Latin Hypercube Sampling (LHS) design, generate an \( N \times k \) input matrix. A typical \( N \) ranges from 500 to 5000, depending on model runtime.

- Run Simulations: Execute the Monte Carlo model for each of the \( N \) input sets.

- Calculate Sensitivity Indices: Compute Sobol' indices via the Saltelli estimator. Note that this estimator needs two independent \( N \times k \) base matrices plus \( k \) hybrid matrices, i.e. \( N(k+2) \) model runs for first-order and total-effect indices, rather than a single \( N \times k \) design.

- Total-Effect Index (\( S_{Ti} \)): Measures the total contribution of \( X_i \) to output variance, including interactions. With \( X_{\sim i} \) denoting all inputs except \( X_i \), it is calculated using: \[ V_{Y} = \text{Var}(Y), \quad V_{Y_{\sim i}} = \text{Var}\left(E[Y \mid X_{\sim i}]\right), \quad S_{Ti} = 1 - \frac{V_{Y_{\sim i}}}{V_{Y}} \]

- Rank Parameters: Rank \( X_i \) in descending order of \( S_{Ti} \). Parameters with \( S_{Ti} > 0.1 \) are typically considered highly influential.
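
A NumPy-only sketch of a total-effect calculation is given below. It uses the Jansen form of the estimator on two base matrices A and B, which is one common variant and not necessarily the exact formula used by any particular package. The toy model and all function names are assumptions.

```python
import numpy as np

def total_effect_indices(model, sample_inputs, n_base, k, seed=0):
    """Estimate Sobol' total-effect indices with the Jansen estimator."""
    rng = np.random.default_rng(seed)
    A = sample_inputs(rng, n_base, k)
    B = sample_inputs(rng, n_base, k)
    y_A = model(A)
    var_y = np.var(np.concatenate([y_A, model(B)]), ddof=1)
    s_t = np.empty(k)
    for i in range(k):
        AB_i = A.copy()
        AB_i[:, i] = B[:, i]                  # resample only input i
        # E[(f(A) - f(AB_i))^2] / 2 estimates E[Var(Y | X_~i)]
        s_t[i] = np.mean((y_A - model(AB_i)) ** 2) / (2.0 * var_y)
    return s_t

def uniform_inputs(rng, n, k):
    return rng.random((n, k))

def toy_model(x):
    # Additive test case: analytic total-effect indices are 0.2 and 0.8
    return x[:, 0] + 2.0 * x[:, 1]

s_t = total_effect_indices(toy_model, uniform_inputs, n_base=20_000, k=2)
print(s_t)
```

The total number of model evaluations is N(k+2). For production use, a maintained library such as SALib provides sampling and index estimation with confidence intervals.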

Data Presentation (Table 1): Typical Sensitivity Results for a Polymeric Scaffold Degradation Model

| Rank | Input Parameter | Distribution | Total-Effect Index (S_Ti) | Interpretation |

|---|---|---|---|---|

| 1 | Crystallinity (%) | Normal (μ=40, σ=5) | 0.52 | Dominant factor controlling erosion rate. |

| 2 | Initial Mol. Weight (kDa) | Log-normal (μ=5.0, σ=0.2) | 0.23 | Significant influence on initial strength. |

| 3 | Hydrolysis Rate Constant (day⁻¹) | Uniform (min=0.05, max=0.15) | 0.08 | Moderate effect on degradation timeline. |

| 4 | Porosity (%) | Beta (α=2, β=5) | 0.03 | Minimal direct effect in this model setup. |

Confidence Interval Construction Protocol

Objective: To estimate a range (interval) within which a population parameter (e.g., mean time to failure) lies, with a specified probability (confidence level).

Detailed Protocol (Bootstrap Method for Simulation Output):

- Collect Simulation Output: Let \( y = \{y_1, y_2, \ldots, y_N\} \) be the \( N \) output values from the Monte Carlo run.

- Set Parameters: Choose confidence level \( CL \) (e.g., 95%), number of bootstrap samples \( B \) (e.g., 5000-10000).

- Bootstrap Resampling:

- For \( b = 1 \) to \( B \):

- Draw a random sample of size \( N \) with replacement from \( y \), creating \( y^{*b} \).

- Calculate the statistic of interest (e.g., the mean, \( \theta^{*b} = \text{mean}(y^{*b}) \)).

- Construct Interval: Sort the \( B \) bootstrap statistics. The \( (1-CL)/2 \) and \( 1-(1-CL)/2 \) quantiles form the lower and upper bounds of the percentile bootstrap CI.

- For 95% CI: Lower = 2.5th percentile, Upper = 97.5th percentile of \( \{\theta^{*1}, \ldots, \theta^{*B}\} \).
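
The percentile bootstrap can be sketched in a few lines of NumPy. The output vector below is synthetic, and the function name `bootstrap_percentile_ci` is hypothetical.

```python
import numpy as np

def bootstrap_percentile_ci(y, statistic=np.mean, confidence=0.95,
                            n_boot=5000, seed=0):
    """Point estimate and percentile bootstrap CI for an arbitrary statistic."""
    rng = np.random.default_rng(seed)
    y = np.asarray(y, dtype=float)
    # Each row of idx is one resample of size N drawn with replacement
    idx = rng.integers(0, y.size, size=(n_boot, y.size))
    theta = np.apply_along_axis(statistic, 1, y[idx])
    alpha = 1.0 - confidence
    lower, upper = np.quantile(theta, [alpha / 2, 1 - alpha / 2])
    return statistic(y), lower, upper

# Synthetic Monte Carlo output: time to 80% release (days), illustrative only
y = np.random.default_rng(1).normal(28.5, 9.0, size=500)

est, lo, hi = bootstrap_percentile_ci(y)
print(f"mean = {est:.1f} days, 95% CI [{lo:.1f}, {hi:.1f}]")
```

Passing `statistic=np.std` or a custom function gives intervals for other summaries, such as the burst-release standard deviation.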

Data Presentation (Table 2): Confidence Intervals for Simulated Drug Release Kinetics

| Output Metric | Point Estimate | 95% Bootstrap CI (Lower) | 95% Bootstrap CI (Upper) | Interpretation |

|---|---|---|---|---|

| Mean Time to 80% Release (days) | 28.5 | 26.8 | 30.3 | We are 95% confident the true mean is between 26.8 and 30.3 days. |

| Std. Dev. of Burst Release (%) | 12.1 | 10.5 | 14.0 | Significant variability in initial burst. |

Probability of Failure Calculation Protocol

Objective: To estimate the likelihood that a biomaterial system will not meet a defined performance criterion (failure).

Detailed Protocol:

- Define Failure Event: Specify a limit state function \( G(X) \). Failure occurs when \( G(X) \leq 0 \).

- Example: For scaffold compressive strength, \( G(X) = \text{Simulated Strength}(X) - \text{Required Minimum Strength} \).

- Count Failure Events: From the \( N \) Monte Carlo runs, count the number of simulations where \( G(X) \leq 0 \). Let this count be \( N_f \).

- Estimate Probability: The point estimate for Probability of Failure (\( P_f \)) is: \[ \hat{P}_f = \frac{N_f}{N} \]

- Assess Accuracy: Ensure sufficient failures for a reliable estimate. A rule of thumb: \( N \times \hat{P}_f > 10 \). For very low \( P_f \) (e.g., < 0.001), advanced methods like Subset Simulation are required.
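
A minimal sketch of the point estimate together with an exact (Clopper-Pearson) binomial interval, computed from Beta quantiles. The run count of 5000 in the example is an assumption chosen to be consistent with a failure count of 47 and an estimate of 0.0094.

```python
from scipy import stats

def failure_probability(n_fail, n_runs, confidence=0.95):
    """Point estimate and Clopper-Pearson interval for P_f = N_f / N."""
    alpha = 1.0 - confidence
    p_hat = n_fail / n_runs
    lower = (stats.beta.ppf(alpha / 2, n_fail, n_runs - n_fail + 1)
             if n_fail > 0 else 0.0)
    upper = (stats.beta.ppf(1 - alpha / 2, n_fail + 1, n_runs - n_fail)
             if n_fail < n_runs else 1.0)
    return p_hat, lower, upper

# n_fail would normally come from counting runs with G(X) <= 0
p_hat, lower, upper = failure_probability(n_fail=47, n_runs=5000)
print(f"P_f = {p_hat:.4f}, 95% CI [{lower:.4f}, {upper:.4f}]")
```

The interval width makes the rule of thumb concrete: with only a handful of observed failures the upper bound can be several times the point estimate.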

Data Presentation (Table 3): Failure Probability for a Load-Bearing Implant

| Failure Mode | Limit State (G(X)) | Failure Count (N_f) | P_f Estimate | 95% CI for P_f (Clopper-Pearson) |

|---|---|---|---|---|

| Fatigue Fracture (1 yr) | Cycles to fracture - 1e6 cycles | 47 | 0.0094 | [0.0069, 0.0125] |

| Excessive Deformation | Max displacement - 2 mm | 12 | 0.0024 | [0.0012, 0.0042] |

The Scientist's Toolkit: Research Reagent & Computational Solutions

| Item Name | Category | Function in Analysis | Example Supplier/Software |

|---|---|---|---|

| SALib | Software Library (Python) | Performs global sensitivity analysis (Sobol', Morris, FAST). Calculates indices from input/output data. | Open-source (GitHub) |

| Bootstrap Resampling Code | Algorithm | Implements non-parametric CI estimation for any complex output statistic. | Custom implementation in R (boot package) or Python. |

| Subset Simulation | Advanced Algorithm | Efficiently estimates very low probabilities of failure (< 1e-3) for reliable risk assessment. | Open-source implementations (e.g., UQpy). |

| Latin Hypercube Sampler | Sampling Tool | Generates efficient, space-filling input parameter sets for sensitivity analysis. | pyDOE, Chaospy, or custom code. |

| High-Performance Computing (HPC) Cluster | Infrastructure | Enables execution of thousands of computationally intensive biomaterial model runs in parallel. | Institutional or cloud-based (AWS, Azure). |

| Statistical Visualization Library | Software Library | Creates clear plots for CIs, PDFs, and sensitivity indices (e.g., tornado charts). | Matplotlib/Seaborn (Python), ggplot2 (R). |

Within the broader thesis framework of applying Monte Carlo (MC) simulations to quantify uncertainty in biomaterials research, this application note details the protocol for simulating hydrolytic degradation profiles of aliphatic polyesters (e.g., PLGA, PLA). This is critical for predicting scaffold performance in tissue engineering and controlled drug delivery.

The simulation models stochastic chain scission events in an aqueous environment. Key input parameters, their sources, and typical uncertainty ranges are summarized below.

Table 1: Key Input Parameters and Their Uncertainty Ranges

| Parameter | Symbol | Typical Value / Range | Source / Measurement Method | Uncertainty (as ±1σ) |

|---|---|---|---|---|

| Initial Number-Average DP | Nₙ₀ | 200 - 1000 | Gel Permeation Chromatography (GPC) | ±5% of measured value |

| Hydrolysis Rate Constant | k | 1e-4 to 1e-2 day⁻¹ | Fitting to in vitro mass loss data | ±25% of fitted value |

| Local Acidic Autocatalysis Factor | α | 1 (surface) to 15 (core) | pH microsensor measurement; MC sampling | Uniform Dist. [5, 15] for core |

| Critical Chain Length for Solubilization | L_c | 6 - 12 monomer units | Literature meta-analysis; Dissolution assay | Discrete Uniform [6, 7, ..., 12] |

| Scaffold Porosity | ε | 0.75 - 0.90 | Micro-CT imaging | ±0.03 |

Table 2: Example Monte Carlo Simulation Output (PLGA 50:50, Nₙ₀=500, n=1000 runs)

| Time Point (Days) | Mean Mass Remaining (%) | Std. Dev. (±%) | 95% Credible Interval (%) | Probability of Full Erosion |

|---|---|---|---|---|

| 30 | 92.1 | 3.2 | [86.1, 97.8] | 0.00 |

| 60 | 75.4 | 6.8 | [63.2, 87.9] | 0.02 |

| 90 | 52.3 | 10.1 | [34.5, 71.6] | 0.15 |

| 120 | 28.7 | 9.8 | [11.5, 48.2] | 0.68 |

Detailed Experimental Protocols

Protocol 2.1: In Vitro Degradation Study for Parameter Calibration

Objective: Generate empirical data to calibrate the hydrolysis rate constant (k) and observe autocatalytic effects.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Sample Preparation: Fabricate PLGA scaffolds (n=50 per group) via solvent casting/particulate leaching. Sterilize via ethanol immersion and UV light.

- Degradation Setup: Immerse scaffolds in 10 mL of phosphate-buffered saline (PBS, pH 7.4) at 37°C under gentle agitation. Replace PBS every 7 days to maintain sink conditions.

- Time-Point Sampling: At predetermined intervals (e.g., 1, 7, 30, 60 days), remove scaffolds (n=5 per time point).

- Mass Loss Measurement: Rinse samples in deionized water, lyophilize for 48h, and record dry mass (M_t). Calculate percentage mass remaining: (M_t / M_0) * 100.

- Molecular Weight Analysis: Dissolve a portion of the dried scaffold in tetrahydrofuran (THF). Analyze via GPC to determine the evolving number-average degree of polymerization (N_n).

- Morphology Analysis: Image cross-sections via scanning electron microscopy (SEM) to quantify pore size change and observe bulk vs. surface erosion patterns.

Protocol 2.2: Monte Carlo Simulation of Degradation

Objective: Propagate parameter uncertainties to predict a distribution of degradation timelines.

Computational Procedure:

- Initialize System: Define a 3D lattice representing the scaffold. Assign each polymer chain a starting length sampled from a Flory distribution centered on Nₙ₀.

- Parameter Sampling: For each simulation iteration (i=1 to n, e.g., n=5000), sample input parameters from their defined probability distributions (see Table 1).

- Stochastic Scission Loop: For each time step (Δt):

  a. Calculate the instantaneous scission probability for each cleavable ester bond: P_scission = 1 - exp(-k * α * Δt). The factor α is spatially defined (higher in inner regions if a pH gradient is modeled).

  b. Use a random number generator (RNG) to decide whether each bond breaks.

  c. Update chain lengths. Flag chains shorter than the sampled L_c for dissolution and removal from the lattice.

- Calculate Outputs: Track system-wide mass loss and molecular weight averages over time.

- Post-Process: Aggregate results from all iterations to generate distributions (mean, standard deviation, credible intervals) for mass loss and erosion time at each simulated time point.
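
The procedure can be sketched in a simplified, non-spatial form. This version assumes a single α for the whole scaffold (no lattice or pH gradient), approximates the Flory distribution with a geometric draw, and uses illustrative parameter distributions loosely based on Table 1. It will not reproduce the values in Table 2.

```python
import numpy as np

def simulate_mass_remaining(k, alpha, l_c, n_chains=20, dp0=500,
                            dt=5.0, n_steps=24, rng=None):
    """Percent mass remaining after each time step for one parameter set."""
    rng = np.random.default_rng() if rng is None else rng
    # Flory (most probable) chain lengths, approximated as geometric, mean dp0
    lengths0 = rng.geometric(1.0 / dp0, size=n_chains)
    total = int(lengths0.sum())
    # intact[b] is True while the bond between monomers b and b+1 exists
    intact = np.ones(total - 1, dtype=bool)
    intact[np.cumsum(lengths0)[:-1] - 1] = False   # separate chains share no bond
    p_scission = 1.0 - np.exp(-k * alpha * dt)
    mass = np.empty(n_steps)
    for step in range(n_steps):
        intact &= rng.random(total - 1) >= p_scission    # random bond breakage
        edges = np.concatenate(([0], np.flatnonzero(~intact) + 1, [total]))
        fragments = np.diff(edges)
        # Fragments shorter than l_c count as dissolved and carry no mass
        mass[step] = 100.0 * fragments[fragments >= l_c].sum() / total
    return mass

rng = np.random.default_rng(0)
n_iter = 100                                   # small for speed; use more in practice
results = np.empty((n_iter, 24))
for i in range(n_iter):
    k = rng.lognormal(np.log(2e-4), 0.25)      # assumed rate constant, day^-1
    alpha = rng.uniform(5.0, 15.0)             # autocatalysis factor (core range)
    l_c = rng.integers(6, 13)                  # discrete uniform on 6..12
    results[i] = simulate_mass_remaining(k, alpha, l_c, rng=rng)

mean = results.mean(axis=0)
sd = results.std(axis=0, ddof=1)
lower, upper = np.percentile(results, [2.5, 97.5], axis=0)
print(mean[[5, 11, 17, 23]])                   # days 30, 60, 90, 120
```

Because bonds only break and never re-form, a fragment below L_c stays dissolved, so mass remaining can be computed from the current fragment lengths without tracking removals explicitly.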

Visualization of Workflow & Model

Title: MC Simulation Workflow for Scaffold Degradation

Title: Stochastic Chain Scission and Erosion Logic

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item | Function / Rationale |
|---|---|
| PLGA (50:50, 75:25) | Standard aliphatic polyester copolymer; tunable degradation rate by lactic/glycolic acid ratio. |
| Phosphate Buffered Saline (PBS), pH 7.4 | Standard physiological buffer for in vitro degradation studies. |
| Tetrahydrofuran (HPLC Grade) | Solvent for dissolving polymeric scaffolds for GPC analysis. |
| GPC/SEC System with RI Detector | To measure the evolving molecular weight distribution of degrading polymers. |
| Lyophilizer (Freeze Dryer) | To remove water from degraded samples for accurate dry mass measurement. |
| Scanning Electron Microscope (SEM) | To visualize surface and cross-sectional morphological changes (pore structure, cracks). |
| Monte Carlo Simulation Software (e.g., custom Python/R code, COMSOL with LiveLink) | To implement stochastic degradation models and propagate parameter uncertainties. |

The efficacy of targeted drug delivery nanoparticles (TDNPs) is inherently variable due to stochastic processes in synthesis, environmental heterogeneity in vivo, and biological noise. This application note frames this challenge within a Monte Carlo (MC) simulation paradigm. By treating critical parameters—such as ligand density, hydrodynamic size, and tumor receptor expression—as probability distributions rather than fixed values, MC methods quantify how input uncertainties propagate to predict a distribution of probable therapeutic outcomes (e.g., percent cellular uptake, tumor accumulation). This shifts the research focus from single-point predictions to robust, probabilistic forecasts of nanoparticle performance.

The major stochastic parameters influencing TDNP efficacy, their typical ranges, and distribution types are summarized below.

Table 1: Key Stochastic Parameters in TDNP Efficacy Modeling

| Parameter | Typical Range/Values | Probabilistic Distribution Type (Commonly Used) | Primary Impact on Efficacy |
|---|---|---|---|
| NP Core Diameter | 10 - 150 nm | Log-normal or Normal | Circulation time, EPR effect, cellular internalization |
| Polyethylene Glycol (PEG) Density | 0.1 - 1.0 chains/nm² | Normal | Protein corona formation, stealth properties |
| Targeting Ligand Density | 0.01 - 5 ligands/particle | Poisson | Specific cell binding affinity, multivalency |
| Tumor Receptor Expression Level | 10³ - 10⁶ receptors/cell | Log-normal | Available binding sites, heterogeneity |
| Blood Flow Velocity in Tumor | 10 - 500 µm/s | Weibull | NP delivery rate to target site |
| Endocytic Rate Constant | 0.01 - 0.3 min⁻¹ | Normal | Internalization efficiency post-binding |
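
As a rough illustration of how the distribution types in Table 1 become simulation inputs, the sketch below draws one joint sample per virtual nanoparticle/tumor scenario with NumPy. The distribution families follow the table; every numeric parameter (means, spreads, shape factors) and the function name `sample_tdnp_inputs` are hypothetical placeholders chosen only to fall inside the tabulated ranges, and inputs are sampled independently, which ignores any correlation between them.

```python
import numpy as np

def sample_tdnp_inputs(n, seed=0):
    """Draw n independent samples of each stochastic input (placeholder values)."""
    rng = np.random.default_rng(seed)
    return {
        # Log-normal: parameters are the mean and SD of ln(diameter).
        "core_diameter_nm": rng.lognormal(np.log(80.0), 0.25, n),
        # Normal, crudely truncated to the tabulated range by clipping.
        "peg_density_per_nm2": np.clip(rng.normal(0.5, 0.15, n), 0.1, 1.0),
        # Poisson: integer ligand count per particle.
        "ligands_per_particle": rng.poisson(2.0, n),
        "receptors_per_cell": rng.lognormal(np.log(1e5), 1.0, n),
        # Generator.weibull draws a unit-scale Weibull; multiply by the scale.
        "flow_velocity_um_s": 150.0 * rng.weibull(1.8, n),
        "endocytic_rate_per_min": np.clip(rng.normal(0.1, 0.04, n), 0.01, 0.3),
    }

inputs = sample_tdnp_inputs(10_000)
for name, values in inputs.items():
    print(f"{name}: median={np.median(values):.3g}, "
          f"5th-95th pct=({np.percentile(values, 5):.3g}, "
          f"{np.percentile(values, 95):.3g})")
```

Clipping piles probability mass at the bounds; a properly truncated normal would be preferable when the tails matter.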

Experimental Protocols for Parameterization of MC Models

Protocol 1: Quantifying Ligand Density Distribution via Flow Cytometry

- Objective: To empirically determine the Poisson distribution parameter (mean ligand density) for a population of antibody-conjugated nanoparticles.

- Materials: TDNPs with fluorescent label on targeting antibody, Isotype control NPs, Flow cytometer with nanoparticle analysis capability.

- Procedure:

- Dilute TDNP sample to ~10⁸ particles/mL in PBS.

- Run sample on flow cytometer, triggering on side scatter. Collect fluorescence intensity (FI) of >50,000 single-particle events.

- Run isotype control NPs (non-targeting, same fluorescent label) to establish background FI.

- Subtract modal background FI from the TDNP FI histogram.

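- Convert the background-subtracted FI values to ligands per particle using a fluorescence calibration (e.g., quantitative flow cytometry bead kits; see Table 2), then fit the resulting count distribution to a Poisson model using maximum likelihood estimation. The fitted mean corresponds to the mean number of fluorophores (ligands) per particle.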
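
The fitting step can be sketched as follows. For a Poisson model the maximum likelihood estimate of the rate is simply the sample mean, and the variance-to-mean ratio gives a quick check of whether a Poisson model is plausible. The sketch assumes fluorescence has already been converted to integer ligand counts; the synthetic data and the function name `fit_poisson` are illustrative only.

```python
import numpy as np

def fit_poisson(counts):
    """Return the Poisson MLE (sample mean) and the dispersion index."""
    counts = np.asarray(counts, dtype=float)
    lam = counts.mean()                      # MLE of the Poisson rate
    dispersion = counts.var(ddof=1) / lam    # close to 1 for Poisson data
    return lam, dispersion

# Synthetic stand-in for calibrated ligand counts from >50,000 events.
rng = np.random.default_rng(0)
ligand_counts = rng.poisson(3.0, size=50_000)

lam_hat, dispersion = fit_poisson(ligand_counts)
print(f"Estimated mean ligands/particle: {lam_hat:.3f}")
print(f"Variance/mean ratio: {dispersion:.3f}")
```

A dispersion index well above 1 would suggest over-dispersion (for example, batch heterogeneity), in which case a negative binomial model may describe the data better than a Poisson.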

Protocol 2: Measuring Heterogeneity in Cellular Receptor Expression

- Objective: To obtain a log-normal distribution of receptor expression for input into binding affinity simulations.

- Materials: Target cell line, Fluorescently-labeled ligand or antibody against target receptor, Flow cytometer.

- Procedure:

- Harvest and wash cells. Aliquot 1x10⁶ cells per tube.

- Stain cells with saturating concentration of fluorescent probe for 1 hour at 4°C. Include an unstained/isotype control.

- Wash cells, resuspend in buffer, and analyze by flow cytometry (>10,000 events).

- Convert fluorescence histogram to Molecules of Equivalent Fluorochrome (MEF) using calibration beads.

- Fit the MEF data to a log-normal distribution. Extract the geometric mean and geometric standard deviation.
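
A log-normal fit reduces to taking the mean and standard deviation of the log-transformed data. The sketch below shows this on synthetic values standing in for calibrated MEF data; the function name `fit_lognormal` and the simulated parameters are assumptions for illustration.

```python
import numpy as np

def fit_lognormal(values):
    """Return (geometric mean, geometric SD) of strictly positive data."""
    log_x = np.log(np.asarray(values, dtype=float))
    mu = log_x.mean()              # mean of ln(x)
    sigma = log_x.std(ddof=1)      # SD of ln(x)
    return np.exp(mu), np.exp(sigma)

# Synthetic stand-in for MEF values from >10,000 cell events.
rng = np.random.default_rng(0)
mef = rng.lognormal(mean=np.log(5e4), sigma=0.8, size=10_000)

gm, gsd = fit_lognormal(mef)
print(f"Geometric mean: {gm:.3g} receptors/cell, geometric SD: {gsd:.2f}")

# The fitted values map back to sampler arguments for the MC model:
samples = rng.lognormal(mean=np.log(gm), sigma=np.log(gsd), size=5)
```

Events with zero or negative values after background subtraction must be handled (for example, excluded or floored) before taking logarithms.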

Core Monte Carlo Simulation Workflow Diagram

Title: Monte Carlo Simulation Workflow for TDNP Efficacy

Key Signaling & Internalization Pathways for Targeted Nanoparticles

Title: Cellular Uptake and Trafficking Pathways for TDNPs

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for TDNP Variability Research

| Item | Function & Relevance to Uncertainty Quantification |
|---|---|
| Dynamic Light Scattering (DLS) & Nanoparticle Tracking Analysis (NTA) | Characterizes hydrodynamic size distribution (polydispersity index) - a critical input variable for MC simulations of biodistribution. |
| Tunable Microfluidic Nanoparticle Synthesizers | Enables controlled production of NPs with graded properties (size, ligand density) to create empirical parameter distributions. |
| Quantitative Flow Cytometry Bead Kits | Converts fluorescence intensity to absolute ligand count per particle, essential for parameterizing the Poisson distribution of ligand density. |
| 3D Tumor Spheroid Co-Culture Models | Provides a heterogeneous in vitro environment with gradients in cell proliferation and receptor expression, mimicking in vivo variability. |
| Stochastic Modeling Software (e.g., MATLAB, Python with NumPy/SciPy) | Platform for coding custom MC simulations that integrate multiple probability distributions to predict outcome variability. |
| Surface Plasmon Resonance (SPR) with Scattering Detection | Measures binding kinetics (ka, kd) of NPs to immobilized receptors, providing mean and variance for affinity constants in simulations. |

Within the broader thesis on the application of Monte Carlo simulations for uncertainty quantification in biomaterials research, this spotlight focuses on metallic orthopedic implants. These devices, such as hip stems and spinal rods, are subject to complex, variable cyclic loading in vivo. Deterministic fatigue life predictions are insufficient due to inherent uncertainties in material properties, manufacturing defects, patient-specific loading, and biological environment. Probabilistic methods, principally Monte Carlo simulation, are essential to predict reliability and set safety factors, transforming single-point estimates into survival probability distributions.

Application Notes

Fatigue life prediction (N_f) is a function of multiple stochastic variables: N_f = f(σ_a, σ_UTS, K_IC, ε_f, a_i, C, m, ...). Key uncertainties include:

- Material Properties: Scatter in ultimate tensile strength (σ_UTS), fracture toughness (K_IC), and fatigue crack growth parameters (C, m) from batch-to-batch variations.

- Initial Defects: Distribution of size, location, and orientation of inherent micro-voids, inclusions, or surface roughness from machining.

- Loading Variability: Inter- and intra-patient variability in gait, activity level, and body weight, leading to a spectrum of applied stress amplitudes (σ_a).

- Environmental Factors: Stochastic corrosion-fatigue interactions in the physiological environment (pH, ion concentration).

Monte Carlo Simulation Framework

A Monte Carlo workflow propagates these uncertainties through a fatigue model:

- Define Input Distributions: Assign probability density functions (PDFs) to each stochastic input variable.

- Define Fatigue Model: Select an appropriate model (e.g., stress-life (S-N) with modifications, strain-life (ε-N), or fracture mechanics-based crack growth).

- Random Sampling: Draw a random set of input values from their PDFs.

- Deterministic Calculation: Compute the fatigue life for that specific set.

- Iterate: Repeat the random sampling and deterministic calculation steps thousands of times to build a distribution of output (N_f).

- Analyze Results: Determine probability of failure at a given cycle count (e.g., 10 million cycles) or predict reliability with confidence intervals.
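
To make the loop concrete, the following sketch applies the workflow to the fracture-mechanics option, using the Paris law da/dN = C (ΔK)^m with ΔK = Y Δσ √(πa), integrated in closed form from the initial flaw size a_i to the critical size a_c set by K_IC. It is a simplified illustration: all distribution parameters, the geometry factor Y, the fixed exponent m, and the function names are hypothetical, inputs are sampled independently, and the maximum stress is assumed equal to the stress range when computing a_c.

```python
import numpy as np

def paris_life(a_i, a_c, c, m, y, dsigma):
    """Cycles to grow a crack from a_i to a_c under the Paris law (m != 2)."""
    expo = 1.0 - m / 2.0
    return (a_c**expo - a_i**expo) / (c * (y * dsigma * np.sqrt(np.pi))**m * expo)

def simulate_fatigue_lives(n=20_000, seed=0, m=3.0, y=1.12):
    """Sample inputs, then evaluate the deterministic model for every sample."""
    rng = np.random.default_rng(seed)
    # Hypothetical input PDFs (units: m, MPa, MPa*sqrt(m)).
    a_i = rng.lognormal(np.log(50e-6), 0.3, n)   # initial flaw size
    c = rng.lognormal(np.log(1e-11), 0.2, n)     # Paris coefficient
    k_ic = rng.normal(70.0, 5.0, n)              # fracture toughness
    dsigma = rng.normal(200.0, 20.0, n)          # applied stress range
    # Critical crack size, assuming maximum stress equals the stress range.
    a_c = (k_ic / (y * dsigma))**2 / np.pi
    lives = paris_life(a_i, a_c, c, m, y, dsigma)
    # A flaw already at or beyond critical size fails immediately.
    return np.where(a_c > a_i, lives, 0.0)

lives = simulate_fatigue_lives()
target = 1e6  # cycle count of interest (placeholder)
print(f"Median life: {np.median(lives):.3g} cycles")
print(f"P(failure before {target:.0e} cycles): {np.mean(lives < target):.3f}")
```

Because the deterministic model is vectorized, the iteration step is implicit: each array element is one Monte Carlo realization.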

Experimental Protocols & Data

Protocol 1: Probabilistic S-N Curve Generation for Ti-6Al-4V ELI

Objective: To construct probability-of-failure S-N curves (P-S-N) from experimental data for use in Monte Carlo input modeling.

Methodology:

- Material & Specimen Preparation: Use ASTM F136-compliant Ti-6Al-4V ELI bar stock. Machine smooth, polished fatigue specimens (e.g., ASTM E466 geometry).

- Testing: Conduct fully reversed (R=-1) axial fatigue tests in a physiological saline environment (37°C). Test at multiple stress amplitude levels (σ_a).

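- Data Collection: For each σ_a, test a cohort of specimens (n ≥ 8) to failure. Record cycles to failure (N_f) for each specimen.

- Statistical Analysis: At each σ_a, fit the N_f data to a statistical distribution (commonly a two-parameter Weibull, or a lognormal, which is equivalent to fitting a normal distribution to log10(N_f)). Extract the distribution parameters (e.g., Weibull shape and scale, or the mean and standard deviation of log10(N_f)).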

- Curve Fitting: Model the relationship between σ_a and the parameters of the life distribution.

Quantitative Data Summary (Representative):

Table 1: Example Fatigue Data for Ti-6Al-4V ELI in Physiological Saline (R=-1)

| Stress Amplitude (MPa) | Sample Size (n) | Mean Life, log10(N_f) | Std. Dev., log10(N_f) | Weibull Shape Parameter (β) | Weibull Scale Parameter (θ, cycles) |
|---|---|---|---|---|---|
| 600 | 10 | 4.85 | 0.18 | 5.9 | 7.08e4 |
| 500 | 12 | 5.92 | 0.22 | 4.8 | 8.32e5 |
| 400 | 15 | 7.15 | 0.25 | 4.2 | 1.41e7 |
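
The curve-fitting step of Protocol 1 can be illustrated with the representative values in Table 1. The sketch below fits a straight line to mean log10(N_f) versus log10(σ_a) by least squares and interpolates the standard deviation between tested levels, then samples lives at an untested stress amplitude. Treating log10(N_f) as normally distributed and interpolating the scatter linearly are modeling assumptions made here for illustration; the function name `sample_life` is likewise hypothetical.

```python
import numpy as np

# Representative values from Table 1.
stress = np.array([600.0, 500.0, 400.0])       # stress amplitude, MPa
mean_log_life = np.array([4.85, 5.92, 7.15])   # mean of log10(N_f)
sd_log_life = np.array([0.18, 0.22, 0.25])     # SD of log10(N_f)

# Least-squares line: log10(N_f) = intercept + slope * log10(stress).
slope, intercept = np.polyfit(np.log10(stress), mean_log_life, 1)

def sample_life(sigma_a, n, rng):
    """Draw n fatigue lives at stress amplitude sigma_a (lognormal assumption)."""
    mu = intercept + slope * np.log10(sigma_a)
    # np.interp needs increasing x values, so reverse the descending arrays.
    sd = np.interp(sigma_a, stress[::-1], sd_log_life[::-1])
    return 10.0 ** rng.normal(mu, sd, n)

rng = np.random.default_rng(0)
lives_450 = sample_life(450.0, 10_000, rng)
print(f"Fitted slope: {slope:.2f} (log10 cycles per log10 MPa)")
print(f"Median life at 450 MPa: {np.median(lives_450):.3g} cycles")
```

With only three stress levels the fit is weakly constrained, so extrapolation outside the 400 to 600 MPa range should be treated with caution.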

Protocol 2: Monte Carlo Simulation for a Cementless Femoral Stem

Objective: To estimate the probability distribution of fatigue life for a hip stem under variable patient loading.

Methodology:

- Model Inputs Definition:

- Loading: Define PDF for peak hip contact force during walking (e.g., Normal distribution: Mean = 2.5 × BW, SD = 0.3 × BW, where BW is body weight; from instrumented implant data).

- Material: Define PDF for fatigue strength coefficient (σ_f') from Table 1 data analysis.

- Geometry: Define PDF for critical fillet radius (from manufacturing tolerances).

- Fatigue Life Model: Use a local stress-based approach (e.g., modified Basquin's law: σ_a = σ_f' * (2N_f)^b). Incorporate a stress concentration factor K_t based on random geometry.

- Simulation Execution: Implement a sampling algorithm (e.g., Latin Hypercube) in computational software (Python, MATLAB, R). Perform 50,000+ iterations.

- Output Analysis: Generate a histogram and cumulative distribution function (CDF) of predicted N_f. Report the life at 0.1% probability of failure (safe life).
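
A compact sketch of this protocol is shown below, using a hand-rolled Latin Hypercube sampler and Basquin's law solved for life, N_f = 0.5 (σ_a / σ_f')^(1/b). Only the load distribution (mean 2.5 × BW, SD 0.3 × BW) comes from the text. The fatigue strength coefficient distribution, the exponent b, the load-to-stress conversion `stress_per_bw`, the fillet radius distribution, and the notch relation K_t = 1 + 2√(t/r) are hypothetical placeholders, and mean-stress effects are ignored.

```python
import numpy as np
from statistics import NormalDist

# Standard normal inverse CDF from the standard library, applied elementwise.
_norm_ppf = np.vectorize(NormalDist().inv_cdf)

def latin_hypercube(rng, n, d):
    """n points in d dimensions, one per equal-probability stratum per axis."""
    u = np.empty((n, d))
    for j in range(d):
        u[:, j] = (rng.permutation(n) + rng.random(n)) / n
    return np.clip(u, 1e-12, 1.0 - 1e-12)  # keep strictly inside (0, 1)

def simulate_stem_life(n=50_000, seed=0, b=-0.077, stress_per_bw=60.0,
                       notch_depth=1.0):
    """Return n sampled fatigue lives (cycles) for the placeholder stem model."""
    rng = np.random.default_rng(seed)
    z = _norm_ppf(latin_hypercube(rng, n, 3))   # standard normal scores
    load = 2.5 + 0.3 * z[:, 0]                  # peak force in multiples of BW
    sigma_f = 1500.0 + 75.0 * z[:, 1]           # MPa, hypothetical
    radius = 3.0 + 0.1 * z[:, 2]                # fillet radius in mm, hypothetical
    k_t = 1.0 + 2.0 * np.sqrt(notch_depth / radius)  # crude notch estimate
    sigma_a = k_t * stress_per_bw * load        # local stress amplitude, MPa
    return 0.5 * (sigma_a / sigma_f) ** (1.0 / b)    # Basquin's law inverted

n_f = simulate_stem_life(n=20_000)
safe_life = np.quantile(n_f, 0.001)   # life at 0.1% probability of failure
reliability = np.mean(n_f > 1e7)      # fraction surviving 10^7 cycles
print(f"Safe life: {safe_life:.3g} cycles, reliability at 1e7: {reliability:.4f}")
```

Estimating a 0.1% quantile relies on only the lowest few dozen samples out of tens of thousands, which is one reason the protocol calls for 50,000 or more iterations. In practice the stress at the critical location would come from finite element analysis rather than a scalar conversion factor.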

Quantitative Data Summary (Representative Output):

Table 2: Monte Carlo Simulation Results for a Representative Hip Stem Design

| Output Metric | Value | Comment |
|---|---|---|
| Mean Predicted Life (N_mean) | 2.1 x 10^7 cycles | |
| Standard Deviation (log scale) | 0.35 | |
| Life at 0.1% Probability of Failure (N_99.9) | 5.6 x 10^6 cycles | Critical design safety value |
| Simulated Reliability at 10^7 cycles | 99.4% | Probability of survival |

Visualizations

Title: Monte Carlo Fatigue Simulation Workflow

Title: Uncertainty Inputs in Fatigue Life Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Computational Tools for Probabilistic Fatigue Assessment

| Item & Example Source | Function in Probabilistic Assessment |
|---|---|
| ASTM F136 Ti-6Al-4V ELI Bar Stock | Standardized implant-grade alloy for generating material-specific stochastic input data. |
| Physiological Saline (0.9% NaCl) or Simulated Body Fluid (SBF) | Corrosive environment for in vitro testing that mimics physiological fatigue-corrosion interaction. |
| Servohydraulic Fatigue Testing System | Precisely applies cyclic loading to specimens for generating baseline S-N data under controlled conditions. |
| Scanning Electron Microscope (SEM) | Characterizes the distribution of initial material defects and analyzes fracture surfaces post-test. |
| Statistical Analysis Software (e.g., R, Minitab) | Fits probability distributions to experimental data to define input PDFs for Monte Carlo simulations. |
| Monte Carlo Simulation Platform (e.g., Python/NumPy, MATLAB, ANSYS Probabilistic Design) | Core tool for implementing the stochastic sampling algorithm and propagating uncertainties. |
| Finite Element Analysis (FEA) Software | Determines stress distributions in complex implant geometries for different random loading conditions. |

Beyond the Basics: Solving Convergence, Cost, and Validation Challenges in Your Simulations