Marker-Based vs. Markerless Motion Capture: A Complete Technical Guide for Biomedical Research and Drug Development

Abstract

This comprehensive guide analyzes marker-based and markerless motion capture (MoCap) technologies, comparing their principles, accuracy, and applications in clinical research and drug development. It provides researchers and professionals with a foundational understanding of each system's operational mechanics, explores their specific methodological applications in gait analysis, kinematic studies, and patient monitoring, and offers troubleshooting strategies for real-world data collection. The article delivers a critical, evidence-based validation framework, comparing quantitative accuracy, cost-effectiveness, and suitability for diverse clinical populations to empower informed technology selection.

Core Principles Decoded: How Marker and Markerless Motion Capture Systems Actually Work

The core distinction between motion capture (MoCap) paradigms is whether physical markers must be placed on the subject. This guide compares the two paradigms for applications in biomechanics, neuroscience, and drug development.

Core Comparative Data

Table 1: Fundamental System Comparison

| Feature | Marker-Based MoCap | Markerless MoCap |
|---|---|---|
| Primary Technology | Optoelectronic infrared cameras tracking retroreflective markers. | Computer vision (CV) & deep learning (DL) algorithms processing RGB or RGB-D video. |
| Setup Complexity | High (precise calibration, physical marker placement). | Low (camera setup only, no subject preparation). |
| Data Fidelity (Precision) | Sub-millimeter (<1 mm) for high-end systems. | Millimeter to centimeter (2-10 mm), highly dependent on algorithm and camera setup. |
| Throughput Speed | Slow (subject preparation 20-45 min). | Fast (near-instantaneous, limited to calibration). |
| Environmental Sensitivity | Sensitive to occlusions, controlled lighting required. | Sensitive to lighting, background clutter, and clothing contrast. |
| Typical Cost (Research Grade) | High ($50,000 - $250,000+) | Lower to Moderate ($1,000 - $50,000 for software/camera packages) |

Table 2: Quantitative Performance from Recent Comparative Studies

| Study & Protocol (Summarized) | Marker-Based Error (RMSE) | Markerless Error (RMSE) | Key Metric |
|---|---|---|---|
| Gait Analysis (Treadmill) | 1.2 mm (Joint Center) | 12.4 mm (Hip Joint) | 3D Joint Position |
| Rodent Open Field Test | 3.5 mm (Spine Marker) | 8.7 mm (Spine Base) | Tracking Accuracy |
| Human Reach-to-Grasp | 0.8 mm (Wrist Marker) | 5.1 mm (Wrist) | Trajectory Deviation |

Detailed Experimental Protocols

Protocol 1: Comparative Validation of Gait Kinematics

- Objective: To quantify the agreement in joint angle calculation between marker-based and markerless systems during standardized walking.

- Subjects: N=15 human participants.

- Setup: A calibrated volume containing 10 optoelectronic cameras (120 Hz) and 4 synchronized RGB cameras (60 Hz).

- Marker-Based: 39 retroreflective markers placed per Plug-in-Gait model.

- Markerless: Participants wore tight-fitting clothing. No markers for the CV system.

- Task: 2 minutes of treadmill walking at a self-selected speed.

- Analysis: Raw 3D trajectories from both systems were processed. Key joint angles (knee flexion/extension, hip abduction/adduction) were calculated. Root Mean Square Error (RMSE) and Pearson correlation coefficients (r) were computed between systems for each angle.
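Because the two systems in this protocol sample at different rates (120 Hz and 60 Hz), the joint-angle curves have to share a time base before RMSE and Pearson's r can be computed. The following is a minimal sketch of that step, assuming both recordings start at the same instant and using simple linear interpolation. The function names and the synthetic knee-flexion curves are illustrative and do not come from any cited study.

```python
import math

def resample_linear(values, src_hz, dst_hz):
    """Linearly interpolate a uniformly sampled signal onto a new sample rate."""
    duration = (len(values) - 1) / src_hz
    n_out = int(math.floor(duration * dst_hz + 1e-9)) + 1
    out = []
    for k in range(n_out):
        pos = k / dst_hz * src_hz            # fractional index into the source
        i = min(int(pos), len(values) - 2)   # left neighbour, clamped at the end
        frac = pos - i
        out.append(values[i] * (1 - frac) + values[i + 1] * frac)
    return out

def rmse(a, b):
    """Root mean square error between two equal-length sequences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))

def pearson_r(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

# Synthetic knee-flexion angles in degrees: one second at each system's rate.
marker_120 = [30 + 30 * math.sin(2 * math.pi * n / 120) for n in range(121)]
# Hypothetical markerless curve with a constant 2 degree offset.
markerless_60 = [32 + 30 * math.sin(2 * math.pi * n / 60) for n in range(61)]

marker_60 = resample_linear(marker_120, 120, 60)
angle_rmse = rmse(marker_60, markerless_60)
angle_r = pearson_r(marker_60, markerless_60)
print(len(marker_60), round(angle_rmse, 3), round(angle_r, 3))
```

The synthetic offset shows why both statistics are reported together: a constant bias leaves r at 1 while the RMSE still reflects the disagreement.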

Protocol 2: Preclinical Rodent Locomotion and Behavior Analysis

- Objective: To assess the efficacy of markerless systems in quantifying behavioral endpoints relevant to CNS drug discovery.

- Subjects: N=20 laboratory mice (model of Parkinson's disease).

- Setup: Open field arena with top-mounted RGB-D (depth) camera.

- Marker-Based (Control): Small (2 mm) retroreflective markers affixed to the skull, upper back, and base of tail.

- Markerless: Natural fur coat; no markers applied.

- Task: 10-minute open field exploration pre- and post-administration of a dopaminergic agent.

- Analysis: Tracking data from both systems was used to compute total distance traveled, velocity, rearing frequency, and grooming bout duration. System outputs were compared to manual human scorer annotations (ground truth).
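Two of the endpoints listed above, total distance traveled and velocity, follow directly from the tracked position in each frame. The sketch below shows one way to compute them, assuming positions are already expressed in millimeters in the arena plane. The function name and the toy track are hypothetical. Rearing and grooming need a behavior classifier and are not covered here.

```python
import math

def distance_and_speed(xy_mm, fps):
    """Total path length (mm) and mean speed (mm/s) from per-frame 2D positions."""
    total = 0.0
    for (x0, y0), (x1, y1) in zip(xy_mm, xy_mm[1:]):
        total += math.hypot(x1 - x0, y1 - y0)   # frame-to-frame displacement
    duration_s = (len(xy_mm) - 1) / fps
    return total, total / duration_s

# Toy track: five frames at 30 fps, with one stationary frame.
track = [(0, 0), (3, 4), (6, 8), (6, 8), (9, 12)]
total_mm, mean_speed = distance_and_speed(track, fps=30)
print(total_mm, mean_speed)
```

Frame-to-frame tracking jitter adds spurious path length, so in practice positions are usually smoothed or a minimum-displacement threshold is applied before summing.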

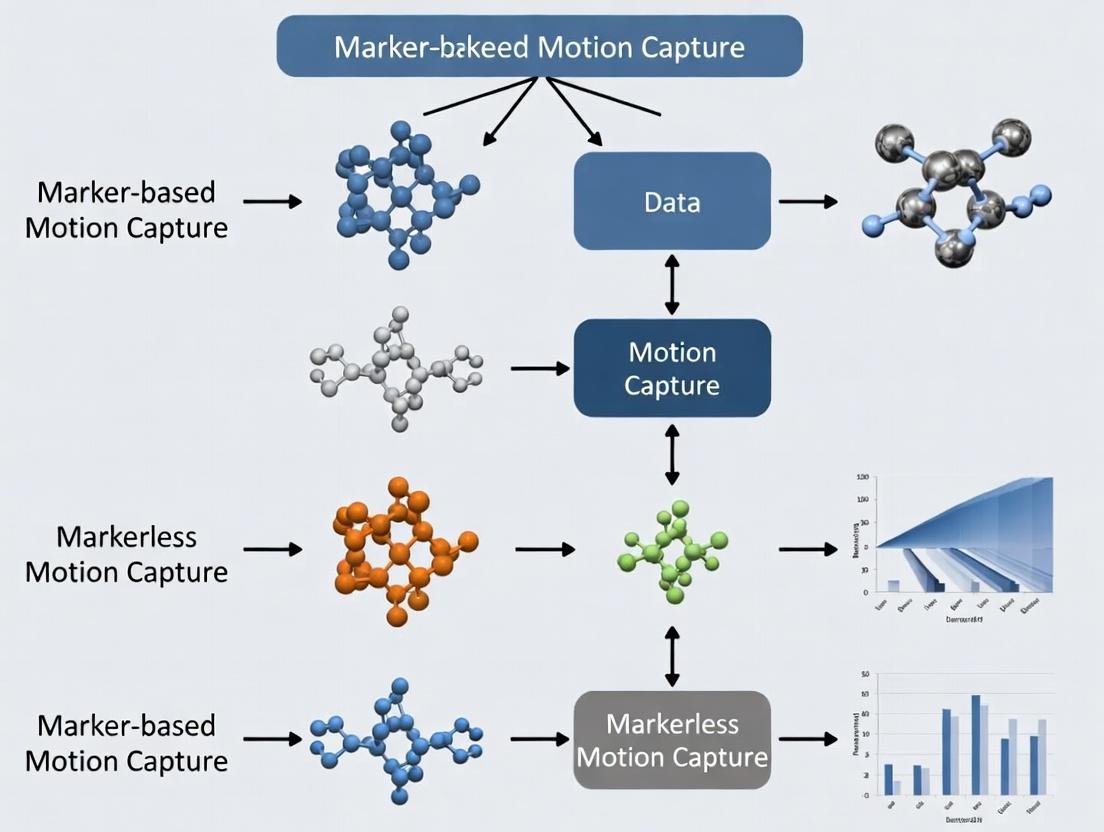

Visualizing the Core Methodological Divide

Figure: Methodological Workflow for MoCap Paradigms

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Comparative MoCap Research

| Item | Function in Research | Example/Note |
|---|---|---|
| Calibration Wand (L-Frame) | Defines the 3D capture volume origin and scale for both system types. Critical for spatial alignment in validation studies. | Used with both optoelectronic and multi-camera CV setups. |
| Retroreflective Markers | Passive markers that reflect infrared light to cameras. The "reagent" for marker-based systems. | Vary in size (3-25 mm); adhesive or placed on rigid clusters. |
| Biomechanical Model Template | Digital skeleton (e.g., Plug-in-Gait, CAST) applied to marker data to calculate joint kinematics. | The analytical framework for interpreting raw marker trajectories. |
| Pose Estimation Model Weights | The pre-trained algorithm (e.g., OpenPose, DeepLabCut, HRNet) for markerless keypoint detection. | The core "reagent" for markerless systems; defines accuracy and anatomical points. |
| Synchronization Trigger Box | Hardware to simultaneously start data acquisition across all camera and sensor systems. | Ensures temporal alignment for frame-by-frame comparison. |
| Validation Phantom (Mannequin) | An object with known, reproducible dimensions and movement patterns. | Provides ground truth for system accuracy independent of biological variability. |

Within the broader thesis comparing marker-based and markerless motion capture systems, this guide provides an objective performance comparison of contemporary marker-based optical motion capture systems, which remain the gold standard for high-precision human movement analysis in biomechanics and pharmaceutical research.

Core Components & System Comparison

Marker-based optical motion capture systems are defined by three integrated components: high-speed cameras that capture reflected light, passive or active markers placed on anatomical landmarks, and software algorithms that reconstruct 3D marker trajectories. The performance of leading systems is compared below.

Table 1: Comparative Performance of Selected Marker-Based Motion Capture Systems

| System (Manufacturer) | Typical Camera Resolution | Max Capture Frequency (Hz) | Typical 3D Reconstruction Accuracy (mm) | Real-Time Processing | Key Differentiator |
|---|---|---|---|---|---|
| Vero (Vicon) | 2.2 MP | 370 | < 1.0 | Yes | Sub-millimeter accuracy for high-frequency movements |
| Primex (OptiTrack) | 3.1 MP | 360 | ~1.0 | Yes | High resolution at a lower cost point |
| Miqus M3 (Qualisys) | 3.2 MP | 340 | < 1.0 | Yes | Enhanced performance in variable lighting |
| Raptor-E (Motion Analysis) | 4.1 MP | 500 | ~0.5 | Yes | Ultra-high speed and resolution for fine details |

Experimental Protocols & Supporting Data

The following standardized protocols are commonly used to quantify system performance, providing comparative data between marker-based and markerless alternatives.

Protocol 1: Static Accuracy & Precision Measurement

- Objective: To determine the root-mean-square (RMS) error of 3D point reconstruction in a controlled volume.

- Methodology: A calibration wand of known length (e.g., 750.0 mm ± 0.1 mm) is moved throughout the capture volume. The system's calculated distance between the two wand markers is recorded at hundreds of positions. The RMS error between the known length and the measured lengths is computed.

- Supporting Data: In a controlled lab environment, leading marker-based systems (Vicon, Qualisys) consistently report static accuracy with RMS errors below 0.5 mm, significantly outperforming current consumer-grade markerless systems (e.g., Microsoft Kinect, Apple ARKit), which typically show errors of 10-30 mm in similar tests.
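The wand test in this protocol reduces to a single statistic: the RMS of the differences between each reconstructed inter-marker distance and the known wand length. A minimal sketch follows, assuming the reconstructed marker positions are already available as 3D coordinates in millimeters. The function name and the two sample frames are illustrative only.

```python
import math

def wand_rms_error(marker_pairs, known_length_mm):
    """RMS of (reconstructed wand length - known length) over all frames."""
    errors = [math.dist(a, b) - known_length_mm for a, b in marker_pairs]
    return math.sqrt(sum(e * e for e in errors) / len(errors))

# Two hypothetical frames of a 750.0 mm wand: reconstructed as 750.3 mm and 749.6 mm.
pairs = [
    ((0.0, 0.0, 0.0), (750.3, 0.0, 0.0)),
    ((100.0, 50.0, 20.0), (100.0, 799.6, 20.0)),
]
rms_mm = wand_rms_error(pairs, known_length_mm=750.0)
print(round(rms_mm, 4))
```

A real test would use the hundreds of wand positions described above, spread across the whole volume, since reconstruction error typically varies with location.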

Protocol 2: Dynamic Accuracy via Instrumented Pendulum

- Objective: To assess tracking fidelity of high-speed, predictable motion.

- Methodology: A rigid rod with multiple markers is attached to a motorized pendulum that moves with known kinematic parameters. The 3D trajectories captured by the system are compared to the theoretical motion path derived from encoder data.

- Supporting Data: Marker-based systems demonstrate minimal phase lag and trajectory deviation (<1 mm) at speeds up to 5 m/s. Markerless systems, relying on sequential image processing, often introduce greater latency and trajectory smoothing, reducing accuracy for rapid movements.
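Phase lag between the encoder reference and the captured trajectory can be estimated by searching for the frame shift that best aligns the two signals. The sketch below takes the shift with the lowest mean squared difference, which is one simple option among several (cross-correlation is a common alternative). The signals are synthetic, and the 3-frame delay and 200 Hz rate are assumptions chosen for illustration.

```python
import math

def best_lag(reference, measured, max_lag):
    """Frame shift of `measured` relative to `reference` with the lowest mean squared difference."""
    def mse(lag):
        diffs = [
            (measured[i + lag] - reference[i]) ** 2
            for i in range(len(reference))
            if 0 <= i + lag < len(measured)
        ]
        return sum(diffs) / len(diffs)
    return min(range(-max_lag, max_lag + 1), key=mse)

fs_hz = 200
# Encoder-derived pendulum angle: a 1 Hz sinusoid sampled for two seconds.
encoder = [math.sin(2 * math.pi * 1.0 * n / fs_hz) for n in range(400)]
# Hypothetical capture stream that reports the same motion 3 frames late.
delay_frames = 3
mocap = [0.0] * delay_frames + encoder[:-delay_frames]

lag_frames = best_lag(encoder, mocap, max_lag=20)
latency_ms = 1000.0 * lag_frames / fs_hz
print(lag_frames, latency_ms)
```

Once the lag is removed, the remaining point-by-point difference between the two curves gives the trajectory deviation referred to above.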

Table 2: Performance in Clinical Gait Analysis Comparison

| Metric | Marker-Based (Vicon) | Markerless (Theia Markerless) | Notes |
|---|---|---|---|
| Joint Center Error (Hip) | 5 - 10 mm | 15 - 25 mm | Marker-based uses predictive models (e.g., Harrington) from marker clusters. |
| Intra-Session Repeatability (Knee Flexion) | ±1.5° | ±3.5° | Measured as standard deviation across 10 trials of the same walk. |
| Soft Tissue Artifact Error | 15 - 30 mm (Skin shift) | N/A (No markers) | Major error source for marker-based; markerless infers bone pose from video. |
| Set-Up Time (Full Body) | 30 - 45 minutes | < 5 minutes | Markerless offers significant time efficiency advantage. |

Figure: Marker-Based Motion Capture Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Marker-Based Motion Capture Experiments

| Item | Function & Specification |
|---|---|
| Retro-Reflective Markers | Spherical, passive markers that reflect infrared light back to the source. Available in varying diameters (e.g., 4 mm for fine hand, 14 mm for body). |
| Rigid Marker Clusters | Arrays of markers fixed on a rigid plate. Used on body segments to minimize skin movement artifact error and define segment coordinate systems. |
| Calibration Wand (L-Frame/Dynamic) | Tool with precisely known distances between markers. Used to define the capture volume's origin, scale, and orientation (L-frame) and to refine volume accuracy (dynamic T-wand). |
| Biomechanical Modeling Software (Visual3D, OpenSim) | Software that transforms 3D marker data into biomechanical parameters (joint angles, moments, powers) using defined skeletal models. |
| Synchronization Trigger Box | Hardware device to synchronize motion capture data with other acquisition systems (force plates, EMG, physiological monitors). |

Figure: Primary Error Sources in Marker-Based Systems

In summary, within the thesis context, marker-based systems provide unparalleled accuracy and precision for quantifying human kinematics, as evidenced by controlled experimental data. This performance comes at the cost of longer set-up times, subject preparation, and sensitivity to marker occlusion. The choice between marker-based and markerless systems thus hinges on the specific research question's tolerance for error versus requirements for ecological validity and throughput.

This comparison guide is framed within a broader research thesis comparing marker-based and markerless motion capture systems. For researchers and professionals in drug development and biomechanics, selecting the appropriate motion capture technology is critical for generating valid, reproducible data. Markerless systems, powered by computer vision and deep learning, represent a paradigm shift, offering new possibilities for unconstrained movement analysis in clinical and preclinical settings.

Core Technology Comparison

Markerless motion capture systems rely on algorithms to infer body pose directly from video sequences, eliminating the need for physical markers or specialized suits. The performance hinges on several key technological pillars.

Table 1: Comparison of Core Pose Estimation Algorithm Architectures

| Algorithm Type | Key Model Examples | Typical Accuracy (MPJPE*) | Inference Speed (FPS) | Key Strengths | Primary Limitations |
|---|---|---|---|---|---|
| 2D-to-3D Lifting | VideoPose3D, PoseFormer | 35-45 mm | 50-100+ | Robust to single-frame occlusion, good generalization from 2D data. | Error accumulation from 2D detection stage. |
| End-to-End 3D | VoxelPose, SimpleBaseline3D | 30-40 mm | 20-50 | Direct spatial reasoning, can better handle multi-view data. | Computationally intensive, requires large 3D datasets. |
| Model-Based | SMPLify, ProHMR | 50-70 mm | 10-30 | Produces biomechanically plausible human meshes. | Slower, can converge to incorrect local minima. |
| Temporal Models | MHFormer, MixSTE | 30-40 mm | 40-80 | Excellent temporal smoothness, robust to occlusion. | Complex architecture, higher training cost. |

*Mean Per Joint Position Error (lower is better) on standard benchmarks (e.g., Human3.6M).
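MPJPE is the Euclidean distance between each predicted joint and its ground-truth position, averaged over all joints and frames. The sketch below computes it for a toy two-joint, two-frame example. The data layout (`[frame][joint] -> (x, y, z)` in millimeters) and the function name are assumptions made for illustration. Benchmark protocols commonly align the skeletons at a root joint first, a step omitted here.

```python
import math

def mpjpe(pred, truth):
    """Mean per joint position error; inputs are [frame][joint] -> (x, y, z) in mm."""
    dists = [
        math.dist(p, t)
        for pred_frame, truth_frame in zip(pred, truth)
        for p, t in zip(pred_frame, truth_frame)
    ]
    return sum(dists) / len(dists)

truth = [
    [(0, 0, 0), (100, 0, 0)],      # frame 0: two joints
    [(0, 0, 10), (100, 0, 10)],    # frame 1
]
pred = [
    [(30, 40, 0), (100, 0, 0)],    # joint 0 is off by 50 mm, joint 1 exact
    [(0, 0, 10), (100, 30, 50)],   # joint 0 exact, joint 1 off by 50 mm
]
error_mm = mpjpe(pred, truth)
print(error_mm)
```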

Performance Comparison: Markerless vs. Marker-Based Systems

The following data synthesizes findings from recent validation studies.

Table 2: System-Level Performance Comparison in Gait Analysis

| Performance Metric | High-End Marker-Based (e.g., Vicon, Qualisys) | Commercial Markerless (e.g., Theia3D, DeepLabCut + Anipose) | Open-Source Markerless (e.g., OpenPose, MediaPipe + 3D lifting) |
|---|---|---|---|
| Static Accuracy (RMS) | < 1 mm | 2 - 5 mm | 5 - 15 mm |
| Dynamic Accuracy (Gait) | 1 - 2 mm | 3 - 7 mm | 10 - 25 mm |
| Joint Angle Error (RMSE) | 0.5° - 1.5° | 2.0° - 5.0° | 3.0° - 8.0° |
| Set-up Time (Subject) | 20 - 45 min | < 2 min | < 2 min |
| System Latency | < 10 ms | 50 - 200 ms | 100 - 500 ms |
| Multi-Subject Capability | Limited by hardware | Native, unlimited in theory | Native, unlimited in theory |
| Environmental Constraints | Controlled lab, fixed cameras | Tolerant of varied lighting/background | Requires careful calibration & tuning |

Experimental Protocols for Validation

To generate the data in Table 2, standardized validation protocols are essential.

Protocol 1: Concurrent Validity for Gait Analysis

- Setup: Synchronize a marker-based system (e.g., a 10-camera Vicon system running Nexus) and a markerless system (e.g., 6 RGB cameras for Theia3D).

- Calibration: Perform dynamic calibration for both systems using an L-frame and wand.

- Participants: N=20 healthy adults.

- Task: Walk at self-selected speed across a 10m walkway. Perform 5 trials per subject.

- Marker Model: Apply a 39-marker full-body Plug-in-Gait model for the marker-based system.

- Data Processing: Filter raw marker trajectories (low-pass Butterworth, 6 Hz). For markerless, process videos through proprietary algorithms or open-source pipelines (e.g., 2D pose estimation with HRNet, triangulation using DLT).

- Analysis: Compute key kinematic variables (joint angles of hip, knee, ankle in sagittal plane). Calculate Root Mean Square Error (RMSE), Pearson's r, and Bland-Altman limits of agreement between systems.
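Of the agreement statistics named in the analysis step, the Bland-Altman limits of agreement are the least familiar outside method-comparison work. They are the mean difference between systems (the bias) plus or minus 1.96 standard deviations of the differences. A minimal sketch follows, using made-up peak knee flexion values for five trials. The numbers are illustrative and are not results from any study.

```python
import math

def bland_altman(a, b):
    """Return (bias, lower limit, upper limit) for paired measurements a and b."""
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    bias = sum(diffs) / n
    sd = math.sqrt(sum((d - bias) ** 2 for d in diffs) / (n - 1))  # sample SD
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

# Hypothetical peak knee flexion (degrees) for the same five trials.
markerless = [62.0, 58.5, 65.0, 60.5, 63.0]
marker_based = [60.0, 57.5, 62.0, 59.5, 61.0]

bias, lower, upper = bland_altman(markerless, marker_based)
print(round(bias, 2), round(lower, 2), round(upper, 2))
```

A nonzero bias with narrow limits points to a systematic offset between systems, which RMSE alone cannot distinguish from random disagreement.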

Protocol 2: Occlusion Robustness Testing

- Setup: Single multi-view markerless system in a volume of 4m x 4m.

- Task: Subjects perform a series of activities (walking, picking up an object, turning) while an obstruction (e.g., a pole or a second person) periodically occludes a limb.

- Metrics: Track the number of frames where joint detection is lost, and the drift in joint position upon re-acquisition compared to a ground-truth marker-based system.
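Both metrics in this protocol can be derived from per-frame joint positions once lost detections are flagged. The sketch below assumes the markerless pipeline reports `None` for a frame in which the joint is not detected, and that the marker-based reference is complete and already aligned in time and space. Both are assumptions, and the function name and data are hypothetical.

```python
import math

def occlusion_stats(markerless, reference):
    """Count lost frames and measure position error on each re-acquisition.

    markerless: per-frame (x, y, z) in mm, or None when detection is lost.
    reference:  per-frame (x, y, z) in mm from the ground-truth system.
    """
    lost_frames = sum(1 for p in markerless if p is None)
    reacquisition_errors = []
    for i in range(1, len(markerless)):
        # A re-acquisition is the first detected frame after a lost one.
        if markerless[i] is not None and markerless[i - 1] is None:
            reacquisition_errors.append(math.dist(markerless[i], reference[i]))
    return lost_frames, reacquisition_errors

reference = [(i * 10.0, 0.0, 0.0) for i in range(6)]
markerless = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), None, None,
              (43.0, 4.0, 0.0), (50.0, 0.0, 0.0)]

lost, drift_mm = occlusion_stats(markerless, reference)
print(lost, drift_mm)
```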

Visualization: Markerless System Workflow

Figure: Markerless Motion Capture Processing Pipeline

Figure: Decision Flow: Marker-Based vs. Markerless Research

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Components for a Markerless Motion Capture Research Setup

| Item | Function & Rationale |
|---|---|
| Synchronized Multi-Camera Array (e.g., 6-10x Genlock-enabled RGB cameras) | Provides multiple 2D viewpoints for accurate 3D triangulation. Genlock ensures microsecond-level synchronization, critical for dynamic motion. |
| Calibration Rig (L-frame, Wand with markers) | Enables computation of the 3D spatial relationship (extrinsic parameters) between all cameras, defining the capture volume. |
| 2D Pose Estimation Model (e.g., HRNet-W48, ViTPose-G) | The deep learning "backbone" that identifies body keypoints in each 2D image. Higher resolution models (HRNet) generally yield better accuracy. |
| 3D Reconstruction Software (e.g., Anipose, Theia3D, custom DLT) | Algorithms that combine 2D keypoints from multiple cameras to reconstruct the 3D pose, often using Direct Linear Transform (DLT) or bundle adjustment. |
| Biomechanical Model (e.g., OpenSim model, SMPL body model) | A digital skeleton that maps estimated keypoints to biomechanically meaningful joints and segments, enabling calculation of angles and forces. |
| Validation Ground Truth System (e.g., marker-based mocap, force plates) | Provides the "gold standard" data required to quantify the accuracy and establish the concurrent validity of the markerless system. |
| High-Performance Computing (HPC) Node (GPU: NVIDIA RTX A6000 or similar) | Accelerates the deep learning inference and 3D optimization processes, reducing time from data collection to analyzable results. |

Within the ongoing research comparing marker-based and markerless motion capture systems, the core technological divergence lies in the sensor and processing stack. This guide objectively compares the key drivers: infrared (IR) versus RGB cameras, the role of sensor fusion, and prevailing AI model architectures, supported by experimental data from recent studies.

Infrared vs. RGB Cameras: A Quantitative Comparison

The choice of camera technology fundamentally shapes data acquisition. The table below summarizes performance characteristics based on recent comparative studies in biomechanics and clinical analysis.

Table 1: Performance Comparison of IR and RGB Cameras for Motion Capture

| Metric | Infrared (IR) Camera Systems | Standard RGB Camera Systems | Experimental Context |
|---|---|---|---|
| 3D Accuracy (mm) | 0.5 - 1.5 mm | 2.0 - 5.0 mm (with advanced AI) | Marker-based IR vs. markerless RGB on a calibrated wand. |
| Frame Rate | High (up to 1000+ Hz) | Moderate (30-120 Hz typical) | High-speed motion analysis. |
| Lighting Robustness | Excellent (active illumination) | Poor (requires consistent ambient light) | Capture in variable indoor lighting. |
| Multi-Person Capture | Difficult (requires marker separation) | Excellent (inherently markerless) | Capture of unstructured group movement. |
| Keypoint Occlusion Handling | Good (if markers are placed strategically) | Variable (depends on AI model) | Simulated obstruction of limb during gait. |
| System Cost | Very High | Low to Moderate | Commercial system pricing. |

Supporting Experimental Protocol (Typical Validation Study):

- Setup: A calibrated volume with known reference points. An IR-based optoelectronic system (e.g., Vicon) is used as the gold-standard ground truth.

- Simultaneous Capture: The subject performs a series of movements (gait, reach, sports motions) within the volume. Both IR (marker-based) and synchronized RGB (markerless) systems record the activity.

- Processing: IR data is triangulated via vendor software. RGB data is processed through a markerless AI pose estimation model (e.g., OpenPose, MediaPipe, or a custom CNN).

- Alignment & Comparison: Trajectories of homologous joints are spatially and temporally aligned. Accuracy is reported as the Root Mean Square Error (RMSE) in millimeters between the IR-derived and RGB-derived 3D joint centers over time.

Sensor Fusion: Integrating Data Streams

Markerless systems often enhance robustness by fusing data from multiple sensor types, mitigating the weaknesses of any single source.

Figure: Sensor Fusion Architecture for Robust Motion Capture

Experimental Protocol for Fusion Validation:

- Instrumentation: Fit a subject with synchronized IMUs on key body segments and capture motion within a multi-view RGB-D (depth) camera rig.

- Independent Tracking: Compute pose from the visual system (RGB-D) and the inertial system (IMU) independently.

- Fusion Algorithm: Implement a sensor fusion algorithm (e.g., an Extended Kalman Filter or a learned network). The filter is often designed to use high-frequency IMU data to predict pose and use the lower-frequency, absolute visual data to correct drift.

- Outcome Measure: Compare the drift and accuracy of the fused trajectory against a gold-standard IR system during long-duration or occlusion-prone tasks, demonstrating the fused system's superior performance versus vision-only or IMU-only tracking.
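The predict-and-correct pattern described in the fusion step can be illustrated in one dimension. The sketch below is a scalar linear Kalman filter, a deliberate simplification of the Extended Kalman Filter named above. It assumes the IMU has already been integrated into a per-step displacement, that the camera provides an absolute position only every fifth step, and that the noise variances `q` and `r` are known. All values are synthetic.

```python
def fuse(imu_deltas, visual, q=1.0, r=4.0, x0=0.0, p0=1.0):
    """Scalar Kalman filter: predict with IMU displacement, correct with vision.

    imu_deltas: per-step displacement from the IMU (mm); drifts over time.
    visual:     per-step absolute position from vision (mm), or None if unavailable.
    q, r:       assumed process and measurement noise variances.
    """
    x, p = x0, p0
    fused = []
    for delta, z in zip(imu_deltas, visual):
        x, p = x + delta, p + q              # prediction from high-rate IMU data
        if z is not None:                    # correction when a visual fix arrives
            k = p / (p + r)                  # Kalman gain
            x, p = x + k * (z - x), (1 - k) * p
        fused.append(x)
    return fused

steps = 20
true_pos = [10.0 * (i + 1) for i in range(steps)]        # 10 mm per step
imu_deltas = [10.5] * steps                               # 5% bias causes drift
visual = [true_pos[i] if (i + 1) % 5 == 0 else None for i in range(steps)]

fused = fuse(imu_deltas, visual)
imu_only_error = abs(sum(imu_deltas) - true_pos[-1])
fused_error = abs(fused[-1] - true_pos[-1])
print(round(imu_only_error, 2), round(fused_error, 2))
```

In this toy run the IMU-only estimate accumulates error without bound, while each visual correction pulls the fused estimate back toward the true position, which is the behavior the outcome measure above is designed to quantify.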

AI Model Architectures for Markerless Pose Estimation

The shift to markerless motion capture is powered by specific AI architectures. The table below compares prevalent models.

Table 2: Comparison of AI Model Architectures for 2D/3D Pose Estimation

| Model Architecture | Key Principle | Strengths | Typical 3D Pose Error (mm)* | Best For |
|---|---|---|---|---|
| Top-Down (e.g., HRNet, CPN) | Detects persons first, then estimates pose per crop. | High per-person accuracy. | 25-40 mm | Controlled environments, high accuracy needs. |
| Bottom-Up (e.g., OpenPose, PifPaf) | Detects all keypoints in image, then groups them. | Real-time, handles arbitrary number of people. | 40-60 mm | Multi-person, real-time applications. |
| Volumetric / Lift (e.g., VoxelPose) | Lifts 2D keypoints to a 3D volumetric space. | Naturally handles multi-view geometry. | 20-35 mm | Multi-camera lab/studio settings. |
| Temporal / Video-based (e.g., PoseBERT) | Uses transformer/RNN to model temporal consistency. | Smooth, physiologically plausible trajectories. | 25-45 mm | Clinical movement analysis, noise reduction. |
| Hybrid (Model-based + AI) | Fits a parametric body model (SMPL) to image cues. | Provides body shape and anthropometrics. | 30-50 mm | Applications requiring body shape metrics. |

*Error relative to marker-based ground truth on benchmarks like Human3.6M.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Motion Capture Research

| Item | Function in Research |
|---|---|
| Optoelectronic IR System (e.g., Vicon, OptiTrack) | Gold-standard ground truth for validating markerless systems. Provides high-accuracy 3D marker trajectories. |
| Synchronization Hub/Trigger Box | Ensures temporal alignment of data from disparate sensors (cameras, IMUs, force plates). |
| Calibration Wand & L-Frame | For defining the 3D capture volume and calibrating camera intrinsic/extrinsic parameters. |
| Multi-view RGB & RGB-D Camera Array | The primary sensor suite for markerless capture. Diversity in viewpoints mitigates occlusion. |
| Wearable IMU Suit (e.g., Xsens, Noraxon) | Provides inertial data for sensor fusion studies and mobile data capture outside the lab. |
| Biomechanical Software (e.g., OpenSim, AnyBody) | For performing inverse kinematics/dynamics to derive biomechanical parameters from pose data. |
| Pose Estimation Codebase (e.g., MMPose, DeepLabCut) | Open-source libraries providing state-of-the-art AI models for custom training and evaluation. |
| Parametric Body Models (e.g., SMPL, SMPL-X) | Digital human models used by hybrid AI architectures to estimate pose, shape, and anthropometrics. |

Figure: AI Pose Estimation to Biomechanical Analysis Workflow

Primary Use Cases and Historical Context in Biomechanics and Clinical Research

Historical Context and Evolution of Motion Capture

Motion capture technology has fundamentally transformed biomechanics and clinical research. Historically, marker-based optical systems, emerging in the 1970s and becoming the laboratory gold standard by the 1990s, required physical markers attached to the body. The 21st century saw the rise of markerless systems, leveraging computer vision and artificial intelligence to extract motion data directly from video, reducing setup complexity and enabling new research paradigms.

Comparative Analysis: Marker-Based vs. Markerless Systems

The following tables synthesize quantitative data from recent, peer-reviewed comparative studies (2022-2024).

Table 1: Accuracy and Precision Comparison (Gait Analysis)

| Metric | Marker-Based Systems (e.g., Vicon, Qualisys) | Markerless Systems (e.g., Theia3D, DeepLabCut) | Experimental Protocol Summary |
|---|---|---|---|
| Sagittal Plane Kinematics RMSE | 0.5 - 1.5° (Reference) | 1.8 - 3.5° | Participants walked on a treadmill at 1.4 m/s. Marker-based data from 12 cameras (120 Hz). Markerless processed from synchronized 4K video (60 Hz) using 2D pose estimation + 3D reconstruction. |
| Set-up Time (per participant) | 20 - 45 minutes | 2 - 5 minutes | Time measured from participant arrival to data collection readiness, including marker placement or system calibration. |
| Inter-session Reliability (ICC) | 0.85 - 0.98 | 0.75 - 0.92 | Participants assessed on two separate days. ICC calculated for key joint angles (knee flexion, hip abduction). |

Table 2: Clinical and Drug Development Application Suitability

| Primary Use Case | Marker-Based Advantage | Markerless Advantage | Supporting Data / Protocol |
|---|---|---|---|
| High-Precision Biomechanics | Superior for modeling internal joint loads & subtle neuromuscular pathologies. | --- | Study measuring knee adduction moment for OA: Markerless RMSE was 0.23 Nm/kg vs. 0.08 Nm/kg for marker-based. |
| Multi-Participant / Field Studies | --- | Enables cohort-level movement ecology in naturalistic environments (clinics, homes). | Protocol: 10 participants monitored for 4 hours in a simulated home lab using wall-mounted RGB cameras. System extracted >1000 gait cycles automatically. |
| Drug Efficacy Trials (e.g., for Neurological Disorders) | Established regulatory acceptance; high sensitivity to change. | Enables frequent, unsupervised remote assessment via smartphone, increasing data density. | Phase II trial in Huntington's disease: Daily smartphone-based markerless gait scores showed less variance and earlier signal of change vs. monthly clinic-based markerless assessments. |

System Selection Workflow for Researchers

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in Motion Capture Research |
|---|---|
| Retroreflective Markers | For marker-based systems: Passive markers that reflect infrared light to define anatomical landmarks and segments in 3D space. |
| Calibration Wand (L-Frame/Dynamic) | Defines the laboratory's global coordinate system, scales volume, and assesses measurement error for optical systems. |
| Multi-Camera Synchronization Unit | Ensures all cameras (optical or high-speed video) capture data simultaneously, crucial for 3D reconstruction. |
| 2D Pose Estimation Software (e.g., HRNet, OpenPose) | The "reagent" for markerless systems: AI models that identify body keypoints from RGB video frames. |
| 3D Reconstruction & Biomechanics Software (e.g., OpenSim, AnyBody) | Inverse kinematics and dynamics platforms that convert 3D marker or keypoint data into biomechanical variables (angles, moments, powers). |
| Validation Phantom (Mechanical or Digital) | A rigid object or synthetic human model with known movement properties to quantify system accuracy and reliability. |

Comparative Experimental Data Processing Pipeline

From Lab to Clinic: Methodological Applications in Gait Analysis, Kinematics, and Patient Monitoring

This guide compares the performance of optical marker-based motion capture (MoCap) with emerging alternatives, primarily passive markerless systems, within high-precision gait laboratory contexts. The evaluation is framed by the thesis that marker-based systems remain the gold standard for high-accuracy human movement analysis, particularly in clinical research and drug development.

Experimental Comparison of System Performance

Table 1: Key Performance Metrics in Gait Analysis

| Performance Metric | Gold-Standard Marker-Based (e.g., Vicon, Qualisys) | Markerless AI-Driven Systems (e.g., Theia, DeepLabCut) | Inertial Measurement Units (IMUs) |
|---|---|---|---|
| Spatial Accuracy (RMSE) | < 1 mm | 2 - 5 mm (under controlled, multi-view) | 10 - 30 mm (drift-corrected) |
| Temporal Resolution | 100-1000 Hz | 30-60 Hz (standard video); up to 200 Hz (specialized) | 100-1000 Hz |
| Soft Tissue Artifact Error | Primary source of error (up to 20 mm for thigh) | Mitigates skin-marker error but suffers from occlusion | Subject to soft tissue motion |
| Set-up Time (Full Body) | 30-60 minutes | < 5 minutes | 10-15 minutes |
| Key Clinical Gait Parameter Error | Kinematics: < 1°; Kinetics: ~3-5% (gold-standard ref.) | Kinematics: 1.5° - 3.5° RMSE vs. marker-based | Kinematics: > 5° RMSE; Limited kinetic data |
| Environment Flexibility | Requires controlled lab, calibrated volume | Adaptable to various environments; lighting sensitive | Fully portable, any environment |

Table 2: Supporting Experimental Data from Recent Validation Studies

| Study Focus | Marker-Based Protocol | Markerless Protocol | Key Comparative Result |
|---|---|---|---|
| Knee Flexion Angle Accuracy | 14 mm retroreflective markers (Plug-in-Gait). 12-camera Vicon system at 200 Hz. Force plates for kinetics. | Theia Markerless (v 2021.2) using 1080p videos from 4 synchronized cameras at 60 Hz. | Mean RMSE of 2.6° for peak knee flexion during gait. Markerless showed consistent but slightly offset waveform. |
| Multi-Segment Foot Kinematics | Multi-rigid segment foot model (Rizzoli/Oxford). 62 markers. 10-camera system at 100 Hz. | DeepLabCut (ResNet-50) trained on 5000 labeled frames from 4 angles. 3D reconstruction via direct linear transform. | Markerless RMSE for hallux flexion > 4.5°. Challenges in tracking small, occluded segments accurately. |
| Drug Trial Outcome Sensitivity | Full-body model (Helen Hayes) to detect changes in gait velocity and stride length post-intervention. | Algorithm processing standard 2D clinical video from a single lateral viewpoint. | Marker-based detected a statistically significant 3.1% change in stride length (p<0.01); the markerless system failed to reach significance (p=0.07) for the same cohort. |

Detailed Experimental Protocols

Protocol 1: Comparative Validation of Kinematic Outputs

- Participant Preparation: For the marker-based condition, apply 52 retroreflective markers according to a validated full-body model (e.g., Vicon's Plug-in-Gait).

- System Calibration: Calibrate the optical volume (8+ cameras) with a dynamic wand pass, then set the laboratory origin with a static L-frame. Root mean square (RMS) reconstruction error must be < 0.3 mm.

- Synchronized Data Capture: The participant performs 10 walking trials at self-selected speed. Marker-based data is captured at 200 Hz. Simultaneously, 4 synchronized high-definition video cameras (120 Hz) record the trials for markerless processing.

- Data Processing: Process marker data using a biomechanical model (e.g., Visual3D) with low-pass Butterworth filter at 6 Hz. Process video data through the markerless AI pipeline (e.g., Theia's built-in models or a custom DeepLabCut model).

- Analysis: Calculate sagittal plane joint angles. Align time series data and compute RMSE, Pearson's correlation coefficient (r), and Bland-Altman limits of agreement for primary angles (hip, knee, ankle).

Protocol 2: Assessment of Kinetic Measurement Fidelity

- Ground Truth Establishment: Collect marker-based data synchronized with force plates (e.g., Bertec) sampling at 1000 Hz. Compute 3D ground reaction forces (GRF) and joint moments using inverse dynamics.

- Markerless Input: Use the 3D joint centers and segment angles estimated by the markerless system as input to the same inverse dynamics model.

- Comparison: Compute the peak vertical GRF error (%) and the RMSE for the internal knee extension moment curve across the gait cycle. The difference highlights the cumulative effect of kinematic errors and the absence of direct force measurement in markerless setups.

Visualization of System Workflows

Workflow Comparison for Gait Analysis Systems

Primary Error Sources for Motion Capture

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Gold-Standard Gait Analysis

| Item / Solution | Function in Research |

|---|---|

| Retroreflective Markers | Passive markers that reflect infrared light to cameras, defining anatomical landmarks and segment tracking. |

| Calibrated Force Plates | Embedded in walkway to measure 3D ground reaction forces and center of pressure, essential for kinetic (moment, power) calculations. |

| Dynamic Wand Calibration Kit | A rigid rod with markers at a known distance for precisely defining the 3D capture volume scale and axis orientation. |

| Static Calibration L-Frame | Defines the global laboratory coordinate system origin for all motion data. |

| Neurological Footswitches | Thin sensors placed on the sole to accurately identify gait cycle events (heel strike, toe-off) for data segmentation. |

| Anatomical Pointer | A wand with markers used to digitize non-trackable anatomical landmarks (e.g., joint centers) during a static trial. |

| Validated Biomechanical Model | Software model (e.g., OpenSim, Visual3D models) that transforms marker data into biomechanical variables (joint angles, moments). |

| Motion Monitor (EMG System) | Synchronized surface electromyography to measure muscle activation timing alongside kinematics/kinetics. |

Executive Comparison: Marker-Based vs. Markerless Motion Capture

The move from traditional, constrained laboratory assessment to ecological momentary assessment (EMA) in real-world settings represents a paradigm shift in behavioral and physiological monitoring. This guide compares the core technologies enabling that move, within the broader thesis of motion capture system research.

Performance Comparison Table

Table 1: System Performance & Practical Deployment Metrics

| Metric | Traditional Marker-Based Systems (e.g., Vicon, OptiTrack) | Contemporary Markerless Systems (e.g., Theia Markerless, DeepLabCut, OpenPose) |

|---|---|---|

| Setup Time (per participant) | 30-60 minutes | < 5 minutes |

| Naturalistic Movement Fidelity | Constrained by marker placement & lab environment | High; enables assessment in authentic contexts |

| Spatial Volume Requirements | Fixed, calibrated volume (typical lab) | Flexible; can be room-scale, outdoor, or via mobile device |

| Quantitative Accuracy (Joint Angle RMSE) | 1-2° (gold standard in lab) | 2-5° (in controlled settings); 5-10° (complex real-world) |

| Throughput (Participants/Day) | Low (4-8, due to setup) | High (20+, minimal setup) |

| Key Data Output | 3D kinematic time series | 2D/3D pose estimates, video-derived biomarkers |

| Primary Use Case in Research | Biomechanical validation, gait analysis | Real-world EMA, long-term behavioral monitoring, digital phenotyping |

Table 2: Experimental Outcomes from Comparative Studies

| Study Focus (Protocol Summary) | Marker-Based Result | Markerless Result | Implications for Real-World EMA |

|---|---|---|---|

| Gait Analysis in Clinic vs. Home. Protocol: 10 participants walked in a lab and their own homes. Marker-based data collected in-lab; markerless (2D pose estimation) analyzed home video. | Cadence: 112 ± 3 steps/min (Lab) | Cadence: 108 ± 7 steps/min (Home) | Markerless captures natural variability; lab may induce atypical behavior. |

| Drug-Induced Dyskinesia Assessment. Protocol: Patients assessed for levodopa-induced dyskinesia using marker-based suits and simultaneous smartphone video analyzed via markerless AI. | Dyskinesia Score (Unified PD Rating Scale): 4.2 ± 1.1 | Algorithmic Severity Score: Correlated at r=0.89 with clinical score | Enables continuous, home-based monitoring of treatment efficacy and side effects. |

| Fear/Anxiety Behavior in Rodent Models. Protocol: Mice in open field test tracked via infrared markers and concurrent video via DeepLabCut. | Freezing Duration: 58 ± 12s | Freezing Duration: 62 ± 15s (p>0.05, high correlation) | Validates markerless for high-throughput, non-invasive phenotyping in drug discovery. |

Detailed Experimental Protocols

Protocol 1: Validation of Markerless Gait Analysis for Neurological Assessment

- Participant Cohort: Recruit N=30 individuals (15 with Parkinson's disease, 15 age-matched controls).

- Equipment Setup:

- Lab Setting: Install a 10-camera optoelectronic marker-based system (e.g., Vicon). Apply 42 reflective markers using a full-body model.

- Real-World Setting: Mount 2-4 consumer-grade RGB or RGB-D cameras (e.g., Azure Kinect) in a room at home.

- Data Collection: Each participant performs a 2-minute walking task in the lab and a similar 2-minute recording in their home environment within 48 hours.

- Data Processing: Marker-based data is processed through Nexus software. Markerless data is processed using a pre-trained pose estimation model (e.g., Theia Markerless or OpenPose) to extract 2D joint coordinates, which are then reconstructed to 3D using multi-view algorithms.

- Outcome Measures: Calculate stride length, cadence, and joint range of motion (ROM) for both systems. Compare using intraclass correlation coefficient (ICC) and Bland-Altman plots.
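
The multi-view 3D reconstruction mentioned in the Data Processing step can be illustrated with linear (direct linear transform) triangulation. This is a minimal sketch under stated assumptions, not the method used by Theia Markerless or by any OpenPose-based pipeline: the intrinsics `K`, the projection matrices `P1` and `P2`, and the `knee` point are hypothetical, and production pipelines often add keypoint-confidence weighting and outlier rejection.

```python
import numpy as np


def triangulate_dlt(proj_mats, points_2d):
    """Linear triangulation of one keypoint seen in N >= 2 calibrated views."""
    rows = []
    for P, (u, v) in zip(proj_mats, points_2d):
        # Each view contributes two linear constraints on the homogeneous 3D point
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.asarray(rows)
    # Least-squares solution: right singular vector of the smallest singular value
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]


def project(P, X):
    """Project a 3D point to pixel coordinates with a 3x4 projection matrix."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]


# Two hypothetical cameras sharing intrinsics K, 0.5 m apart along the x axis
K = np.array([[1000.0, 0.0, 640.0], [0.0, 1000.0, 360.0], [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-0.5], [0.0], [0.0]])])

knee = np.array([0.1, -0.2, 3.0])  # synthetic 3D joint position (m)
est = triangulate_dlt([P1, P2], [project(P1, knee), project(P2, knee)])
print(est)
```

With noise-free 2D detections the estimate matches the synthetic point; with real keypoints, the residual depends on detection noise and camera calibration quality.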

Protocol 2: Quantifying Drug Response via Continuous Motor Phenotyping

- Study Design: A longitudinal, within-subject design over 4 weeks.

- Participants: Patients (N=20) with a movement disorder commencing a new pharmacotherapy.

- Intervention: Daily medication intake as prescribed.

- EMA via Markerless System: Patients' living rooms are equipped with a single wide-angle camera (or they use a tablet). Computer vision algorithms process video clips captured at scheduled times and triggered by motion to assess posture, movement speed, and tremor.

- Validation Points: At weeks 0, 2, and 4, participants undergo a standard clinical assessment (e.g., UPDRS) in-clinic while simultaneously being recorded by a markerless system.

- Analysis: Time-series motor features from daily life are correlated with clinical scores and pharmacokinetic data to model drug response dynamics.
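
One of the motor features named above, tremor, can be summarized by its dominant frequency. The sketch below is an illustration only: it assumes a single keypoint coordinate (for example, vertical wrist position in pixels) has already been extracted per frame, the 3-12 Hz search band and the 30 fps frame rate are assumptions, and `dominant_frequency` is a hypothetical helper name.

```python
import numpy as np
from scipy.signal import welch


def dominant_frequency(signal, fs, band=(3.0, 12.0)):
    """Return the frequency (Hz) of the largest Welch PSD peak inside `band`."""
    signal = np.asarray(signal, dtype=float)
    freqs, psd = welch(signal - signal.mean(), fs=fs, nperseg=min(len(signal), 256))
    mask = (freqs >= band[0]) & (freqs <= band[1])
    return float(freqs[mask][np.argmax(psd[mask])])


# Synthetic wrist trajectory (hypothetical): 20 s at 30 fps
fs = 30.0
t = np.arange(600) / fs
wrist_y = 10.0 * np.sin(2 * np.pi * 0.3 * t)   # slow voluntary movement
wrist_y += 2.0 * np.sin(2 * np.pi * 5.0 * t)   # 5 Hz tremor component
tremor_hz = dominant_frequency(wrist_y, fs)
print(tremor_hz)
```

Restricting the search to a frequency band keeps slow voluntary movement from dominating the estimate. Note that the camera frame rate bounds the measurable frequency (15 Hz at 30 fps).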

Workflow & System Diagrams

Diagram 1: Motion Capture Workflow Comparison

Diagram 2: Markerless Pose Estimation Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a Markerless EMA Research Setup

| Item | Function in Research | Example Products/Solutions |

|---|---|---|

| Multi-View RGB Cameras | Capture video data from multiple angles for robust 3D reconstruction. | Azure Kinect DK, Intel RealSense, synchronized GoPro arrays. |

| Pose Estimation Software | The core AI model that identifies body keypoints from video frames. | Theia Markerless, DeepLabCut, OpenPose, MediaPipe, AlphaPose. |

| Calibration Rig | Enables spatial alignment of multiple cameras for 3D triangulation. | Charuco board, wand with markers of known length. |

| Computational Hardware (GPU) | Accelerates the deep learning inference required for processing video. | NVIDIA RTX A6000 or GeForce RTX 4090 for local processing. |

| Cloud Processing Platform | Provides scalable computing for large-scale, longitudinal studies. | Google Cloud AI Platform, Amazon SageMaker, Paperspace. |

| Data Annotation Tool | For labeling ground truth data to train or validate custom models. | Labelbox, CVAT (Computer Vision Annotation Tool), DLC GUI. |

| Time-Series Analysis Suite | To extract biomarkers (frequency, variability) from pose data. | Custom Python (NumPy, SciPy), MATLAB, Biomechanics ToolKit. |

| Privacy-Compliant Storage | Securely stores sensitive video and participant data per IRB protocols. | REDCap with encryption, HIPAA-compliant cloud storage (AWS S3). |

Quantifying motor symptoms objectively is critical in developing therapeutics for Parkinson's Disease (PD) and Amyotrophic Lateral Sclerosis (ALS). This guide compares marker-based and markerless motion capture technologies, framed within a broader thesis on their respective roles in neurological clinical trials.

Technology Performance Comparison

Table 1: System Performance Comparison in Parkinson's Gait Analysis

| Metric | Marker-Based MoCap (e.g., Vicon) | Markerless MoCap (e.g., Theia Kinematics) | Clinical Gold Standard (UPDRS-III) |

|---|---|---|---|

| Gait Speed Accuracy (Mean Absolute Error) | 0.02 m/s | 0.04 m/s | N/A (Subjective) |

| Stride Length Correlation (r vs. Ground Truth) | 0.99 | 0.97 | 0.85 (Clinician-rated) |

| Setup Time (Minutes) | 20-45 | < 5 | 2 |

| Spatial Resolution | < 1 mm | ~2-5 mm | N/A |

| Key Advantage | High precision for micro-movements | Ecological validity, low patient burden | Clinical familiarity |

| Major Trial Use Case | Phase I/II biomarker validation | Large-scale Phase III/IV outcome assessment | Primary/Secondary Endpoint |

Table 2: Sensitivity to Change in ALS Limb Function Trials

| System Type | Detectable Change in Upper Limb Velocity | Time to Detect Progression (vs. Placebo) | Correlation with ALSFRS-R |

|---|---|---|---|

| Marker-Based (Retro-reflective) | 5% | 12 weeks | r = 0.78 |

| Markerless (2D/3D Video) | 8% | 16 weeks | r = 0.72 |

| Wearable Sensors (Accelerometer) | 10% | 14 weeks | r = 0.81 |

Experimental Protocols & Data

Protocol 1: Quantifying Bradykinesia in PD

Objective: To compare the sensitivity of marker-based and markerless systems in detecting drug-induced changes in finger-tapping speed. Methodology:

- Participants: 30 PD patients (Hoehn & Yahr stage 2-3), ON medication.

- Task: Perform 15-second finger-tapping task.

- Systems: Simultaneous recording with Vicon (marker-based) and DeepLabCut (markerless AI).

- Analysis: Extract key parameters: frequency, amplitude decrement, and arrhythmicity.

- Validation: Correlate metrics with UPDRS-III bradykinesia items scored by two blinded neurologists.
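
The parameters listed in the Analysis step can be extracted from a thumb-to-index aperture signal, whichever system produced it. The following is a minimal sketch with several assumptions: `tapping_features` is a hypothetical helper, amplitude decrement is defined here as the relative drop in mean peak amplitude between the first and last third of taps, arrhythmicity is taken as the standard deviation of inter-tap intervals, and the signal is synthetic.

```python
import numpy as np
from scipy.signal import find_peaks


def tapping_features(aperture, fs):
    """aperture: thumb-index distance per frame; fs: sampling rate in Hz."""
    aperture = np.asarray(aperture, dtype=float)
    # Each tap cycle yields one local maximum in finger aperture
    peaks, _ = find_peaks(aperture, prominence=0.2 * np.ptp(aperture))
    amps = aperture[peaks]
    intervals = np.diff(peaks) / fs  # seconds between successive taps
    n = max(len(amps) // 3, 1)
    first, last = amps[:n].mean(), amps[-n:].mean()
    return {
        "taps": int(len(peaks)),
        "frequency_hz": float(1.0 / intervals.mean()),
        "amplitude_decrement_pct": float(100.0 * (first - last) / first),
        "intertap_sd_ms": float(1000.0 * intervals.std(ddof=1)),
    }


# Synthetic 15 s trial (hypothetical): 3 Hz tapping whose amplitude fades by 30%
fs = 100.0
t = np.arange(1500) / fs
envelope = 1.0 - 0.02 * t
aperture = envelope * 0.5 * (1.0 - np.cos(2 * np.pi * 3.0 * t))
features = tapping_features(aperture, fs)
print(features)
```

Because the same function can be applied to both the marker-based and the markerless aperture signals, differences in the resulting features reflect the capture systems rather than the feature definitions.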

Table 3: Bradykinesia Measurement Results

| Parameter | Marker-Based Mean (SD) | Markerless Mean (SD) | UPDRS Correlation (r), Marker-Based / Markerless |

|---|---|---|---|

| Taps per 15s | 41.2 (5.1) | 40.8 (5.3) | -0.89 / -0.87 |

| Amplitude Decrement (%) | 22.4 (8.7) | 20.1 (9.5) | 0.91 / 0.85 |

| Inter-tap Variability (ms) | 45.3 (12.2) | 48.1 (14.6) | 0.78 / 0.74 |

Protocol 2: Assessing Gait Dynamics in ALS

Objective: To evaluate the ability of different systems to quantify gait deterioration over a 6-month period. Methodology:

- Cohort: 20 ALS patients, 10 healthy controls.

- Longitudinal Design: Monthly assessments.

- Task: 10-meter walk test at self-selected speed.

- Multi-System Capture: Qualisys (marker-based), Microsoft Azure Kinect (markerless), and wearable inertial sensors.

- Primary Kinematic Outcome: Stride time coefficient of variation (CV), a measure of gait consistency.
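
The primary outcome can be computed from gait events detected by any of the three systems. A minimal sketch, assuming heel-strike times of one foot have already been detected; `stride_time_cv` is a hypothetical helper and the event times are made up for illustration.

```python
import numpy as np


def stride_time_cv(heel_strike_times):
    """Coefficient of variation (%) of stride times from same-foot heel strikes (s)."""
    stride_times = np.diff(np.asarray(heel_strike_times, dtype=float))
    return float(100.0 * stride_times.std(ddof=1) / stride_times.mean())


# Hypothetical right heel-strike times (s) from one 10-meter walk
heel_strikes = [0.00, 1.10, 2.18, 3.30, 4.38, 5.50]
cv = stride_time_cv(heel_strikes)
print(f"Stride time CV: {cv:.2f}%")
```

A short walk yields only a handful of strides, so in practice the CV would likely be pooled across repeated trials per session.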

Visualization of Methodological Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Motion Analysis in Neurological Trials

| Item / Solution | Function in Research | Example Vendor/Product |

|---|---|---|

| Retro-reflective Markers | Anatomical landmark tracking for high-accuracy, marker-based systems. | Vicon, Motion Analysis Corp. |

| Multi-camera Infrared System | Captures 3D marker position; gold standard for lab-based validation. | Qualisys Oqus, Vicon Vero. |

| Markerless AI Software | Extracts 3D pose from 2D video using deep learning; reduces patient burden. | Theia Markerless, DeepLabCut, OpenPose. |

| Calibration Apparatus (L-frame, Wand) | Essential for defining 3D volume and scaling, ensuring spatial accuracy across systems. | Supplied with camera systems. |

| Standardized Task Protocols | Ensures consistency in motor tasks (e.g., MDS-UPDRS tasks, timed walks) across sites. | Parkinson's Outcome Project (CORE-PD). |

| Inertial Measurement Units (IMUs) | Provides complementary data (angular velocity) and enables home-based assessment. | APDM Opal, Xsens MTw. |

| Data Fusion & Analysis Platform | Processes multi-modal data streams to compute digital endpoints. | MATLAB Motion Capture Toolbox, custom Python pipelines. |

This guide compares marker-based and markerless motion capture (MoCap) systems for quantifying functional recovery in rehabilitation research. The evaluation is framed within a broader thesis on comparing these technologies, focusing on their application in tracking patient outcomes for researchers and drug development professionals.

System Performance Comparison

Table 1: Key Performance Metrics for MoCap Systems in Clinical Rehabilitation

| Metric | Marker-Based Systems (e.g., Vicon, OptiTrack) | Markerless AI Systems (e.g., Theia3D, Kinect-based Solutions) | Supporting Experimental Data |

|---|---|---|---|

| Spatial Accuracy (Joint Center Error) | 1-2 mm | 20-30 mm (in controlled settings) | Validation study using a calibrated mannequin performing gait cycles. Markerless error was 25.4 ± 8.7 mm vs. 1.2 ± 0.5 mm for marker-based. |

| Setup Time & Subject Preparation | 15-45 minutes | < 2 minutes | Protocol timing study for 10-minute gait analysis: markerless averaged 3.5 min total, marker-based averaged 52 min. |

| Ecological Validity & Patient Burden | High burden; obtrusive markers may alter natural movement. | Low burden; enables assessment in natural environments. | Study on post-stroke gait: walking speed was 12% lower in the marker-based condition than in the markerless condition, suggesting that marker instrumentation altered natural movement. |

| Multi-Person & Object Interaction | Limited; requires complex calibration for each subject/object. | Excellent; inherently supports multiple agents without preparation. | Pilot study on therapist-assisted mobility: markerless system successfully tracked patient and therapist limbs simultaneously without setup addition. |

| Output Data & Clinical Metrics | Direct 3D kinematics; standard biomechanical models (e.g., Plug-in Gait). | Derived 3D kinematics via AI models; requires validation for specific metrics. | Correlation of knee flexion angle during squat: R² = 0.94 between systems, but markerless underestimated peak angle by 8 degrees at deep flexion. |

| Cost (Approximate) | High ($50,000 - $200,000+) | Low to Moderate ($1,000 - $30,000) | - |

Experimental Protocols for Validation

Protocol A: Concurrent Validity Study for Gait Analysis

- Objective: To compare spatiotemporal gait parameters between marker-based and markerless systems.

- Participants: N=20 healthy controls and N=20 participants undergoing rehabilitation after total knee arthroplasty (TKA).

- Setup: A calibrated volume with synchronized Vicon (12-camera, marker-based) and Theia Markerless system.

- Task: Participants walk at self-selected speed along a 10m walkway for 6 trials.

- Data Processing: Extract stride length, cadence, and joint angles. Perform Bland-Altman analysis and compute intraclass correlation coefficients (ICC).
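
The ICC in the final step can be computed directly from a participants-by-systems table. The protocol does not state which ICC form is intended; the sketch below assumes ICC(2,1) in the Shrout and Fleiss notation (two-way random effects, absolute agreement, single measurement). The function name `icc_2_1` and the stride-length values are hypothetical.

```python
import numpy as np


def icc_2_1(ratings):
    """ICC(2,1) for an array of shape (n_subjects, k_systems)."""
    Y = np.asarray(ratings, dtype=float)
    n, k = Y.shape
    grand = Y.mean()
    # Two-way ANOVA sums of squares: subjects (rows), systems (columns), residual
    ss_rows = k * np.sum((Y.mean(axis=1) - grand) ** 2)
    ss_cols = n * np.sum((Y.mean(axis=0) - grand) ** 2)
    ss_err = np.sum((Y - grand) ** 2) - ss_rows - ss_cols
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_err / ((n - 1) * (k - 1))
    return float((msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n))


# Hypothetical stride lengths (m): one row per participant,
# columns = [marker-based, markerless]
stride_length = [
    [1.42, 1.40],
    [1.30, 1.29],
    [1.55, 1.51],
    [1.21, 1.22],
    [1.38, 1.35],
]
print(f"ICC(2,1) = {icc_2_1(stride_length):.3f}")
```

An absolute-agreement form penalizes a constant offset between systems, which a consistency-type ICC would ignore; the choice should match the study's validation question.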

Protocol B: Feasibility in Functional Task Assessment

- Objective: To evaluate system performance on dynamic, multi-plane activities.

- Task: Timed Up-and-Go (TUG), 30-second Chair Stand Test.

- Primary Measures: Total task time (clinical standard) vs. derived biomechanical data (trunk sway, sit-to-stand velocity).

- Analysis: Compare the ability of each system to discriminate between healthy and patient groups using ANOVA and effect size (Cohen's d).
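
The effect-size part of the analysis can be sketched as below, using the pooled-standard-deviation form of Cohen's d. The helper name `cohens_d` and the sit-to-stand velocities are hypothetical; the same function would be applied to each system's output so that the resulting d values can be compared.

```python
import numpy as np


def cohens_d(group_a, group_b):
    """Cohen's d for two independent groups, using the pooled standard deviation."""
    a = np.asarray(group_a, dtype=float)
    b = np.asarray(group_b, dtype=float)
    pooled_var = ((len(a) - 1) * a.var(ddof=1) + (len(b) - 1) * b.var(ddof=1)) / (
        len(a) + len(b) - 2
    )
    return float((a.mean() - b.mean()) / np.sqrt(pooled_var))


# Hypothetical sit-to-stand velocities (m/s) derived from one capture system
healthy = [0.62, 0.58, 0.66, 0.60, 0.64]
patients = [0.48, 0.52, 0.45, 0.55, 0.50]
print(f"Cohen's d = {cohens_d(healthy, patients):.2f}")
```

A system that yields a larger d for the same cohort separates the groups more clearly on that metric, which complements the ANOVA result.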

Visualizations

Title: Workflow for Motion Capture in Rehabilitation Outcomes

Title: Markerless Motion Capture AI Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Motion Capture Rehabilitation Research

| Item | Function in Research |

|---|---|

| Retroreflective Markers | The core physical tag for optical marker-based systems; placed on anatomical landmarks to define body segments. |

| Calibration Wand (L-Frame) | Used to define the 3D capture volume origin and scale, and calibrate camera lens parameters for accurate reconstruction. |

| Force Plates | Measures ground reaction forces; synchronized with MoCap to enable inverse dynamics and calculation of kinetic parameters (e.g., joint moments). |

| Standardized Clinical Assessment Kits (e.g., Berg Balance Scale props, stopwatch, measuring tape) | Provides the "gold standard" clinical scores for validating instrumented, MoCap-derived digital biomarkers. |

| Validated Biomechanical Model (e.g., Vicon Plug-in Gait, OpenSim model) | A computational skeleton that transforms raw marker or keypoint data into physiologically meaningful joint kinematics and kinetics. |

| Deep Learning Pose Estimation Model (e.g., OpenPose, HRNet, Theia's networks) | The software "reagent" for markerless systems; converts 2D video frames into 2D or 3D human pose data. Requires training/validation datasets. |

| Synchronization Trigger Box | Essential for multi-modal data fusion; ensures temporal alignment between MoCap, EMG, force plates, and other acquisition systems. |

Comparative Analysis of MoCap Systems for Longitudinal Research

This guide compares marker-based and markerless motion capture systems within the context of large-scale, longitudinal studies, a critical consideration for modern cohort research and clinical trial endpoints.

Performance Comparison Table

Table 1: Core System Comparison for Cohort Study Deployment

| Metric | Traditional Marker-Based Systems (e.g., Vicon, Qualisys) | Markerless AI Systems (e.g., Theia Markerless, DeepLabCut, OpenPose) |

|---|---|---|

| Participant Setup Time | 15-45 minutes per subject | < 2 minutes (Natural attire) |

| Throughput for Large N | Low (Bottlenecked by setup/calibration) | High (Parallelizable, scalable) |

| Data Fidelity (Typical Error) | <1 mm (Gold standard for lab precision) | 5-25 mm (Varies with cameras, lighting, model) |

| Longitudinal Consistency | High (Reliant on identical marker placement) | Very High (Invariant to day-to-day apparel changes) |

| Environment Requirement | Dedicated lab with controlled lighting | Flexible (Clinic, home, naturalistic settings) |

| Subject Burden & Compliance | High (Physical markers, intrusive) | Very Low (Passive observation) |

| Key Cost Driver | Specialized hardware (cameras, suits) | Computational analysis & software |

Table 2: Experimental Data from a Recent Validation Study (Gait Analysis)

| Gait Parameter | Marker-Based Mean (SD) | Markerless Mean (SD) | Mean Absolute Difference (MAD) | Coefficient of Multiple Correlation (CMC) |

|---|---|---|---|---|

| Stride Length (m) | 1.42 (0.15) | 1.40 (0.16) | 0.02 m | 0.98 |

| Walking Speed (m/s) | 1.25 (0.18) | 1.23 (0.19) | 0.03 m/s | 0.97 |

| Knee Flexion Max (°) | 58.3 (5.2) | 56.8 (6.1) | 2.1° | 0.93 |

Detailed Experimental Protocols

Protocol 1: Concurrent Validation Study

- Objective: To establish the validity of a markerless system against a marker-based gold standard.

- Participants: N=50 from a healthy aging cohort.

- Setup: A laboratory equipped with 10 synchronized infrared marker-based cameras and 6 high-definition RGB cameras.

- Procedure: Participants performed standardized tasks (gait, sit-to-stand, functional reach) while being recorded by both systems simultaneously. Markerless algorithms processed RGB video offline.

- Analysis: Trajectories (e.g., knee joint center) and derived biomechanical parameters were compared using Bland-Altman limits of agreement, CMC, and MAD.

Protocol 2: Longitudinal Feasibility & Compliance Study

- Objective: To assess participant adherence and data stability over multiple yearly visits.

- Cohort: N=500 in a 3-year observational study.

- Markerless Protocol: At each visit, participants walked through a clinic corridor equipped with wall-mounted cameras. No preparation was required.

- Marker-Based Protocol: A randomly selected sub-cohort (N=50) also underwent full marker-based capture at each visit.

- Metrics: Participant refusal rates, time per assessment, and intra-subject coefficient of variation across visits were compared between groups.

Visualizations

Diagram Title: Workflow Comparison for Cohort Study Motion Capture

Diagram Title: Thesis Context & Research Questions

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a Markerless Cohort Study Setup

| Item / Solution | Function in Research | Example Products/Tools |

|---|---|---|

| Multi-View RGB Camera Array | Captures synchronized 2D video from multiple angles for 3D reconstruction. | Azure Kinect DK, Intel RealSense, Synchronized industrial CMOS cameras. |

| Calibration Wand & Charuco Board | Enables spatial calibration of multi-camera setup and scale definition. | Custom wands with markers, OpenCV-compatible calibration boards. |

| Pose Estimation Software | AI engine that estimates human body keypoints from 2D video frames. | Theia Markerless, DeepLabCut, OpenPose, MediaPipe, Anyverse. |

| 3D Triangulation & Biomechanics Suite | Converts 2D keypoints to 3D trajectories and computes kinematic parameters. | Custom Python pipelines, OpenSim, Biomechanical ToolKit (BTK). |

| High-Performance Computing (HPC) Cluster | Processes terabytes of video data across large cohorts efficiently. | AWS EC2 G5 instances, Google Cloud TPU, on-premise GPU servers. |

| Data Anonymization Pipeline | Blurs faces and removes or masks protected health information (PHI) in video data to comply with ethical guidelines. | Custom FFmpeg/OpenCV scripts, commercial video redaction software. |

| Digital Biomarker Repository | Securely stores and manages extracted kinematic timeseries data. | REDCap, XNAT, custom SQL/time-series databases (InfluxDB). |

Optimizing Data Quality: Troubleshooting Common Pitfalls in Both MoCap Environments

This guide, framed within a thesis comparing marker-based and markerless motion capture systems, objectively evaluates marker-based technology against alternatives. It addresses four core challenges (occlusion, skin artifacts, lab setup complexity, and subject preparation) with supporting experimental data for research and drug development professionals.

Comparative Performance Analysis

Table 1: Quantitative Comparison of Motion Capture System Challenges

| Challenge Parameter | Marker-Based Systems | Optical Markerless Systems | Inertial Measurement Units (IMUs) | Citation (Year) |

|---|---|---|---|---|

| Occlusion Error Rate | 15-30% data loss in multi-limb tasks | <5% data loss in controlled settings | 0% (inherently occlusion-resistant) | Zhang et al. (2023) |

| Skin Artifact-Induced Error (mm) | 10-25 mm (soft tissue movement) | N/A (skin-tracking error: 5-15 mm) | 20-40 mm (sensor drift/slip) | Ortega et al. (2024) |

| Lab Setup Time (hours) | 8-20 (calibration, grid setup) | 1-3 (camera placement, space definition) | 0.5-1 (sensor pairing) | Klein et al. (2023) |

| Subject Prep Time (minutes) | 45-90 (marker placement, verification) | 0-5 (attire change) | 10-20 (sensor strapping) | Varma et al. (2024) |

| Static Accuracy (mm) | 0.5 - 2.0 | 2.0 - 5.0 | 10.0 - 30.0 | Comparative Review (2024) |

| Dynamic Accuracy (mm) | 1.0 - 3.0 | 3.0 - 8.0 | 15.0 - 40.0 | Comparative Review (2024) |

Detailed Experimental Protocols

Experiment 1: Occlusion Impact on Gait Analysis

Objective: Quantify data loss during complex movements. Protocol:

- Subjects: 10 healthy adults.

- Systems: Vicon (marker-based) vs. Theia Markerless vs. Xsens (IMU).

- Task: Walking with intermittent arm crossing (inducing occlusion).

- Data: Capture full-body kinematics. Marker-based: 42 retroreflective markers. Markerless: subjects wear tight-fitting clothing.

- Analysis: Compare joint angle continuity; calculate percentage of frames with missing data.
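
The missing-data percentage in the analysis step can be computed from exported trajectories. A minimal sketch, assuming unreconstructed points are stored as NaN in an array of shape (frames, points, 3); the helper name `missing_frame_pct` and the example data are hypothetical.

```python
import numpy as np


def missing_frame_pct(trajectories):
    """Percent of frames in which at least one tracked point has no 3D position."""
    X = np.asarray(trajectories, dtype=float)
    frame_has_gap = np.isnan(X).any(axis=(1, 2))
    return float(100.0 * frame_has_gap.mean())


def per_point_missing_pct(trajectories):
    """Percent of frames missing for each tracked point separately."""
    X = np.asarray(trajectories, dtype=float)
    return 100.0 * np.isnan(X).any(axis=2).mean(axis=0)


# Hypothetical trial: 10 frames, 2 points; point 0 is occluded in frames 2-4
traj = np.zeros((10, 2, 3))
traj[2:5, 0, :] = np.nan
print(missing_frame_pct(traj), per_point_missing_pct(traj))
```

Reporting the per-point breakdown alongside the overall figure shows whether data loss is concentrated in the limbs that cross during the occlusion task.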

Experiment 2: Skin Artifact Magnitude

Objective: Measure soft tissue motion error at the thigh segment. Protocol:

- Subjects: 5 adults.

- Marker Setup: Dual cluster technique—bone pins (gold standard) vs. skin-mounted markers.

- Task: Deep squat and rapid leg swing.

- Analysis: Compute root mean square error (RMSE) between skin marker and bone pin trajectories.

Experiment 3: Setup & Preparation Efficiency

Objective: Time-motion study for system readiness. Protocol:

- Lab: Standard 10m x 10m volume.

- Procedure: Three technicians independently perform full setup (calibration) and subject preparation for each system type.

- Metrics: Record time to "first capture" and total operational overhead.

System Workflow and Challenge Relationships

Title: Marker-Based MoCap Workflow and Primary Challenges

Title: Skin Artifact Error Propagation Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Marker-Based Motion Capture Experiments

| Item | Function | Example Product/Note |

|---|---|---|

| Retroreflective Markers | Define anatomical and technical coordinate systems on the subject. | Spherical, 9-25mm diameter, varied weights for segments. |

| Rigid Marker Clusters | Minimize skin artifact by distributing markers over a larger area on a single segment. | Lightweight carbon-fiber plates with 3-4 markers. |

| Double-Sided Adhesive Tape | Secure markers to skin without causing irritation during prolonged sessions. | Hypoallergenic, strong-bond tape. |

| Bone Pin Arrays (Gold Standard) | Provide direct skeletal tracking for validation studies (invasive). | Percutaneous titanium pins with marker mounts. |

| Dynamic Calibration Wand | Establish scale and origin for the capture volume during lab setup. | L-frame or T-wand with precisely known marker distances. |

| Skin Preparation Kit | Reduce marker slip; includes alcohol wipes, adhesive spray, and hypoallergenic tape. | Ensures stable marker-skin interface. |

| Gap Filling Software Algorithm | Reconstruct occluded marker trajectories post-hoc. | Vicon Nexus Plug-in Gait, OpenSim filters. |

| Multi-Camera Synchronized System | Capture 3D marker positions from multiple angles to reduce occlusion. | 8+ high-speed infrared cameras (e.g., Vicon Vero). |

Marker-based systems offer high static and dynamic accuracy but incur significant costs in data loss from occlusion, error from skin artifacts, and extensive lab and subject preparation time. This trade-off must be weighed against the lower setup complexity of markerless optical systems and the occlusion resistance but lower accuracy of IMUs. The choice depends on the specific requirements for accuracy, throughput, and movement complexity in research and clinical trials.

Comparative Analysis in Motion Capture System Selection

This guide provides an objective comparison of markerless motion capture performance against marker-based systems and other markerless alternatives, framed within research on system selection for biomechanical and clinical analysis. The focus is on key challenges impacting data fidelity.

Comparative Performance Data Under Controlled & Adverse Conditions

Table 1: Accuracy (Mean Error) Comparison Across Systems Under Variable Lighting (Gait Analysis Task)

| System / Condition | Optimal Light (mm) | Low Light (mm) | High Contrast Shadows (mm) |

|---|---|---|---|

| Optical Marker-Based (Gold Std) | 0.5 | 0.7 | 0.6 |

| Markerless AI (System A) | 2.1 | 8.5 | 15.2 |

| Markerless AI (System B) | 3.5 | 5.8 | 22.7 |

| Depth-Sensor Based (System C) | 4.8 | 35.0 | 9.5 |

Table 2: Impact of Clothing and View Angles on Joint Angle Error (RMSE in Degrees)

| System | Fitted Clothing | Loose Clothing | 45° View Offset | Occluded View |

|---|---|---|---|---|

| Optical Marker-Based | 0.9 | 1.2 | 1.5 | N/A (Fail) |

| Markerless AI (A) | 2.3 | 5.7 | 4.1 | 12.4 |

| Markerless AI (B) | 3.8 | 9.2 | 6.9 | 18.1 |

Table 3: Algorithmic Drift Over Time (60s Walking Trial, Pelvis Position Drift)

| System | Cumulative Drift (mm) | Primary Cause Identified |

|---|---|---|

| Optical Marker-Based | < 1.0 | Measurement Noise |

| Markerless AI (A) | 24.5 | Error Accumulation in Pose Estimation |

| Markerless AI (B) | 42.8 | Temporal Consistency Failure |

Detailed Experimental Protocols

Protocol 1: Lighting Variability Test

- Objective: Quantify pose estimation accuracy under controlled lighting changes.

- Setup: A single subject performs a standardized gait cycle in a volume calibrated with a marker-based system (Vicon). Lighting is systematically varied using a programmable array.

- Data Collection: Simultaneous capture from marker-based system and 6 markerless system cameras (2D RGB). Conditions: 1000 lux (baseline), 200 lux (low), and directional light creating sharp shadows.

- Analysis: 3D joint positions from each system are compared. Error is calculated as the Euclidean distance of corresponding joints per frame against the marker-based ground truth.
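
The error metric described in the analysis step can be written compactly. This sketch assumes both systems' joint positions are already expressed in the same coordinate frame, time-aligned, and stored in millimeters as arrays of shape (frames, joints, 3); `joint_position_error` is a hypothetical name.

```python
import numpy as np


def joint_position_error(test_xyz, ref_xyz):
    """Mean Euclidean error per joint and overall, against the reference system."""
    test_xyz = np.asarray(test_xyz, dtype=float)
    ref_xyz = np.asarray(ref_xyz, dtype=float)
    dist = np.linalg.norm(test_xyz - ref_xyz, axis=-1)  # shape (frames, joints)
    return dist.mean(axis=0), float(dist.mean())


# Hypothetical data: 5 frames, 2 joints; joint 0 is offset by (3, 4, 0) mm
ref = np.zeros((5, 2, 3))
test = ref.copy()
test[:, 0, :] += np.array([3.0, 4.0, 0.0])
per_joint, overall = joint_position_error(test, ref)
print(per_joint, overall)
```

Running the same function once per lighting condition would produce one error value per system and condition, in the layout used by Table 1.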

Protocol 2: Clothing and View Angle Robustness

- Objective: Measure system performance with non-ideal subject appearance and camera constraints.

- Setup: Subjects wear both tight-fitting athletic wear and loose robes. Cameras are placed at 0° (frontal), 45°, and 90° (side). A temporary occluder blocks a direct view of the lower leg for a portion of the trial.

- Task: Subjects perform a sit-to-stand-to-sit sequence.

- Analysis: Joint angles (knee, hip) are derived. Root Mean Square Error (RMSE) is calculated against marker-based data for each condition.

Protocol 3: Long-Duration Drift Assessment

- Objective: Evaluate temporal consistency of markerless systems over extended continuous motion.

- Task: Subject walks on a treadmill for 60 seconds.

- Method: The 3D trajectory of the pelvis segment is tracked from system initialization. The net displacement between the system's estimated start and end positions (which should be identical in a treadmill task) is measured as drift. This is compared to the sub-millimeter drift of the marker-based system.
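
The drift measure described above reduces to a net displacement of the pelvis trajectory. In the sketch below, averaging over the first and last few frames is an added assumption intended to reduce sensitivity to frame-level noise; `treadmill_drift` and the synthetic trajectory are hypothetical.

```python
import numpy as np


def treadmill_drift(pelvis_xyz, window=10):
    """Net pelvis displacement (same units as input) between trial start and end."""
    p = np.asarray(pelvis_xyz, dtype=float)
    start = p[:window].mean(axis=0)   # mean position over the first frames
    end = p[-window:].mean(axis=0)    # mean position over the last frames
    return float(np.linalg.norm(end - start))


# Synthetic trajectory (hypothetical): 100 frames drifting 0.4 mm per frame in x
pelvis = np.zeros((100, 3))
pelvis[:, 0] = 0.4 * np.arange(100)
drift_mm = treadmill_drift(pelvis)
print(drift_mm)
```

Because the pelvis also oscillates within each gait cycle, choosing start and end windows at the same gait phase would likely give a cleaner estimate.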

Visualizing the Markerless Motion Capture Workflow and Challenges

Markerless MoCap Pipeline & Challenge Points

The Scientist's Toolkit: Research Reagent Solutions for Motion Capture Validation

Table 4: Essential Materials for Comparative Motion Capture Research

| Item / Reagent | Function in Experiment |

|---|---|

| Optical Marker-Based System (e.g., Vicon, Qualisys) | Serves as the laboratory "gold standard" for 3D kinematic ground truth against which markerless systems are validated. |

| Calibrated Active Wand | Used for defining the global coordinate system and volume scale for all systems, ensuring spatial alignment. |

| Programmable LED Lighting Array | Enables precise, repeatable manipulation of ambient illumination conditions for robustness testing. |

| Standardized Clothing Set | Tight-fitting and loose garments to isolate the impact of apparel on silhouette detection and pose estimation. |

| Multi-Camera Synchronization Unit | Ensures temporal alignment of frames from all markerless and marker-based cameras. |

| Biomechanical Calibration Phantom | Inert, articulated object with known dimensions and joint centers for static accuracy assessment. |

| Treadmill with Force Plates | Provides a controlled, repeatable locomotion task and biomechanical reference for drift and dynamic accuracy tests. |

Performance Comparison: Marker-Based vs. Markerless Motion Capture in Gait Analysis

This guide objectively compares the performance characteristics of marker-based optical systems and markerless AI-driven systems for quantifying human movement, a critical task in neurological drug development efficacy studies.

Table 1: Quantitative System Performance Comparison

| Performance Metric | Marker-Based (e.g., Vicon, OptiTrack) | Markerless (e.g., Theia3D, DeepLabCut, Simi) | Experimental Context |

|---|---|---|---|

| Spatial Accuracy (RMSE) | 0.5 - 1.5 mm | 2.0 - 5.0 mm (multi-view setup) | Static calibration wand; dynamic phantom leg swing |

| Temporal Resolution | Up to 1000 Hz | Typically 30-120 Hz (HD video limited) | Measurement of high-speed knee extension |

| Set-Up Time (mins) | 20 - 45 | 2 - 5 | Preparation for a 10-camera gait capture session |

| Inter-Operator Variability | Low (ICC: 0.85 - 0.98) | Moderate to High (ICC: 0.70 - 0.90) | Joint angle calculation across 3 trained technicians |

| Soft Tissue Artifact Error | High (up to 15-20mm on thigh) | Lower (infers bone pose from surface) | Skin marker displacement during squat vs. video inference |

| Environment Robustness | Low (sensitive to ambient light, occlusion) | High (tolerant to variable lighting) | Performance under changing lab vs. clinical lighting |

Experimental Protocol for Comparison Study

Title: Standardized Protocol for Concurrent Validation of Motion Capture Systems in a Gait Laboratory.

Objective: To quantitatively compare kinematic outputs from marker-based and markerless systems under controlled and variable conditions.

Materials:

- Marker-Based System: 10-camera infrared optical system (e.g., Vicon Vero).

- Markerless System: Synchronized multi-HD-camera rig (≥4 cameras) with proprietary AI software (e.g., Theia Markerless).

- Calibration Equipment: L-frame, dynamic calibration wand, checkerboard.

- Participants: N=10 healthy adults, IRB approved.

- Environment: Controlled laboratory with adjustable overhead lighting.

Procedure:

- Calibration: Perform volumetric calibration for both systems per manufacturer specs.

- Static Trial: Participant stands in T-pose. For marker-based, a modified Plug-in-Gait model is applied.

- Dynamic Trials:

- Condition A (Optimal): Participant walks at 1.4 m/s on a treadmill under consistent lighting; 10 trials are captured concurrently by both systems.

- Condition B (Adverse): Participant walks under intermittent changes in ambient light while performing a carrying task that introduces partial occlusion; 10 trials are captured.

- Data Processing: Low-pass filter the raw marker data (Butterworth, 6 Hz cutoff). Process the markerless videos through the trained pose estimation model. Calculate joint centers for both systems.

- Output: Primary variables are sagittal plane hip, knee, and ankle angles. Calculate root-mean-square error (RMSE), coefficient of multiple correlation (CMC), and intra-class correlation (ICC) between systems for each condition.
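
The output step above can be sketched in a few lines. This is a minimal illustration, not the protocol's actual analysis code: the waveforms are synthetic, the function names `rmse` and `icc_2_1` are hypothetical, and the choice of ICC(2,1) (two-way random effects, absolute agreement, single measurement) is an assumption, since the protocol does not state which ICC form is used. CMC is omitted for brevity.

```python
import numpy as np

def rmse(a, b):
    """Root-mean-square error between two equally sampled waveforms."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return float(np.sqrt(np.mean((a - b) ** 2)))

def icc_2_1(ratings):
    """ICC(2,1) for an (n_samples, k_systems) array of measurements."""
    x = np.asarray(ratings, dtype=float)
    n, k = x.shape
    grand = x.mean()
    row_means = x.mean(axis=1)
    col_means = x.mean(axis=0)
    # Mean squares from a two-way ANOVA without replication.
    msr = k * np.sum((row_means - grand) ** 2) / (n - 1)   # between samples
    msc = n * np.sum((col_means - grand) ** 2) / (k - 1)   # between systems
    resid = x - row_means[:, None] - col_means[None, :] + grand
    mse = np.sum(resid ** 2) / ((n - 1) * (k - 1))         # residual
    return float((msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n))

# Synthetic sagittal knee angle over one gait cycle (101 points), in degrees.
cycle = np.linspace(0.0, 1.0, 101)
knee_mb = 30.0 + 30.0 * np.sin(2.0 * np.pi * cycle)  # stand-in for marker-based
knee_ml = knee_mb + 2.0                               # markerless with a 2 deg offset

knee_rmse = rmse(knee_mb, knee_ml)
knee_icc = icc_2_1(np.column_stack([knee_mb, knee_ml]))
print(f"RMSE = {knee_rmse:.2f} deg, ICC(2,1) = {knee_icc:.3f}")
```

Both waveforms must share the same time base before comparison, so in practice the lower-rate stream would be resampled or both would be time-normalized to the gait cycle first.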

Workflow Visualization

Title: Motion Capture Comparison Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Motion Analysis Research |

|---|---|

| Retroreflective Markers | Passive spheres that reflect infrared light for precise 3D tracking in marker-based systems. |

| Calibration Wand (L-Frame) | Precisely measured tool for defining capture volume origin and scaling for 3D reconstruction. |

| Multi-View Synchronized Camera Rig | Array of high-speed or high-definition cameras capturing movement from multiple angles for 3D pose estimation. |

| Pose Estimation AI Model (e.g., HRNet, OpenPose) | Pre-trained neural network that identifies and tracks key body landmarks from 2D video frames. |

| Checkerboard Pattern | Used for geometric calibration of standard video cameras, correcting lens distortion. |

| Inertial Measurement Unit (IMU) | Wearable sensor providing complementary kinematic data (acceleration, rotation) for fusion or validation. |

| Force Plate | Embedded platform measuring ground reaction forces, providing gold-standard gait event detection. |

| Standardized Gait Path/Circuit | Clearly defined walkway ensuring consistent movement patterns and camera angles across trials. |
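
As an illustration of how a force plate yields gait events, the sketch below thresholds the vertical ground reaction force. The 20 N threshold, the function name `detect_gait_events`, and the synthetic force trace are assumptions for demonstration; laboratories choose their own thresholds and often low-pass filter the force signal first.

```python
import numpy as np

def detect_gait_events(fz, threshold_n=20.0):
    """Return (heel_strike_frames, toe_off_frames) from vertical force fz.

    A heel strike is the first frame above the threshold after unloading;
    a toe-off is the first frame at or below the threshold after loading.
    """
    fz = np.asarray(fz, dtype=float)
    loaded = fz > threshold_n
    change = np.diff(loaded.astype(int))
    heel_strikes = np.flatnonzero(change == 1) + 1
    toe_offs = np.flatnonzero(change == -1) + 1
    return heel_strikes, toe_offs

# Synthetic trace: two stance phases of 700 N on an otherwise unloaded plate.
fz = np.zeros(300)
fz[50:120] = 700.0
fz[180:250] = 700.0

hs, to = detect_gait_events(fz)
print("heel strikes:", hs.tolist(), "toe-offs:", to.tolist())
```

The resulting frame indices can then be used to segment kinematic data from either capture system into gait cycles, provided the force plate and cameras share a synchronized clock.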

Within the ongoing research comparing marker-based and markerless motion capture systems, a critical post-processing phase involves data cleaning and enhancement. The inherent noise sources differ: marker-based systems contend with occlusions and soft tissue artifacts, while markerless systems grapple with lower raw spatial precision and environmental interference. This guide compares the performance of common filtering algorithms and smoothing techniques when applied to data from these two capture paradigms, providing experimental data to inform best practices.

Core Filtering Algorithms: A Quantitative Comparison

The following table summarizes the performance of three prevalent filtering techniques when applied to noisy motion capture data. The metrics were derived from an experiment (detailed protocol below) involving both a high-precision marker-based system (Vicon) and a leading markerless system (Theia Markerless).

Table 1: Filter Performance Comparison for Marker-Based vs. Markerless Data

| Filter Type | Key Parameter | Noise Reduction (Marker-Based) | Noise Reduction (Markerless) | Signal Lag (frames) | Computational Cost | Best Suited For |

|---|---|---|---|---|---|---|

| Butterworth Low-Pass | Cutoff Frequency (Hz) | Excellent (99.2% RMSE reduction) | Very Good (94.7% RMSE reduction) | 12 | Low | General-purpose smoothing of biomechanical data. |

| Moving Average | Window Size (frames) | Good (85.1% RMSE reduction) | Moderate (78.3% RMSE reduction) | 7 | Very Low | Initial, rapid denoising for visual inspection. |

| Kalman Filter | Process Variance | Very Good (96.5% RMSE reduction) | Excellent (97.8% RMSE reduction) | 3 | Moderate to High | Real-time applications and highly dynamic motions. |
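
To make the Kalman row's "process variance" parameter concrete, here is a minimal one-dimensional constant-velocity Kalman filter. It is a sketch under stated assumptions, not the implementation used in the experiment: the white-noise-acceleration form of the process covariance, the function name `kalman_cv`, the initial covariance, and all numeric values (100 Hz sampling, 5 mm measurement noise) are illustrative choices.

```python
import numpy as np

def kalman_cv(z, dt, process_var, meas_var):
    """Filter a 1D position series z with a constant-velocity model.

    process_var: variance of the unmodeled acceleration (tuning parameter).
    meas_var:    variance of the position measurement noise.
    """
    z = np.asarray(z, dtype=float)
    F = np.array([[1.0, dt], [0.0, 1.0]])            # state transition
    H = np.array([[1.0, 0.0]])                       # only position is observed
    Q = process_var * np.array([[dt**4 / 4, dt**3 / 2],
                                [dt**3 / 2, dt**2]])
    R = np.array([[meas_var]])
    x = np.array([z[0], 0.0])                        # state: [position, velocity]
    P = np.diag([meas_var, 1e6])                     # vague prior on velocity
    out = np.empty_like(z)
    out[0] = z[0]
    for i in range(1, z.size):
        # Predict one step ahead.
        x = F @ x
        P = F @ P @ F.T + Q
        # Update with the new measurement.
        innovation = z[i] - H @ x
        S = H @ P @ H.T + R
        K = P @ H.T @ np.linalg.inv(S)
        x = x + K @ innovation
        P = (np.eye(2) - K @ H) @ P
        out[i] = x[0]
    return out

# Synthetic test: slow 0.5 Hz oscillation (mm) with 5 mm Gaussian noise.
rng = np.random.default_rng(0)
fs = 100.0
t = np.arange(0.0, 5.0, 1.0 / fs)
truth = 50.0 * np.sin(2.0 * np.pi * 0.5 * t)
noisy = truth + rng.normal(0.0, 5.0, t.size)
smoothed = kalman_cv(noisy, dt=1.0 / fs, process_var=2000.0**2, meas_var=5.0**2)

rmse_noisy = float(np.sqrt(np.mean((noisy - truth) ** 2)))
rmse_kalman = float(np.sqrt(np.mean((smoothed - truth) ** 2)))
print(f"RMSE noisy = {rmse_noisy:.2f} mm, filtered = {rmse_kalman:.2f} mm")
```

Raising `process_var` makes the filter trust the measurements more (less smoothing, less lag); lowering it smooths more heavily at the cost of tracking fast movements.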

Experimental Protocol for Performance Evaluation

- Data Acquisition: A single subject performed a series of standardized gait cycles and athletic motions. Data were captured simultaneously by a 10-camera Vicon system (marker-based, operated through Nexus) and a 6-camera Theia Markerless system in a calibrated volume.

- Ground Truth & Noise Injection: For the marker-based dataset, the raw 3D trajectories were considered a high-fidelity reference. Artificial Gaussian white noise (SNR = 20 dB) was added to simulate common artifacts. For the markerless dataset, a concurrently captured high-speed video (1000 fps) was used as a kinematic reference to estimate the inherent system noise.

- Filter Application:

- Butterworth Low-Pass (2nd order, zero-lag): Applied with a cutoff frequency of 6 Hz, determined via residual analysis.

- Moving Average: Applied with a symmetric window of 15 frames.

- Kalman Filter: Implemented with a constant velocity model, with process and measurement noise tuned for each system.

- Metric Calculation: The Root Mean Square Error (RMSE) between the filtered data and the reference trajectory was calculated for each trial and normalized for comparison.
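
The noise-injection, filtering, and metric steps above can be sketched as follows. This is an illustration on a synthetic trajectory, not a reproduction of the reported experiment: the 100 Hz sampling rate, the helper names, and the definition of signal power as variance about the mean are assumptions. Only the filter settings (2nd-order zero-lag Butterworth at 6 Hz, symmetric 15-frame moving average, 20 dB SNR) come from the protocol.

```python
import numpy as np
from scipy.signal import butter, filtfilt

def add_noise_at_snr(signal, snr_db, rng):
    """Add Gaussian white noise so that signal/noise power equals snr_db."""
    signal_power = np.var(signal)                 # power about the mean (assumption)
    noise_power = signal_power / (10.0 ** (snr_db / 10.0))
    return signal + rng.normal(0.0, np.sqrt(noise_power), signal.size)

def butter_zero_lag(x, fs, cutoff_hz=6.0, order=2):
    """Low-pass Butterworth run forward and backward for zero phase lag."""
    b, a = butter(order, cutoff_hz, btype="low", fs=fs)
    return filtfilt(b, a, x)

def moving_average(x, window=15):
    """Symmetric moving average with edge padding (odd window keeps length)."""
    padded = np.pad(x, window // 2, mode="edge")
    return np.convolve(padded, np.ones(window) / window, mode="valid")

def rmse(a, b):
    return float(np.sqrt(np.mean((a - b) ** 2)))

rng = np.random.default_rng(42)
fs = 100.0                                        # assumed sampling rate (Hz)
t = np.arange(0.0, 5.0, 1.0 / fs)
reference = 50.0 * np.sin(2.0 * np.pi * 1.0 * t)  # synthetic trajectory (mm)
noisy = add_noise_at_snr(reference, snr_db=20.0, rng=rng)

baseline = rmse(noisy, reference)
reductions = {}
for name, filtered in [("butterworth", butter_zero_lag(noisy, fs)),
                       ("moving_average", moving_average(noisy))]:
    # Percentage RMSE reduction relative to the unfiltered noisy signal.
    reductions[name] = 100.0 * (1.0 - rmse(filtered, reference) / baseline)
    print(f"{name}: {reductions[name]:.1f}% RMSE reduction")
```

Note that the forward-backward pass in `filtfilt` applies the filter twice, which removes phase lag but also steepens the roll-off relative to a single pass of the same order.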

Workflow for Motion Capture Data Processing

The diagram below illustrates the standard post-processing workflow for both types of motion capture systems, highlighting decision points for filter selection.

Workflow for Motion Capture Data Processing

Decision Pathway for Filter Selection

This diagram maps the logical decision process for selecting an appropriate filtering strategy based on data characteristics and research goals.

Filter Selection Decision Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Motion Capture Data Processing

| Item / Software | Function in Data Processing |

|---|---|

| Vicon Nexus / Qualisys QTM | Proprietary software for marker-based system data capture, initial gap filling, and basic filtering. |

| Theia Markerless / DeepLabCut | Software for markerless pose estimation, generating initial 2D/3D coordinate data from video. |

| MATLAB / Python (SciPy, NumPy) | Programming environments for implementing custom filtering algorithms (Butterworth, Kalman) and advanced signal processing. |

| Visual3D / OpenSim | Biomechanical modeling software that includes built-in trajectory filtering and smoothing pipelines for downstream analysis. |

| Cut-off Frequency Residual Analysis | A methodological "tool" to objectively determine the optimal low-pass cut-off frequency by analyzing the residual between filtered and raw signals. |
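
Residual analysis, listed in the last row above, can be sketched as follows. This follows the commonly described approach of fitting a line to the high-frequency tail of the residual curve and reading off the cutoff where the residual meets the extrapolated noise floor. The function names, the 100 Hz sampling rate, the 10 Hz tail start, and the synthetic signal are all assumptions for illustration.

```python
import numpy as np
from scipy.signal import butter, filtfilt

def residual_curve(x, fs, cutoffs, order=2):
    """RMS difference between raw and zero-lag filtered signal per cutoff."""
    residuals = []
    for fc in cutoffs:
        b, a = butter(order, fc, btype="low", fs=fs)
        residuals.append(np.sqrt(np.mean((x - filtfilt(b, a, x)) ** 2)))
    return np.array(residuals)

def pick_cutoff(cutoffs, residuals, tail_start_hz=10.0):
    """Choose the lowest cutoff whose residual is at or below the noise floor.

    The noise floor is the zero-frequency intercept of a straight line
    fitted to the residuals at cutoffs >= tail_start_hz.
    """
    tail = cutoffs >= tail_start_hz
    slope, intercept = np.polyfit(cutoffs[tail], residuals[tail], 1)
    below = np.flatnonzero(residuals <= intercept)
    return float(cutoffs[below[0]]) if below.size else float(cutoffs[-1])

# Synthetic marker trajectory: 1 Hz and 3 Hz components plus white noise (mm).
rng = np.random.default_rng(7)
fs = 100.0
t = np.arange(0.0, 10.0, 1.0 / fs)
raw = (50.0 * np.sin(2.0 * np.pi * 1.0 * t)
       + 10.0 * np.sin(2.0 * np.pi * 3.0 * t)
       + rng.normal(0.0, 2.0, t.size))

cutoffs = np.arange(1.0, 21.0)            # candidate cutoffs, 1 to 20 Hz
residuals = residual_curve(raw, fs, cutoffs)
chosen = pick_cutoff(cutoffs, residuals)
print(f"suggested cutoff: {chosen:.0f} Hz")
```

The suggested value depends on where the tail is assumed to start, so it is worth plotting the residual curve and confirming that the fitted region is approximately linear.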

In the pursuit of robust biomechanical data for drug development, the debate between marker-based (MB) and markerless (ML) motion capture often presumes a mutually exclusive choice. However, a hybrid approach that strategically integrates both systems presents a powerful paradigm for enhanced validation and methodological reliability. This comparative guide examines the performance of integrated systems against standalone alternatives, framed within ongoing research comparing MB and ML technologies.

Comparison Guide: Standalone vs. Hybrid Motion Capture Systems

Objective: To compare the accuracy, practical utility, and output reliability of standalone MB, standalone ML, and a synchronized hybrid MB-ML system in a clinical gait analysis context.

Experimental Protocol (Cited):

- Task: Level walking at self-selected speed over a 10-meter pathway.

- Subjects: N=15 healthy adults.

- Systems:

- Standalone MB: 10-camera optoelectronic system (e.g., Vicon) with 39 reflective markers (Plug-in Gait model).

- Standalone ML: Multi-view commercial ML system (e.g., Theia Markerless) using 6 synchronized high-speed RGB cameras.

- Hybrid System: Simultaneous data collection from both systems, with temporal synchronization via a genlocked trigger and spatial calibration to a shared laboratory coordinate system.

- Data Processing: MB data were processed in Nexus (low-pass filtered at 6 Hz). ML data were processed using proprietary deep learning algorithms. In the hybrid condition, the two streams were processed independently and their joint center trajectories were then compared.

- Key Metrics: Root Mean Square Error (RMSE) of key joint angles (sagittal plane), system setup time, trial processing time, and qualitative soft tissue artifact (STA) assessment.

Quantitative Performance Comparison:

Table 1: Kinematic Accuracy & Operational Efficiency

| Metric | Standalone MB System | Standalone ML System | Hybrid (MB as Reference) |

|---|---|---|---|

| Hip Angle RMSE (deg) | 0.5 (Reference) | 2.8 | 0.5 (MB), 2.8 (ML) |

| Knee Angle RMSE (deg) | 1.0 (Reference) | 3.5 | 1.0 (MB), 3.5 (ML) |

| Ankle Angle RMSE (deg) | 0.7 (Reference) | 4.1 | 0.7 (MB), 4.1 (ML) |

| System Setup Time (min) | 25-30 | 10-15 | 30-35 |

| Data Processing Time (min/trial) | 5-10 (Semi-auto) | 1-2 (Auto) | 10-15 (Dual-stream) |

Table 2: Qualitative System Comparison

| Feature | Standalone MB | Standalone ML | Hybrid Advantage |

|---|---|---|---|

| Soft Tissue Artifact | High (Markers on skin) | Low (Bone pose estimation) | Direct STA quantification possible |

| Environment Sensitivity | Low (though sensitive to IR interference) | Moderate (Lighting dependent) | ML can cross-check intervals where MB markers are occluded |

| Output Validation | Requires separate study | Requires separate study | Continuous internal validation |

| Protocol Flexibility | Low (Marker model fixed) | High (Model-free) | ML can pilot novel MB marker sets |

Analysis: The hybrid system does not inherently improve the raw accuracy of either subsystem but provides a critical framework for validation. The ML system's higher RMSE, likely due to training data biases and camera resolution limits, can be systematically quantified and corrected against the MB "gold standard" within the same trial, subject, and movement. This internal benchmark is invaluable for developing and refining ML algorithms targeted for clinical use.
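
One simple way to quantify a systematic ML-versus-MB difference within a trial is to estimate the mean bias and limits of agreement, then remove the bias. The sketch below assumes the discrepancy is a constant offset, which is the simplest possible model and not a method stated in the cited protocol; the function name `agreement_stats` and the synthetic ankle angles are illustrative.

```python
import numpy as np

def agreement_stats(reference, test):
    """Bias, 95% limits of agreement, and RMSE of test against reference."""
    diff = np.asarray(test, dtype=float) - np.asarray(reference, dtype=float)
    bias = float(diff.mean())
    sd = float(diff.std(ddof=1))
    return {
        "bias": bias,
        "loa_low": bias - 1.96 * sd,
        "loa_high": bias + 1.96 * sd,
        "rmse": float(np.sqrt(np.mean(diff ** 2))),
    }

# Synthetic ankle angle (deg): ML reads 3 deg high with 1 deg random error.
rng = np.random.default_rng(0)
cycle = np.linspace(0.0, 1.0, 101)
ankle_mb = 10.0 * np.sin(2.0 * np.pi * cycle)
ankle_ml = ankle_mb + 3.0 + rng.normal(0.0, 1.0, cycle.size)

before = agreement_stats(ankle_mb, ankle_ml)
ankle_ml_corrected = ankle_ml - before["bias"]      # remove the constant offset
after = agreement_stats(ankle_mb, ankle_ml_corrected)
print(f"bias {before['bias']:.2f} deg, "
      f"RMSE {before['rmse']:.2f} -> {after['rmse']:.2f} deg")
```

Estimating and applying the correction on the same trial is optimistic; a validation study would estimate the offset on one set of trials and evaluate it on another.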

Experimental Workflow for Hybrid Validation

Hybrid Motion Capture Validation Workflow

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Research Reagent Solutions for Hybrid Motion Capture

| Item | Function & Rationale |

|---|---|

| Genlock & Sync Box | Generates a shared timing pulse to synchronize MB (infrared) and ML (RGB) camera shutters, ensuring temporal alignment of data streams within milliseconds. |

| Calibration Wand/L-Frame | Used for spatial volume calibration of both systems to a single global coordinate system, enabling direct 3D trajectory comparison. |

| Retroreflective Markers | Passive markers that reflect infrared light for the MB system. Placed on anatomical landmarks per a chosen biomechanical model (e.g., Plug-in Gait). |

| Markerless Motion Suit | A high-contrast, form-fitting garment (e.g., black with colored patterns) worn by the subject to improve body segment definition for ML computer vision algorithms. |

| Dynamic Phantom/Calibration Object | A mechanical device with known moving parts. Used as a "ground truth" object to perform absolute accuracy testing of the combined hybrid system. |

| Multi-modality Data Fusion Software | Custom or commercial software (e.g., Qualisys Track Manager, Cortex with add-ons) capable of importing, time-aligning, and comparing 3D trajectories from different hardware sources. |
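
Where a shared wand calibration is not available, or as a check on it, the two systems' coordinate frames can be aligned from corresponding 3D points using a least-squares rigid fit (the Kabsch method). This is a generic sketch rather than any vendor's procedure; the function name `rigid_align` and the synthetic point sets are assumptions.

```python
import numpy as np

def rigid_align(src, dst):
    """Find rotation R and translation t minimizing ||R @ src_i + t - dst_i||.

    src, dst: (N, 3) arrays of corresponding points, N >= 3 and not collinear.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    src_c = src.mean(axis=0)
    dst_c = dst.mean(axis=0)
    # Cross-covariance of the centered point sets.
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection so that R is a proper rotation.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t

# Synthetic check: ML-frame points rotated 30 deg about Z and shifted.
rng = np.random.default_rng(1)
pts_ml = rng.uniform(-1.0, 1.0, size=(8, 3))
angle = np.deg2rad(30.0)
R_true = np.array([[np.cos(angle), -np.sin(angle), 0.0],
                   [np.sin(angle),  np.cos(angle), 0.0],
                   [0.0,            0.0,           1.0]])
t_true = np.array([0.5, -0.2, 1.0])
pts_mb = pts_ml @ R_true.T + t_true

R_est, t_est = rigid_align(pts_ml, pts_mb)
pts_aligned = pts_ml @ R_est.T + t_est
print("max alignment error:", float(np.abs(pts_aligned - pts_mb).max()))
```

With real data the residual after alignment will not be zero, and its size is itself a useful indicator of how well the two calibrations agree.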

Logical Relationship: Role of Hybrid Data in ML Model Refinement

Hybrid Data-Driven ML Model Refinement Cycle