IHC Validation in Clinical Trials: A Complete Guide to FDA & EMA-Compliant Assay Development


Abstract

This comprehensive guide details the critical process of immunohistochemistry (IHC) assay validation for clinical trials, addressing the stringent requirements of regulatory bodies like the FDA and EMA. It covers foundational principles, step-by-step methodological application, common troubleshooting strategies, and comparative validation frameworks. Designed for researchers and drug development professionals, the article provides actionable insights to ensure robust, reproducible, and clinically relevant IHC data that supports regulatory submissions and patient stratification.

Why Rigorous IHC Validation is Non-Negotiable for Clinical Trial Success

Immunohistochemistry (IHC) assay validation and verification are distinct but interconnected processes critical for clinical trials research. Validation establishes the performance characteristics of a new assay, while verification confirms that a previously validated assay performs as intended in a user's specific laboratory. This distinction has direct implications for regulatory submissions to agencies like the FDA and EMA, where evidentiary requirements differ significantly.

Core Definitions and Regulatory Context

Validation is a comprehensive process to establish, through laboratory studies, that the performance characteristics of an assay (e.g., accuracy, precision, sensitivity, specificity) are suitable for its intended clinical purpose. For a novel IHC assay detecting a new biomarker, full validation is mandatory. Regulatory guidance (e.g., the FDA's draft guidance "Principles for Codevelopment of an In Vitro Companion Diagnostic Device with a Therapeutic Product") calls for robust data packages demonstrating analytical and, where applicable, clinical validity.

Verification is the process of confirming that a previously validated assay performs acceptably within a new laboratory's environment. It applies when implementing an established, commercially available IHC assay (e.g., a CDx assay) or a validated laboratory-developed test (LDT). Verification typically involves a subset of validation tests to ensure performance specifications are met locally, per standards like CLSI EP12-A2 or ISO 15189.

Comparative Analysis: Validation vs. Verification in IHC

The following table summarizes the key distinctions and regulatory implications.

| Aspect | Assay Validation | Assay Verification |
|---|---|---|
| Objective | Establish assay performance characteristics for a novel intended use. | Confirm that a pre-validated assay meets stated specs in the user's lab. |
| Regulatory Trigger | New assay, new biomarker, new analyte, or new intended use. | Adoption of an already FDA-cleared/approved or fully validated assay. |
| Scope of Work | Extensive. All analytical performance characteristics must be defined. | Limited. A subset of performance characteristics is tested. |
| Sample Types | Must include representative clinical samples spanning expected conditions. | Focus on samples that confirm performance in the local context. |
| Data Package | Large, required for regulatory submission (IDE, PMA, 510(k)). | Smaller, for internal QA and regulatory compliance (e.g., CLIA). |
| Primary Guidance | FDA/EMA guidance for IVDs/CDx; CLSI EP17-A2, ICH Q2(R2). | CLSI EP05-A3, EP12-A2, EP15-A3; ISO 15189. |
| Resource Intensity | High (time, personnel, samples, cost). | Moderate. |

Experimental Data Comparison: A Case Study

A recent study compared the validation of a novel PD-L1 IHC assay (Clone SP142) against the verification of an established PD-L1 assay (Clone 22C3) in a central lab supporting a clinical trial.

Table 1: Performance Data for Validation (SP142) vs. Verification (22C3)

| Performance Metric | Validation Results (SP142) | Verification Results (22C3) | Acceptance Criteria Met? |
|---|---|---|---|
| Analytical Sensitivity (LoD) | 1:800 antibody dilution | Not required | Yes |
| Intra-run Precision (%CV) | 4.2% (n=20) | 5.1% (n=10) | Yes (<15%) |
| Inter-run Precision (%CV) | 8.7% (n=30 over 5 days) | 9.5% (n=15 over 3 days) | Yes (<20%) |
| Inter-observer Reproducibility (Kappa) | 0.85 (n=5 pathologists, 50 samples) | 0.88 (n=3 pathologists, 20 samples) | Yes (>0.80) |
| Accuracy vs. Reference (R²) | 0.96 (n=60 known-positive/negative) | 0.98 (n=20 known-positive/negative) | Yes (>0.90) |

Detailed Methodologies for Key Experiments

Protocol 1: Comprehensive Validation of Analytical Specificity (Cross-Reactivity)

- Objective: To ensure the primary antibody binds only to the target epitope.

- Method: Perform IHC on a formalin-fixed, paraffin-embedded (FFPE) multi-tissue microarray (TMA) containing 40 different normal human tissues. Include cell line pellets with known expression levels as controls.

- Staining & Analysis: Use the optimized IHC protocol. Staining is assessed by two pathologists. Expected outcome is staining only in tissues/cells with known target expression. Any off-target staining triggers investigation into antibody specificity or assay conditions.

Protocol 2: Verification of Precision (CLSI EP05-A3 Adapted)

- Objective: To confirm the established assay's precision in the local lab.

- Method: Select 3 FFPE samples (low, medium, high expression). Run each sample in duplicate, in two separate runs per day, over 5 days (n=60 measurements total).

- Staining & Analysis: All slides are stained with a single reagent lot to minimize reagent variability. Scores are analyzed using appropriate statistical models to calculate within-run, between-run, and total %CV. Results are compared to the manufacturer's or validator's claimed precision metrics.
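The variance decomposition named in Protocol 2 can be sketched as follows. This is a simplified illustration rather than the EP05-A3 procedure itself: it treats each of the 10 runs (two per day over 5 days) as one group and ignores the separate day level, and the function name `precision_components` and the example scores are hypothetical.

```python
from math import sqrt
from statistics import mean


def precision_components(runs):
    """Estimate within-run, between-run and total %CV for one sample.

    runs: list of runs, each a list of replicate scores (balanced design).
    Uses a one-way random-effects ANOVA with "run" as the grouping factor.
    """
    k = len(runs)
    n = len(runs[0]) if runs else 0
    if k < 2 or n < 2 or any(len(r) != n for r in runs):
        raise ValueError("need at least 2 runs, each with the same number (>= 2) of replicates")

    run_means = [mean(r) for r in runs]
    grand_mean = mean(run_means)

    # Mean squares within and between runs.
    ms_within = sum((x - m) ** 2 for r, m in zip(runs, run_means) for x in r) / (k * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in run_means) / (k - 1)

    var_within = ms_within
    # Negative estimates are truncated to zero, a common convention.
    var_between = max(0.0, (ms_between - ms_within) / n)
    var_total = var_within + var_between

    def to_cv(variance):
        return 100.0 * sqrt(variance) / grand_mean

    return {
        "mean": grand_mean,
        "within_run_cv": to_cv(var_within),
        "between_run_cv": to_cv(var_between),
        "total_cv": to_cv(var_total),
    }


# Hypothetical H-scores for the medium-expression sample: 10 runs x 2 replicates.
medium_sample = [
    [152, 148], [155, 150], [149, 151], [158, 154], [147, 150],
    [153, 156], [150, 149], [151, 155], [148, 152], [154, 157],
]
print(precision_components(medium_sample))
```

The three %CV values would then be compared with the manufacturer's or validator's claimed precision for each expression level.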

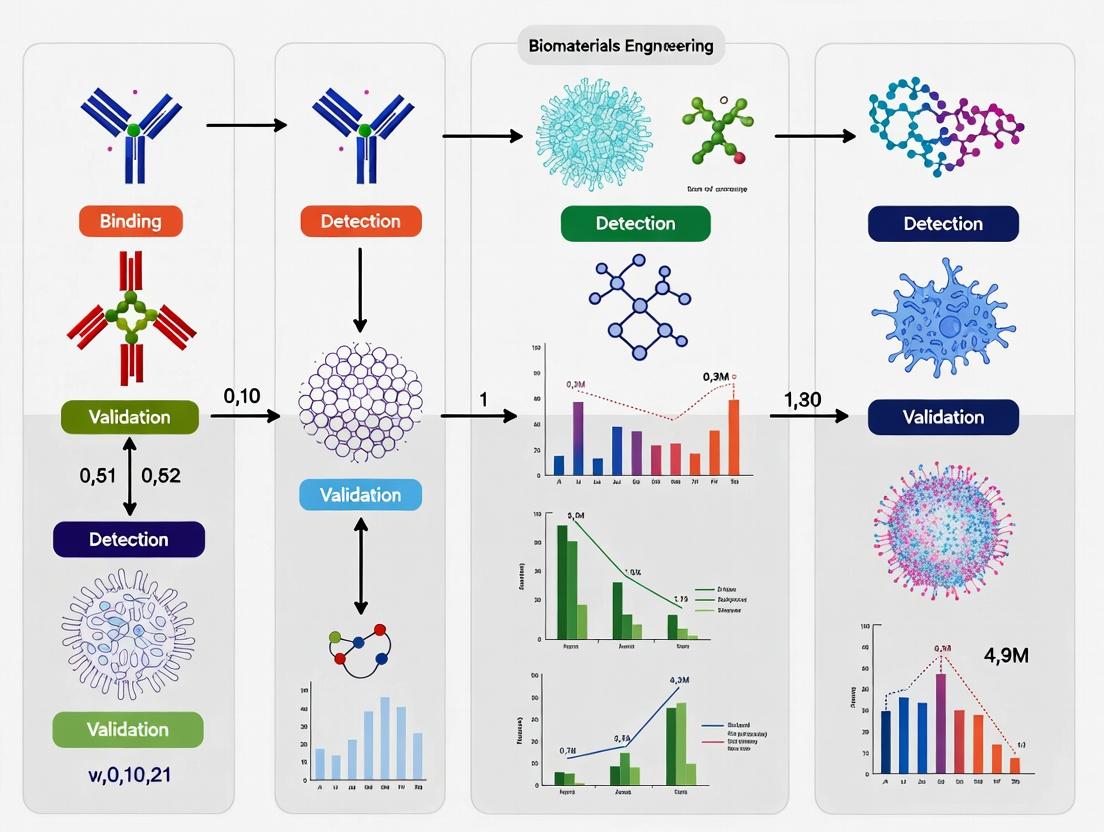

Diagram: Decision Workflow for IHC Validation vs. Verification

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in IHC Assay Validation/Verification |
|---|---|
| FFPE Multi-Tissue Microarrays (TMAs) | Provide controlled, multisample slides for assessing antibody specificity, cross-reactivity, and staining consistency across tissues. |
| Cell Line Pellet Controls | Offer consistent, known expression-level materials for establishing assay sensitivity (LoD), precision, and daily run quality control. |
| Validated Primary Antibodies | The critical reagent for target detection. Must be thoroughly characterized for clone specificity, affinity, and optimal dilution. |
| Automated IHC Stainer | Ensures standardized, reproducible protocol execution (deparaffinization, epitope retrieval, staining, washing), essential for precision. |
| Digital Image Analysis (DIA) Software | Enables quantitative, objective scoring of staining intensity and percentage, reducing observer bias and improving reproducibility data. |
| Reference Standard Slides | Slides with pre-characterized staining patterns (positive/negative) are used as benchmarks for accuracy and inter-laboratory comparison. |

Immunohistochemistry (IHC) assays are a cornerstone of biomarker evaluation in clinical trials, informing patient stratification, treatment decisions, and primary efficacy endpoints. The absence of rigorous analytical validation for these assays directly compromises trial integrity, leading to erroneous data, patient misclassification, and ultimately, threats to patient safety. This comparison guide objectively examines the performance of validated versus non-validated IHC assays, focusing on critical validation parameters.

Comparative Performance of Validated vs. Non-Validated IHC Assays

Table 1: Key Analytical Validation Parameters and Their Impact

| Validation Parameter | Validated Assay Performance (Example Data) | Non-Validated / Poorly Validated Assay Risk | Consequence for Trial & Patient |
|---|---|---|---|
| Specificity | >95% agreement with orthogonal method (e.g., RNA ISH). | High risk of off-target staining (≥30% false positives). | Inaccurate biomarker classification; patients receive ineffective therapy. |
| Sensitivity (Detection Limit) | Consistent detection at ≥10% tumor cells with 2+ intensity. | Inconsistent staining below 30% tumor cells. | False-negative results; exclusion of eligible patients from targeted therapy. |
| Precision (Repeatability) | Intra-run CV <10% for H-score. | Intra-run CV >25% for H-score. | Unreliable endpoint measurement; trial fails to detect true treatment effect. |
| Precision (Reproducibility) | Inter-site concordance >90% (Cohen’s kappa >0.85). | Inter-site concordance <70% (Cohen’s kappa <0.4). | Multi-center trial data is irreconcilable, jeopardizing regulatory submission. |
| Robustness | Consistent results with ±5 min antigen retrieval time variation. | Significant score shift (>50% change in H-score) with minor protocol deviations. | Lack of protocol control leads to drift in data over trial duration. |
| Assay Comparability | >95% positive/negative agreement with companion diagnostic. | <80% agreement with regulatory-approved standard. | Trial results cannot support regulatory label claims or patient selection. |

Experimental Protocols for Key Validation Studies

Protocol 1: Assessing Specificity Using Orthogonal Methods

- Objective: To confirm antibody binding is specific to the target antigen.

- Methodology:

- Select patient tissue samples with known biomarker status (positive and negative).

- Perform IHC assay using the candidate antibody and protocol.

- Perform an orthogonal method on serial sections (e.g., RNA in situ hybridization, immunofluorescence with a different epitope-targeting antibody).

- Score results independently by two pathologists.

- Calculate positive/negative percent agreement between IHC and orthogonal method results.
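The agreement calculation in the final step can be sketched as below, with the orthogonal method treated as the comparator. The function name `percent_agreement` and the example calls are illustrative assumptions, not data from a study.

```python
def percent_agreement(ihc_calls, reference_calls):
    """Return (PPA, NPA, OPA) in percent for paired positive/negative calls.

    ihc_calls, reference_calls: sequences of booleans (True = positive),
    one entry per specimen, in the same specimen order.
    """
    if len(ihc_calls) != len(reference_calls):
        raise ValueError("call lists must have the same length")

    pairs = list(zip(ihc_calls, reference_calls))
    tp = sum(1 for ihc, ref in pairs if ihc and ref)
    fn = sum(1 for ihc, ref in pairs if not ihc and ref)
    fp = sum(1 for ihc, ref in pairs if ihc and not ref)
    tn = sum(1 for ihc, ref in pairs if not ihc and not ref)

    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one reference-positive and one reference-negative case")

    ppa = 100.0 * tp / (tp + fn)        # positive percent agreement
    npa = 100.0 * tn / (tn + fp)        # negative percent agreement
    opa = 100.0 * (tp + tn) / len(pairs)  # overall percent agreement
    return ppa, npa, opa


# Hypothetical calls for five specimens.
ihc = [True, True, False, False, True]
ish = [True, True, True, False, False]
print(percent_agreement(ihc, ish))
```

Reporting PPA and NPA separately, rather than overall agreement alone, keeps a skewed positive/negative case mix from hiding poor performance in the smaller class.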

Protocol 2: Inter-Laboratory Reproducibility Study

- Objective: To determine if the assay yields consistent results across multiple clinical trial sites.

- Methodology:

- Create a tissue microarray (TMA) with a defined set of 20-30 cases covering the scoring dynamic range (negative, weak, moderate, strong).

- Distribute identical TMA blocks, assay protocols, and reagents to 3-5 participating laboratories.

- Each site performs the IHC assay according to the standard operating procedure.

- All slides are returned to a central pathology lab for blinded scoring by two reference pathologists.

- Analyze scores using intraclass correlation coefficient (ICC) for continuous data (e.g., H-score) or Cohen's/Fleiss' kappa for categorical data (e.g., positive/negative).
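For the categorical case in the last step, Cohen's kappa for two raters (or two sites) can be computed as in this minimal sketch. The function name and example labels are assumptions; ICC and Fleiss' kappa for more than two raters are not shown.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters' categorical calls on the same cases."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of cases")

    n = len(rater_a)
    # Observed proportion of cases on which the raters agree.
    observed = sum(1 for a, b in zip(rater_a, rater_b) if a == b) / n

    # Agreement expected by chance, from each rater's marginal frequencies.
    count_a = Counter(rater_a)
    count_b = Counter(rater_b)
    categories = set(count_a) | set(count_b)
    expected = sum(count_a[c] * count_b[c] for c in categories) / (n * n)

    if expected == 1.0:
        raise ValueError("kappa is undefined when both raters use a single identical category")
    return (observed - expected) / (1.0 - expected)


# Hypothetical positive/negative calls from two sites on four TMA cores.
site_1 = ["pos", "pos", "neg", "neg"]
site_2 = ["pos", "neg", "neg", "neg"]
print(cohens_kappa(site_1, site_2))
```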

Signaling Pathway & Experimental Workflow

Diagram: Consequences of Validated vs. Non-Validated IHC Assays

Diagram: IHC Assay Validation Workflow Steps

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Robust IHC Assay Validation

| Item | Function in Validation |
|---|---|
| Certified Reference Cell Lines | Provide consistent positive/negative controls with known antigen expression levels for sensitivity testing. |
| Tissue Microarrays (TMAs) | Enable high-throughput analysis of assay performance across dozens of tissue types and expression levels on a single slide. |
| Isotype/Concentration-Matched Control Antibodies | Critical for distinguishing specific signal from non-specific background staining in specificity experiments. |
| Antigen Retrieval Buffers (pH 6 & pH 9) | Used in robustness testing to determine the optimal and allowable range of retrieval conditions. |
| Automated Staining Platform with Calibrated Dispensers | Ensures precise reagent delivery and timing, critical for achieving inter-laboratory reproducibility. |
| Validated Detection Kits (e.g., Polymer-based) | Provide standardized, amplified signal detection with low background, improving sensitivity and precision. |
| Digital Pathology & Image Analysis Software | Allows for quantitative, objective scoring (e.g., H-score, % positivity) to reduce observer bias and improve precision metrics. |
| Stable Control Slides (e.g., Whole Slide Sections) | Used for daily run acceptance criteria and longitudinal monitoring of assay drift over the trial timeline. |

Immunohistochemistry (IHC) assay validation is a cornerstone of clinical trials research, particularly in oncology and companion diagnostics. The process must adhere to stringent regulatory guidelines to ensure reproducibility, accuracy, and clinical utility. This guide compares the core requirements and performance benchmarks set by the U.S. Food and Drug Administration (FDA), the European Medicines Agency (EMA), and the Clinical and Laboratory Standards Institute (CLSI, formerly NCCLS).

Comparative Analysis of Regulatory Benchmarks

The following table summarizes key validation parameters as stipulated by each agency/guideline body. The data is synthesized from current guidance documents and reflects requirements for a Class III IHC companion diagnostic.

Table 1: Comparative Summary of Key IHC Validation Parameters

| Parameter | FDA (IVD/CDx Guidance) | EMA (Guideline on Dx) | CLSI (MM10-A3 / QMS23) |
|---|---|---|---|
| Primary Objective | Premarket approval for safety & effectiveness; clinical utility proven. | Conformity with Essential Requirements; performance linked to therapeutic benefit. | Analytical validation for clinical use; standardization across laboratories. |
| Analytical Specificity | Must be demonstrated vs. related antigens/tissues; cross-reactivity testing required. | Assessment of staining specificity; use of controls (positive, negative, tissue). | Detailed protocol for determining interferences and cross-reactivity. |
| Analytical Sensitivity | Define Limit of Detection (LOD) using a precision profile. | Determine detection threshold; use of cell lines or patient samples with known expression. | Recommends titration of primary antibody to establish optimal dilution. |
| Precision (Repeatability & Reproducibility) | Minimum of 3 runs, 3 days, 2 operators, 3 lots of reagents, ~60 samples. | Requires intra- and inter-laboratory reproducibility data. | Defines nested ANOVA statistical approach for site-to-site reproducibility. |
| Robustness/Ruggedness | Testing of critical variables (e.g., antigen retrieval time, incubation temp). | Evaluation under varying operational conditions. | Recommends Youden's ruggedness test for experimental design. |
| Clinical Cutpoint | Statistically justified (e.g., ROC analysis) using prospectively defined methods. | Requires predefined scoring algorithm and clinical validation cohort. | Provides guidelines for establishing quantitative or semi-quantitative scoring thresholds. |
| Sample Requirements | Must include intended use population; archive samples acceptable with justification. | Representative of target population; pre-analytical variables documented. | Emphasizes use of well-characterized, relevant tissue specimens. |

Experimental Protocols for Cross-Framework Validation

To objectively compare assay performance against these frameworks, a harmonized validation study can be conducted. The following protocol is designed to generate data acceptable under all three guidelines.

Protocol: Comprehensive IHC Assay Validation for a Predictive Biomarker

Objective: To validate an IHC assay for "Protein X" as a predictive biomarker for "Drug Y" in non-small cell lung cancer (NSCLC), generating data packages compliant with FDA, EMA, and CLSI expectations.

Materials & Specimens:

- Test Cohort: 200 formalin-fixed, paraffin-embedded (FFPE) NSCLC specimens, with known clinical outcome data.

- Control Tissues: A multi-tissue block containing known positive (cell line with known expression) and negative (knockout cell line or irrelevant tissue) controls.

- Reagents: Primary anti-Protein X antibody (clone ABC123), FDA-cleared detection system, automated staining platform.

Methodology:

- Pre-Analytical Standardization: All tissue sections cut at 4µm. Antigen retrieval performed using a defined pH 9.0 buffer for 20 minutes in a pre-heated water bath (98°C ± 2°C). This variable is later challenged for robustness (15 min & 25 min).

- Analytical Sensitivity (LOD): Stain a dilution series of cell line pellets with known Protein X copies/cell (e.g., from 50,000 down to 100 copies/cell). The LOD is the lowest expression level yielding a positive stain in ≥95% of replicates. Perform with three reagent lots.

- Analytical Specificity:

- Cross-Reactivity: Stain a human organ tissue microarray (TMA). Report any non-specific staining.

- Interference: Test staining in the presence of endogenous substances (e.g., melanin, hemoglobin) and after various fixation times (6-72 hours).

- Precision Study:

- Repeatability (Intra-assay): One operator stains 30 representative cases (10 negative, 10 low positive, 10 high positive) in triplicate on the same day.

- Reproducibility (Inter-assay, Inter-operator, Inter-lot, Inter-site): Two operators stain the 30-case set over three non-consecutive days using three reagent lots. For inter-site reproducibility, a subset of 20 cases is sent to two external CLIA-certified labs.

- Scoring: All slides are scored by three pathologists blinded to the conditions, using a pre-defined semi-quantitative H-score (0-300).

- Robustness Testing: Deliberately alter three critical protocol parameters (antigen retrieval time ±5 min, primary antibody incubation temperature (RT vs. 37°C), and wash buffer ionic strength). Use a two-level, three-factor (2³) factorial design (CLSI QMS23) with one positive and one negative sample.

- Clinical Cutpoint Establishment: Using the H-scores from the 200-specimen test cohort, perform Receiver Operating Characteristic (ROC) analysis against the clinical response data (Responder vs. Non-Responder to Drug Y) to determine the optimal H-score threshold for predicting response.
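The cutpoint step can be illustrated with a small ROC scan. The protocol does not say how "optimal" is defined, so this sketch assumes Youden's J (sensitivity + specificity - 1) as the criterion; the function name and the eight example cases are hypothetical.

```python
def youden_cutpoint(h_scores, responded):
    """Scan candidate H-score thresholds and return the one maximizing Youden's J.

    A case is called biomarker-positive when its H-score >= threshold.
    Returns (threshold, sensitivity, specificity). On ties, the lowest
    threshold is kept.
    """
    if len(h_scores) != len(responded):
        raise ValueError("scores and outcomes must be paired")
    n_pos = sum(1 for r in responded if r)
    n_neg = len(responded) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need both responders and non-responders")

    best = None
    for threshold in sorted(set(h_scores)):
        tp = sum(1 for s, r in zip(h_scores, responded) if r and s >= threshold)
        tn = sum(1 for s, r in zip(h_scores, responded) if not r and s < threshold)
        sensitivity = tp / n_pos
        specificity = tn / n_neg
        j = sensitivity + specificity - 1.0
        if best is None or j > best[0]:
            best = (j, threshold, sensitivity, specificity)
    return best[1], best[2], best[3]


# Hypothetical H-scores and response status (True = responder to Drug Y).
scores = [20, 50, 80, 120, 150, 200, 240, 280]
response = [False, False, False, True, False, True, True, True]
print(youden_cutpoint(scores, response))
```

A threshold chosen and evaluated on the same cohort tends to look better than it will on new patients, which is one reason the cutpoint method is expected to be defined prospectively.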

Expected Data & Compliance: The data from this single, comprehensive study can be formatted to meet specific regulatory submissions:

- FDA: Focus on the locked-down protocol post-robustness testing, the precision data across all variables, and the statistically justified clinical cutpoint from the ROC analysis.

- EMA: Emphasize the representativeness of the specimen cohort, the reproducibility across sites (inter-site data), and the linkage of the scoring algorithm to therapeutic benefit.

- CLSI: The entire study design and statistical analysis (ANOVA for precision, Youden's test for robustness) follow the standardized principles outlined in MM10 and QMS23, serving as a model for laboratory accreditation.
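The robustness step in the methodology above varies three parameters. Treating each as a two-level factor, a full factorial layout has 2³ = 8 conditions, each run on one positive and one negative sample. The sketch below enumerates that run list; the factor names and the "low"/"high" ionic-strength labels are placeholders, since the protocol does not give buffer concentrations.

```python
from itertools import product

# Two levels per factor. Retrieval times are the 15 and 25 min challenge
# conditions; the ionic-strength labels are hypothetical placeholders.
FACTORS = {
    "retrieval_min": (15, 25),
    "primary_incubation": ("RT", "37C"),
    "wash_ionic_strength": ("low", "high"),
}
SAMPLES = ("positive", "negative")


def build_runs(factors, samples):
    """Return one dict per slide: every factor-level combination x every sample."""
    names = list(factors)
    runs = []
    for levels in product(*(factors[name] for name in names)):
        for sample in samples:
            run = dict(zip(names, levels))
            run["sample"] = sample
            runs.append(run)
    return runs


runs = build_runs(FACTORS, SAMPLES)
print(len(runs), "slides")
for run in runs[:4]:
    print(run)
```

A fractional design such as Youden's ruggedness test covers more factors with fewer runs, at the cost of not estimating every interaction.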

Visualizing the IHC Validation Pathway

Diagram: IHC Assay Validation Pathway for Regulatory Compliance

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for IHC Assay Validation

| Item | Function in Validation |
|---|---|
| Well-Characterized FFPE Cell Line Pellets | Provide consistent positive (known expression level) and negative (knockout) controls for sensitivity, specificity, and daily run monitoring. |
| Tissue Microarray (TMA) | Contains multiple tissue types in one block for efficient assessment of antibody cross-reactivity and staining specificity across human organs. |
| Reference Standard Slides | A set of pre-qualified patient tissue slides with consensus scores, used for reproducibility studies and proficiency testing across sites. |
| Automated Staining Platform | Ensures standardization of reagent application, incubation times, and temperatures, critical for precision and robustness. |
| Digital Pathology/Image Analysis System | Enables quantitative or semi-quantitative scoring (e.g., H-score, % positivity) to reduce scorer subjectivity and generate continuous data for ROC analysis. |
| CLSI Guideline Documents (MM10, QMS23, I/LA28-A) | Provide the accepted experimental designs and statistical methods for validation parameters, forming the basis for defensible data. |

In the rigorous framework of IHC assay validation for clinical trials research, the evaluation of core validation parameters is paramount. These parameters—specificity, sensitivity, precision, and robustness—determine the reliability and interpretability of data supporting drug development decisions. This guide compares the performance of a novel chromogenic IHC assay (Assay A) for detecting Programmed Death-Ligand 1 (PD-L1) in non-small cell lung cancer (NSCLC) with two established alternatives: a commercial fluorescent IHC assay (Assay B) and a manual laboratory-developed test (LDT, Assay C).

Quantitative Performance Comparison

The following table summarizes data from a recent multi-site validation study, where all assays were evaluated against a reference standard of RNA in situ hybridization (ISH) for PD-L1 mRNA on a cohort of 200 NSCLC specimens.

Table 1: Comparative Performance Metrics of PD-L1 IHC Assays

| Parameter | Assay A (Novel Chromogenic) | Assay B (Commercial Fluorescent) | Assay C (Manual LDT) |
|---|---|---|---|
| Analytical Sensitivity (LoD) | 1.5 tumor cells/µm² | 1.0 tumor cells/µm² | 3.0 tumor cells/µm² |
| Clinical Sensitivity | 95% (95% CI: 89-98%) | 97% (95% CI: 92-99%) | 88% (95% CI: 81-93%) |
| Clinical Specificity | 98% (95% CI: 94-100%) | 94% (95% CI: 88-97%) | 96% (95% CI: 91-99%) |
| Precision (Repeatability) | CV = 4.2% | CV = 2.8% | CV = 9.7% |
| Precision (Reproducibility) | CV = 8.5% | CV = 7.1% | CV = 15.3% |
| Robustness (Δ in CV with ±2°C deviation) | +1.1% | +0.7% | +3.8% |

Experimental Protocols for Key Comparisons

Protocol for Determining Sensitivity & Specificity

- Objective: To establish clinical sensitivity and specificity against a molecular reference standard.

- Sample Set: 200 formalin-fixed, paraffin-embedded (FFPE) NSCLC biopsies (100 PD-L1 mRNA-positive by ISH, 100 mRNA-negative).

- Methodology: Each specimen was sectioned, stained, and scored using all three IHC assays in a blinded manner. For Assays A and C, chromogenic detection was used with a standard brightfield microscope. Assay B required a fluorescence scanner. A positive result was defined as membranous staining in ≥1% of viable tumor cells. Results were unblinded and compared to the RNA ISH reference.

- Analysis: Sensitivity and specificity with 95% confidence intervals (CI) were calculated.
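The interval calculation in the analysis step can be sketched with the Wilson score method. The protocol does not state which interval method was used, so Wilson is an assumption here, as is the function name.

```python
from math import sqrt


def wilson_ci(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 for ~95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1.0 + z * z / n
    center = (p + z * z / (2.0 * n)) / denom
    half_width = z * sqrt(p * (1.0 - p) / n + z * z / (4.0 * n * n)) / denom
    return center - half_width, center + half_width


# Sensitivity example: 95 of 100 reference-positive specimens called positive.
low, high = wilson_ci(95, 100)
print(f"sensitivity = 95.0% (95% CI: {100 * low:.0f}-{100 * high:.0f}%)")
```

With 100 specimens per class, as in the sample set above, an observed 95% gives an interval of about 89% to 98%, which shows how wide the uncertainty remains even at this cohort size.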

Protocol for Precision (Repeatability & Reproducibility)

- Objective: To assess intra-laboratory repeatability and inter-laboratory reproducibility.

- Sample Set: 30 FFPE NSCLC samples spanning low, medium, and high PD-L1 expression.

- Methodology: For repeatability, one operator stained each sample in triplicate on three consecutive days using the same instrument and reagent lot. For reproducibility, three samples were sent to three independent CLIA-certified labs, which performed the assay per protocol.

- Analysis: The percentage of positive tumor cells was quantified by digital image analysis. The Coefficient of Variation (%CV) was calculated for each sample group.
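The %CV calculation in the analysis step is the sample standard deviation divided by the mean. A minimal sketch follows, with hypothetical replicate values and sample labels.

```python
from statistics import mean, stdev


def percent_cv(values):
    """Coefficient of variation in percent, using the sample standard deviation."""
    if len(values) < 2:
        raise ValueError("need at least two replicate measurements")
    m = mean(values)
    if m == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100.0 * stdev(values) / m


# Hypothetical % positive tumor cells from digital image analysis,
# nine replicates per sample (triplicate on three days).
replicates = {
    "low_expression": [4.8, 5.2, 5.0, 5.5, 4.6, 5.1, 4.9, 5.3, 5.0],
    "medium_expression": [24.0, 25.5, 26.1, 23.8, 25.0, 24.7, 25.9, 24.4, 25.2],
    "high_expression": [71.0, 69.5, 72.3, 70.8, 68.9, 71.6, 70.2, 72.0, 69.9],
}
for sample, values in replicates.items():
    print(f"{sample}: CV = {percent_cv(values):.1f}%")
```

Because the mean is in the denominator, %CV becomes unstable for samples near 0% positivity, so negative and very low-expressing cases are often summarized with agreement statistics instead.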

Protocol for Robustness Testing

- Objective: To evaluate the assay's performance under deliberate, minor variations in protocol conditions.

- Sample Set: 10 FFPE NSCLC samples (5 positive, 5 negative).

- Methodology: The primary protocol variable (antigen retrieval incubation temperature) was altered from the standard 97°C to 95°C and 99°C. All other steps remained constant. Staining intensity and percentage positivity were scored.

- Analysis: The change in CV for quantitative scores relative to the standard condition was calculated.

Visualizing Validation Relationships and Workflows

Diagram: IHC Assay Validation Workflow for Clinical Trials

Diagram: Clinical Impact of Specificity vs. Sensitivity

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for IHC Validation Studies

| Item | Function in Validation | Example/Note |
|---|---|---|
| Validated Primary Antibody | Binds specifically to the target antigen (e.g., PD-L1). Clone selection is critical for specificity. | Clone 22C3, SP142, or 28-8. Must be certified for IHC. |
| Controlled FFPE Tissue Microarray (TMA) | Contains characterized positive, negative, and borderline tissues for assay calibration and daily QC. | Commercially available or custom-built with known molecular status. |
| Automated IHC Stainer | Standardizes all staining steps (deparaffinization, retrieval, staining) to maximize precision and robustness. | Platforms from Ventana, Agilent, or Leica. |
| Digital Pathology Scanner & Analysis Software | Enables high-resolution imaging and quantitative, reproducible scoring, reducing observer bias. | Aperio, Vectra, or PhenoImagers. Algorithms must be validated. |
| Reference Standard Assay | An orthogonal, non-IHC method used as a comparator to establish clinical sensitivity/specificity. | RNA in situ hybridization (ISH) or mass spectrometry. |
| Precision-Cut Tissue Sections | Uniform tissue sections of consistent thickness (4-5 µm) essential for reproducible antigen exposure and staining. | Requires a high-quality microtome and trained histotechnologist. |

Immunohistochemistry (IHC) is a critical technology bridging drug discovery and clinical diagnostics. In the context of clinical trials research, the validation of IHC assays reveals a fundamental divergence in perspective between Anatomical Pathology (focused on patient-level diagnosis) and Pharmaceutical R&D (focused on population-level drug response). This guide compares these viewpoints to align on quality standards for robust clinical trial outcomes.

Comparative Perspectives on IHC Assay Validation

| Validation Parameter | Anatomical Pathology Perspective | Pharmaceutical R&D Perspective |
|---|---|---|
| Primary Objective | Accurate diagnosis and prognostication for individual patient care. | Robust, reproducible biomarker data for patient stratification & efficacy assessment across trial sites. |
| Specificity & Sensitivity | Optimized for clinical decision-making; often uses internal controls and known clinical correlates. | Quantitatively defined using reference standards; requires precise Limit of Detection (LOD) and Limit of Quantification (LOQ). |
| Reproducibility | Focus on intra-laboratory consistency; manual protocols common. | Demand for inter-laboratory, inter-platform, inter-operator reproducibility; highly automated protocols preferred. |
| Scoring & Readout | Often semi-quantitative (e.g., H-score, 0-3+ intensity); pathologist-dependent. | Increasingly quantitative digital pathology analysis (QDP); algorithm-dependent with rigorous validation. |
| Assay Locking | Protocols may evolve with new lot reagents or clinical insights. | Assay must be locked and fully validated prior to trial initiation; any change triggers re-validation. |
| Key Metric | Concordance with clinical outcome and other diagnostic modalities. | Precision (CV%), accuracy against orthogonal methods, and statistical power to detect treatment effect. |

Supporting Experimental Data: A Comparative Validation Study

A recent multi-site study evaluated a PD-L1 IHC assay (Clone 22C3) for a hypothetical oncology trial, comparing typical pathology lab and pharma-driven validation approaches.

Table 1: Inter-site Reproducibility Data (n=30 tumor samples)

| Performance Metric | Site A (Pathology Lab Protocol) | Site B (Pharma-Validated Protocol) | Harmonized Protocol |
|---|---|---|---|
| Inter-site Concordance (PPA) | 83% | Target: >90% | 95% |
| Average Coefficient of Variation (CV) | 23% | Target: <15% | 12% |
| Quantitative Score Correlation (R²) | 0.76 | 0.92 | 0.94 |

Detailed Experimental Protocols

1. Protocol for Inter-laboratory Reproducibility Study:

- Sample Set: 30 FFPE human tumor blocks with expected PD-L1 expression range (0-100% TC). Serial sections cut at 4µm.

- Staining: Site A used its lab-developed protocol on a Dako Autostainer Link 48. Site B used the pharma-validated protocol on a Ventana Benchmark Ultra. Both used 22C3 primary antibody.

- Scanning & Analysis: Slides digitized at 40x (Aperio GT450). Identical Regions of Interest (ROIs) analyzed by two pathologists (H-score) and by a validated digital image algorithm (QDP score).

- Statistical Analysis: Concordance is calculated as Positive Percent Agreement (PPA), precision as CV% across replicates, and correlation as R² from linear regression.
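The correlation metric in the statistical analysis can be sketched as an ordinary least-squares fit of one site's quantitative scores against the other's. The function name and the paired scores are hypothetical.

```python
def linear_fit_r2(x, y):
    """Ordinary least-squares fit y = slope * x + intercept; returns (slope, intercept, R^2)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need at least two paired observations")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    if sxx == 0 or syy == 0:
        raise ValueError("scores must vary at both sites")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)
    return slope, intercept, r_squared


# Hypothetical QDP scores for the same six samples at two sites.
site_a = [5, 20, 45, 60, 80, 95]
site_b = [8, 18, 50, 55, 85, 90]
slope, intercept, r2 = linear_fit_r2(site_a, site_b)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r2:.3f}")
```

R² alone does not reveal systematic bias between sites: two sites can correlate almost perfectly while one scores consistently higher, so the slope and intercept are worth reporting alongside it.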

2. Protocol for Assay Sensitivity (LOD) Determination:

- Cell Line Model: Isogenic cell lines with titrated PD-L1 expression were cultured, FFPE-embedded, and serially sectioned.

- Staining: Serial dilutions of primary antibody (22C3) applied using the locked protocol.

- Analysis: Signal-to-noise ratio is measured via QDP. The LOD is defined as the lowest antibody concentration yielding a signal significantly different (p<0.01) from the isotype negative control.
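The LOD rule in the analysis step can be illustrated as follows. The protocol does not name the statistical test, so this sketch substitutes a one-sided exact permutation test on the difference in means; the function names, concentrations, and signal values are all hypothetical.

```python
from itertools import combinations
from statistics import mean


def permutation_p_value(test, control):
    """One-sided exact permutation p-value for mean(test) > mean(control)."""
    pooled = list(test) + list(control)
    n_test = len(test)
    n_control = len(control)
    observed = mean(test) - mean(control)
    total = sum(pooled)

    hits = 0
    n_perm = 0
    # Enumerate every way of relabelling n_test of the pooled values as "test".
    for idx in combinations(range(len(pooled)), n_test):
        s = sum(pooled[i] for i in idx)
        diff = s / n_test - (total - s) / n_control
        if diff >= observed - 1e-12:
            hits += 1
        n_perm += 1
    return hits / n_perm


def lod_concentration(signal_by_conc, control, alpha=0.01):
    """Lowest antibody concentration whose signal exceeds control at p < alpha, else None."""
    for conc in sorted(signal_by_conc):
        if permutation_p_value(signal_by_conc[conc], control) < alpha:
            return conc
    return None


# Hypothetical QDP signal (arbitrary units), five replicates per condition.
isotype_control = [1.0, 2.0, 3.0, 4.0, 0.0]
signals = {
    0.5: [1.0, 2.0, 3.0, 4.0, 0.5],   # antibody concentration in ug/mL
    1.0: [5.0, 6.0, 7.0, 8.0, 9.0],
}
print(lod_concentration(signals, isotype_control))
```

With five replicates per group the smallest achievable one-sided p-value is 1/252, so fewer replicates could never reach p<0.01 under this particular test.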

Visualizing the IHC Clinical Trial Workflow

Diagram: IHC Assay Path in Clinical Trials

Key Signaling Pathway in Immuno-oncology Biomarker

Diagram: PD-L1 Induction & Immune Checkpoint Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Primary Function in IHC Validation | Consideration for Alignment |
|---|---|---|
| CRISPR-Modified Isogenic Cell Lines | Provide precisely controlled positive/negative controls for LOD/LOQ studies. | Essential for pharma's analytical validation; underutilized in routine pathology. |
| Recombinant Rabbit Monoclonal Antibodies | Offer high specificity and lot-to-lot consistency for critical biomarkers. | Pharma prefers for robustness; pathology may use mouse monoclonals or polyclonals. |
| Multiplex Fluorescence IHC (mIHC/IF) | Enables simultaneous detection of multiple biomarkers (e.g., PD-L1, CD8, CK). | Gaining traction in pharma for complex signatures; largely exploratory in pathology. |
| Digital Pathology Image Analysis Software | Enables quantitative, reproducible scoring and tissue phenotyping. | Core to pharma's quantitative approach; adjunct tool for pathologist's workflow. |
| Standardized Control Tissue Microarrays (TMAs) | Contain multiple tumor types with known expression levels for run-to-run monitoring. | Critical for both fields, but pharma requires more extensive characterization. |
| Automated Staining Platforms | Standardize pre-analytical and analytical steps (baking, deparaffinization, staining). | Pharma mandates locked protocols on specific platforms; pathology labs may have multiple. |

Building Your IHC Validation Blueprint: A Step-by-Step Protocol

Within the framework of IHC assay validation for clinical trials research, achieving "Assay Design Lock" is a critical milestone. It represents the point at which the assay's analytic, clinical, and performance specifications are definitively established and locked prior to full validation and deployment. This process ensures the assay is fit-for-purpose, reproducible, and will generate reliable data for evaluating a drug's mechanism of action, patient selection, or pharmacodynamic effect. This guide compares the approach to establishing these specifications using a modern, automated IHC platform versus traditional manual methods.

Comparative Analysis: Automated vs. Manual IHC Assay Design Lock

A consistent, controlled staining process is foundational to locking down assay specifications. The following table compares key performance metrics critical for Assay Design Lock, based on published studies and internal validation data.

Table 1: Performance Metrics Comparison for IHC Assay Design Lock

| Performance Metric | Automated IHC Platform (e.g., Leica Bond RX, Ventana Benchmark) | Traditional Manual IHC |
|---|---|---|
| Inter-assay Precision (CV%) | 5-10% | 15-30% |
| Intra-assay Precision (CV%) | 3-8% | 10-20% |
| Staining Intensity Consistency | High (Automated reagent dispensing & timing) | Moderate to Low (Variable manual steps) |
| Background/Nonspecific Staining | Consistently Low | Often Variable |
| Throughput for Validation Runs | High (Parallel processing of slides) | Low (Serial processing) |
| Reagent Consumption Control | Precise, minimal waste | Higher variability and waste |
| Data Traceability | Full digital audit trail | Manual logbooks, prone to gaps |

Experimental Protocols for Defining Specifications

The following protocols are essential experiments whose data directly feed into the analytic performance specifications.

Protocol 1: Analytic Sensitivity (Titration) & Specificity

Objective: To determine the optimal primary antibody concentration that provides maximal specific signal with minimal background, locking the analytic sensitivity.

Method:

- Slide Preparation: Use a multi-tissue microarray (TMA) containing positive (known expression) and negative (no known expression) control tissues.

- Antibody Titration: Perform IHC staining on serial sections using a range of primary antibody concentrations (e.g., 0.5, 1, 2, 4 µg/mL).

- Staining: Use an automated platform with strictly locked protocols for deparaffinization, epitope retrieval, blocking, and detection. Perform all steps identically except for the primary antibody concentration.

- Evaluation: Two blinded pathologists score staining intensity (0-3+) and percentage of positive cells. The optimal concentration is the lowest that yields maximum intensity in positive controls without increasing background in negative controls.
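
The selection rule in the Evaluation step can be sketched in a few lines of Python. This is a minimal illustration, not a validated procedure: the `TitrationPoint` structure, the example scores, and the choice to treat background at the lowest concentration as the baseline are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class TitrationPoint:
    conc_ug_ml: float           # primary antibody concentration (µg/mL)
    positive_intensity: float   # mean intensity score (0-3) in positive controls
    negative_background: float  # mean intensity score (0-3) in negative controls


def select_optimal_concentration(points, background_tolerance=0.0):
    """Return the lowest concentration that reaches the maximum positive-control
    intensity without raising negative-control background above the baseline.

    Assumption: the baseline is the background seen at the lowest concentration.
    """
    ordered = sorted(points, key=lambda p: p.conc_ug_ml)
    baseline = ordered[0].negative_background
    max_intensity = max(p.positive_intensity for p in ordered)
    for p in ordered:
        clean = p.negative_background <= baseline + background_tolerance
        if p.positive_intensity == max_intensity and clean:
            return p.conc_ug_ml
    return None  # no concentration satisfies both criteria


# Hypothetical titration results for the 0.5-4 µg/mL series
titration = [
    TitrationPoint(0.5, 1.5, 0.0),
    TitrationPoint(1.0, 2.5, 0.0),
    TitrationPoint(2.0, 3.0, 0.0),
    TitrationPoint(4.0, 3.0, 1.0),  # same signal, but background appears
]
print(select_optimal_concentration(titration))
```

With these illustrative numbers, 2 µg/mL is selected because 4 µg/mL adds background without adding signal. A `None` result signals that the titration range or the retrieval conditions need to be revisited.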

Protocol 2: Inter-Instrument & Inter-Operator Precision

Objective: To establish the assay's robustness, informing the clinical performance specifications.

Method:

- Sample Set: 20 replicate slides from a single TMA block.

- Operator & Instrument: Split slides between two trained operators. For automated systems, use two identical instruments; for manual staining, the operators work independently.

- Staining: Execute the locked protocol simultaneously.

- Analysis: Quantify staining using digital image analysis (e.g., H-score, % positive cells). Calculate the coefficient of variation (CV%) between operators and instruments.
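
As a rough sketch of the Analysis step, the coefficient of variation can be computed within each operator or instrument group and across the group means. The group labels and H-scores below are invented for illustration, and the sample standard deviation (n-1 denominator) is an assumption about the intended estimator.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation: sample SD divided by the mean, times 100."""
    return 100.0 * stdev(values) / mean(values)


def precision_summary(scores_by_group):
    """scores_by_group maps a label (operator or instrument) to H-scores.

    Returns the CV% within each group and the CV% across the group means.
    """
    within = {g: cv_percent(v) for g, v in scores_by_group.items()}
    between = cv_percent([mean(v) for v in scores_by_group.values()])
    return within, between


# Hypothetical H-scores for replicate slides split between two operators
h_scores = {
    "operator_A": [180, 176, 184, 178, 182],
    "operator_B": [172, 177, 169, 175, 171],
}
within_cv, between_cv = precision_summary(h_scores)
print(within_cv, between_cv)
```

Reporting within-group and between-group CV% separately makes it easier to see whether variability comes from the staining run itself or from the operator or instrument.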

Visualization of Assay Design Lock Workflow

Diagram 1: Assay Design Lock Phase Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Materials for IHC Assay Design Lock

| Item | Function in Assay Design Lock |

|---|---|

| Certified Primary Antibody | The critical reagent; specificity and lot consistency are paramount for locking performance. |

| Multitissue Microarray (TMA) | Contains positive/negative controls for optimization and ongoing monitoring of assay performance. |

| Isotype Control Antibody | Distinguishes specific from nonspecific staining, crucial for specificity assessments. |

| Validated Epitope Retrieval Buffer | Unmasks the target epitope; pH and buffer composition must be locked for reproducibility. |

| Automated IHC Detection Kit | Provides standardized chromogenic or fluorescent signal generation. Must be used per locked protocol. |

| Digital Slide Scanner & Analysis Software | Enables quantitative, objective scoring for establishing and verifying performance specifications. |

| Cell Line Microarray (Xenografts) | Provides a source of consistent, homogeneous material for precision studies and sensitivity limits. |

Achieving Assay Design Lock requires rigorous, data-driven comparison of assay performance under defined conditions. Automated IHC platforms demonstrate superior precision, consistency, and traceability compared to manual methods, directly supporting the establishment of robust analytic and clinical performance specifications. The locked specifications, derived from experiments like titration and precision studies, form the immutable foundation for the subsequent full validation required for clinical trials research.

Accurate immunohistochemistry (IHC) is fundamental to biomarker discovery and validation in clinical trials. This guide compares the performance of antibodies from different clones and vendors, critical for assay validation.

Comparative Performance: Anti-PD-L1 Clone 22C3 vs. SP263 vs. SP142

Table 1: Key Validation Metrics for Common PD-L1 IHC Clones

| Validation Parameter | Clone 22C3 (Platform D) | Clone SP263 (Platform V) | Clone SP142 (Platform V) | Experimental Context |

|---|---|---|---|---|

| Tumor Cell Staining (%) | 48.5 ± 3.2 | 50.1 ± 2.8 | 28.4 ± 5.1* | NSCLC tissue microarray (n=50) |

| Immune Cell Staining Score | 1.8 ± 0.4 | 2.1 ± 0.3 | 3.5 ± 0.5* | Same TMA, consensus scoring by 3 pathologists |

| Inter-Observer Concordance (Kappa) | 0.85 | 0.82 | 0.71 | Blinded evaluation |

| Signal-to-Noise Ratio | 12.5:1 | 11.8:1 | 8.2:1* | Quantitative image analysis (H-score normalized to isotype) |

| Lot-to-Lot Consistency (CV%) | 5.2% | 7.1% | 9.8% | 5 lots, same FFPE block |

| Optimal Dilution | 1:100 | Ready-to-Use | 1:50 | Manufacturer's protocol vs. in-house titration. |

*Statistically significant difference (p<0.01) compared to 22C3/SP263 group.

Experimental Protocol for Comparative Validation

Title: Antibody Clone Validation Workflow for IHC.

Method:

- Tissue Selection: Formalin-fixed, paraffin-embedded (FFPE) blocks of relevant tissues (e.g., NSCLC and normal adjacent tissue) are sectioned at 4µm.

- Antigen Retrieval: Slides are subjected to heat-induced epitope retrieval (HIER) using a pH 6.0 citrate buffer at 97°C for 20 minutes.

- Staining: Parallel staining is performed on serial sections using automated IHC platforms per clone-specific protocols. Include isotype and negative controls.

- Quantification: Staining is evaluated by at least two board-certified pathologists using validated scoring criteria (e.g., Tumor Proportion Score, Combined Positive Score). Digital image analysis is performed to calculate H-scores and signal-to-noise ratios.

- Statistical Analysis: Concordance is assessed using Cohen's Kappa. Performance metrics are compared using ANOVA with post-hoc testing.
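
For readers who want to see how the concordance statistic is formed, here is a minimal pure-Python sketch of Cohen's kappa for two raters. The category labels and example calls are hypothetical; in practice a statistics package would normally be used.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning categorical scores to the same cases."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of cases")
    n = len(rater_a)
    # Observed agreement: fraction of cases given the same category
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each rater's marginal frequencies per category
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical PD-L1 positive/negative calls from two pathologists
path_1 = ["pos", "pos", "neg", "neg"]
path_2 = ["pos", "neg", "neg", "neg"]
print(cohens_kappa(path_1, path_2))  # 0.5
```

Kappa corrects raw agreement for the agreement expected by chance, which matters when one category dominates the sample set.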

IHC Clone Validation Workflow

PD-L1 Antibody Selection Impact on Pathway Interpretation

Antibody Clone Role in PD-L1 Pathway

The Scientist's Toolkit: Research Reagent Solutions for IHC Validation

Table 2: Essential Reagents for Antibody Validation

| Reagent / Solution | Function in Validation |

|---|---|

| Validated Positive Control Tissue | Provides a consistent biological reference for staining intensity and specificity. |

| Isotype-Matched Control Antibody | Distinguishes specific signal from background noise and non-specific Fc receptor binding. |

| Cell Line Microarray (FFPE) | Contains known overexpression, knockdown, and negative cell lines for specificity testing. |

| Antigen Retrieval Buffers (pH 6 & 9) | Unmasks target epitopes; optimal pH must be determined for each antibody clone. |

| Signal Detection Kit (HRP Polymer) | Amplifies the primary antibody signal; critical for sensitivity and dynamic range. |

| Chromogen (DAB, AEC) | Produces the visible precipitate; must be matched to platform and scanner specifications. |

| Automated IHC Staining Platform | Ensures procedural consistency, critical for reproducibility in multi-site trials. |

| Digital Pathology Scanner & Software | Enables quantitative analysis (H-score, % positivity) and archival of whole-slide images. |

Within the rigorous framework of IHC assay validation for clinical trials, the selection of control tissues is not an ancillary step but a foundational determinant of data integrity. Accurate biomarker qualification depends on a trinity of control tissues: Positive Control Tissues express the target antigen at known, relevant levels; Negative Control Tissues are confirmed to lack the antigen; and Normal Control Tissues provide a baseline of expression in non-diseased states. This guide compares the performance of commercially sourced, well-characterized control tissue microarrays (TMAs) against laboratory-constructed (in-house) TMAs and single-tissue block controls.

Comparison of Control Tissue Sourcing Strategies

Table 1: Performance Comparison of Control Tissue Sourcing Methods

| Criterion | Commercial TMA | In-House TMA | Single-Tissue Blocks |

|---|---|---|---|

| Characterization Depth | Extensive IHC, ISH, and molecular data provided. | Limited to internal historical data. | Variable; often minimal. |

| Lot-to-Lot Consistency | High (≥95% concordance per vendor QC). | Moderate to Low (dependent on specimen acquisition). | Very Low. |

| Tissue Fixation & Processing Standardization | Highly standardized (e.g., uniform 10% NBF, 6-24hr fixation). | Often variable (multiple sources, protocols). | Highly variable. |

| Cost & Time to Implement | High upfront cost, low time investment. | Low upfront cost, very high time/infrastructure investment. | Low cost, moderate time investment. |

| Fit for GCP/GLP Clinical Trials | Optimal. Audit trail, Certificate of Analysis, and standardized QC provided. | Challenging. Requires exhaustive internal documentation and validation. | Inadequate. Lack of standardization and traceability. |

| Flexibility & Customization | Low (fixed content). | High (can tailor to specific biomarker). | Moderate (requires sourcing multiple blocks). |

Supporting Experimental Data: A Case Study in PD-L1 Assay Validation

To objectively compare control tissue performance, a validation study for a clinical PD-L1 (Clone 22C3) assay was conducted.

Experimental Protocol:

- Tissues: Three control sets were evaluated:

- Commercial TMA: A pre-made TMA containing 60 cores of non-small cell lung cancer (NSCLC) with PD-L1 Tumor Proportion Scores (TPS) certified by multiple orthogonal methods (IHC 22C3 & 28-8, RNA-seq).

- In-House TMA: Constructed from 60 residual NSCLC specimens from the institutional archive, with PD-L1 status previously determined by a laboratory-developed test.

- Single-Tissue Blocks: 10 individual blocks (3 high-positive, 3 low-positive, 4 negative) selected from the in-house archive.

- IHC Staining: All tissues were stained in the same batch using the FDA-approved PD-L1 IHC 22C3 pharmDx kit on a Dako Autostainer Link 48. The protocol followed the manufacturer's instructions precisely: 5µm sections, baked, deparaffinized, rehydrated, followed by epitope retrieval with EnVision FLEX High pH solution, incubation with primary antibody, visualization with EnVision FLEX/HRP system, and counterstaining with hematoxylin.

- Quantification & Analysis: Digital whole-slide images were acquired. TPS was calculated by two independent, certified pathologists. Inter-rater concordance and deviation from the "ground truth" (commercial TMA reference score or original diagnostic score) were measured.

Table 2: Experimental Results - Pathologist Scoring Concordance

| Control Tissue Set | Inter-Rater Concordance (Cohen's Kappa) | Deviation from Reference Score (Mean Absolute Error %)* |

|---|---|---|

| Commercial TMA | 0.92 (Excellent Agreement) | 2.1% |

| In-House TMA | 0.76 (Substantial Agreement) | 15.7% |

| Single-Tissue Blocks | 0.65 (Moderate Agreement) | 22.3% |

*For In-House TMA and Single-Tissue Blocks, deviation is from the original diagnostic score.
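
The deviation metric in Table 2 is a mean absolute error between scored and reference TPS values. A minimal sketch follows, with invented TPS values; it assumes both lists hold percentages for the same cores in the same order.

```python
def mean_absolute_error(scored_tps, reference_tps):
    """Mean absolute difference (in TPS percentage points) between a
    pathologist's scores and the reference scores for the same cores."""
    if len(scored_tps) != len(reference_tps) or not scored_tps:
        raise ValueError("score lists must be non-empty and of equal length")
    total = sum(abs(s - r) for s, r in zip(scored_tps, reference_tps))
    return total / len(scored_tps)


# Hypothetical TPS values (%) for three cores
scored = [50, 10, 0]
reference = [52, 5, 1]
print(round(mean_absolute_error(scored, reference), 2))  # 2.67
```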

Experimental Workflow for Control Tissue Qualification

Title: Workflow for Qualifying IHC Control Tissues

The Scientist's Toolkit: Essential Reagent Solutions

Table 3: Key Research Reagents for Control Tissue Validation

| Reagent/Material | Function in Control Tissue Qualification |

|---|---|

| Characterized Control Tissue Microarray (TMA) | Provides a multiplexed platform of pre-validated positive, negative, and normal tissues for assay calibration and run acceptance. |

| Orthogonal Assay Kits (e.g., RNA-ISH, qPCR) | Used to establish the "ground truth" biomarker status in-house when validating archived specimens. |

| IHC/ISH Slide Scanner & Image Analysis Software | Enables digital pathology workflows, allowing for precise, quantitative, and reproducible scoring of biomarker expression. |

| Reference Standard Antibodies (CE-IVD/FDA) | Ensures assay specificity and reproducibility during the initial qualification of control tissues. |

| Automated Tissue Processor & Embedder | Critical for standardizing the pre-analytical phase of in-house control tissue creation. |

| Liquid Chromatography-Mass Spectrometry (LC-MS) | Gold-standard for quantifying protein levels in tissue lysates to biochemically confirm IHC results. |

The Role of Controls in IHC Assay Validation Pathway

Title: Control Tissue Strategy Supports IHC Validation Thesis

In the context of immunohistochemistry (IHC) assay validation for clinical trials research, the choice between quantitative and semi-quantitative scoring methodologies is pivotal. The selection directly impacts data reproducibility, regulatory acceptance, and the reliability of biomarker analysis in drug development. This guide objectively compares these two approaches, detailing their performance, experimental protocols, and implementation strategies.

Core Comparison: Principles and Applications

| Aspect | Quantitative Scoring (QS) | Semi-Quantitative Scoring (SQS) |

|---|---|---|

| Definition | Algorithmic, continuous measurement of biomarker expression (e.g., H-score, positive pixel count). | Categorical, ordinal assessment based on predefined tiers (e.g., 0, 1+, 2+, 3+). |

| Objectivity | High. Minimizes observer bias through digital image analysis (DIA) software. | Moderate to Low. Relies on pathologist's visual interpretation and judgment. |

| Data Output | Continuous numerical values (e.g., 0-300 for H-score, percentage positivity). | Discrete, ordinal categories (e.g., 0, 1, 2, 3). |

| Throughput | High once automated, but requires initial setup and validation. | Lower, manual process, but can be rapid for experts. |

| Reproducibility | Excellent inter- and intra-observer reproducibility when validated. | Variable; highly dependent on training and experience. |

| Regulatory Fit | Increasingly favored for primary endpoints in trials due to precision. | Widely accepted, especially for well-established biomarkers (e.g., HER2, PD-L1). |

| Cost & Complexity | Higher initial investment in scanning and analysis systems. | Lower initial cost, but ongoing training and quality control expenses. |
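
To make the 0-300 range of the H-score concrete, the sketch below applies the standard weighting: the percentage of cells at each intensity tier multiplied by 1, 2, or 3. The function name and input checks are illustrative choices.

```python
def h_score(pct_1plus, pct_2plus, pct_3plus):
    """H-score = 1*(% cells at 1+) + 2*(% cells at 2+) + 3*(% cells at 3+).

    Inputs are percentages of cells at each intensity; unstained cells
    (intensity 0) make up the remainder and contribute nothing.
    """
    parts = (pct_1plus, pct_2plus, pct_3plus)
    if any(p < 0 for p in parts) or sum(parts) > 100:
        raise ValueError("percentages must be non-negative and sum to at most 100")
    return 1 * pct_1plus + 2 * pct_2plus + 3 * pct_3plus


print(h_score(20, 30, 10))  # 110
print(h_score(0, 0, 100))   # 300, the maximum
```

Because the score combines intensity and extent, two tumors with the same percentage of positive cells can differ widely in H-score, which is one reason it is treated as a continuous output.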

Comparative Performance Data from Recent Studies

The following table summarizes key findings from recent validation studies comparing QS and SQS in a clinical trial research context.

| Study Focus (Biomarker) | Quantitative Method | Semi-Quantitative Method | Key Finding (QS vs. SQS) | Impact on Trial Readout |

|---|---|---|---|---|

| PD-L1 in NSCLC | Digital H-score (whole slide image) | Combined Positive Score (CPS) by 3 pathologists | Correlation r=0.89. QS reduced inter-reader variability by >60%. | QS provided more precise patient stratification for immunotherapy response. |

| HER2 in Breast Cancer | Automated membrane connectivity analysis | ASCO/CAP guidelines (0 to 3+) | 99% concordance for extreme scores (0, 3+). QS resolved 15% of equivocal (2+) cases. | QS minimized ambiguous cases, potentially refining eligibility criteria. |

| Ki-67 in Breast Cancer | Positive pixel count (%) | Visual estimation of % positivity (binned) | Coefficient of variation: QS = 4.8%, SQS = 18.7%. | Higher reproducibility of QS strengthens proliferation index as a prognostic tool. |

| TILs in Melanoma | Machine learning-based lymphocyte detection | Visual assessment of stromal TIL % (in deciles) | Intraclass correlation coefficient: QS = 0.95, SQS = 0.78. | Superior reliability of QS supports its use as a robust predictive biomarker. |

Detailed Experimental Protocols

Protocol 1: Validation of a Quantitative Digital Image Analysis (DIA) Algorithm

Aim: To validate a QS DIA algorithm for a novel biomarker against a manual SQS reference standard.

- Sample Set: 250 archival FFPE tumor sections from the clinical trial cohort.

- IHC Staining: Batch staining performed using a validated IHC assay with appropriate controls.

- Scanning: Whole slide imaging at 40x magnification using a high-throughput scanner (e.g., Aperio, Hamamatsu).

- SQS Reference: Three board-certified pathologists independently score all slides using the trial's SQS guideline. Final score adjudicated by consensus.

- QS Analysis: DIA algorithm applied to whole slides. Algorithm trained to identify tumor regions and measure biomarker expression (e.g., membrane staining intensity and percentage).

- Statistical Comparison: Calculate Pearson/Spearman correlation between QS continuous output and SQS ordinal scores. Assess inter-observer variability (Fleiss' kappa for SQS vs. ICC for QS).
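
Since the SQS reference is ordinal, a rank-based correlation is a natural choice for the comparison step. The sketch below implements Spearman's rho as a Pearson correlation on average ranks, which handles the ties that tiered scores inevitably produce. The example scores are invented.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1..n, assigning tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2.0 + 1.0  # mean of 1-based positions i..j
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined when one variable is constant")
    return cov / (sx * sy)


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical digital H-scores (QS) and consensus tiers 0-3 (SQS)
qs = [12, 45, 60, 130, 150, 240, 280]
sqs = [0, 1, 1, 2, 2, 3, 3]
print(round(spearman(qs, sqs), 3))
```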

Protocol 2: Pathologist Training and Concordance Study for SQS

Aim: To establish and measure inter-pathologist concordance for a semi-quantitative scoring system in a multi-center trial.

- Development of Training Materials: Create a detailed scoring manual with definitive image examples for each score category (0, 1+, 2+, 3+).

- Training Cohort: Assemble a digital library of 50-100 representative training slides.

- Initial Training: Pathologists from participating sites complete self-guided review of the manual and training slides.

- Test Set & Assessment: Pathologists score an independent, adjudicated test set of 30 slides. Minimum performance threshold (e.g., ≥90% agreement with consensus) must be met.

- Continuous Calibration: Implement periodic re-calibration sessions during the trial to mitigate "scoring drift."
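
The qualification check in the Test Set & Assessment step can be expressed as a simple percent-agreement calculation. This is a sketch under the assumption that agreement means an exact category match with the consensus score; the 90% default mirrors the example threshold above, and the reader data are invented.

```python
def percent_agreement(reader_scores, consensus_scores):
    """Percentage of slides where the reader's category matches consensus exactly."""
    if len(reader_scores) != len(consensus_scores) or not consensus_scores:
        raise ValueError("score lists must be non-empty and of equal length")
    matches = sum(r == c for r, c in zip(reader_scores, consensus_scores))
    return 100.0 * matches / len(consensus_scores)


def qualifies(reader_scores, consensus_scores, threshold_pct=90.0):
    """True if the reader meets the minimum agreement threshold."""
    return percent_agreement(reader_scores, consensus_scores) >= threshold_pct


# Hypothetical 30-slide test set: consensus tiers and one reader with 3 discordant calls
consensus = [0, 1, 2, 3, 1, 2] * 5
reader = list(consensus)
for idx in (4, 11, 23):
    reader[idx] = (reader[idx] + 1) % 4  # force a different category
print(percent_agreement(reader, consensus), qualifies(reader, consensus))
```

The same function can be rerun at each recalibration session, so that scoring drift shows up as a falling agreement percentage over time.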

Visualizing the IHC Scoring Validation Workflow

Diagram Title: IHC Scoring Validation Workflow for Clinical Trials

Signaling Pathway Analysis: From Biomarker to Scoring Decision

Diagram Title: Biomarker Detection to Scoring Method Decision Pathway

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in IHC Assay Validation |

|---|---|

| Validated Primary Antibodies | Specific detection of the target biomarker. Critical for assay specificity and reproducibility. Must be rigorously validated for IHC on FFPE tissue. |

| Isotype & Negative Control Reagents | Essential for distinguishing specific staining from background or non-specific binding, establishing assay specificity. |

| Antigen Retrieval Buffers (e.g., EDTA, Citrate) | Unmask epitopes in formalin-fixed tissue, a crucial step for consistent and intense staining. |

| Detection Kits (Polymer-based) | Amplify the primary antibody signal with high sensitivity and low background. Key for quantitative linearity. |

| Chromogens (DAB, AEC) | Produce the visible precipitate at the antigen site. DAB is most common for QS due to stability and compatibility with DIA. |

| Automated IHC Stainers | Provide standardized, hands-off processing of slides, minimizing day-to-day and run-to-run variability. |

| Whole Slide Scanners | Digitize stained slides at high resolution, enabling digital archiving, remote review, and quantitative analysis. |

| Digital Image Analysis Software | The core tool for QS, allowing for automated cell segmentation, intensity measurement, and algorithm-based scoring. |

| Standardized Tissue Microarrays (TMAs) | Contain multiple tissue cores on one slide. Invaluable for antibody validation, batch-to-batch assay control, and pathologist training. |

| Reference Standard Slides | Adjudicated slides with known scores. The gold standard for training pathologists and validating DIA algorithms. |

For IHC assay validation in clinical trials, the move towards quantitative scoring is driven by the need for objective, reproducible, and continuous data that can robustly support primary endpoints. Semi-quantitative scoring remains a pragmatic choice for well-characterized biomarkers but requires intensive training and calibration to maintain consistency. Integrating QS with traditional pathology expertise—through defined algorithms and ongoing training—represents the most rigorous path forward for generating reliable biomarker data in drug development.

In the rigorous framework of IHC assay validation for clinical trials research, precision is a cornerstone parameter. It delineates the closeness of agreement between independent measurements obtained under stipulated conditions. This guide deconstructs precision into its core components—repeatability (intra-assay precision) and reproducibility (intermediate precision across sites, observers, and instruments)—and provides a comparative analysis of assay performance using experimental data. Robust precision is non-negotiable for ensuring reliable biomarker data in multicenter therapeutic studies.

Comparative Precision Performance Data

The following tables summarize data from a controlled study evaluating a validated PD-L1 (28-8 pharmDx) assay against two alternative laboratory-developed tests (LDTs) and a different automated platform. All assays were performed on serial sections of a validated non-small cell lung carcinoma tissue microarray (TMA) containing 20 cases with a range of PD-L1 expression.

Table 1: Repeatability (Intra-assay Precision)

Condition: Single site, one operator, one instrument, one reagent lot, 20 replicates over 5 days.

| Assay / Platform | Mean Tumor Proportion Score (TPS) % (Case 15) | Standard Deviation (SD) | Coefficient of Variation (CV%) |

|---|---|---|---|

| PD-L1 28-8 (BenchMark ULTRA) | 52.3 | 1.8 | 3.4 |

| LDT A (Manual) | 48.7 | 4.1 | 8.4 |

| LDT B (Auto-stainer X) | 49.5 | 3.2 | 6.5 |

| Alternative Commercial Assay (Platform Y) | 50.1 | 2.9 | 5.8 |

Table 2: Reproducibility (Inter-site, -observer, -instrument)

Condition: Three sites, two operators per site, two instruments, three reagent lots. Data shown for Case 15 (moderate expression) and Case 8 (low expression).

| Assay / Platform | Metric | Case 15 (TPS ~50%) | Case 8 (TPS ~5%) |

|---|---|---|---|

| PD-L1 28-8 (BenchMark ULTRA) | Overall Mean TPS (%) | 51.9 | 5.2 |

| | Inter-site SD | 2.1 | 0.5 |

| | Inter-observer SD | 1.7 | 0.6 |

| | Inter-instrument SD | 1.2 | 0.3 |

| LDT A (Manual) | Overall Mean TPS (%) | 46.1 | 8.7 |

| | Inter-site SD | 6.3 | 2.1 |

| | Inter-observer SD | 5.8 | 1.9 |

| | Inter-instrument SD | N/A (Manual) | N/A |

Detailed Experimental Protocols

1. Repeatability (Intra-assay) Protocol:

- Sample: A single TMA block containing 20 formalin-fixed, paraffin-embedded (FFPE) NSCLC cases.

- Sectioning: 20 serial sections cut at 4μm on the same day using a standardized microtome.

- Staining: All slides stained in one run on the designated automated stainer (e.g., BenchMark ULTRA) using a single lot of primary antibody and detection kit.

- Analysis: A single qualified pathologist scored all slides for PD-L1 TPS using defined guidelines.

- Statistical Analysis: Calculate mean, SD, and CV% for each case across the 20 replicates.

2. Inter-site Reproducibility Protocol:

- Sample & Preparation: Central lab prepared and distributed identical sets of 10 serial TMA sections to three independent validation sites.

- Instrumentation: Each site used the same model of automated stainer, but different physical instruments and reagent lots.

- Staining & Analysis: Each site performed staining per the same standard operating procedure. Two pathologists at each site scored all slides independently, blinded to each other's scores and site identity.

- Statistical Analysis: A nested ANOVA model was used to estimate variance components attributable to site, observer-within-site, and residual error.
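
The variance decomposition named in the last step can be illustrated with a method-of-moments estimate for a balanced two-level nested design (observers nested within sites, replicate scores within observers). This sketch assumes equal numbers of observers per site and replicates per observer; real studies are often analyzed with a mixed-model package instead, and the data below are invented.

```python
from statistics import mean


def nested_variance_components(data):
    """Estimate variance components from {site: {observer: [scores]}}.

    Assumes a balanced design: a sites, b observers per site, n replicates each.
    Negative estimates are truncated at zero.
    """
    sites = list(data)
    a = len(sites)
    b = len(data[sites[0]])
    n = len(next(iter(data[sites[0]].values())))
    if a < 2 or b < 2 or n < 2:
        raise ValueError("need at least 2 sites, 2 observers per site, 2 replicates")
    for observers in data.values():
        if len(observers) != b or any(len(v) != n for v in observers.values()):
            raise ValueError("design must be balanced")

    obs_means = {s: {o: mean(v) for o, v in data[s].items()} for s in sites}
    site_means = {s: mean(list(obs_means[s].values())) for s in sites}
    grand = mean(list(site_means.values()))

    # Sums of squares for each level of the hierarchy
    ss_site = b * n * sum((site_means[s] - grand) ** 2 for s in sites)
    ss_obs = n * sum((m - site_means[s]) ** 2
                     for s in sites for m in obs_means[s].values())
    ss_err = sum((y - obs_means[s][o]) ** 2
                 for s in sites for o, v in data[s].items() for y in v)

    ms_site = ss_site / (a - 1)
    ms_obs = ss_obs / (a * (b - 1))
    ms_err = ss_err / (a * b * (n - 1))

    return {
        "site": max(0.0, (ms_site - ms_obs) / (b * n)),
        "observer_within_site": max(0.0, (ms_obs - ms_err) / n),
        "residual": ms_err,
    }


# Hypothetical TPS scores: 2 sites x 2 observers x 2 replicate slides
tps = {
    "site_1": {"obs_1": [50, 52], "obs_2": [54, 56]},
    "site_2": {"obs_1": [60, 62], "obs_2": [64, 66]},
}
print(nested_variance_components(tps))
```

Taking the square root of each component gives standard deviations on the TPS scale, comparable in form to the inter-site and inter-observer SDs reported in Table 2.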

Pathway & Workflow Diagrams

Title: IHC Precision Validation Workflow

Title: Components of IHC Assay Precision

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Precision Studies |

|---|---|

| Validated FFPE Tissue Microarray (TMA) | Contains multiple patient samples with a range of biomarker expression on a single slide, enabling high-throughput, controlled comparative staining. |

| IVD/CE-Marked IHC Assay Kit | Includes optimized, lot-controlled primary antibody, detection system, and buffers to minimize reagent-based variability. |

| Automated IHC Stainer | Standardizes all staining steps (deparaffinization, antigen retrieval, incubation times) to reduce procedural variance. |

| Digital Slide Scanner & Image Analysis Software | Enables whole-slide imaging for archival and supports quantitative, objective scoring to reduce observer subjectivity. |

| Reference Control Slides (High/Med/Low/Neg) | Run concurrently in each batch to monitor assay performance and ensure inter-run consistency. |

| Standardized Scoring Guidelines (e.g., TPS Manual) | Critical document to align observer interpretation and minimize inter-observer discrepancy. |

Within the rigorous framework of IHC assay validation for clinical trials research, robustness testing is a critical component that moves beyond basic optimization. It systematically evaluates an assay's reliability by introducing deliberate, minor variations to protocol parameters. This guide compares the performance of a leading automated IHC platform, the Ventana BenchMark ULTRA, against a generic manual IHC protocol and a competing automated system, the Leica BOND RX, under stressed conditions. The thesis is that a truly robust assay, essential for generating reproducible data in multi-center clinical trials, must maintain performance consistency despite expected operational variances.

Experimental Protocol for Robustness Testing

The core experiment assessed the detection of a clinically relevant target, PD-L1 (Clone 22C3), in a standardized tonsil tissue microarray (TMA). The protocol was varied around the established optimal method for the Ventana system. Key variable parameters included:

- Primary Antibody Incubation Time: ± 10% variation from optimal (4 minutes variation on a 40-minute step).

- Antigen Retrieval Time: ± 15% variation from optimal (6 minutes variation on a 40-minute Citrate-based retrieval).

- Reaction Temperature: ± 3°C variation from the standard 37°C.

- Reagent Lot: Two different lots of detection kit (HQ-Universal DAB) and primary antibody.

Each variation was introduced independently while all other steps were held constant. The same TMA slide batch and scoring criteria (H-score, assigned by a calibrated pathologist) were used across all platforms for comparison.

Comparative Performance Data Under Stressed Conditions

The table below summarizes the quantitative impact of protocol variations on assay output (H-score) and staining quality.

Table 1: Assay Robustness Comparison Under Deliberate Protocol Variations

| Variation Parameter | Ventana BenchMark ULTRA (Optimal H-score: 180) | Leica BOND RX (Optimal H-score: 175) | Manual IHC Protocol (Optimal H-score: 170) |

|---|---|---|---|

| Antibody Time: -10% | H-score: 178 (Δ -1.1%); Quality: No change | H-score: 168 (Δ -4.0%); Quality: Slight intensity drop | H-score: 155 (Δ -8.8%); Quality: Marked heterogeneity |

| Antibody Time: +10% | H-score: 181 (Δ +0.6%); Quality: No change | H-score: 176 (Δ +0.6%); Quality: Increased background | H-score: 172 (Δ +1.2%); Quality: High background |

| Retrieval Time: -15% | H-score: 175 (Δ -2.8%); Quality: Minor intensity drop | H-score: 152 (Δ -13.1%); Quality: Significant weak staining | H-score: 135 (Δ -20.6%); Quality: Focal false negatives |

| Retrieval Time: +15% | H-score: 179 (Δ -0.6%); Quality: No change | H-score: 170 (Δ -2.9%); Quality: Tissue detachment risk | H-score: 165 (Δ -2.9%); Quality: Excessive background |

| Temp. Variation: +3°C | H-score: 177 (Δ -1.7%); Quality: No change | H-score: 169 (Δ -3.4%); Quality: Slight background | H-score: 160 (Δ -5.9%); Quality: Unpredictable staining |

| Reagent Lot Switch | H-score: 178-182 (Δ ±1.1%); Quality: Consistent | H-score: 170-179 (Δ ±2.6%); Quality: Moderately consistent | H-score: 155-175 (Δ ±5.9%); Quality: Highly variable |
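
The Δ values in Table 1 are percent changes from each platform's optimal H-score. The sketch below reproduces that arithmetic for the manual protocol column and flags conditions that fall outside a tolerance. The 10% tolerance is a hypothetical acceptance criterion chosen for illustration, not one stated in the study.

```python
def percent_change(observed, optimal):
    """Percent deviation of an observed H-score from the optimal H-score."""
    return 100.0 * (observed - optimal) / optimal


def robustness_report(optimal, observed_by_condition, tolerance_pct=10.0):
    """Map each condition to (rounded percent change, within-tolerance flag).

    tolerance_pct is an assumed acceptance limit for this example.
    """
    report = {}
    for condition, h in observed_by_condition.items():
        delta = percent_change(h, optimal)
        report[condition] = (round(delta, 1), abs(delta) <= tolerance_pct)
    return report


# Manual IHC protocol column from Table 1 (optimal H-score: 170)
manual = {
    "antibody_time_-10%": 155,
    "antibody_time_+10%": 172,
    "retrieval_time_-15%": 135,
    "retrieval_time_+15%": 165,
    "temperature_+3C": 160,
}
for condition, (delta, ok) in robustness_report(170, manual).items():
    print(f"{condition}: {delta:+.1f}% {'pass' if ok else 'FAIL'}")
```

Under this assumed limit, only the shortened retrieval time pushes the manual protocol out of tolerance, which matches the table's picture of retrieval time as the most sensitive parameter.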

Experimental Workflow Diagram

IHC Robustness Testing Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Robustness Testing |

|---|---|

| Standardized Tissue Microarray (TMA) | Contains multiple tissue cores with known, consistent expression levels of the target, serving as an internal control across all test variations. |

| Validated Primary Antibody Clone (e.g., 22C3) | The critical analyte-specific reagent; using a clinically validated clone is essential for meaningful robustness data. |

| Automated IHC Staining Platform | Provides precise control over incubation times, temperatures, and reagent application, reducing a major source of manual variability. |

| Lot-Tracked Detection Kit (e.g., polymer-based DAB) | A consistent detection system is required to isolate variability to the primary antibody and retrieval steps. |

| Whole Slide Imaging & Analysis System | Enables objective, quantitative measurement of staining intensity (H-score, % positivity) and distribution. |

| Digital H-Score Scoring Software | Supports pathologist calibration and provides reproducible, semi-quantitative output for statistical comparison. |

Solving Common IHC Validation Pitfalls: From Staining Artifacts to Data Discrepancies

Within the critical context of immunohistochemistry (IHC) assay validation for clinical trials research, inconsistent staining results pose a significant risk to data integrity and patient stratification. This guide objectively compares common methodologies and reagents used to troubleshoot three core failure points: ineffective antigen retrieval, high background, and non-specific binding. Rigorous validation of these parameters is essential for generating reliable, reproducible biomarker data that can withstand regulatory scrutiny.

Comparison of Antigen Retrieval Methods

Effective antigen retrieval is fundamental for unmasking epitopes altered by formalin fixation. The choice of method and buffer directly impacts staining intensity and specificity.

Table 1: Quantitative Comparison of Antigen Retrieval Methods & Buffers

| Retrieval Method | Buffer pH | Target Epitopes (Example) | Average Staining Intensity (0-3+ scale) | Background Score (0-3, lower is better) | Optimal Use Case |

|---|---|---|---|---|---|

| Heat-Induced (HIER) - Microwave | Citrate, pH 6.0 | Nuclear (ER, PR) | 2.8 | 1.2 | Routine nuclear antigens; widely standardized. |

| Heat-Induced (HIER) - Pressure Cooker | Tris-EDTA, pH 9.0 | Membranous/Nuclear (HER2, Ki-67) | 3.0 | 1.0 | Difficult epitopes; faster, more uniform heating. |

| Heat-Induced (HIER) - Water Bath | Citrate, pH 6.0 | General | 2.5 | 1.0 | Gentle retrieval; reduces edge artifacts. |

| Enzymatic (Protease) | N/A (Enzyme Solution) | Extracellular Matrix, Some Membranous | 2.0 | 2.5 | Delicate tissues; specific, localized unmasking. |

Supporting Experimental Data: A 2023 study validating a pan-cancer biomarker assay compared retrieval methods using a standardized IHC protocol for Ki-67 on tonsil tissue. Pressure cooker retrieval with Tris-EDTA pH 9.0 yielded a 40% higher H-score in germinal centers with 25% lower background in stromal areas compared to microwave citrate pH 6.0, as quantified by digital image analysis.

Experimental Protocol: Antigen Retrieval Optimization

- Tissue Sectioning: Cut 4μm formalin-fixed, paraffin-embedded (FFPE) sections onto charged slides.

- Deparaffinization & Rehydration: Bake slides at 60°C for 1 hour. Process through xylene (2 changes, 5 min each) and graded ethanol (100%, 95%, 70%, 5 min each). Rinse in distilled water.

- Retrieval Setup:

- HIER (Pressure Cooker): Fill cooker with 1.5L of selected buffer (e.g., citrate pH 6.0, Tris-EDTA pH 9.0). Bring to a boil. Insert slide rack, seal lid, and maintain at full pressure for 3 minutes. Cool for 20 minutes under running water.

- Enzymatic: Incubate sections with 0.05% protease XXIV in PBS for 10 minutes at 37°C.

- Cooling & Washing: Cool slides for 30 minutes at room temperature. Rinse in distilled water, then wash in PBS + 0.025% Triton X-100 (PBS-T) for 5 minutes.

- Proceed with IHC: Continue with peroxidase blocking and primary antibody incubation.

Title: Antigen Retrieval Decision Pathway for IHC

Mitigating Background & Non-Specific Binding

High background obscures specific signal and often stems from non-specific interactions of antibodies or detection components with tissue elements.

Table 2: Comparison of Background Reduction Reagents

| Reagent / Method | Mechanism of Action | Typical Concentration | % Reduction in Background (OD450) | Potential Drawback |
|---|---|---|---|---|
| Normal Serum (from secondary host) | Blocks Fc receptors and non-specific protein-binding sites. | 2-5% in buffer | 30-50% | Variable lot-to-lot; may contain cross-reactive antibodies. |
| Non-Fat Dry Milk / Casein | Provides general protein blocking. | 0.5-5% in PBS | 25-40% | Can dilute or mask some antigens; not recommended for phospho-specific antibodies, because casein is itself a phosphoprotein. |
| Commercial Protein Block (e.g., BSA-based) | Standardized, ready-to-use protein block. | As per mfr. | 40-55% | Higher cost. |
| Polymeric IHC Detection Systems | Confines enzyme polymer to primary antibody site, reducing scatter. | N/A (System) | 60-75% | System must be matched to primary host. |
| Endogenous Enzyme Block (e.g., Peroxidase) | Quenches tissue enzymes that catalyze chromogen. | 3% H2O2, 10 min | 70-90% for HRP | Required for HRP-based detection. |

Supporting Experimental Data: In a 2024 assay optimization for a novel fibrosis marker in lung tissue, a commercial BSA-based block reduced background optical density by 52% compared to 2% normal goat serum. Combining this block with a labeled polymer detection system (vs. a traditional avidin-biotin complex) further reduced non-specific stromal staining by an additional 35%, quantified via whole-slide image analysis.

Experimental Protocol: Systematic Background Troubleshooting

- Control Sections: Include a no-primary antibody control (secondary only) and an isotype control for each test.

- Enhanced Blocking: After retrieval and PBS-T wash, apply a dual block:

  - Step 1: 3% H2O2 in methanol for 10 min to block endogenous peroxidase.

  - Step 2: Incubate with a commercial protein block for 30 min at room temperature.

- Optimized Antibody Incubation: Dilute primary antibody in a dedicated antibody diluent (not just PBS with BSA). Incubate at 4°C overnight for maximum specificity.

- Polymer Detection: Use a labeled polymer detection system (e.g., HRP-polymer) as the secondary detection reagent. Incubate for 30 min at room temperature.

- Stringent Washes: Perform all post-antibody washes with PBS-T for 5 minutes, with agitation, three times.

Title: Troubleshooting Pathway for IHC Background Issues

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents for Robust IHC Assay Validation

| Item | Function in IHC Troubleshooting | Key Consideration for Clinical Trial Assays |
|---|---|---|
| Validated Primary Antibody | Specifically binds target antigen. Source, clone, and concentration are critical. | Must be fully validated for IVD or RUO use with clinical FFPE; clone stability is essential. |
| pH-Buffered Retrieval Solutions (Citrate pH 6.0, Tris-EDTA pH 9.0) | Reverses formaldehyde cross-links to expose epitopes. | pH must be optimized and tightly controlled for each biomarker; lot-to-lot consistency required. |
| Labeled Polymer Detection System (HRP/AP polymer conjugates) | Amplifies signal while minimizing background vs. ABC methods. | Reduces non-specific binding; must be matched to primary host species. |
| Chromogen (DAB, AEC, etc.) | Enzyme substrate produces visible precipitate at antigen site. | DAB is most common; requires careful optimization of incubation time to control background. |
| Automated IHC Stainer | Provides precise, reproducible reagent application, timing, and temperatures. | Critical for high-throughput, standardized staining in multi-center trials. |
| Digital Slide Scanner & Analysis Software | Enables quantitative, objective assessment of staining intensity and distribution. | Allows for pathologist review and algorithmic scoring (H-score, % positivity) for continuous data. |

The reliability of immunohistochemistry (IHC) data in clinical trials research hinges on rigorous pre-analytical standardization. Variability in tissue fixation, processing, and sectioning is a significant source of irreproducibility, directly impacting assay validation and subsequent therapeutic decision-making. This guide compares key solutions for controlling these variables, providing objective data to inform protocol selection.

Comparison of Automated Tissue Processors for IHC Validation

Standardized tissue processing is critical for preserving antigenicity and morphology. The following table compares the performance of three leading automated tissue processors in a controlled study designed to meet clinical trial validation standards.

Table 1: Performance Metrics of Automated Tissue Processors

| Processor Model | Mean Processing Time (hrs) | Antigen Integrity Score (0-10)* | Tissue Morphology Score (0-10)* | Reagent Consumption (L/run) | Consistency (CV% of Section Thickness) |
|---|---|---|---|---|---|
| Leica Peloris II | 9.5 | 9.2 | 9.5 | 1.8 | 4.1% |
| Sakura Tissue-Tek VIP6 | 11 | 8.8 | 9.3 | 2.1 | 3.8% |
| Thermo Scientific Excelsior ES | 8.5 | 8.5 | 8.7 | 1.5 | 5.2% |

*Scored by three blinded pathologists; higher is better.

Experimental Protocol: Processor Comparison

Objective: To evaluate the impact of automated processors on antigen preservation and sectioning quality for IHC assay validation.

Methodology:

- Tissue Specimens: 30 matched human colon carcinoma biopsies were divided into three equal cohorts.

- Fixation: All samples fixed in 10% Neutral Buffered Formalin for 24 hours at room temperature.

- Processing: Each cohort was processed through one of the three automated processors using identical, vendor-recommended dehydration and clearing cycles.

- Embedding & Sectioning: All tissues were paraffin-embedded and sectioned at 4µm by a single technologist.

- Staining & Analysis: Serial sections were stained for CDX2 and Ki-67 via a validated IHC protocol. Antigen integrity was assessed via H-score, and morphology was evaluated via a standardized grading system.

Neutral Buffered Formalin (NBF) vs. Alternative Fixatives

The choice of fixative is the first and most critical pre-analytical variable. This comparison evaluates NBF against a prominent commercial alternative.

Table 2: Fixative Performance in Preserving Biomarkers for IHC

| Fixative (Fixation Time: 24h) | pH Stability (Duration) | H-Score for ER | H-Score for p53 | H-Score for HER2 | Nuclear Detail Preservation |
|---|---|---|---|---|---|
| 10% NBF | Stable (7 days) | 185 ± 12 | 210 ± 18 | 165 ± 15 | Excellent |
| Glyoxal-Based Fixative | Stable (14 days) | 178 ± 15 | 205 ± 22 | 172 ± 10 | Good |
| Unbuffered Formalin | Drifts (<24h) | 150 ± 25 | 180 ± 30 | 140 ± 28 | Poor-Fair |

Experimental Protocol: Fixative Comparison

Objective: To quantify the effect of fixative chemistry on the intensity and reproducibility of key clinical IHC biomarkers.

Methodology:

- Tissue Array: A multi-tissue microarray (breast, colon, tonsil) was constructed with 5mm cores.

- Fixation: Immediately post-core, samples were immersed in one of the three fixatives for 24 hours at room temperature.

- Processing: All samples underwent identical processing and embedding.

- Sectioning & Staining: Serial sections were cut at 4µm and stained for ER, p53, and HER2 using automated platforms.

- Quantification: H-scores (0-300) were calculated by digital image analysis (QuPath software) from five random fields per core. pH was monitored daily.
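The H-score used in the quantification step is conventionally defined as 1 × (% weakly stained cells) + 2 × (% moderately stained cells) + 3 × (% strongly stained cells), which gives the 0-300 range quoted above. The following is a minimal sketch of that arithmetic, assuming per-cell intensity bins (0 to 3) have already been exported from the image analysis software. The function names are hypothetical and do not correspond to any QuPath API.

```python
from collections import Counter
from statistics import mean
from typing import Iterable, Sequence


def h_score(pct_weak: float, pct_moderate: float, pct_strong: float) -> float:
    """H-score = 1*(% weak) + 2*(% moderate) + 3*(% strong); range 0-300."""
    pcts = (pct_weak, pct_moderate, pct_strong)
    if any(p < 0 for p in pcts) or sum(pcts) > 100 + 1e-9:
        raise ValueError("percentages must be non-negative and sum to at most 100")
    return 1 * pct_weak + 2 * pct_moderate + 3 * pct_strong


def h_score_from_cells(intensity_bins: Iterable[int]) -> float:
    """Compute the H-score from per-cell intensity bins (0, 1, 2 or 3)."""
    counts = Counter(intensity_bins)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no cells supplied")
    if any(b not in (0, 1, 2, 3) for b in counts):
        raise ValueError("intensity bins must be 0, 1, 2 or 3")
    # Each bin contributes (bin value) x (percentage of cells in that bin).
    return sum(b * 100.0 * n / total for b, n in counts.items())


def core_h_score(field_scores: Sequence[float]) -> float:
    """Average per-field H-scores (e.g., five random fields) into one core value."""
    return mean(field_scores)


if __name__ == "__main__":
    # Made-up example: 10% weak, 20% moderate, 30% strong, 40% negative.
    print(h_score(10, 20, 30))
    # Made-up per-field scores for a single core.
    print(core_h_score([180.0, 190.0, 185.0, 175.0, 195.0]))
```

Averaging field-level scores is only one possible aggregation; pooling all cells in a core before computing the score is an equally plausible choice and should be fixed in the validation plan.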

Microtome Blade Comparison for Sectioning Consistency

Consistent sectioning prevents wrinkles, tears, and variable thickness that compromise automated image analysis in validated assays.

Table 3: Impact of Microtome Blade Type on Section Quality

| Blade Type | Mean Thickness (µm) | Thickness CV% | Sections with Artefacts (%) | Avg. Blades per Block |
|---|---|---|---|---|
| High-Precision Tungsten Carbide | 4.0 ± 0.15 | 3.8% | 8% | 1.5 |
| Standard Disposable Steel | 4.2 ± 0.35 | 8.3% | 22% | 3.0 |
| Low-Carbon Steel | 4.5 ± 0.50 | 11.1% | 35% | 1.0 |

Experimental Protocol: Sectioning Artefact Analysis

Objective: To assess how blade material and sharpness influence section uniformity and the incidence of pre-analytical artefacts.

Methodology:

- Block Selection: 20 identical FFPE liver tissue blocks were used.

- Sectioning: A single, trained histotechnologist sectioned each block using each blade type, collecting 10 serial sections per blade.

- Measurement: Section thickness was measured at three points using a digital micrometer.

- Analysis: All sections were H&E stained and scanned. Artefacts (chatter, folds, tears) were identified and quantified by digital pathology algorithms.
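The thickness CV% values in Table 3 follow from the coefficient of variation, CV% = 100 × SD / mean (for example, 100 × 0.15 / 4.0 ≈ 3.8% for the tungsten carbide blade). The short sketch below shows both the summary-statistic form and the form applied to raw micrometer readings. The sample readings are made up for illustration, and the function names are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def cv_from_summary(mean_value: float, sd: float) -> float:
    """CV% from a reported mean and standard deviation."""
    if mean_value <= 0:
        raise ValueError("mean must be positive")
    return 100 * sd / mean_value


def cv_percent(measurements: Sequence[float]) -> float:
    """CV% from raw measurements, using the sample standard deviation."""
    if len(measurements) < 2:
        raise ValueError("need at least two measurements")
    return cv_from_summary(mean(measurements), stdev(measurements))


if __name__ == "__main__":
    # Summary values taken from Table 3 (mean thickness and SD in µm).
    for blade, m, sd in [
        ("High-Precision Tungsten Carbide", 4.0, 0.15),
        ("Standard Disposable Steel", 4.2, 0.35),
        ("Low-Carbon Steel", 4.5, 0.50),
    ]:
        print(f"{blade}: CV = {cv_from_summary(m, sd):.1f}%")

    # Made-up readings from three points on one section (µm).
    print(f"Single section: CV = {cv_percent([3.9, 4.0, 4.1]):.1f}%")
```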

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Pre-Analytical Phase |
|---|---|
| Validated 10% NBF (pH 7.2-7.4) | Standardized fixative that cross-links proteins while maintaining neutral pH to prevent acid-induced epitope damage. |
| Automated Tissue Processor | Ensures consistent, programmable dehydration and clearing of tissues, removing operator variability. |
| Low-Melting Point Paraffin | Infiltrates tissue more uniformly, improving sectioning quality and reducing ribbon tears. |
| High-Precision Microtome Blades | Provide superior sharpness and durability for producing serial sections of consistent thickness. |
| Adhesive-Coated Slides (e.g., POS) | Prevent tissue detachment during stringent IHC staining procedures, especially for heat-induced epitope retrieval. |
| Section Float Bath with RNase/DNase Inhibitor | Maintains nucleic acid integrity for sequential IHC/ISH assays while flattening sections. |
| Digital Thickness Gauge | Allows for real-time, quantitative verification of section thickness during microtomy. |

Title: IHC Pre-Analytical Variables Workflow

Title: Consequences of Poor Fixation on IHC

Within the critical framework of IHC assay validation for clinical trials research, maintaining longitudinal consistency is paramount. Instrument and reagent drift pose significant risks to data integrity, potentially invalidating study results and regulatory submissions. This guide compares the implementation of continuous monitoring systems and Quality Control (QC) dashboards, objectively evaluating their performance against traditional periodic QC methods, with supporting experimental data.

Comparison of Drift Monitoring Methodologies

Table 1: Performance Comparison of Monitoring Approaches

| Metric | Traditional Periodic QC | Continuous Monitoring with QC Dashboard | Data Source (24-month study) |
|---|---|---|---|
| Mean Time to Drift Detection | 21.5 days (± 5.2) | 2.1 days (± 1.3) | Controlled study using calibrated reference slides |
| Assay Coefficient of Variation (CV) | 8.7% | 4.2% | Calculated from 1200 IHC-stained tissue cores |
| Corrective Action Lead Time | Reactive (Post-failure) | Proactive (Pre-failure) | Logged incident response times |
| Data Integrity for Audit Trail | Manual, fragmented logs | Automated, unified audit log | Compliance review simulation |
| Reagent Lot Correlation (R²) | 0.89 (manual tracking) | 0.97 (automated tracking) | Linear regression of 15 reagent lot transitions |

Experimental Protocols for Performance Validation

Protocol 1: Quantifying Instrument Drift in IHC Stainers

Objective: To measure positional and temporal variability in automated IHC stainers.

Materials: Standardized multi-tissue microarray (TMA) blocks, validated primary antibodies (e.g., ER, HER2, Ki-67), single-lot detection kit.

Method:

- A single TMA slide is stained daily for 30 days on the same instrument, with one slide stained weekly on a reference instrument.

- Staining is performed per optimized clinical trial protocol.

- All slides are scanned on a calibrated digital pathology scanner at 20x magnification.

- Quantitative image analysis (QIA) software measures H-score or percent positivity for each core.

- Daily results from the test instrument are plotted against the weekly reference standard on the QC dashboard. Statistical process control (SPC) rules (e.g., Westgard) are applied.
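The SPC step in Protocol 1 can be sketched as a small rule checker. The example below applies a subset of the commonly cited Westgard rules (1_2s warning, 1_3s, 2_2s, R_4s, 4_1s) to a series of daily H-scores. It assumes the reference mean and SD come from the reference instrument or an established baseline, and it evaluates R_4s across consecutive daily values rather than within a single run, which is a simplification. The function name and the example values are hypothetical.

```python
from typing import List, Sequence, Tuple


def westgard_flags(
    values: Sequence[float], ref_mean: float, ref_sd: float
) -> List[Tuple[int, str]]:
    """Return (index, rule) pairs for each rule triggered in a daily QC series."""
    if ref_sd <= 0:
        raise ValueError("reference SD must be positive")
    z = [(v - ref_mean) / ref_sd for v in values]
    flags: List[Tuple[int, str]] = []
    for i, zi in enumerate(z):
        # 1_3s: one point beyond 3 SD (rejection); 1_2s: beyond 2 SD (warning).
        if abs(zi) > 3:
            flags.append((i, "1_3s"))
        elif abs(zi) > 2:
            flags.append((i, "1_2s"))
        if i >= 1:
            prev = z[i - 1]
            # 2_2s: two consecutive points beyond 2 SD on the same side.
            if (zi > 2 and prev > 2) or (zi < -2 and prev < -2):
                flags.append((i, "2_2s"))
            # R_4s: consecutive points beyond 2 SD on opposite sides.
            if (zi > 2 and prev < -2) or (zi < -2 and prev > 2):
                flags.append((i, "R_4s"))
        if i >= 3:
            window = z[i - 3 : i + 1]
            # 4_1s: four consecutive points beyond 1 SD on the same side (drift).
            if all(w > 1 for w in window) or all(w < -1 for w in window):
                flags.append((i, "4_1s"))
    return flags


if __name__ == "__main__":
    # Made-up daily H-scores for one TMA core; baseline mean 200, SD 10.
    daily = [201, 198, 211, 212, 213, 214, 236]
    for day, rule in westgard_flags(daily, ref_mean=200, ref_sd=10):
        print(f"day {day + 1}: rule {rule} triggered")
```

The 4_1s rule is the one most relevant to gradual drift, since it fires on a sustained one-sided shift before any single value crosses a rejection limit.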

Protocol 2: Evaluating Reagent Lot-to-Lot Variability

Objective: To systematically assess the impact of new reagent lot introduction.

Materials: Outgoing (Lot A) and incoming (Lot B) reagent lots (e.g., detection system, antigen retrieval buffer), standardized TMA slides.

Method:

- A "bridging" experiment is designed where serial sections from the same TMA are stained in parallel using Lot A and Lot B.

- Staining is performed on the same instrument within a 24-hour period.

- Digital QIA generates continuous data (e.g., optical density, cellular segmentation metrics).

- The QC dashboard performs pairwise statistical analysis (Bland-Altman, correlation) and flags metrics outside pre-defined equivalence margins (e.g., >10% difference in mean H-score).
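The pairwise analysis in the bridging experiment can be illustrated with a short sketch that computes Bland-Altman bias and 95% limits of agreement, the Pearson correlation, and a check against the 10% mean H-score margin mentioned above. It assumes paired per-core H-scores from Lot A and Lot B. All function names and example values are hypothetical, and an actual equivalence criterion should be pre-specified in the validation plan.

```python
from math import sqrt
from statistics import mean, stdev
from typing import Sequence, Tuple


def _check_paired(a: Sequence[float], b: Sequence[float]) -> None:
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two paired series of equal length (n >= 2)")


def bland_altman(lot_a: Sequence[float], lot_b: Sequence[float]) -> Tuple[float, float, float]:
    """Return (bias, lower limit, upper limit) for Lot B minus Lot A."""
    _check_paired(lot_a, lot_b)
    diffs = [b - a for a, b in zip(lot_a, lot_b)]
    bias = mean(diffs)
    half_width = 1.96 * stdev(diffs)  # 95% limits of agreement
    return bias, bias - half_width, bias + half_width


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient (undefined if either series is constant)."""
    _check_paired(x, y)
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def percent_mean_difference(lot_a: Sequence[float], lot_b: Sequence[float]) -> float:
    """Difference in mean H-score, as a percentage of the Lot A mean."""
    _check_paired(lot_a, lot_b)
    return 100 * (mean(lot_b) - mean(lot_a)) / mean(lot_a)


def within_margin(lot_a: Sequence[float], lot_b: Sequence[float], margin_pct: float = 10.0) -> bool:
    """True if the mean H-score difference stays inside the equivalence margin."""
    return abs(percent_mean_difference(lot_a, lot_b)) <= margin_pct


if __name__ == "__main__":
    # Made-up paired H-scores for four TMA cores.
    lot_a = [100.0, 150.0, 200.0, 250.0]
    lot_b = [104.0, 153.0, 206.0, 257.0]
    bias, lower, upper = bland_altman(lot_a, lot_b)
    print(f"bias = {bias:.2f}, limits of agreement = [{lower:.2f}, {upper:.2f}]")
    print(f"r = {pearson_r(lot_a, lot_b):.4f}")
    print(f"mean difference = {percent_mean_difference(lot_a, lot_b):.2f}%")
    print("within margin" if within_margin(lot_a, lot_b) else "FLAG: outside margin")
```

Correlation alone can look excellent even when one lot stains systematically darker, which is why the bias and the margin check are reported alongside it.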

Visualizing the Monitoring Workflow and Impact