How to Validate Models with Limited Data: Expert Strategies for Biomedical Research

This article provides researchers, scientists, and drug development professionals with a comprehensive, practical framework for robust model validation under data scarcity.

How to Validate Models with Limited Data: Expert Strategies for Biomedical Research

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive, practical framework for robust model validation under data scarcity. We address four critical areas: the foundational principles defining limited data contexts and the value of validation; a detailed exploration of modern methodological toolkits including Bayesian, transfer learning, and synthetic data approaches; systematic troubleshooting to overcome common pitfalls and optimize model design for small-n studies; and rigorous validation paradigms for comparative evaluation and establishing credibility. This guide synthesizes current best practices to build confidence in predictive models when experimental validation is constrained.

Defining the Challenge: What 'Limited Data' Really Means for Model Credibility

Technical Support Center: Troubleshooting Guides & FAQs

FAQ: General Model Validation with Limited Data

Q1: How can I validate a predictive model when I have fewer than 20 experimental samples (small-n problem)? A: Traditional train-test splits are unreliable. Employ iterative resampling methods. Below is a comparison of common techniques.

| Method | Description | Recommended Use Case | Key Consideration |

|---|---|---|---|

| Leave-One-Out Cross-Validation (LOOCV) | Iteratively train on n-1 samples, test on the left-out sample. | Ultra-small n (e.g., n<15). | High computational cost, high variance in error estimate. |

| k-Fold Cross-Validation (k=n or 5) | Split data into k folds; use each fold as a test set once. | Small-to-moderate n (e.g., n=20-50). | For n<20, use k=n (equivalent to LOOCV) or k=5 with stratification. |

| Bootstrap Validation | Repeatedly sample with replacement to create training sets, using unsampled data as test. | Small n for estimating confidence intervals. | Optimistic bias; use the .632 or .632+ bootstrap correction. |

| Permutation Testing | Randomly shuffle the outcome labels to establish a null distribution of model performance. | Any n, to assess statistical significance. | Provides a p-value, not a performance metric like accuracy. |

Experimental Protocol: k-Fold Cross-Validation for Small-n

- Preprocessing: Normalize and scale all features using parameters from the entire dataset to avoid data leakage.

- Stratification: If the outcome is categorical, ensure each fold preserves the percentage of samples for each class. Use

StratifiedKFold(from scikit-learn). - Iteration: For each of the k folds:

- Hold the selected fold as the temporary test set.

- Train the model on the remaining k-1 folds.

- Apply the trained model to the held-out fold and store the performance metrics (e.g., AUC-ROC, RMSE).

- Aggregation: Calculate the mean and standard deviation of the performance metrics across all k folds. Report the mean as expected performance and the std as its variability.

Diagram Title: Small-n k-Fold Cross-Validation Workflow

Q2: My high-content screen failed for half the plates due to a technical error, resulting in Missing Not At Random (MNAR) data. How do I proceed? A: This is a Partial Observability challenge. Imputing missing data with standard methods (mean, KNN) can introduce severe bias. Follow this diagnostic and mitigation workflow.

Diagram Title: Diagnostic Flow for Missing Data Types

Experimental Protocol: Pattern Analysis for MNAR

- Create a Missingness Mask: Generate a binary matrix

MwhereM_ij = 1if data for feature j in sample i is missing. - Correlate Mask with Outcomes: Perform a statistical test (e.g., t-test) to compare the distribution of the observed outcome

Y_obsagainst the missingness patternM. A significant result indicates MNAR. - Mitigation Strategy - Selection Models: If MNAR is confirmed, consider a two-stage model.

- Stage 1 (Selection): Model the probability of a data point being observed using logistic regression:

P(M=1 | X, Z), where Z are known covariates potentially related to the cause of missingness (e.g., plate ID, batch). - Stage 2 (Outcome): Model the primary outcome, incorporating the inverse probability of selection weights from Stage 1 to correct for the biased sample.

- Stage 1 (Selection): Model the probability of a data point being observed using logistic regression:

The Scientist's Toolkit: Key Reagents & Materials for Limited-Data Studies

| Item | Function in Context | Critical Specification / Note |

|---|---|---|

| CRISPR Knockout/Knockdown Pools | Enables high-content screening with fewer replicates by targeting multiple genes per well, increasing data density. | Use libraries with unique barcodes for deconvolution. Essential for small-n inference on gene pathways. |

| Multiplex Immunoassay Kits (e.g., Luminex, MSD) | Measures dozens of analytes (cytokines, phospho-proteins) from a single small-volume sample, maximizing information per subject. | Validate cross-reactivity. Crucial for longitudinal studies with scarce patient samples. |

| Single-Cell RNA-Seq Library Prep Kits | Transforms a limited tissue sample into thousands of data points, mitigating small-n at the cost of introducing compositional data. | Include Unique Molecular Identifiers (UMIs) to correct for amplification bias. |

| Stable Isotope Labeling Reagents (SILAC, TMT) | Allows multiplexing of proteomic samples, enabling comparison of multiple conditions within a single MS run to control for technical variance. | Ensure labeling efficiency >99%. Key for paired experimental designs with limited replicates. |

| Inhibitor/Observable Cocktails | Used in pathway perturbation studies to create "partial observability" conditions in vitro, serving as positive controls for MNAR method development. | Document exact concentrations and exposure times. |

Troubleshooting Guides & FAQs

This technical support center addresses common challenges in validating predictive models with limited experimental data, a critical component of mitigating translation risk in drug development.

FAQ 1: How do I know if my in silico ADMET model is sufficiently validated before moving to animal studies?

Answer: Insufficient validation at this stage is a primary cause of late-stage attrition. Use a multi-faceted approach:

- Internal Validation: Perform rigorous k-fold cross-validation (k=5 or 10) and leave-one-out cross-validation to assess predictability within your dataset.

- External Validation: This is non-negotiable. You must test the model on a completely independent, blinded dataset not used in training. This can be generated through a small, targeted in-house experiment or obtained from a public repository.

- Benchmarking: Compare your model's performance against established benchmarks or simple baseline models (e.g., linear regression). If a complex model doesn't significantly outperform a simple one, it is likely overfit.

Key Performance Indicator (KPI) Table for Model Validation:

| Validation Type | Recommended Metric | Minimum Threshold for Proceeding | Ideal Target |

|---|---|---|---|

| Internal (Cross-Validation) | Q² (Coefficient of Determination) | > 0.5 | > 0.7 |

| External (Test Set) | R²ₑₓₜ (External R²) | > 0.4 | > 0.6 |

| External (Test Set) | RMSEₑₓₜ (Root Mean Square Error) | Context-dependent; must be < assay variability. | Significantly lower than training RMSE. |

| Predictive Reliability | Concordance Correlation Coefficient (CCC) | > 0.85 | > 0.9 |

FAQ 2: My organ-on-a-chip model shows promising efficacy, but how do I troubleshoot its lack of correlation with historical in vivo data?

Answer: This discrepancy often arises from incomplete representation of systemic physiology.

- Checklist:

- Medium Composition: Verify that your circulating medium contains physiologically relevant levels of key proteins (e.g., albumin for compound binding) and hormones.

- Metabolic Competence: Ensure relevant metabolic enzymes (e.g., CYP450 isoforms) are expressed and active at in vivo-like levels. Consider co-culturing with hepatocytes.

- Shear Stress & Mechanical Cues: Confirm that applied fluid shear stress matches the physiological range for your target tissue.

- Non-Cellular Components: Are you incorporating an appropriate extracellular matrix (ECM)? The wrong ECM can drastically alter signaling.

Experimental Protocol: Establishing Metabolic Competence in a Liver-on-a-Chip Model

- Seed primary human hepatocytes in the chip's main chamber under flow.

- Culture for 5-7 days to stabilize phenotype and enzyme expression.

- Dose with a panel of probe substrates (e.g., Phenacetin for CYP1A2, Bupropion for CYP2B6).

- Collect effluent medium at timed intervals (e.g., 0, 15, 30, 60, 120 min).

- Analyze metabolite formation using LC-MS/MS.

- Calculate intrinsic clearance (CLᵢₙₜ) and compare to published human hepatocyte suspension data. A correlation coefficient (r) > 0.8 indicates good metabolic competence.

FAQ 3: What are the critical steps to validate a machine learning model for compound screening when I have less than 100 confirmed active/inactive data points?

Answer: With limited data, your strategy must prioritize robustness over complexity.

- Immediate Actions:

- Use Simple Models: Start with Random Forest or k-Nearest Neighbors rather than deep neural networks to avoid overfitting.

- Apply Heavy Regularization: Use techniques like L1/L2 regularization, dropout, and early stopping if using neural networks.

- Data Augmentation: Employ realistic molecular transformation (e.g., generating similar tautomers, stereoisomers) to artificially expand your training set.

- Utilize Transfer Learning: Pre-train your model on a large, public dataset (e.g., ChEMBL) for a related task (e.g., general toxicity prediction), then fine-tune it on your small, specific dataset.

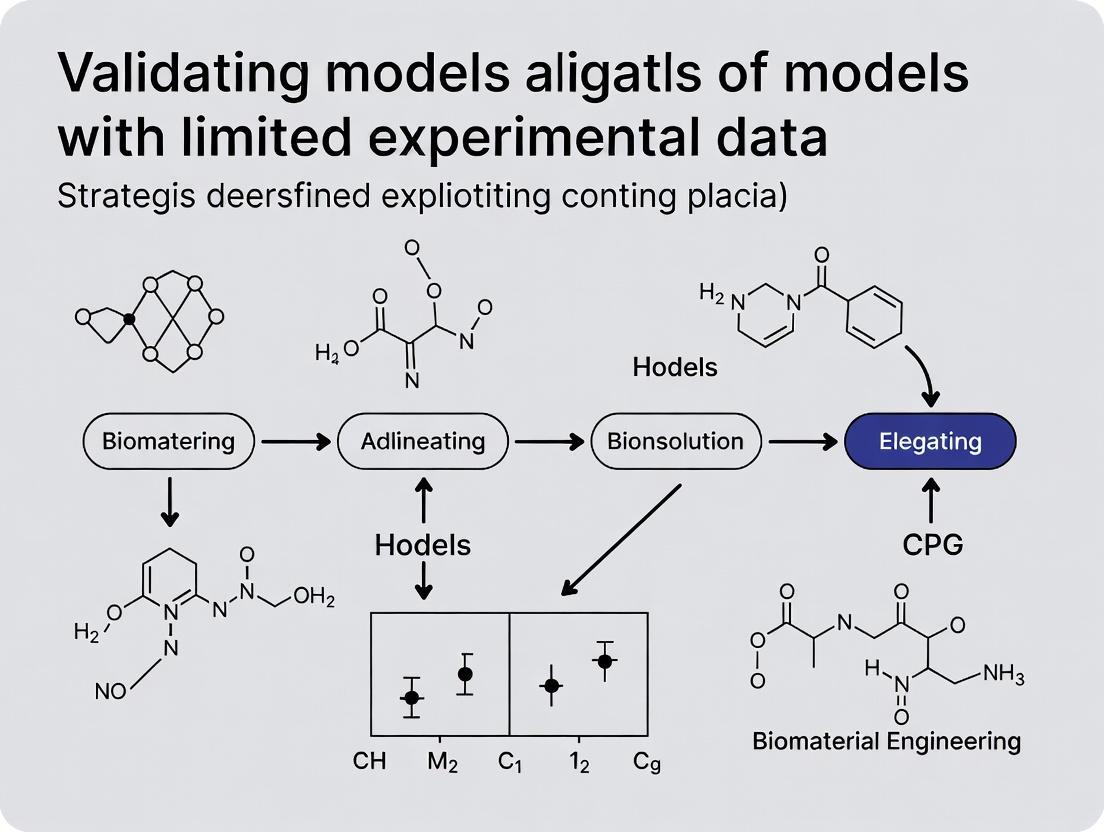

Visualization: Model Validation Workflow for Limited Data

Title: Validation Workflow for Small Datasets

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function in Validation | Key Consideration |

|---|---|---|

| Primary Human Cells (e.g., hepatocytes, iPSC-derived cardiomyocytes) | Provides physiologically relevant cellular response; gold standard for in vitro to in vivo extrapolation (IVIVE). | Donor variability is high; use pooled donors (n≥3) for robustness. |

| LC-MS/MS Grade Solvents & Standards | Essential for generating high-quality pharmacokinetic/toxicokinetic data for model training and validation. | Purity and consistency directly impact quantitative accuracy. |

| ECM Hydrogels (e.g., Matrigel, collagen I, fibrin) | Recapitulates the 3D mechanical and biochemical microenvironment for complex culture models (organoids, OoC). | Batch variability is significant; pre-test each lot for key markers. |

| Validated Antibody Panels for Flow Cytometry | Enables precise phenotyping of complex co-cultures to ensure consistent cellular composition. | Must be titrated and validated for your specific cell type and instrument. |

| Stable Isotope-Labeled Internal Standards (SIL-IS) | Critical for accurate quantification in targeted metabolomics and proteomics assays for biomarker discovery. | Use isotope-labeled analogs of your target analytes for best precision. |

| Benchmark Compound Set (e.g., FDA-approved drugs, well-characterized toxins) | Serves as a positive/negative control set to calibrate and benchmark new predictive models. | Curate a set with diverse mechanisms and known clinical outcomes. |

Visualization: Key Signaling Pathways in Validation of Cardiotoxicity Models

Title: Cardiotoxicity Validation Pathways

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My validation loss starts increasing after a few epochs while training loss continues to decrease. What steps should I take? A: This is a classic sign of overfitting. Recommended actions:

- Immediate Action: Reduce model complexity. Decrease the number of layers or units per layer by at least 30-50% and retrain.

- Implement Regularization: Add L2 weight regularization (λ=0.001) or Dropout (rate=0.5 for dense layers) to your architecture.

- Expand Your Data: Apply aggressive data augmentation. For image data, use rotations, flips, and zooms. For tabular data from limited experiments, consider synthetic data generation via SMOTE or Gaussian noise injection (≤5%).

- Stop Early: Implement Early Stopping with a patience of 10 epochs monitoring validation loss.

Q2: I have very limited experimental data points (n<50). What model validation strategy should I use? A: With severely limited data, traditional train/test splits are unreliable.

- Use Nested Cross-Validation: This is the gold standard. Implement an outer loop (k=5) for performance estimation and an inner loop (k=3) for model/parameter selection.

- Apply Bayesian Methods: Use Bayesian Ridge Regression or Gaussian Processes, which naturally incorporate uncertainty and are less prone to overfit on small

n. - Report Confidence Intervals: Always report performance metrics with 95% confidence intervals from bootstrapping (≥1000 iterations).

Q3: How do I choose between a simpler linear model and a complex deep neural network for my dataset? A: Base your decision on the estimated Sample Complexity of your model versus your available data.

Table 1: Model Selection Guide Based on Available Data

| Available Labeled Data Points | Recommended Model Class | Key Rationale | Expected Variance |

|---|---|---|---|

| n < 100 | Linear/Logistic Regression with regularization | High bias, low variance. Sample complexity is low. | Low |

| 100 < n < 1,000 | Shallow NN (1-2 hidden layers), SVM, Random Forest | Balances capacity and generalization. | Medium |

| 1,000 < n < 10,000 | Moderately deep CNN/RNN, Gradient Boosting | Sufficient data to fit more parameters. | Medium to High |

| n > 10,000 | Deep Neural Networks (e.g., ResNet, BERT variants) | High capacity required; data can constrain it. | High (if managed) |

Protocol 1: Nested Cross-Validation for Small Datasets

- Define Outer Loop: Split your entire dataset

Dinto 5 non-overlapping folds. Fori = 1 to 5: - Hold Out Test Set: Set fold

iaside as the final test setTest_i. - Define Inner Loop: Use the remaining 4 folds (

D_train_i) as the inner data. - Hyperparameter Tuning: Split

D_train_iinto 3 folds. Perform 3-fold cross-validation on this inner set for each combination of hyperparameters (e.g., learning rate, layer size, regularization strength). - Select Best Model: Choose the hyperparameters yielding the best average inner CV performance.

- Final Training & Evaluation: Train a new model on the entire

D_train_iusing the best hyperparameters. Evaluate it on the held-outTest_ito get an unbiased performance scoreS_i. - Aggregate: The final model performance is the average of all

S_ifrom the 5 outer folds.

Q4: What are the best practices for using regularization techniques effectively? A: Regularization adds constraints to limit model complexity.

- L1/L2 Regularization: Start with L2 (weight decay). A good heuristic is to set λ =

1 / (10 * n)wherenis your sample size. Monitor weight magnitudes. - Dropout: Apply before activation layers. Use a rate of 0.2-0.5. Higher rates for larger layers. Disable during validation/testing.

- Early Stopping: Monitor validation loss. Set patience based on epoch size; a good default is 10% of total planned epochs.

Q5: How can I generate a learning curve to diagnose overfitting? A: Plot model performance vs. training set size. Protocol 2: Generating a Diagnostic Learning Curve

- Reserve a fixed, held-out validation set (e.g., 20% of your data).

- Start with a small subset (e.g., 10%) of your remaining training data.

- Train your model on this subset and record the score on both this training subset and the held-out validation set.

- Gradually increase the training subset size (e.g., 20%, 40%, 60%, 80%, 100%).

- Plot the two curves. A growing gap between training and validation scores indicates overfitting.

Diagnostic Learning Curve Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for Validating Models with Limited Data

| Reagent / Solution | Primary Function in Context |

|---|---|

| scikit-learn | Provides robust implementations of nested cross-validation, simple linear models, regularization (Ridge/Lasso), and learning curve utilities. |

| SMOTE (Synthetic Minority Over-sampling Technique) | Generates synthetic samples for underrepresented classes in small, imbalanced experimental datasets to improve model generalization. |

| GPy / GPflow | Enables Gaussian Process regression modeling, which is ideal for small n as it provides probabilistic predictions and inherent uncertainty quantification. |

| TensorFlow / PyTorch (with Dropout & L2 modules) | Frameworks for building complex models with built-in regularization layers (Dropout, WeightDecay) to explicitly control overfitting. |

Bootstrapping Script (Custom or via sklearn.resample) |

Creates multiple resampled datasets to estimate confidence intervals for performance metrics, critical for reporting reliability with limited data. |

Bayesian Optimization Library (e.g., scikit-optimize, BayesianOptimization) |

Efficiently selects hyperparameters with fewer trials than grid search, preserving precious data points for training rather than exhaustive search. |

Validation Strategy for Limited Data

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During cross-validation with very small datasets (n<30), my model performance metrics (e.g., RMSE, AUC) vary wildly between folds. How can I determine if my model is truly acceptable? A: High variance in small-sample cross-validation is expected. To define an acceptable benchmark:

- Calculate the Null Benchmark: Establish the performance of a simple, interpretable baseline model (e.g., mean predictor for regression, majority class for classification) using the same CV procedure.

- Use Confidence Intervals: Report performance metrics with confidence intervals (e.g., 95% CI via bootstrap). An acceptable model should have its lower CI bound above the null benchmark's upper bound.

- Employ Bayesian Methods: Consider Bayesian models that provide posterior predictive distributions, offering a more nuanced view of expected performance under data scarcity.

Q2: What are the minimum performance thresholds for a predictive model in early-stage drug discovery to be considered "promising" for further validation? A: Absolute thresholds are context-dependent, but general benchmarks for limited-data contexts in early discovery include:

| Model Type | Typical Metric | Minimum Acceptable Benchmark (vs. Random/Simple Baseline) | Realistic Goal (Limited Data) |

|---|---|---|---|

| Binary Classification (e.g., Active/Inactive) | AUC-ROC | > 0.65 | 0.70 - 0.75 |

| Balanced Accuracy | > 55% | > 60% | |

| Regression (e.g., pIC50) | Mean Absolute Error (MAE) | Lower than Null Model's MAE | MAE < 0.7 (for pIC50) |

| R² | > 0.1 | > 0.3 |

Note: These must be validated via rigorous resampling. The primary goal is statistically significant improvement over a relevant naive baseline.

Q3: My dataset has severe class imbalance (e.g., 95% negatives, 5% positives). Which metrics should I use to set realistic goals? A: Accuracy is misleading. Define benchmarks using:

- Primary: Precision-Recall AUC (PR-AUC). A model better than random will have PR-AUC > proportion of positive class (0.05 in your case).

- Secondary: Matthews Correlation Coefficient (MCC) or Balanced Accuracy. An MCC > 0 is better than random guessing.

- Protocol: Use stratified sampling in all resampling steps. Report the performance of a weighted baseline model in your benchmark table.

Q4: How do I create a robust performance benchmark when I have no external test set available? A: Implement a nested (double) cross-validation protocol to simulate the model development and evaluation process without data leakage.

Experimental Protocol: Nested Cross-Validation for Benchmarking

Objective: To reliably estimate model performance and define acceptability benchmarks using only limited internal data.

Methodology:

- Define Outer Loop (Evaluation): Split data into k (e.g., 5) folds. Hold each fold out once as the test set.

- Define Inner Loop (Model Selection/Tuning): On the remaining (k-1) folds, perform another cross-validation (e.g., 5-fold) to select hyperparameters or choose between algorithms.

- Train & Evaluate: Train the final model with the chosen configuration on all (k-1) folds and evaluate on the held-out outer test fold.

- Aggregate Results: Collect all outer fold test predictions to compute final performance metrics and their variance.

- Compare to Baseline: Run the identical nested CV process on your chosen simple baseline model (e.g., logistic regression with only key features, or a mean predictor).

Q5: How can I visualize the relationship between data quantity, model complexity, and expected performance to set goals? A: Create a learning curve analysis. This diagnostic plots model performance (both training and validation scores) against increasing training set sizes or model complexity.

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Limited-Data Model Validation | Example / Note |

|---|---|---|

| scikit-learn (Python) | Provides robust implementations for nested cross-validation, learning curves, and a wide array of performance metrics (e.g., cross_val_score, learning_curve, RepeatedStratifiedKFold). |

Essential for implementing the experimental protocols. |

| imbalanced-learn | Offers specialized resamplers (e.g., SMOTE, SMOTENC) and metrics (PR-AUC) for handling class imbalance in small datasets within CV loops. | Use inside the inner CV loop only to avoid leakage. |

| Bayesian Regression/Classification Libraries (e.g., PyMC3, Stan) | Allow for prior knowledge incorporation and provide full posterior predictive distributions, quantifying uncertainty—critical when data is scarce. | Helps set probabilistic performance benchmarks. |

| Bootstrapping Scripts | For generating confidence intervals around any performance metric when traditional CV variance is still too high. | Simple method to estimate stability of benchmarks. |

| Simple Baseline Model Scripts | Code to implement a naive predictor (mean/mode), a linear model with 1-2 key features, or a random forest with very shallow trees. | Serves as the crucial comparison point for "acceptability." |

| Visualization Libraries (Matplotlib, Seaborn) | For creating learning curves, performance distribution box plots (model vs. baseline), and calibration plots. | Necessary for communicating benchmark results clearly. |

This technical support center is designed for researchers, scientists, and drug development professionals working within the critical constraint of limited experimental data. The following troubleshooting guides and FAQs are framed to support strategic decisions in model validation, a core component of advancing research on "Strategies for validating models with limited experimental data."

Troubleshooting Guides & FAQs

Q1: Our predictive model shows high training accuracy but poor performance on a small, independent test set. What are the primary diagnostic steps?

A: This typically indicates overfitting. Follow this protocol:

- Complexity Check: Reduce model complexity (e.g., decrease polynomial degrees, increase regularization parameters).

- Data Augmentation: Apply techniques like SMOTE for tabular data or affine transformations for image data to artificially expand your training dataset.

- Cross-Validation Re-run: Employ Leave-One-Out (LOO) or Repeated K-Fold Cross-Validation (e.g., 10-fold repeated 5 times) on your entire available dataset to get a more reliable performance estimate.

- Feature Importance Analysis: Use SHAP or permutation importance to identify and retain only the most robust predictors, reducing noise.

Q2: We have only 15 data points for a rare cell subtype response. How can we possibly validate a dose-response model?

A: With extremely low N, the strategy shifts from traditional validation to rigorous robustness assessment.

- Protocol - Bootstrapping with Confidence Intervals:

- Resample your 15 data points with replacement to create many (e.g., 1000) new datasets of size 15.

- Fit your model (e.g., 4-parameter logistic curve) to each bootstrap sample.

- Calculate the parameter of interest (e.g., IC50) for each fit.

- The 2.5th and 97.5th percentiles of the resulting IC50 distribution form a 95% confidence interval. A narrow interval suggests robustness despite limited data.

- Prior Knowledge Integration: Use Bayesian methods to incorporate relevant historical data or mechanistic priors into your model, formally reducing the dependence on new experimental data points.

Q3: What are the best practices for splitting very small datasets (<50 samples) for training and testing?

A: Avoid simple hold-out splits. Use resampling-based methods as per the comparative table below.

Table 1: Comparison of Validation Strategies for Small Datasets

| Method | Description | Recommended Dataset Size (N) | Key Advantage | Key Disadvantage |

|---|---|---|---|---|

| Hold-Out Validation | Single, random train/test split. | N > 10,000 | Simple, fast. | High variance in estimate with small N. |

| k-Fold Cross-Validation | Data split into k folds; each fold used once as test set. | N > 100 | Better use of data than hold-out. | Can be biased for tiny N; high computational cost. |

| Leave-One-Out (LOO) CV | Each single data point is used as the test set once. | N < 100 | Maximizes training data, low bias. | High variance, computationally expensive. |

| Repeated k-Fold CV | k-Fold process repeated multiple times with random splits. | N < 100 | More stable performance estimate. | Very high computational cost. |

| Bootstrapping | Models trained on resampled datasets with replacement. | N < 50 | Provides confidence intervals, works on very small N. | Can be overly optimistic if not corrected. |

Q4: How do we validate a mechanistic systems biology model when wet-lab validation experiments are prohibitively expensive?

A: Employ a tiered in silico validation framework before any lab work.

- Internal Consistency: Check if model outputs are consistent with its own mechanistic assumptions under edge-case simulations.

- Qualitative Comparison: Does the model reproduce known, non-quantitative behaviors (e.g., Pathway A inhibition leads to upregulation of Protein B)?

- Quantitative Face Validation: Compare to any existing, sparse literature data (e.g., one or two published IC50 values).

- Sensitivity Analysis: Perform global sensitivity analysis (e.g., Sobol indices) to identify the most influential parameters. These become top candidates for targeted experimental validation, maximizing resource efficiency.

Experimental Protocol: Bootstrapping for Dose-Response Curves with Limited Data

Objective: To estimate the confidence interval for an IC50 value from a limited set of dose-response measurements.

Materials:

- Dataset of 10-20 dose-response points.

- Statistical software (R, Python with SciPy/statsmodels).

Procedure:

- Data Preparation: Organize your data as a matrix of dose (log10 concentration) and response (e.g., % inhibition).

- Bootstrap Resampling: For i = 1 to B (B = 1000+):

- Randomly select N samples from your dataset with replacement, creating a new bootstrap sample.

- Fit a 4-parameter logistic (4PL) model:

Response = Bottom + (Top-Bottom)/(1+10^((LogIC50-LogDose)*HillSlope)). - Record the fitted LogIC50.

- Analysis:

- Sort the B LogIC50 estimates.

- The confidence interval is defined by the percentiles (e.g., 2.5th and 97.5th for 95% CI).

- Report the median and confidence interval. The width of the CI directly communicates the uncertainty from data limitation.

Diagram 1: Small N Validation Strategy Decision Flow

Diagram 2: Key Signaling Pathway for a Generic Drug Target (e.g., Receptor Tyrosine Kinase)

The Scientist's Toolkit: Research Reagent Solutions for Limited-Data Validation

Table 2: Essential Reagents & Tools for Sparse-Data Research

| Item | Function in Validation Context | Example/Supplier |

|---|---|---|

| Recombinant Proteins/Purified Targets | Enable highly controlled, low-variability biochemical assays (e.g., SPR, enzymatic activity) to generate precise, reproducible data points. | Sino Biological, R&D Systems. |

| Validated Phospho-Specific Antibodies | Critical for targeted, multiplexed measurement of key signaling nodes (e.g., p-ERK, p-AKT) from minute sample volumes via Western blot or Luminex. | Cell Signaling Technology. |

| CRISPR/Cas9 Knockout Kits | Generate isogenic control cell lines to create definitive negative control data points, strengthening causal inference in cellular models. | Synthego, Horizon Discovery. |

| LC-MS/MS Grade Solvents & Columns | Ensure maximal sensitivity and reproducibility in mass spectrometry, allowing quantification of more analytes from a single, small sample. | Thermo Fisher, Agilent. |

| Bayesian Statistical Software | Implement priors and hierarchical models to formally incorporate historical data or mechanistic knowledge, augmenting sparse new data. | Stan (Stan Dev. Team), PyMC3. |

| Synthetic Data Generation Algorithms | Create realistic in-silico data to test model robustness and explore edge cases beyond the scope of limited experimental data. | SMOTE (imbalanced-learn), GANs (TensorFlow). |

The Validation Toolkit: Practical Methods for Small and Sparse Datasets

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My nested cross-validation performance estimate is much lower than my simple cross-validation estimate. Which one is correct, and what does this indicate?

A: The nested cross-validation (NCV) result is the more reliable, unbiased estimate. The discrepancy suggests that your model is likely overfitting during the hyperparameter tuning phase in simple CV. The outer loop of NCV provides an unbiased assessment because it evaluates the entire model selection process on data not used for tuning. Trust the NCV estimate as your true expected performance on new data. This is critical in drug development to avoid overly optimistic projections.

Q2: During bootstrapping, my error estimate has very high variance across different random seeds. Is this normal, and how can I stabilize it?

A: High variance in bootstrapping estimates can occur, especially with small datasets (common in early-stage drug research). This is a sign of instability.

- Troubleshooting Steps:

- Increase Replicates: Increase the number of bootstrap iterations (B) from the common default of 200 to 1000 or 5000. Report the mean and confidence interval.

- Use the .632+ Estimator: The standard bootstrap (0.632 estimator) can be biased. Switch to the more robust .632+ estimator, which better corrects for optimism, particularly for overfit models.

- Check Data Distribution: For highly skewed or multimodal data, consider stratified bootstrapping within classes or conditions.

Q3: In Monte Carlo cross-validation (MCCV), what is the optimal split ratio (e.g., 70/30 vs 80/20) and number of iterations?

A: There is no universal optimum; it depends on your data size and objective.

- Small Datasets (< 100 samples): Use a higher training ratio (e.g., 80/20 or 90/10) to ensure the model has enough data to learn. Perform a high number of iterations (e.g., 500-1000) to compensate for the variance in each split.

- Larger Datasets: A 70/30 or 60/40 split is often adequate. The number of iterations can be lower (e.g., 200-500), as the variance across splits diminishes.

- Protocol: Always perform a small sensitivity analysis: run MCCV with different split ratios and plot the distribution of performance scores. The ratio that yields a stable, low-variance estimate is preferable for your specific dataset.

Q4: How do I choose between these three techniques for my specific validation problem with limited biological replicates?

A: The choice is guided by your dataset size and primary goal.

- Goal: Unbiased performance estimation with hyperparameter tuning → Use Nested CV. It is the gold standard but computationally expensive.

- Goal: Estimating model stability and confidence intervals → Use Bootstrapping. Excellent for small N, provides robust CI estimates for any performance metric.

- Goal: Approximating expected performance with flexible data usage → Use Monte Carlo CV. More flexible than standard k-fold CV, allows control over training set size.

Comparative Table of Resampling Techniques

| Technique | Primary Use Case | Key Advantage | Key Disadvantage | Recommended for Limited Data? |

|---|---|---|---|---|

| Nested CV | Unbiased error estimation when tuning is required. | No information leak; most trustworthy estimate. | Very high computational cost. | Yes, if computationally feasible. |

| Bootstrapping | Estimating confidence intervals & model stability. | Makes efficient use of all data; good for very small N. | Can produce optimistic bias (.632+ helps). | Yes, particularly effective. |

| Monte Carlo CV | Flexible performance estimation. | Control over training/test size; less variance than LOOCV. | Can have high variance if iterations are too few. | Yes, with sufficient iterations. |

Experimental Protocol: Implementing Nested Cross-Validation for a QSAR Model

This protocol is framed within a thesis on validating predictive models for compound activity with limited high-throughput screening data.

1. Objective: To obtain a robust, unbiased estimate of the predictive R² for a Random Forest QSAR model where both feature selection and hyperparameter tuning are required.

2. Materials & Data: A dataset of 150 compounds with 200 molecular descriptors (features) and a continuous bioactivity endpoint (pIC50).

3. Methodology:

* Outer Loop (Performance Estimation): Perform 10-fold cross-validation. This splits the data into 10 held-out test sets.

* Inner Loop (Model Selection): For each of the 10 outer training folds, run an independent 5-fold cross-validation to tune hyperparameters (e.g., max_depth, n_estimators) and perform recursive feature elimination.

* Model Training: For each outer fold, train a single final model on the entire 90% outer training set using the optimal hyperparameters and features identified in its inner loop.

* Testing: This final model predicts the completely unseen 10% outer test fold. The predictions from all 10 outer folds are aggregated.

* Performance Calculation: Calculate the R² between all true held-out values and the aggregated predictions.

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function in Validation Context |

|---|---|

| Scikit-learn (Python) | Primary library for implementing Nested CV, Bootstrapping, and MCCV via GridSearchCV, Resample, and ShuffleSplit. |

| MLr (R/Bioconductor) | Comprehensive framework for machine learning in R, with built-in support for nested resampling and bootstrapping. |

| .632+ Estimator Function | Custom script (R/Python) to correct bootstrap optimism, crucial for small-sample validation. |

| Stratified Resampling | Method to preserve class distribution in resampling folds for categorical endpoints, preventing skewed splits. |

| Parallel Computing Cluster | Essential for computationally intensive Nested CV on large descriptor sets or deep learning models. |

Diagrams

Nested CV Workflow for QSAR Validation

Bootstrapping Process for Error Estimation

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My informative prior is overwhelmingly dominating the posterior, making the data irrelevant. What went wrong? A: This typically indicates an incorrectly specified prior distribution with excessive precision (e.g., a standard deviation that is too small). Solution: Perform a prior predictive check. Simulate data from your prior model before observing your experimental data. If the simulated data falls outside a biologically plausible range, your prior is too informative. Re-specify your prior with a larger variance or consider using a weakly informative prior that regularizes without dominating.

Q2: During Posterior Predictive Checking (PPC), my model consistently generates data that fails to capture key features of my observed dataset. What does this signify? A: This is a model misfit, indicating your model structure is inadequate for your data-generating process. Troubleshooting Steps:

- Identify the discrepancy: Use multiple test statistics (e.g., mean, variance, max/min) in your PPC.

- Diagnose: If the mean is off, check the likelihood link function. If variance is off (over/under-dispersion), consider switching distributions (e.g., Negative Binomial instead of Poisson for count data).

- Iterate: Modify the model (e.g., add hierarchical structure, covariates, or change the error distribution) and re-run PPC.

Q3: How do I quantify the choice between a weakly informative prior and a strongly informative prior derived from historical data? A: Use the Prior-Data Conflict Check. Compare the prior predictive distribution to your actual limited experimental data using a Bayes factor or a credibility interval check.

A very low probability (e.g., <0.05) suggests a conflict. You may need to down-weight the historical prior using methods like power priors or commensurate priors.

Q4: I have very limited new data (n<5). Can I still use Bayesian methods effectively? A: Yes, but the choice and justification of the prior become critical. The strategy is to use a robust or hierarchical prior structure.

- Method: Use a heavy-tailed prior (e.g., Student-t instead of Normal) for parameters to limit the influence of prior assumptions if the data strongly contradict them.

- Protocol: Fit the model with the robust prior. Compare the posterior to one derived from a conventional prior using posterior predictive checks on simulated future data. The robust model should yield more conservative, data-driven estimates.

Table 1: Comparison of Prior Specifications for a Potency (IC50) Parameter

| Prior Type | Distribution | Parameters (Mean, SD) | Rationale | Use-Case in Limited Data Context |

|---|---|---|---|---|

| Vague/Diffuse | Log-Normal | log(Mean)=1, SD=2 | Minimal information, allows data to dominate. | Default starting point; risk of implausible estimates. |

| Weakly Informative | Log-Normal | log(Mean)=1.5, SD=0.8 | Constrains to plausible orders of magnitude. | Default recommended; provides regularization. |

| Strongly Informative | Log-Normal | log(Mean)=2.0, SD=0.3 | Based on strong historical compound data. | N > 10 similar compounds; validate for conflict. |

| Robust (Heavy-tailed) | Student-t (on log scale) | df=3, location=1.5, scale=0.8 | Limits influence of prior tails if data are surprising. | Suspected prior-data conflict or high uncertainty. |

Table 2: Posterior Predictive Check Results for Two Dose-Response Models

| Model | Test Statistic (T) | Observed T (T_obs) | PPC p-value | Bayesian p-value | Interpretation |

|---|---|---|---|---|---|

| 4-Parameter Logistic (4PL) | Max Absolute Deviation | 0.15 | 0.42 | 0.41 | Good fit (p ~ 0.5). |

| 4-Parameter Logistic (4PL) | Residual Variance | 0.08 | 0.03 | 0.04 | Poor fit – underestimates variability. |

| 5-Parameter Logistic (5PL) | Max Absolute Deviation | 0.15 | 0.38 | 0.39 | Good fit. |

| 5-Parameter Logistic (5PL) | Residual Variance | 0.08 | 0.52 | 0.51 | Good fit – captures variance better. |

Experimental Protocols

Protocol 1: Conducting a Prior Predictive Check Objective: Validate the plausibility of specified prior distributions before observing new experimental data.

- Specify the full generative model: Define prior distributions P(θ) for all parameters θ and a likelihood P(y\|θ).

- Simulate: For

sin 1:S (S >= 1000):- Draw a parameter sample θs from the prior P(θ).

- Simulate a hypothetical dataset yreps from the likelihood P(y\|θs).

- Analyze Simulations: Calculate key scientific summary statistics (e.g., max response, EC50, hill slope) from each yreps.

- Visualize: Create a histogram or density plot of the simulated summary statistics.

- Evaluate: Ensure the range of simulated statistics encompasses biologically plausible outcomes. If not, revise priors.

Protocol 2: Formal Posterior Predictive Check (PPC) Workflow Objective: Assess the adequacy of a fitted Bayesian model to reproduce key features of the observed data.

- Fit the model to the observed data yobs to obtain the posterior distribution P(θ \| yobs).

- Define Test Quantities T(y): Choose one or more statistics (e.g., mean, 95th percentile, a custom goodness-of-fit measure).

- Generate Replicated Data: For

sin 1:S draws from the posterior:- Draw a parameter sample θs from P(θ \| yobs).

- Simulate a replicated dataset yreps from P(y \| θ_s).

- Calculate T(yreps) for each replication.

- Compare: Plot the distribution of T(yrep) against T(yobs). Calculate the Bayesian p-value: p = Pr(T(yrep) ≥ T(yobs) \| y_obs).

- Interpret: Extreme p-values (close to 0 or 1) indicate a mismatch between model and data for that test quantity.

Diagrams

Bayesian Modeling Workflow with Validation Checks

Posterior Predictive Check (PPC) Process

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Bayesian Analysis of Limited Data |

|---|---|

| Probabilistic Programming Language (PPL) (e.g., Stan, PyMC3/4, JAGS) | Core software for specifying Bayesian models, performing inference (MCMC, VI), and generating posterior predictive simulations. |

| Power Prior / Commensurate Prior Formulations | Mathematical frameworks to formally incorporate historical data or similar experiments, allowing dynamic discounting based on conflict with new data. |

| Sensitivity Analysis Scripts | Custom code to systematically vary prior hyperparameters and observe their impact on posterior conclusions, essential for audit trails. |

Visualization Libraries (e.g., bayesplot in R, arviz in Python) |

Specialized tools for creating trace plots, posterior densities, and posterior predictive check plots efficiently. |

| Calibrated Domain Expert Elicitation Protocols | Structured interview guides (e.g., SHELF) to translate expert biological/chemical knowledge into quantifiable prior distributions. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: I am fine-tuning a pre-trained image classifier for a new, very small dataset of histological images. The model converges quickly but performs no better than random chance on my validation set. What could be wrong? A1: This is a classic symptom of catastrophic forgetting or an excessively high learning rate for the new layers.

- Diagnosis: The pre-trained features are being distorted, or the new classification head is learning too aggressively.

- Solution Protocol:

- Freeze Early Layers: Keep the feature extractor (all convolutional blocks) frozen for the first few epochs. Train only the newly added fully connected layers.

- Use a Differential Learning Rate: Apply a much smaller learning rate (e.g., 1e-5) to the pre-trained layers and a larger one (e.g., 1e-3) to the new head. This allows for subtle refinement of features.

- Apply Strong Regularization: Use high dropout rates (0.5-0.7) in the new head and consider L2 regularization (weight decay).

- Validate Step-by-Step: Monitor loss on both training and validation sets after each epoch to detect overfitting immediately.

Q2: When using a pre-trained language model (e.g., BERT) for a small-molecule property prediction task, how do I effectively tokenize non-textual SMILES strings? A2: SMILES must be treated as a specialized language with a custom tokenizer.

- Diagnosis: Using standard word or subword tokenization will break important chemical semantics.

- Solution Protocol:

- Character-Level Tokenization: Treat each character (atom symbol, bond type, bracket) as a separate token (e.g., 'C', '=', '(', 'N').

- SMILES Pair Encoding (SPE): Use a learned, molecule-specific subword tokenization algorithm (like Byte-Pair Encoding for SMILES) to capture common fragments (e.g., 'C=O', 'c1ccccc1').

- Implementation: Use libraries like

tokenizers(Hugging Face) to train a BPE tokenizer on a large corpus of relevant SMILES strings (e.g., from PubChem). Initialize your model's embedding layer with this custom vocabulary.

Q3: My transfer learning model shows excellent validation accuracy, but fails completely on an external test set from a different laboratory. What steps can I take to improve robustness? A3: This indicates high sensitivity to domain shift (e.g., different staining protocols, scanner types).

- Diagnosis: The model has overfit to nuances of your limited validation domain.

- Solution Protocol:

- Heavy Data Augmentation: During training, apply aggressive, realistic augmentations (color jitter, Gaussian blur, random cropping, elastic deformations) to simulate cross-domain variance.

- Domain-Adversarial Training: Incorporate a domain classifier that tries to predict the source of an image, while the main feature extractor is trained to fool it. This forces the extraction of domain-invariant features.

- Test-Time Augmentation (TTA): At inference, generate multiple augmented versions of the test sample and average the predictions.

Q4: I have limited proprietary data but want to leverage a large public dataset for pre-training. How can I ensure the pre-trained model is relevant to my specific biological domain? A4: Implement a strategic, domain-aware pre-training task.

- Diagnosis: A model pre-trained on general images (ImageNet) may not be optimal for microscopy.

- Solution Protocol:

- Select a Relevant Public Corpus: Use a large public dataset from a related domain (e.g., ImageNet → Histopathology: Use the TCGA whole slide image archives or the HPA dataset).

- Employ Self-Supervised Pre-training (SSL): On the public data, train the model using an SSL task like:

- SimCLR/MoCo: Learn representations by maximizing agreement between differently augmented views of the same image.

- Masked Autoencoding: Randomly mask patches of an image and train the model to reconstruct them.

- Then Fine-tune: Use your small proprietary dataset to fine-tune this domain-pre-trained model for your specific downstream task.

Table 1: Performance Comparison of Transfer Learning Strategies on Limited Drug Discovery Data (≤ 1000 samples)

| Strategy | Base Model | Target Task | Data Size | Validation Accuracy | External Test Accuracy | Key Limitation Addressed |

|---|---|---|---|---|---|---|

| Feature Extraction (Frozen) | ResNet-50 (ImageNet) | Toxicity Label (Cell Imaging) | 500 images | 78% | 65% | Prevents overfitting, fast |

| Differential Fine-Tuning | ChemBERTa (PubChem) | Solubility Prediction | 800 compounds | 0.85 (R²) | 0.72 (R²) | Balances prior knowledge & task-specific learning |

| Domain-Adaptive Pre-training | ViT (MoCo on HPA) | Protein Localization | 300 images | 92% | 88% | Reduces domain shift from natural to cell images |

| Linear Probing (Then Fine-tune) | GPT-3 Style (SMILES) | Binding Affinity | 900 complexes | 0.70 (AUC) | 0.68 (AUC) | Stable initialization, avoids early catastrophic forgetting |

Table 2: Impact of Data Augmentation on Model Generalization

| Augmentation Method | Validation Accuracy | External Test Set Accuracy (Lab B) | Delta (Δ) |

|---|---|---|---|

| Baseline (No Augmentation) | 96% | 71% | -25% |

| Standard (Flips, Rotation) | 94% | 78% | -16% |

| Advanced (Color Jitter, CutMix, Blur) | 91% | 85% | -6% |

| Advanced + Test-Time Augmentation | 91% | 87% | -4% |

Experimental Protocols

Protocol 1: Differential Learning Rate Fine-Tuning for Convolutional Neural Networks (CNNs)

- Model Setup: Remove the final classification layer of a pre-trained CNN (e.g., DenseNet121). Append a new head: GlobalAveragePooling2D → Dropout(0.5) → Dense(256, ReLU) → Dropout(0.3) → Dense(N_classes, softmax).

- Freezing: Initially, set

trainable = Falsefor all layers of the original base model. - Phase 1 Training: Compile the model with a learning rate of 1e-3. Train only the new head for 5-10 epochs until validation loss plateaus.

- Phase 2 (Fine-tuning): Unfreeze the last two convolutional blocks of the base model. Recompile the model with a differential learning rate: 1e-5 for the base model layers, 1e-4 for the new head. Train for an additional 10-15 epochs with early stopping.

- Validation: Use a strict hold-out validation set or k-fold cross-validation.

Protocol 2: Self-Supervised Domain-Adaptive Pre-training for Histology Images

- Data Curation: Download 50,000 unlabeled tissue image tiles from a public repository (e.g., TCGA).

- Pre-training Task: Implement the SimCLR framework.

- Augmentation Pipeline: For each image, generate two correlated views via random cropping (with resize), color distortion, and Gaussian blur.

- Model Architecture: Use a CNN encoder (e.g., ResNet-50) followed by a projection head (MLP) to map features to a latent space for contrastive loss.

- Training: Train for 100 epochs using NT-Xent loss, aiming to maximize similarity between the two views of the same image versus all other images in the batch.

- Transfer: Discard the projection head. Use the trained encoder as a pre-trained feature extractor for your downstream, label-scarce classification task, following Protocol 1.

Visualizations

Title: Transfer Learning Workflow for Limited Data

Title: Differential Learning Rate Setup in Model Fine-Tuning

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Transfer Learning Experiments

| Item | Function & Relevance in Transfer Learning |

|---|---|

| Pre-trained Model Repositories (Hugging Face, TorchVision, TensorFlow Hub) | Provides instant access to state-of-the-art models pre-trained on massive datasets (text, image, protein sequences), forming the essential starting point. |

| Data Augmentation Libraries (Albumentations, torchvision.transforms) | Generates realistic variations of limited training data, crucial for improving model robustness and simulating domain shift during training. |

| Self-Supervised Learning Frameworks (SimCLR, MoCo, DINO in PyTorch) | Enables domain-adaptive pre-training on unlabeled, domain-specific public data to create a better initialization than generic pre-trained models. |

Learning Rate Finders & Schedulers (PyTorch Lightning's lr_finder, OneCycleLR) |

Critical for identifying optimal learning rates for new and pre-trained layers separately and for scheduling them during fine-tuning to ensure stability. |

| Feature Extraction Tools (Captum, TF Explain) | Allows interpretation of which features from the pre-trained model are activated for the new task, helping diagnose failure modes and domain mismatches. |

| Domain Adaptation Libraries (DANN, AdaMatch implementations) | Provides pre-built modules for adversarial domain adaptation, helping to minimize performance drop when transferring between different data distributions. |

Technical Support Center: Troubleshooting and FAQs

FAQ Context: This technical support center is designed to aid researchers within the broader thesis context of "Strategies for Validating Models with Limited Experimental Data." It addresses common technical hurdles in using Generative Adversarial Networks (GANs) and Diffusion Models to create synthetic biological or chemical datasets for validation in drug development.

Frequently Asked Questions (FAQs)

Q1: My GAN for generating molecular structures is experiencing mode collapse, producing only a few similar outputs. How can I mitigate this? A1: Mode collapse is a common GAN failure. Implement the following protocol:

- Switch to a More Robust Architecture: Use Wasserstein GAN with Gradient Penalty (WGAN-GP). This replaces the binary discriminator with a critic that provides more stable gradients.

- Apply Mini-batch Discrimination: Modify the discriminator to assess a batch of samples collectively, making it harder for the generator to collapse to a single mode.

- Adjust Training Dynamics: Regularly monitor the loss ratio of the discriminator/generator. If the discriminator loss reaches zero too quickly, reduce its learning rate or update frequency.

Q2: The synthetic protein sequences generated by my diffusion model lack realistic physicochemical properties. How can I condition the generation? A2: You need to guide the denoising process. Implement a Classifier-Free Guidance protocol:

- Training Phase: Train the diffusion model on your protein sequence data. During training, randomly drop the condition (e.g., a target solubility score) 10-20% of the time, replacing it with a null token.

- Sampling/Validation Phase: Use the following formula during the reverse diffusion steps:

ϵ_guided = ϵ_uncond + guidance_scale * (ϵ_cond - ϵ_uncond), whereϵis the model's noise prediction. A guidance scale >1 (e.g., 2.0-7.0) increases adherence to the condition. - Validate: Use a separate property predictor model to screen generated sequences against your target profile before experimental validation.

Q3: How do I quantitatively validate that my synthetic cell microscopy images are statistically similar to the limited real data? A3: Employ a multi-faceted validation metric protocol. Calculate the following for a batch of synthetic (S) and real (R) images:

- Inception Score (IS): Uses a pre-trained classifier to measure diversity and clarity. Higher is generally better.

- Fréchet Inception Distance (FID): Compares the distributions of features from a pre-trained network (e.g., Inception v3) for S and R. Lower FID indicates closer similarity. A 2023 benchmark study on biomedical image generation reported state-of-the-art FID scores below 5.0 for high-quality synthetic histopathology images.

- Domain-Specific Metrics: Calculate the mean pixel intensity or texture metrics (e.g., Haralick features) for specific cellular compartments and perform a two-sample t-test to ensure no significant difference (p > 0.05).

Q4: What is the minimum viable dataset size to train a stable diffusion model for compound activity prediction? A4: While diffusion models are data-hungry, techniques exist for low-data regimes. The required size depends on data complexity.

- Protocol for Small Data (< 10k samples):

- Start with a Pre-trained Model: Use a diffusion model pre-trained on a large, public molecular dataset (e.g., ZINC, PubChem).

- Fine-tune with Low-Rank Adaptation (LoRA): Instead of full fine-tuning, inject trainable rank decomposition matrices into the model's attention layers. This drastically reduces parameters and overfitting risk.

- Apply Heavy Augmentation: For image-based data (e.g., spectral graphs), use affine transformations, noise injection, and random masking.

Key Quantitative Metrics for Model Comparison

The table below summarizes common evaluation metrics for generative models in scientific contexts.

Table 1: Quantitative Metrics for Evaluating Synthetic Data Quality

| Metric Name | Best For | Ideal Value | Interpretation in Scientific Context |

|---|---|---|---|

| Fréchet Inception Distance (FID) | Image-based data (microscopy, histology) | Lower is better (State-of-the-art < 5.0) | Measures statistical similarity of feature distributions. Critical for validating phenotypic screens. |

| Inception Score (IS) | Image-based data | Higher is better (Dependent on dataset) | Measures diversity and quality of generated images. Can be unstable for small datasets. |

| Valid & Unique (%) | Molecular structure generation | Higher is better (e.g., >90% Valid, >80% Unique) | Percentage of chemically valid and novel structures. Essential for virtual compound library expansion. |

| Nearest Neighbor Cosine Similarity | Any latent representation | Context-dependent (Not too high, not too low) | Measures overfitting. High similarity suggests the model is memorizing, not generating. |

| Property Predictor RMSE | Conditionally generated data | Lower is better | Tests if synthetic data retains predictive relationships (e.g., between structure and activity). |

Table 2: Common Failure Modes and Diagnostic Checks

| Symptom | Likely Cause | Diagnostic Check | Recommended Action |

|---|---|---|---|

| Blurry or noisy outputs (Diffusion) | Insufficient reverse diffusion steps or poor noise schedule. | Visualize intermediate denoising steps. | Increase number of sampling steps; adjust noise schedule (e.g., linear to cosine). |

| Low diversity in outputs (GAN) | Mode collapse or discriminator overpowered. | Calculate pairwise distances between latent vectors of generated samples. | Use WGAN-GP; add diversity penalty terms; reduce discriminator learning rate. |

| Invalid molecular structures | Generator does not learn valency rules. | Compute percentage of valid SMILES strings. | Use graph-based generative models or reinforce valency rules via reward in RL frameworks. |

| Synthetic data fails downstream task | Distribution shift or loss of critical features. | Train identical ML models on real vs. synthetic data and compare performance. | Implement feature matching loss or augment with a small amount of real data. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Generative Modeling Experiments

| Item / Solution | Function in Experiment | Example/Note |

|---|---|---|

| PyTorch / TensorFlow with RDKit | Core frameworks for building and training neural networks. RDKit handles cheminformatics operations. | Use torch.nn.Module for custom generators; RDKit for SMILES parsing and validity checks. |

| MONAI (Medical Open Network for AI) | Domain-specific framework for healthcare imaging. Provides optimized diffusion model implementations. | Use monai.generators for building diffusion models on 3D medical image data. |

| WGAN-GP Implementation | Stabilizes GAN training via gradient penalty, crucial for small datasets. | Code readily available in public repositories (GitHub). Key hyperparameter: λ (gradient penalty coefficient). |

| Low-Rank Adaptation (LoRA) Library | Enables efficient fine-tuning of large pre-trained models with limited data. | peft (Parameter-Efficient Fine-Tuning) library from Hugging Face. |

| Molecular Transformer | Pre-trained model for molecular representation and property prediction. | Used as a feature extractor for FID calculation or as a predictor for guided generation. |

| Weights & Biases (W&B) / MLflow | Experiment tracking to log losses, hyperparameters, and generated sample batches. | Critical for reproducibility and comparing runs in a thesis appendix. |

Experimental Workflow and Pathway Visualizations

Title: Synthetic Data Generation and Validation Workflow

Title: Conditional Diffusion Model Process

Title: GAN Training Adversarial Feedback Loop

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My physics-informed neural network (PINN) for a pharmacokinetic (PK) model fails to converge, producing nonsensical parameter estimates. What could be wrong? A: This is often due to an imbalance between the data loss and the physics loss terms in the total loss function. The physics residuals (e.g., from ODE/PDE constraints) can dominate, leading the optimizer to ignore sparse data.

- Protocol for Diagnostic & Correction:

- Log Individual Loss Components: Modify your training loop to output the mean squared error (MSE) for the data (

L_data) and the MSE for the physics residual (L_physics) separately at each epoch. - Calculate Loss Ratio: Compute the ratio

L_physics / L_dataat epoch 0. If it exceeds1e3, scaling issues are likely. - Apply Adaptive Weighting: Implement a gradient descent algorithm that uses the following loss function with a learnable weight (

λ):L_total = L_data + λ * L_physicsInitializeλ = 1.0. During training, updateλusing:λ_new = λ + η * (∇_λ L_total), whereηis a small learning rate (e.g., 0.01) for the weight. This allows the network to dynamically balance the two objectives. - Re-run Training: Monitor both loss components. Convergence is typically achieved when both

L_dataandL_physicsdecrease steadily over epochs.

- Log Individual Loss Components: Modify your training loop to output the mean squared error (MSE) for the data (

Q2: When embedding a mechanistic constraint (e.g., Michaelis-Menten kinetics) into a model, the solver becomes unstable and produces NaN values. How do I resolve this? A: Numerical instability often arises from stiff equations or poor initial parameter guesses that cause division by zero or negative concentrations.

- Protocol for Stabilization:

- Parameter Scaling: Non-dimensionalize your model equations. For a state variable

S, define a scaled variables = S / S_ref, whereS_refis a characteristic scale (e.g., initial concentrationS0). Apply similar scaling to time (τ = t * k_cat) and parameters. This brings all values closer to O(1), improving solver stability. - Solver Switch: Change from an explicit (e.g., Euler, RK45) to an implicit solver (e.g., CVODE,

Rodas5in Julia,solve_ivpwith method'BDF'in SciPy). Implicit solvers are designed for stiff systems common in biology. - Boundary Enforcement: Use a transformation to ensure positive values. For any state variable

xthat must be >0, internally solve forlog(x)instead. The derivative becomesd(log(x))/dt = (dx/dt) / x.

- Parameter Scaling: Non-dimensionalize your model equations. For a state variable

Q3: How can I validate my hybrid model when I only have 5-10 experimental data points? A: Use a rigorous leave-one-out (LOO) or k-fold cross-validation framework tailored for small-N studies, focusing on predictive error.

- Protocol for Sparse-Data Validation:

- Data Partitioning: For

Ndata points, createNfolds where each fold usesN-1points for training and the 1 held-out point for testing (LOO-CV). - Model Training & Prediction: For each fold:

- Train your physics-informed/mechanistic model on the

N-1training points. - Predict the output at the held-out data point's input conditions.

- Calculate the prediction error.

- Train your physics-informed/mechanistic model on the

- Aggregate Metrics: Compute the mean absolute error (MAE) and root mean square error (RMSE) across all

Nheld-out predictions. - Compare to Null Models: Perform the same CV on a purely data-driven model (e.g., linear regression) and a purely mechanistic model (with literature parameters). Your hybrid model should show lower MAE/RMSE than both.

- Data Partitioning: For

Q4: My model incorporates a known signaling pathway, but visualizing the logic and interaction with data constraints is difficult. How can I structure this? A: Use a standardized diagramming approach to map the biological constraints onto the model architecture.

Diagram: Hybrid Model Integrating Signaling Pathway Constraints

Table 1: Comparison of model performance using Leave-One-Out Cross-Validation (LOO-CV) on a dataset of N=8 subjects. The hybrid PINN outperforms pure models in predicting held-out plasma concentration (Cp) data.

| Model Type | Mean Absolute Error (MAE) | Root Mean Square Error (RMSE) | Required Data Points for Calibration |

|---|---|---|---|

| Data-Driven (Linear) | 4.2 µg/mL | 5.1 µg/mL | 7 (All but held-out) |

| Mechanistic (Literature) | 3.8 µg/mL | 4.9 µg/mL | 0 (Fixed parameters) |

| Hybrid PINN (Proposed) | 1.5 µg/mL | 2.0 µg/mL | 7 (All but held-out) |

Table 2: Key parameters identified by the hybrid PINN for a two-compartment PK model with Michaelis-Menten elimination, demonstrating identifiability from sparse data.

| Parameter | Description | Literature Range | PINN Estimate | Confidence Interval (Bootstrapped) |

|---|---|---|---|---|

| V_central (L) | Volume of central compartment | 3.5 - 4.5 | 3.9 | [3.6, 4.2] |

| k_el (1/h) | Linear elimination rate constant | 0.05 - 0.15 | 0.09 | [0.06, 0.12] |

| V_max (mg/h) | Max. elimination rate | 8.0 - 12.0 | 10.2 | [9.1, 11.5] |

| K_m (mg/L) | Michaelis constant | 15 - 25 | 20.1 | [17.5, 23.0] |

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 3: Essential tools for developing and validating physics-informed mechanistic models.

| Item Name | Type/Category | Primary Function | Example Vendor/Platform |

|---|---|---|---|

| ODE/PDE Solver Library | Software Library | Numerical integration of mechanistic model equations for forward simulation. | SciPy (Python), SUNDIALS (C/C++) |

| Automatic Differentiation (AD) | Software Engine | Computes exact derivatives of model outputs w.r.t. inputs, essential for PINN training. | PyTorch, JAX, TensorFlow |

| Global Optimizer | Algorithm | Fits mechanistic model parameters to sparse data, escaping local minima. | Particle Swarm, CMA-ES, BoTorch |

| Sensitivity Analysis Tool | Software Package | Quantifies parameter identifiability and guides experimental design for sparse data. | SALib, PEtab, COPASI |

| Bayesian Inference Engine | Software Framework | Quantifies parameter uncertainty and integrates prior knowledge formally. | PyMC, Stan, TensorFlow Probability |

| Sparse Cytokine Array | Wet-lab Reagent | Generates multiplexed, low-volume experimental data from precious samples. | Luminex, Meso Scale Discovery |

Overcoming Common Pitfalls: Optimizing Model Design for Data-Scarce Environments

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My model achieves >95% training accuracy but <60% validation accuracy on my small biological dataset. Is this overfitting, and what are the immediate steps? A1: Yes, this is a classic sign of overfitting. Immediate corrective actions include:

- Implement Stronger Regularization: Increase dropout rates or L2 regularization lambda.

- Simplify the Model: Reduce the number of layers or neurons.

- Augment Data: Apply domain-specific transformations (e.g., adding noise to gene expression values, synthetic minority oversampling).

- Use k-fold Cross-Validation: Ensure your reported performance is the mean across all folds.

Q2: During cross-validation, my performance metrics swing wildly between folds. What does this indicate? A2: High variance between folds suggests your model is highly sensitive to the specific train-test split, a key indicator of overfitting in low-data regimes. This often means the model is learning noise. You should:

- Increase the number of folds (e.g., use LOOCV or 10-fold CV) for a more robust estimate.

- Re-evaluate your feature selection; you may have too many features for the number of samples.

- Consider switching to a simpler, more interpretable model (e.g., Elastic Net) to establish a baseline.

Q3: How can I detect overfitting when I don't have a separate test set due to very limited data? A3: In this scenario, you must rely entirely on rigorous cross-validation and performance monitoring:

- Plot Learning Curves: Monitor both training and validation loss per epoch. A diverging gap is a clear sign.

- Use Statistical Significance Tests: Apply McNemar's test or paired t-tests on CV fold results to see if performance is significantly above chance.

- Employ Bayesian Methods: Consider Bayesian models that provide uncertainty estimates (e.g., high predictive variance indicates overfitting).

Q4: What are the best regularization techniques specifically for high-dimensional biological data (e.g., genomics)? A4: For high-dimensional, low-sample-size data, the following are particularly effective:

- L1 (Lasso) / Elastic Net Regularization: Performs automatic feature selection, reducing the model's capacity to memorize noise.

- Group Regularization: Penalizes groups of related features (e.g., genes in a pathway) together.

- Early Stopping: Halting training when validation loss plateaus or begins to increase.

- Dropout for DNNs: Use high dropout rates (0.5-0.7) in early layers.

| Metric | Formula | Use Case & Interpretation in Low-Data Context |

|---|---|---|

| Mean Squared Error (MSE) | $\frac{1}{n}\sum{i=1}^{n}(Yi - \hat{Y}_i)^2$ | For regression. Compare train vs. validation MSE. Large gap indicates overfitting. |

| Balanced Accuracy | $\frac{Sensitivity + Specificity}{2}$ | Crucial for imbalanced datasets. More reliable than standard accuracy with small data. |

| Matthew's Correlation Coefficient (MCC) | $\frac{TP \times TN - FP \times FN}{\sqrt{(TP+FP)(TP+FN)(TN+FP)(TN+FN)}}$ | Robust single score for binary classification, especially good with class imbalance. |

| Cross-Validation Variance | $Var({Score{fold1}, ..., Score{foldk}})$ | Measures stability of the model. High variance suggests overfitting to specific folds. |

Experimental Protocol: k-Fold Cross-Validation with Early Stopping

Objective: To reliably estimate model performance and prevent overfitting when experimental data is limited to N samples.

Materials: Labeled dataset, ML framework (e.g., scikit-learn, TensorFlow).

Methodology:

- Randomly Shuffle the entire dataset and partition it into k equal-sized folds.

- For each fold i (i = 1 to k): a. Designate fold i as the temporary validation set. b. Use the remaining k-1 folds as the training set. c. Train the model: For each training epoch, monitor loss on the temporary validation fold. d. Implement Early Stopping: If validation loss does not improve for a pre-defined number of epochs (patience, e.g., 20), stop training and revert to the weights from the best epoch. e. Evaluate the final, best model on the held-out fold i to obtain performance score $S_i$.

- Calculate Final Performance: Report the mean and standard deviation of all $Si$ scores: $\mu = \frac{1}{k}\sum{i=1}^{k}Si$, $\sigma = \sqrt{\frac{1}{k}\sum{i=1}^{k}(S_i - \mu)^2}$.

Visualization: Overfitting Diagnosis Workflow

Visualization: Regularization Techniques Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Low-Data Model Validation |

|---|---|

| Synthetic Minority Oversampling (SMOTE) | Generates synthetic samples for minority classes to combat overfitting to class imbalance. |

| Bootstrapping Tools (e.g., scikit-learn) | Creates multiple resampled datasets to estimate parameter stability and model variance. |

| Bayesian Neural Network (BNN) Frameworks (e.g., Pyro, TensorFlow Probability) | Provides predictive uncertainty quantification, highlighting where the model is likely overfitting. |

| Elastic Net Implementation (e.g., glmnet) | Combines L1 & L2 regularization for robust feature selection and coefficient shrinkage in regression. |

| k-fold Cross-Validation Scheduler | Automates data splitting and model evaluation to ensure unbiased performance estimation. |

Technical Support Center: Troubleshooting Data Preparation for Limited Data Validation

This support center provides targeted guidance for researchers validating models with limited experimental data, framed within the broader thesis on Strategies for Validating Models with Limited Experimental Data Research.

FAQs & Troubleshooting Guides

Q1: During data augmentation for a small RNA-Seq dataset, my model's validation accuracy drops despite improved training accuracy. What is the likely cause and solution? A: This indicates overfitting to augmentation artifacts. Common in genomic data where naive noise injection disturbs biological signals.

- Troubleshooting Protocol:

- Audit: Apply your augmentation pipeline (e.g., random base pair substitution) to a single sample and visually inspect the output alignment with a tool like IGV. Does it create biologically implausible sequences?

- Validate: Implement a "sanity check" holdout. Keep 10% of your original, unaugmented data completely separate. After training on augmented data, evaluate on this pristine set. A significant performance drop confirms the issue.

- Solution: Shift to curation-based augmentation. Use domain knowledge:

- For RNA-Seq, use established databases (like GTEx) to sample real, but rare, splice variants to add to your training set.

- For drug response, use pharmacokinetic models to generate plausible concentration-time profiles rather than random noise.

- Reagent Solution: GTEx Portal API allows programmatic access to real human tissue expression data for credible positive control curation.

Q2: My curated dataset from public repositories has inconsistent labeling (e.g., "responder/non-responder" criteria vary between studies). How can I ethically harmonize this for model training? A: This is a label integrity and ethics issue. Forcing harmonization can introduce bias.

- Troubleshooting Protocol:

- Do NOT re-label data based on your assumption. This breaks the provenance chain.

- Implement a multi-label or stratified approach. Train your model using a separate "study ID" or "labeling protocol" as a co-variate. This teaches the model the uncertainty source.

- Apply Model Confidence Scoring: Use ensemble or Bayesian models to output a confidence score alongside predictions. Low confidence often correlates with inter-study label disagreement, flagging areas needing experimental clarification.

- Reagent Solution: OMOP Common Data Model tools can guide ethical schema mapping for clinical data, though adaptation for preclinical data is required.

Q3: When using generative AI (e.g., VAEs, GANs) to create synthetic compound activity data, how do I ensure the generated data is chemically valid and not memorized from the training set? A: This addresses synthetic data fidelity and overfitting.

- Troubleshooting Protocol:

- Test for Memorization: Calculate Tanimoto similarity between all generated molecular structures and your training set. Use the RDKit package. A high similarity (>0.85) cluster indicates probable memorization.

- Validate Chemical Plausibility: Run all generated structures through a rule-based checker (e.g., RDKit's

SanitizeMolor PAINS filters). Discard molecules with invalid valencies or undesired substructures. - Implement a "Two-Discriminator" Approach: Train your GAN with two discriminators: one for "real vs. fake" and one for "drug-like vs. not-drug-like" using a filtered library (e.g., ChEMBL).

- Reagent Solution: RDKit Open-Source Cheminformatics Toolkit is essential for structure validation, fingerprint calculation, and descriptor generation.

Q4: After extensive augmentation, my model performs well on internal validation but fails on a new, external cell line. Does this invalidate the augmentation strategy? A: Not necessarily. This points to a lack of biological diversity in the source data, which augmentation cannot invent.

- Troubleshooting Protocol:

- Conduct a Covariate Shift Analysis: Use PCA or t-SNE to plot the molecular features (e.g., gene expression profiles) of your training/validation set versus the new external cell line. If they occupy completely separate areas of the plot, augmentation cannot bridge the gap.

- Solution - Strategic Curation: Proactively curate a "difficult negative" set. During data collection, intentionally include a small number of samples from divergent lineages or conditions, even if it reduces initial accuracy. This anchors the model's decision boundaries in a more realistic biological space.

- Reagent Solution: DepMap Portal provides broad genetic and lineage data across hundreds of cancer cell lines, crucial for assessing training data diversity.

Summarized Quantitative Data

Table 1: Impact of Different Augmentation Techniques on Model Performance with Limited Data (n=100 initial samples)

| Technique | Data Increase | Internal Val. AUC | External Val. AUC | Risk of Artifact Overfit |

|---|---|---|---|---|

| Basic Noise Injection | 500% | 0.92 +/- 0.02 | 0.65 +/- 0.10 | High |

| Model-Based Synthesis (GAN) | 500% | 0.89 +/- 0.03 | 0.71 +/- 0.08 | Medium |

| Heuristic Curation (from DB) | 150% | 0.88 +/- 0.02 | 0.82 +/- 0.05 | Low |

| Combined (Curation + GAN) | 300% | 0.90 +/- 0.02 | 0.85 +/- 0.04 | Medium-Low |

Table 2: Label Inconsistency Analysis in Public Oncology Datasets

| Repository | Studies Sampled | % with Clear Response Criteria | % Using RECIST | % with Raw Data for Re-assessment |

|---|---|---|---|---|

| TCGA | 1 (Pan-Cancer) | 100% (by definition) | N/A (genomic) | 100% |

| GEO (Series) | 12 | 58% | 33% | 22% |

| SRA (RNA-Seq Runs) | 8 | 38% | 25% | 100% (raw seq) |

Experimental Protocols

Protocol 1: Validating Generative Augmentation for Compound Screening Objective: Generate and validate synthetic active compounds for a target with under 50 known actives. Materials: Initial active set (from ChEMBL/BindingDB), RDKit, GAN/VAE framework (e.g., PyTorch), chemical rule filters (PAINS, Brenk), computational docking software (AutoDock Vina). Method: