Building Trust in Digital Biology: The Definitive Guide to Credible Computational Biomechanics Models



Abstract

This article provides a comprehensive framework for establishing and verifying the credibility of computational biomechanics models in biomedical research and drug development. We systematically explore foundational principles, methodological best practices, common pitfalls, and advanced validation strategies. Designed for researchers, scientists, and industry professionals, the guide bridges the gap between complex model development and real-world, reliable application, ensuring models are both scientifically robust and clinically actionable.

What Makes a Model Believable? Core Principles of Credibility in Computational Biomechanics

Within computational biomechanics for drug development, model credibility goes beyond traditional validation. It is the justified confidence that a model is reliable for its intended use. It encompasses trustworthiness (inherent model quality) and reliance (fitness for a specific decision context). This framework is central to developing standards for the credibility of computational biomechanics models, shifting the emphasis from simple comparison against data to holistic assessment.

The Pillars of Credibility: A Quantitative Framework

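Credibility rests on interconnected pillars, each contributing to overall trust. The following table summarizes key metrics and illustrative targets drawn from recent literature and standards (e.g., ASME V&V 40, FDA-related submissions). ASME V&V 40 is risk-informed and does not prescribe fixed numerical thresholds, so acceptable targets should be set for each context of use.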

Table 1: Quantitative Pillars of Model Credibility

| Pillar | Core Metric | Target/Threshold | Measurement Method |
|---|---|---|---|
| Model Verification | Code-to-Math Error | < 0.1% Relative Error | Comparison to analytical solutions for simplified cases. |
| Experimental Validation | Point-wise Comparison Error | < 15% Mean Error | Ex vivo or in vivo biomechanical data vs. model prediction. |
| Uncertainty Quantification | 95% Confidence Interval | Encompasses > 90% of Data | Probabilistic sampling (Monte Carlo, Polynomial Chaos). |
| Sensitivity Analysis | Sobol Total-Order Index | > 0.1 for Key Parameters | Global variance-based sensitivity analysis. |
| Reproducibility | Inter-laboratory Variability | < 20% Coefficient of Variation | Round-robin benchmarking studies. |
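The reproducibility target above is a coefficient of variation (CV) across laboratories. The following is a minimal sketch of that calculation. It assumes each laboratory reports one scalar prediction for the same benchmark quantity and uses the sample standard deviation. The prediction values and the `coefficient_of_variation` helper are hypothetical.

```python
import statistics


def coefficient_of_variation(values):
    """Sample coefficient of variation in percent: sample standard deviation / |mean| * 100."""
    mean = statistics.mean(values)
    if mean == 0:
        raise ValueError("CV is undefined for a zero mean")
    return statistics.stdev(values) / abs(mean) * 100.0


# Hypothetical peak-strain predictions (microstrain) for the same benchmark from five laboratories
lab_predictions = [1180.0, 1250.0, 1100.0, 1320.0, 1210.0]
cv = coefficient_of_variation(lab_predictions)
print(f"inter-laboratory CV = {cv:.1f}% ({'meets' if cv < 20 else 'misses'} the < 20% target)")
```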

Experimental Protocols for Foundational Validation

A cornerstone of credibility is robust experimental data for validation. The following protocol is typical for obtaining biomechanical properties of arterial tissue, a common application.

Detailed Protocol: Biaxial Mechanical Testing of Murine Arterial Tissue

Objective: To obtain stress-strain relationship data for validating vascular wall mechanics models.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Tissue Harvest: Euthanize the mouse under an IACUC-approved protocol. Excise the target artery (e.g., thoracic aorta) and place it in chilled, oxygenated physiological saline solution (PSS).

- Specimen Preparation: Under a dissection microscope, carefully remove perivascular adipose and connective tissue. Mount the artery on a custom biaxial testing system using biocompatible cyanoacrylate on porous mounts.

- Preconditioning: Submerge the specimen in PSS at 37°C. Apply 10 cycles of equibiaxial stretch (5-10% strain) to achieve a repeatable mechanical state.

- Primary Test - Proportional Loading: Stretch the specimen simultaneously in the axial and circumferential directions at a fixed ratio (based on in vivo measurements) to a maximum stretch ratio $\lambda_{\max}$ of 1.3-1.5. Record forces from both load cells.

- Primary Test - Constrained Uniaxial Testing: Return the specimen to its reference configuration. Test each direction separately, stretching along one axis while holding the orthogonal axis at its reference length (a strip-biaxial protocol).

- Data Acquisition: Record forces (N) and grip-to-grip displacements (mm) at 100 Hz. Synchronize with video for digital image correlation (DIC) to compute the full-field Green-Lagrange strain.

- Stress Calculation: Compute the first Piola-Kirchhoff (nominal) stress as force divided by the original (undeformed) cross-sectional area. Derive the Cauchy stress from the deformation gradient $\mathbf{F}$ as $\boldsymbol{\sigma} = J^{-1}\mathbf{P}\mathbf{F}^{\mathsf{T}}$, where $J = \det\mathbf{F}$.
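The stress calculation can be sketched for an opened, approximately rectangular specimen. The sketch assumes the principal axes coincide with the test axes (no shear), so that F is diagonal. The helper name, the incompressibility default (J = 1), and the specimen dimensions are assumptions for illustration only.

```python
def biaxial_stresses(f1, f2, L1, L2, H, lam1, lam2, J=1.0):
    """Planar biaxial force-to-stress conversion (hypothetical helper).

    f1, f2     : loads (N) along test axes 1 and 2
    L1, L2     : undeformed side lengths (mm) along axes 1 and 2
    H          : undeformed thickness (mm)
    lam1, lam2 : stretch ratios along axes 1 and 2 (e.g., from DIC)
    J          : det(F); the default J = 1 assumes incompressibility
    Returns stresses in MPa (N/mm^2) and dimensionless Green-Lagrange strains.
    """
    # Nominal (1st Piola-Kirchhoff) stress: load over the undeformed face it acts on.
    # The load along axis 1 acts on the face of reference area L2 * H, and vice versa.
    P11 = f1 / (L2 * H)
    P22 = f2 / (L1 * H)
    # Cauchy stress sigma = J^-1 P F^T; with a diagonal F this reduces to sigma_ii = lam_i * P_ii / J
    s11 = lam1 * P11 / J
    s22 = lam2 * P22 / J
    # Green-Lagrange strain E_ii = (lam_i^2 - 1) / 2 for a diagonal F
    E11 = 0.5 * (lam1**2 - 1.0)
    E22 = 0.5 * (lam2**2 - 1.0)
    return (P11, P22), (s11, s22), (E11, E22)


# Illustrative values only: 5 mm x 5 mm x 0.1 mm specimen at lam1 = 1.3, lam2 = 1.2
P, sigma, E = biaxial_stresses(f1=0.05, f2=0.04, L1=5.0, L2=5.0, H=0.1, lam1=1.3, lam2=1.2)
print(f"P = {P[0]*1e3:.0f}, {P[1]*1e3:.0f} kPa; sigma = {sigma[0]*1e3:.0f}, {sigma[1]*1e3:.0f} kPa")
```

Because nominal stress is referred to the undeformed area, it stays well defined even when the current thickness is not measured. The Cauchy stress then follows from the measured stretches.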

Signaling Pathways in Mechanobiology: A Core Computational Target

Computational biomechanics models often integrate signaling pathways triggered by mechanical stimuli, crucial for drug target identification.

Diagram 1: Vascular Mechanotransduction Pathway

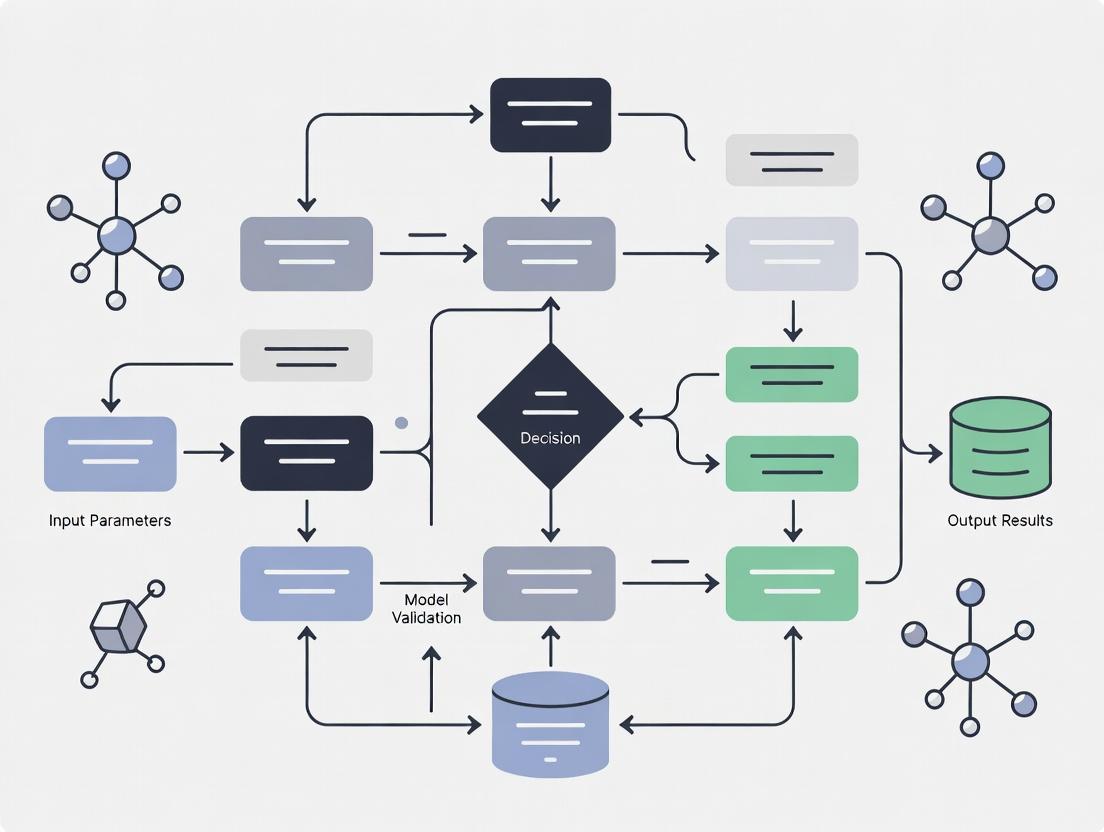

The Credibility Assessment Workflow

Establishing credibility is a systematic process, integrating computational and experimental elements.

Diagram 2: Credibility Assessment Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Biomechanics Validation Experiments

| Item | Function in Experiment | Example Product/Specification |
|---|---|---|
| Physiological Saline Solution (PSS) | Maintains tissue viability ex vivo by mimicking the ionic composition and pH of blood. | Krebs-Henseleit buffer: NaCl (118 mM), KCl (4.7 mM), CaCl₂ (2.5 mM), MgSO₄ (1.2 mM), NaHCO₃ (25 mM), KH₂PO₄ (1.2 mM), Glucose (11 mM). pH 7.4, bubbled with 95% O₂/5% CO₂. |
| Digital Image Correlation (DIC) Kit | Measures full-field, non-contact strain on the tissue surface during mechanical testing. | Speckle pattern kit (black/white acrylic spray), high-resolution monochrome cameras (5+ MP), stereo calibration target, software (e.g., LaVision DaVis, GOM Correlate). |
| Biaxial Testing System | Applies independent, controlled loads along two orthogonal axes to soft biological tissues. | Bose ElectroForce Planar Biaxial TestBench, CellScale Biotester. Equipped with 2-10 N load cells and sub-micron displacement actuators. |
| Polyacrylamide Substrates | For 2D cell mechanobiology studies, to control substrate stiffness independent of chemistry. | Tunable stiffness gels (0.5-50 kPa) coated with collagen I or fibronectin for cell adhesion. |
| Fluorescent Calcium Indicators | Visualize intracellular calcium flux, a key readout of mechanosensitive pathway activation (e.g., in endothelial cells). | Fluo-4 AM, Fura-2 AM (cell-permeable dyes). Fura-2 is ratiometric, which allows quantification of calcium concentration. |

Within the critical field of computational biomechanics—essential for medical device design, surgical planning, and drug delivery systems—model credibility is paramount. This whitepaper delineates the triad of Verification, Validation, and Uncertainty Quantification (VVUQ) as the foundational standards for establishing trust in predictive simulations. VVUQ provides a rigorous framework to ensure that biomechanical models are solved correctly (Verification), accurately represent physical reality (Validation), and transparently communicate their limitations (Uncertainty Quantification).

Foundational Principles & Methodologies

Verification: Solving the Equations Right

Verification is the process of ensuring that the computational model's implementation—the numerical algorithms and software—solves the underlying mathematical equations correctly.

- Code Verification: Uses the method of manufactured solutions (MMS) and order-of-accuracy tests to detect coding errors that affect the numerical solution.

- Calculation Verification: Assesses numerical accuracy (e.g., discretization errors) for a specific simulation, often via grid convergence studies.
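Grid convergence studies are often summarized with Roache's grid convergence index (GCI). The sketch below makes three assumptions: three systematically refined grids with a constant refinement ratio r, monotonic convergence, and the commonly used safety factor of 1.25. The solution values are synthetic, generated from f(h) = 1 + 0.1h², so the observed order should come out as 2. The function name is hypothetical.

```python
import math


def grid_convergence_index(f_fine, f_medium, f_coarse, r, safety_factor=1.25):
    """Three-grid GCI following Roache's procedure (sketch).

    Assumes a constant refinement ratio r > 1 and monotonic convergence.
    Returns (observed order p, Richardson-extrapolated value, GCI on the fine grid).
    """
    eps21 = f_medium - f_fine
    eps32 = f_coarse - f_medium
    if eps21 == 0 or eps32 / eps21 <= 0:
        raise ValueError("solutions do not converge monotonically; GCI is not applicable")
    p = math.log(eps32 / eps21) / math.log(r)                # observed order of accuracy
    f_extrap = f_fine + (f_fine - f_medium) / (r**p - 1.0)   # Richardson extrapolation
    gci_fine = safety_factor * abs(eps21 / f_fine) / (r**p - 1.0)
    return p, f_extrap, gci_fine


# Synthetic results from f(h) = 1 + 0.1 h^2 on grids h = 0.25, 0.5, 1.0 (r = 2)
p, f_ext, gci = grid_convergence_index(1.00625, 1.025, 1.1, r=2.0)
print(f"observed order = {p:.2f}, extrapolated = {f_ext:.4f}, GCI_fine = {100 * gci:.2f}%")
```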

Validation: Solving the Right Equations

Validation assesses the accuracy of the computational model by comparing its predictions with high-fidelity experimental data from the intended physical context.

- Hierarchical Validation: Tests model components (e.g., material properties) before the integrated system response (e.g., organ deformation).

- Validation Metric: A quantitative measure (e.g., normalized RMS error) of the difference between simulation and experimental data.

Uncertainty Quantification: Characterizing Confidence

UQ systematically identifies, characterizes, and propagates all sources of uncertainty to quantify their impact on model predictions.

- Aleatoric Uncertainty: Inherent variability (e.g., inter-subject biological differences).

- Epistemic Uncertainty: Reducible uncertainty from lack of knowledge (e.g., material parameter ranges).

- Sensitivity Analysis: Identifies which input uncertainties most influence output variability.
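Variance-based global sensitivity analysis is usually reported as Sobol indices. The sketch below uses Saltelli's (2010) first-order estimator and Jansen's total-order estimator. The Ishigami test function stands in for a biomechanics model because its indices are known analytically: first-order ≈ 0.314, 0.442, 0 and total-order ≈ 0.558, 0.442, 0.244. The `ishigami` and `sobol_indices` names are illustrative. Production work would normally rely on a dedicated toolkit such as Dakota, Chaospy, or UQLab.

```python
import numpy as np


def ishigami(X, a=7.0, b=0.1):
    """Ishigami test function; inputs are i.i.d. Uniform(-pi, pi). Stand-in for a real model."""
    x1, x2, x3 = X[:, 0], X[:, 1], X[:, 2]
    return np.sin(x1) + a * np.sin(x2) ** 2 + b * x3**4 * np.sin(x1)


def sobol_indices(model, d, n, rng, low=-np.pi, high=np.pi):
    """Pick-freeze Monte Carlo estimates of first-order (S1) and total-order (ST) Sobol indices."""
    A = rng.uniform(low, high, size=(n, d))
    B = rng.uniform(low, high, size=(n, d))
    fA, fB = model(A), model(B)
    var = np.var(np.concatenate([fA, fB]))   # total output variance
    S1, ST = np.empty(d), np.empty(d)
    for i in range(d):
        ABi = A.copy()
        ABi[:, i] = B[:, i]                   # matrix A with column i taken from B
        fABi = model(ABi)
        S1[i] = np.mean(fB * (fABi - fA)) / var          # Saltelli (2010) first-order estimator
        ST[i] = 0.5 * np.mean((fA - fABi) ** 2) / var    # Jansen total-order estimator
    return S1, ST


rng = np.random.default_rng(42)
S1, ST = sobol_indices(ishigami, d=3, n=100_000, rng=rng)
print("first-order:", np.round(S1, 3))
print("total-order:", np.round(ST, 3))
```

The gap between total-order and first-order indices for the third input (about 0 vs. 0.24) shows an input that matters only through interactions. A one-at-a-time sensitivity analysis would miss this.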

Quantitative Data in Computational Biomechanics VVUQ

The following tables summarize key quantitative benchmarks and outcomes from recent studies.

Table 1: Typical Validation Metrics and Targets for Cardiovascular Models

| Model Component | Validation Metric | Acceptance Threshold (Literature Reference) | Common Experimental Comparator |
|---|---|---|---|
| Arterial Wall Stress | Peak Systolic Stress Error | < 15% | MRI-based Strain Measurement |
| Valve Leaflet Dynamics | Coaptation Area Difference | < 10% | High-Speed Camera (in vitro) |
| Drug Elution from Stent | Normalized RMS Error of Release Curve | < 20% | In Vitro USP Dissolution Apparatus |
| Coronary Flow ($\mathrm{FFR}_{\mathrm{CT}}$) | Diagnostic Accuracy vs. Invasive FFR | > 90% Sensitivity/Specificity | Invasive Fractional Flow Reserve (FFR) |

Table 2: Common Sources of Uncertainty and Their Magnitude in Bone Biomechanics

| Uncertainty Source | Type | Typical Range/Description | Propagation Method |
|---|---|---|---|
| Cortical Bone Elastic Modulus | Epistemic | 12-20 GPa (Population Variance) | Monte Carlo Sampling |
| Muscle Force Magnitude | Aleatoric | ± 20% of Estimated Peak Force | Polynomial Chaos Expansion |
| Mesh Density (Tetrahedral) | Epistemic | 10% change in predicted strain energy density | Grid Convergence Index (GCI) |
| Boundary Condition (Load Point) | Epistemic | 5 mm anatomical landmark variation | Latin Hypercube Sampling |

Experimental Protocols for Validation

A robust validation experiment is critical. Below is a detailed protocol for a foundational biomechanics validation study.

Protocol: In-Vitro Validation of a Lumbar Spinal Segment Finite Element Model

- Objective: To validate predictions of intervertebral disc pressure and facet joint force under flexion-extension moments.

- Materials: Lumbar functional spinal unit (L3-L4), custom six-degree-of-freedom spine simulator, pressure needle transducer, miniature load cells for facets, optical motion tracking system, hydraulic testing machine.

- Procedure:

  - Specimen Preparation: Dissect a fresh-frozen human lumbar segment, preserving the ligaments. Pot the vertebrae in polymethyl methacrylate (PMMA) fixtures.

  - Instrumentation: Insert a calibrated pressure transducer into the nucleus pulposus of the L3-L4 disc. Implant miniature strain-gauge-based load cells into the articular surfaces of the facet joints.

  - Experimental Setup: Mount the potted specimen in the spine simulator. Apply pure moments (±7.5 N·m) in flexion-extension using the hydraulic actuator under displacement control at a rate of 0.5°/s.

  - Data Collection: Synchronously record the applied moment (from the actuator load cell), intervertebral rotation (from optical markers), disc pressure, and facet joint forces at 100 Hz for three loading cycles.

  - Comparison: Extract the third-cycle moment-rotation response, peak disc pressure, and peak facet force. Compare these directly with outputs from the finite element model subjected to identical boundary and loading conditions.

Visualizing the VVUQ Workflow

VVUQ Process for Model Credibility

Sources of Uncertainty in Models

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Ex-Vivo Biomechanics Validation

| Item Name | Function in VVUQ Context | Example Product/Standard |
|---|---|---|
| Phosphate-Buffered Saline (PBS) | Maintain physiological ionic strength and pH for hydrated tissue testing. | Thermo Fisher Scientific, Gibco 10010023 |
| Protease Inhibitor Cocktail | Prevents tissue degradation during long-term mechanical testing of biological specimens. | Sigma-Aldrich, P8340 |
| Silicone Lubricant Spray | Reduces friction in testing fixtures to simulate physiological joint lubrication. | Dow Corning 316 Spray |
| Radio-Opaque Beads (≤ 0.5 mm) | Fiducial markers for Digital Image Correlation (DIC) or biplanar radiography strain measurement. | Bead size: 0.3 mm, Material: Zirconium Oxide |
| Polymethyl Methacrylate (PMMA) | Rigid potting material to securely mount bone or tissue specimens into testing fixtures. | Orthodontic Resin, Jet Tooth Shade |
| Strain Gauges (Micro) | Direct surface strain measurement on bone or implant for local model validation. | Tokyo Sokki Kenkyujo, FLA-2-11-1LJC |
| Calibration Phantom (CT/MRI) | Essential for quantifying and minimizing imaging-related uncertainty in patient-specific models. | QRM-BDC/CT, Modulus MRI Tissue Characterization Phantom |

The Role of ASME V&V 40 and Other Emerging Regulatory & Standards Frameworks

Standardized frameworks are central to establishing the credibility of computational biomechanics models in biomedical research. They provide the methodological rigor and regulatory pathways needed for model acceptance in drug development and medical device evaluation. This guide examines the core principles of the ASME V&V 40 standard and its interplay with other emerging regulatory and standards frameworks, and provides researchers and professionals with actionable technical protocols.

Core Frameworks and Quantitative Comparison

Table 1: Comparison of Key Computational Model Credibility Frameworks

| Framework | Primary Scope | Key Output/Goal | Regulatory Affiliation | Primary Application Context |
|---|---|---|---|---|
| ASME V&V 40 | Risk-informed Credibility of Computational Models | Establishing Model Credibility for a Context of Use | FDA (Recognized Consensus Standard) | Medical Devices, Biomechanics |
| FDA Guidance: Assessing the Credibility of Computational Modeling and Simulation in Medical Device Submissions | Regulatory Submission Evaluation | Sufficient Credibility Evidence for Regulatory Decision-Making | FDA (Guidance) | Medical Devices |
| ISO/IEC Guide 98-3:2008 (GUM) | Uncertainty Quantification | Standardized Expression of Measurement Uncertainty | International Standards | Foundational Metrology for all Sciences |
| ISO 23461:2023 (Biomechanics) | Human Body Models Verification & Validation | Credibility of Human Body Models in Impact Scenarios | International Standards | Automotive Safety, Impact Biomechanics |
| EMA: Qualification of Novel Methodologies | Methodological Qualification | Acceptance of a developed methodology for use in regulatory contexts | European Medicines Agency | Drug Development, Clinical Trials |

Table 2: ASME V&V 40 Risk-Based Credibility Factors & Common Activities

| Credibility Factor | Low Risk Example Activity | High Risk Example Activity | Common Quantitative Metric(s) |
|---|---|---|---|
| Verification | Code version control; unit testing. | Independent code verification; order-of-accuracy testing. | Code coverage (%); grid convergence index. |
| Validation | Comparison to public domain benchmark data. | Prospective, protocol-driven animal or cadaveric experiment. | Mean absolute error; correlation coefficient; validation metric (e.g., p-value). |
| Uncertainty Quantification | Parameter sensitivity analysis. | Probabilistic analysis (Monte Carlo) with propagated input uncertainties. | Confidence/credibility intervals; Sobol indices for sensitivity. |
| Peer Review | Internal team review. | External, independent review by domain experts. | Review report disposition (accept/revise). |

Experimental Protocols for Key Validation Activities

Protocol 1: Prospective Validation of a Bone Strain Prediction Model

Objective: To provide high-risk credibility evidence for a finite element (FE) model predicting femoral strain under load, per ASME V&V 40 and FDA guidance.

- Model Context of Use Definition: The model predicts strain magnitudes >500 µε in the proximal femur during a simulated stumble (loading configuration: 2.5x body weight, 15° adduction).

- Validation Experiment Design:

  - Specimens: N=6 fresh-frozen human cadaveric femora, screened via DEXA.

  - Instrumentation: Tri-axial strain gauges (n=3 per specimen) bonded at high-stress regions (calcar, lateral shaft).

  - Loading: A hydraulic testing machine applies load consistent with the in silico boundary conditions. Load is applied in increments to failure.

  - Data Acquisition: Strain data are sampled at 10 kHz and synchronized with load-cell data.

- In Silico Replication:

  - Create specimen-specific FE meshes from pre-test µCT scans.

  - Assign heterogeneous material properties from Hounsfield Units.

  - Apply identical boundary and loading conditions.

- Comparison & Metric Calculation:

  - Extract simulated strain at the exact gauge locations in the model.

  - Calculate the validation metric p: the proportion of experimental data points falling within the 95% prediction interval of the computational results. Prespecify the acceptance threshold (e.g., p ≥ 0.8) in the credibility plan according to model risk. Neither ASME V&V 40 nor the FDA guidance prescribes a single universal value.
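The validation metric p is a coverage statistic. The following is a minimal sketch. It assumes the model uncertainty at each gauge location is represented by an ensemble of simulated strains (for example, from propagated input uncertainties). It also assumes the 95% prediction interval is taken from the 2.5th and 97.5th percentiles of that ensemble. The function name and the synthetic numbers are hypothetical.

```python
import numpy as np


def validation_metric_p(sim_samples, measured, level=0.95):
    """Fraction of measurements inside the central `level` prediction interval.

    sim_samples : (n_samples, n_points) simulated strain at each gauge location
    measured    : (n_points,) measured strain at the same locations
    """
    alpha = (1.0 - level) / 2.0
    lower = np.quantile(sim_samples, alpha, axis=0)
    upper = np.quantile(sim_samples, 1.0 - alpha, axis=0)
    inside = (measured >= lower) & (measured <= upper)
    return inside.mean(), lower, upper


# Synthetic example: 101 simulated values per location, five gauge locations
sims = np.arange(101.0)[:, None] + np.array([0.0, 200.0, 400.0, 600.0, 800.0])
meas = np.array([50.0, 210.0, 490.0, 699.0, 860.0])   # the fourth reading lies above its interval
p, lower, upper = validation_metric_p(sims, meas)
print(f"p = {p:.2f}")
```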

Protocol 2: Uncertainty Quantification for a Drug Delivery CFD Model

Objective: To quantify output uncertainty in a computational fluid dynamics (CFD) model of drug transport in an aneurysm sac.

- Input Uncertainty Identification: Define probabilistic distributions for key inputs: blood viscosity (Normal, μ±σ), inflow waveform magnitude (Uniform, ±10%), wall compliance (Beta distribution).

- Sampling: Use Latin Hypercube Sampling to generate 500 sets of input parameters from the defined distributions.

- Model Execution: Run the deterministic CFD model for each parameter set.

- Output Analysis: For the key output (e.g., drug residence time), construct a kernel density estimate to represent the output distribution. Calculate the 5th and 95th percentile values to report a 90% credibility interval.

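- Global Sensitivity Analysis: Calculate Sobol indices to rank the contribution of each uncertain input to the output variance. Use either a dedicated sampling design (e.g., Saltelli's scheme) or a surrogate model (e.g., a polynomial chaos expansion) fitted to the simulation ensemble.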
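The sampling and output-analysis steps of Protocol 2 can be prototyped with NumPy and SciPy. In this sketch, the input distribution parameters (viscosity 3.5 ± 0.35 mPa·s, Beta(2, 5) compliance) are hypothetical placeholders. The algebraic `drug_residence_time` function is a hypothetical stand-in for the real CFD solver. The Latin Hypercube sampler is a simple hand-written one rather than a library routine.

```python
import numpy as np
from scipy import stats


def latin_hypercube(n, d, rng):
    """Basic Latin Hypercube design on [0, 1)^d: in every dimension each of the
    n equal-width strata receives exactly one sample, in random order."""
    u = np.empty((n, d))
    for j in range(d):
        u[:, j] = (rng.permutation(n) + rng.random(n)) / n
    return u


def drug_residence_time(viscosity, inflow_scale, compliance):
    """HYPOTHETICAL algebraic stand-in for one deterministic CFD run (seconds)."""
    return 2.0 * np.sqrt(viscosity / 3.5) / inflow_scale * (1.0 + 0.5 * compliance)


n_samples = 500
rng = np.random.default_rng(1)
u = latin_hypercube(n_samples, 3, rng)

# Map the unit hypercube onto the input distributions (parameter values are illustrative)
viscosity = stats.norm.ppf(u[:, 0], loc=3.5, scale=0.35)  # mPa*s, Normal(mu, sigma)
inflow_scale = 0.9 + 0.2 * u[:, 1]                        # Uniform, +/-10% of nominal inflow
compliance = stats.beta.ppf(u[:, 2], 2.0, 5.0)            # Beta(2, 5) on [0, 1], dimensionless

# "Run" the model once per parameter set
t_res = drug_residence_time(viscosity, inflow_scale, compliance)

# Output distribution: kernel density estimate plus 5th/95th percentiles (90% interval)
kde = stats.gaussian_kde(t_res)
grid = np.linspace(t_res.min(), t_res.max(), 200)
mode = grid[np.argmax(kde(grid))]
lo, hi = np.percentile(t_res, [5, 95])
print(f"mode ~ {mode:.2f} s, 90% credibility interval = [{lo:.2f}, {hi:.2f}] s")
```

Because each call to the stand-in is cheap, 500 samples suffice here. With a real CFD model, the same design is usually reused to train a surrogate for the Sobol step.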

Visualization of Frameworks and Workflows

ASME V&V 40 Risk-Informed Credibility Process

Interaction of Key Regulatory & Standards Frameworks

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Computational Model Credibility Activities

| Item/Reagent | Function in Credibility Assessment | Example in Context |
|---|---|---|
| Benchmark Datasets | Provides gold-standard data for validation. | Public domain in vitro hemodynamic measurements (e.g., FDA nozzle). |
| Code Verification Suites | Unit and regression testing for software. | NAFEMS FV benchmarks for CFD; analytical solutions for FE. |
| Uncertainty Quantification (UQ) Toolkits | Libraries for probabilistic analysis and sensitivity. | Dakota (SNL), Chaospy, or UQLab for sampling and Sobol indices. |
| High-Fidelity Instrumentation | Generates high-quality validation data. | Digital Image Correlation (DIC) for full-field strain; 4D Flow MRI for hemodynamics. |
| Controlled In Vitro Phantoms | Physical models for targeted validation. | 3D-printed compliant arterial phantoms with tunable material properties. |
| Structured Reporting Templates | Ensures comprehensive documentation per standards. | ASME V&V 40 reporting template; FDA CMC pilot program template. |

The convergence of ASME V&V 40 with regulatory guidances from the FDA and EMA creates a robust, risk-informed ecosystem for establishing the credibility of computational biomechanics models. For researchers, adherence to these frameworks is no longer optional but a fundamental requirement for translating computational research into credible evidence for drug and device development. The future lies in the continued harmonization of these standards and the development of shared, high-fidelity validation databases to accelerate innovation.

This whitepaper, part of the broader effort to define standards for the credibility of computational biomechanics models, presents a structured hierarchy for establishing model trustworthiness. For researchers, scientists, and drug development professionals, moving from promising in-silico benchmarks to reliable real-world therapeutic predictions remains a critical challenge. This guide sets out the sequential levels of evidence required to make this transition credibly.

The Model Credibility Hierarchy

The credibility of a computational biomechanics model is not binary but ascends through a structured pyramid of evidence. This hierarchy, adapted from regulatory and consensus frameworks, emphasizes progressive validation.

Diagram Title: Five-Level Model Credibility Hierarchy

Level 5: Code and Theory Verification

This foundational level ensures the computational model correctly implements its underlying mathematical theory.

Experimental Protocol: Code Verification

- Objective: Confirm the absence of coding errors and numerical inaccuracies.

- Method: Utilize Method of Manufactured Solutions (MMS). An arbitrary analytical solution is substituted into the governing partial differential equations (e.g., Navier-Stokes for fluid flow, equilibrium equations for solid mechanics) to derive a source term. The computational model is run with this source term; its output is compared to the known manufactured solution.

- Acceptance Criterion: The observed order of accuracy of the solver should match the theoretical order as mesh/grid resolution is refined.
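The MMS procedure and the order-of-accuracy check can be illustrated on a one-dimensional Poisson problem. This sketch uses a second-order finite-difference solver as a stand-in for a finite element code. The manufactured solution is u(x) = eˣ sin(πx), and its source term f = -u'' was derived by hand. The observed order should approach the theoretical value of 2. All function names are illustrative.

```python
import numpy as np


def manufactured_u(x):
    """Manufactured solution u(x) = e^x sin(pi x); it vanishes at x = 0 and x = 1."""
    return np.exp(x) * np.sin(np.pi * x)


def manufactured_source(x):
    """Source term f = -u'' for the manufactured solution (derived by hand)."""
    return np.exp(x) * ((np.pi**2 - 1.0) * np.sin(np.pi * x) - 2.0 * np.pi * np.cos(np.pi * x))


def solve_poisson_fd(n):
    """Second-order central differences for -u'' = f on (0, 1), u(0) = u(1) = 0, n intervals."""
    h = 1.0 / n
    x = np.linspace(0.0, 1.0, n + 1)[1:-1]                 # interior nodes
    main = 2.0 * np.ones(n - 1)
    off = -np.ones(n - 2)
    A = (np.diag(main) + np.diag(off, 1) + np.diag(off, -1)) / h**2
    return x, np.linalg.solve(A, manufactured_source(x)), h


spacings, errors = [], []
for n in (8, 16, 32, 64, 128):
    x, u_h, h = solve_poisson_fd(n)
    errors.append(np.sqrt(h * np.sum((u_h - manufactured_u(x)) ** 2)))  # discrete L2 error norm
    spacings.append(h)

# Observed order between successive grids: p = ln(e_coarse / e_fine) / ln(h_coarse / h_fine)
orders = [np.log(errors[k - 1] / errors[k]) / np.log(spacings[k - 1] / spacings[k])
          for k in range(1, len(errors))]
for k, (h, e) in enumerate(zip(spacings, errors)):
    order_txt = "-" if k == 0 else f"{orders[k - 1]:.2f}"
    print(f"h = {h:.4f}  L2 error = {e:.3e}  observed order = {order_txt}")
```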

Table 1: Sample Code Verification Results for a Finite Element Solver

| Mesh Size (h) | L2 Error Norm | Observed Order of Accuracy |
|---|---|---|
| 1.0 | 5.21e-2 | - |
| 0.5 | 1.34e-2 | 1.96 |
| 0.25 | 3.39e-3 | 1.98 |
| 0.125 | 8.52e-4 | 1.99 |

Theoretical order for a 2nd-order accurate solver is 2.0.

Level 4: Experimental Benchmarking

The model is tested against controlled in-vitro or ex-vivo experiments to validate its predictive capability for the physics of interest.

Experimental Protocol: Ex-Vivo Tissue Mechanical Testing

- Objective: Calibrate and validate material constitutive models.

- Sample Preparation: Human or animal tissue specimens (e.g., arterial wall, cartilage) are harvested and prepared to standard geometries (e.g., rectangular strips, biaxial coupons).

- Equipment: A biaxial or uniaxial tensile testing system equipped with a saline bath for temperature and hydration control.

- Method: Specimens are subjected to preconditioning cycles followed by controlled displacement or force protocols (e.g., equibiaxial stretch, stress relaxation). Simultaneous force (load cell) and deformation (optical tracking with digital image correlation) data are collected.

- Model Comparison: The experimental boundary conditions and geometry are replicated in a computational simulation (e.g., FEA). The simulated stress-strain response is quantitatively compared to the experimental data.
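The following is a minimal calibration sketch. It assumes an incompressible neo-Hookean material under uniaxial tension, so the nominal stress P = μ(λ - λ⁻²) is linear in the shear modulus μ and can be fitted in closed form. Real arterial or cartilage data generally call for anisotropic or fiber-reinforced laws. Calibration and validation should also use separate data sets. The synthetic data and helper names are hypothetical.

```python
import numpy as np


def neo_hookean_nominal_stress(stretch, mu):
    """Uniaxial nominal (1st Piola-Kirchhoff) stress of an incompressible neo-Hookean solid."""
    return mu * (stretch - stretch**-2)


def fit_shear_modulus(stretch, nominal_stress):
    """Closed-form least-squares estimate of mu; the model is linear in mu."""
    g = stretch - stretch**-2
    return np.sum(g * nominal_stress) / np.sum(g * g)


# Synthetic "experimental" data: mu = 50 kPa plus 1 kPa Gaussian measurement noise
rng = np.random.default_rng(7)
stretch = np.linspace(1.0, 1.4, 41)
measured = neo_hookean_nominal_stress(stretch, mu=50.0) + rng.normal(0.0, 1.0, stretch.size)

mu_hat = fit_shear_modulus(stretch, measured)
predicted = neo_hookean_nominal_stress(stretch, mu_hat)
# Range-normalized RMS error as a simple quantitative comparison metric
nrmse = np.sqrt(np.mean((predicted - measured) ** 2)) / (measured.max() - measured.min())
print(f"mu = {mu_hat:.1f} kPa, normalized RMSE = {100 * nrmse:.1f}%")
```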

The Scientist's Toolkit

Table 2: Key Reagents & Materials for Biomechanical Benchmarking

| Item | Function in Protocol |
|---|---|
| Phosphate-Buffered Saline (PBS) | Maintains physiological ionic strength and pH to prevent tissue degradation during ex-vivo testing. |
| Protease Inhibitor Cocktail | Added to the bath solution to inhibit enzymatic degradation of the tissue sample's extracellular matrix. |
| Silicon Carbide Grinding Paper | Used to precisely shape and smooth tissue specimens to ensure uniform geometry for accurate stress calculations. |
| Fluorescent Microspheres | Applied to the specimen surface as speckle patterns for high-fidelity strain measurement via Digital Image Correlation (DIC). |
| Biaxial Testing System | Computer-controlled system with independent actuators to apply precise mechanical loads along two perpendicular axes. |

Level 3: Retrospective Clinical Correlation

Model predictions are compared to retrospective clinical data (e.g., imaging, outcomes) from patient cohorts.

Experimental Workflow

The protocol for a retrospective study correlating arterial wall stress predictions with plaque rupture sites is depicted below.

Diagram Title: Retrospective Clinical Correlation Workflow

Table 3: Example Results from a Retrospective Plaque Rupture Study (n=45 patients)

| Metric | Value | Conclusion |
|---|---|---|
| AUC (ROC Curve) | 0.82 (95% CI: 0.74-0.89) | Model has good discriminatory ability. |
| Sensitivity | 78% | 78% of known rupture sites fell within predicted high-stress regions. |
| Specificity | 85% | 85% of stable (non-rupture) sites fell outside predicted high-stress regions. |
| Mean Peak Stress at Rupture Sites | 325 kPa ± 112 kPa | Significantly higher than at stable sites (p<0.01). |
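Sensitivity, specificity, and positive predictive value (PPV) come from different cells of the confusion matrix. Specificity concerns stable (non-rupture) sites. The fraction of predicted high-stress regions that coincide with rupture sites is the PPV. The short sketch below uses hypothetical site counts (not the study's data) chosen to give 78% sensitivity and 85% specificity.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Site-level diagnostic metrics from confusion-matrix counts.

    tp: rupture sites inside predicted high-stress regions
    fn: rupture sites outside predicted high-stress regions
    tn: stable sites outside predicted high-stress regions
    fp: stable sites inside predicted high-stress regions
    """
    return {
        "sensitivity": tp / (tp + fn),   # share of rupture sites flagged as high stress
        "specificity": tn / (tn + fp),   # share of stable sites NOT flagged as high stress
        "ppv": tp / (tp + fp),           # share of flagged sites that actually ruptured
    }


# Hypothetical counts (not the study's data): 50 rupture sites and 100 stable sites
m = diagnostic_metrics(tp=39, fp=15, tn=85, fn=11)
print({k: round(v, 3) for k, v in m.items()})
```

With these counts the PPV is only about 72%, even though specificity is 85%. The two quantities answer different clinical questions.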

Level 2: Prospective Clinical Validation

The model makes predictions for ongoing clinical cases, and its accuracy is judged against future, previously unknown outcomes.

Experimental Protocol: Prospective Trial for Device Efficacy

- Objective: Validate a model predicting post-stent apposition and wall stress.

- Design: Multicenter, observational prospective cohort study.

- Method: Pre-procedural imaging (CT, angiography) is used to create patient-specific models and simulate stent deployment and resultant wall mechanics. The model predicts regions of malapposition or elevated stress. Follow-up intravascular imaging (e.g., OCT at 6-12 months) is then performed to assess actual stent apposition and neointimal hyperplasia. Predictions are compared to follow-up data.

- Primary Endpoint: Positive predictive value of the model for identifying regions that develop significant neointimal hyperplasia.

Level 1: Real-World Predictive Accuracy

The highest level of credibility is achieved when model predictions directly and reliably inform clinical decision-making and improve patient outcomes in diverse, real-world settings.

Navigating the hierarchy from benchmarks (Levels 4-5) to clinical correlation (Level 3) and ultimately to prospective and real-world validation (Levels 2-1) establishes a rigorous, evidence-based pathway for the credibility of computational biomechanics models. This structured approach is essential for their eventual adoption in regulatory submissions and in personalized drug and device development.

Credibility in computational biomechanics is foundational for translating in silico findings into clinical impact. Within the broader thesis of establishing standards for model credibility, this whitepaper examines how credibility directly dictates success in three critical domains: research reproducibility, regulatory submission, and clinical translation. The reliance on computational models, particularly in drug development for musculoskeletal and cardiovascular applications, mandates rigorous assessment of predictive accuracy and robustness.

The Credibility Framework and Research Reproducibility

Reproducibility is the first casualty of inadequate model credibility. A credible model must be fully documented, validated against benchmark data, and its uncertainty quantified.

Key Factors Affecting Reproducibility

A 2023 review of 400 published computational biomechanics studies found that only 35% provided sufficient detail for full replication. The primary barriers are undocumented model parameters, inaccessible code, and insufficient raw validation data.

Table 1: Reproducibility Metrics in Recent Computational Biomechanics Literature (2020-2023)

| Model Category | Studies with Complete Code Sharing (%) | Studies with Full Parameter Tables (%) | Studies Providing Raw Validation Data (%) | Estimated Replication Success Rate (%) |
|---|---|---|---|---|
| Musculoskeletal Models | 28 | 45 | 32 | 30 |
| Cardiovascular Fluid-Solid Models | 22 | 38 | 25 | 25 |
| Bone Implant Micromechanics | 41 | 52 | 40 | 38 |
| Average (unweighted) | 30.3 | 45.0 | 32.3 | 31.0 |

Experimental Protocol for Credibility Assessment (Validation Hub)

One protocol for establishing reproducibility is the "Validation Hub" approach.

Protocol: Multi-Laboratory Validation Hub for a Tibial Fracture Fixation Model

- Model Definition: A consortium defines a standard tibia geometry (from public repository), implant design (locking plate), and loading condition (axial compression to 2500N).

- Input Specification: All participating labs receive the same mesh file, material properties (Cortical bone: E=17 GPa, ν=0.3; Cancellous bone: E=155 MPa, ν=0.3; Steel implant: E=200 GPa, ν=0.3), and boundary conditions.

- Blinded Prediction: Each lab uses its own chosen solver and analyst to predict the strain distribution at six predefined locations on the bone and the implant's displacement.

- Experimental Benchmark: A physical test is performed using a synthetic bone composite and digital image correlation (DIC) to measure the "ground truth" strain and displacement.

- Comparison & Uncertainty Quantification: Predictions are compared to the benchmark. Credibility is quantified using the Standardized Credibility Assessment Score (SCAS):

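$$\mathrm{SCAS} = 100\,\exp\!\left(-\frac{1}{2}\left[\left(\frac{\mathrm{MAE}}{u_{\mathrm{exp}}}\right)^{2} + P_{\mathrm{code}} + P_{\mathrm{doc}}\right]\right)$$

where MAE is the mean absolute error across all measurement points, $u_{\mathrm{exp}}$ is the experimental uncertainty, and $P_{\mathrm{code}}$ and $P_{\mathrm{doc}}$ are the code-sharing and documentation penalties.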
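The SCAS formula is straightforward to compute once its inputs are fixed. The protocol does not define how the penalty terms are scored, so the penalty value below is hypothetical. The six synthetic strain readings and the 50 µε experimental uncertainty are also hypothetical, and only strain (not implant displacement) is scored here.

```python
import math


def scas(predicted, measured, experimental_uncertainty,
         code_sharing_penalty=0.0, documentation_penalty=0.0):
    """Standardized Credibility Assessment Score as written in the protocol (sketch).

    MAE is taken across all measurement points. Penalty scoring is not specified
    by the protocol, so callers must supply their own (hypothetical) values.
    """
    if not predicted or len(predicted) != len(measured):
        raise ValueError("need equal-length, non-empty prediction and measurement lists")
    mae = sum(abs(p - m) for p, m in zip(predicted, measured)) / len(measured)
    exponent = (mae / experimental_uncertainty) ** 2 + code_sharing_penalty + documentation_penalty
    return 100.0 * math.exp(-0.5 * exponent), mae


# Hypothetical strains (microstrain) at the six predefined locations
predicted = [1050, 980, 1210, 890, 1500, 760]
measured = [1000, 1030, 1160, 940, 1450, 810]
score, mae = scas(predicted, measured, experimental_uncertainty=50.0)
score_pen, _ = scas(predicted, measured, 50.0, code_sharing_penalty=0.5)
print(f"MAE = {mae:.0f} microstrain, SCAS = {score:.1f} (with code-sharing penalty: {score_pen:.1f})")
```

When the MAE equals the experimental uncertainty and no penalties apply, the score is 100·e^(-1/2), about 61. The score reaches 100 only for zero error and no penalties.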

Diagram 1: Validation Hub Workflow for Credibility

Credibility in Regulatory Submission

Regulatory bodies such as the FDA and EMA increasingly accept computational modeling and simulation (CM&S) as evidence in submissions. Credibility is governed by frameworks such as ASME V&V 40 and the FDA's "Reporting of Computational Modeling Studies in Medical Device Submissions" guidance.

Credibility Factors for Regulatory Success

A model's Context of Use (COU) defines the required level of credibility. A higher-risk COU (e.g., predicting stent fatigue life) demands more extensive evidence than a low-risk COU (e.g., educational tool).

Table 2: FDA Submission Outcomes for CM&S (2018-2022) in Orthopedics & Cardiology

| Context of Use (COU) | Submissions Containing CM&S (%) | Requests for Additional V&V (%) | Approval Delay Attributed to Inadequate V&V (Avg. Months) |
|---|---|---|---|
| Complementary Evidence (e.g., stress trends) | 65 | 45 | 3.2 |
| Primary Evidence (e.g., implant fatigue safety) | 22 | 78 | 8.5 |
| Replace a Clinical Trial (e.g., patient-specific planning) | 13 | 92 | 14.0 |

Protocol: Building a Credibility Dossier for a Coronary Stent

Objective: Submit a computational fluid dynamics (CFD) model to demonstrate hemodynamic performance of a new stent design.

- Define COU: "To predict the time-averaged wall shear stress (TAWSS) in the stented artery, identifying regions at risk for restenosis, as complementary evidence."

- Credibility Plan: Map model sub-components (flow geometry, boundary conditions, wall compliance) to required verification & validation (V&V) activities.

- Verification: Demonstrate mesh independence (solution change < 2% with mesh refinement) and perform code verification via the method of manufactured solutions.

- Validation (hierarchical):

  - Benchmark: Compare with particle image velocimetry (PIV) data in an idealized stenotic phantom. Acceptable error: TAWSS within 15%.

  - Animal Model: Compare model-predicted low-TAWSS regions with sites of neointimal hyperplasia in a porcine study (histology correlation).

- Uncertainty Quantification: Propagate uncertainties in arterial diameter (±10%), blood viscosity (±5%), and inflow waveform to define confidence intervals on TAWSS.

- Documentation: Assemble dossier with traceable links from model requirements to V&V results and uncertainty statements.

Diagram 2: Regulatory Credibility Dossier Development

Credibility in Clinical Translation

For patient-specific clinical decision support (e.g., surgical planning), credibility requires demonstrating clinical accuracy and utility.

Clinical Validation Metrics

A 2024 meta-analysis of 15 studies on finite element (FE) analysis for fracture risk prediction showed that models with high technical credibility did not always lead to clinical utility.

Table 3: Impact of Credibility on Clinical Prediction Accuracy (Fracture Risk Assessment)

| Credibility Tier (Based on ASME V&V 40) | Number of Clinical Studies | Median AUC for Fracture Prediction | Improvement over BMD-alone Model (AUC Increase) |
|---|---|---|---|
| Tier 1 (Minimal V&V) | 5 | 0.72 | +0.04 |
| Tier 2 (Partial V&V) | 7 | 0.79 | +0.11 |
| Tier 3 (Full V&V + UQ) | 3 | 0.85 | +0.17 |

Protocol: Prospective Clinical Validation of a Patient-Specific Knee Model

Objective: Validate a musculoskeletal model for predicting patellofemoral contact force after total knee arthroplasty (TKA) against in vivo measurements from an instrumented implant.

- Patient Cohort: Recruit 10 patients receiving a telemetric knee implant.

- Pre-Op Imaging: Obtain CT scans for bone geometry and MRIs for muscle attachment sites.

- Model Personalization: Scale the generic model to the patient's anatomy. Calibrate muscle parameters using pre-operative gait analysis.

- Surgical Simulation: Virtually implant the prosthesis using the component sizes and positions recorded during surgery.

- Blinded Prediction: For each patient, predict patellofemoral contact forces during level walking, stair ascent, and stair descent.

- In Vivo Measurement: Patients perform the same activities 6 months post-operatively while the telemetric implant records the actual contact forces.

- Analysis: Calculate root-mean-square error (RMSE) and Pearson's correlation (r) between predicted and measured force waveforms. Pre-define success criterion: r > 0.8, RMSE < 20% of peak measured force.
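The analysis step and its pre-defined success criterion can be sketched as below. This assumes the predicted and measured force waveforms have already been resampled to matching time points; the force values are hypothetical.

```python
import math


def rmse(pred, meas):
    """Root-mean-square error between predicted and measured samples."""
    return math.sqrt(sum((p - m) ** 2 for p, m in zip(pred, meas)) / len(meas))


def pearson_r(pred, meas):
    """Pearson correlation coefficient between two equal-length waveforms."""
    n = len(meas)
    mp, mm = sum(pred) / n, sum(meas) / n
    cov = sum((p - mp) * (m - mm) for p, m in zip(pred, meas))
    sp = math.sqrt(sum((p - mp) ** 2 for p in pred))
    sm = math.sqrt(sum((m - mm) ** 2 for m in meas))
    return cov / (sp * sm)


def meets_criterion(pred, meas, r_min=0.8, rmse_frac_max=0.20):
    """Pre-defined rule: r > r_min and RMSE < rmse_frac_max * peak measured force."""
    r = pearson_r(pred, meas)
    frac = rmse(pred, meas) / max(abs(m) for m in meas)
    return (r > r_min and frac < rmse_frac_max), r, frac


# Hypothetical force samples (N) at matched time points of one activity.
measured = [100.0, 250.0, 400.0, 300.0, 150.0]
predicted = [110.0, 240.0, 380.0, 320.0, 140.0]
print(meets_criterion(predicted, measured))
```

Requiring both metrics matters: a prediction equal to half the measured force has r = 1 but an RMSE of roughly 33% of peak in this example, so it fails the criterion.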

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 4: Key Research Reagent Solutions for Credible Computational Biomechanics

| Item | Function/Benefit | Example Use Case |
|---|---|---|
| Standardized Geometry Repositories | Provides benchmark anatomical models for validation and inter-study comparison. | Using the "Living Heart Project" meshes for cardiac simulation validation. |
| Synthetic Bone Composites | Offers consistent, repeatable mechanical properties for physical benchmark testing. | Validating a femoral stem finite element model in a simulated implantation test. |
| Digital Image Correlation (DIC) Systems | Provides full-field, high-resolution strain measurements on physical specimens for model validation. | Measuring surface strain on a vertebra during compression testing. |
| Telemetric Implants | Enables direct in vivo measurement of forces or pressures for clinical validation of predictive models. | Validating a lumbar spine model against forces in an instrumented spinal fixation rod. |
| Uncertainty Quantification (UQ) Software Libraries | Facilitates propagation of input uncertainties (e.g., material properties) to quantify output confidence intervals. | Determining the probability that stent wall stress exceeds fatigue limit. |
| Model Sharing Platforms (e.g., Physiome Model Repository) | Ensures model reproducibility and allows peer audit of code and parameters. | Sharing a validated hemodynamics model of an aortic aneurysm for community use. |

From Theory to Practice: A Step-by-Step Methodology for Building Credible Models

Within computational biomechanics, particularly for applications in drug development and medical device evaluation, model credibility is paramount. The Context of Use (COU) is a formal, detailed specification of how a computational model is intended to be used to inform a specific decision. It is the foundational "North Star" that guides all subsequent decisions in model development, verification, validation, and uncertainty quantification. This guide establishes COU definition as the critical first step within a broader framework for achieving credible computational biomechanics research, aligning with the ASME V&V 40 standard (recognized by the FDA) and emerging Good Simulation Practice (GSP) principles.

The Anatomy of a COU: Core Components

A well-defined COU must explicitly address the following components. This structure ensures the model's purpose is unambiguous and testable.

Table 1: Core Components of a Context of Use Statement

| Component | Description | Example (Knee Implant Stress Analysis) |
|---|---|---|
| 1. Intended Decision | The specific regulatory, clinical, or engineering decision the model will inform. | To evaluate whether the von Mises stress in a novel polymer tibial insert remains below its yield strength under gait-cycle loading. |
| 2. Model Outputs of Interest | The specific, quantifiable metrics the model will produce to inform the decision. | Peak von Mises stress in the insert; stress distribution map. |
| 3. Performance Requirements | The quantitative accuracy or precision needed for the outputs to be decision-relevant. | Model must predict peak stress within ±15% of benchtop experimental measurements. |
| 4. Population & Scenarios | The biological, physiological, and physical conditions under which the model is applied. | Population: Adults (50-75 yrs) with osteoarthritis. Scenario: Normal gait, ISO 14243-1 loading profile. |
| 5. Risk Associated with Decision | The consequence of the model being wrong, which determines the required level of credibility. | Moderate risk; failure could lead to premature implant wear but not acute life-threatening failure. |

Experimental & Computational Protocols for COU-Driven Validation

The COU dictates the design of validation experiments. The workflow is a closed loop, initiated and governed by the COU.

Diagram Title: COU-Driven Model Validation and Refinement Workflow

Detailed Protocol for a Representative Validation Experiment

Objective: Validate a finite element (FE) model of femoral artery wall stress in response to blood pressure, as defined by a COU for a stent design decision.

Protocol: Ex Vivo Bovine Artery Pressure-Inflation Test

- Specimen Preparation: Fresh bovine femoral arteries (n=6) are dissected and cleaned of perivascular tissue. Segments (5 cm length) are mounted in a bioreactor chamber filled with phosphate-buffered saline (PBS) at 37°C.

- Instrumentation: The segment is connected to a computer-controlled pressure pump. A laser micrometer measures external diameter at mid-section. Intraluminal pressure is monitored via a high-fidelity transducer.

- Imaging Markers: A regular grid of ink dots is applied to the adventitial surface for digital image correlation (DIC) strain analysis.

- Experimental Sequence:

  - Pre-conditioning: Apply 10 cycles of pressure from 80 to 120 mmHg.

  - Data Acquisition: Increase pressure from 80 mmHg to 180 mmHg in 10 mmHg increments. At each step, hold for 30 seconds and record the pressure, external diameter, and a high-resolution image for DIC.

- Data Processing: DIC software calculates 2D surface strain fields (circumferential and longitudinal). The pressure-diameter data are used to calculate compliance over the physiological pressure range.

- Comparison to Simulation: The experimental geometry is reconstructed via micro-CT. The FE model is run with identical pressure boundary conditions. The simulated strain fields and diameter changes are quantitatively compared with the experimental measurements using metrics such as the coefficient of determination (R²) and the normalized root mean square error (NRMSE).
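The compliance calculation in the data-processing step can be sketched as follows. The protocol does not define "compliance" precisely, so this sketch assumes two common diameter-based definitions, C = ΔD/ΔP and distensibility = ΔD/(D₈₀·ΔP), evaluated between 80 and 120 mmHg; the pressure-diameter record is hypothetical.

```python
def interpolate(x, xs, ys):
    """Linear interpolation of y at x from ascending sample points xs."""
    for x0, x1, y0, y1 in zip(xs, xs[1:], ys, ys[1:]):
        if x0 <= x <= x1:
            return y0 + (y1 - y0) * (x - x0) / (x1 - x0)
    raise ValueError("pressure outside the measured range")


def compliance(pressures_mmhg, diameters_mm, p_low=80.0, p_high=120.0):
    """Return (compliance in mm/mmHg, distensibility in 1/mmHg) between p_low and p_high.

    Assumed definitions: C = dD/dP and distensibility = dD / (D_low * dP).
    """
    d_low = interpolate(p_low, pressures_mmhg, diameters_mm)
    d_high = interpolate(p_high, pressures_mmhg, diameters_mm)
    c = (d_high - d_low) / (p_high - p_low)
    return c, c / d_low


# Hypothetical pressure-diameter record for the 80-180 mmHg protocol (10 mmHg steps).
pressures = list(range(80, 181, 10))
diameters = [6.00, 6.08, 6.15, 6.21, 6.26, 6.30, 6.33, 6.35, 6.37, 6.38, 6.39]
c, dist = compliance(pressures, diameters)
print(f"compliance {c:.4f} mm/mmHg, distensibility {dist:.5f} 1/mmHg")
```

Because arterial tissue stiffens with pressure, the value depends strongly on the pressure window, which is why the COU should state the range over which compliance is reported.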

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Ex Vivo Vascular Biomechanics Experiments

| Item | Function | Example/Supplier |
|---|---|---|
| Ex Vivo Bioreactor System | Maintains physiological temperature and environment for vascular tissue during mechanical testing. | Bose BioDynamic 5110; Instron BioPuls. |
| Digital Image Correlation (DIC) System | Non-contact optical method to measure full-field 2D or 3D surface deformation and strain. | Correlated Solutions VIC-2D/3D; Dantec Dynamics Q-400. |
| High-Fidelity Pressure Transducer | Accurately measures intraluminal fluid pressure with low hysteresis and high frequency response. | Millar SPR-350 catheter transducer; Honeywell sensing elements. |
| Pseudo-Physiological Saline Solution | Bathing solution that maintains tissue hydration and ionic balance, preventing artifact-inducing degradation. | Dulbecco's PBS (DPBS), pH 7.4, with 1 g/L glucose. |
| Micro-Computed Tomography (Micro-CT) Scanner | High-resolution 3D imaging to capture reference geometry for accurate FE model reconstruction. | Bruker Skyscan 1272; Scanco Medical µCT 50. |
| Finite Element Analysis Software | Platform for building, solving, and post-processing computational biomechanics models. | Simulia Abaqus; ANSYS Mechanical; COMSOL Multiphysics. |

Quantitative Validation Metrics: From Data to Decision

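The COU's performance requirements are tested using quantitative metrics. The following table summarizes common metrics used in computational biomechanics validation, where $y_i$ is the i-th experimental measurement, $\hat{y}_i$ the corresponding model prediction, $\bar{y}$ the mean measurement, and $n$ the number of paired points.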

Table 3: Common Quantitative Metrics for Model Validation

| Metric | Formula | Interpretation & Application |
|---|---|---|
| Coefficient of Determination (R²) | $R^2 = 1 - SS_{\mathrm{res}}/SS_{\mathrm{tot}}$, where $SS_{\mathrm{res}} = \sum_i (y_i - \hat{y}_i)^2$ and $SS_{\mathrm{tot}} = \sum_i (y_i - \bar{y})^2$ | Proportion of variance in the experimental data explained by the model. Target: R² > 0.9 for high confidence. |
| Normalized Root Mean Square Error (NRMSE) | $\mathrm{NRMSE} = \mathrm{RMSE}/(y_{\max} - y_{\min})$, where $\mathrm{RMSE} = \sqrt{\tfrac{1}{n}\sum_i (y_i - \hat{y}_i)^2}$ | Normalized measure of average error magnitude. Target: NRMSE < 0.15 (15%), a typical COU requirement. |
| Mean Absolute Percentage Error (MAPE) | $\mathrm{MAPE} = \tfrac{100\%}{n}\sum_i \left\lvert (y_i - \hat{y}_i)/y_i \right\rvert$ | Average absolute percentage error. Undefined when any $y_i = 0$ and inflated by measured values near zero. |
| Pass/Fail Criteria Based on Tolerance | Accept if $\lvert y_i - \hat{y}_i \rvert < \text{tolerance}$ for all $i$ | Direct binary check against a predefined tolerance (e.g., ±10% stress, ±1 mm displacement). |
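The four metrics in Table 3 can be computed directly from paired measurement/prediction lists. The following is a minimal plain-Python sketch, assuming `y` holds experimental values and `y_hat` the matching model predictions; the sample values are illustrative only.

```python
import math


def r_squared(y, y_hat):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_bar = sum(y) / len(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, y_hat))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


def nrmse(y, y_hat):
    """RMSE normalized by the range of the measured data."""
    rmse = math.sqrt(sum((yi - fi) ** 2 for yi, fi in zip(y, y_hat)) / len(y))
    return rmse / (max(y) - min(y))


def mape(y, y_hat):
    """Mean absolute percentage error (%); undefined if any measurement is zero."""
    if any(yi == 0 for yi in y):
        raise ValueError("MAPE is undefined when a measured value equals zero")
    return 100.0 / len(y) * sum(abs((yi - fi) / yi) for yi, fi in zip(y, y_hat))


def within_tolerance(y, y_hat, tol):
    """Pass/fail: every absolute error must be below the tolerance."""
    return all(abs(yi - fi) < tol for yi, fi in zip(y, y_hat))


measured = [1.0, 2.0, 3.0, 4.0]        # illustrative experimental values
predicted = [1.1, 1.9, 3.2, 3.8]       # illustrative model predictions
print(r_squared(measured, predicted), nrmse(measured, predicted),
      mape(measured, predicted), within_tolerance(measured, predicted, tol=0.25))
```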

Logical Framework: From COU to Credible Model

The relationship between COU, credibility activities, and the final decision is a structured logical hierarchy.

Diagram Title: COU as the Foundation for Model Credibility Activities

Within the broader thesis on Standards for Credibility of Computational Biomechanics Models, Step 2, Systematic Model Formulation and Assumption Management, serves as the critical bridge between conceptual modeling and mathematical instantiation. This phase transforms a qualitative understanding of a biomechanical system—such as arterial wall stress, bone remodeling, or cartilage contact mechanics—into a rigorous, testable mathematical framework. It demands explicit documentation of governing equations, boundary and initial conditions, constitutive laws, and, most importantly, a structured inventory of all associated assumptions. For researchers, scientists, and drug development professionals, this process is foundational to model credibility, reproducibility, and regulatory acceptance, as it directly addresses the "Why?" and "How?" behind the model's construction.

Core Components of Model Formulation

Model formulation decomposes the biological-physical system into defined components. Each requires deliberate choices grounded in physiology and physics.

Governing Equations

These are the fundamental physical conservation laws applied to the continuum domain.

- Balance of Linear Momentum: ∇·σ + ρb = ρa, where σ is the Cauchy stress tensor, ρ the mass density, b the body force per unit mass, and a the acceleration.

- Balance of Mass (Continuity Equation): ∂ρ/∂t + ∇·(ρv) = 0.

- Constitutive Equations: Mathematical relationships describing the material-specific response to mechanical stimuli (e.g., stress-strain relationship).

Boundary and Initial Conditions (BCs & ICs)

BCs define interactions with the environment; ICs define the system state at time zero.

- Dirichlet (Essential) BC: Prescribed displacement or velocity (e.g., fixed end of a tendon).

- Neumann (Natural) BC: Prescribed traction or force (e.g., applied pressure on a vessel wall).

- Initial Conditions: Initial displacement, velocity, or stress fields within the domain.
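To make the two boundary-condition types concrete, the sketch below solves a hypothetical 1D linear-elastic bar with linear finite elements: the left end is fixed (Dirichlet, u = 0) and an axial point load F acts at the free end (Neumann). With no body force and static loading, the momentum balance reduces to d/dx(EA du/dx) = 0, whose exact solution u(x) = Fx/(EA) doubles as an analytical benchmark. The length, stiffness, and load are illustrative values in consistent units.

```python
def solve_tridiagonal(a, b, c, d):
    """Thomas algorithm: a = sub-, b = main, c = super-diagonal, d = right-hand side."""
    n = len(b)
    cp, dp = [0.0] * n, [0.0] * n
    cp[0], dp[0] = c[0] / b[0], d[0] / b[0]
    for i in range(1, n):
        m = b[i] - a[i] * cp[i - 1]
        cp[i] = c[i] / m
        dp[i] = (d[i] - a[i] * dp[i - 1]) / m
    x = [0.0] * n
    x[-1] = dp[-1]
    for i in range(n - 2, -1, -1):
        x[i] = dp[i] - cp[i] * x[i + 1]
    return x


def bar_displacements(length, ea, force, n_elem):
    """Nodal displacements of a bar fixed at x = 0 with an axial end load at x = length."""
    h = length / n_elem
    k = ea / h                      # element axial stiffness EA/h
    n = n_elem                      # unknowns: nodes 1..n_elem (node 0 removed by u = 0)
    a = [-k] * n                    # sub-diagonal (a[0] unused)
    b = [2.0 * k] * n               # main diagonal: interior nodes touch two elements
    b[-1] = k                       # free-end node touches one element
    c = [-k] * n                    # super-diagonal (c[-1] unused)
    d = [0.0] * n                   # no body force
    d[-1] = force                   # Neumann BC: point load at the free end
    return [0.0] + solve_tridiagonal(a, b, c, d)   # prepend the Dirichlet node


u = bar_displacements(length=2.0, ea=1000.0, force=50.0, n_elem=8)
print(u[-1])  # compare with F*L/(EA) = 0.1
```

Linear elements reproduce this linear solution exactly at the nodes, so any discrepancy beyond round-off would point to an implementation error rather than discretization error.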

Spatial and Temporal Scales

Explicitly defining the spatial and temporal scales prevents the model from conflating phenomena that operate at different scales (e.g., modeling a cellular response with continuum-level equations).

The Assumption Management Framework

Assumptions are inevitable simplifications. Systematic management involves cataloging, justifying, and grading their potential impact.

Assumption Taxonomy

A structured categorization ensures comprehensive tracking.

Table 1: Taxonomy of Model Assumptions in Computational Biomechanics

| Category | Sub-Category | Example | Potential Impact on Credibility |
|---|---|---|---|
| Geometric | Idealization | Modeling a complex femur as a simplified cylinder. | High impact on local stress concentrations. |
| Geometric | Symmetry | Assuming axial symmetry in an aortic aneurysm model. | Reduces computational cost; may miss asymmetric features. |
| Material | Constitutive Law | Modeling bone as linear elastic and isotropic. | High impact for loads beyond the elastic regime. |
| Material | Homogeneity | Assuming uniform density in trabecular bone. | Neglects local variations that influence failure. |
| Loading & BCs | Load Simplification | Modeling gait as a static load case. | Misses dynamic and fatigue-relevant effects. |
| Loading & BCs | Boundary Fixity | Assuming a perfectly fixed implant-bone interface. | Overestimates stability if micromotion occurs. |
| Numerical | Mesh Independence | Using an element size not verified for convergence. | Results may be quantitatively unreliable. |
| Numerical | Solver Tolerance | Using a coarse solver tolerance for contact. | May cause non-physical penetration or instability. |

Assumption Justification and Impact Grading

Each assumption must be justified by literature, experimental data, or sensitivity analysis. Impact is graded as Low, Medium, or High based on its potential to alter primary model outputs or conclusions.

Experimental Protocol: Sensitivity Analysis for Assumption Impact Assessment

- Objective: Quantify the influence of a specific assumption on key model outputs (e.g., peak stress, strain energy).

- Methodology:

  - Define Baseline Model: Implement the model with the standard assumption (e.g., isotropic material).

  - Identify Parameter/Model Variant: Define the alternative, more complex representation (e.g., transversely isotropic material with a defined fiber direction).

  - Define Output Metrics: Select quantities of interest (QOIs), e.g., maximum principal stress at a critical location.

  - Perturb System: Run simulations for both the baseline and the variant models under identical loading conditions.

  - Quantify Difference: Calculate the relative difference in each QOI: $\Delta_{\mathrm{QOI}} = \lvert \mathrm{QOI}_{\mathrm{variant}} - \mathrm{QOI}_{\mathrm{baseline}} \rvert \, / \, \lvert \mathrm{QOI}_{\mathrm{baseline}} \rvert$.

- Interpretation: A $\Delta_{\mathrm{QOI}}$ above 10% (a common threshold) suggests that the assumption has a high impact and warrants careful justification or refinement.
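The difference-and-threshold step of this protocol can be automated over an assumption inventory. The sketch below encodes only the 10% cut stated above; a full Low/Medium/High grading would need additional thresholds that the text does not specify. The assumption names and stress values are hypothetical.

```python
def relative_change(qoi_baseline, qoi_variant):
    """Delta_QOI = |QOI_variant - QOI_baseline| / |QOI_baseline|."""
    if qoi_baseline == 0:
        raise ValueError("baseline QOI is zero; use an absolute difference instead")
    return abs(qoi_variant - qoi_baseline) / abs(qoi_baseline)


def screen_assumptions(results, threshold=0.10):
    """Flag assumptions whose Delta_QOI exceeds the threshold (default 10%)."""
    report = {}
    for name, (baseline, variant) in results.items():
        delta = relative_change(baseline, variant)
        report[name] = (delta, "high impact" if delta > threshold else "below threshold")
    return report


# Hypothetical peak maximum principal stress (MPa): (baseline, variant) per assumption.
results = {
    "isotropic vs. transversely isotropic": (42.0, 48.3),
    "static vs. dynamic gait load": (42.0, 44.1),
}
for name, (delta, flag) in screen_assumptions(results).items():
    print(f"{name}: {delta:.1%} -> {flag}")
```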

Workflow for Systematic Formulation

The following diagram outlines the iterative, decision-based workflow for this phase.

Diagram 1: Model Formulation & Assumption Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Tools for Model Formulation & Validation

| Item / Solution | Function in Model Formulation & Credibility |
|---|---|
| High-Resolution μCT/Micro-MRI Scanner | Provides precise 3D geometry for model reconstruction and internal microstructure data for heterogeneity assessment. |
| Biaxial/Triaxial Mechanical Tester | Generates multi-directional stress-strain data essential for deriving and calibrating anisotropic constitutive laws. |
| Digital Image Correlation (DIC) System | Provides full-field experimental strain maps on tissue surfaces for direct quantitative validation of model strain predictions. |
| Literature Mining & Database Software | Enables systematic review to justify assumptions based on prior published biomechanical data (e.g., material properties). |
| Sensitivity Analysis Toolkits | Software libraries (e.g., SALib, Dakota) or built-in FEA modules to automate impact assessment of parameter/assumption uncertainty. |
| Ontologies (e.g., FMA, OBI) | Formal, controlled vocabularies (Foundational Model of Anatomy, Ontology for Biomedical Investigations) to ensure consistent, unambiguous description of model components and processes. |
| Model Description Language (e.g., CellML, FieldML) | Standardized formats for encoding the mathematical model independently of solution code, enhancing reproducibility and exchange. |

Application in Drug Development: A Case Framework

Consider modeling arterial wall stress to predict aneurysm rupture risk—a key application in cardiovascular drug development.

- Formulation: Governed by equations of finite elasticity for an incompressible, thick-walled tube.

- Critical Assumptions:

  - Material: Artery modeled as a homogeneous, isotropic, hyperelastic (e.g., Yeoh) material.

    - Justification: Lacks patient-specific collagen fiber orientation data; a common simplification in population studies.

    - Impact Grading: High. Rupture is fiber-driven. Mitigated by calibrating model to patient-specific pressure-diameter data.

  - Loading: Assumes static peak systolic pressure.

    - Justification: Lacks dynamic pressure waveform; simplifies computation.

    - Impact Grading: Medium. Misses fatigue but captures peak stress instant.

- Validation Protocol: Predicted bulge geometry vs. CT; predicted wall strain vs. MRI-based tissue tagging.

Step 2, Systematic Model Formulation and Assumption Management, is not a passive documentation exercise but an active, critical reasoning process. It forces the explicit articulation of the model's relationship to the target biomechanical system. By providing a structured framework for assumption inventory, justification, and impact assessment—supported by targeted experimental protocols and tools—this step lays the essential foundation for credibility. It creates the auditable trail that allows other researchers, regulatory reviewers, and drug development teams to understand the model's limitations and trust its predictions, thereby advancing the standards for credible computational biomechanics.

This whitepaper details the third pillar of a proposed framework for establishing credibility in computational biomechanics models. Within the broader thesis, Standards for Credibility of Computational Biomechanics Models Research, Step 3 is dedicated to ensuring the numerical correctness, stability, and reliability of the computational solution of the underlying mathematical equations. It moves beyond defining the Context of Use (Step 1) and systematic model formulation (Step 2) to demand evidence that the equations are being solved accurately.

A biomechanical model, no matter how conceptually sound and mathematically rigorous, is only as credible as its computational implementation. Rigorous Computational Verification (RCV) isolates the numerical solution from physical modeling errors to confirm that the governing equations are solved with acceptable accuracy. This involves a hierarchy of techniques, from code verification to solution verification, providing the foundational trust in the digital tool before it is applied to physical reality.

Core Methodologies for Verification

The following table summarizes the primary quantitative methods and benchmarks used in RCV. Detailed protocols for the Method of Manufactured Solutions and the Grid Convergence Index study follow.

Table 1: Hierarchy of Computational Verification Techniques

| Technique | Primary Objective | Quantitative Metric(s) | Acceptance Criteria |
|---|---|---|---|
| Method of Manufactured Solutions (MMS) | Verify code correctness and order of accuracy. | Observed order of accuracy (p); discretization error. | p approaches the theoretical order of accuracy; error decreases systematically with grid/time-step refinement. |
| Analytical/Numerical Benchmark Comparison | Verify solution against a known canonical result. | Relative error (L₂ norm); point-wise difference. | Error ≤ predefined tolerance (e.g., 1% or 0.1% relative error). |
| Grid Convergence Index (GCI) Study | Quantify numerical uncertainty due to discretization. | GCI value (as a percentage of the solution). | GCI is acceptably small for the application; asymptotic convergence is demonstrated. |
| Sensitivity Analysis (Numerical Parameters) | Assess stability and robustness of solver. | Variation in key output variables (e.g., max stress, flow rate). | Solution is insensitive to perturbations in solver tolerances, artificial diffusion, etc. |

Experimental Protocol: Method of Manufactured Solutions (MMS)

- Objective: To verify that the software implementation solves the intended set of partial differential equations (PDEs) correctly.

- Procedure:

  - Manufacture: Choose an arbitrary, sufficiently smooth analytical function for all dependent variables (e.g., displacement, pressure, velocity).

  - Operate: Substitute the manufactured solution into the governing PDEs. This generally yields a non-zero residual, because the chosen function is not a solution of the original equations.

  - Source Term: Define this residual as a "source term" or "forcing function" to be added to the original PDEs.

  - Solve: Run the computational code with this new source term and the appropriate boundary conditions derived from the manufactured solution.

  - Compare & Converge: Compute the error between the numerical solution and the manufactured analytical solution. Repeat the simulations on progressively refined spatial and temporal grids.

  - Calculate Order: Determine the observed order of accuracy (p) from the rate at which the error decays between a coarse and a fine grid:

    $$p = \frac{\ln\left(E_{\mathrm{coarse}} / E_{\mathrm{fine}}\right)}{\ln r},$$

    where r is the refinement ratio (e.g., r = 2 when the grid spacing is halved).

- Success Criteria: The observed order of accuracy (p) matches the theoretical order of the discretization scheme (e.g., p = 2 for a second-order accurate finite element method).
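The MMS procedure can be demonstrated end to end on a deliberately small problem. This sketch assumes a 1D Poisson equation, −u″ = f on (0, 1), discretized with second-order central finite differences as a stand-in for a biomechanics solver. The manufactured solution u_m(x) = sin(πx) + x yields the source term f = π² sin(πx) and the boundary values u(0) = 0, u(1) = 1; the observed order is p = ln(E_coarse/E_fine)/ln r with r = 2, using the maximum nodal error.

```python
import math


def solve_tridiagonal(a, b, c, d):
    """Thomas algorithm: a = sub-, b = main, c = super-diagonal, d = right-hand side."""
    n = len(b)
    cp, dp = [0.0] * n, [0.0] * n
    cp[0], dp[0] = c[0] / b[0], d[0] / b[0]
    for i in range(1, n):
        m = b[i] - a[i] * cp[i - 1]
        cp[i] = c[i] / m
        dp[i] = (d[i] - a[i] * dp[i - 1]) / m
    x = [0.0] * n
    x[-1] = dp[-1]
    for i in range(n - 2, -1, -1):
        x[i] = dp[i] - cp[i] * x[i + 1]
    return x


def u_manufactured(x):
    return math.sin(math.pi * x) + x


def source(x):
    # f = -u_m''(x) for u_m = sin(pi x) + x
    return math.pi ** 2 * math.sin(math.pi * x)


def max_error(n):
    """Solve -u'' = f on n uniform cells and return the max nodal error vs. u_m."""
    h = 1.0 / n
    xs = [i * h for i in range(1, n)]           # interior nodes
    m = n - 1
    a = [-1.0 / h ** 2] * m
    b = [2.0 / h ** 2] * m
    c = [-1.0 / h ** 2] * m
    d = [source(x) for x in xs]
    d[0] += u_manufactured(0.0) / h ** 2        # Dirichlet data taken from u_m
    d[-1] += u_manufactured(1.0) / h ** 2
    u = solve_tridiagonal(a, b, c, d)
    return max(abs(ui - u_manufactured(x)) for ui, x in zip(u, xs))


def observed_orders(cells, r=2):
    """Observed order p = ln(E_coarse / E_fine) / ln(r) for successive grids."""
    errors = [max_error(n) for n in cells]
    return errors, [math.log(ec / ef) / math.log(r) for ec, ef in zip(errors, errors[1:])]


errors, orders = observed_orders([8, 16, 32, 64])
print(orders)
```

Observed orders near 2 match the scheme's theoretical second-order accuracy. Introducing a bug (for example, a wrong sign in `source`) would stop the error from converging to zero, which is what makes MMS a sensitive code check.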

Experimental Protocol: Grid Convergence Index (GCI) Study

- Objective: To estimate the numerical uncertainty due specifically to spatial and temporal discretization in simulations for which no analytical solution exists.

- Procedure (for a systematic grid refinement study):

  - Generate Grids: Create three or more systematically refined computational grids (or time-step sizes). A constant refinement ratio r (e.g., r = √2 or r = 2) is recommended.

  - Run Simulations: Execute the simulation on each grid, recording a key quantity of interest (φ), such as peak wall shear stress or drag force.

  - Calculate Apparent Order: Use the solutions from the three finest grids to compute the apparent order of convergence (p).

  - Extrapolate: Estimate the asymptotic value of the quantity of interest as grid size approaches zero, $\phi_{\mathrm{ext}}^{21}$, using Richardson extrapolation.

  - Compute GCI: Calculate the Grid Convergence Index for the fine-grid solution:

    $$\mathrm{GCI}_{\mathrm{fine}} = \frac{F_s\,\lvert\varepsilon\rvert}{r^{p} - 1},$$

    where $\varepsilon = (\phi_1 - \phi_2)/\phi_1$ is the relative difference between the fine-grid ($\phi_1$) and medium-grid ($\phi_2$) solutions, and $F_s$ is a safety factor (typically 1.25 for three-grid studies).

- Success Criteria: The GCI provides a quantitative error band (e.g., GCI = 2.4%) on the reported solution, allowing researchers to state the result with a known level of numerical uncertainty.
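A GCI calculator for the three-grid case can be quite short. This sketch assumes a constant refinement ratio and monotone convergence, computes the apparent order as p = ln((φ₃ − φ₂)/(φ₂ − φ₁))/ln r, and applies Richardson extrapolation and the GCI formula with F_s = 1.25. The input values are synthetic, generated from φ(h) = 1 + 0.5h² so the correct answers (p = 2, φ_ext = 1) are known.

```python
import math


def gci_three_grid(phi_fine, phi_medium, phi_coarse, r, fs=1.25):
    """Three-grid GCI with a constant refinement ratio r > 1.

    Returns (apparent order p, Richardson-extrapolated value, GCI_fine as a fraction).
    """
    e21 = phi_medium - phi_fine
    e32 = phi_coarse - phi_medium
    if e21 == 0 or e32 / e21 <= 1:
        raise ValueError("not monotonically converging; GCI is not applicable as-is")
    p = math.log(e32 / e21) / math.log(r)                      # apparent order
    phi_ext = (r ** p * phi_fine - phi_medium) / (r ** p - 1)  # Richardson extrapolation
    eps = abs((phi_fine - phi_medium) / phi_fine)              # relative fine-medium difference
    return p, phi_ext, fs * eps / (r ** p - 1)


# Synthetic data from phi(h) = 1 + 0.5*h**2 on h = 0.1, 0.2, 0.4 (r = 2).
p, phi_ext, gci = gci_three_grid(1.005, 1.02, 1.08, r=2.0)
print(f"p = {p:.2f}, phi_ext = {phi_ext:.4f}, GCI_fine = {100 * gci:.2f}%")
```

Oscillatory or non-converging sequences raise an error here rather than producing a misleading GCI; published guidelines discuss how to handle those cases.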

Visualization of the Verification Workflow

Title: RCV Workflow from Model to Verified Solution

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Rigorous Computational Verification

| Item / Solution | Function in Verification |
|---|---|
| Code Verification Test Suite (e.g., MMS Generator) | Automated framework to generate analytical source terms and compute error norms, essential for continuous integration testing. |
| High-Order Accurate Solver | A computational solver with a documented theoretical order of accuracy (e.g., 2nd order) against which observed convergence can be measured. |
| Mesh Generation & Refinement Tool | Software capable of producing a sequence of nested or systematically refined computational grids (hexahedral, tetrahedral) with known refinement ratios. |
| Benchmark Problem Database | A curated collection of canonical problems with high-fidelity numerical or analytical solutions (e.g., FDA's CFD benchmarks, Ascher's test problems). |
| Richardson Extrapolation & GCI Calculator | Scripts/tools to perform convergence analysis, Richardson extrapolation, and calculate the Grid Convergence Index from a set of solutions. |
| Sensitivity Analysis Dashboard | A parameter study tool to vary numerical parameters (solver tolerance, artificial viscosity) and visualize their impact on outputs. |

1.0 Introduction: The Validation Imperative in Credible Computational Biomechanics

Within the framework of Standards for Credibility of Computational Biomechanics Models, Step 4 represents the critical pivot from in silico prediction to experimental validation. It is the process of "Solving the Right Equations": identifying and experimentally testing the specific, falsifiable hypotheses generated by the model that are most consequential to its predictive claim. This step moves beyond generic correlation to strategic interrogation of the model's mechanistic underpinnings, ensuring it captures the correct physics and biology, not just favorable outcomes.

2.0 Core Principles of Strategic Validation

Strategic validation is hypothesis-driven, not data-driven. It requires:

- Identifying Model-Predicted Critical Thresholds: Quantities (e.g., shear stress > 2.5 Pa) where the model predicts a dramatic shift in biological response.

- Probing Mechanistic Pathways: Testing intermediate variables within the modeled pathway (e.g., strain-induced integrin activation prior to YAP nuclear translocation).

- Leveraging Perturbation Analysis: Using experimental interventions (knockdown, inhibition, mechanical disruption) predicted by the model to alter the system state in a specific, quantifiable manner.

3.0 Quantitative Landscape of Key Validation Targets

The following table synthesizes current quantitative benchmarks and targets for validation in computational biomechanics, as derived from recent literature.

Table 1: Strategic Validation Targets & Quantitative Benchmarks

| Validation Target | Typical Experimental Readout | Quantitative Range / Threshold (Examples) | Relevance to Model Credibility |
|---|---|---|---|
| Cellular Strain/Stress | Traction Force Microscopy (TFM), FRET-based biosensors | Traction stresses: 0.1 - 10 kPa; ERK activity EC₅₀ at ~4% strain | Validates the input mechanical stimulus predicted by the model. |
| Cytoskeletal Remodeling | Fluorescence intensity, F-actin alignment index | Alignment index > 0.7 under > 1 Pa shear; cortical-to-cytoplasmic ratio changes | Tests the model's prediction of cytoskeletal adaptation mechanics. |
| Nuclear Mechanotransduction | YAP/TAZ nuclear-to-cytoplasmic ratio | N/C ratio > 2.0 defined as "active"; response threshold at ~5% substrate strain | Validates the downstream transcriptional output pathway. |
| Paracrine Signaling Output | ELISA/MSD for cytokines (e.g., TGF-β, IL-8) | e.g., IL-8 secretion > 2-fold increase under cyclic stretch vs. static | Tests model predictions of multicellular communication outcomes. |
| Barrier Integrity | Transendothelial Electrical Resistance (TEER), Permeability coefficient | TEER > 1500 Ω·cm² for intact barrier; Permeability < 5 x 10⁻⁶ cm/s | Validates functional tissue-scale predictions of model. |

4.0 Detailed Experimental Protocols for Strategic Validation

Protocol 4.1: Traction Force Microscopy (TFM) for Model-Input Validation

Purpose: To experimentally measure the tractions exerted by cells on their substrate, providing direct validation for finite element-predicted stress/strain fields.

Materials: Polyacrylamide (PAA) hydrogels (1-15 kPa) with embedded 0.2 μm fluorescent beads, fibronectin or collagen for coating, and a live-cell imaging microscope.

Methodology:

- Fabricate fluorescent bead-embedded PAA gels of a stiffness matching the computational model.

- Image the bead positions in a relaxed, cell-free state (reference image).

- Seed cells (e.g., vascular endothelial cells) onto the gel and allow adhesion (4-6 hrs).

- Image bead positions under cell-loaded conditions.

- Lyse the cells (e.g., with 1% SDS) and re-image the bead positions to obtain the final, traction-free reference.

- Use particle image velocimetry (PIV) to compute the bead displacement field between the loaded and reference images, then apply Fourier transform traction cytometry (FTTC) to reconstruct the traction stresses from that displacement field.

- Statistically compare the spatial distribution and magnitude of experimental tractions to model predictions.
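The displacement step of this TFM pipeline rests on the cross-correlation idea behind PIV: find the shift that best aligns a window of the reference bead image with the loaded image. The sketch below is a deliberately minimal, integer-pixel, un-normalized version for a single interrogation window, using synthetic Gaussian "beads" (the `bead_image` helper is hypothetical test data). Production PIV adds windowing, normalization, and sub-pixel peak fitting, and the FTTC traction inversion is not shown.

```python
import math


def bead_image(size, beads, sigma=1.0):
    """Synthetic image with Gaussian spots at (row, col) bead centres."""
    return [[sum(math.exp(-((x - bx) ** 2 + (y - by) ** 2) / (2 * sigma ** 2))
                 for by, bx in beads)
             for x in range(size)]
            for y in range(size)]


def best_shift(ref, cur, max_shift):
    """Integer (dy, dx) maximizing sum(ref[y][x] * cur[y+dy][x+dx]) over the overlap."""
    rows, cols = len(ref), len(ref[0])
    best, best_score = (0, 0), float("-inf")
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            score = 0.0
            for y in range(max(0, -dy), min(rows, rows - dy)):
                for x in range(max(0, -dx), min(cols, cols - dx)):
                    score += ref[y][x] * cur[y + dy][x + dx]
            if score > best_score:
                best, best_score = (dy, dx), score
    return best


ref = bead_image(20, [(5, 5), (10, 12)])
cur = bead_image(20, [(7, 4), (12, 11)])   # beads moved by (dy, dx) = (2, -1)
print(best_shift(ref, cur, max_shift=4))
```

Repeating this over a grid of interrogation windows yields the displacement field that FTTC then converts into tractions.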

Protocol 4.2: FRET-based Biosensor Imaging for Pathway Interrogation

Purpose: To dynamically validate predicted activity levels of key signaling molecules (e.g., Rac1, ERK) in response to modeled mechanical stimuli.

Materials: Cells stably expressing a FRET biosensor (e.g., RaichuEV-Rac1, EKAR), a fluid shear stress device or cyclic stretch chamber, and a fast-acquisition inverted fluorescence microscope with FRET filter sets.

Methodology:

- Plate biosensor-expressing cells on appropriate imaging substrates.

- Calibrate the microscope for the CFP/YFP FRET pair, correcting for bleed-through and photobleaching.

- Apply the precise mechanical stimulus (magnitude, duration, waveform) used in the simulation.

- Acquire time-lapse images of donor (CFP) and acceptor (YFP) emission simultaneously.

- Calculate the FRET ratio (YFP/CFP intensity) on a per-cell basis over time.

- Compare the kinetics and magnitude of the FRET ratio changes (signaling activity) with the time course predicted by a coupled biochemical-mechanical model.
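The ratio calculation in this protocol can be sketched for a single cell as follows. The sketch assumes the YFP and CFP values are mean intensities within one cell mask, already corrected for bleed-through and photobleaching, and that a cell-free region supplies the background values. Normalizing to the pre-stimulus frames (here a hypothetical first `n_baseline` frames) yields a relative activity time course that can be set against the model's prediction.

```python
def fret_ratio_timecourse(yfp, cfp, yfp_bg, cfp_bg, n_baseline):
    """Background-subtracted YFP/CFP ratio per frame, normalized to the pre-stimulus mean."""
    ratios = []
    for acceptor, donor in zip(yfp, cfp):
        donor_corr = donor - cfp_bg
        if donor_corr <= 0:
            raise ValueError("donor signal at or below background")
        ratios.append((acceptor - yfp_bg) / donor_corr)
    baseline = sum(ratios[:n_baseline]) / n_baseline
    return [r / baseline for r in ratios]


# Hypothetical mean intensities for one cell: 3 pre-stimulus frames, then 2 stimulated frames.
yfp = [300.0, 300.0, 300.0, 420.0, 480.0]
cfp = [250.0, 250.0, 250.0, 230.0, 220.0]
norm = fret_ratio_timecourse(yfp, cfp, yfp_bg=100.0, cfp_bg=100.0, n_baseline=3)
print([round(v, 3) for v in norm])
```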

5.0 Visualizing the Validation Framework and Pathways

Title: Strategic Validation Workflow for Model Credibility

Title: Key YAP Mechanotransduction Pathway for Validation

6.0 The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for Strategic Mechanobiology Validation

| Reagent / Material | Supplier Examples | Function in Validation |
|---|---|---|
| Tunable Polyacrylamide Hydrogels | Matrigen, BioTribes, in-house fabrication | Provides a well-defined, isotropic substrate with controllable stiffness for TFM and 2D mechanosensing studies. |
| FRET-based Biosensor Plasmids | Addgene (K. Hahn, M. Matsuda labs), MoBiTec | Enables live-cell, spatiotemporal quantification of signaling molecule activity (Rac, Rho, ERK) in response to stimulus. |
| Small Molecule Inhibitors (e.g., Blebbistatin, Y-27632) | Tocris, Sigma-Aldrich, Cayman Chemical | Allows precise perturbation of specific mechanotransduction nodes (myosin II, ROCK) to test model causality. |
| siRNA/shRNA Libraries (Targeting Integrins, YAP/TAZ) | Horizon Discovery, Sigma-Aldrich, Qiagen | Enables genetic knockdown of predicted critical pathway components to validate their necessity. |
| Microfluidic Shear Stress Devices | Ibidi, Cherry Biotech, Elveflow | Applies precise, laminar fluid shear stress waveforms to cells for vascular or bone fluid flow model validation. |
| Cyclic Stretch Culture Systems | Flexcell, Strex, EBERS | Applies controlled uniaxial or equibiaxial strain to validate models of lung, heart, or muscle mechanics. |
| Antibody Panel for Mechanotransduction (pY397-FAK, Nuclear YAP) | Cell Signaling Technology, Abcam, Santa Cruz | Provides standard immunofluorescence or Western blot endpoints for pathway activation quantification. |

Within the broader thesis on establishing standards for credibility in computational biomechanics models, Step 5 represents a critical phase: Comprehensive Sensitivity Analysis and Uncertainty Quantification (SA/UQ). This process systematically evaluates how uncertainties in model inputs (parameters, boundary conditions, geometry) propagate to uncertainties in model outputs and identifies which inputs are most influential. For models used in drug development and biomechanics research—where predictions may inform clinical decisions—rigorous SA/UQ is non-negotiable for establishing predictive credibility and quantifying confidence in results.

Theoretical Framework for SA/UQ in Computational Biomechanics

The core objective is to treat the computational model $\mathcal{M}$ as a function mapping a set of $d$ uncertain input parameters $\mathbf{x} = (x_1, x_2, \ldots, x_d)$ to outputs of interest $\mathbf{y} = \mathcal{M}(\mathbf{x})$. Uncertainty in $\mathbf{x}$, characterized by probability distributions, leads to uncertainty in $\mathbf{y}$. SA/UQ decomposes this relationship.

Global Sensitivity Analysis (GSA): Quantifies the contribution of each input parameter \( x_i \) to the output variance, accounting for interactions between parameters. The Sobol' variance-based method is a gold standard. The total-order Sobol' index \( S_{T_i} \) measures the total effect of \( x_i \), including all interactions:

\[ S_{T_i} = \frac{\mathbb{E}_{\mathbf{x}_{\sim i}}\left[\mathbb{V}_{x_i}(y \mid \mathbf{x}_{\sim i})\right]}{\mathbb{V}(y)} \]

where \( \mathbf{x}_{\sim i} \) denotes all parameters except \( x_i \).
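For reference, the first-order index used alongside \( S_{T_i} \) in the protocols below measures the main effect of \( x_i \) alone. The standard definition, together with the bounds that hold when the inputs are independent, is:

\[
S_i = \frac{\mathbb{V}_{x_i}\left(\mathbb{E}_{\mathbf{x}_{\sim i}}[y \mid x_i]\right)}{\mathbb{V}(y)}, \qquad 0 \le S_i \le S_{T_i} \le 1, \qquad \sum_{i=1}^{d} S_i \le 1 .
\]

Under independence, the gap \( S_{T_i} - S_i \) is the share of output variance due to interactions involving \( x_i \), and \( \sum_i S_i = 1 \) only for a purely additive model.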

Uncertainty Quantification: Propagates input uncertainties through \( \mathcal{M} \) to construct a probability distribution or confidence intervals for the output. Common techniques include Monte Carlo (MC) sampling, Polynomial Chaos Expansion (PCE), and Gaussian Process (GP) surrogates.
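As a minimal sketch of plain Monte Carlo propagation, the example below pushes two uncertain inputs through a deliberately simple, hypothetical model: uniaxial strain \( \varepsilon = F / (E A) \) in a prismatic bone segment. The load and modulus ranges are borrowed from the bone remodeling parameter table later in this section; the cross-sectional area `AREA_M2` and the function name `axial_microstrain` are illustrative assumptions, not part of any cited study.

```python
import numpy as np

AREA_M2 = 4.0e-4  # assumed cross-sectional area (hypothetical, 400 mm^2)

def axial_microstrain(force_n, modulus_pa, area_m2=AREA_M2):
    """Hypothetical toy model: uniaxial strain F / (E A), returned in microstrain."""
    return 1e6 * force_n / (modulus_pa * area_m2)

rng = np.random.default_rng(1)
n_samples = 100_000
force = rng.uniform(2200.0, 2800.0, n_samples)  # peak load, N
modulus = rng.uniform(18e9, 22e9, n_samples)    # Young's modulus, Pa

# One model evaluation per input sample; the outputs form the MC distribution.
strain = axial_microstrain(force, modulus)
mean, sd = strain.mean(), strain.std(ddof=1)
lo, hi = np.percentile(strain, [2.5, 97.5])     # empirical 95% interval
print(f"mean={mean:.1f} ue, sd={sd:.1f} ue, 95% interval=[{lo:.1f}, {hi:.1f}] ue")
```

For independent uniform inputs the exact mean is \( \mathbb{E}[F]\,\ln(22/18) / (4 \times 10^{9}\,A) \times 10^{6} \), about 313.5 microstrain, which gives a quick sanity check on the sampler before it is pointed at an expensive finite element model.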

Key Methodologies and Experimental Protocols

Protocol for Variance-Based Global Sensitivity Analysis (Sobol' Method)

Objective: Compute first-order (\( S_i \)) and total-order (\( S_{T_i} \)) Sobol' indices for all model input parameters. Procedure:

- Define Input Distributions: For each of the \(d\) uncertain parameters, define a plausible probability distribution (e.g., uniform, normal, log-normal) based on experimental data or literature.

- Generate Sampling Matrices: Create two independent \( N \times d \) sampling matrices, \(A\) and \(B\), using a quasi-random sequence (e.g., by splitting one \( N \times 2d \) Sobol' sequence design) for improved convergence. \(N\) is the sample size (typically \(10^3\) to \(10^4\)).

- Construct Hybrid Matrices: For each parameter \(i\), create matrix \( C_i \), where all columns are from \(A\) except column \(i\), which is from \(B\).

- Model Evaluation: Run the computational model \( \mathcal{M} \) for all rows in matrices \(A\), \(B\), and each \( C_i \). This requires \( N \times (d + 2) \) model evaluations.

- Index Calculation: Compute the model outputs \( f(A) \), \( f(B) \), and \( f(C_i) \), then use the estimators of Saltelli et al. (2010) to calculate \( S_i \) and \( S_{T_i} \).
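The five steps can be sketched in NumPy on the Ishigami function, a standard GSA benchmark whose analytical indices are known (\( S \approx (0.314, 0.442, 0) \), \( S_T \approx (0.558, 0.442, 0.244) \)), so the estimates can be checked. This is a minimal sketch under two stated assumptions: it uses pseudo-random uniform sampling rather than a Sobol' sequence (a quasi-random generator such as `scipy.stats.qmc.Sobol` could be swapped in), and it pairs the Saltelli et al. (2010) first-order estimator with the Jansen total-order estimator. The helper name `sobol_indices` is hypothetical.

```python
import numpy as np

def ishigami(x, a=7.0, b=0.1):
    """Ishigami test function; x has shape (n, 3) with entries in [-pi, pi]."""
    return np.sin(x[:, 0]) + a * np.sin(x[:, 1]) ** 2 + b * x[:, 2] ** 4 * np.sin(x[:, 0])

def sobol_indices(model, d, n, rng, low=-np.pi, high=np.pi):
    """Estimate first-order (S) and total-order (ST) Sobol' indices."""
    # Step 2: two independent N x d sample matrices (pseudo-random here).
    A = rng.uniform(low, high, size=(n, d))
    B = rng.uniform(low, high, size=(n, d))
    fA, fB = model(A), model(B)
    var_y = np.var(np.concatenate([fA, fB]))
    S, ST = np.empty(d), np.empty(d)
    for i in range(d):
        # Step 3: C_i takes every column from A except column i, taken from B.
        C = A.copy()
        C[:, i] = B[:, i]
        fC = model(C)  # Step 4: N extra evaluations per parameter
        # Step 5: Saltelli (2010) first-order and Jansen total-order estimators.
        S[i] = np.mean(fB * (fC - fA)) / var_y
        ST[i] = 0.5 * np.mean((fA - fC) ** 2) / var_y
    return S, ST

S, ST = sobol_indices(ishigami, d=3, n=2**16, rng=np.random.default_rng(42))
print("S  =", np.round(S, 3))
print("ST =", np.round(ST, 3))
```

The loop makes the \( N \times (d + 2) \) cost explicit: \(2N\) runs for \(A\) and \(B\), plus \(N\) per hybrid matrix.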

Protocol for Surrogate-Assisted SA/UQ (Polynomial Chaos Expansion)

Objective: Build an accurate surrogate model to enable efficient MC sampling for UQ and GSA. Procedure:

- Experimental Design: Generate \(N\) samples of the input vector \( \mathbf{x} \) using an appropriate design (e.g., Latin Hypercube Sampling).

- Run High-Fidelity Model: Evaluate \( \mathcal{M}(\mathbf{x}^{(k)}) \) for each sample \( k = 1, \ldots, N \).

- Construct PCE Surrogate: Approximate the model as \( \mathcal{M}(\mathbf{x}) \approx \sum_{\alpha \in \mathcal{A}} c_\alpha \Psi_\alpha(\mathbf{x}) \), where \( \Psi_\alpha \) are orthogonal polynomial basis functions chosen to match the input distributions (e.g., Legendre polynomials for uniform inputs, Hermite polynomials for normal inputs), \( \mathcal{A} \) is the set of retained multi-indices, and \( c_\alpha \) are the coefficients. Use least-squares regression or quadrature to determine the coefficients.

- Validate Surrogate: Use a hold-out validation set or cross-validation to assess surrogate accuracy (e.g., via the \( Q^2 \) predictive coefficient).

- Perform SA/UQ: Execute a large MC sample (e.g., \(10^6\) evaluations) on the cheap-to-evaluate PCE surrogate to generate the output distribution. When the basis is orthonormal, Sobol' indices follow analytically from the PCE coefficients, with no further sampling.
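A compact sketch of this workflow, assuming two inputs uniform on \([-1, 1]\) and a cheap, hypothetical `model` standing in for the high-fidelity simulation, is shown below. It uses a basic hand-written Latin Hypercube design, an orthonormal Legendre basis of total degree 2, least-squares coefficients, a hold-out \( Q^2 \), and Sobol' indices read directly from the squared coefficients. Function names are illustrative, not from any particular UQ toolkit.

```python
import itertools
import numpy as np
from numpy.polynomial import legendre

def psi(deg, x):
    """Orthonormal Legendre polynomial of degree `deg` for x ~ U(-1, 1)."""
    coef = np.zeros(deg + 1)
    coef[deg] = 1.0
    return np.sqrt(2 * deg + 1) * legendre.legval(x, coef)

def basis_matrix(X, alphas):
    """One column per multi-index alpha: product of 1D basis polynomials."""
    return np.column_stack([
        np.prod([psi(a, X[:, j]) for j, a in enumerate(alpha)], axis=0)
        for alpha in alphas
    ])

def latin_hypercube(n, d, rng):
    """Basic LHS on [-1, 1]^d: one point per stratum in every dimension."""
    u = np.column_stack([(rng.permutation(n) + rng.uniform(size=n)) / n for _ in range(d)])
    return 2.0 * u - 1.0

def model(X):
    """Hypothetical stand-in for an expensive high-fidelity simulation."""
    return X[:, 0] + 2.0 * X[:, 1] + X[:, 0] * X[:, 1]

rng = np.random.default_rng(0)
d, p = 2, 2  # number of inputs, total polynomial degree
alphas = [a for a in itertools.product(range(p + 1), repeat=d) if sum(a) <= p]

# Experimental design, high-fidelity runs, then least-squares coefficients.
X_train = latin_hypercube(50, d, rng)
c, *_ = np.linalg.lstsq(basis_matrix(X_train, alphas), model(X_train), rcond=None)

# Hold-out validation: Q^2 = 1 - SS_res / SS_tot on points not used for fitting.
X_test = latin_hypercube(200, d, rng)
y_test = model(X_test)
y_hat = basis_matrix(X_test, alphas) @ c
q2 = 1.0 - np.sum((y_test - y_hat) ** 2) / np.sum((y_test - y_test.mean()) ** 2)

# Sobol' indices from the coefficients (valid because the basis is orthonormal).
var_total = sum(ck**2 for a, ck in zip(alphas, c) if any(a))
S = [sum(ck**2 for a, ck in zip(alphas, c) if a[i] > 0 and sum(a) == a[i]) / var_total
     for i in range(d)]
ST = [sum(ck**2 for a, ck in zip(alphas, c) if a[i] > 0) / var_total for i in range(d)]
print(f"Q2={q2:.4f}, S={np.round(S, 4)}, ST={np.round(ST, 4)}")
```

Because this toy model lies exactly in the chosen basis, the surrogate is exact and the indices match the analytical values \( S = (0.1875, 0.75) \) and \( S_T = (0.25, 0.8125) \); a real biomechanics model would need a degree study and a \( Q^2 \) check before the indices are trusted.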

Data Presentation: SA/UQ Results from a Representative Bone Remodeling Study

The following tables summarize quantitative SA/UQ results from a hypothetical but representative study on a finite element model of tibial bone adaptation under pharmacological intervention.

Table 1: Input Parameter Distributions for Bone Remodeling Model

| Parameter | Description | Nominal Value | Uncertainty Distribution | Source |
|---|---|---|---|---|
| E_max | Maximum Young's modulus of bone | 20 GPa | Uniform (18, 22) GPa | Nanoindentation ex vivo |
| k | Remodeling rate constant | 0.05 g/(mm²·day) | Log-normal (mean 0.05, SD 0.015) | Histomorphometry |
| S_ref | Reference mechanical stimulus | 0.025 MPa | Normal (0.025, 0.005) MPa | Telemetry data calibration |
| Drug_Efficacy | Reduction in osteoclast activity | 0.65 | Beta (α=8, β=4) on [0, 1] | Phase II clinical trial data |
| Load_Magnitude | Peak gait load | 2500 N | Uniform (2200, 2800) N | Gait analysis variability |
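Step 1 of the Sobol' protocol turns a table like this into a sampler. The sketch below does that with NumPy's generators. One assumption needs flagging: the log-normal entry for k is read as the mean and standard deviation of k itself (not of log k), so it is converted to NumPy's log-space parameters. The helper names `lognormal_params` and `sample_inputs` are hypothetical.

```python
import numpy as np

def lognormal_params(mean, sd):
    """Convert the mean/SD of a log-normal variable into (mu, sigma) of log(x)."""
    sigma2 = np.log(1.0 + (sd / mean) ** 2)
    return np.log(mean) - 0.5 * sigma2, np.sqrt(sigma2)

def sample_inputs(n, rng):
    """Draw n samples of the five inputs in the bone remodeling table."""
    # Assumption: 0.05 and 0.015 are the mean and SD of k on its natural scale.
    mu_k, sigma_k = lognormal_params(0.05, 0.015)
    return {
        "E_max": rng.uniform(18.0, 22.0, n),               # GPa
        "k": rng.lognormal(mu_k, sigma_k, n),              # g/(mm^2*day)
        "S_ref": rng.normal(0.025, 0.005, n),              # MPa
        "Drug_Efficacy": rng.beta(8.0, 4.0, n),            # fraction in [0, 1]
        "Load_Magnitude": rng.uniform(2200.0, 2800.0, n),  # N
    }

samples = sample_inputs(100_000, np.random.default_rng(7))
for name, values in samples.items():
    print(f"{name:15s} mean={values.mean():.4g} sd={values.std(ddof=1):.3g}")
```

Printing the sample moments is a cheap check that each distribution was encoded as intended. For strictly positive quantities such as S_ref, a truncated normal may be preferable, although negative draws are very unlikely with these values.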

Table 2: Global Sensitivity Indices for Predicted Bone Density Change at 12 Months

| Output Variable | E_max (S_i / S_Ti) | k (S_i / S_Ti) | S_ref (S_i / S_Ti) | Drug_Efficacy (S_i / S_Ti) | Load_Magnitude (S_i / S_Ti) |
|---|---|---|---|---|---|
| Δ Density (Trabecular) | 0.02 / 0.05 | 0.45 / 0.72 | 0.10 / 0.18 | 0.25 / 0.41 | 0.01 / 0.08 |
| Δ Density (Cortical) | 0.08 / 0.15 | 0.15 / 0.30 | 0.05 / 0.12 | 0.10 / 0.22 | 0.50 / 0.65 |
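Reading Table 2 usually comes down to two quantities, assuming independent inputs: \( 1 - \sum_i S_i \), the share of output variance driven by interactions, and the ranking of inputs by \( S_{T_i} \), which decides where calibration effort pays off. The short script below simply computes both from the tabulated values; it adds no new results.

```python
# Sobol' indices from Table 2, as (S_i, S_Ti) pairs per input.
indices = {
    "Trabecular": {"E_max": (0.02, 0.05), "k": (0.45, 0.72), "S_ref": (0.10, 0.18),
                   "Drug_Efficacy": (0.25, 0.41), "Load_Magnitude": (0.01, 0.08)},
    "Cortical": {"E_max": (0.08, 0.15), "k": (0.15, 0.30), "S_ref": (0.05, 0.12),
                 "Drug_Efficacy": (0.10, 0.22), "Load_Magnitude": (0.50, 0.65)},
}

for output, table in indices.items():
    additive = sum(s for s, _ in table.values())  # variance share of main effects
    ranking = sorted(table, key=lambda name: table[name][1], reverse=True)  # by S_Ti
    interactions = {name: round(st - s, 2) for name, (s, st) in table.items()}
    print(f"{output}: 1 - sum(S_i) = {1 - additive:.2f}; ranking by S_Ti: {ranking}")
    print(f"  S_Ti - S_i per input: {interactions}")
```

For trabecular density, k carries both the largest main effect and the largest interaction share, so fixing it at its nominal value would be the least defensible simplification; for cortical density, Load_Magnitude dominates.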

Table 3: Uncertainty Quantification of Key Outputs (10^6 MC samples via PCE Surrogate)

| Output Variable | Mean Prediction | Standard Deviation | 95% Credible Interval |
|---|---|---|---|
| Trabecular Density Increase (%) | 4.8% | ±1.2% | [2.5%, 7.1%] |
| Cortical Density Increase (%) | 2.1% | ±0.8% | [0.6%, 3.6%] |
| Failure Load Change (N) | +312 N | ±85 N | [+145 N, +479 N] |
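A quick consistency check on a UQ summary like Table 3: if the output distribution is close to Gaussian, the 95% interval should sit near mean ± 1.96 SD. The sketch below computes that approximation from the tabulated values. It is a plausibility check on the reported numbers, not a reproduction of the study; for skewed outputs the interval should instead come from the empirical 2.5th and 97.5th percentiles of the MC samples.

```python
# Reported summaries from Table 3: (mean, SD, reported 95% interval).
table3 = {
    "Trabecular density increase (%)": (4.8, 1.2, (2.5, 7.1)),
    "Cortical density increase (%)": (2.1, 0.8, (0.6, 3.6)),
    "Failure load change (N)": (312.0, 85.0, (145.0, 479.0)),
}
Z_975 = 1.959964  # 97.5th percentile of the standard normal distribution

# Gaussian approximation of each 95% interval: mean +/- z * SD.
gaussian_intervals = {
    name: (mean - Z_975 * sd, mean + Z_975 * sd)
    for name, (mean, sd, _) in table3.items()
}
for name, (lo, hi) in gaussian_intervals.items():
    reported = table3[name][2]
    print(f"{name}: mean +/- 1.96 SD = [{lo:.2f}, {hi:.2f}] vs reported {list(reported)}")
```

All three rows agree with the Gaussian approximation to within rounding-level differences, which suggests the surrogate outputs are close to symmetric.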

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials & Software for SA/UQ in Computational Biomechanics

| Item | Function | Example Product/Software |
|---|---|---|
| Quasi-Random Sequence Generator | Produces low-discrepancy samples for efficient space-filling and GSA. | SobolSeq, SciPy QMC module |
| Surrogate Modeling Toolkit | Constructs and validates PCE, GP, or other surrogate models. | UQLab, SMT (Surrogate Modeling Toolbox), gPCE MATLAB toolbox |
| High-Performance Computing (HPC) Scheduler | Manages thousands of computationally expensive model evaluations. | SLURM, PBS Pro, Azure Batch |
| Uncertainty Parameter Database | Curates and stores distributions for biomechanical parameters. | VIVO collaborative platform, institutional SQL database |
| Standardized Model Reporting Template | Documents SA/UQ methods and results as per credibility standards. | ASME V&V 40 reporting template extension |

Visualization of Workflows and Relationships

Title: Comprehensive SA/UQ Workflow for Model Credibility

Title: Influence of Input Parameters on Bone Adaptation Predictions

Within the evolving thesis on Standards for Credibility of Computational Biomechanics Models, the concept of a Credibility Dossier emerges as a critical, structured framework for evidence-based model assessment. This dossier serves as a comprehensive, transparent record documenting the foundational assumptions, developmental processes, and, most importantly, the multi-faceted evaluation of a model's predictive capability and limitations. It moves beyond traditional validation reports by embedding the model within a rigorous credibility assurance process, aligning with broader initiatives like the ASME V&V 40 standard and the FAIR (Findable, Accessible, Interoperable, Reusable) principles for scientific data.

Core Components of a Credibility Dossier

A Credibility Dossier is organized around four pillars, providing traceability from context to evidence.

Model Context and Intended Use

This section defines the boundaries of credibility. It includes a detailed specification of the Model of Interest (MOI), the context of use (COU), which states the clinical, industrial, or research question the model is meant to answer, and the quantities of interest (QOIs) that the model predicts.

Development and Implementation Documentation

This pillar ensures technical reproducibility. It encompasses the underlying mathematical theory, computational implementation details (software, version, dependencies), complete model equations, parameter values with sources, and a description of numerical methods and solver settings.

Verification, Validation, and Uncertainty Quantification (VVUQ)

This is the evidentiary core of the dossier. It systematically presents the activities undertaken to build confidence in the model's predictions for the specified COU.

- Verification: Evidence that the computational model is solved correctly (solving the equations right).

- Validation: Evidence that the computational model accurately represents the real-world physics/biology (solving the right equations).

- Uncertainty Quantification (UQ): Characterization and propagation of uncertainties from inputs, parameters, and model form to the QOIs.

Lifecycle Management and Dissemination

This section addresses the model's sustainability and accessibility. It includes version history, a plan for updates and re-evaluation, licensing information, and access details for model code, data, and the dossier itself.
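One way to keep the four pillars traceable is to hold the dossier as a structured record rather than free text, so that gaps in the evidence can be detected automatically. The dataclass below is a hypothetical sketch: its field names and the `missing_evidence` helper are illustrative choices, not a schema defined by ASME V&V 40 or the FAIR principles, and the example values are placeholders.

```python
from dataclasses import dataclass, field

@dataclass
class CredibilityDossier:
    """Hypothetical record mirroring the four dossier pillars (illustrative fields)."""
    # Pillar 1: model context and intended use
    context_of_use: str
    quantities_of_interest: list
    # Pillar 2: development and implementation documentation
    software: str = ""
    software_version: str = ""
    parameter_sources: dict = field(default_factory=dict)  # parameter -> source
    # Pillar 3: VVUQ evidence, each mapping an activity to a summary metric
    verification: dict = field(default_factory=dict)
    validation: dict = field(default_factory=dict)
    uncertainty_quantification: dict = field(default_factory=dict)
    # Pillar 4: lifecycle management and dissemination
    version_history: list = field(default_factory=list)
    license: str = ""

    def missing_evidence(self):
        """Names of required sections that are still empty."""
        required = ["context_of_use", "quantities_of_interest", "software",
                    "software_version", "verification", "validation",
                    "uncertainty_quantification", "version_history", "license"]
        return [name for name in required if not getattr(self, name)]

# Placeholder values only; no real model or study is described here.
dossier = CredibilityDossier(
    context_of_use="Illustrative COU: 12-month tibial density change",
    quantities_of_interest=["trabecular density change (%)"],
    software="example-fe-solver",
    software_version="0.1",
    verification={"GCI_percent": 1.2},
)
print(dossier.missing_evidence())
```

A check like `missing_evidence()` could run as part of a release process so that a model version is not disseminated while its validation or UQ sections are empty.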

Quantitative Data Synthesis from Current Literature

Recent research emphasizes quantitative metrics for credibility assessment. The following table summarizes key quantitative thresholds and metrics proposed for computational biomechanics models, particularly in cardiovascular and orthopedic applications.

Table 1: Summary of Proposed Quantitative Credibility Metrics in Biomechanics

| Assessment Area | Proposed Metric | Typical Target/Threshold | Source/Context |
|---|---|---|---|
| Spatial Convergence (Verification) | Grid Convergence Index (GCI) | < 5% for key QOIs | ASME V&V 20, CFD/FEA studies |
| Temporal Convergence | Relative change in QOI with time step refinement | < 2% | Pulsatile flow & dynamic simulation |
| Parameter Sensitivity | Normalized Sensitivity Index (Si) | Rank parameters; | UQ for drug delivery device models |
| Validation - Solid Mechanics | Correlation (R) between predicted and experimental strain | R > 0.85 (Excellent), R > 0.70 (Good) | Cardiac tissue, bone implant studies |
| Validation - Fluid Mechanics | Normalized root-mean-square error (NRMSE) for velocity/pressure | NRMSE < 0.20 (20%) | Arterial hemodynamics, valve models |
| Validation - General | Credibility Assessment Score (CAS) | Composite score (0-1) based on multiple metrics | Multi-scale model frameworks |
| Uncertainty in QOI | 95% Confidence/Prediction Interval (CI/PI) Width | Reported relative to QOI mean (e.g., ±15%) | Probabilistic UQ analysis |
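Three of the metrics in Table 1 are simple to compute once the simulations and measurements exist. The sketch below implements Roache's three-grid GCI (safety factor 1.25, constant refinement ratio), an NRMSE, and Pearson's R. Two assumptions are worth stating: NRMSE is normalized here by the range of the observations, which is only one of several conventions in use, and the numbers in the usage lines are synthetic (a QOI with exact second-order error \( f = 1 + 0.1h^2 \) on \( h = 0.1, 0.2, 0.4 \)).

```python
import numpy as np

def gci_fine(f1, f2, f3, r, fs=1.25):
    """Roache's Grid Convergence Index on the finest grid.

    f1, f2, f3: QOI on the fine, medium, and coarse grids (constant ratio r).
    Returns (GCI as a fraction, observed order of accuracy p).
    """
    p = np.log(abs((f3 - f2) / (f2 - f1))) / np.log(r)  # observed order
    e21 = abs((f1 - f2) / f1)                           # relative fine-medium change
    return fs * e21 / (r**p - 1.0), p

def nrmse(pred, obs):
    """RMSE normalized by the observed range (one of several conventions)."""
    pred, obs = np.asarray(pred, dtype=float), np.asarray(obs, dtype=float)
    return np.sqrt(np.mean((pred - obs) ** 2)) / (obs.max() - obs.min())

def pearson_r(pred, obs):
    """Pearson correlation between predicted and measured values."""
    return np.corrcoef(pred, obs)[0, 1]

# Synthetic grid study: fine, medium, coarse QOI values with r = 2.
gci, p = gci_fine(1.001, 1.004, 1.016, r=2.0)
print(f"observed order p = {p:.2f}, GCI = {100 * gci:.3f}%")
print(nrmse([1.0, 2.0, 3.0], [1.0, 2.0, 4.0]), pearson_r([1, 2, 3], [2, 4, 6]))
```

Reporting the observed order \(p\) next to the GCI is useful: a value far from the scheme's formal order usually means the grids are not yet in the asymptotic range, in which case the GCI itself is unreliable.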

Experimental Protocols for Foundational Validation

A Credibility Dossier must reference standardized experimental methodologies used to generate validation data. Below are detailed protocols for two key areas.

Protocol 4.1: Biaxial Mechanical Testing of Soft Biological Tissue for Constitutive Model Validation

- Sample Preparation: Excise tissue specimens (e.g., arterial wall, myocardium) to a standardized planar cruciform or square geometry. Mark the sample surface with a speckle pattern for digital image correlation (DIC).

- Experimental Setup: Mount the sample in a biaxial testing system with four independent servo-controlled actuators. Submerge the sample in a warmed (37°C), physiologically buffered saline bath.

- Loading Protocol: Apply displacement-controlled loading along two orthogonal axes (typically aligned with tissue microstructure). Protocols include equibiaxial stretch, strip biaxial (one axis stretched, other held fixed), and stress-controlled paths to replicate in vivo states.

- Data Acquisition: Synchronize actuator load cells (force) and DIC system (full-field 2D or 3D strain) during testing. Record force and displacement data at a minimum of 100 Hz.

- Data Processing: Convert forces to engineering stress. Use DIC to compute Lagrangian strain tensor components (Exx, Eyy, Exy). The resulting stress-strain curves form the primary validation dataset.
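The data-processing step can be sketched in a few lines: engineering (first Piola-Kirchhoff) stress is force divided by the undeformed cross-sectional area, and the Green-Lagrange strain follows from the in-plane displacement gradient that DIC provides, via \( \mathbf{E} = \tfrac{1}{2}(\mathbf{F}^{T}\mathbf{F} - \mathbf{I}) \) with \( \mathbf{F} = \mathbf{I} + \nabla\mathbf{u} \). The specimen dimensions and displacement gradients below are illustrative, and treating a cruciform arm's width times thickness as the load-bearing area is itself a simplifying assumption.

```python
import numpy as np

def engineering_stress(force_n, width_m, thickness_m):
    """Force over the undeformed cross-section (first Piola-Kirchhoff), in Pa."""
    return force_n / (width_m * thickness_m)

def green_lagrange(grad_u):
    """Green-Lagrange strain E = 0.5 (F^T F - I), with F = I + grad(u).

    grad_u: in-plane displacement gradient(s), shape (..., 2, 2), e.g. from DIC.
    Returns E with components [[Exx, Exy], [Exy, Eyy]].
    """
    F = np.eye(2) + np.asarray(grad_u, dtype=float)
    return 0.5 * (np.swapaxes(F, -1, -2) @ F - np.eye(2))

# Illustrative values: 2 N on a 10 mm x 1 mm arm; 10% equibiaxial stretch.
sigma_xx = engineering_stress(2.0, 10e-3, 1e-3)          # 2.0e5 Pa = 200 kPa
E_equibiaxial = green_lagrange([[0.1, 0.0], [0.0, 0.1]])
E_shear = green_lagrange([[0.0, 0.1], [0.0, 0.0]])       # simple shear, gamma = 0.1
print(sigma_xx, E_equibiaxial, E_shear, sep="\n")
```

Because `green_lagrange` accepts a stack of gradients with shape `(..., 2, 2)`, the same call works on a full DIC field, giving the pointwise Exx, Eyy, and Exy maps that the stress-strain curves are built from.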

Protocol 4.2: Particle Image Velocimetry (PIV) for Hemodynamic Model Validation

- Flow Phantom Fabrication: Create a transparent scale model of the vascular geometry of interest (e.g., aneurysm, stenosed artery) using 3D printing and silicone molding. Match the refractive index of the working fluid to that of the phantom material to avoid optical distortion at the walls.

- Seeding and Fluid Properties: Seed the working fluid (glycerol-water mixture) with fluorescent or silver-coated tracer particles (~10 µm diameter). Adjust fluid viscosity to match the desired blood Reynolds number.

- Flow Circuit: Connect the phantom to a pulsatile flow pump capable of replicating physiological waveforms. Incorporate pressure sensors upstream and downstream.

- Imaging: Illuminate a thin laser sheet through the region of interest. Capture high-speed image pairs (Δt separation ~100 µs) using a synchronized camera positioned orthogonally to the laser sheet.

- Analysis: Use cross-correlation algorithms on image pairs to compute 2D velocity vector fields for each phase of the cardiac cycle. Derive quantitative metrics like wall shear stress (from velocity gradients) and flow separation zones.
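The core of the analysis step is locating the cross-correlation peak between matching interrogation windows in the two frames of an image pair. The sketch below does this with an FFT-based (circular) cross-correlation on a synthetic random "particle" window with a known shift, then converts pixels to velocity. It covers only the integer-pixel step: production PIV codes add window overlap, sub-pixel peak fitting, and outlier validation. The pixel size `PIXEL_SIZE_M` is a hypothetical calibration, and `DT_S` uses the ~100 µs pulse separation from the imaging step.

```python
import numpy as np

def window_displacement(win_a, win_b):
    """Integer-pixel displacement (dx, dy) of win_b relative to win_a.

    Uses FFT-based (circular) cross-correlation of mean-subtracted windows;
    the correlation peak marks the most likely shift of the particle pattern.
    """
    a = win_a - win_a.mean()
    b = win_b - win_b.mean()
    corr = np.fft.ifft2(np.conj(np.fft.fft2(a)) * np.fft.fft2(b)).real
    corr = np.fft.fftshift(corr)  # zero shift now sits at the window center
    peak = np.unravel_index(np.argmax(corr), corr.shape)
    dy, dx = (int(peak[k]) - corr.shape[k] // 2 for k in range(2))
    return dx, dy

# Synthetic check: a random window shifted 3 px down (rows) and 2 px left (columns).
rng = np.random.default_rng(3)
frame_a = rng.random((32, 32))
frame_b = np.roll(frame_a, shift=(3, -2), axis=(0, 1))
dx, dy = window_displacement(frame_a, frame_b)

PIXEL_SIZE_M = 20e-6  # hypothetical calibration, meters per pixel
DT_S = 100e-6         # pulse separation from the imaging step, seconds
u, v = dx * PIXEL_SIZE_M / DT_S, dy * PIXEL_SIZE_M / DT_S  # velocity, m/s
print(dx, dy, u, v)
```

Repeating this over a grid of windows gives the 2D vector field for one phase of the cycle; wall shear stress then follows from near-wall velocity gradients multiplied by the fluid's dynamic viscosity.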

Visualizing Credibility Workflows and Relationships

Diagram 1: Credibility Assurance Workflow for Biomechanics Models

Diagram 2: VVUQ Conceptual Framework

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Biomechanical Validation Experiments

| Item / Solution | Primary Function in Credibility Assessment | Example Use Case |
|---|---|---|
| Polyacrylamide (PAA) Gel Phantoms | Tunable, optically clear material for fabricating anatomically accurate tissue mimics with controlled mechanical properties. | Validation of soft tissue stress/strain predictions (e.g., tumor indentation). |