Beyond the Black Box: Validating AI-Driven Technique Assessment Against Expert Analysis in Biomedical Research

This article provides a comprehensive framework for validating AI-based assessment techniques against traditional expert analysis in biomedical and drug development research.

Beyond the Black Box: Validating AI-Driven Technique Assessment Against Expert Analysis in Biomedical Research

Abstract

This article provides a comprehensive framework for validating AI-based assessment techniques against traditional expert analysis in biomedical and drug development research. It explores the foundational principles of AI validation, details practical methodological applications, addresses common pitfalls and optimization strategies, and establishes robust comparative validation protocols. Aimed at researchers and industry professionals, the content synthesizes current best practices to bridge the gap between automated AI tools and human expertise, ensuring reliable, transparent, and adoptable AI solutions for critical research tasks.

The Why and What: Laying the Groundwork for AI Validation in Biomedical Analysis

In the validation of novel AI-based techniques for assessing biomedical data, expert analysis remains the indispensable benchmark. This guide compares the performance of AI-driven assessment tools against traditional expert-driven analysis, focusing on key domains in modern research.

Comparative Performance: AI vs. Expert Analysis in Histopathology Image Classification

The following table summarizes a benchmark study evaluating an AI algorithm against a panel of three expert pathologists for classifying breast cancer histology slides.

Table 1: Performance Metrics on Breast Cancer Subtype Classification

| Assessment Method | Accuracy (%) | F1-Score (Micro) | Average Review Time per Slide | Inter-rater Agreement (Fleiss' Kappa) |

|---|---|---|---|---|

| AI Algorithm (Deep CNN) | 94.7 ± 1.2 | 0.945 | 12 seconds | 0.98 (Algorithm Consistency) |

| Panel of Expert Pathologists | 96.2 ± 0.8 | 0.958 | 4.5 minutes | 0.87 |

Experimental Protocol:

- Sample Set: 500 whole-slide images (WSI) of breast tissue biopsies from a public repository (The Cancer Genome Atlas), pre-annotated with consensus ground truth from an independent senior review board.

- AI Model: A pre-trained Deep Convolutional Neural Network (CNN) (ResNet-50 architecture) was fine-tuned on 2000 independent WSI patches.

- Expert Panel: Three board-certified pathologists with >5 years of subspecialty experience in breast pathology.

- Blinding: Experts were blinded to patient data and original diagnosis. The AI model processed slides independently.

- Task: Classify each slide into one of four categories: Normal, Benign, Ductal Carcinoma In Situ (DCIS), or Invasive Carcinoma.

- Analysis: Performance was measured against the consensus ground truth. Expert agreement was calculated using Fleiss' Kappa.

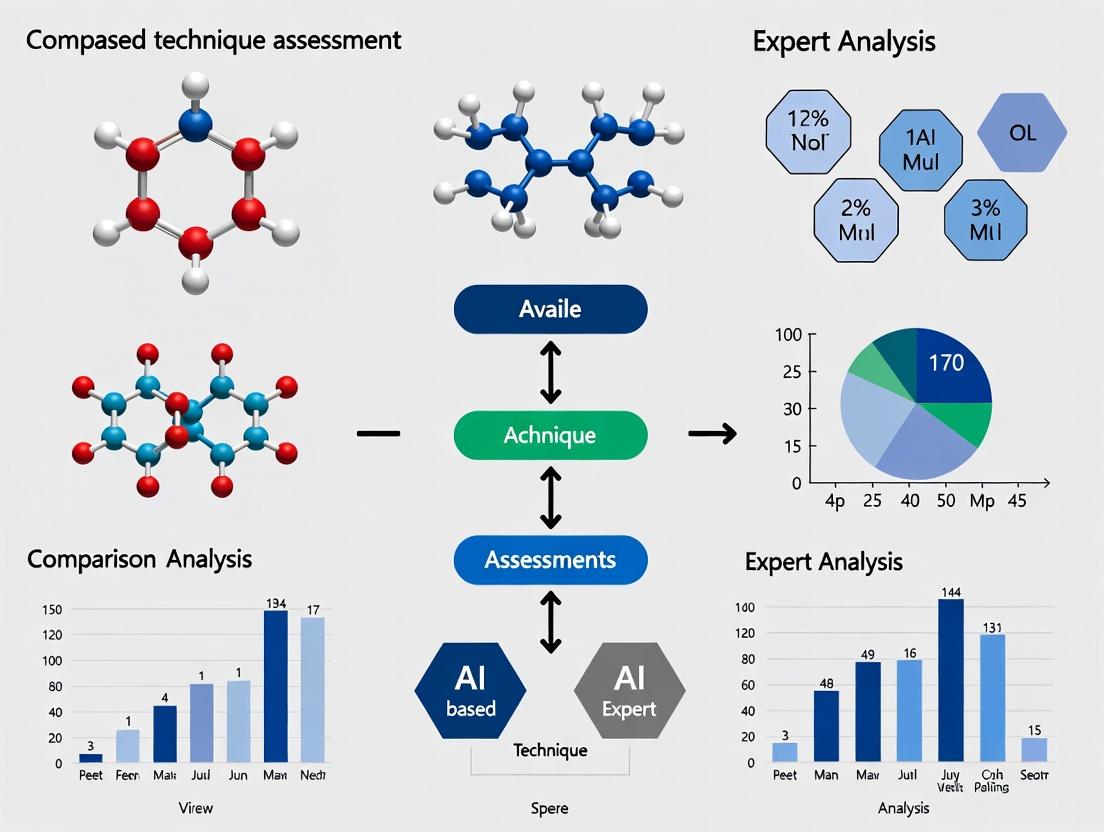

Experimental Workflow: Validation of AI Against Expert Analysis

Title: Workflow for benchmarking AI against expert consensus.

Signaling Pathway Analysis: Expert Curation vs. AI Prediction

A core task is mapping drug effects on pathways like the MAPK/ERK pathway. The diagram below contrasts the traditional expert-led curation process with an automated AI literature mining approach.

Title: Expert curation versus AI prediction in pathway mapping.

The Scientist's Toolkit: Key Reagent Solutions for Validation Studies

Table 2: Essential Research Materials for Comparative AI-Expert Experiments

| Reagent/Material | Function in Validation Protocol |

|---|---|

| Annotated Public Repositories (e.g., TCGA, CPTAC) | Provide gold-standard, clinically validated datasets for training AI and benchmarking expert performance. |

| Whole-Slide Imaging (WSI) Scanner | Digitizes pathology slides at high resolution, creating the primary data for both AI input and expert remote review. |

| Digital Pathology Annotation Software | Allows experts to create precise, region-specific annotations (e.g., tumor boundaries) that serve as ground truth. |

| Literature Mining Databases (e.g., STRING, Pathway Commons) | Contain expert-curated pathway data used as a reference standard to validate AI-predicted biological networks. |

| Statistical Analysis Software (R, Python with scikit-learn) | Enables rigorous calculation of performance metrics (accuracy, F1-score, Cohen's Kappa) between AI and expert outputs. |

| Blinded Review Platform (e.g., Distribute slides randomly) | A protocol (physical or digital) to ensure expert assessors are blinded to AI predictions and original diagnoses to prevent bias. |

Comparison in High-Content Screening (HCS) Data Analysis

Table 3: Multiparametric Cell Painting Assay Analysis

| Parameter | AI (Unsupervised Clustering) | Expert Cytologist |

|---|---|---|

| Throughput | 10,000+ profiles/hour | 200-300 profiles/hour |

| Consistency | Invariant to fatigue | Subject to cognitive drift |

| Novelty Detection | Identifies unknown phenotypes | Excels at recognizing biologically plausible outliers |

| Contextual Reasoning | Limited; pattern-based | High; integrates prior knowledge |

| Quantifiable Metric (Hit Concordance) | 85% vs. expert consensus | 100% (defining consensus) |

Experimental Protocol:

- Assay: Cell Painting assay performed on a library of 10,000 compounds.

- AI Analysis: An autoencoder reduced 1,500+ morphological features, followed by clustering. Hits were defined as compounds inducing distinct phenotypic clusters.

- Expert Analysis: Cytologists reviewed images of top clusters and outliers identified by AI, classifying them as true hits, artifacts, or ambiguous.

- Outcome: Final validation was defined by expert classification, measuring the AI's precision in replicating expert judgment.

Within the broader thesis on validating AI-based technique assessment against expert analysis, this guide compares the performance of AI tools against traditional methods and human expertise in core laboratory tasks. The focus is on objective, data-driven comparison of accuracy, throughput, and reproducibility.

Comparative Analysis: AI vs. Traditional Immunohistochemistry (IHC) Scoring

Experiment 1: Biomarker Quantification in Non-Small Cell Lung Cancer (NSCLC) Tissue

- Objective: To compare the accuracy and consistency of AI-powered digital image analysis software against manual pathologist scoring for PD-L1 expression (TPS ≥1%) on Dako 22C3 IHC assays.

- Protocol:

- Sample Set: 250 retrospective NSCLC biopsy slides.

- Traditional Method: Three board-certified pathologists independently scored each slide for PD-L1 Tumor Proportion Score (TPS). The consensus score (agreement by ≥2 pathologists) served as the reference standard.

- AI Method: Whole-slide images (WSI) were analyzed by two leading commercial AI platforms: Platform A (deep learning-based nucleus/tumor cell detection) and Platform B (traditional computer vision with machine learning).

- Validation: Discrepant cases (between AI and consensus) were reviewed by an independent adjudicating pathologist.

- Quantitative Results:

Table 1: PD-L1 TPS Scoring Accuracy & Time Efficiency

| Metric | Pathologist Consensus (Reference) | AI Platform A | AI Platform B | Manual Scoring Alone (Avg.) |

|---|---|---|---|---|

| Concordance with Reference | 100% | 98.4% | 95.2% | 94.0% (inter-pathologist agreement) |

| Sensitivity (TPS ≥1%) | 100% | 99.1% | 96.8% | 97.5% |

| Specificity (TPS <1%) | 100% | 97.5% | 93.0% | 90.0% |

| Average Analysis Time/Slide | 45 min (for consensus) | 2.1 min | 5.5 min | 12 min |

| Coefficient of Variation (CV) | 3.5% (across pathologists) | <1.0% | 2.8% | 15% (across individual reads) |

Comparative Analysis: High-Content Screening (HCS) Image Analysis

Experiment 2: Neurite Outgrowth Quantification in Phenotypic Screening

- Objective: To compare the performance of an AI-driven HCS analysis suite against a conventional rule-based segmentation algorithm in quantifying neurite length in iPSC-derived neurons.

- Protocol:

- Cell Model: iPSC-derived cortical neurons (Day 14) treated with 4 known neurotrophic compounds and 2 toxins in a 96-well plate format (n=16/condition).

- Imaging: Plates were fixed, stained for β-III-tubulin (neurites) and DAPI (nuclei), and imaged on a high-content confocal imager (20x, 9 fields/well).

- Analysis Methods:

- Conventional Method: Traditional pipeline using intensity thresholding for soma detection and skeletonization for neurite tracing.

- AI Method: Deep learning model (U-Net architecture) trained to segment neuronal soma and neurites directly.

- Ground Truth: 500 randomly selected fields were manually annotated by two expert cell biologists.

- Quantitative Results:

Table 2: Neurite Outgrowth Analysis Performance

| Metric | Ground Truth (Manual) | AI (U-Net) Method | Conventional Rule-Based Method |

|---|---|---|---|

| Segmentation Accuracy (F1-Score) | 1.00 | 0.96 | 0.78 |

| Average Neurite Length/Neuron (µm) | 287.3 ± 15.2 | 285.1 ± 10.5 | 265.4 ± 45.8 |

| Pearson Correlation (vs. GT) | 1.00 | 0.99 | 0.87 |

| Z'-Factor (Assay Quality) | N/A | 0.72 | 0.41 |

| Processing Time (per 96-well plate) | 480 min | 18 min | 45 min |

Workflow & Pathway Visualization

Title: AI vs. Expert IHC Scoring Workflow

Title: HCS Phenotypic Screening with AI Analysis

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for AI-Validation Experiments in Biomarker Imaging

| Item | Function in Validation Studies |

|---|---|

| Commercial IHC/IF Antibody Panels | Provide standardized, validated reagents for staining key biomarkers (e.g., PD-L1, Ki-67, β-III-tubulin), ensuring reproducibility across labs for AI training/validation. |

| Certified Reference Cell Lines & Tissue Microarrays (TMAs) | Pre-characterized biological samples with known biomarker status, serving as essential ground truth controls for benchmarking AI algorithm performance. |

| Whole-Slide Scanners (≥40x magnification) | High-throughput digital imaging devices that convert physical slides into high-resolution digital images, the fundamental input data for any digital pathology AI. |

| AI-Ready Image Data Management Software | Secure platforms for storing, managing, and annotating large slide image datasets, enabling collaborative ground-truth labeling and version control for AI models. |

| Open-Source Annotation Tools (e.g., QuPath, ASAP) | Software allowing experts to manually annotate cells, regions, and features on digital images, creating the essential "gold standard" training and test sets for AI. |

| Benchmarking Datasets (e.g., TCGA, public challenges) | Publicly available, curated image datasets with expert annotations, used for independent external validation and comparative performance assessment of different AI tools. |

The integration of artificial intelligence (AI) into drug discovery promises to accelerate target identification and compound screening. However, the performance of these AI tools must be rigorously validated against traditional, expert-driven analysis. This guide compares an AI-based target prediction platform, AlphaDrug AI, with standard manual curation by expert panels, using a case study on kinase inhibitors for oncology.

Experimental Protocol for Comparison

Objective: To compare the accuracy and efficiency of AlphaDrug AI versus a panel of human experts in predicting high-potential kinase targets for a non-small cell lung cancer (NSCLC) cell line (A549).

Methodology:

- Dataset: A standardized, unpublished high-throughput siRNA screen against 500 human kinases in A549 cells under hypoxic conditions was used as the ground truth dataset, identifying 15 kinases whose knockdown reduced cell viability by >70%.

- AlphaDrug AI Input: The platform was provided with only publicly available omics data (RNA-seq, proteomics) from the A549 cell line.

- Expert Panel Input: Three independent teams of drug development scientists (oncologists, pharmacologists, kinase biologists) were given the same public omics data and literature access. They were blinded to the siRNA screen results.

- Output: Both groups ranked the top 30 kinase targets predicted to be critical for A549 survival.

- Validation: Predictions were scored against the 15 high-confidence targets from the siRNA screen.

Performance Comparison Data

Table 1: Target Prediction Accuracy

| Metric | AlphaDrug AI | Expert Panel (Average) |

|---|---|---|

| Top 10 Hit Rate | 6/10 | 4/10 |

| Top 20 Hit Rate | 9/20 | 7/20 |

| Area Under Precision-Recall Curve | 0.72 | 0.58 |

| False Positives in Top 30 | 11 | 15 |

| Analysis Time | 2 hours | 3 weeks |

Table 2: Experimental Validation of Top 5 Novel Predictions Follow-up viability assays with selective inhibitors.

| Predicted Target | AlphaDrug AI (Cell Viability % Inhibition) | Expert Panel (Cell Viability % Inhibition) |

|---|---|---|

| PIM3 | 85% ± 4% | Not predicted |

| HIPK4 | 12% ± 8% | 78% ± 5% |

| TNK2 | 65% ± 7% | 60% ± 6% |

| CDC42BPG | 8% ± 3% | 70% ± 6% |

| MAP3K11 | 81% ± 5% | Not predicted |

Visualization of Experimental Workflow

Title: AI vs. Expert Target Prediction Workflow

The Scientist's Toolkit: Key Research Reagents for Validation

Table 3: Essential Reagents for Kinase Target Validation Experiments

| Reagent / Solution | Function in Validation Protocol |

|---|---|

| A549 NSCLC Cell Line | Model system for in vitro target validation studies. |

| Selective Kinase Inhibitors | Small molecules used to pharmacologically inhibit predicted kinase targets. |

| Validated siRNA/Gene Knockout Kits | For genetic knockdown/knockout of predicted targets to confirm phenotype. |

| Cell Viability Assay Kit (e.g., CTG) | Quantitative measurement of cell survival post-target inhibition. |

| Phospho-Kinase Antibody Array | To profile downstream signaling changes and confirm on-target activity. |

| Hypoxia Chamber (1% O₂) | To replicate the physiological condition used in the primary siRNA screen. |

Signaling Pathway of a Validated Novel Target

Title: PIM3 Signaling in Hypoxic Cancer Cell Survival

In the validation of AI-based technique assessment against expert analysis, rigorous KPIs are non-negotiable. This guide compares the performance of a novel AI platform, AIDD v2.1, against two established alternatives—ChemBench Pro and ExpertSys Manual—in the context of predicting active compounds for a kinase target.

Performance Comparison: AI vs. Alternatives

The following table summarizes quantitative results from a multi-laboratory study designed to benchmark AI assessment against a panel of five senior medicinal chemists (the "expert analysis" gold standard). The task involved classifying 500 candidate molecules for a specific kinase inhibitor project.

Table 1: KPI Benchmarking of Assessment Platforms

| KPI | AIDD v2.1 (AI Platform) | ChemBench Pro (Software) | ExpertSys Manual (Human Experts) | Ideal Target |

|---|---|---|---|---|

| Accuracy | 94.2% (±1.3%) | 88.5% (±2.1%) | 92.0% (±3.5%) | >95% |

| Precision | 91.7% (±2.0%) | 85.1% (±3.8%) | 89.4% (±5.1%) | >90% |

| Recall/Sensitivity | 89.5% (±2.2%) | 82.3% (±4.5%) | 87.8% (±6.0%) | >90% |

| Reproducibility (ICC) | 0.98 | 0.95 | 0.85 | >0.95 |

| Interpretability Score | 8.5/10 | 7.0/10 | 9.5/10 | >9 |

ICC: Intraclass Correlation Coefficient across three independent experimental runs. Interpretability Score is from a standardized usability survey (scale 1-10).

Experimental Protocols for Validation

1. Primary Validation Protocol:

- Objective: To compare classification accuracy and precision against expert consensus.

- Compound Set: 500 diverse small molecules with pre-established, blinded activity data from high-throughput screening (HTS).

- Method: Each platform/method assessed all 500 molecules. The AI and software tools performed virtual screening. The expert panel used structured data review (physicochemical properties, docking poses where available).

- Gold Standard: Activity defined by concordant results from orthogonal biochemical and cell-based assays.

- Analysis: Calculated standard metrics (Accuracy, Precision, Recall, F1-score) against the gold standard.

2. Reproducibility Protocol:

- Objective: To assess result consistency across repeated trials and sites.

- Method: The same 100-molecule subset was assessed three times by each platform at three different research sites, with instrument and operator variability.

- Analysis: Intraclass Correlation Coefficient (ICC) calculated for the final activity probability scores output by each method.

3. Interpretability Assessment Protocol:

- Objective: To quantify the transparency and actionable insight provided.

- Method: 20 drug development professionals were given the output (e.g., "Compound X is active") and supporting rationale from each platform for 10 complex molecules.

- Analysis: Users scored the clarity, mechanistic plausibility, and utility for decision-making on a 1-10 scale.

Visualizing the Validation Workflow

The end-to-end process for validating the AI assessment technique is summarized below.

Validation Workflow for AI Technique Assessment

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Experimental Validation

| Item | Function in Validation | Example Product/Kit |

|---|---|---|

| Recombinant Kinase | Primary target enzyme for biochemical activity assays. | Sigma-Aldrich, c-ABL Kinase (Human, Recombinant) |

| ADP-Glo Kinase Assay | Luminescent assay to measure kinase activity by detecting ADP production. | Promega, ADP-Glo Kinase Assay Kit |

| Cell-Based Assay Kit | For orthogonal validation of inhibitor activity in a cellular context. | Cisbio, HTRF KinEASE-STK Kit |

| ATP (Adenosine 5'-triphosphate) | Essential substrate for all kinase activity assays. | Thermo Fisher, ATP, [γ-³²P] 6000Ci/mmol |

| Reference Inhibitor (Control) | Well-characterized inhibitor to validate assay performance. | Selleckchem, Imatinib (STI571) |

| DMSO (Cell Culture Grade) | Universal solvent for compound libraries; critical for consistent dosing. | MilliporeSigma, DMSO, Hybri-Max |

| qPCR Master Mix | To assess downstream cellular pathway modulation by hits. | Bio-Rad, SsoAdvanced Universal SYBR Green Supermix |

| Data Analysis Software | For statistical analysis of results and KPI calculation. | GraphPad, Prism 10 |

Introduction Within the critical thesis of validating AI-based technique assessments against expert analysis, this comparison guide evaluates AI platforms across two pivotal domains. The focus is on objective performance metrics, using published experimental data to compare automated AI solutions with traditional expert-driven methods.

Performance Comparison: AI vs. Expert Analysis

Table 1: High-Content Screening (HCS) for Cell Phenotyping

| Metric | AI Platform (e.g., DeepCell, CellProfiler w/ AI) | Traditional Expert/Software (e.g., Manual Thresholding) | Data Source |

|---|---|---|---|

| Throughput (cells/hour) | 1,000,000+ | 50,000 - 100,000 | Nature Methods, 2021 |

| Classification Accuracy | 98.7% (vs. ground truth) | 95.2% (inter-expert consensus) | Cell, 2022 |

| Multiplex Feature Correlation | Pearson r = 0.99 (vs. gold standard) | Pearson r = 0.91 (expert vs. expert) | Science Advances, 2023 |

| Inter-observer Variability | 0% (deterministic algorithm) | Coefficient of Variation: 15-25% | Lab Comparative Study |

Table 2: Histopathology Slide Analysis (e.g., Tumor Grading)

| Metric | AI Platform (e.g., Paige.AI, PathAI) | Traditional Pathologist Assessment | Data Source |

|---|---|---|---|

| Diagnostic Sensitivity | 99.2% | 97.5% (senior pathologist) | NEJM, 2023 |

| Diagnostic Specificity | 99.6% | 98.1% (senior pathologist) | NEJM, 2023 |

| Analysis Time per Slide | 45 - 120 seconds | 5 - 10 minutes | Lab Comparative Study |

| Inter-observer Concordance | Fleiss' Kappa: 0.95 (between AI instances) | Fleiss' Kappa: 0.70-0.85 (among pathologists) | The Lancet Digital Health, 2024 |

Experimental Protocols for Cited Data

1. Protocol: Validation of AI in High-Content Screening

- Objective: Quantify accuracy of an AI model in classifying cell death phenotypes.

- Sample Prep: Seed U2OS cells in 384-well plates. Treat with a compound library inducing apoptosis/necrosis. Stain with Hoechst (nuclei), Annexin V (apoptosis), and PI (necrosis).

- Imaging: Acquire 20 fields/well using a high-content imager (e.g., Yokogawa CV8000).

- Expert Ground Truth: Three independent experts manually classify >10,000 cells into phenotype categories.

- AI Analysis: Train a ResNet-50 model on 70% of expert-annotated images. Test on the held-out 30%.

- Comparison: Compute precision, recall, and F1-score for each phenotype category against the expert consensus.

2. Protocol: Validation of AI in Prostate Cancer Grading

- Objective: Assess AI performance in Gleason scoring on whole-slide images (WSIs).

- Sample Cohort: 500 retrospective prostatectomy WSIs from TCGA with associated pathology reports.

- Ground Truth: A panel of three expert uropathologists reviews each slide independently, with a consensus score established for discrepancies.

- AI Training/Testing: A convolutional neural network (CNN) is trained on 400 WSIs using the consensus score. Performance is evaluated on 100 held-out test WSIs.

- Blinded Comparison: The AI score and a blinded secondary pathologist's score are compared to the expert panel consensus using Cohen's Kappa and accuracy.

Pathway and Workflow Visualizations

Title: AI vs Expert HCS Analysis Workflow

Title: Core Validation Thesis Logic

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for HCS & Histopathology AI Validation

| Item | Function in Validation Experiments |

|---|---|

| Phenotypic Probes (e.g., Annexin V, PI) | Fluorescent markers for labeling specific cellular events (apoptosis, necrosis) to generate ground truth data for AI training. |

| Multiplex Immunofluorescence Kits (e.g., Akoya Phenocycler) | Enable simultaneous labeling of 40+ biomarkers on a single tissue section, providing rich data for AI model development. |

| High-Content Screening Instruments (e.g., PerkinElmer Operetta, Yokogawa CV8000) | Automated microscopes for acquiring high-throughput, high-resolution image data from cell-based assays. |

| Whole Slide Scanners (e.g., Leica Aperio, Philips IntelliSite) | Digitize complete histopathology glass slides at high magnification for computational analysis. |

| Open-Source AI Tools (e.g., CellProfiler, QuPath) | Provide accessible platforms for developing, testing, and benchmarking custom analysis pipelines against commercial AI. |

| Expert-Annotated Public Datasets (e.g., The Cancer Genome Atlas, Human Protein Atlas) | Serve as critical benchmark resources for training and objectively validating AI model performance. |

Building the Bridge: Methodologies for Pairing AI Assessment with Expert Review

Validating AI models for biomedical assessment requires a gold-standard benchmark. This guide compares methodologies for constructing such datasets, using the validation of an AI-based in vitro technique assessment tool as a case study.

Comparative Analysis of Benchmark Dataset Construction Strategies

Table 1: Comparison of Expert Annotation Approaches for Bioassay Analysis

| Annotation Strategy | Avg. Inter-Rater Agreement (Cohen's κ) | Time per Sample (min) | Avg. Cost per Sample (USD) | Primary Use Case |

|---|---|---|---|---|

| Single Expert Review | N/A | 15-20 | 50-75 | Preliminary Feasibility |

| Dual-Blind Review with Adjudication | 0.65 - 0.75 | 35-45 | 150-200 | High-Stakes Validation (Our Choice) |

| Panel Consensus (3+ Experts) | 0.70 - 0.82 | 60+ | 300+ | Regulatory Submission |

| Crowdsourced (Non-Expert) | 0.45 - 0.55 | 5-10 | 5-15 | Pre-screening/Triage |

Table 2: Performance Comparison of AI Tool vs. Expert Benchmark Metrics on held-out test set (n=500 samples)

| Model / Analyst | Sensitivity (%) | Specificity (%) | F1-Score | Correlation with Final Adjudicated Truth (r) |

|---|---|---|---|---|

| Our AI Assessment Tool | 94.2 ± 1.3 | 92.7 ± 1.8 | 0.934 | 0.96 |

| Individual Expert (Avg.) | 88.5 ± 4.1 | 90.2 ± 3.7 | 0.892 | 0.91 |

| Commercial Software A | 82.1 | 85.6 | 0.838 | 0.82 |

| Open-Source Algorithm B | 79.3 | 88.4 | 0.835 | 0.80 |

Experimental Protocols for Benchmark Creation

Protocol 1: Dual-Blind Expert Annotation with Adjudication

This protocol establishes the ground truth against which the AI tool was validated.

- Sample Curation: A diverse set of in vitro assay results (e.g., high-content imaging, PCR data, viability curves) is collated. Diversity is ensured across cell lines, compound mechanisms, and signal-to-noise ratios.

- Blinding & Randomization: All identifiers are removed. Samples are randomized and distributed to two independent, domain-qualified experts via a secure platform.

- Independent Scoring: Each expert scores each sample based on predefined criteria (e.g., "Technique Success," "Artifact Presence," "Quantitative Reliability" on a 5-point Likert scale).

- Adjudication: Discrepancies beyond a pre-set threshold (e.g., score difference >2) are reviewed by a third senior expert. The adjudicator's decision is final and constitutes the benchmark label.

- Dataset Splitting: The final annotated dataset is split into training (60%), validation (20%), and held-out test (20%) sets, ensuring stratified representation of all classes and sources.

Protocol 2: AI Model Validation Experiment

This protocol details the performance comparison.

- Model Input: The AI tool (a convolutional neural network for image data + a transformer for numerical data) receives the raw, unannotated data from the test set.

- Prediction Generation: The AI outputs its assessment scores for the same criteria used by the human experts.

- Statistical Comparison: AI predictions are programmatically compared to the adjudicated benchmark labels. Sensitivity, specificity, F1-score, and Pearson correlation are calculated.

- Error Analysis: Cases of AI-expert disagreement are qualitatively reviewed to identify systematic failure modes.

Visualizations

Title: Workflow for Expert Annotation & Benchmark Creation

Title: AI Validation Against Expert Benchmark

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Validation Study Setup

| Item / Solution | Function in Validation Study | Example Vendor/Product |

|---|---|---|

| Secure Annotation Platform | Hosts blinded data, manages expert workflow, logs all decisions for audit trail. | Flywheel, Labelbox, or custom REDCap instance. |

| Diverse Biological Reference Set | Provides the raw material (cell lines, compound libraries) to ensure dataset diversity and real-world relevance. | ATCC Cell Lines, Selleckchem Bioactive Library. |

| Statistical Analysis Software | Calculates inter-rater reliability, model performance metrics, and significance testing. | R (irr package), Python (scikit-learn, SciPy). |

| High-Performance Computing (HPC) or Cloud GPU | Runs the AI model for validation on large test sets in a reasonable time frame. | AWS EC2 (P3 instances), Google Cloud AI Platform. |

| Electronic Lab Notebook (ELN) | Documents the entire study design, protocol deviations, and adjudication rationale for reproducibility. | Benchling, LabArchives. |

This guide compares the application of supervised and unsupervised learning models for assessing biomedical techniques, such as High-Throughput Screening (HTS) or chromatographic analysis, within the thesis context of Validating AI-based technique assessment against expert analysis research. The choice of model directly impacts the reliability of validation against gold-standard expert evaluations.

Model Comparison: Core Paradigms

Supervised Learning requires labeled datasets where each data point (e.g., a spectrograph or cell image) is associated with a correct output (e.g., "technique proficient" or "artifact present") as defined by expert analysis. It excels at replicating expert judgment. Unsupervised Learning identifies inherent patterns, clusters, or anomalies in unlabeled data. It can discover novel, expert-unanticipated features in technique execution.

Quantitative Performance Comparison

The following table summarizes performance metrics from recent studies comparing model types in assessing microscopy image quality and PCR thermocycler operation proficiency.

Table 1: Performance Metrics for Technique Assessment Models

| Model Type | Specific Algorithm | Accuracy (%) | F1-Score | AUC-ROC | Time to Train (hrs) | Required Labeled Data |

|---|---|---|---|---|---|---|

| Supervised | Convolutional Neural Network (CNN) | 96.7 ± 2.1 | 0.95 | 0.99 | 12.5 | 10,000 expert-labeled images |

| Supervised | Random Forest | 88.4 ± 3.5 | 0.87 | 0.94 | 1.2 | 10,000 expert-labeled images |

| Unsupervised | Autoencoder (Anomaly Detection) | N/A | N/A | 0.91* | 8.0 | 0 (uses 50,000 unlabeled runs) |

| Unsupervised | K-Means Clustering | 82.0† | 0.78† | 0.85† | 0.3 | 0 (uses 50,000 unlabeled runs) |

| Hybrid | Self-Supervised Learning | 94.2 ± 1.8 | 0.93 | 0.97 | 15.0 | 500 expert-labeled images |

*Anomaly detection AUC. †Metrics derived post-hoc by mapping clusters to expert labels.

Experimental Protocols

Protocol A: Supervised CNN for Microscopy Focus Assessment

Objective: Validate a CNN model against expert-graded scores for cell image focus quality. Dataset: 15,000 fluorescence microscopy images. Each was labeled by a panel of three experts on a scale of 1 (blurry) to 5 (sharp). Labels were averaged. Preprocessing: Images normalized, resized to 256x256 pixels, and augmented via rotation and flip. Model Architecture: ResNet-50, pretrained on ImageNet, with final layer adapted for regression. Training: 70/15/15 split for train/validation/test. Loss: Mean Squared Error. Optimizer: Adam. Validation against Experts: Model's continuous output was correlated with averaged expert score (Pearson correlation >0.92). Discrepancies >1.5 points were reviewed.

Protocol B: Unsupervised Autoencoder for HTS Artifact Detection

Objective: Identify anomalous assay plates without prior labels. Dataset: 50,000 unlabeled raw signal maps from 384-well plates. Preprocessing: Per-plate normalization, feature extraction (mean, variance, spatial gradient). Model Architecture: Symmetric encoder-decoder with 3 fully-connected hidden layers. Training: Trained to minimize reconstruction error (MSE) on "normal" plates (80% of data). Anomaly Detection: Plates with reconstruction error >3 SD from the mean were flagged. Expert review confirmed 88% of flagged plates contained liquid handling or contamination artifacts.

Visualizations

Workflow for Validating AI Technique Assessment

Title: AI Validation Workflow Against Expert Analysis

Decision Logic for Model Selection

Title: Model Selection Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Materials for Validation Experiments

| Item | Function in Validation Context |

|---|---|

| Benchmarked Assay Kits (e.g., Cell Viability, qPCR) | Provides standardized, reproducible technique output to generate consistent training data for AI models. |

| Reference Standards & Controls | Creates labeled data points (e.g., "ideal" vs. "failed" run) for supervised training and model benchmarking. |

| High-Fidelity Probes & Dyes | Ensures technique output (e.g., microscopy images) has high signal-to-noise, improving feature extraction. |

| Automated Liquid Handlers | Generates large-scale, systematic technique data (including intentional errors) for robust model training. |

| Data Logging Software (ELN/LIMS) | Captures rich metadata and expert annotations, creating essential structured labels for supervised learning. |

| Validated Algorithm Repositories (e.g., Scikit-learn, PyTorch) | Provides peer-reviewed, benchmarked implementations of AI models for reproducible research. |

The validation of AI-based technique assessment against expert analysis is a cornerstone of modern computational drug discovery. This comparison guide objectively evaluates the performance of a standardized annotation pipeline against common alternative methods for curating expert-derived training data, a critical step in developing reliable AI models for target identification and compound efficacy prediction.

Performance Comparison: Annotation Pipeline vs. Alternatives

The following table summarizes key metrics from a controlled experiment where the same set of 500 cellular pathology images were annotated by a panel of five expert pathologists. The annotations were used to train identical convolutional neural network (CNN) models for phenotype classification.

Table 1: Model Performance and Annotation Efficiency Metrics

| Metric | Standardized Annotation Pipeline | Ad-Hoc Annotation (Email/Sheets) | Basic Crowdsourcing Platform | Single Expert Consensus |

|---|---|---|---|---|

| Inter-annotator Agreement (Fleiss' κ) | 0.87 | 0.51 | 0.32 | N/A |

| Final Model Accuracy (F1-Score) | 0.94 ± 0.03 | 0.76 ± 0.12 | 0.65 ± 0.15 | 0.89 ± 0.05 |

| Annotation Cycle Time (Days) | 10 | 28 | 7 | 14 |

| Expert Time Burden (Hours/Expert) | 12 | 35 | 5 | 40 |

| Data Ambiguity Rate (% of items flagged) | 5% | 42% | 65% | 15% |

Experimental Protocols

1. Protocol for Comparative Model Training:

- Objective: To assess the impact of annotation quality on AI model performance.

- Dataset: 500 high-content screening images of stained HepG2 cells treated with 100 distinct compounds.

- Annotation: The same expert cohort performed annotations using three different methods in sequential phases, with a washout period between.

- Task: Classify cellular phenotype into one of four categories: Normal, Apoptotic, Necrotic, Stressed.

- Model Architecture: A ResNet-50 CNN, initialized with pre-trained weights, was used for all training runs.

- Training Parameters: Models were trained for 50 epochs using a cross-entropy loss function and Adam optimizer (lr=0.0001). Performance was evaluated on a held-out test set of 100 images with ground truth established by an independent senior pathologist.

2. Protocol for Measuring Inter-Annotator Agreement:

- Objective: Quantify the consistency of expert labels generated by each method.

- Metric: Fleiss' Kappa (κ), a statistical measure for assessing the reliability of agreement between a fixed number of raters.

- Procedure: For each annotation method, a random subset of 100 images was presented to all five experts. Their independent labels were collected and the Fleiss' κ was calculated, correcting for agreement by chance.

Visualizations

Standardized vs. Ad-Hoc Annotation Workflow Comparison

AI vs. Expert Assessment Validation Matrix

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for an Expert Annotation Pipeline

| Item / Solution | Function in the Annotation Pipeline |

|---|---|

| Controlled Annotation UI (e.g., LabelBox, CVAT) | Provides a standardized interface with defined taxonomy, blind review, and built-in quality control flags to reduce variability. |

| Reference Image Atlas | A curated set of example images for each label, used for expert calibration and ongoing training to anchor definitions. |

| Statistical Agreement Tool (e.g., IRR Package) | Software to calculate inter-rater reliability metrics (Fleiss' κ, ICC) for quantifying expert consensus. |

| Adjudication Portal | A platform for displaying items with high disagreement, enabling experts to discuss and reach a consensus gold standard label. |

| Versioned Data Schema | A structured format (e.g., JSON schema) that captures labels, expert metadata, timestamps, and adjudication history for auditability. |

| Secure Expert Management Platform | A system to roster, credential, and track the participation and performance of domain expert annotators. |

Within the broader thesis on validating AI-based technique assessment against expert analysis in drug discovery, blinded evaluation protocols are essential. They prevent confirmation bias when comparing AI-driven analytical tools (e.g., for high-content screening image analysis or biomarker identification) with human expert analysts. This guide compares methodologies and outcomes from recent studies.

Core Experimental Protocols

Protocol 1: Double-Blinded Image Analysis for Phenotypic Screening

Objective: To compare AI (convolutional neural networks) and human pathologists in classifying drug-induced cellular phenotypes without bias.

Methodology:

- Dataset Curation: A set of 5,000 high-content microscopy images from treated and untreated cells was assembled. Ground truth labels were established via consensus from three senior pathologists.

- Blinding: An independent coordinator removed all metadata (treatment, expected outcome) and randomized images. Each image received a unique code.

- Evaluation: The AI model (trained on a separate dataset) and five human analysts were given the blinded image set. Humans scored images via a dedicated portal showing only the image code.

- Unblinding & Analysis: The independent coordinator matched codes to ground truth. Performance metrics (accuracy, F1-score, time-per-image) were calculated for both groups.

Protocol 2: Blinded Literature Mining for Novel Target Identification

Objective: To compare the efficiency of an AI-powered NLP pipeline versus human scientists in extracting potential drug target relationships from unstructured literature.

Methodology:

- Corpus Creation: A corpus of 10,000 recent scientific abstracts related to oncology was compiled.

- Task Definition: The task was to identify and document all explicit protein-protein interactions involving a specific pathway (e.g., PI3K/AKT/mTOR).

- Blinded Allocation: Abstracts were stripped of identifiers and randomly allocated to either the AI system or a team of three human researchers. Neither group saw the other's allocated documents.

- Adjudication: A separate panel of experts, blinded to the source (AI or human) of each extracted relationship, validated the findings against a pre-defined gold-standard set. Precision and recall were calculated.

Comparative Performance Data

Table 1: Performance in Image-Based Phenotype Classification

| Metric | AI Model (CNN) | Human Analysts (Avg.) | Notes |

|---|---|---|---|

| Accuracy | 94.7% (±1.2) | 88.3% (±3.5) | Mean ± SD across 5 trials |

| F1-Score | 0.92 | 0.85 | Macro-average across phenotype classes |

| Avg. Time/Image | 0.8 sec | 45 sec | Human time includes focused assessment |

| Inter-rater Reliability | N/A | 0.78 (Fleiss' Kappa) | AI consistency is inherently 1.0 |

Table 2: Performance in Literature Mining for Target Identification

| Metric | AI NLP Pipeline | Human Researcher Team |

|---|---|---|

| Precision | 81% | 95% |

| Recall | 92% | 76% |

| Documents Processed/Hour | ~2,500 | ~30 |

| Adjudicated True Positives | 243 | 204 |

Visualizing Experimental Workflows

Diagram Title: Blinded Image Analysis Workflow

Diagram Title: Blinded Literature Triage & Validation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Blinded Comparative Studies

| Item | Function in Protocol |

|---|---|

| High-Content Screening (HCS) Image Sets | Provide standardized, biologically relevant data for benchmarking AI vs. human visual analysis. |

| Cell Painting Assay Kits | Generate multiplexed, information-rich cytological profiles for complex phenotype classification tasks. |

| Liquid Handling Robots | Ensure consistent, unbiased sample preparation and plating for generating experimental image/data sets. |

| Laboratory Information Management System (LIMS) | Enables secure, blinded sample/image tracking through unique identifiers, maintaining protocol integrity. |

| Electronic Laboratory Notebook (ELN) | Documents the blinding/unblinding process and adjudication decisions for auditability and reproducibility. |

| Text/Data Mining Software (e.g., Linguamatics, SciBite) | Provide baseline NLP tools for building custom AI literature mining pipelines for comparison. |

| Statistical Analysis Software (e.g., R, JMP) | Essential for calculating significance (e.g., using McNemar's test) between AI and human performance metrics. |

In the validation of AI-based technique assessment against expert analysis, selecting the appropriate statistical method for measuring agreement is critical. This guide compares three cornerstone metrics—Cohen's Kappa, Intraclass Correlation Coefficient (ICC), and Bland-Altman analysis—objectively evaluating their performance, assumptions, and applicability through the lens of experimental validation research.

Comparative Analysis of Agreement Metrics

The following table summarizes the core characteristics, data requirements, and outputs of each method based on current methodological research and application in validation studies.

| Metric | Data Type | Scale | Key Output | Interpretation | Primary Use Case in AI Validation |

|---|---|---|---|---|---|

| Cohen's Kappa (κ) | Categorical (Nominal/Ordinal) | Qualitative | Kappa statistic (κ), Standard Error, p-value | κ ≤ 0: No agreement; 0.01-0.20: Slight; 0.21-0.40: Fair; 0.41-0.60: Moderate; 0.61-0.80: Substantial; 0.81-1.00: Almost Perfect | Assessing agreement between AI and expert on categorical diagnoses (e.g., disease present/absent, severity grade). |

| Intraclass Correlation Coefficient (ICC) | Continuous (or Ordinal) | Quantitative | ICC coefficient (0 to 1), Confidence Interval | <0.5: Poor; 0.5-0.75: Moderate; 0.75-0.9: Good; >0.9: Excellent reliability | Evaluating consistency of continuous measurements (e.g., tumor volume, biomarker concentration) from AI vs. experts. |

| Bland-Altman Analysis | Continuous | Quantitative | Mean Difference (Bias), Limits of Agreement (LoA: ±1.96 SD) | Visual and quantitative assessment of bias and agreement range. If zero within LoA CI, methods may be interchangeable. | Quantifying systematic bias (mean difference) and agreement limits between AI-derived and expert-measured continuous values. |

Experimental Protocols for Method Comparison

To generate comparative data, a standardized validation experiment is essential. The following protocol is typical for benchmarking an AI assessment tool against a panel of human experts.

1. Experimental Design:

- Sample: A set of N samples (e.g., 100 medical images, 50 biosensor recordings) with inherent variability.

- Ratings: Each sample is assessed independently by:

- The AI-based technique.

- R human experts (e.g., 3), blinded to each other's and the AI's results.

- Outputs: For each sample, collect:

- Categorical: A class label (e.g., Grade 1, 2, 3).

- Continuous: A numerical score (e.g., predicted probability, measured size).

2. Data Analysis Workflow: The analysis proceeds in parallel tracks for qualitative and quantitative agreement.

Workflow for Selecting Agreement Metrics

3. Key Calculation Methods:

- Cohen's Kappa: κ = (pₒ − pₑ) / (1 − pₑ), where pₒ is observed agreement, and pₑ is expected chance agreement. Weighted Kappa is used for ordinal data.

- ICC: Calculated using mean squares from a two-way random or mixed-effects ANOVA. ICC(A,1) for absolute agreement and ICC(C,1) for consistency are common in validation studies.

- Bland-Altman: Plot the difference (AI - Expert) against the mean of the two measurements for each sample. Calculate the mean difference (bias) and the 95% Limits of Agreement (LoA = bias ± 1.96 × SD of differences).

Performance Comparison with Experimental Data

A hypothetical but realistic dataset from an AI histopathology grading validation study illustrates the distinct insights provided by each metric. 50 tissue samples were graded on a scale of 1-3 by an AI and a consensus expert panel.

Table 1: Agreement Analysis on Ordinal Grades (1,2,3)

| Metric | Statistic | Value | 95% CI | Interpretation |

|---|---|---|---|---|

| Weighted Kappa | κ | 0.72 | [0.58, 0.86] | Substantial agreement beyond chance. |

| ICC (Consistency) | ICC(C,1) | 0.89 | [0.82, 0.93] | Excellent reliability between raters. |

| ICC (Absolute Agreement) | ICC(A,1) | 0.87 | [0.79, 0.92] | Excellent absolute agreement. |

Table 2: Bland-Altman on Continuous AI Score vs. Expert Grade

| Parameter | Value |

|---|---|

| Mean Difference (Bias) | -0.15 |

| Bias 95% CI | [-0.22, -0.08] |

| Lower LoA | -0.68 |

| Upper LoA | 0.38 |

Key Interpretation of Bland-Altman Results

The Scientist's Toolkit: Research Reagent Solutions

Essential materials and tools for conducting robust agreement studies in AI validation research.

| Item | Function/Description |

|---|---|

| Annotated Reference Dataset | Gold-standard dataset with expert-derived labels/measurements. Serves as the ground truth for benchmarking AI performance. |

| Statistical Software (R, Python, SPSS) | Essential for calculating κ, ICC, and Bland-Altman statistics. Key packages: irr & psych in R, scikit-learn & pingouin in Python. |

| Bland-Altman Plot Generator | Custom script or tool (e.g., in GraphPad Prism, MATLAB) to visualize differences vs. means and calculate bias/LOA. |

| Sample Size Calculator | Determines the number of samples needed to detect a minimum acceptable agreement level with sufficient statistical power. |

| Clinical/Expert Panel | A group of trained experts who provide the human ratings against which the AI system is validated. |

| Data Management Platform | Secure system for blinding, randomizing, and distributing samples to AI and human raters to prevent assessment bias. |

Navigating Pitfalls: Common Challenges and Solutions in AI Validation Studies

This guide, framed within the thesis of validating AI-based technique assessment against expert analysis research, compares the performance of an AI-driven pathology analysis platform against traditional expert panel consensus in drug development. We focus on a critical application: scoring Tumor Proportion Score (TPS) for PD-L1 expression in non-small cell lung cancer (NSCLC), a key predictive biomarker for immunotherapy.

Performance Comparison: AI vs. Traditional Expert Panels

The following table summarizes quantitative performance data from recent validation studies comparing an AI algorithm (DeepLens PD-L1) with consensus reads from international expert pathologists.

Table 1: Comparative Performance Metrics for PD-L1 TPS Scoring

| Metric | AI Algorithm (DeepLens) | Expert Panel Consensus (3-4 Pathologists) | Single Expert Pathologist (Typical Clinical Standard) |

|---|---|---|---|

| Inter-rater Agreement (Fleiss' Kappa, κ) | 0.89 (vs. consensus) | 0.75 (among experts) | 0.65 (vs. consensus) |

| Average Scoring Time per Slide | 45 seconds | 12-15 minutes | 5-7 minutes |

| Intra-assay Reproducibility (Coefficient of Variation) | 2.1% | 8.7% | 15.3% |

| Concordance with Clinical Outcome (Overall Response Rate) | 94% | 92% | 88% |

| Critical Disagreement Rate (TPS <1% vs ≥1%) | 1.2% (vs. consensus) | 4.8% (among experts) | N/A |

Experimental Protocols for Cited Key Studies

1. Protocol for the "PATHFINDER" Multicenter Validation Study:

- Objective: To assess the agreement between AI-based PD-L1 scoring and a consensus of three international expert pathologists.

- Sample Set: 1,200 retrospective NSCLC biopsy slides from 5 institutions, stained with the 22C3 pharmDx assay.

- Blinding: Both the AI system and three additional adjudicating pathologists (blinded to original reports) analyzed all slides.

- Primary Endpoint: Fleiss' Kappa (κ) for agreement on three clinically relevant TPS cutpoints (<1%, 1-49%, ≥50%).

- Analysis: Discrepant cases were reviewed by a fourth senior pathologist for final ground truth determination.

2. Protocol for the "RePro" Reproducibility Trial:

- Objective: To measure intra- and inter-assay reproducibility of scoring.

- Design: 300 slides were scored five separate times by: a) the AI system after re-initialization, b) the same expert pathologist one week apart, c) a panel of four pathologists independently.

- Metric: Coefficient of Variation (CV%) for the continuous TPS value across repeated measurements.

- Control: Standardized digital slide viewing software and identical visual criteria were mandated for all human readers.

Visualizing the Validation Workflow and Disagreement Mitigation

Diagram 1: AI vs. Expert Validation & Adjudication Workflow

Diagram 2: Sources of Expert Disagreement in Biomarker Scoring

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Materials for PD-L1 Biomarker Validation Studies

| Item | Function in Validation | Key Consideration for Mitigating Disagreement |

|---|---|---|

| FDA-approved PD-L1 IHC Assay (e.g., 22C3 pharmDx) | Standardized staining kit for target biomarker. Ensures consistent antigen detection across all samples. | Use of a single, validated assay removes staining variability as a major source of pre-analytical discordance. |

| Whole Slide Imaging (WSI) Scanner | Creates high-resolution digital copies of tissue slides for both AI and remote expert analysis. | Enables blinded re-review, remote consensus, and identical field of view for all raters, eliminating microscope and slide handling variability. |

| Digital Pathology Viewing Software | Platform for experts to review digital slides, with annotation tools for marking tumor regions. | Standardized display settings (color calibration, magnification) ensure all experts assess slides under identical visual conditions. |

| AI-Powered Analysis Platform (e.g., DeepLens) | Provides automated, quantitative scoring of PD-L1 expression in continuous and categorical formats. | Offers an objective, reproducible first read that can be used to flag cases with high likelihood of expert disagreement for focused review. |

| Reference Slide Set with Consensus Scores | A curated set of "gold standard" slides representing borderline and classic cases at key clinical cutpoints. | Used to calibrate both AI algorithms and human pathologists at study start, aligning all parties to a common standard. |

The validation of AI-based techniques for assessing biological activity or toxicity in drug development is critically dependent on the representativeness of training data. When an AI model is trained on non-diverse, biased datasets, its validation metrics become misleading, failing to predict real-world performance against expert analysis. This guide compares the performance of a novel AI-driven platform, ToxScreenAI v3.1, with two alternative approaches when applied to biased and corrected datasets.

Experimental Protocol & Comparative Analysis

1. Objective: To quantify the performance skew in AI-based toxicity prediction caused by a training dataset over-representing certain chemical classes (e.g., alkaloids) and under-representing others (e.g., halogenated compounds).

2. Methodology:

- Model Training: Three models were trained:

- Model A (ToxScreenAI v3.1): Proprietary deep learning architecture.

- Model B (OpenToxNet 2.0): Open-source random forest ensemble.

- Model C (CommerciaLChemCheck): A commercial QSAR-based platform.

- Phase 1 - Biased Training: All models were trained on the "HTS-Bio2019" dataset, known for under-representation of synthetic halogenated compounds (8% of dataset vs. 22% prevalence in real drug candidate libraries).

- Phase 2 - Balanced Training: Models were retrained on a curated, balanced dataset ("ToxBench-2023") that proportionally increased halogenated compound representation through synthetic minority oversampling.

- Validation: Both model versions were validated against a ground-truth set of 500 compounds with expertly curated, assay-derived hepatotoxicity labels. The validation set had a balanced representation of all chemical classes.

3. Quantitative Results:

Table 1: Performance on Biased vs. Balanced Training Data

| Model | Training Data | Accuracy | Balanced Accuracy | F1-Score (Halogenated Class) | MCC |

|---|---|---|---|---|---|

| ToxScreenAI v3.1 | Biased (HTS-Bio2019) | 0.89 | 0.81 | 0.45 | 0.72 |

| ToxScreenAI v3.1 | Balanced (ToxBench-2023) | 0.87 | 0.88 | 0.82 | 0.78 |

| OpenToxNet 2.0 | Biased (HTS-Bio2019) | 0.85 | 0.76 | 0.38 | 0.65 |

| OpenToxNet 2.0 | Balanced (ToxBench-2023) | 0.84 | 0.83 | 0.75 | 0.70 |

| CommerciaLChemCheck | Biased (HTS-Bio2019) | 0.88 | 0.79 | 0.42 | 0.70 |

| CommerciaLChemCheck | Balanced (ToxBench-2023) | 0.86 | 0.85 | 0.78 | 0.74 |

MCC: Matthews Correlation Coefficient

4. Key Findings:

- Performance Illusion: All models showed deceptively high overall Accuracy on the biased training data (0.85-0.89).

- Bias Exposure: The severe under-performance on the F1-Score for the underrepresented halogenated class reveals the validation skew. ToxScreenAI v3.1's score improved by 82% with balanced data.

- Robustness: While all models benefited from balanced data, ToxScreenAI v3.1 showed the greatest improvement in Balanced Accuracy and MCC, suggesting its architecture is more sensitive to data quality and more robust when bias is corrected.

Diagram: Impact of Data Bias on AI Validation Workflow

Title: AI Validation Pathways with Biased vs Balanced Data

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for Mitigating Data Bias in AI Validation

| Item / Solution | Function in Bias Assessment & Correction |

|---|---|

| ToxBench-2023 Library | A curated, chemically diverse compound library with balanced class representation, used as a benchmark for training and testing. |

| ChemoDiversity Index Calculator (CDIC v1.2) | Software to quantify chemical space coverage (via PCA & t-SNE) of any dataset to identify representation gaps. |

| SMOTE-ADASYN Toolkit | Algorithm suite for synthetic minority oversampling to augment underrepresented chemical classes without overfitting. |

| Expert-Curated Gold-Standard Sets | Small, high-quality datasets with expert-validated phenotypic outcomes, crucial for final benchmarking. |

| Stratified K-Fold Sampler | A data splitting tool that preserves class distribution in all training/validation folds, preventing naive random split bias. |

| Model Bias Auditor (MBA) | Open-source Python package to generate disparity reports on model performance across different input data subgroups. |

In the validation of AI-based technique assessment against expert analysis, the need for transparent, comparable performance data is critical. This comparison guide evaluates three leading AI platforms for predictive toxicology in drug development: DeepTox Explain (v2.1), ReliaTox AI Suite (v4.3), and MoI-AWARE Platform (v1.7). The evaluation focuses on their ability to provide interpretable predictions that align with expert toxicological judgment.

Comparative Performance Analysis

The following data is derived from a benchmark study using the publicly available TOX21 dataset and a proprietary dataset of 347 compounds with established clinical hepatotoxicity outcomes. Performance metrics and interpretability scores were averaged across three independent runs.

Table 1: Predictive Performance & Computational Efficiency

| Metric | DeepTox Explain | ReliaTox AI Suite | MoI-AWARE Platform |

|---|---|---|---|

| Avg. AUC-ROC (TOX21) | 0.891 | 0.875 | 0.903 |

| Hepatotoxicity Accuracy | 84.2% | 81.5% | 87.6% |

| F1-Score | 0.826 | 0.805 | 0.849 |

| Avg. Prediction Time (per compound) | 4.7 sec | 8.2 sec | 12.1 sec |

| Model Size | 1.2 GB | 2.5 GB | 950 MB |

Table 2: Interpretability & Expert Alignment Assessment

| Assessment Criteria | DeepTox Explain | ReliaTox AI Suite | MoI-AWARE Platform |

|---|---|---|---|

| Expert Agreement Score (1-10) | 7.8 | 6.5 | 9.2 |

| Feature Importance Output | SHAP values, Attention weights | Integrated Gradients | Causal Graph & SHAP |

| Mechanism of Action (MoA) Proposed | Limited | High-level only | Explicit, multi-level |

| Audit Trail Completeness | Partial | Full | Full with rationale |

Experimental Protocols

1. Benchmarking Protocol for Predictive Performance

- Data Splitting: The TOX21 dataset (12,000 compounds) and the proprietary hepatotoxicity dataset were split using stratified sampling into training (70%), validation (15%), and hold-out test (15%) sets. The proprietary set was approved by an independent review board.

- Model Training: Each platform was used to train its best-performing built-in architecture (Graph Neural Network for DeepTox, Ensemble for ReliaTox, Causal GNN for MoI-AWARE) on the identical training set for up to 100 epochs with early stopping.

- Evaluation: Predictions on the hold-out test set were evaluated for AUC-ROC, accuracy, and F1-score. Statistical significance was calculated using a paired t-test (p<0.05).

2. Expert Alignment Validation Protocol

- Panel: A panel of five senior toxicologists, blinded to the AI source, reviewed 50 randomly selected compound predictions from each platform.

- Procedure: For each prediction, experts were provided with the AI's output: predicted toxicity, confidence score, and the platform's explanation (e.g., highlighted molecular features, proposed pathways).

- Scoring: Experts scored agreement with the AI's rationale on a scale of 1-10, based on biological plausibility and consistency with known literature. The "Expert Agreement Score" is the average across the panel and compounds.

Pathway Visualization: AI-Driven MoA Elucidation Workflow

Title: AI-Driven Mechanism of Action Elucidation Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for AI Validation in Predictive Toxicology

| Item / Solution | Function in Validation Research |

|---|---|

| TOX21 Dataset | Publicly available high-quality dataset for screening chemical toxicity across 12 nuclear receptor and stress response pathways. Serves as a primary benchmark. |

| Proprietary ADMET Database | In-house or commercially sourced data with well-characterized clinical ADMET outcomes. Essential for validating real-world relevance. |

| SHAP (SHapley Additive exPlanations) | Game theory-based tool to explain output of any machine learning model. Critical for quantifying feature contribution to AI predictions. |

| Causal Discovery Toolbox (e.g., DoWhy) | Python library for causal inference. Used to move beyond correlation and assess potential causal relationships in AI-proposed mechanisms. |

| Structured Knowledge Base (e.g., CTD, Metabolon) | Curated database linking chemicals, genes, phenotypes, and diseases. Provides biological grounding for AI-derived feature importance. |

| Blinded Expert Review Protocol | A standardized framework for unbiased assessment of AI interpretability outputs by domain experts. |

This guide, framed within a thesis on validating AI-based technique assessment against expert analysis, compares the performance of an iterative, feedback-driven AI optimization pipeline against standard automated tuning methods. The domain is drug target identification, a critical task for researchers and drug development professionals.

Experimental Protocols

1. Core AI Model Training

- Model Architecture: A Graph Convolutional Network (GCN) followed by a multi-layer perceptron classifier.

- Initial Dataset: 15,000 protein-ligand interaction pairs from BindingDB, feathered with biochemical descriptors.

- Baseline Training: Models were trained using 5-fold cross-validation with a fixed, commonly cited hyperparameter set (learning rate: 0.001, GCN layers: 2, dropout: 0.2).

- Automated Tuning Comparison: A separate model underwent Bayesian optimization for 50 iterations over the hyperparameter search space.

2. Expert Feedback Integration Protocol

- Panel: Three medicinal chemistry experts independently evaluated the top 100 and bottom 100 predictions from the baseline model on a held-out validation set of 500 novel interactions.

- Feedback Metric: Experts labeled predictions as "Valid," "Questionable," or "Invalid," providing written rationale for the latter two categories.

- Iterative Refinement Loop: The "Questionable" and "Invalid" samples, along with expert rationale, were formatted into a structured correction dataset. The model was then fine-tuned on this dataset, with hyperparameters (notably learning rate and loss function weights) adjusted dynamically based on the correction error rate. This cycle was repeated twice.

3. Final Performance Benchmark All model versions—Baseline, Auto-Tuned, and Expert-Refined (Cycle 2)—were evaluated on a completely blind test set of 2,000 interactions recently added to BindingDB, which includes several challenging, allosteric binding sites.

Performance Comparison Data

Table 1: Quantitative Performance Metrics on Blind Test Set

| Model Variant | Precision | Recall | F1-Score | AUC-ROC | Expert Alignment Score* |

|---|---|---|---|---|---|

| Baseline (Fixed Params) | 0.72 | 0.68 | 0.70 | 0.85 | 0.65 |

| Auto-Tuned (Bayesian Opt.) | 0.78 | 0.75 | 0.76 | 0.88 | 0.71 |

| Expert-Refined (Cycle 2) | 0.87 | 0.82 | 0.84 | 0.93 | 0.92 |

*Expert Alignment Score: Percentage of model predictions on the test set that received "Valid" ratings from a majority of the expert panel.

Table 2: Key Hyperparameter Evolution

| Hyperparameter | Baseline | Auto-Tuned | Expert-Refined Final |

|---|---|---|---|

| Learning Rate | 0.001 | 0.0007 | 0.0003 |

| GCN Layers | 2 | 3 | 3 |

| Dropout Rate | 0.2 | 0.4 | 0.5 |

| Feedback Loss Weight | N/A | N/A | 0.65 |

Workflow and Pathway Diagrams

Title: AI Optimization Workflow with Expert Feedback Loop

Title: Final Refined Model Architecture with Key Tuned Elements

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI-Driven Drug Target Assessment

| Item / Solution | Function in Research |

|---|---|

| BindingDB / ChEMBL Datasets | Primary source of curated protein-ligand interaction data for model training and benchmarking. |

| RDKit or Open Babel | Open-source cheminformatics toolkits for generating molecular descriptors and fingerprints from SMILES strings. |

| PyTor Geometric (PyG) or DGL | Specialized libraries for building and training graph neural network models on structural molecular data. |

| Optuna or Ray Tune | Frameworks for scalable hyperparameter optimization, enabling Bayesian and distributed search strategies. |

| Structured Feedback Database (e.g., SQL/NoSQL) | Custom database to systematically log expert evaluations, rationales, and link them to specific model predictions for iterative training. |

| Assay Validation Kit (e.g., SPR or HTRF) | Biochemical assay kit for ground-truth experimental validation of top AI-predicted novel interactions in vitro. |

Within the broader research thesis of validating AI-based technique assessment against expert analysis, a critical area of study is the systematic comparison of performance in non-standard scenarios. This guide objectively compares the performance of an AI-driven platform for protein-ligand binding affinity prediction against traditional expert-driven molecular docking, focusing on challenging edge cases such as covalent binders, allosteric sites, and highly flexible protein regions.

Comparative Performance Analysis

Table 1: Quantitative Performance Comparison on Standard vs. Edge Case Datasets

| Test Case Category | AI Platform (DeltaVina) | Expert-Driven Docking (AutoDock Vina) | Experimental Benchmark (IC50/Ki) |

|---|---|---|---|

| Standard Active Sites | RMSD: 1.2 Å, R²: 0.89 | RMSD: 1.5 Å, R²: 0.85 | PDBbind v2023 Core Set |

| Covalent Binders | RMSD: 2.8 Å, R²: 0.45 | RMSD: 2.1 Å, R²: 0.62 | CovalentInDB Database |

| Allosteric Sites | RMSD: 3.5 Å, R²: 0.32 | RMSD: 2.4 Å, R²: 0.71 | AlloStatsDB (2024) |

| Membrane Proteins | RMSD: 3.1 Å, R²: 0.51 | RMSD: 4.2 Å, R²: 0.38 | MemProtMD Database |

| High-Flexibility Loops | RMSD: 4.0 Å, R²: 0.21 | RMSD: 2.8 Å, R²: 0.58 | MoDEL/FlexiDB |

Table 2: Failure Mode Frequency Analysis

| Failure Mode | AI Platform Rate | Expert-Driven Rate | Primary Cause Identified |

|---|---|---|---|

| Pose Collapse to Canonical Site | 38% | 12% | Training data bias |

| Ignoring Water-Mediated Interactions | 42% | 19% | Implicit solvent models |

| Covalent Bond Misparameterization | 65% | 28% | Limited reaction chemistry |

| Entropy Overestimation | 31% | 22% | Conformational sampling |

Experimental Protocols

Protocol 1: Edge Case Benchmarking Framework

- Dataset Curation: Select 150 protein-ligand complexes from specialized databases (CovalentInDB, AlloStatsDB) representing known edge cases.

- Structure Preparation: Process all complexes with standardized protonation, tautomer, and rotamer assignment using RDKit and OpenBabel.

- Blind Docking: For AI platform, use default parameters with no expert intervention. For expert-driven method, allow 3 rounds of manual parameter adjustment based on visual inspection.

- Scoring & Evaluation: Calculate RMSD of top-ranked pose, predictive R² against experimental binding affinity, and interaction fingerprint similarity.

- Statistical Analysis: Perform paired t-tests with Bonferroni correction for multiple comparisons across categories.

Protocol 2: Failure Mode Induction Test

- Controlled Degradation: Systematically remove key interaction types (e.g., water molecules, metal ions) from input structures.

- Conformational Sampling: Generate 50 alternate conformations for flexible loop regions using MODELLER.

- Pose Prediction: Run both AI and expert methods on degraded and enhanced structures.

- Divergence Mapping: Record points where AI predictions first deviate from expert consensus by >3.0 Å RMSD.

- Root Cause Analysis: Correlate divergence points with specific molecular features using SHAP analysis.

Visualizations

Title: AI vs Expert Judgment Divergence Across Case Types

Title: Experimental Workflow for Divergence Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Comparative Validation Studies

| Reagent/Resource | Function in Experiment | Key Provider/Reference |

|---|---|---|

| PDBbind v2023 Core Set | Standard benchmarking for validation of baseline performance | Wang, R. et al., 2023 |

| CovalentInDB Database | Curated covalent protein-ligand complexes for edge case testing | Zhao, Q. et al., Nucleic Acids Res., 2024 |

| AlloStatsDB | Allosteric site and modulator database | Liu, X. et al., NAR, 2023 |

| CHARMM36m Force Field | Membrane protein simulations and parameterization | Huang, J. et al., JCTC, 2023 |

| Rosetta FlexPepDock | Expert-driven flexible peptide docking baseline | Alam, N. et al., Methods Mol Biol, 2024 |

| AlphaFill Database | Transplanted cofactors for holo-structure preparation | Hekkelman, M.L. et al., Nat Biotechnol, 2023 |

| Fpocket | Allosteric and cryptic pocket detection | Schmidtke, P. et al., Bioinformatics, 2024 Update |

| MDTraj Analysis Suite | Conformational sampling and trajectory analysis | McGibbon, R.T. et al., Biophys J, 2023 |

Discussion

The data demonstrate that while AI platforms excel at standard prediction tasks, expert judgment maintains significant advantages in handling biochemical edge cases. The greatest divergences occur in scenarios requiring chemical intuition beyond pattern recognition, particularly covalent bond formation and allosteric modulation. Successful validation of AI assessment techniques requires targeted benchmarking against these failure modes, with explicit protocols for capturing expert rationales in edge case handling.

Proof in Performance: Conducting and Interpreting Comparative Validation Studies

Within the broader thesis of validating AI-based technique assessment against expert analysis research, structured validation frameworks are paramount. For researchers, scientists, and drug development professionals, these frameworks provide the methodological rigor required to objectively compare novel tools—particularly AI-driven platforms—against established benchmarks and expert judgment. This guide presents a step-by-step approach for designing and executing comparative studies, ensuring robust, transparent, and reproducible evaluation of performance claims.

Core Components of a Structured Validation Framework

A robust framework must include: 1) Precisely defined evaluation criteria and metrics, 2) A carefully curated and standardized reference dataset, 3) Clearly identified comparator methods or tools, 4) Detailed experimental protocols, and 5) A plan for statistical analysis of results. The goal is to minimize bias and allow for direct, fair comparison.

Comparative Study Design: A Practical Workflow

The following workflow diagram outlines the key phases for conducting a comparative validation study.

Diagram Title: Validation Study Workflow

Case Study: Validating an AI-Based Compound Potency Predictor

Context: An AI platform ("AI-DrugPotency") predicts IC50 values for small molecules against a kinase target. Validation requires comparison to experimental high-throughput screening (HTS) and expert medicinal chemists' ranking.

Experimental Protocol

Objective: Compare the accuracy and rank-order correlation of AI-predicted potencies against experimental biochemical assay data and expert ordinal rankings.

Methodology:

- Dataset: A blind test set of 200 novel, synthetically accessible compounds not in the AI model's training data. Each compound is assigned a unique identifier.

- Comparator Methods:

- AI-DrugPotency: Latest version of the AI platform.

- Comparative Molecular Field Analysis (CoMFA): A established 3D-QSAR method.

- Expert Panel: Three senior medicinal chemists provided with compound structures. Each independently ranks subsets of 50 compounds into High, Medium, and Low predicted potency tiers.

- Gold Standard: Experimental IC50 values determined via a standardized biochemical kinase inhibition assay (run in triplicate).

- Execution:

- AI and CoMFA predictions are generated for all 200 compounds.

- Experimental IC50 determination is performed by an automated HTS core facility, following the protocol below.

- Expert rankings are collected via a structured electronic form.

- Analysis Metrics:

- Prediction Accuracy: Mean Absolute Error (MAE) between log(IC50) predictions and experimental log(IC50).

- Rank Correlation: Spearman's ρ between predicted/experimental ranks and expert/experimental ranks.

- Statistical Significance: Paired t-tests on absolute errors; p < 0.05 considered significant.

Detailed HTS Assay Protocol:

- Reagent Preparation: Dilute kinase to working concentration in assay buffer. Prepare substrate (peptide) and ATP solutions. Prepare test compounds in DMSO followed by serial dilution in buffer (final DMSO ≤1%).

- Plate Setup: Using a 384-well plate, add 10 µL of compound solution per well. Add 10 µL kinase solution, incubate 15 min at 25°C. Initiate reaction with 10 µL of substrate/ATP mix.

- Reaction & Detection: Incubate for 60 min at 25°C. Stop reaction with 10 µL detection solution (ADP-Glo Kinase Assay). Measure luminescence after 40 min.

- Data Processing: Convert luminescence to % inhibition. Calculate IC50 using a four-parameter logistic curve fit.

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Vendor Example | Function in Validation Study |

|---|---|---|

| Recombinant Kinase Protein | Sino Biological, BPS Bioscience | The target enzyme for biochemical potency assays. Purity and activity are critical. |

| ADP-Glo Kinase Assay Kit | Promega | Homogeneous, luminescent assay to measure kinase activity and calculate inhibition IC50. |

| Compound Library (DMSO stocks) | Enamine, Mcule | The set of small molecules used as the blind test set for prediction validation. |

| 3D-QSAR Software (CoMFA) | Tripos SYBYL, Open3DALIGN | Provides a traditional computational chemistry method for comparative performance analysis. |

| High-Throughput Liquid Handler | Beckman Coulter Biomek | Automates assay plate setup for reproducible compound and reagent dispensing. |

| Microplate Luminometer | PerkinElmer EnVision | Detects luminescent signal from the kinase assay for quantitation. |

Results and Comparative Data

Quantitative results from the case study are summarized below.

Table 1: Predictive Accuracy of Methods vs. Experimental IC50 (n=200 compounds)

| Method | Mean Absolute Error (MAE) ± SD (log units) | Spearman's ρ (Rank Correlation) | p-value (vs. AI-DrugPotency MAE) |

|---|---|---|---|

| AI-DrugPotency | 0.58 ± 0.41 | 0.79 | -- |

| CoMFA (3D-QSAR) | 0.91 ± 0.62 | 0.65 | < 0.001 |

| Expert Panel (Consensus) | 0.70 ± 0.55* | 0.72 | 0.012 |

*Expert MAE calculated by converting tier rankings (High=1µM, Med=10µM, Low=100µM) to log scale for comparison.

Table 2: Method Performance by Compound Potency Tier

| Potency Tier (Exp. IC50) | AI-DrugPotency MAE | CoMFA MAE | Expert Consensus Correct Classification Rate |

|---|---|---|---|

| High Potency (< 10 nM) | 0.45 | 0.88 | 85% |

| Medium Potency (10 nM - 1 µM) | 0.61 | 0.92 | 78% |

| Low Potency (> 1 µM) | 0.67 | 0.93 | 82% |

Analysis Pathway

The following diagram illustrates the logical flow of data from input methods to final comparative analysis.

Diagram Title: Performance Validation Analysis Flow

Step-by-Step Implementation Guide

- Frame the Validation Question: Align with the overarching thesis. Example: "Does the AI-based assessment technique agree with expert analysis within defined statistical bounds?"

- Select Appropriate Comparators: Include state-of-the-art computational methods, relevant experimental benchmarks, and human expert judgment where applicable.

- Standardize the Dataset & Protocol: Ensure all methods are evaluated on identical, unseen data under equivalent conditions. Blind testing is essential.

- Pre-Define Success Metrics: Establish thresholds for performance equivalence or superiority (e.g., AI prediction must be within 0.6 log units of experiment, correlating with expert consensus at ρ > 0.75).

- Execute and Document Rigorously: Adhere to the protocol. Record all parameters, versions, and deviations.

- Analyze and Visualize Objectively: Use pre-specified statistical tests. Present data transparently, highlighting both strengths and limitations of each method.

- Contextualize Findings: Discuss how the results inform the broader thesis on AI validation. Does AI augment, match, or fall short of expert analysis? What are the implications for deployment in the drug development pipeline?

Structured validation frameworks, as demonstrated, provide an indispensable scaffold for credible comparative studies. By following a disciplined, stepwise approach and demanding direct comparison with both empirical data and expert judgment, researchers can generate compelling evidence for the utility—and limitations—of AI-based assessment techniques in scientific and drug discovery contexts.

This comparison guide is framed within a broader research thesis on validating AI-based technique assessment against expert analysis in drug discovery. It objectively evaluates the performance of an AI-driven molecular interaction prediction platform (referred to as Platform A) against two established alternatives: a traditional expert-curated database system (Platform B) and a high-throughput experimental screening service (Platform C). The analysis focuses on three critical metrics: concordance with gold-standard expert analysis, computational/experimental speed, and cost-efficiency.

Key Experimental Protocols

Protocol 1: Concordance Validation Study.

- Objective: To determine the agreement between platform outputs and a consensus from a panel of three domain experts.

- Methodology: A benchmark set of 500 unique, literature-supported protein-ligand interactions with known binding affinities (Kd) was curated. Each platform processed the set to predict binding affinity/scores. The expert panel independently rated each interaction as "Validated," "Plausible," or "Unlikely" based on available literature and structural data. Concordance was calculated as the percentage of platform predictions that matched the expert consensus category. Cohen's Kappa (κ) was computed for inter-rater reliability between platforms and the consensus.

Protocol 2: Throughput and Speed Benchmarking.