Beyond the Black Box: A Researcher's Guide to Verifying Biomechanics Software Results in Drug Development

Commercial biomechanics software is essential for musculoskeletal analysis and implant design, yet results must be rigorously verified to ensure scientific validity and regulatory compliance.


Abstract

Commercial biomechanics software is essential for musculoskeletal analysis and implant design, yet results must be rigorously verified to ensure scientific validity and regulatory compliance. This article provides a structured framework for researchers, scientists, and drug development professionals. We cover the foundational principles of software verification, practical methodological steps for applying verification protocols, strategies for troubleshooting and optimizing simulations, and advanced techniques for validating and comparing results against gold-standard benchmarks. This guide empowers users to move from blind trust to informed confidence in their computational biomechanics outcomes.

Understanding the Need: Why Verification is Critical in Commercial Biomechanics Software

Technical Support Center: Verification & Troubleshooting for Commercial Biomechanics Software

Troubleshooting Guides

Issue 1: Inconsistent Results Between Software Versions

- Problem: An inverse dynamics analysis run on Software X v2.1 yields a peak joint force that differs by 15% from the same analysis run on v2.2.

- Diagnosis: This often indicates a change in the underlying proprietary algorithm or a default assumption (e.g., the threshold for noise filtering, the definition of an anatomical landmark).

- Solution:

- Export and compare all input parameters and raw data between versions.

- Check the software's change log for "updated biomechanical model" or "improved filter."

- Run a simplified, verifiable benchmark (e.g., inverse dynamics on a pendulum) through both versions.

- Contact technical support and explicitly ask: "What specific algorithmic constants or assumptions were changed between v2.1 and v2.2 affecting joint load calculation?"
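
The pendulum benchmark suggested above has a closed-form answer, which makes it a convenient reference for both software versions. The following is a minimal sketch, assuming a point-mass pendulum driven through a prescribed sinusoidal motion; the mass, length, and motion parameters are illustrative choices, not values from any vendor's test suite.

```python
import math

def pendulum_joint_torque(theta, theta_ddot, mass=1.0, length=0.5, g=9.81):
    """Analytical inverse dynamics for a point-mass pendulum.

    theta is measured from the downward vertical (rad). The torque the
    joint must supply is tau = m*L^2 * theta_ddot + m*g*L*sin(theta).
    """
    inertia = mass * length ** 2
    return inertia * theta_ddot + mass * g * length * math.sin(theta)

def reference_torques(amplitude=0.3, freq_hz=1.0, n=101, duration=1.0):
    """Torque time series for the prescribed motion theta(t) = A*sin(2*pi*f*t)."""
    omega = 2.0 * math.pi * freq_hz
    series = []
    for i in range(n):
        t = duration * i / (n - 1)
        theta = amplitude * math.sin(omega * t)
        theta_ddot = -amplitude * omega ** 2 * math.sin(omega * t)
        series.append((t, pendulum_joint_torque(theta, theta_ddot)))
    return series

def max_abs_deviation(software_torques, reference):
    """Largest absolute gap between exported torques and the analytical ones."""
    return max(abs(s - r) for s, (_, r) in zip(software_torques, reference))
```

Feeding the same prescribed motion to v2.1 and v2.2 and passing each exported torque series to `max_abs_deviation` shows which version, if either, departs from the analytical solution.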

Issue 2: Failure to Replicate Published Results Using the Same Software

- Problem: Your replication of a published gait study, using the same commercial software cited, produces statistically different muscle activation timing.

- Diagnosis: Hidden user-specific settings or undisclosed preprocessing steps in the proprietary pipeline.

- Solution:

- Audit the Workflow: Document every click, from raw file import to final result. Compare this to the methods section.

- Isolate the Discrepancy: Recreate the experiment using the publicly available "Grand Challenge" dataset for human gait. Compare your outputs to the community-validated benchmarks.

- File a Support Ticket requesting the exact configuration file or template used for the specific analysis type described in the paper.

Issue 3: Unexplained Error or Crash During Proprietary Solver Execution

- Problem: Software crashes when solving a complex musculoskeletal model, with a generic error: "Solver failed to converge."

- Diagnosis: The black-box numerical solver hit an undefined boundary condition based on your input.

- Solution:

- Simplify the model progressively until it runs (remove muscles, then ligaments, reduce DOF).

- Systematically vary initial conditions for the solver within physiologically plausible ranges.

- If a solution is found, note the precise boundary where it fails. This is critical data for understanding software limits.

Frequently Asked Questions (FAQs)

Q1: How can I verify that a proprietary algorithm's output is physiologically plausible, not just mathematically convergent?

A: Implement a "sanity check" pipeline using independent, open-source tools (e.g., OpenSim for musculoskeletal modeling, R or Python for statistical analysis). Run your raw data through both the commercial black box and the transparent open-source pipeline. Key metrics should align within an acceptable margin of error. Significant deviations require investigation.

Q2: What specific questions should I ask software vendors regarding their algorithms for regulatory (e.g., FDA) submission?

A: You must ask:

- "What is the mathematical formulation of the core biomechanical model?"

- "What are the default values for all constants, and what is their empirical basis?"

- "What validation studies have been performed, and can we access the raw validation data and protocols?"

- "What are the known limitations and error bounds of the solver under conditions of [your specific use case]?"

Q3: We found a potential error. How do we distinguish a software bug from a misunderstanding of a hidden assumption?

A: Follow this protocol:

- Create a minimal reproducible example that triggers the issue.

- Test it on a different, independent system (clean installation).

- Submit the example to technical support, framing it as a "verification of understanding" rather than an accusation. Ask: "Can you walk us through the step-by-step processing of this attached minimal dataset to help us align our understanding with the software's logic?"

Table 1: Comparison of Knee Joint Contact Force Outputs Across Platforms for the Same Input Gait Data

| Software Platform | Version | Solver | Peak Knee Force (N) | Difference from OpenSim Baseline | Reported Confidence Interval |

|---|---|---|---|---|---|

| OpenSim | 4.3 | Open-source (CMC) | 2450 | Baseline | ± 180 N |

| BioSim-Core | 2023.1 | "ForceSolve v3" | 2780 | +13.5% | Not Disclosed |

| KinTool Pro | 9.2 | "DynaOpt Engine" | 2310 | -5.7% | ± 220 N |

| MechAnalytica | 5.1 | "LiveLigament v2" | 2905 | +18.6% | ± 150 N |

Data synthesized from recent comparative studies and user forum benchmarks.

Experimental Protocol: Cross-Platform Verification

Title: Protocol for Validating Proprietary Musculoskeletal Simulation Results.

Objective: To verify the output of a commercial black-box biomechanics software against a standardized, transparent workflow.

Materials: See The Scientist's Toolkit below.

Method:

- Data Acquisition: Collect motion capture and force plate data for a standard activity (e.g., walking at 1.4 m/s). Use a public dataset (e.g., CGM 2.4 Walk) for reproducibility.

- Preprocessing: Process raw .c3d files through a single, scripted pipeline (e.g., in Python) to generate consistent marker trajectories and ground reaction forces (GRFs). Archive this script.

- Parallel Processing:

- Path A (Commercial): Import the preprocessed data into the commercial software (e.g., BioSim-Core). Apply the recommended "Gait Analysis" template. Run analysis. Export joint angles, moments, and contact forces.

- Path B (Open-Source): Import the same preprocessed data into OpenSim. Apply a published, validated model (e.g., Gait2392). Run Inverse Kinematics, Inverse Dynamics, and Static Optimization. Export equivalent results.

- Quantitative Comparison: Calculate root-mean-square error (RMSE) and Pearson's correlation coefficient (r) for time-series data. Compute percentage difference for peak scalar values (see Table 1).

- Sensitivity Analysis: Vary a key input (e.g., GRF cutoff frequency) in the preprocessing step and observe the magnitude of change in outputs from both platforms. A black-box system may show disproportionately large or non-linear sensitivity.
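
The quantitative comparison step can be sketched in a few lines. This is a minimal, standard-library illustration; the function names are hypothetical, and it assumes both exports have already been resampled onto the same time base.

```python
import math

def rmse(a, b):
    """Root-mean-square error between two equally sampled time series."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))

def pearson_r(a, b):
    """Pearson correlation coefficient between two time series."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

def percent_difference(value, baseline):
    """Signed percentage difference of a peak value from the baseline."""
    return 100.0 * (value - baseline) / baseline
```

For example, `percent_difference(2780, 2450)` evaluates to roughly 13.5, which is how the "Difference from OpenSim Baseline" column in Table 1 is obtained.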

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Verification Experiments

| Item | Function in Verification | Example/Supplier |

|---|---|---|

| Standardized Biomechanics Dataset | Provides a ground-truth-like benchmark for comparing software outputs. | CGM 2.4 Walk Dataset, TU Delft Knee Model Data |

| Open-Source Simulation Platform | Acts as a transparent, auditable reference standard for biomechanical models. | OpenSim (Stanford) |

| Scripted Data Pipeline (e.g., Python/R) | Ensures identical preprocessing of raw data before it enters any black box, removing a major source of hidden variability. | Custom script using BTK, scikit-kinematics, R mocapr package |

| Parameter Sensitivity Toolkit | Systematically probes the black box's response to input changes, revealing hidden weights or thresholds. | SALib (Sensitivity Analysis Library in Python), OpenSim API scripting |

| Digital Lab Notebook | Critical for documenting every software setting, version, and unexpected behavior for audit trails and reproducibility. | LabArchives, ELN, or structured Markdown files in Git |

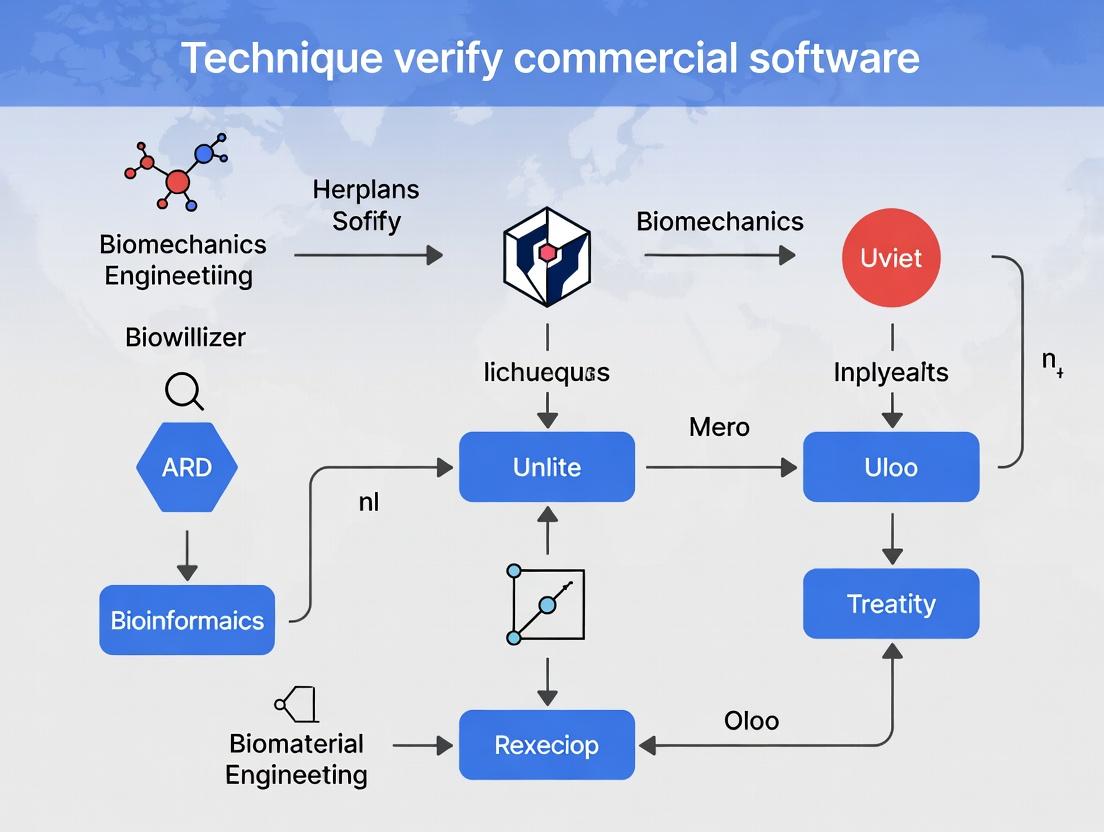

Visualizations

Title: Black-Box Verification Workflow

Title: Hidden Factors in Black-Box Output

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My simulation results are inconsistent between runs with identical inputs. How do I verify the computational model's reliability?

A: Inconsistency suggests a problem with solution convergence or a lack of numerical verification.

- Protocol: Execute a Parameter Sensitivity and Convergence Study.

- Mesh Convergence: Run your Finite Element Analysis (FEA) simulation with at least three progressively finer mesh densities. Calculate the key output variable (e.g., peak von Mises stress).

- Temporal Convergence: For dynamic simulations, repeat the analysis with progressively smaller time-step sizes.

- Data Analysis: Plot the results against element size (or element count) and time-step size. The solution should asymptotically approach a constant value.

- Verification Check: If the output varies by more than 5% between the two finest settings, the model is not converged and the results are not reliable. Continue refining the mesh (or reducing the time step) until the variation falls below 2%.
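
The convergence check can be automated once the key output has been recorded for each mesh. Below is a minimal sketch; the function names are hypothetical, the 5% default mirrors the threshold above, and the numbers in the usage note are illustrative.

```python
def percent_diff_from_finest(results):
    """results: list of (element_count, value) pairs in any order.

    Returns (element_count, percent difference from the finest mesh),
    ordered from coarsest to finest.
    """
    ordered = sorted(results, key=lambda r: r[0])
    finest_value = ordered[-1][1]
    return [(n, 100.0 * (v - finest_value) / finest_value) for n, v in ordered]

def is_converged(results, tolerance_pct=5.0):
    """True if the two finest meshes agree within tolerance_pct."""
    ordered = sorted(results, key=lambda r: r[0])
    second_finest, finest = ordered[-2][1], ordered[-1][1]
    return abs(second_finest - finest) / abs(finest) * 100.0 <= tolerance_pct
```

With three meshes such as `[(12450, 4.85), (28910, 4.42), (95600, 4.22)]` (peak stress in MPa), `is_converged` passes at the 5% level but fails at a stricter 2% tolerance, signalling that one more refinement is needed.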

Q2: How do I validate my software's prediction of knee joint contact forces against experimental data when my values are 20% higher?

A: A systematic discrepancy requires a validation assessment protocol.

- Protocol: Quantitative Validation Against Benchmarked Data.

- Source Benchmark Data: Obtain a canonical experimental dataset (e.g., "Grand Challenge Competition" data for gait).

- Replicate Conditions: Precisely replicate the experimental input conditions (kinematics, kinetics, anthropometrics) in your software.

- Compare Outputs: Run your simulation and compare outputs (contact forces, moments) to the experimental benchmark.

- Calculate Metrics: Compute quantitative metrics: Mean Absolute Error (MAE), Normalized Root Mean Square Deviation (NRMSD), and the coefficient of determination (R²). Document these in a validation report.
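
A minimal sketch of these three metrics follows. Two choices here are assumptions rather than something the protocol prescribes: NRMSD is normalized by the range of the experimental signal (normalizing by the mean or peak is also common), and R² is computed as 1 - SS_res/SS_tot with the experimental data as the reference.

```python
import math

def mae(simulated, experimental):
    """Mean absolute error between paired samples."""
    return sum(abs(s - e) for s, e in zip(simulated, experimental)) / len(experimental)

def nrmsd(simulated, experimental):
    """RMSD as a percentage of the experimental range (assumed normalization)."""
    rmsd = math.sqrt(
        sum((s - e) ** 2 for s, e in zip(simulated, experimental)) / len(experimental)
    )
    return 100.0 * rmsd / (max(experimental) - min(experimental))

def r_squared(simulated, experimental):
    """Coefficient of determination, treating the experiment as reference."""
    mean_exp = sum(experimental) / len(experimental)
    ss_res = sum((e - s) ** 2 for s, e in zip(simulated, experimental))
    ss_tot = sum((e - mean_exp) ** 2 for e in experimental)
    return 1.0 - ss_res / ss_tot
```

Whichever normalization is chosen, state it in the validation report so the NRMSD figures can be compared across studies.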

Q3: My in-silico drug efficacy prediction does not match our later in-vitro cell assay. Does this invalidate the model?

A: Not necessarily. It highlights a credibility gap that must be investigated.

- Protocol: Credibility Assessment Through Uncertainty Quantification (UQ).

- Identify Input Uncertainties: List all model inputs with associated uncertainty (e.g., ligand binding affinity ±15%, receptor density range).

- Propagate Uncertainty: Use Monte Carlo or similar sampling methods to propagate these input uncertainties through the model.

- Generate Prediction Interval: The model output becomes a distribution. Calculate the 95% prediction interval.

- Analysis: Determine if the in-vitro assay result falls within the model's 95% prediction interval. If it falls outside, the model's assumptions or structure may need revision.
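
The propagation and interval steps can be sketched with plain Monte Carlo sampling. Everything specific in this example is a labeled assumption: the receptor-occupancy formula is a toy stand-in for the real model, the ±15% uncertainty is treated as a uniform range, and the nominal values are illustrative.

```python
import random

def occupancy(ligand_conc, kd):
    """Toy model (hypothetical): fractional receptor occupancy."""
    return ligand_conc / (ligand_conc + kd)

def sample_inputs(rng):
    """Draw one input set; Kd is assumed uniform within +/-15% of nominal."""
    kd_nominal = 10.0  # nM, illustrative value
    return {
        "ligand_conc": 10.0,  # nM, illustrative value
        "kd": rng.uniform(0.85 * kd_nominal, 1.15 * kd_nominal),
    }

def prediction_interval(model, sampler, n_samples=10000, level=0.95, seed=0):
    """Empirical central interval of the model output under input uncertainty."""
    rng = random.Random(seed)  # fixed seed keeps the study reproducible
    outputs = sorted(model(**sampler(rng)) for _ in range(n_samples))
    tail = (1.0 - level) / 2.0
    low = outputs[int(tail * (n_samples - 1))]
    high = outputs[int((1.0 - tail) * (n_samples - 1))]
    return low, high

def within_interval(observed, interval):
    """True if the assay result lies inside the prediction interval."""
    return interval[0] <= observed <= interval[1]
```

Calling `within_interval(assay_value, prediction_interval(occupancy, sample_inputs))` then answers the final question of the protocol. For models with many uncertain inputs, a dedicated UQ library is likely a better fit than this hand-rolled sampler.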

Q4: What are the minimum documentation requirements to establish credibility for a published simulation study?

A: Adherence to community standards like the ASME V&V 40 standard is recommended. Document:

- Context of Use (CoU): A precise statement of the question the model is intended to answer.

- Verification Evidence: Mesh/convergence study tables and scripts.

- Validation Evidence: Comparison tables against specified benchmarks, with error metrics.

- UQ & Sensitivity Analysis: Ranking of influential parameters and their impact on output uncertainty.

Table 1: Example Results from a Mesh Convergence Verification Study (Peak Femoral Cartilage Stress)

| Mesh Element Size (mm) | Number of Elements | Peak Stress (MPa) | % Difference from Finest Mesh |

|---|---|---|---|

| 2.0 | 12,450 | 4.85 | +14.9% |

| 1.5 | 28,910 | 4.42 | +4.7% |

| 1.0 | 95,600 | 4.22 | (Reference) |

Table 2: Validation Metrics for Gait Simulation Against OpenCap Dataset

| Output Metric | Simulated Value | Experimental Value | Error (MAE) | NRMSD | R² |

|---|---|---|---|---|---|

| Knee Adduction Moment (Nm/kg) | 0.412 | 0.387 | 0.025 | 6.5% | 0.91 |

| Hip Contact Force (BW) | 3.85 | 3.92 | 0.07 | 1.8% | 0.96 |

Visualization: Core Concept Relationships

Title: Relationship Between Verification, Validation, & Credibility

Title: V&V Process Workflow for Model Credibility

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Resources for Biomechanics Software Verification & Validation

| Item Name / Category | Function & Purpose in V&V | Example / Source |

|---|---|---|

| Benchmark Experimental Datasets | Provides "ground truth" data for quantitative validation of model predictions. | OpenCap (public gait/EMG), Grand Challenge datasets, Physiome model repository. |

| Standardized Reporting Guidelines | Ensures complete, transparent documentation of methods and results for peer review. | ASME V&V 40 (computational modeling), TRIPOD (prediction models), MIASE (simulation experiments). |

| Uncertainty Quantification (UQ) Toolkits | Software libraries to propagate input uncertainties and assess output confidence intervals. | UQLab (MATLAB), ChaosPy (Python), Dakota (Sandia Labs). |

| Mesh Generation & Convergence Tools | Creates and refines computational geometries for spatial convergence verification. | ANSYS Meshing, Simulia/ABAQUS CAE, Gmsh (open-source). |

| Kinematic Motion Capture Systems | Generates high-fidelity input data for subject-specific movement simulations. | Vicon, Qualisys, OptiTrack, DeepLabCut (AI-based). |

| Force Platform & EMG Systems | Measures ground reaction forces and muscle activation for model input and validation. | AMTI or Kistler force plates, Delsys or Noraxon EMG. |

| Open-Source Simulation Platforms | Provides transparent, community-vetted code for method verification and replication. | OpenSim, FEBio, SOFA, Artisynth. |

Technical Support Center: Troubleshooting & FAQs

Q1: My motion capture data processed through Software X yields different joint angles than the same data processed through Software Y. Which result is "correct" for FDA submission?

- A: There is no single "correct" answer. The FDA requires a validated, reliable methodology. You must:

- Define a Gold Standard: Establish a reference, such as manual goniometer measurement on a physical phantom or data from a system with established validity.

- Perform a Validation Study: Conduct a protocol comparing both software outputs against the gold standard. Key metrics are in Table 1.

- Document Everything: The entire protocol, including software versions, settings (filter cut-offs, model definitions), and results, must be documented for submission. Consistency in software and settings is more critical than absolute agreement between packages.


Q2: Which ISO standard is most relevant for validating biomechanical measurement software, and how do I apply it?

- A: ISO 5725 (Accuracy: Trueness and Precision of Measurement Methods and Results) and the ISO/IEC 17025 (Testing and Calibration Laboratories) framework are foundational. For device-specific guidance, ISO 13485 (Medical Devices: Quality Management Systems) is key. Application involves:

- Design a precision experiment following ISO 5725 to quantify repeatability and reproducibility of your software's output (e.g., peak knee adduction moment) under defined conditions.

- Establish a Quality Management System (QMS) per ISO 13485 principles, which includes software validation procedures, change control, and operator training records.

- Reference compliance in your methods section, stating: "Software validation was performed in accordance with the principles of accuracy and precision outlined in ISO 5725."


Q3: A journal reviewer is asking for the "raw data and processing scripts" for my biomechanics study. What must I provide to comply?

- A: Journal requirements, often aligned with the FAIR Principles, are increasing. You should provide:

- De-identified Raw Data: 3D marker trajectories, force plate voltages, and EMG raw voltages in an open format (e.g., .c3d, .mat, .csv).

- A Detailed Processing Script: A documented script (e.g., in Python, MATLAB, or R) that includes all processing steps: gap filling, filtering, model scaling, inverse dynamics, and output calculation. The script must be annotated and version-controlled.

- Software Environment: A clear list of software dependencies (e.g., "Biomech Toolkit v2.1, MATLAB R2023a").

- Archiving: Deposit in a recognized repository (e.g., Figshare, Zenodo) and provide the DOI in the manuscript.
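
For the software environment item, a scripted snapshot is less error-prone than a hand-typed list. The following is a minimal sketch using only the Python standard library; the package names passed in are whatever your own pipeline depends on, and this captures Python packages only (versions of MATLAB or commercial tools still need to be recorded by hand).

```python
import json
import platform
import sys
from importlib import metadata

def capture_environment(packages):
    """Record interpreter, OS, and installed versions of the named packages."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }

def environment_as_json(packages):
    """Serialize the snapshot so it can be archived next to the data."""
    return json.dumps(capture_environment(packages), indent=2)
```

Saving the output of `environment_as_json([...])` alongside the processing script gives reviewers a concrete record of what was actually installed when the analysis ran.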


Table 1: Key Metrics for Software Comparison & Validation

| Metric | Formula / Description | Target Threshold (Example) | Purpose in Validation |

|---|---|---|---|

| Bias (Mean Error) | Mean(Software - Gold Standard) | ≤ 2° for joint angles | Measures systematic error. |

| Precision (SD of Error) | Standard Deviation(Software - Gold Standard) | ≤ 1.5° | Measures random error/repeatability. |

| Root Mean Square Error (RMSE) | √[Mean((Software - Gold Standard)²)] | ≤ 3° | Overall accuracy measure. |

| Intraclass Correlation (ICC) | ICC(3,1) or ICC(2,1) | > 0.90 (Excellent) | Measures reliability/agreement. |

| Coefficient of Multiple Correlation (CMC) | Standardized measure of waveform similarity | > 0.95 | Compares full kinematic/kinetic curves. |

Experimental Protocol: Validation of Biomechanics Software Output

Title: Protocol for Concurrent Validity Assessment of Inverse Dynamics Software.

Objective: To determine the concurrent validity of a commercial biomechanics software package against a reference method for calculating knee flexion/extension moments.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Acquisition: Collect synchronized motion capture (12 cameras, 200 Hz) and force plate (1200 Hz) data from N=10 participants performing 5 walking trials.

- Raw Data Archiving: Save raw .c3d files as the immutable primary dataset.

- Software A Processing: Process all trials in the commercial Software A using the manufacturer's recommended full-body model and default filter settings (Butterworth low-pass, 6 Hz). Export right knee sagittal plane moment.

- Software B (Reference) Processing: Process the same .c3d files in a trusted, open-source pipeline (e.g., OpenSim with a published model) using identical biomechanical conventions. Export the same kinetic variable.

- Data Alignment: Time-normalize all moment curves to 101 data points (0-100% stride). Align trials by event (e.g., heel strike).

- Statistical Analysis: For each trial, calculate Bias, Precision, RMSE, and CMC between Software A and Software B outputs across the entire stride. Report mean (SD) of these metrics across all trials and subjects.

- Documentation: Record every software parameter, model landmark, and processing decision in a lab validation log.
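
The data alignment and statistical analysis steps can be sketched as follows. This is a minimal illustration, assuming each trial has already been cropped to one stride (heel strike to heel strike) and that linear interpolation is acceptable for resampling; CMC is omitted because its formula depends on the variant chosen.

```python
import math
import statistics

def time_normalize(samples, n_points=101):
    """Linearly resample one stride to n_points (0-100% of the stride)."""
    last = len(samples) - 1
    resampled = []
    for i in range(n_points):
        pos = last * i / (n_points - 1)
        lo = int(math.floor(pos))
        hi = min(lo + 1, last)
        frac = pos - lo
        resampled.append(samples[lo] * (1.0 - frac) + samples[hi] * frac)
    return resampled

def agreement_metrics(software_a, software_b):
    """Bias, precision (SD of error), and RMSE of A relative to reference B."""
    errors = [a - b for a, b in zip(software_a, software_b)]
    return {
        "bias": statistics.fmean(errors),
        "precision": statistics.stdev(errors),
        "rmse": math.sqrt(statistics.fmean(e * e for e in errors)),
    }
```

Applying `time_normalize` to each exported knee moment curve and then `agreement_metrics` to each trial pair yields the per-trial values that are averaged across trials and subjects.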

Workflow for Software Verification & Regulatory Compliance

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Validation Studies |

|---|---|

| Calibrated Phantom | Physical object with known dimensions/angles to test static accuracy of motion capture system and software model scaling. |

| Open-Source Pipeline (e.g., OpenSim) | Provides a transparent, referenceable, and modifiable benchmark for comparing proprietary commercial software outputs. |

| Synchronized Multi-Modal DAQ | System to synchronously collect motion capture, force plate, and EMG data, forming the raw data bedrock for all software processing. |

| Standard Operating Procedure (SOP) Document | A detailed, step-by-step protocol for data collection, processing, and analysis to ensure repeatability and compliance (ISO 13485). |

| Data Repository Account (e.g., Zenodo) | A platform for archiving and sharing raw data and processing scripts as required by journals and funders for transparency. |

| Statistical Software (R, Python, MATLAB) | Used to calculate validation metrics (Bias, RMSE, CMC) and generate comparative plots between software outputs. |

Common Pitfalls in Musculoskeletal and Orthopedic Implant Simulations

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: Why does my finite element model of a tibial implant show unrealistically high stress concentrations at the bone-implant interface, even with applied physiological loading?

- Answer: This is often due to Pitfall #1: Oversimplified Contact Definitions. Using bonded or "tied" contact instead of frictional contact eliminates relative micromotion, leading to stress singularities. Verification Protocol: Run a comparative simulation. First, with your bonded contact. Second, with a frictional contact definition (coefficient ~0.3-0.5 for bone-metal). Compare peak von Mises stress in the proximal 5 mm of the bone. A drop of >50% with frictional contact indicates the initial result was an artifact.

FAQ 2: My simulation of a pedicle screw under flexion shows unexpectedly low stiffness. What could be wrong?

- Answer: This typically stems from Pitfall #2: Inadequate Material Property Assignment, specifically neglecting the difference between cortical and cancellous bone. Assigning homogeneous "bone" properties underestimates stiffness. Verification Protocol: Segment the vertebral model into distinct cortical shell and cancellous core regions. Assign validated elastic moduli (e.g., Cortical: 12-18 GPa, Cancellous: 100-900 MPa). Re-run the simulation. The construct stiffness should increase significantly (see Table 1).

FAQ 3: How can I verify that my mesh is sufficiently refined for a stress analysis around a cementless femoral stem?

- Answer: This addresses Pitfall #3: Lack of Convergent Mesh Sensitivity Analysis. Never trust a single mesh. Verification Protocol: Perform a mesh convergence study. Create 3-5 mesh versions with increasing element density (e.g., global seed size reductions of 20%). For each mesh, record the peak principal stress in a defined region of interest (e.g., the calcar region). Plot the result against element count. The mesh is considered converged when the change between successive refinements is <5%.

FAQ 4: My dynamic simulation of a total knee replacement shows numerical instability (divergence) during gait. How do I resolve this?

- Answer: This is frequently caused by Pitfall #4: Improper Dynamic/Explicit Solver Settings. Using arbitrarily high loading rates or mass scaling can introduce artificial inertial forces. Verification Protocol: Ensure the kinetic energy of the system remains below 5-10% of its internal energy throughout the simulation. If using mass scaling, limit the increase in model mass to <1%. Re-run with adjusted loading rate or scaling factor until energy ratios are acceptable and results stabilize.
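
The energy check can be applied to exported energy histories in a few lines. A minimal sketch, assuming the solver's kinetic and internal energy histories have been exported as two equal-length lists; the 5% default and the small-energy cutoff are adjustable assumptions.

```python
def energy_ratio_violations(kinetic, internal, limit=0.05, min_internal=1e-9):
    """Return indices of output frames where KE/IE exceeds the limit.

    Frames with negligible internal energy are skipped, because the ratio
    is ill-defined before the model carries any load.
    """
    violations = []
    for i, (ke, ie) in enumerate(zip(kinetic, internal)):
        if ie <= min_internal:
            continue
        if ke / ie > limit:
            violations.append(i)
    return violations
```

An empty result indicates the quasi-static assumption holds at the chosen limit; any returned indices point to the portion of the gait cycle where the loading rate or mass scaling should be revisited.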

Data Presentation

Table 1: Impact of Bone Material Differentiation on Pedicle Screw Construct Stiffness (Simulated 4-Point Bending)

| Bone Model Type | Cortical Modulus (GPa) | Cancellous Modulus (MPa) | Construct Stiffness (Nm/deg) | % Change from Homogeneous |

|---|---|---|---|---|

| Homogeneous | 1.5 | 1,500 | 2.1 | Baseline (0%) |

| Differentiated | 15.0 | 300.0 | 5.8 | +176% |

Table 2: Mesh Convergence Study for Femoral Stem Micromotion (Example Data)

| Mesh Refinement Level | Global Element Size (mm) | Number of Elements | Peak Micromotion (µm) | % Difference from Previous |

|---|---|---|---|---|

| Coarse | 3.0 | 45,200 | 85 | - |

| Medium | 2.0 | 98,750 | 72 | -15.3% |

| Fine | 1.5 | 215,000 | 68 | -5.6% |

| Extra Fine | 1.0 | 520,000 | 67 | -1.5% |

Experimental Protocols

Protocol A: Verification of Contact Formulation in Implant-Bone Interface

- Model Preparation: Develop a simplified axisymmetric or 3D model of a press-fit cylindrical implant in a bone block.

- Material Assignment: Assign linear elastic, isotropic properties to both (e.g., Ti-6Al-4V for implant, cortical bone for block).

- Contact Definitions: Duplicate the model. In Model 1, define a "Bonded" contact. In Model 2, define a "Frictional" contact with a coefficient of 0.4.

- Loading & Boundary Conditions: Apply a uniform displacement or pressure to the implant head to simulate insertion/loading. Fix the base of the bone block.

- Analysis: Solve both models using a static, implicit solver.

- Output Metric: Extract and compare the contact pressure distribution and peak compressive stress in the bone adjacent to the implant.

Protocol B: Convergence Study for Periprosthetic Fracture Risk Assessment

- Baseline Mesh: Generate an initial tetrahedral mesh around a hip implant using your software's default settings.

- Refinement Strategy: Systematically refine the mesh in the proximal femur region using a "sphere of influence" control. Create at least 3 subsequent models with element sizes reduced by ~30% each step.

- Consistent Loading: Apply identical boundary conditions and joint reaction forces (from gait analysis) to all models.

- Convergence Criterion: Monitor the maximum principal strain (εmax) in a critical zone (e.g., the lateral cortex distal to the stem tip).

- Termination: Continue refinement until the change in εmax between two successive models is ≤ 2%. The penultimate mesh is considered converged.
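
The termination rule can be expressed as a small helper that walks the refinement sequence. This is a sketch under the protocol's own rule (stop at the first successive pair within tolerance and accept the coarser mesh of that pair); the labels and strain values in the usage note are hypothetical.

```python
def first_converged_mesh(levels, tolerance_pct=2.0):
    """levels: list of (label, value) ordered from coarse to fine.

    Returns the label of the coarser mesh in the first successive pair
    whose relative change is within tolerance_pct, or None if refinement
    has not yet converged.
    """
    for (prev_label, prev_value), (_, value) in zip(levels, levels[1:]):
        change_pct = abs(value - prev_value) / abs(prev_value) * 100.0
        if change_pct <= tolerance_pct:
            return prev_label
    return None
```

For a hypothetical sequence `[("M1", 1850.0), ("M2", 2010.0), ("M3", 2070.0), ("M4", 2085.0)]` of peak strains in microstrain, the M3 to M4 step is the first within 2%, so `"M3"` is returned as the converged mesh.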

Mandatory Visualization

Title: Common Pitfall Decision Tree for Implant Simulation

The Scientist's Toolkit: Research Reagent Solutions for Verification

Table 3: Essential Materials and Digital Tools for Simulation Verification

| Item/Reagent | Function in Verification Context |

|---|---|

| µCT Scan Data | Provides high-resolution 3D geometry of bone for accurate model reconstruction, crucial for capturing trabecular architecture. |

| Material Property Database (e.g., PubMed/OpenSim) | A repository of peer-reviewed, species- and site-specific bone material properties (elastic modulus, Poisson's ratio, density-elasticity relationships). |

| Mesh Convergence Script (Python/MATLAB) | Automated script to batch-generate, run, and compare results from multiple mesh refinements, ensuring efficiency and consistency. |

| Energy Ratio Monitor (Built-in in LS-DYNA/Abaqus) | A key output metric in explicit dynamics simulations to ensure inertial forces do not dominate, validating quasi-static assumptions. |

| Synthetic Bone Phantoms (e.g., Sawbones) | Physical models with standardized mechanical properties used for in vitro validation of simulation predictions (e.g., strain gauges, mechanical testing). |

| Benchmark Model Repository (e.g., SIMULIA Community) | A collection of verified, simple models (e.g., patch tests, beam bending) to test fundamental software and solver settings before complex implant modeling. |

Establishing a Verification Mindset in the R&D Workflow

When verifying results from commercial biomechanics software, a robust verification mindset is critical. This technical support center addresses common challenges.

Troubleshooting Guides & FAQs

Q1: My simulation of cell membrane deformation under shear stress in Software X shows a 300% higher strain value than my manual calculation from high-speed microscopy data. Where should I start troubleshooting?

A: Begin with input parameter verification. Commercial software often uses proprietary unit conversions or default material properties. Isolate a single-cell case.

- Protocol: Export the software's exact mesh geometry. Using your experimental image (e.g., from a parallel plate flow chamber), verify that the input boundary conditions (shear stress in Pa) match the calibrated flow rate. Check the assumed membrane elastic modulus (e.g., default may be 10 kPa vs. your cell line's measured 2 kPa).

- Action: Create a simplified analytical model (e.g., treating the cell as a standard linear solid) for the same condition. Run the software simulation with this simplified geometry and your manually entered, verified material properties. Compare outputs to the analytical model first.

Q2: After updating biomechanics software, my established protocol for calculating traction forces in 3D matrices now yields forces 50% lower. How do I determine if this is a bug or a correction?

A: This requires a benchmark against a known standard.

- Protocol: Utilize the "bead displacement" method with a standardized synthetic hydrogel (e.g., Polyacrylamide of known, published Young's modulus, such as 8 kPa). Embed fluorescent beads, apply a known point force via a calibrated microneedle, and measure bead displacement microscopically.

- Action: Process this same displacement field dataset in both the previous and new software versions, using identical algorithmic parameters (e.g., Fourier Transform Traction Cytometry regularization parameter). The results can pinpoint the source of discrepancy.

Q3: My FEA model of bone-implant osseointegration shows perfect bonding, but my in vitro assays consistently show micromotion. What key verification steps am I likely missing?

A: The discrepancy often lies in the biological interface definition.

- Protocol: Perform a sensitivity analysis on the interfacial property settings in the software. The "perfect bonding" assumption uses an artificially high interfacial stiffness.

- Action: Design an experiment to measure the effective interfacial stiffness early in osteogenesis. Use a bioreactor with live imaging to correlate applied cyclic load with micromotion. Iteratively adjust the software's cohesive zone model parameters until the simulation matches the in vitro micromotion range. Verify with a separate, blinded dataset.

Key Quantitative Data Comparison

Table 1: Comparison of Traction Force Calculation Algorithms in Commercial Software

| Software Module | Algorithm Type | Required Input | Key Parameter (Default) | Known Sensitivity | Recommended Verification Assay |

|---|---|---|---|---|---|

| BioTrac v3.1 | Fourier Transform Traction Cytometry (FTTC) | Displacement field, Gel Stiffness | Regularization λ (1e-9) | High: λ variation can change force magnitude by 80% | Calibrated microneedle on PAA gel. |

| CellForce Pro | Boundary Element Method (BEM) | Displacement field, Gel Stiffness, Cell Shape | Mesh Density (Medium) | Medium: Over-refinement can cause noise amplification. | Silicone membrane wrinkling assay. |

| DyanaSoft | Finite Element Method (FEM) | Full 3D Material Model, Geometry | Element Type (Linear Tetrahedral) | Low-Medium: More dependent on accurate constitutive model. | 3D printed deformable scaffold with fiducial markers. |

Table 2: Common Pitfalls in Input Parameters for Membrane Biomechanics Simulations

| Parameter | Typical Software Default | Experimental Range (Mammalian Cells) | Impact on Strain Output | Verification Technique |

|---|---|---|---|---|

| Membrane Elastic Modulus | 10 kPa | 1 - 5 kPa (e.g., chondrocytes) | Inversely proportional at a given stress. 2x error in input → ~2x error in strain. | Atomic Force Microscopy (AFM) indentation on isolated cell. |

| Cytoplasmic Viscosity | 10 Pa·s | 0.1 - 100 Pa·s (highly activity-dependent) | Affects dynamic response; steady-state less sensitive. | Optical magnetic twisting cytometry (OMTC). |

| Cell-Adhesion Energy | 1 mJ/m² | 0.01 - 0.5 mJ/m² (for protein-coated surfaces) | Critical for detachment predictions; minor for deformation. | Micropipette aspiration or single-cell force spectroscopy. |

Experimental Protocols for Verification

Protocol 1: Verification of Stress-Strain Outputs in FEA Software

Objective: To benchmark the nonlinear solver of a commercial FEA package against a standardized physical test.

Materials: As per "The Scientist's Toolkit" below.

Methodology:

- Fabricate a polydimethylsiloxane (PDMS) block with a known, simple geometry (e.g., a 10 × 10 × 20 mm cuboid). Precisely measure its dimensions.

- Perform a uniaxial compression test using a calibrated micromechanical tester. Record force-displacement data at a crosshead displacement rate of 0.1 mm/min. Convert to engineering stress-strain. Repeat (n=5).

- In the software, create an identical 3D geometry. Input an isotropic Neo-Hookean material model with parameters characterized from the step 2 data.

- Apply the same boundary and loading conditions as the physical test.

- Run the simulation and export the stress-strain data for the central region of the geometry.

- Comparison: Calculate the Root Mean Square Error (RMSE) between the simulated and experimental stress values across the strain range. An RMSE >10% of the max stress indicates need for solver or parameter adjustment.
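
The comparison step can be scripted so that the same acceptance rule is applied to every run. The sketch below is a minimal illustration, assuming the simulated and experimental stresses have already been sampled at the same strain levels and that "max stress" means the maximum experimental stress. The function name `normalized_rmse` and the sample values are hypothetical.

```python
import math

def normalized_rmse(sim_stress, exp_stress):
    """RMSE between paired stress samples, as a fraction of the max experimental stress."""
    if len(sim_stress) != len(exp_stress) or not exp_stress:
        raise ValueError("need two equal-length, non-empty series")
    mse = sum((s - e) ** 2 for s, e in zip(sim_stress, exp_stress)) / len(exp_stress)
    return math.sqrt(mse) / max(abs(e) for e in exp_stress)

# Hypothetical stresses (kPa) sampled at identical strain levels
exp = [0.0, 10.0, 21.0, 33.0, 46.0]
sim = [0.0, 11.0, 22.0, 35.0, 50.0]

nrmse = normalized_rmse(sim, exp)
verdict = "adjust solver or parameters" if nrmse > 0.10 else "acceptable"
print(f"Normalized RMSE = {nrmse:.1%} ({verdict})")
```

If the two curves are sampled at different strain levels, interpolate one onto the other before calling the function.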

Protocol 2: Calibrating a Live-Cell Microrheology Module

Objective: To verify the accuracy of intracellular particle tracking and complex modulus (G*) calculation.

Materials: Fluorescent carboxylated polystyrene beads (0.5µm), electroporation system, cell culture reagents.

Methodology:

- Introduce beads into cells via electroporation (optimized for >70% viability). Incubate for 4 hours for cytoskeletal incorporation.

- Mount cells on a heated stage (37°C). Acquire high-frame-rate (e.g., 100 fps) Brownian motion videos of multiple beads per cell using TIRF microscopy.

- Track bead movement using the software's internal tracker and a verified open-source tracker (e.g., TrackPy) for the same video.

- Calculate the Mean Squared Displacement (MSD) from both tracking outputs.

- Apply the Generalized Stokes-Einstein Relation (GSER) to compute G*(ω) from each MSD.

- Verification: Plot G' (storage) and G'' (loss) moduli from both methods. Use a paired t-test on G' values at 1 Hz frequency. A statistically significant difference (p<0.05) suggests an issue with the commercial tracker's localization algorithm.
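
Before comparing moduli, it can help to compare the MSD curves from the two trackers directly, since any tracking discrepancy shows up there first. The following is a minimal sketch of a time-averaged MSD for a single 2D track. The function names and the two short tracks are hypothetical, and the GSER step is deliberately not implemented here.

```python
def mean_squared_displacement(track, max_lag):
    """Time-averaged MSD of one 2D track [(x, y), ...] for lags 1..max_lag (in frames)."""
    msd = []
    for lag in range(1, max_lag + 1):
        squared = [
            (track[i + lag][0] - track[i][0]) ** 2 + (track[i + lag][1] - track[i][1]) ** 2
            for i in range(len(track) - lag)
        ]
        msd.append(sum(squared) / len(squared))
    return msd

def max_relative_difference(msd_a, msd_b):
    """Largest relative deviation of curve A from reference curve B across all lags."""
    return max(abs(a - b) / b for a, b in zip(msd_a, msd_b))

# Hypothetical bead positions (pixels) for the same bead from two trackers
track_reference = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
track_commercial = [(0.0, 0.0), (1.1, 0.0), (2.0, 0.0), (3.1, 0.0)]

msd_ref = mean_squared_displacement(track_reference, 3)
msd_com = mean_squared_displacement(track_commercial, 3)
print("Max relative MSD difference:", max_relative_difference(msd_com, msd_ref))
```

Real tracks are far longer and the MSD should be averaged over many beads; the short tracks above only show the mechanics of the calculation.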

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Biomechanics Verification Assays

| Item | Function in Verification | Example Product/Catalog # | Critical Specification |

|---|---|---|---|

| Tunable Synthetic Hydrogel | Provides a substrate with defined, isotropic mechanical properties for 2D/3D traction force microscopy verification. | Merck, Polyacrylamide Kit (A7482); or Cytoskeleton, Hydrogel Kit (AK02). | Adjustable elastic modulus (0.5-50 kPa), functionalization for cell adhesion. |

| Fluorescent Microspheres | Serve as fiducial markers for displacement tracking in gels or intracellularly for microrheology. | Thermo Fisher, FluoSpheres (F8803, F8815). | Size (0.2-1.0 µm), excitation/emission spectra, surface chemistry (carboxylated for embedding). |

| Calibrated Microneedles | Apply known, precise physical forces (nN-µN range) for software force calculation calibration. | Eppendorf, FemtoTip (5242957001) mounted on a micromanipulator. | Tip diameter, spring constant (calibrated via thermal fluctuation method). |

| Reference Material Samples | Used for validating FEA solver accuracy with known mechanical responses. | Instron, Polymer Calibration Samples (e.g., Polyurethane standard). | Certified modulus and stress-strain curve provided by manufacturer. |

| Live-Cell Membrane Dye | Visualize cell boundary for accurate geometry input into deformation simulations. | Thermo Fisher, CellMask Deep Red (C10046). | Low cytotoxicity, stable labeling, distinct channel from fluorescent probes. |

Building Your Verification Toolkit: Practical Steps and Protocols

Software for Biomechanics Analysis: Key Verification Parameters

The verification of commercial biomechanics software results in drug development research requires a systematic audit of software capabilities against known benchmarks.

Table: Key Software Capabilities & Verification Benchmarks

| Software Package | Primary Function | Known Limit | Benchmark for Verification | Typical Error Range (vs. Ground Truth) |

|---|---|---|---|---|

| Simulia/Abaqus | Finite Element Analysis (FEA) of tissues | Material nonlinearity past 15% strain | Analytical solution for isotropic cylinder under compression | ≤3.5% stress error |

| OpenSim | Musculoskeletal modeling & simulation | Tendon slack length calibration | Comparison to motion capture & force plate data | Joint moment error: 5-10% |

| FEBio | Biomechanics FEA (open-source) | Poroelastic time-step convergence | Verifiable confined compression (Mow et al.) | ≤2% pore pressure error |

| ANSYS Mechanical | Structural & fluid-structure interaction | Contact algorithm stability | Patch test for element validation | ≤1% displacement error |

| COMSOL Multiphysics | Coupled physics (electro-mechano-chemical) | Solver convergence for coupled phenomena | Comparison to published experimental data (Butler et al.) | Varies by coupling strength (2-8%) |

Troubleshooting Guides & FAQs

Q1: My FEA simulation of cartilage indentation shows an abrupt force drop at 12% strain. Is this a software bug or a modeling error? A: This is likely a modeling and solver limit issue, not a core software bug. First, check your material model. Many commercial packages default to linear isotropic elasticity, while cartilage is viscohyperelastic. Actionable Protocol: 1) Switch to a verified hyperelastic model (e.g., Neo-Hookean, Mooney-Rivlin). 2) Reduce your initial time step/increment size by 50%. 3) Enable the "Large Displacement" flag. 4) Re-run and compare the force-strain curve to the classic Hayes et al. (1972) indentation data. If the discontinuity persists, it is a solver contact instability—refine the mesh at the indenter contact region.

Q2: When comparing OpenSim gait simulation results to lab force plates, joint moments differ by over 15%. How do I verify what's wrong? A: A discrepancy >15% exceeds acceptable validation limits and requires a stepwise audit. Actionable Protocol: 1) Input Verification: Ensure your motion capture data is filtered correctly (e.g., low-pass Butterworth at 6 Hz). 2) Model Verification: Check that the model's anthropometrics match your subject, and scale the model precisely. 3) Inverse Dynamics Tool Verification: In OpenSim, run the provided "testInvDynamics" tool on the sample "gait2354" model. If this passes, your installation is correct. 4) Ground Reaction Force (GRF) Alignment: A misaligned GRF application point is the most common error. Visually verify in the GUI that the GRF vector originates at the center of pressure under the model's foot.

Q3: My cell contraction analysis software (e.g., ImageJ plugin) gives different cytoskeletal strain values upon re-analysis of the same video. How do I establish a reliable baseline? A: This indicates poor repeatability, often from inconsistent parameter settings. Actionable Protocol for Verification: 1) Documentation Audit: Fully review the plugin's documentation for all thresholding and optical flow parameters. 2) Create a Synthetic Benchmark: Generate a known-displacement synthetic video using MATLAB/Python (e.g., a circle moving 5 pixels). Process this with your plugin. 3) Quantify Error: Calculate the Root Mean Square Error (RMSE) between the plugin's output and the known displacement. If RMSE > 0.5 pixels, the algorithm is unstable. 4) Parameter Locking: Document the exact parameter set that yields the correct result on the synthetic benchmark and use only that set for all experimental videos.
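
The synthetic benchmark in steps 2 and 3 can be prototyped without any imaging library. The sketch below is an illustration under stated assumptions: it builds binary frames of a circle that moves 5 pixels per frame, and uses a simple intensity centroid as a stand-in for the plugin's tracker. In practice the frames would be saved as a video and the plugin's own output substituted for `centroid_x`. All names are hypothetical.

```python
import math

def make_frame(size, cx, cy, radius):
    """Binary image (list of rows) containing a filled circle centred at (cx, cy)."""
    return [[1 if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2 else 0
             for x in range(size)]
            for y in range(size)]

def centroid_x(frame):
    """x-coordinate of the intensity centroid; a stand-in for the plugin's tracker."""
    total = 0
    weighted = 0
    for row in frame:
        for x, value in enumerate(row):
            total += value
            weighted += value * x
    return weighted / total

def rmse(measured, expected):
    """Root mean square error between two equal-length sequences."""
    return math.sqrt(sum((m - e) ** 2 for m, e in zip(measured, expected)) / len(expected))

KNOWN_SHIFT = 5  # pixels per frame, the ground-truth displacement
centres = [20 + KNOWN_SHIFT * i for i in range(4)]
frames = [make_frame(64, cx, 32, 6) for cx in centres]

positions = [centroid_x(frame) for frame in frames]
measured_shifts = [b - a for a, b in zip(positions, positions[1:])]
error = rmse(measured_shifts, [KNOWN_SHIFT] * len(measured_shifts))
print(f"RMSE = {error:.3f} px", "(pass)" if error <= 0.5 else "(fail)")
```

A noise-free binary circle is the easiest possible case; adding blur or noise to the frames gives a more demanding benchmark for the same pass/fail rule.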

Experimental Protocol: Verification of a Finite Element Solver for Bone Micromechanics

Objective: To verify the accuracy of a commercial FEA software's elastic solution for a µCT-derived trabecular bone model against experimental mechanical testing.

Materials & Reagents:

- Human trabecular bone core: Ø5mm, from femoral head.

- µCT Scanner: (e.g., SkyScan 1272) for 3D geometry acquisition.

- Materials Testing System: (e.g., Instron 5848) for unconfined compression.

- Phosphate-Buffered Saline (PBS): To keep specimen hydrated.

- Commercial FEA Software: (e.g., ANSYS, Abaqus) with µCT image import module.

Methodology:

- Sample Preparation & Imaging: Scan bone core at 10µm isotropic resolution in µCT. Reconstruct 3D image.

- Experimental Benchmark: Perform unconfined compression test at 0.01%/s strain rate in PBS to 1% strain. Record stress-strain curve for elastic modulus (E_exp).

- FE Model Generation:

- Import µCT image stack into FEA software.

- Threshold and convert to a 3D tetrahedral mesh (element size ~20µm).

- Assign material properties: Isotropic linear elasticity, with initial guess E_guess=1 GPa, Poisson's ratio ν=0.3.

- Apply boundary conditions matching experiment: Fix bottom, displace top surface.

- Solver Execution & Verification: Run linear static analysis. Extract reaction force, compute apparent modulus (E_FEA).

- Iterative Calibration & Verification: Adjust E_guess in the model until E_FEA matches E_exp. The final calibrated E_guess is the back-calculated tissue-level modulus. Document all solver settings (element type, solver type, convergence tolerance).
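
For the linear static case described here, with a single homogeneous tissue modulus and a fixed Poisson's ratio, the apparent modulus scales in direct proportion to the assigned tissue modulus. The calibration can therefore be done in one step rather than by repeated solves. The sketch below illustrates that scaling; the function name and the numerical values are hypothetical, and the shortcut no longer holds once the model is nonlinear or uses heterogeneous properties.

```python
def backcalculate_tissue_modulus(e_guess, e_fea, e_exp):
    """Scale the assumed tissue modulus so the FE apparent modulus matches experiment.

    Valid only for linear elasticity with one homogeneous tissue modulus,
    where apparent modulus is proportional to tissue modulus.
    """
    if e_guess <= 0 or e_fea <= 0:
        raise ValueError("moduli must be positive")
    return e_guess * e_exp / e_fea

# Hypothetical values, all in GPa
e_guess = 1.0   # tissue modulus assigned in the first run
e_fea = 0.05    # apparent modulus computed from the FE reaction force
e_exp = 0.40    # apparent modulus measured in the compression test

e_tissue = backcalculate_tissue_modulus(e_guess, e_fea, e_exp)
print(f"Back-calculated tissue modulus: {e_tissue:.2f} GPa")
```

Re-running the model once with the back-calculated value is a cheap check that E_FEA now reproduces E_exp.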

The Scientist's Toolkit: Research Reagent Solutions for Mechanobiology Assays

Table: Essential Reagents for Validating Software-Predicted Mechanobiological Effects

| Reagent/Material | Function in Verification | Example Product/Catalog # |

|---|---|---|

| Cytochalasin D | Actin cytoskeleton disruptor; used to verify models predicting actin's role in cellular stiffness. | Sigma-Aldrich, C8273 |

| Y-27632 (ROCK Inhibitor) | Inhibits Rho-associated kinase; validates model predictions of stress fiber contractility in cell migration. | Tocris Bioscience, 1254 |

| Fluorescent Gelatin (DQ-Gelatin) | Proteolysis substrate; validates software predictions of pericellular protease activity under shear stress. | Thermo Fisher Scientific, D12060 |

| TRITC-Phalloidin | Stains F-actin; enables quantitative comparison of software-predicted vs. actual stress fiber alignment. | Sigma-Aldrich, P1951 |

| Polyacrylamide Hydrogels of Defined Stiffness | Provides substrates with known elastic modulus (0.1-50 kPa) to verify cell mechanics model predictions. | BioVision, Inc., or in-house fabrication. |

| Microsphere Traction Force Beads (Red Fluorescent) | Embedded in hydrogels to measure cellular traction forces; ground truth for FEA-based force estimation. | FluoSpheres, F8810 |

Visualizations

Title: Software Verification Workflow for Biomechanics

Title: Mechanotransduction Pathway Validated by Software & Reagents

Troubleshooting Guides & FAQs

Q1: When verifying commercial software results for a simple tendon force model, my closed-form solution for stress (Force/Area) deviates >5% from the software's FEA output. What are the primary checkpoints?

A1: Follow this structured troubleshooting protocol:

- Boundary Condition Alignment: Ensure the analytical model's fixed and free boundaries exactly match the software's implicit settings. Commercial solvers often apply automatic constraints.

- Material Model Consistency: Verify the software is set to a linear-elastic, isotropic material with the same Young's modulus (E) and Poisson's ratio (ν) used in your hand calculation. Defaults may be different.

- Geometry Simplification: Confirm the software's geometry truly represents the simplified cross-sectional area (A) used in your σ=F/A calculation. Check for unintended fillets or tapered sections in the CAD import.

Q2: I derived the closed-form beam deflection equation, but my software's dynamic simulation of the same cantilever beam under a static load shows different results. How do I diagnose this?

A2: This indicates a dynamic solver setting issue. Implement this experimental verification protocol:

- Step 1: Ensure all damping coefficients (Rayleigh damping) in the software are set to zero.

- Step 2: Set the solver to "Static" or "Quasi-static." If using a dynamic solver, apply the load linearly over a long duration (e.g., 10 seconds) to negate inertial effects.

- Step 3: Compare the software's final equilibrium state deflection directly with your analytical solution, y_max = (P * L³) / (3 * E * I).
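
A short script makes the Step 3 comparison repeatable. This is a sketch under stated assumptions: a rectangular cross-section (so I = b·h³/12), small deflections (Euler-Bernoulli theory), and hypothetical values for the load, geometry, modulus and the software result.

```python
def rectangular_inertia(width_m, height_m):
    """Second moment of area of a rectangular section about its bending axis (m^4)."""
    return width_m * height_m ** 3 / 12.0

def cantilever_tip_deflection(load_n, length_m, modulus_pa, inertia_m4):
    """Tip deflection of an end-loaded cantilever: y_max = P L^3 / (3 E I)."""
    return load_n * length_m ** 3 / (3.0 * modulus_pa * inertia_m4)

def percent_agreement(software_value, analytical_value):
    """100% means identical; lower values mean a larger relative deviation."""
    return 100.0 * (1.0 - abs(software_value - analytical_value) / abs(analytical_value))

# Hypothetical beam: 10 N tip load, 100 mm long, 10 x 5 mm section, E = 17 GPa
inertia = rectangular_inertia(0.010, 0.005)
y_analytical = cantilever_tip_deflection(10.0, 0.100, 17e9, inertia)
y_software = 1.86e-3  # hypothetical final equilibrium deflection from the solver (m)

agreement = percent_agreement(y_software, y_analytical)
print(f"Analytical: {y_analytical * 1e3:.3f} mm, agreement: {agreement:.1f}%")
```

Keeping every input in SI base units (N, m, Pa) removes one common source of constant-factor discrepancies.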

Q3: For verifying a joint reaction force calculation in a static posture, my free-body diagram solution conflicts with the software's inverse dynamics output. What is the systematic verification pathway?

A3: Conflict often arises from input data mismatch. Execute this calibration experiment:

- Input Synchronization: Create a table in your software that explicitly lists all segment masses, center of mass locations, and lengths from your analytical model.

- Force & Moment Validation: Isolate a single segment (e.g., forearm). Use the software's tool to output the net force and moment at the joint for a static frame. Compare these vectors directly to your free-body diagram calculations.

Table 1: Common Analytical Solutions for Verifying Software Results

| Biomechanical Model | Analytical Solution Formula | Key Parameters to Match in Software | Expected Agreement |

|---|---|---|---|

| Uniaxial Tendon Stress | σ = F / A | Cross-sectional Area (A), Applied Force (F) | >99% |

| Cantilever Beam Deflection | y_max = (P * L³) / (3 * E * I) | Load (P), Length (L), Modulus (E), 2nd Moment of Area (I) | >98% |

| Two-Segment Static Equilibrium | ΣM_joint = 0, ΣF = 0 | Segment Mass, CoM Position, Gravity Vector | >95% |

| Linear Spring System | F = k * Δx | Spring Stiffness (k), Displacement (Δx) | >99.5% |

Table 2: Troubleshooting Checklist: Software vs. Closed-Form Discrepancy

| Symptom | Likely Cause | Verification Experiment |

|---|---|---|

| Stress values off by a constant multiplier | Units mismatch (MPa vs kPa, mm² vs m²). | Run a unit calibration test with a 1N load on a 1mm² area. |

| Deflection shape matches, magnitude is off | Incorrect material property (E) or inertia (I). | Model a standard beam with published E and I; solve for tip deflection. |

| Reaction forces are present when none are expected | Unintended software constraints (e.g., fixed joint). | Model a free body in space; reaction forces should be zero. |

| Dynamic result doesn't converge to static solution | Excessive damping or inertial effects in static load. | Follow Protocol A2, Step 2 (apply load slowly). |

Experimental Verification Protocols

Protocol A: Verification of a Linear Elastic Uniaxial Test Simulation

- Objective: Confirm commercial FEA software matches Hooke's Law (σ = Eε) for a simple bar.

- Materials: Software (e.g., Abaqus, ANSYS, AnyBody); scripting interface.

- Method: a. Create a 3D cylindrical bar (L=100mm, r=5mm). Mesh with 20-node hexahedral elements. b. Assign linear elastic material: E=500 MPa, ν=0.3. c. Apply a fixed constraint to one face. Apply a tensile force F=1000 N to the opposite face. d. Run a static linear analysis. e. Extract average axial stress and strain from the central element group. f. Compare to analytical: σ = F/(π*r²)=12.73 MPa, ε = σ/E=0.02546.

- Acceptance Criterion: Software results within 1% of analytical values.
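
The hand calculation and the 1% acceptance check in Protocol A can be captured in a few lines. The sketch below reproduces the analytical values from step f; the software results passed to `within_tolerance` are hypothetical placeholders for values exported from the solver.

```python
import math

FORCE_N = 1000.0      # applied tensile force
RADIUS_MM = 5.0       # bar radius
MODULUS_MPA = 500.0   # Young's modulus

area_mm2 = math.pi * RADIUS_MM ** 2
sigma_mpa = FORCE_N / area_mm2        # N/mm^2 is numerically equal to MPa
epsilon = sigma_mpa / MODULUS_MPA     # Hooke's law

def within_tolerance(software_value, analytical_value, tolerance=0.01):
    """True if the software value is within the given relative tolerance."""
    return abs(software_value - analytical_value) <= tolerance * abs(analytical_value)

# Hypothetical values extracted from the central element group
software_sigma = 12.70
software_epsilon = 0.02541

print(f"sigma = {sigma_mpa:.2f} MPa, epsilon = {epsilon:.5f}")
print("stress OK:", within_tolerance(software_sigma, sigma_mpa))
print("strain OK:", within_tolerance(software_epsilon, epsilon))
```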

Protocol B: Verification of Segmental Static Equilibrium in a Multi-Body System

- Objective: Validate that software inverse dynamics computes correct joint moments for a static, known posture.

- Materials: Biomechanics software (OpenSim, AnyBody); subject-specific model.

- Method: a. Simplify model to a two-link system (thigh, shank) in a seated 90° knee-flexion posture. b. Input exact segment masses and CoM locations from anthropometric tables. c. Lock all joints in the static posture. Run an inverse static analysis. d. Output the knee joint reaction moment. e. Calculate moment manually using free-body diagram of the shank: M_knee = m_shank * g * d, where d is horizontal distance from knee to shank CoM.

- Acceptance Criterion: Computed joint moment from software matches hand calculation within 2%.

Visualizations

Title: Troubleshooting Path for Solution Discrepancy

Title: Verification Workflow: Analytical vs. Software Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Verification Studies

| Item/Reagent | Function in Verification | Example/Specification |

|---|---|---|

| Standardized Geometric Phantoms | Provides known geometry (length, area, volume) to test software's mesh generation and basic mechanics. | Idealized CAD files: cylinder, beam, sphere with published dimensions. |

| Reference Material Properties Database | Supplies standardized material constants (E, ν, density) for input into both analytical and software models. | Published values for cortical bone (E=17 GPa), rubber (E=0.01 GPa), tendon (E=1.2 GPa). |

| Benchmark Problem Sets | Offers pre-solved, complex analytical solutions for non-trivial biomechanics problems. | "Nafoletto" foot model static equilibrium problem; "Felix" knee contact force challenge. |

| Scripting Interface (API) Access | Enables automated parameter sweeps and direct extraction of raw output data for comparison. | Python scripts for Abaqus, MATLAB interface for OpenSim/AnyBody. |

| Unit Conversion & Dimensional Analysis Tool | Prevents fundamental errors by ensuring consistency across all model inputs. | Software like Mathcad or a custom spreadsheet with SI unit enforcement. |

Troubleshooting Guides & FAQs

Q1: My simulation results change significantly with each mesh refinement. How do I determine if I've achieved mesh independence? A: This indicates you are likely in a pre-convergence zone. Implement a systematic mesh sensitivity study:

- Start with a coarse base mesh.

- Refine globally (or in regions of high stress/strain gradient) by ~20-30% element size reduction per step.

- Monitor key output variables (e.g., max principal stress, strain energy, displacement at a critical point).

- Convergence is typically declared when the change in these outputs between successive refinements is less than a predetermined threshold (e.g., 2-5%). Use a table to track progress.

Q2: The solver aborts with "Solution does not converge" errors. What are the first steps to address this? A: Solver instability often stems from model definition or numerical issues.

- Check Material Models: Ensure material properties are physically realistic and units are consistent. Hyperelastic or plastic models may require stable tangent matrices.

- Review Contact Definitions: Penetrations or overly aggressive contact stiffness can cause divergence. Verify initial contact conditions and consider using a softer contact algorithm initially.

- Adjust Solver Controls: For implicit solvers, gradually increase the number of iterations and adjust the tolerance. For difficult nonlinearities, use line searches or stabilization (damping) factors. For explicit solvers, ensure the stable time increment is not excessively small due to a single tiny or distorted element.

Q3: How can I distinguish between a true physical instability (like buckling) and a numerical instability? A: This is a critical distinction in verification.

- Mesh Dependency: A true instability will persist or converge to a consistent mode as the mesh is refined. A numerical instability may vanish or change pattern with refinement.

- Parameter Sensitivity: Slight perturbations in loading or geometry will alter the buckling mode but not eliminate it. Numerical instability may be "cured" by minor, physically irrelevant changes like damping value.

- Energy Balance: Monitor artificial strain energy (for ABAQUS) or hourglass energy. These should be a small fraction (<5-10%) of internal strain energy. High values indicate numerical distortion.

Q4: What is a robust experimental protocol for conducting a convergence test within a biomechanics thesis study? A: Follow this documented methodology:

Protocol: Mesh Convergence for Soft Tissue Stress Analysis

- Objective: Determine the mesh density required for mesh-independent von Mises stress results in a femoral cartilage model under compressive load.

- Software: ANSYS Mechanical v2023 R2 (or similar).

- Variable: Monitor Peak von Mises Stress (MPa) and Total Strain Energy (J).

- Procedure: a. Generate Mesh 1 (Coarse: ~10,000 tetrahedral elements). b. Apply physiological loading (1500 N compressive force) and boundary conditions. c. Solve using implicit static solver with large deflection ON. d. Record output variables. e. Refine mesh globally by 25% (increase element count), creating Mesh 2. f. Repeat solve and data recording. g. Iterate to create Mesh 3 and Mesh 4.

- Convergence Criterion: The solution is considered mesh-independent when the relative difference in Peak von Mises Stress between two successive meshes is ≤ 3%.

- Data Presentation: Results must be tabulated.

Q5: Are there specific convergence considerations for fluid-structure interaction (FSI) simulations in cardiovascular biomechanics? A: Yes, FSI introduces coupled-field complexities.

- Dual Convergence: You must achieve mesh independence for both the fluid domain (monitoring wall shear stress, pressure drop) and the solid domain (monitoring wall stress/displacement).

- Solver Coupling: Ensure stability of the coupling algorithm (e.g., partitioned Gauss-Seidel). Often, under-relaxation factors are needed. Monitor the residual of the coupled system across iterations.

- Time Step Independence: In addition to spatial mesh, you must perform a temporal convergence study by reducing the time step until key outputs stabilize.

Data Presentation

Table 1: Mesh Convergence Study for Tibial Implant Micromotion

| Mesh ID | Number of Elements (Millions) | Avg. Element Size (mm) | Peak Micromotion (µm) | % Change from Previous Mesh | Comp. Time (hrs) |

|---|---|---|---|---|---|

| M1 | 0.5 | 0.8 | 125.6 | N/A | 0.5 |

| M2 | 1.2 | 0.5 | 142.3 | +13.3% | 1.8 |

| M3 | 2.9 | 0.3 | 148.7 | +4.5% | 5.5 |

| M4 | 5.0 | 0.2 | 149.8 | +0.7% | 12.0 |

Based on a representative convergence study for implant stability analysis. Mesh M4 satisfies a <2% change criterion.
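
The "% Change from Previous Mesh" column and the convergence decision can be computed directly from the solver outputs. The sketch below uses the peak micromotion values from Table 1; the function names are hypothetical.

```python
def percent_changes(values):
    """Relative change (%) of each value with respect to the previous one."""
    return [100.0 * (curr - prev) / prev for prev, curr in zip(values, values[1:])]

def first_converged_mesh(mesh_ids, values, threshold_pct):
    """First mesh whose output changed by less than threshold_pct from its predecessor."""
    for mesh_id, change in zip(mesh_ids[1:], percent_changes(values)):
        if abs(change) < threshold_pct:
            return mesh_id
    return None  # no mesh met the criterion; keep refining

mesh_ids = ["M1", "M2", "M3", "M4"]
peak_micromotion_um = [125.6, 142.3, 148.7, 149.8]

for mesh_id, change in zip(mesh_ids[1:], percent_changes(peak_micromotion_um)):
    print(f"{mesh_id}: {change:+.1f}%")
print("Converged at:", first_converged_mesh(mesh_ids, peak_micromotion_um, 2.0))
```

The same helper works for any monitored output, such as peak von Mises stress or total strain energy, as long as the values are listed in order of refinement.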

Table 2: Solver Stability Analysis for Tendon Nonlinear Hyperelastic Model

| Solver Configuration | Max. Increment Size | Stabilization (Damping) Factor | Convergence Achieved? | Notes |

|---|---|---|---|---|

| Default (Newton) | 1.0 | None | No | Diverged at 12% applied strain |

| Modified 1 | 0.5 | None | No | Diverged at 45% applied strain |

| Modified 2 | 0.2 | 1e-5 | Yes | Completed full 80% strain loading |

| Modified 3 | 0.1 | None | Yes | Completed, but 2.3x longer CPU time |

Illustrates the trade-off between stabilization techniques and computational efficiency.

Visualizations

Title: Convergence Testing Workflow for Mesh Independence

Title: Solver Instability Diagnostic Decision Tree

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

| Item/Software Module | Function in Convergence & Stability Testing |

|---|---|

| Adaptive Mesh Refinement (AMR) Tool | Automatically refines mesh in regions of high solution gradient, improving convergence efficiency. |

| Solver Stabilization (e.g., Viscous Damping) | Adds artificial numerical damping to dissipate energy and overcome convergence hurdles in unstable static problems. |

| Line Search Algorithm | Improves convergence of Newton-Raphson methods by scaling the iteration step size. |

| High-Performance Computing (HPC) Cluster License | Enables running high-fidelity, finely meshed models required for conclusive convergence studies in reasonable time. |

| Python/Matlab Automation Script | Automates the batch process of mesh generation, job submission, and result extraction for systematic studies. |

| Reference Analytical Solution (e.g., Patch Test) | A simple benchmark with a known solution to verify solver and element formulation correctness before complex studies. |

| Post-Processor with Field Calculator | Allows creation and monitoring of custom convergence metrics (e.g., energy norm error) across different meshes. |

Troubleshooting Guide & FAQs

Q1: When I compare my software's output to a published dataset (e.g., LINCS L1000), the correlation coefficients are consistently lower than literature values. What could be the cause? A: This is a common calibration issue. First, verify your input normalization. Published datasets often apply specific scaling (e.g., robust z-scoring) that your software might not replicate by default. Check the original dataset's preprocessing protocol. Second, ensure you are comparing analogous data levels. Confusing gene-level expression with signature-level scores will yield poor correlations. Re-run the comparison using the exact same summary statistic (e.g., Level 4 vs. Level 5 data in LINCS).

Q2: My software fails to reproduce a key pathway activation score from a community challenge (e.g., a DREAM Challenge). How do I debug this? A: Systematically isolate the discrepancy. Follow this protocol:

- Input Verification: Download the challenge's raw input data again to rule out corruption.

- Stepwise Output: If possible, configure your software to export intermediate results (e.g., normalized counts, fold-changes, prior knowledge weights).

- Modular Benchmarking: Compare each intermediate output against any available intermediate benchmarks from the challenge. The error often lies in the normalization or aggregation step, not the core algorithm.

- Containerization: Run your analysis in a containerized environment (e.g., Docker) provided by the challenge to eliminate OS and dependency conflicts.

Q3: I encounter "missing identifier" errors when mapping my results to a reference database like STRING or KEGG for benchmarking.

A: This is typically an identifier mismatch. Use a dedicated conversion tool (e.g., bioDBnet, g:Profiler's gconvert) to map your software's output identifiers (e.g., Ensembl ID) to the database's required type (e.g., UniProt). Always use the stable release version of the database that matches the benchmarking publication's time frame, as entries can change.

Q4: How do I handle contradictory results when benchmarking against multiple datasets? A: Contradiction often reveals biological or technical context. Create a structured comparison table:

Table: Framework for Resolving Benchmarking Contradictions

| Factor to Compare | Dataset A (Supporting Result) | Dataset B (Contradicting Result) | Investigation Action |

|---|---|---|---|

| Cell Line/Model | Primary cardiomyocytes | Immortalized HEK293 | Check for known pathway differences in these models. |

| Perturbation Type | Genetic knockdown (siRNA) | Small molecule inhibitor | Assess off-target effects of the compound. |

| Time Point | 24-hour exposure | 2-hour exposure | Analyze if your result is time-sensitive. |

| Assay Technology | RNA-seq | Microarray | Investigate platform-specific biases (e.g., probe design). |

Q5: The community challenge leaderboard uses a specific evaluation metric (e.g., Area Under the Precision-Recall Curve). How do I compute this accurately from my software's output?

A: Do not implement the metric from scratch. Use the challenge's official evaluation script, often provided in GitHub repositories. If unavailable, use a rigorously tested library like scikit-learn in Python. Ensure your software's output format (score ranking, binary prediction) exactly matches the script's expected input. Test with the challenge's example data first.

Experimental Protocol: Benchmarking Against a Published Phosphoproteomics Dataset

Objective: To verify a commercial phospho-kinase analysis tool's output against a gold-standard mass spectrometry (MS) dataset.

- Dataset Acquisition: Download the curated dataset from a repository like PRIDE (e.g., PXD123456). Obtain the normalized phosphorylation intensity matrix and the sample annotation file.

- Software Processing: Input the corresponding raw experimental data (cell line, treatment, time point) into your commercial software. Export its kinase activity scores (e.g., z-scores, probabilities).

- Data Alignment: Map the software's kinase targets to the UniProt IDs in the MS dataset. Aggregate MS phosphopeptide intensities for each kinase based on known substrate sites.

- Correlation Analysis: For each treatment vs. control pair, calculate the Spearman correlation between the software's kinase activity score and the log2-fold-change of aggregated substrate phosphorylation from the MS data.

- Validation Threshold: A Spearman |ρ| > 0.7 with a p-value < 0.05 for key modulated kinases is considered successful verification.
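
To make the rank correlation from the Correlation Analysis step concrete, the sketch below computes a Spearman coefficient as the Pearson correlation of average ranks. It is an illustration only, with hypothetical scores and fold-changes; it does not compute a p-value, and for real analyses a tested statistics library is preferable.

```python
import math

def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation; assumes neither input is constant."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)

def spearman(x, y):
    """Spearman rank correlation as Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical kinase activity scores and aggregated substrate log2 fold-changes
software_scores = [2.1, -0.4, 1.3, 0.2, -1.8]
ms_log2fc = [1.6, -0.1, 0.9, 0.4, -2.2]

rho = spearman(software_scores, ms_log2fc)
print(f"Spearman rho = {rho:.2f}", "(meets |rho| > 0.7)" if abs(rho) > 0.7 else "")
```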

Diagram: Benchmarking Workflow for Software Verification

The Scientist's Toolkit: Key Reagents & Resources for Benchmarking

Table: Essential Resources for Benchmarking Biomechanics Software

| Item | Function in Benchmarking | Example/Provider |

|---|---|---|

| Reference Datasets | Provide ground truth for algorithm validation. | LINCS L1000, TCGA, CMap, PRIDE proteomics. |

| Community Challenge Platforms | Standardized framework for comparative performance assessment. | DREAM Challenges, CAFA, CASP. |

| Data Converter Tools | Resolve identifier mismatches between software and databases. | bioDBnet, g:Profiler, UniProt ID Mapping. |

| Containerization Software | Ensures reproducible environment for running challenge pipelines. | Docker, Singularity. |

| Metric Calculation Libraries | Trusted implementation of performance metrics. | scikit-learn (Python), caret (R). |

| Pathway Databases | Source of prior knowledge for pathway activation benchmarking. | KEGG, Reactome, WikiPathways. |

Troubleshooting Guides & FAQs

Q1: My software outputs a peak muscle force of 5000 N for a human bicep during a curl. How do I perform a basic sanity check? A: This value is physiologically implausible. Perform a unit and scale check.

- Concept: Relate force to fundamental physical limits. Muscle stress (Force/Cross-Sectional Area) for mammalian skeletal muscle has a theoretical maximum of ~0.3 MPa.

- Calculation: A large bicep has a physiological cross-sectional area (PCSA) of ~20 cm² (0.002 m²).

- Max Expected Force = Max Stress × PCSA = 300,000 Pa × 0.002 m² = 600 N.

- Conclusion: A 5000 N result is ~8x this upper bound, indicating a potential unit conversion error (e.g., grams-force vs. Newtons), incorrect model scaling, or erroneous material property assignment.
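
The same bound can be wrapped in a small helper and applied to every muscle in a model. The sketch below reproduces the calculation above; the function name is hypothetical, and the 0.3 MPa limit is the value quoted in this answer.

```python
MAX_MUSCLE_STRESS_PA = 0.3e6  # ~0.3 MPa upper bound quoted above

def max_plausible_force(pcsa_cm2, max_stress_pa=MAX_MUSCLE_STRESS_PA):
    """Upper bound on muscle force (N) from PCSA given in cm^2."""
    pcsa_m2 = pcsa_cm2 * 1e-4  # 1 cm^2 = 1e-4 m^2
    return max_stress_pa * pcsa_m2

reported_force_n = 5000.0
bound_n = max_plausible_force(20.0)
ratio = reported_force_n / bound_n

print(f"Upper bound: {bound_n:.0f} N, reported/bound ratio: {ratio:.1f}")
if ratio > 1.0:
    print("Implausible: check units, model scaling and material properties.")
```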

Q2: My joint contact pressure simulation shows 50 MPa in the hip cartilage. Is this reasonable? A: No. This is several times higher than physiological cartilage contact pressures. Use known material property ranges for a plausibility check.

- Reference Data: Healthy articular cartilage typically has a compressive modulus of 0.5 - 2 MPa, and physiological cartilage contact pressures are on the order of 1-10 MPa.

- Check: 50 MPa is more characteristic of stresses in stiff materials such as bone or metal implants than in soft hydrated tissues. This likely indicates an error in applied load magnitude, contact area definition, or an overly stiff material model assigned to the cartilage.

Q3: The metabolic cost output from my musculoskeletal simulation is 250 W/kg for walking. What's wrong? A: This is orders of magnitude too high. Compare against established physiological benchmarks.

- Benchmark: The basal metabolic rate is ~1.2 W/kg. Walking typically costs ~2-4 W/kg above resting.

- Action: Suspect a mismatch in time units (e.g., power calculated per stride but reported per second) or incorrect summation of energy rates across muscles.

Q4: How do I formally check the dimensional consistency of my simulation outputs? A: Implement a step-by-step dimensional analysis protocol for all primary outputs.

| Output Variable | Common Units (SI) | Base SI Dimensions | Physiological Plausibility Range (Human Adult) | Common Source of Dimensional Error |
|---|---|---|---|---|
| Force | Newton (N) | kg·m·s⁻² | Muscle force: tens to thousands of N. Joint contact: up to ~5x body weight. | Confusing mass (kg) with force (N). Forgetting gravity scaling (mass × 9.81 m/s²). |
| Moment/Torque | Newton-meter (Nm) | kg·m²·s⁻² | Ankle: ~200 Nm, Knee: ~300 Nm, Hip: ~400 Nm (gait). | Incorrect moment arm units (e.g., cm vs m). |
| Pressure/Stress | Pascal (Pa), Megapascal (MPa) | kg·m⁻¹·s⁻² | Cartilage contact: 1-10 MPa. Tendon stress: 50-100 MPa. | Incorrect area calculation (mm² vs m²). Force and area units mismatch. |
| Power | Watt (W) | kg·m²·s⁻³ | Whole-body net metabolic power for walking: ~100-400 W. | Force (N) × velocity (m/s) computed with mismatched length or time units. |
| Energy | Joule (J) | kg·m²·s⁻² | Work per gait cycle: ~50 J. | Confusing power (W) and energy (J). Incorrect time integration. |
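The plausibility column of the table can be encoded as a lookup so that outputs are screened automatically. This is a sketch under stated assumptions: the dictionary keys and the three-way classification are hypothetical, only a few rows of the table are transcribed, and the "one order of magnitude" threshold is the rule of thumb used in this guide.

```python
import math

# Ranges transcribed from the table above, in SI units. Key names are illustrative.
PLAUSIBLE_RANGES = {
    "cartilage_contact_pressure_pa": (1e6, 10e6),
    "tendon_stress_pa": (50e6, 100e6),
    "walking_metabolic_power_w": (100.0, 400.0),
}


def decades_outside(value, low, high):
    """Return 0.0 inside [low, high], otherwise the distance in log10 decades."""
    if value <= 0:
        raise ValueError("value must be positive for a log-scale comparison")
    if value < low:
        return math.log10(low / value)
    if value > high:
        return math.log10(value / high)
    return 0.0


def classify(name, value):
    """Label a result as plausible, outside range, or off by >= 1 order of magnitude."""
    low, high = PLAUSIBLE_RANGES[name]
    decades = decades_outside(value, low, high)
    if decades == 0.0:
        return "plausible"
    return "investigate" if decades >= 1.0 else "outside range"


# 250 W/kg for a 70 kg subject is 17500 W of whole-body power.
print(classify("walking_metabolic_power_w", 250.0 * 70.0))
print(classify("cartilage_contact_pressure_pa", 50e6))
```

Values flagged "outside range" still deserve a look; the stronger "investigate" label is reserved for results that miss the range by a full decade or more, which usually points to a unit error rather than a modeling choice.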

Experimental Protocol: Dimensional Analysis Verification for Simulation Outputs

Title: Protocol for Systematic Dimensional Verification of Biomechanical Outputs.

Purpose: To identify unit conversion errors and implausible results by analyzing the physical dimensions of software outputs.

Materials: Simulation output file, reference physiological data table, unit conversion calculator.

Procedure:

- Isolate Key Outputs: List the 5-10 primary quantitative results (e.g., F_max, P_contact, E_metabolic).

- Trace to Base Units: For each output, write its derived SI units (e.g., N = kg·m·s⁻²). Consult software documentation to confirm the reported unit.

- Construct Dimension Equation: Express the output as a product of input parameters. For example, muscle force (kg·m·s⁻²) should equal muscle stress (kg·m⁻¹·s⁻²) times area (m²).

- Scale Comparison: Compare the magnitude of your result to the "Plausibility Range" table above. If it differs by more than one order of magnitude, investigate.

- Unit Sanity Test: Manually apply a known, simple input case (e.g., a known force on a known spring) to verify the software's input/output unit relationship.
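The "Construct Dimension Equation" step can be checked mechanically by tracking the exponents of the SI base dimensions. The sketch below uses a small hand-written tuple type so that it runs without third-party packages; the names are illustrative, and a units library such as Pint could serve the same purpose.

```python
from collections import namedtuple

# Exponents of the SI base dimensions mass (kg), length (m), and time (s).
Dim = namedtuple("Dim", ["kg", "m", "s"])


def multiply(a, b):
    """Dimensions of a product: exponents add."""
    return Dim(a.kg + b.kg, a.m + b.m, a.s + b.s)


def divide(a, b):
    """Dimensions of a quotient: exponents subtract."""
    return Dim(a.kg - b.kg, a.m - b.m, a.s - b.s)


LENGTH = Dim(0, 1, 0)
TIME = Dim(0, 0, 1)
AREA = Dim(0, 2, 0)
VELOCITY = Dim(0, 1, -1)
FORCE = Dim(1, 1, -2)    # N  = kg·m·s^-2
STRESS = Dim(1, -1, -2)  # Pa = kg·m^-1·s^-2
POWER = Dim(1, 2, -3)    # W  = kg·m^2·s^-3
ENERGY = Dim(1, 2, -2)   # J  = kg·m^2·s^-2

checks = {
    "force = stress x area": multiply(STRESS, AREA) == FORCE,
    "power = force x velocity": multiply(FORCE, VELOCITY) == POWER,
    "energy = power x time": multiply(POWER, TIME) == ENERGY,
    "stress = force / area": divide(FORCE, AREA) == STRESS,
}
for label, ok in checks.items():
    print(f"{label}: {'consistent' if ok else 'MISMATCH'}")
```

Note that torque (force × length) and energy share the same base dimensions, so a dimensional check alone cannot tell them apart; the magnitude comparison in the next step is still needed.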

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Verification & Analysis |
|---|---|
| Reference Biomechanics Text (e.g., Winter's Biomechanics) | Provides foundational equations, standard variable notations, and benchmark physiological data for sanity checking. |
| Unit Conversion Software/Library (e.g., GNU Units, Python Pint) | Automates and reduces errors in converting between common (mmHg, kcal) and SI units. |
| Open-Source Dataset Repository (e.g., SimTK, PhysioNet) | Supplies real-world experimental data (kinematics, forces, EMG) for comparing against simulation outputs. |
| Scripting Environment (e.g., Python with NumPy/Matplotlib) | Enables automated post-processing, dimensional analysis, and generation of consistency plots. |
| Material Property Database (e.g., literature compilations) | Provides critical ranges for tissue properties (modulus, strength, density) essential for plausibility checks. |

Diagram: Workflow for Physiological Plausibility Verification

Diagram: Data Consistency Check Logic

Diagnosing Discrepancies: A Systematic Approach to Troubleshooting Results

Interpreting Error Messages and Warning Flags in Biomechanics Solvers

Troubleshooting Guide & FAQ

Frequently Asked Questions

Q1: What does the error "Singular Matrix" or "Jacobian is singular at iteration X" mean and how do I resolve it? A: This indicates the solver's system of equations has become ill-conditioned, often due to insufficient model constraints, excessive element distortion, or redundant kinematic constraints. Resolution steps include:

- Verify all joint and contact definitions for over-constraint.

- Check for unconnected parts or "floating" bodies in your assembly.

- For nonlinear materials, ensure the stress-strain curve is physically plausible and smooth.

- Gradually increase load in smaller increments (step size) rather than applying it fully in one step.
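The link between a "floating" body and a singular matrix can be seen in the smallest possible model: one spring between two nodes. The sketch below is illustrative only; the helper names and the stiffness value are assumptions, and real solvers assemble far larger matrices.

```python
def det2(m):
    """Determinant of a 2x2 matrix given as nested lists."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]


def spring_stiffness(k, ground_k=0.0):
    """Stiffness matrix of one spring (stiffness k) joining two 1-DOF nodes.

    ground_k is an optional spring tying node 0 to ground.
    """
    return [[k + ground_k, -k], [-k, k]]


k = 1000.0  # N/m, illustrative value

free_floating = spring_stiffness(k)            # nothing holds the assembly in place
restrained = spring_stiffness(k, ground_k=k)   # node 0 anchored through a second spring

print("free-floating determinant:", det2(free_floating))
print("restrained determinant:   ", det2(restrained))
```

The unrestrained assembly can translate as a rigid body without storing energy, so its stiffness matrix has a zero determinant and cannot be inverted. Adding a single restraint removes the rigid body mode.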

Q2: My simulation stops with "Time step too small" or "Convergence not achieved." What should I check? A: This warning suggests the solver cannot find an equilibrium solution for the given increment, typically due to:

- Material Instability: The material model (e.g., hyperelastic, plastic) may be unstable at the computed strains. Verify material parameters against experimental data.

- Contact Issues: Sharp discontinuities from sudden contact creation. Refine the contact surface mesh, adjust penalty stiffness, or define smoother contact initiation.

- Large Deformations: The model may exhibit buckling or snapping. Enable nonlinear geometry (Large Displacement) and consider using an arc-length control method (Riks) for post-instability analysis.

Q3: How should I interpret the warning "Negative Eigenvalue in the Stiffness Matrix"? A: This is a critical numerical flag indicating a loss of structural stability or uniqueness of solution, often preceding buckling, material softening, or liftoff in contact. It is both a warning and a diagnostic tool. Your action should be to:

- Analyze the output state: Visualize the model at the increment where the warning first appears. Look for buckling, excessive element distortion, or contact separation.

- Verify Intent: Determine if the instability is physically expected (e.g., tissue tearing, joint dislocation) or a numerical artifact.

- For Physical Instability: Use solver controls that accommodate path-following (like Riks method) to trace the post-buckling or softening response.

- For Numerical Artifact: Check for overly soft boundary conditions, insufficiently restrained rigid body modes, or inappropriate element formulation for large strains.

Q4: What do "Hourglassing" or "Zero-Energy Mode" warnings indicate in musculoskeletal finite element models? A: These are specific to reduced-integration elements (common for computational efficiency). They signal the development of a non-physical, oscillatory deformation pattern that doesn't generate strain energy. To mitigate:

- Activate Hourglass Control: Most solvers offer enhanced hourglass control or artificial stiffness. Use with caution to avoid over-stiffening.

- Refine Mesh: A finer mesh can reduce hourglassing patterns.

- Switch Element Type: Consider using fully integrated elements (typically slower but more robust) for critical soft tissue regions.
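Why reduced integration admits zero-energy modes can be shown on a single bilinear quadrilateral. The sketch below assumes a 4-node element occupying [-1, 1] × [-1, 1], so natural and physical coordinates coincide. It evaluates the small-strain components for the classic hourglass displacement pattern at the single reduced-integration point (the centroid) and at one of the 2×2 Gauss points.

```python
import math

# Node coordinates of a bilinear quad on [-1, 1] x [-1, 1] (Jacobian = identity).
NODES = [(-1.0, -1.0), (1.0, -1.0), (1.0, 1.0), (-1.0, 1.0)]


def shape_gradients(xi, eta):
    """(dN/dx, dN/dy) of each nodal shape function at the point (xi, eta)."""
    return [(0.25 * xn * (1.0 + eta * yn), 0.25 * yn * (1.0 + xi * xn))
            for xn, yn in NODES]


def strains(ux, uy, xi, eta):
    """Small-strain components (eps_xx, eps_yy, gamma_xy) at (xi, eta)."""
    grads = shape_gradients(xi, eta)
    eps_xx = sum(gx * u for (gx, _), u in zip(grads, ux))
    eps_yy = sum(gy * v for (_, gy), v in zip(grads, uy))
    gamma_xy = sum(gy * u + gx * v for (gx, gy), u, v in zip(grads, ux, uy))
    return eps_xx, eps_yy, gamma_xy


# Hourglass pattern: alternating x-displacements at the four nodes.
hourglass_ux = [1.0, -1.0, 1.0, -1.0]
hourglass_uy = [0.0, 0.0, 0.0, 0.0]

g = 1.0 / math.sqrt(3.0)  # location of a 2x2 Gauss point
at_centroid = strains(hourglass_ux, hourglass_uy, 0.0, 0.0)
at_gauss_point = strains(hourglass_ux, hourglass_uy, g, g)

print("strain at centroid (reduced integration):", at_centroid)
print("strain at 2x2 Gauss point (full integration):", at_gauss_point)
```

With one integration point the element sees no strain for this deformation and therefore offers no resistance to it, which is what hourglass control or full integration is meant to correct.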

Q5: "Maximum iterations exceeded" is a common error. What is the systematic approach to address it? A: The solver reached its iteration limit before the residuals met the convergence tolerance. Work through the following checks:

| Check Category | Specific Item to Investigate | Typical Adjustment |
|---|---|---|
| Model Definition | Unconstrained rigid body motion. | Add soft springs or friction constraints. |
| Model Definition | Initial penetrations in contact. | Adjust initial positions or use "adjust to touch". |
| Material & Load | Unrealistic material parameters (e.g., GPa vs MPa). | Review and scale units; use literature values. |
| Material & Load | Discontinuous load application. | Apply loads gradually over more steps. |
| Solver Settings | Too tight convergence tolerance. | Relax tolerance from 1e-6 to 1e-5 as a test. |
| Solver Settings | Default Newton-Raphson scheme struggling. | Activate Line Search or Quasi-Newton methods. |
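The "apply loads gradually" adjustment, together with the automatic increment cut-back that many solvers perform before reporting a failure, can be sketched on a one-degree-of-freedom problem. Everything here is an illustrative assumption: the stiffening spring law, its coefficients, and the function names are hypothetical, and commercial solvers use more elaborate controls (line search and quasi-Newton updates are not shown).

```python
def newton_solve(residual, tangent, u0, tol=1e-6, max_iter=10):
    """Scalar Newton-Raphson. Returns (u, converged)."""
    u = u0
    for _ in range(max_iter):
        r = residual(u)
        if abs(r) < tol:
            return u, True
        u -= r / tangent(u)
    return u, abs(residual(u)) < tol


def solve_incrementally(internal_force, tangent, target_load,
                        n_increments=10, max_cutbacks=8, tol=1e-6, max_iter=10):
    """Ramp the load to target_load, halving the increment whenever Newton fails."""
    u, applied, cutbacks = 0.0, 0.0, 0
    increment = target_load / n_increments
    while applied < target_load:
        trial_load = min(applied + increment, target_load)
        u_trial, ok = newton_solve(lambda x: internal_force(x) - trial_load,
                                   tangent, u, tol=tol, max_iter=max_iter)
        if ok:
            u, applied = u_trial, trial_load  # accept the increment
        else:
            cutbacks += 1
            if cutbacks > max_cutbacks:
                raise RuntimeError("increment too small: convergence not achieved")
            increment *= 0.5                  # retry with a smaller increment
    return u, cutbacks


# Hypothetical stiffening spring: f(u) = k*u + c*u^3 (N, with u in m).
def internal_force(u):
    return 1000.0 * u + 5.0e6 * u ** 3


def tangent_stiffness(u):
    return 1000.0 + 15.0e6 * u ** 2


u_default, cutbacks_default = solve_incrementally(internal_force, tangent_stiffness, 500.0)
u_tight, cutbacks_tight = solve_incrementally(internal_force, tangent_stiffness, 500.0,
                                              max_iter=5)
print(u_default, cutbacks_default)
print(u_tight, cutbacks_tight)
```

With a tight iteration limit the first increments fail and are halved before the ramp proceeds, yet both runs arrive at the same equilibrium displacement. When a commercial solver exhausts its cut-backs instead, the checks in the table above are the place to look.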

Experimental Protocol for Software Verification

Protocol 1: Analytical Benchmarking for Solver Logic

Objective: To verify that the solver's core numerical implementation correctly solves fundamental biomechanical problems with known analytical solutions.

Methodology:

- Construct Simple Models: In the commercial software (e.g., Abaqus, Ansys, AnyBody), create models of a 1D tendon under tension (linear spring), a pressurized thick-walled sphere (closed-form elastic solution), and a simple pendulum.

- Prescribe Inputs: Apply loads, pressures, or initial displacements matching the analytical problem's assumptions.

- Run Simulations: Execute static and dynamic analyses.

- Quantitative Comparison: Compare software output (stress, strain, natural frequency) to the exact mathematical solution. Calculate percentage error.
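The comparison step of Protocol 1 might look like the sketch below for the simple pendulum case. A small velocity-Verlet integrator stands in for the commercial solver here, which is an assumption made purely so the example runs on its own; in practice `simulated_period` would be replaced by the period extracted from the software's output.

```python
import math

G = 9.81  # m/s^2


def analytical_period(length_m):
    """Small-angle period of a simple pendulum: T = 2*pi*sqrt(L/g)."""
    return 2.0 * math.pi * math.sqrt(length_m / G)


def simulated_period(length_m, theta0_rad, dt=1e-4):
    """Integrate theta'' = -(g/L)*sin(theta) and time two downward zero crossings."""
    theta, omega, t = theta0_rad, 0.0, 0.0
    acc = -(G / length_m) * math.sin(theta)
    crossings = []
    while len(crossings) < 2:
        theta_new = theta + omega * dt + 0.5 * acc * dt * dt
        acc_new = -(G / length_m) * math.sin(theta_new)
        omega_new = omega + 0.5 * (acc + acc_new) * dt
        if theta > 0.0 and theta_new <= 0.0:
            # Linear interpolation of the crossing time within this step.
            crossings.append(t + dt * theta / (theta - theta_new))
        theta, omega, acc = theta_new, omega_new, acc_new
        t += dt
    return crossings[1] - crossings[0]


def percent_error(simulated, exact):
    return abs(simulated - exact) / abs(exact) * 100.0


exact = analytical_period(1.0)
numeric = simulated_period(1.0, theta0_rad=0.01)  # small amplitude, as the formula assumes
error_pct = percent_error(numeric, exact)
print(f"analytical T = {exact:.5f} s, simulated T = {numeric:.5f} s, error = {error_pct:.4f} %")
```

The benchmark is only meaningful if the simulated case honors the assumptions of the closed-form solution; here that means a small release angle and no damping.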

Protocol 2: Convergence Analysis for Mesh and Time Step Independence

Objective: To ensure simulation results are not artifacts of discretization.

Methodology:

- Select a Key Output Variable (KOV): For a complex model (e.g., knee joint contact stress), define the KOV (e.g., peak cartilage pressure).

- Systematic Refinement: Run a series of simulations with progressively finer global mesh sizes (e.g., 4mm, 2mm, 1mm, 0.5mm element size) and smaller time steps for dynamics.

- Plot and Analyze: Plot the KOV against element size or time step. Treat the result as independent of the discretization when the KOV changes by less than a preset tolerance (commonly 2-5%) between successive refinements.

- Document: The final reported result must use mesh/time-step parameters from the independent region.
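The refinement criterion in Protocol 2 can be automated once the KOV has been collected for each mesh. The sketch below is illustrative: the function names are assumptions, and the pressure values are invented purely to exercise the logic, not taken from any study.

```python
def relative_changes(kov_values):
    """Percent change of the KOV between each refinement and the previous one."""
    return [abs(new - old) / abs(new) * 100.0
            for old, new in zip(kov_values, kov_values[1:])]


def first_converged_index(kov_values, tol_percent=5.0):
    """Index of the first refinement whose KOV changed by less than tol_percent.

    Returns None if no refinement level meets the tolerance.
    """
    for i, change in enumerate(relative_changes(kov_values), start=1):
        if change < tol_percent:
            return i
    return None


element_sizes_mm = [4.0, 2.0, 1.0, 0.5]       # refinement series from the protocol
peak_pressure_mpa = [3.10, 3.90, 4.20, 4.26]  # hypothetical KOV values, illustration only

idx = first_converged_index(peak_pressure_mpa, tol_percent=5.0)
if idx is None:
    print("not converged: refine further")
else:
    print(f"converged at element size {element_sizes_mm[idx]} mm")
```

Returning the finer of the two meshes that agree is a conservative choice; the coarser of the pair may also be acceptable if runtime matters, provided the decision is documented.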

Research Reagent Solutions & Essential Materials

| Item / Solution | Function in Biomechanics Verification |
|---|---|
| Open-Source Benchmark Suite (e.g., NAFEMS, SIMBIO) | Provides standardized, peer-reviewed FEA problems with certified results to test solver accuracy. |
| Custom MATLAB/Python Scripts | For automating the comparison of simulation output to analytical solutions and calculating error metrics (RMSE, NRMSE). |
| Digital Calibration Phantom (e.g., 3D printable lattice or compliant mechanism) | A physical object with known deformation under load, used to validate coupled MRI/FEA or optical motion capture simulations. |
| Literature Meta-Dataset | A curated database of published experimental results (e.g., tendon modulus, joint kinematics) that serves as a "reagent" for validating model predictions. |
| Containerized Software Environment (Docker/Singularity) | Ensures the exact solver version and settings used can be reproduced, acting as a "buffer solution" for replicable results. |

Visualization: Error Diagnosis Workflow for Biomechanics Solvers

Visualization: Convergence Analysis Protocol for Mesh Independence

Technical Support Center: Troubleshooting & FAQs

FAQ 1: How do I determine which input parameters are most influential when my biomechanics simulation results are unstable?

- Answer: Unstable results often indicate high sensitivity to certain inputs. We recommend performing a variance-based global sensitivity analysis (GSA), for example by computing Sobol' indices. First, define a plausible range (minimum/maximum) for each uncertain input parameter based on experimental literature. Generate input combinations with a sampling scheme suited to the estimator (e.g., Saltelli sampling for Sobol' indices, or Latin Hypercube sampling when a surrogate model will be fitted). Run your simulation software (e.g., AnyBody, OpenSim, FEBio) for each combination and collect the key output metric. Calculate first-order and total-effect Sobol' indices using a statistical package (e.g., SALib in Python). Parameters with high total-effect indices (>0.7) are the key drivers of variability and require precise characterization.
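To make the first-order and total-effect indices concrete, the sketch below estimates them for a toy model using plain Monte Carlo sampling and two widely used estimators (Saltelli's for first-order, Jansen's for total-effect). It is hand-written so that it runs without third-party packages and is not the SALib implementation. The toy model, the unit-interval input ranges, and all names are assumptions for illustration; a real study would map each unit-interval sample onto the literature-based parameter range and call the simulation instead.

```python
import random


def sobol_indices(model, n_params, n_samples=4096, seed=0):
    """Estimate first-order (S1) and total-effect (ST) indices for inputs uniform on [0, 1]."""
    rng = random.Random(seed)
    a = [[rng.random() for _ in range(n_params)] for _ in range(n_samples)]
    b = [[rng.random() for _ in range(n_params)] for _ in range(n_samples)]
    f_a = [model(row) for row in a]
    f_b = [model(row) for row in b]
    pooled = f_a + f_b
    mean = sum(pooled) / len(pooled)
    variance = sum((y - mean) ** 2 for y in pooled) / (len(pooled) - 1)

    s1, st = [], []
    for i in range(n_params):
        # Evaluate the model on matrix A with column i replaced by column i of B.
        f_ab = [model(ra[:i] + [rb[i]] + ra[i + 1:]) for ra, rb in zip(a, b)]
        first = sum((fb - mean) * (fab - fa)
                    for fa, fb, fab in zip(f_a, f_b, f_ab)) / n_samples
        total = sum((fa - fab) ** 2 for fa, fab in zip(f_a, f_ab)) / (2 * n_samples)
        s1.append(first / variance)
        st.append(total / variance)
    return s1, st


def toy_model(x):
    """Stand-in for a simulation run: the third input has no influence."""
    return 4.0 * x[0] + 2.0 * x[1] + 0.0 * x[2]


s1, st = sobol_indices(toy_model, n_params=3)
for i, (first, total) in enumerate(zip(s1, st)):
    print(f"parameter {i}: S1 = {first:.2f}, ST = {total:.2f}")
```

For this additive toy model the analytical indices are 0.8, 0.2, and 0, and first-order and total-effect values coincide because there are no interactions. A gap between the two in a real model signals that a parameter acts mainly through interactions with others.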

FAQ 2: My software's output changes dramatically with small input variations. Is this a software bug or an expected sensitivity?

- Answer: Before reporting a bug, conduct a local one-at-a-time (OAT) sensitivity screen. Vary each input parameter by a small, physiologically relevant amount (e.g., ±5%) from its nominal value while holding the others constant. Calculate the normalized sensitivity coefficient S = (ΔY/Y) / (ΔX/X) for each parameter-output pair. A value of |S| much greater than 1 indicates a highly sensitive relationship, which may be a feature of the underlying biomechanics model rather than a bug. Compare your findings against published sensitivity studies for similar models.
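The OAT screen reduces to a central finite difference around the nominal point. In the sketch below the model is a hypothetical stand-in (segment kinetic energy) chosen because its exact sensitivities are known; the function and parameter names are illustrative, and `model` would normally wrap a call to the simulation.

```python
def normalized_sensitivity(model, nominal, name, rel_step=0.05):
    """Central-difference estimate of S = (dY/dX) * (X/Y) for one parameter."""
    x0 = nominal[name]
    up = dict(nominal, **{name: x0 * (1.0 + rel_step)})
    down = dict(nominal, **{name: x0 * (1.0 - rel_step)})
    dy_dx = (model(up) - model(down)) / (2.0 * rel_step * x0)
    return dy_dx * x0 / model(nominal)


def kinetic_energy(p):
    """Stand-in model: E = 0.5 * m * v^2, so S_mass = 1 and S_velocity = 2."""
    return 0.5 * p["mass_kg"] * p["velocity_m_s"] ** 2


nominal = {"mass_kg": 3.5, "velocity_m_s": 1.2}
sensitivities = {name: normalized_sensitivity(kinetic_energy, nominal, name)
                 for name in nominal}
for name, s in sensitivities.items():
    print(f"S({name}) = {s:.3f}")
```

A coefficient of 2 means a 5% change in the input produces roughly a 10% change in the output. Values of this size are ordinary model behavior, whereas coefficients in the tens or hundreds are the ones worth comparing against published sensitivity studies.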

FAQ 3: What is the best practice for sampling input parameter spaces in complex, computationally expensive musculoskeletal models?

- Answer: For expensive models, a space-filling design like Latin Hypercube Sampling (LHS) is preferred over full factorial designs. It ensures efficient coverage of the multi-dimensional parameter space with fewer runs. We recommend a sample size of at least N=128*(number of parameters) for initial screening. If runtime is prohibitive, consider building a surrogate model (e.g., a Gaussian Process emulator) from a smaller LHS dataset, then perform the sensitivity analysis on the faster surrogate.
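For reference, Latin Hypercube Sampling itself is only a few lines. The sketch below is a basic, non-optimized variant written with the standard library; the parameter bounds are hypothetical placeholders, and dedicated packages offer more refined designs.

```python
import random


def latin_hypercube(n_samples, bounds, seed=0):
    """Draw n_samples points so each dimension has exactly one point per stratum.

    bounds is a list of (low, high) pairs, one per parameter.
    """
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # One uniformly random point inside each of n_samples equal-width strata.
        unit = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(unit)  # decouple the stratum order between dimensions
        columns.append([low + u * (high - low) for u in unit])
    return [list(point) for point in zip(*columns)]


# Hypothetical parameter ranges, for illustration only.
bounds = [(0.0, 1.0), (50.0, 150.0)]
samples = latin_hypercube(8, bounds)
for point in samples:
    print(point)
```

Each parameter range is cut into as many equal strata as there are runs and every stratum is used exactly once, which is why LHS covers the space more evenly than the same number of purely random draws.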

FAQ 4: How can I verify that my sensitivity analysis results are robust and not an artifact of my sampling method?

- Answer: Robustness must be verified. Perform a convergence analysis by incrementally increasing your sample size (e.g., N=100, 500, 1000) and recalculating sensitivity indices. The indices for the key parameters should stabilize. Additionally, repeat the entire GSA with a different random seed for sampling. Compare the rankings of the top three sensitive parameters; they should be consistent. Significant divergence suggests an under-sampled or highly non-linear/interactive parameter space.

Data Presentation: Summary of Common Sensitivity Analysis Methods

| Method | Type | Key Metric | Pros | Cons | Best For |

|---|---|---|---|---|---|

| One-at-a-Time (OAT) | Local | Normalized Sensitivity Coefficient (∂Y/∂X * X/Y) | Simple, intuitive, low computational cost. | Misses interactions, only explores local space. | Initial screening, model debugging. |

| Morris Method | Global | Elementary Effects (μ*, σ) | Efficient screening, ranks parameter influence. | Qualitative ranking, no precise variance apportionment. | Identifying unimportant parameters in models with many inputs. |