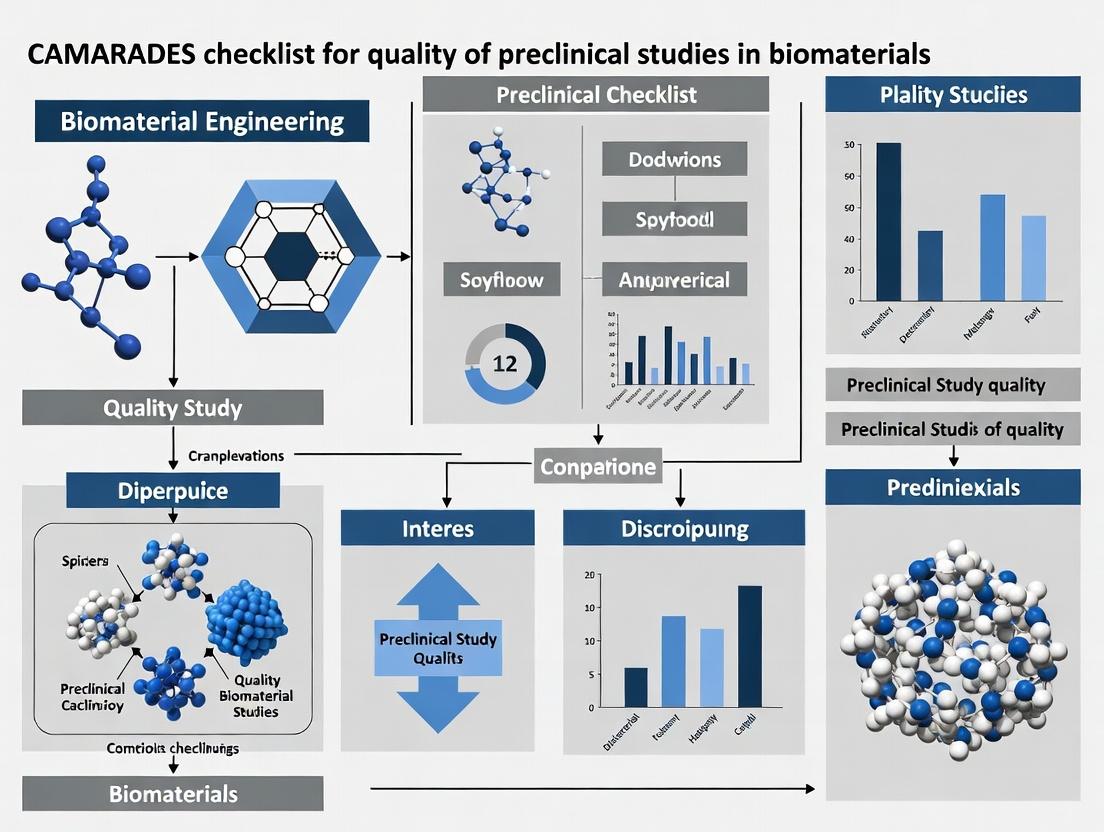

Beyond Bias: The Ultimate CAMARADES Checklist Guide for High-Quality Biomaterial Studies

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on applying the CAMARADES checklist to biomaterial research. It explores the foundational principles of study quality assessment, offers practical methodological steps for implementation, addresses common troubleshooting and optimization challenges, and presents frameworks for validation and comparison with other guidelines like ARRIVE and PRISMA. The goal is to empower scientists to design, execute, and report robust, reproducible, and clinically translatable biomaterial studies, ultimately enhancing the credibility and impact of preclinical research in the field.

What is CAMARADES? Demystifying the Gold Standard for Preclinical Biomaterial Research Quality

Introduction

The Collaborative Approach to Meta-Analysis and Review of Animal Data from Experimental Studies (CAMARADES) framework originated to address the critical need to improve the quality, transparency, and reproducibility of animal research, primarily in neurological fields such as stroke. Its core mandate is to mitigate bias, and its main instrument is a systematic study quality checklist. As biomaterials research for drug delivery, tissue engineering, and regenerative medicine has matured, the complexity of in vivo studies has grown sharply, which makes rigorous quality assessment essential. This article argues that adapting the CAMARADES checklist, and applying it strictly, is indispensable for advancing credible, clinically translatable biomaterials research.

Technical Support Center: CAMARADES for Biomaterial In Vivo Studies

FAQs & Troubleshooting

Q1: Our biomaterial implantation study showed high efficacy, but the meta-analysis flagged us for "lack of randomization." Why is this critical, and how do we implement it correctly? A: Randomization minimizes selection bias by giving each animal an equal chance of being allocated to any experimental group (e.g., novel hydrogel vs. control). Its absence is a major source of overestimated effect sizes.

- Protocol: Use a computer-generated random number sequence or a random number table. After assigning a unique ID to each animal, use the sequence to allocate animals to groups. The allocation list must be concealed from the people enrolling and operating on the animals until each assignment is made (allocation concealment).

- Troubleshooting: Do not allocate by cage or birth order; these are systematic, not random, schemes. Use sealed, opaque envelopes for small studies or a dedicated online randomization service for larger ones.
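A computer-generated allocation list of the kind described in Q1 can be produced with Python's standard library. This is a minimal sketch, not a validated randomization service: the animal IDs, group names, and seed are hypothetical, and the output list should be held by someone not involved in surgery or outcome assessment so that allocation stays concealed.

```python
import random


def allocate(animal_ids, groups, seed):
    """Randomly allocate animals to groups in (near-)equal numbers.

    Builds one group label per animal, cycling through the groups so the
    counts are balanced, then shuffles the labels with a seeded generator
    so the sequence is reproducible and auditable.
    """
    rng = random.Random(seed)  # record the seed in the concealed study log
    labels = [groups[i % len(groups)] for i in range(len(animal_ids))]
    rng.shuffle(labels)
    return dict(zip(animal_ids, labels))


# Hypothetical example: 12 animals, novel hydrogel vs. carrier control
ids = [f"M{i:02d}" for i in range(1, 13)]
allocation = allocate(ids, ["hydrogel", "control"], seed=20240501)
for animal, group in allocation.items():
    print(animal, group)
```

Recording the seed makes the sequence auditable later, and balancing the labels before shuffling keeps group sizes equal, which coin-flip allocation does not guarantee.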

Q2: What constitutes adequate "blinding" during outcome assessment in a biomaterial study, especially when physical differences are visible? A: Blinding (masking) prevents observer bias. For biomaterials, where the implant may be visible (e.g., subcutaneous), special measures are needed.

- Protocol: The outcome assessor (e.g., histologist, behavior analyst) must be different from the surgeon. For imaging/histology, code all samples with blind labels. For functional recovery, use automated scoring systems or video analysis assessed by a blinded third party.

- Troubleshooting: If a material's presence is unmistakable, consider having an independent blinded pathologist assess specific, pre-defined endpoints (e.g., inflammation score, vessel counts) from standardized, coded micrographs.

Q3: How do we justify our sample size for a novel bone graft experiment to satisfy the "sample size calculation" item? A: A priori sample size calculation uses a pre-experiment effect size estimate to ensure sufficient statistical power, reducing the risk of false negatives.

- Protocol:

- Define your primary outcome measure (e.g., bone volume fraction in µCT).

- Determine the minimum clinically/scientifically meaningful effect size (∆).

- Estimate the expected standard deviation (σ) from pilot data or literature.

- Set your desired statistical power (typically 80%) and significance level (α=0.05).

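- Use the normal-approximation formula for a two-group comparison of means:

$$n_{\text{per group}} = \frac{2\,(Z_{\alpha/2} + Z_{\beta})^2\,\sigma^2}{\Delta^2}$$

where Zα/2 and Zβ are standard normal critical values (about 1.96 and 0.84 for α = 0.05 and 80% power). Tools like G*Power automate this calculation.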
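The sample size formula in Q3 can be checked with a few lines of Python. This sketch implements only the normal-approximation formula; the function name and the pilot values (a 10-point difference in bone volume fraction with an SD of 8 points) are hypothetical.

```python
import math
from statistics import NormalDist


def n_per_group(delta, sigma, alpha=0.05, power=0.80):
    """Per-group n for a two-group comparison of means (normal approximation):
    n = 2 * (Z_alpha/2 + Z_beta)^2 * sigma^2 / delta^2
    """
    z = NormalDist()                    # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = z.inv_cdf(power)           # about 0.84 for 80% power
    n = 2 * (z_alpha + z_beta) ** 2 * sigma ** 2 / delta ** 2
    return math.ceil(n)                 # always round up to whole animals


# Hypothetical pilot values: detect a 10-point gain in bone volume fraction (%)
# when the expected SD is 8 points.
print(n_per_group(delta=10, sigma=8))  # → 11
```

Because it uses the normal rather than the t distribution, this approximation can come out slightly smaller than the exact t-test calculation in G*Power, and an allowance for expected attrition is commonly added on top.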

Q4: We encountered unexpected animal mortality. How should we handle "complete outcome data" and reporting of animals excluded from analysis? A: All animals allocated to groups must be accounted for. Exclusions can introduce attrition bias.

- Protocol: Use a pre-defined, ethically approved exclusion criterion (e.g., post-operative complications unrelated to the biomaterial, like infection). Maintain a detailed study flow diagram.

- Troubleshooting: Report the number of animals excluded per group and the precise reason. Analyze data using both an "Intention-to-Treat" (include all allocated animals with imputation for missing data) and a "Per-Protocol" analysis to show consistency.

Q5: For biomaterials, what are the key elements of a "statement of potential conflicts of interest"? A: This is vital for transparency, as financial or intellectual interests can consciously or unconsciously influence study design, analysis, or reporting.

- Protocol: Disclose all funding sources for the work. Disclose any patents (pending or granted) on the material. Declare any financial stakes in companies commercializing the technology. State if a material was provided gratis by a company with a vested interest.

Data Presentation: Core CAMARADES Items & Biomaterials Application

Table 1: Evolution of CAMARADES Checklist Application

| CAMARADES Item | Typical Stroke Study Application | Specific Adaptation for Biomaterials Studies |
|---|---|---|
| Peer-reviewed protocol | Pre-registration of hypothesis, design. | Pre-register material synthesis specs, sterilization method, implantation technique. |
| Randomization | Random assignment to treatment/control. | Randomization to material type, dosage, or carrier control. |
| Blinding | Blinded assessment of neurological score. | Blinded assessment of histology, imaging, biomechanical testing. |
| Sample size calculation | Based on behavioral effect size. | Based on primary biomaterial outcome (e.g., degradation rate, tensile strength gain). |
| Animal model characteristics | Species, strain, sex, age, weight. | Include material-relevant details: immune status, defect size/location model. |
| Experimental details | Dose, route, timing of drug. | Material characterization (e.g., porosity, modulus), surgical implant procedure, sterilization. |
| Outcome measures | Infarct volume, functional tests. | Material integration, foreign body response, degradation, functional restoration. |
| Conflict of interest | Funding from pharmaceutical company. | Funding from device company, material patents held by investigators. |

Experimental Protocol: Assessing Foreign Body Response to a Subcutaneous Implant (Key Cited Methodology)

Title: Histomorphometric Analysis of the Peri-Implant Fibrous Capsule.

Objective: To quantitatively evaluate the foreign body reaction to a biomaterial implant over time.

Materials: Test biomaterial (e.g., 5 mm diameter disc), control material (e.g., medical-grade silicone), isoflurane, surgical tools, sutures, formalin, paraffin, H&E stain, Masson's Trichrome stain.

Animals: Female C57BL/6 mice (n = 8 per group per time point, justified by a sample size calculation).

Procedure:

- Randomization & Blinding: Animals are randomly assigned to test or control groups using a computer generator. The surgeon is unblinded, but all subsequent analysts are blinded to group codes.

- Implantation: Anesthetize the mouse. Make a 1 cm dorsal incision, create a subcutaneous pocket, and implant one material disc. Close the wound with sutures.

- Termination & Harvest: Euthanize at pre-defined endpoints (e.g., 1, 4, 12 weeks). Excise the implant with surrounding tissue en bloc.

- Histology: Fix in 10% formalin for 48 h. Process and embed in paraffin. Section (5 µm) through the implant center. Stain with H&E and Masson's Trichrome.

- Blinded Analysis: Using image analysis software (e.g., ImageJ), measure: a) Capsule thickness (µm) at 4 equidistant points, b) Cell density within capsule (cells/mm²), c) % area of collagen (from Trichrome).

- Statistical Analysis: Compare groups using two-way ANOVA (factors: material x time) with appropriate post-hoc tests. Report mean ± SD.
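The blinded measurements in this protocol reduce to a few per-sample calculations followed by group summaries. The sketch below assumes the raw numbers (four capsule thickness readings, a nuclei count with the capsule area, and Trichrome-positive versus total capsule pixel counts) have already been exported from ImageJ; the function names and values are hypothetical, and the two-way ANOVA itself would be run in a statistics package.

```python
from statistics import mean, stdev


def capsule_metrics(thickness_um, cell_count, capsule_area_mm2,
                    collagen_px, capsule_px):
    """Per-sample histomorphometry for one coded section.

    thickness_um: capsule thickness at 4 equidistant points (µm)
    cell_count / capsule_area_mm2: nuclei inside the capsule and its area
    collagen_px / capsule_px: Trichrome-positive pixels vs. all capsule pixels
    """
    return {
        "thickness_um": mean(thickness_um),
        "cells_per_mm2": cell_count / capsule_area_mm2,
        "collagen_pct": 100 * collagen_px / capsule_px,
    }


def summarize(samples, metric):
    """Group mean and SD of one metric, for reporting as mean ± SD."""
    values = [s[metric] for s in samples]
    return mean(values), stdev(values)


# Hypothetical measurements for two coded samples from one group/time point
group = [
    capsule_metrics([52, 48, 55, 45], 310, 0.25, 41000, 80000),
    capsule_metrics([60, 58, 62, 56], 290, 0.25, 45000, 82000),
]
m, sd = summarize(group, "thickness_um")
print(f"Capsule thickness: {m:.1f} ± {sd:.1f} µm")
```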

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Biomaterial In Vivo Evaluation

| Item | Function | Example/Note |
|---|---|---|
| Medical-Grade Silicone / Sham control | Well-characterized reference control for comparison. | Essential for distinguishing the baseline surgical response from the material-specific response. |
| PBS or Saline (Vehicle Control) | Carrier control for injectable biomaterials (hydrogels, particles). | Controls for the effect of the injection procedure and volume. |
| Optimal Cutting Temperature (O.C.T.) Compound | For cryosectioning of hydrogel or soft tissue samples. | Preserves the native structure of materials that paraffin processing (dehydration, heat) may damage or dissolve. |
| Specific Antibody Panels (IHC/IF) | Characterization of immune response and integration. | CD68 (macrophages), CD3 (T cells), α-SMA (myofibroblasts), CD31 (endothelial cells). |
| Micro-CT Contrast Agent | Enhancing material/tissue contrast for in vivo or ex vivo imaging. | Iodine-based agents (e.g., Lugol's) for soft biomaterials; gold nanoparticles for targeted imaging. |
| Sustained-Release Analgesic | Post-operative pain management per animal welfare guidelines. | Buprenorphine SR (sustained-release) ensures consistent analgesia, reducing stress confounders. |


Technical Support Center

FAQs & Troubleshooting Guide

Q1: Why does our meta-analysis of biomaterial-induced osteogenesis show extreme heterogeneity (I² > 90%)?

- A: High heterogeneity often stems from unassessed variation in study quality. Applying the CAMARADES checklist may reveal that studies differ critically in randomization, blinded outcome assessment, and sample size calculation. These methodological flaws introduce both bias and variability. Solution: Perform a subgroup analysis based on a quality score (e.g., high vs. low CAMARADES compliance). This can explain a substantial part of the heterogeneity and makes the conclusions more defensible.
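Cochran's Q and I² can be computed directly from per-study effect sizes and standard errors. This is a sketch of inverse-variance fixed-effect pooling with hypothetical inputs; preclinical meta-analyses usually report random-effects estimates, but I² is conventionally derived from this same Q statistic. Running it separately on the high- and low-compliance subgroups shows whether heterogeneity falls within each.

```python
import math


def heterogeneity(effects, std_errors):
    """Inverse-variance fixed-effect pooling with Cochran's Q and I².

    effects: per-study effect sizes (e.g., SMDs)
    std_errors: their standard errors
    Returns (pooled estimate, its SE, Q, I² in %).
    """
    weights = [1 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    # Q: weighted squared deviations of each study from the pooled estimate
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I²: share of total variation beyond what chance (df) would produce
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    pooled_se = math.sqrt(1 / sum(weights))
    return pooled, pooled_se, q, i2


# Minimal worked check: two studies with equal precision
pooled, pooled_se, q, i2 = heterogeneity([0.0, 2.0], [1.0, 1.0])
print(pooled, q, i2)  # → 1.0 2.0 50.0
```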

Q2: Our systematic review found consistently positive results, but a peer reviewer criticized it as "not credible." What went wrong?

- A: Consistently positive results without quality assessment may indicate publication bias or methodological bias across studies. A credible review assesses study quality, including the CAMARADES conflict-of-interest item, and also checks for selective outcome reporting (a domain covered, for example, by SYRCLE's risk of bias tool). Solution: Apply the checklist rigorously. Generate a funnel plot and test for asymmetry (e.g., Egger's test), interpreting the result in light of study quality: small, low-quality studies can produce funnel-plot asymmetry that is unrelated to publication bias.
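Egger's test is a linear regression of each study's standardized effect on its precision, and the intercept measures funnel-plot asymmetry. The standard-library sketch below returns the intercept, its standard error, and the t statistic; a p-value needs the t distribution with n − 2 degrees of freedom (e.g., from SciPy). The data are hypothetical, and packages such as R's metafor provide this test ready-made.

```python
import math


def egger_test(effects, std_errors):
    """Egger's regression test for funnel-plot asymmetry.

    Regresses the standardized effect (y / se) on precision (1 / se) by
    ordinary least squares. An intercept far from zero signals small-study
    effects, which may reflect publication bias or poorer quality in small
    studies. Returns (intercept, SE of intercept, t on n - 2 df).
    Needs at least 3 studies; around 10 or more is commonly advised.
    """
    x = [1 / s for s in std_errors]                   # precision
    z = [y / s for y, s in zip(effects, std_errors)]  # standardized effect
    n = len(x)
    x_bar, z_bar = sum(x) / n, sum(z) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (zi - z_bar) for xi, zi in zip(x, z)) / sxx
    intercept = z_bar - slope * x_bar
    rss = sum((zi - intercept - slope * xi) ** 2 for xi, zi in zip(x, z))
    se_int = math.sqrt(rss / (n - 2) * (1 / n + x_bar ** 2 / sxx))
    t = intercept / se_int if se_int > 0 else math.nan  # nan only for a perfect fit
    return intercept, se_int, t


# Hypothetical SMDs: smaller studies (larger SE) report larger effects
smd = [2.6, 2.1, 1.8, 1.4, 1.2, 1.0]
se = [0.60, 0.50, 0.40, 0.30, 0.25, 0.20]
b0, b0_se, t = egger_test(smd, se)
print(f"intercept = {b0:.2f} (SE {b0_se:.2f}), t = {t:.2f} on {len(smd) - 2} df")
```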

Q3: How do we handle a "negative" or null result study that has a high CAMARADES quality score?

- A: A high-quality null study is powerful evidence. It must be weighted appropriately in your analysis. Solution: In your meta-analysis, ensure the weighting algorithm (often inverse-variance) gives this study its due influence. Discuss its methodological rigor in contrast to lower-quality positive studies to provide a nuanced interpretation.

Q4: We are comparing two biomaterial coatings. How can a quality checklist inform our preclinical study design?

- A: The CAMARADES checklist serves as a pre-emptive quality control protocol. Solution: Before starting your experiment, use it as a design template: ensure you implement allocation concealment, blinded histological scoring, pre-defined primary endpoints, and a sample size justified by a power calculation. This proactively minimizes bias in your future work.

Experimental Protocols & Data

Protocol 1: Implementing CAMARADES Quality Assessment in a Systematic Review

- Search & Screening: Conduct literature search across PubMed, Embase, Web of Science using predefined biomaterial/search terms. Record results in a PRISMA flow diagram.

- Pilot Calibration: Two independent reviewers assess 5-10 studies using the CAMARADES checklist. Discuss discrepancies to align scoring criteria.

- Formal Review: Reviewers independently score all included studies across CAMARADES items (e.g., peer review, randomization, blinding, temperature control, compliance).

- Consensus & Arbitration: Resolve scoring differences through discussion; involve a third reviewer if needed.

- Data Synthesis: Extract outcome data and link each data point to the study's quality score for analysis.
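Agreement during pilot calibration can be quantified before the formal review, for example with Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is one way to compute it for a single checklist item; the yes/no judgments are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two reviewers' judgments on the same studies.

    rater_a, rater_b: equal-length lists of categorical scores
    (e.g., "yes"/"no" for whether each study reports randomization).
    Undefined (division by zero) if chance agreement is exactly 1.
    """
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    categories = set(rater_a) | set(rater_b)
    # Chance agreement from each reviewer's marginal rates
    expected = sum(count_a[c] * count_b[c] for c in categories) / n ** 2
    return (observed - expected) / (1 - expected)


# Hypothetical pilot: two reviewers score "randomization reported" for 10 studies
a = ["yes", "yes", "no", "no", "yes", "no", "yes", "yes", "no", "no"]
b = ["yes", "no", "no", "no", "yes", "no", "yes", "yes", "yes", "no"]
print(f"kappa = {cohens_kappa(a, b):.2f}")  # → kappa = 0.60
```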

Protocol 2: Subgroup Meta-Analysis Based on CAMARADES Score

- Calculate Score: For each study, calculate a quality score (e.g., 1 point per CAMARADES item satisfied).

- Define Thresholds: Define subgroups (e.g., High Quality: ≥ 7/10; Low Quality: < 7/10).

- Stratified Analysis: Perform separate meta-analyses for each subgroup using appropriate models (fixed or random effects).

- Compare Estimates: Statistically compare the pooled effect estimates between subgroups using a test for subgroup differences (e.g., as implemented in RevMan, the Cochrane review software).

Table 1: Example Meta-Analysis Results Stratified by CAMARADES Quality Score

| Subgroup (CAMARADES Score) | Number of Studies | Pooled Effect Size (SMD) | 95% CI | I² (Heterogeneity) |
|---|---|---|---|---|
| High Quality (≥ 7/10) | 8 | 1.45 | [1.10, 1.80] | 35% |
| Low Quality (< 7/10) | 12 | 2.30 | [1.85, 2.75] | 89% |
| Overall | 20 | 1.95 | [1.50, 2.40] | 85% |

SMD: Standardized Mean Difference; CI: Confidence Interval
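The scoring, thresholding, and subgroup comparison in Protocol 2 can be sketched in Python. This assumes a fixed-effect test for two subgroups (the squared difference between pooled estimates divided by the sum of their variances, on 1 degree of freedom), with standard errors back-calculated from the 95% CIs. The inputs are the illustrative values from the example table above, and the function names are hypothetical.

```python
from statistics import NormalDist


def quality_score(items_met):
    """One point per CAMARADES item satisfied (items_met: list of booleans)."""
    return sum(bool(x) for x in items_met)


def se_from_ci(lower, upper, level=0.95):
    """Back-calculate a standard error from a symmetric confidence interval."""
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return (upper - lower) / (2 * z)


def subgroup_difference(est1, se1, est2, se2):
    """Q_between = (est1 - est2)^2 / (se1^2 + se2^2), compared to chi-square(1)."""
    q = (est1 - est2) ** 2 / (se1 ** 2 + se2 ** 2)
    # chi-square(1) upper tail equals the two-sided normal tail of sqrt(Q)
    p = 2 * (1 - NormalDist().cdf(q ** 0.5))
    return q, p


# Dichotomize a study scoring 7/10 at the example threshold
print("high" if quality_score([True] * 7 + [False] * 3) >= 7 else "low")

# Example table values: high- vs. low-quality subgroups
high_se = se_from_ci(1.10, 1.80)
low_se = se_from_ci(1.85, 2.75)
q, p = subgroup_difference(1.45, high_se, 2.30, low_se)
print(f"Q = {q:.2f}, p = {p:.4f}")
```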

Visualizations

Figure: Quality Assessment Informs Synthesis

Figure: Pathway from Flaw to Compromised Synthesis

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Biomaterial Quality Research |
|---|---|
| CAMARADES Checklist | The core 10-item tool to systematically assess risk of bias in preclinical animal studies. |
| PRISMA Guidelines | Provides a framework for reporting the systematic review process transparently. |
| Meta-Analysis Software (RevMan, R/metafor) | Statistical software to pool data and perform subgroup/sensitivity analyses. |
| Reference Manager (EndNote, Zotero) | Manages literature, deduplicates search results, and facilitates screening. |
| Blinded Assessment Template | A standardized form that lets reviewers score studies independently and consistently. |
| Power Analysis Software (G*Power) | Used to critique or plan sample sizes, a key CAMARADES item. |

Technical Support Center: Troubleshooting & FAQs

This support center addresses common experimental hurdles in biomaterial science within the framework of the CAMARADES checklist for study quality. The questions are structured to align with checklist items to promote rigorous, reproducible research.

FAQs & Troubleshooting Guides

Q1: Our in vivo biomaterial implantation study showed high variability in the inflammatory response. How can we better control for this to satisfy checklist items on randomization and blinding?

A: High variability often stems from unaccounted-for experimental confounders. Implement a stratified randomization protocol based on animal weight and litter. For blinding, use a third-party researcher to code all material implants and surgical kits.

- Protocol for Stratified Randomization:

- Weigh all animals and assign to weight strata (e.g., 20-22g, 22-24g).

- Within each stratum, randomly assign animals to treatment or control groups using a computer-generated random number sequence.

- Assign a unique ID to each animal.

- Provide the surgical researcher with pre-packed, coded kits (by a blinded team member) containing the biomaterial or control vehicle matched to the animal ID.
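The stratified scheme above can be sketched as follows. Strata are defined by lower weight bounds (20 g and 22 g, matching the example strata), and group labels are balanced and shuffled within each stratum. Animal IDs, weights, group names, and the seed are hypothetical; the resulting list would go to the blinded team member who prepares the coded kits.

```python
import random
from collections import defaultdict


def stratified_allocation(weights_g, strata_edges, groups, seed):
    """Allocate animals to groups separately within weight strata.

    weights_g: {animal_id: body weight in g}
    strata_edges: ascending lower bounds of the strata, e.g. [20, 22]
    Returns {animal_id: (stratum_label, group)}.
    Weights below the first edge raise ValueError (no stratum fits).
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for animal, w in sorted(weights_g.items()):
        # Place the animal in the highest stratum whose lower bound it reaches
        lower = max(e for e in strata_edges if w >= e)
        strata[lower].append(animal)
    allocation = {}
    for lower, animals in strata.items():
        labels = [groups[i % len(groups)] for i in range(len(animals))]
        rng.shuffle(labels)  # balanced, random order within the stratum
        for animal, group in zip(animals, labels):
            allocation[animal] = (f">={lower} g", group)
    return allocation


weights = {"M01": 20.5, "M02": 21.8, "M03": 23.1,
           "M04": 22.4, "M05": 20.9, "M06": 23.7}
alloc = stratified_allocation(weights, [20, 22], ["material", "vehicle"], seed=11)
for animal, (stratum, group) in sorted(alloc.items()):
    print(animal, stratum, group)
```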

Q2: For checklist items requiring sample size calculation, what parameters are essential for biomaterial biocompatibility studies?

A: Sample size should be justified a priori using effect size, variability, desired power (typically 80%), and alpha (typically 0.05). Use pilot study data or literature values.

- Key Parameters Table:

| Parameter | Description | Typical Source for Biomaterials |
|---|---|---|
| Effect Size | Minimum difference of clinical/scientific importance (e.g., 40% reduction in fibrosis score). | Pilot data or previous similar studies. |
| Standard Deviation | Expected variability in the primary outcome (e.g., SD of histological scoring). | Pilot data or published literature. |
| Alpha (α) | Probability of Type I error (false positive). | Usually set at 0.05. |
| Power (1-β) | Probability of detecting an effect if it exists. | Usually set at 0.8 or 80%. |

Q3: How do we select appropriate controls for a novel hydrogel scaffold, addressing the checklist's requirement for "appropriate controls"?

A: Biomaterial studies often require multiple control groups to isolate the material's effect from the surgical procedure and the defect itself.

- Control Group Strategy Table:

| Control Group | Purpose | Rationale |
|---|---|---|
| Sham Surgery | Animals undergo the same surgical procedure without defect creation or implantation. | Controls for effects of anesthesia and surgical trauma. |
| Defect-Only | A critical-sized defect is created but left empty or filled with saline. | Controls for natural healing capacity and defines the baseline defect. |
| Material Control | Implantation of a clinically approved material (e.g., collagen sponge). | Provides a benchmark for expected performance. |

Q4: Our study involves assessing angiogenesis. Which objective quantification methods satisfy the checklist's call for "objective outcome measurement"?

A: Move beyond qualitative descriptions (e.g., "increased vascularization"). Implement these protocols:

Protocol for Immunohistochemical Quantification (CD31):

- Section embedded tissue at 5µm.

- Perform CD31 immunohistochemistry.

- Image 5 random, non-overlapping fields per sample at 200x magnification.

- Use image analysis software (e.g., ImageJ/Fiji) to apply a consistent color threshold to identify stained structures.

- Report Mean Vessel Density (vessels per mm²) and Percent Area (% of field positive for CD31).
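Converting raw counts into the two reported metrics requires the true area of each imaged field, which depends on the objective and camera and must come from a calibrated scale bar. In this sketch the field area (0.3 mm²) and all counts are hypothetical placeholders.

```python
def vessel_metrics(vessel_counts, field_area_mm2, positive_px, total_px):
    """CD31 quantification pooled over the imaged fields of one sample.

    vessel_counts: CD31+ structures counted in each field
    field_area_mm2: calibrated area of one field at 200x
    positive_px / total_px: thresholded CD31+ pixels and all pixels per field
    Returns (mean vessel density in vessels/mm², percent CD31+ area).
    """
    density = sum(vessel_counts) / (len(vessel_counts) * field_area_mm2)
    pct_area = 100 * sum(positive_px) / sum(total_px)
    return density, pct_area


# Hypothetical sample: 5 fields
density, pct = vessel_metrics([12, 9, 15, 11, 13], 0.3, [5000] * 5, [100000] * 5)
print(f"{density:.1f} vessels/mm², {pct:.1f}% CD31+ area")
```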

Protocol for Perfusion Imaging (if applicable):

- Inject fluorescent lectin (e.g., Lycopersicon esculentum) intravenously prior to sacrifice.

- Harvest tissue, image whole mounts or sections via confocal microscopy.

- Quantify total fluorescence intensity or perfused vessel length per volume.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Reagent | Function in Biomaterial Studies |
|---|---|
| Live/Dead Cell Assay Kit | Provides rapid, fluorescence-based quantification of cell viability and cytotoxicity directly on biomaterial surfaces. |
| ELISA Kits (e.g., for TNF-α, IL-1β, VEGF) | Enables precise, quantitative measurement of specific inflammatory or trophic factors in supernatant or tissue homogenate. |
| AlamarBlue or MTT Assay | Colorimetric or fluorometric assays for measuring cell proliferation and metabolic activity on 2D or 3D material substrates. |
| Fluorescently-Conjugated Phalloidin | Binds to F-actin, allowing high-resolution visualization of cell morphology and cytoskeletal organization on materials. |
| Masson's Trichrome Stain Kit | Standard histological stain for differentiating collagen (blue) from muscle/cytoplasm (red), critical for fibrosis assessment. |
| Micro-CT Contrast Agent | Allows non-destructive, 3D visualization and quantification of biomaterial degradation and new bone formation in vivo. |

Figure: Experimental Workflow for a Typical Biomaterial In Vivo Study

Figure: Key Signaling Pathway in the Foreign Body Response

Technical Support Center: CAMARADES Checklist & Biomaterial Study Troubleshooting

FAQs & Troubleshooting Guides

Q1: Our in vivo biomaterial implantation study showed high efficacy, but a subsequent independent lab could not replicate our results. What might be the primary CAMARADES-related issue? A: This is a classic symptom of inadequate randomization, and of incomplete reporting of how it was done, which the CAMARADES randomization item targets. Failure to properly randomize animals to treatment/control groups introduces selection bias, inflating effect sizes. Ensure your methodology details: 1) the specific randomization method (e.g., computer-generated sequence), 2) who generated the sequence, and 3) who assigned animals to cages/groups.

Q2: Our histopathology analysis of a bone-regeneration biomaterial shows high variability, blurring the treatment effect. How can the CAMARADES framework help? A: This likely falls under the "Blinded Assessment of Outcome" item. If the pathologist assessing the slides is aware of the treatment group, confirmation bias can skew scoring. Implement a protocol where slides are coded by a third party, and the assessor is blinded to these codes until analysis is complete. This directly reduces observer bias, a key quality metric.

Q3: When performing a systematic review on hydrogel drug-delivery systems, how do I handle studies that don't report animal sex? A: Under CAMARADES, "Animal Characteristics (e.g., sex, weight)" is a key item. Omission is a major quality flaw. You must: 1) Contact the authors to request the data. 2) If unavailable, note it as a "critical reporting gap" in your review's risk-of-bias table and perform a sensitivity analysis discussing how this omission could impact the translational relevance of the findings.

Q4: Our meta-analysis shows extreme heterogeneity (I² > 80%). Which CAMARADES items should we re-examine to identify sources? A: High heterogeneity often stems from variability in study design quality. Prioritize investigating these CAMARADES items across your included studies:

- Randomization

- Blinded induction of pathology/therapy (allocation concealment)

- Blinded assessment of outcome

- Declaration of potential conflicts of interest

Stratify or subgroup your analysis based on these quality items. Low-quality studies (e.g., unblinded ones) often show larger, more variable effects.

Q5: A reviewer criticized our biomaterial biocompatibility study for not accounting for "all animals used." What does this mean? A: This refers to the reporting of animals excluded from analysis. You must provide a complete flow diagram (e.g., following the ARRIVE guidelines) accounting for every animal. If animals died or were euthanized due to surgical complications or infection, they must be reported, not silently removed. This is critical for assessing the true safety profile and operational feasibility of the intervention.

Key Experimental Protocols

Protocol 1: Implementing Blinded Randomization for Implantation Studies

- Sequence Generation: Use a software tool (e.g., GraphPad QuickCalcs, the R package blockrand) to generate a randomized allocation sequence with permuted blocks (block size 4-6).

- Concealment: Place each allocation assignment in sequentially numbered, opaque, sealed envelopes (SNOSE).

- Animal Assignment: When each animal is ready for surgery, the surgeon opens the next envelope in sequence to reveal its group assignment (e.g., "Material A" or "Sham").

- Blinding: The surgeon must not be involved in sequence generation. The outcome assessor must be unaware of the envelope number and group assignment.
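A permuted-block sequence like the one blockrand produces can also be sketched in Python for illustration. This version draws block sizes of 4 or 6 at random (a block of 5 cannot split evenly between two groups). The group names, animal count, and seed are hypothetical, and the printed list maps envelope numbers to assignments for preparing the SNOSE set.

```python
import random


def permuted_block_sequence(n_animals, groups, block_sizes, seed):
    """Allocation sequence built from randomly sized permuted blocks.

    Each block contains every group equally often (block sizes must be
    multiples of the number of groups), so group sizes stay balanced as the
    study proceeds, while random block sizes make the next assignment hard
    to predict.
    """
    rng = random.Random(seed)
    sequence = []
    while len(sequence) < n_animals:
        size = rng.choice(block_sizes)
        block = [groups[i % len(groups)] for i in range(size)]
        rng.shuffle(block)
        sequence.extend(block)
    # Truncating the final block can leave a small imbalance
    return sequence[:n_animals]


seq = permuted_block_sequence(20, ["Material A", "Sham"], [4, 6], seed=7)
for envelope, group in enumerate(seq, start=1):
    print(f"Envelope {envelope:02d}: {group}")
```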

Protocol 2: Blinded Histopathological Scoring Workflow

- Sample Coding: After tissue collection and slide preparation, a lab member not involved in scoring assigns a unique, random alphanumeric code to each slide.

- Code Log Maintenance: This person maintains a master log linking codes to animal ID and treatment group, stored securely.

- Assessment: The pathologist receives only coded slides and a scoring sheet with the codes. Scoring is performed based on pre-defined, objective criteria.

- Unblinding: After all analysis is complete, the code log is used to merge scores with group data for statistical testing.
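The coding and unblinding steps can be supported by a small script run by the lab member who holds the master log. This sketch uses Python's secrets module to draw random six-character codes; the slide tuples and scores are hypothetical, and in practice the log would be saved to a secured file rather than kept in memory.

```python
import secrets
import string

ALPHABET = string.ascii_uppercase + string.digits


def make_code_log(slides, length=6):
    """Assign each slide a unique random alphanumeric code.

    slides: list of (animal_id, group) tuples.
    Returns the master log {code: (animal_id, group)}, held only by the
    person not involved in scoring.
    """
    log = {}
    for slide in slides:
        code = "".join(secrets.choice(ALPHABET) for _ in range(length))
        while code in log:  # redraw on the (rare) collision
            code = "".join(secrets.choice(ALPHABET) for _ in range(length))
        log[code] = slide
    return log


def unblind(scores, log):
    """After all scoring is done, merge {code: score} with animal and group."""
    return [(log[code][0], log[code][1], score) for code, score in scores.items()]


slides = [("M01", "Material"), ("M02", "Control"), ("M03", "Material")]
log = make_code_log(slides)
sheet = sorted(log)  # scoring sheet: codes only, no IDs or groups
scores = {code: 2 for code in sheet}  # hypothetical pathology scores
print(unblind(scores, log))
```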

Data Presentation

Table 1: Impact of CAMARADES Checklist Items on Effect Size in Preclinical Biomaterial Studies (Meta-Analysis Data)

| CAMARADES Quality Item | Number of Studies Assessing Item | Pooled Effect Size (SMD) When Item Reported | Pooled Effect Size (SMD) When Item Not Reported/Used | P-value for Subgroup Difference |
|---|---|---|---|---|
| Randomization | 142 | 0.85 (CI: 0.72, 0.98) | 1.45 (CI: 1.21, 1.69) | P < 0.001 |
| Blinded Induction | 128 | 0.91 (CI: 0.78, 1.04) | 1.38 (CI: 1.10, 1.66) | P = 0.002 |
| Blinded Assessment | 155 | 0.88 (CI: 0.76, 1.00) | 1.52 (CI: 1.28, 1.76) | P < 0.001 |
| Sample Size Calculation | 45 | 0.75 (CI: 0.58, 0.92) | 1.20 (CI: 0.95, 1.45) | P = 0.003 |
| Conflict of Interest Statement | 167 | 0.95 (CI: 0.84, 1.06) | 1.41 (CI: 1.15, 1.67) | P = 0.008 |

SMD: Standardized Mean Difference. CI: 95% Confidence Interval. Data synthesized from recent systematic reviews in neural, bone, and cardiac biomaterial therapies.

Table 2: The Scientist's Toolkit: Essential Reagents for Rigorous Biomaterial Characterization

| Reagent / Material | Function in Ensuring Study Quality |
|---|---|
| PBS (Phosphate-Buffered Saline) | Control vehicle for injections/implantations; critical for distinguishing material effects from surgical/procedural effects. |
| Low-Melting-Point Agarose | For preparing tissue for standardized, reproducible sectioning in histological analysis, reducing technical variability. |
| DAPI (4',6-diamidino-2-phenylindole) | Nuclear counterstain for fluorescence microscopy; enables blinded, quantitative cell counting (e.g., for inflammation). |
| ISO 10993 Reference Control Materials (e.g., HDPE as negative control, latex as positive control) | Essential for validating biocompatibility assays such as cytotoxicity testing, as required by regulatory standards. |
| Pre-specified Statistical Analysis Plan (SAP) Template | Not a wet reagent, but a critical tool. Documenting analysis choices a priori prevents data dredging and p-hacking. |
| Animal Identification Microchips | Ensures unique, permanent identification for reliable longitudinal tracking and data linkage, supporting complete accounting of all animals. |

Visualizations

Figure: Study Quality Impact on Translation Pathway

Figure: Rigorous In Vivo Biomaterial Experiment Workflow

Technical Support Center: Troubleshooting & FAQs

This support center is designed to help researchers address common experimental challenges in biomaterials research, framed within the context of improving study quality and reproducibility as per the CAMARADES (Collaborative Approach to Meta-Analysis and Review of Animal Data from Experimental Studies) checklist. The following guides address specific, actionable issues.

FAQs: Biocompatibility Testing

Q1: During in vitro cytotoxicity testing (e.g., ISO 10993-5), my negative control (e.g., polyethylene) shows unexpected cytotoxicity. What could be wrong?

- A: This indicates a fundamental protocol or reagent issue. First, check your extraction conditions: excessive temperature or duration can degrade even inert materials and leach oligomers. Second, ensure your cell culture reagents (serum, media) are not contaminated. Third, confirm that your assay reagents (e.g., MTT, AlamarBlue) are fresh and properly stored. A systematic negative-control failure means baseline assay validity is compromised, so results from that run cannot be interpreted, however carefully randomization and blinded outcome assessment were implemented.

Q2: My in vivo implantation shows excessive inflammatory response compared to literature for a similar material. How should I investigate?

- A: Follow a tiered diagnostic approach. First, rule out sterilization problems: was your sterilization method (e.g., autoclave, ethanol, gamma irradiation) appropriate and validated for the polymer? Some methods induce surface degradation. Second, analyze degradation byproducts: accelerated degradation in vivo can create an acidic, pro-inflammatory local environment. Third, assess mechanical mismatch: if the implant modulus differs vastly from that of the host tissue, the resulting friction can amplify the foreign body reaction. Documenting this investigation supports complete, transparent outcome reporting.

FAQs: Degradation Profiling

Q3: The in vitro degradation rate of my polyester scaffold (e.g., PLGA) in PBS is much slower than in my animal model. Why is this mismatch occurring?

- A: PBS degradation models only hydrolysis. In vivo degradation also involves enzymatic activity (e.g., esterases), cellular activity (phagocytosis), and dynamic mechanical stress. Your in vitro protocol may lack relevant enzymes or fail to simulate physiological loading. Consider using enzyme-containing buffers (e.g., with esterase or lysozyme) or a bioreactor that applies cyclic strain. This relates to the checklist's concern with using an appropriate, valid model: your test system should reflect the in vivo environment it is meant to predict.

Q4: How do I distinguish between surface erosion and bulk erosion experimentally?

- A: Use a combination of techniques. Track mass loss over time versus molecular weight (Mw) loss. Bulk erosion (common in PLGA): Mw drops significantly before substantial mass loss occurs. Surface erosion (common in polyanhydrides): Mass loss is linear, and the core Mw remains largely unchanged. See Table 1 for a methodological summary.
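The mass-versus-Mw logic above can be expressed as a simple rule over matched time series. This is a rough heuristic, not a standard classification: the 30-point Mw and 10-point mass thresholds are hypothetical and should be chosen for each polymer system, and the example profiles are invented for illustration.

```python
def erosion_pattern(mass_pct, mw_pct, mw_drop=30.0, mass_drop=10.0):
    """Classify matched mass and Mw profiles (% of initial value).

    Bulk erosion: Mw falls by at least mw_drop points while mass loss is
    still below mass_drop points. Surface erosion: mass falls by at least
    mass_drop points while Mw stays within mw_drop points of its start.
    Time points are scanned in order; the first decisive one wins.
    """
    for mass, mw in zip(mass_pct, mw_pct):
        mw_lost, mass_lost = 100 - mw, 100 - mass
        if mw_lost >= mw_drop and mass_lost < mass_drop:
            return "bulk-erosion pattern"
        if mass_lost >= mass_drop and mw_lost < mw_drop:
            return "surface-erosion pattern"
    return "indeterminate"


# Hypothetical profiles at matched time points
print(erosion_pattern([99, 98, 96, 60], [85, 55, 30, 15]))  # → bulk-erosion pattern
print(erosion_pattern([90, 80, 70], [98, 97, 95]))          # → surface-erosion pattern
```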

FAQs: Functional Performance Testing

Q5: The seeded cells on my 3D scaffold aggregate in clumps rather than distributing evenly. How can I improve cell seeding efficiency and homogeneity?

- A: This is often due to poor scaffold wettability or inadequate seeding technique. Pre-wet the scaffold using ethanol gradient hydration or apply vacuum infiltration. Use dynamic seeding methods (e.g., orbital shaker, perfusion bioreactor) instead of static seeding. Optimize the cell seeding density, and increase the viscosity of the seeding medium with a low percentage of methylcellulose. Inconsistent seeding introduces high inter-sample variability, which undermines the assumptions behind your sample size calculation and reduces statistical power.

Q6: My electrically conductive neural scaffold shows inconsistent performance across batches in stimulating neuron differentiation. What should I check?

- A: Focus on material characterization consistency. Batch-to-batch variations in conductivity, surface topography, or residual solvent can drastically alter cellular response. For each batch, characterize: 1) surface chemistry (XPS), 2) conductivity (4-point probe), 3) topography (SEM/AFM). Functional batch release criteria are essential. Controlling these properties keeps the independent variable (scaffold properties) consistent across batches, which any between-group or dose-response comparison depends on.

Data Presentation

Table 1: Techniques for Characterizing Biomaterial Degradation Modes

| Characterization Method | What It Measures | Indication of Bulk Erosion | Indication of Surface Erosion | Standard Protocol Reference |
|---|---|---|---|---|
| Gel Permeation Chromatography (GPC) | Change in polymer molecular weight (Mw) over time. | Rapid, early drop in Mw. | Mw of the material core remains high until late stages. | ASTM D6579-11. Samples dried, dissolved in THF, compared to polystyrene standards. |
| Mass Loss Profiling | Remaining dry mass of the material over time. | Lag phase followed by rapid mass loss. | Linear, time-proportional mass loss. | ISO 13781. Samples washed, lyophilized, weighed. Performed in triplicate. |
| Scanning Electron Microscopy (SEM) | Surface and cross-sectional morphology. | Porosity increases throughout the bulk, surface may crack. | Clearly visible thinning of material walls, uniform recession. | Samples sputter-coated with gold/palladium, imaged at multiple time points. |
| pH Monitoring of Degradation Medium | Accumulation of acidic degradation byproducts. | Sudden drop in pH at later time points. | Gradual, sustained decrease in pH. | Use a calibrated pH meter; medium should be refreshed at set intervals to mimic clearance. |

Experimental Protocols

Protocol 1: Standardized In Vitro Hydrolytic Degradation (Based on ISO 13781)

Objective: To determine the mass loss and molecular weight change of a polymeric solid implant material under simulated hydrolytic conditions.

Materials: Test specimens, Phosphate Buffered Saline (PBS, pH 7.4 ± 0.2), sodium azide (0.02% w/v), orbital shaking incubator, lyophilizer, analytical balance, GPC system.

Method:

- Preparation: Cut specimens to specified dimensions (e.g., 10mm x 10mm x 1mm). Record initial dry mass (W₀) and initial molecular weight (Mw₀).

- Immersion: Place each specimen in a sealed vial with 10-20x its volume of PBS containing 0.02% sodium azide to prevent microbial growth.

- Incubation: Place vials in an orbital shaking incubator (37°C ± 1°C, 60 rpm).

- Sampling: At predetermined time points (e.g., 1, 4, 12, 26 weeks), remove samples in triplicate.

- Analysis: Rinse samples thoroughly with deionized water, lyophilize to constant weight, and record dry mass (Wₜ). Calculate the percentage of mass remaining:

$$\text{Mass remaining (\%)} = \frac{W_t}{W_0} \times 100$$

- GPC Analysis: Dissolve dried samples in an appropriate solvent (e.g., THF for PLGA), filter, and analyze via GPC to determine Mw at time t (Mwₜ).
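As a sketch of the calculation in the Analysis and GPC steps, the snippet below computes percentage mass remaining and percentage Mw retained for triplicate specimens at one time point and reports mean ± SD. The specimen values are made-up placeholders, not data.

```python
from statistics import mean, stdev


def percent_remaining(initial, current):
    """Percentage of the initial value remaining, e.g. (Wt / W0) * 100."""
    return current / initial * 100.0


# Placeholder triplicate values at one time point: (W0 mg, Wt mg, Mw0 kDa, Mwt kDa)
samples = [
    (25.0, 24.1, 80.0, 52.0),
    (24.6, 23.9, 80.0, 49.5),
    (25.3, 24.2, 80.0, 51.0),
]

mass_pct = [percent_remaining(w0, wt) for w0, wt, _, _ in samples]
mw_pct = [percent_remaining(mw0, mwt) for _, _, mw0, mwt in samples]

print(f"Mass remaining: {mean(mass_pct):.1f} ± {stdev(mass_pct):.1f} %")
print(f"Mw retained:    {mean(mw_pct):.1f} ± {stdev(mw_pct):.1f} %")
```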

Protocol 2: Direct Contact Cytotoxicity Test (Based on ISO 10993-5)

Objective: To assess the cytotoxic potential of a biomaterial using a direct contact assay with mammalian cells.

Materials: L929 fibroblast cells, complete cell culture medium, test material (sterile, sized per the standard), negative control (HDPE film), positive control (latex or tin-stabilized PVC), multi-well plates, incubator, inverted microscope, viability assay kit (e.g., MTT).

Method:

- Cell Seeding: Seed L929 cells in a multi-well plate to achieve 80-90% confluency after 24 hours of growth.

- Application: Carefully place sterile test material, negative control, and positive control directly onto the cell monolayer in separate wells. Ensure direct, uniform contact.

- Incubation: Incubate plates for 24 ± 2 hours at 37°C in a humidified 5% CO₂ atmosphere.

- Assessment:

- Microscopic Evaluation: Observe cells under an inverted microscope around the material edges. Score reactivity (0-4) based on cell lysis, detachment, and morphology.

- Quantitative Assay: Remove material, perform MTT assay per manufacturer's instructions. Measure absorbance. Calculate cell viability relative to negative control.

- Interpretation: A reduction in viability of more than 30% relative to the negative control (i.e., viability below 70%) indicates cytotoxic potential.
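The quantitative assessment and interpretation steps reduce to a short calculation. This Python sketch assumes blank wells (medium plus MTT reagent, no cells) are used to correct absorbances, which is common practice but should follow your kit's instructions; the 70% cutoff mirrors the >30% reduction criterion stated above. Absorbance values are placeholders.

```python
from statistics import mean


def percent_viability(test_od, negative_od, blank_od):
    """Blank-corrected viability of test wells relative to the negative control (%)."""
    blank = mean(blank_od)
    test_signal = mean(test_od) - blank
    negative_signal = mean(negative_od) - blank
    if negative_signal <= 0:
        raise ValueError("Negative-control absorbance must exceed the blank")
    return test_signal / negative_signal * 100.0


def has_cytotoxic_potential(viability_pct, cutoff=70.0):
    # A >30% reduction versus the negative control means viability below 70%
    return viability_pct < cutoff


if __name__ == "__main__":
    v = percent_viability(test_od=[0.44, 0.46, 0.45],
                          negative_od=[0.84, 0.86, 0.85],
                          blank_od=[0.05, 0.05, 0.05])
    print(f"Viability: {v:.1f}% -> cytotoxic potential: {has_cytotoxic_potential(v)}")
```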

Diagrams

Diagram 1: Biocompatibility Assessment Cascade

Diagram 2: Hydrolytic vs. Enzymatic Degradation Pathways

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Primary Function | Key Consideration for Biomaterial Studies |
|---|---|---|
| AlamarBlue / MTT / WST-8 Assay Kits | Measure cell viability, proliferation, and cytotoxicity in vitro. | Choose based on material interference; some scaffolds can reduce tetrazolium salts (MTT, WST-8) or resazurin (AlamarBlue), causing false positives. Pre-test for interference. |
| Phosphate Buffered Saline (PBS) with Azide | Standard medium for in vitro hydrolytic degradation studies. | Sodium azide (0.02%) prevents microbial growth over long-term studies. Ensure pH is 7.4 ± 0.2. |
| Lysozyme & Esterase Enzymes | Model the enzymatic component of in vivo degradation (esterases for polyesters such as PLA/PLGA; lysozyme for polysaccharides such as chitosan). | Use at physiological concentrations (e.g., lysozyme ~15 µg/mL in PBS). Enzyme activity must be verified, and the enzyme replenished regularly. |
| Paraformaldehyde (4%), Glutaraldehyde | Fixatives for histology and SEM preparation of tissue-scaffold constructs. | Glutaraldehyde provides superior cross-linking for SEM but induces tissue autofluorescence. 4% PFA is standard for immunohistochemistry. |
| Type I Collagenase / Dispase Enzymes | Digest extracellular matrix to retrieve cells from explanted scaffolds for flow cytometry or PCR. | Optimization of digestion time and enzyme concentration is critical to preserve cell surface markers and RNA integrity. |
| Fluorophore-Conjugated Antibodies (e.g., CD68, CD206) | Identify and differentiate macrophage phenotypes (M1 pro-inflammatory vs. M2 pro-healing) on explants. | Must include isotype controls and FMOs (Fluorescence Minus One) for accurate gating in flow cytometry. |

Step-by-Step Implementation: How to Apply the CAMARADES Checklist to Your Biomaterial Study Design

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: What is the CAMARADES checklist, and why is it critical for biomaterial studies? The CAMARADES checklist is a framework for ensuring quality and reducing bias in preclinical animal research. For biomaterial studies, which often involve complex interventions like scaffolds or implants, it is critical because it standardizes reporting on items such as randomization, blinding, sample size calculation, and animal characteristics. This improves the translational potential of your findings to clinical applications.

FAQ 2: How do I implement proper randomization for biomaterial implantation surgeries?

- Issue: Unequal assignment of animals to treatment/control groups leads to selection bias.

- Solution: Use a computer-generated random number sequence prepared before surgery. Do not assign animals to groups by weight, activity, or order of handling; if weight is a known confounder, use it as a stratification factor and randomize within strata. The sequence should be prepared by a researcher not performing the surgeries and placed in sealed, opaque envelopes. For biomaterial studies, pre-assign material batches to animal codes so that batch variability cannot confound the results.

FAQ 3: How can blinding be maintained when the treatment group receives a visible implant?

- Issue: The surgeon and outcome assessor can visually identify the intervention group.

- Solution: Implement a sham surgery for the control group that mimics all steps (e.g., incision, tissue exposure) except implantation of the biomaterial. The biomaterial and any necessary vehicles should be prepared by an independent researcher and provided in identically appearing, coded syringes or containers. Post-operative assessments (e.g., gait analysis, histological scoring) must be performed by an assessor blinded to the group codes.

FAQ 4: What are the key inclusion criteria for animals in a biomaterial osteointegration study?

- Issue: Inconsistent animal models lead to unreliable data.

- Solution: Pre-define and report all criteria in your protocol. Key items include species, strain, sex, age/weight range, specific pathogen-free status, and a detailed health assessment prior to inclusion. For bone studies, consider and report baseline bone mineral density if relevant.

FAQ 5: How do I calculate an appropriate sample size for a novel biomaterial efficacy study?

- Issue: Underpowered studies produce inconclusive results.

- Solution: Perform an a priori sample size calculation using a relevant primary outcome measure (e.g., bone volume/total volume from micro-CT). You will need an estimate of the expected effect size (from pilot data or literature) and the acceptable alpha and beta error rates (typically 0.05 and 0.20). Account for potential attrition (e.g., post-surgical complications).
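A minimal sketch of an a priori calculation for comparing two group means is shown below. It uses the normal-approximation formula n = 2(z₁₋α/₂ + z₁₋β)² / d², where d is the standardized effect size (difference in means divided by the pooled SD from pilot data or literature), and then inflates n for expected attrition. The normal approximation gives slightly smaller numbers than exact t-test calculations such as those in G*Power, so treat the result as a lower bound. The pilot values in the example are hypothetical.

```python
import math
from statistics import NormalDist


def cohens_d(mean_treatment, mean_control, pooled_sd):
    """Standardized effect size from pilot or literature values."""
    return (mean_treatment - mean_control) / pooled_sd


def n_per_group(d, alpha=0.05, power=0.80, attrition=0.0):
    """Approximate animals per group for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * (z_{1-alpha/2} + z_{1-beta})**2 / d**2,
    rounded up, then inflated so that n animals remain after expected attrition.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)  # power = 1 - beta
    n = math.ceil(2 * (z_alpha + z_beta) ** 2 / d ** 2)
    return math.ceil(n / (1 - attrition))


if __name__ == "__main__":
    # Hypothetical pilot BV/TV values: 35% vs 25%, pooled SD 8%
    d = cohens_d(35.0, 25.0, 8.0)
    print(n_per_group(d), n_per_group(d, attrition=0.10))
```

With these placeholder values (d = 1.25) the sketch returns 11 animals per group, or 13 after allowing for 10% attrition.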

FAQ 6: How should I handle and report outcome data from animals that received a defective implant?

- Issue: Excluding data can introduce bias.

- Solution: Pre-define objective technical failure criteria in your protocol (e.g., implant dislodgement within 24 hours due to surgical error, clear post-operative infection). Data from animals meeting these criteria should be excluded from analysis, but the number and reason for exclusion must be fully reported. Data from animals with a properly implanted device that simply shows poor performance must not be excluded.
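One way to keep exclusions honest is to encode the pre-registered technical-failure criteria and refuse any exclusion reason that is not on that list. In the Python sketch below, the criterion labels and animal records are hypothetical; animals whose implants were placed correctly but performed poorly carry no failure code and therefore always stay in the analysis set.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

# Pre-registered technical-failure criteria (hypothetical labels; use your protocol's wording).
PREDEFINED_FAILURES = {"implant_dislodged_within_24h", "postoperative_infection"}


@dataclass
class Animal:
    animal_id: str
    group: str
    failure: Optional[str] = None  # None = implant placed correctly, no technical failure


def split_for_analysis(animals):
    """Separate analysable animals from those meeting a pre-defined failure criterion."""
    analysed, excluded = [], []
    for a in animals:
        if a.failure is None:
            analysed.append(a)  # poor performers stay in the analysis
        elif a.failure in PREDEFINED_FAILURES:
            excluded.append(a)
        else:
            raise ValueError(f"{a.animal_id}: '{a.failure}' is not a pre-defined criterion")
    return analysed, excluded


def exclusion_report(excluded):
    """Counts of exclusions by (group, reason), for full reporting in the paper."""
    return Counter((a.group, a.failure) for a in excluded)


if __name__ == "__main__":
    cohort = [Animal("R01", "Implant"), Animal("R02", "Implant", "postoperative_infection"),
              Animal("R03", "Control")]
    kept, dropped = split_for_analysis(cohort)
    print(len(kept), dict(exclusion_report(dropped)))
```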

Data Presentation

Table 1: Core CAMARADES Items for Biomaterial Studies & Implementation Rates from a 2023 Systematic Review

Data sourced from a review of 100 recent preclinical studies of biomaterials for bone regeneration.

| CAMARADES Item | Description | Reported in Studies (%) |
|---|---|---|
| Peer-Reviewed Protocol | Study plan published or registered beforehand. | 15% |
| Sample Size Calculation | Justification of animal numbers with statistical methods. | 22% |
| Randomization | Random allocation to treatment/control. | 58% |
| Blinded Assessment | Outcome evaluator unaware of treatment group. | 47% |
| Animal Model Details | Species, strain, sex, weight, etc. | 95% |
| Surgical Details | Anesthesia, analgesia, aseptic technique. | 88% |
| Biomaterial Characterization | Physical/chemical properties reported. | 91% |
| Conflict of Interest | Potential sources of bias declared. | 65% |

Experimental Protocols

Protocol: Randomized, Blinded Evaluation of a Novel Hydrogel for Cartilage Repair in a Rat Model

1. Study Design & Randomization

- Design: Randomized, controlled trial with two groups: (1) Experimental hydrogel implant, (2) Empty-defect control (defect created, no hydrogel implanted).

- Randomization: Generate the allocation sequence for N animals using =RAND() in Excel (assign a random number to each group label, then sort by that column). Place assignments in sequentially numbered, opaque, sealed envelopes. An independent lab member prepares numbered, identical syringes (filled with hydrogel, or empty for the control group) based on the list.
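The Excel approach (a =RAND() value next to each group label, then sort by that column) can be reproduced in a script so that the sequence is auditable. This Python sketch assumes equal group sizes and a recorded seed; the envelope codes (`R01`, `R02`, ...) and group labels are illustrative. Whoever generates the list should not be the surgeon, and the seed should be stored with the concealed allocation key.

```python
import random


def allocate(n_animals, groups=("Hydrogel", "Control"), seed=20240101):
    """Balanced random allocation, mirroring Excel's 'label + =RAND(), then sort' method.

    Returns {envelope_code: group}. The seed makes the sequence reproducible and
    must be kept with the concealed allocation key.
    """
    if n_animals % len(groups):
        raise ValueError("n_animals must be divisible by the number of groups")
    labels = [g for g in groups for _ in range(n_animals // len(groups))]
    rng = random.Random(seed)
    keyed = [(rng.random(), label) for label in labels]  # the '=RAND()' column
    keyed.sort()                                         # sort by the random column
    return {f"R{i:02d}": label for i, (_, label) in enumerate(keyed, start=1)}


if __name__ == "__main__":
    for code, group in allocate(40).items():
        print(code, group)
```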

2. Animal Model & Surgery

- Animals: 40 male Sprague-Dawley rats, 12 weeks old, weight 300-325g.

- Anesthesia/Analgesia: Induce with 5% isoflurane, maintain with 2-3% in O₂. Pre-operative buprenorphine SR (1.0 mg/kg SC).

- Surgical Procedure (Blinded Surgeon): Aseptic preparation of knee. Medial parapatellar incision. Patellar dislocation. Creation of a standardized full-thickness chondral defect (1.8mm diameter) in the trochlear groove. For Group 1, defect filled with assigned hydrogel. For Group 2, defect left empty. Closure in layers.

3. Blinded Outcome Assessment (8 weeks post-op)

- Macroscopic Scoring: Performed by two independent, blinded scorers using the ICRS visual scale.

- Histology: Sagittal sections stained with Safranin-O/Fast Green. Blinded scoring using the O'Driscoll scale.

4. Statistical Analysis

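- Compare the two groups at 8 weeks with a two-sided Mann-Whitney U test for the ordinal scores (ICRS, O'Driscoll) or an unpaired t-test for continuous, approximately normal outcomes; a two-way ANOVA with Tukey's post-hoc test applies only if a second factor (e.g., multiple time points) is added. Assess inter-scorer reliability with the intraclass correlation coefficient (ICC). Set significance at p < 0.05.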
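For the inter-scorer reliability step, a common choice for independent scorers is ICC(2,1) (two-way random effects, absolute agreement, single rater, as defined by Shrout and Fleiss). The NumPy sketch below computes it from a subjects × raters score matrix. Which ICC form your protocol specifies is an assumption you should state explicitly, since the forms give different values; the example scores are placeholders.

```python
import numpy as np


def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    `scores` is an (n_subjects, k_raters) array, e.g. O'Driscoll scores from two scorers.
    """
    x = np.asarray(scores, dtype=float)
    n, k = x.shape
    grand = x.mean()
    ss_rows = k * np.sum((x.mean(axis=1) - grand) ** 2)  # between subjects
    ss_cols = n * np.sum((x.mean(axis=0) - grand) ** 2)  # between raters
    ss_error = np.sum((x - grand) ** 2) - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return float((ms_rows - ms_error)
                 / (ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n))


if __name__ == "__main__":
    # Placeholder scores: 6 specimens x 2 blinded scorers
    scores = [[18, 17], [12, 13], [20, 20], [9, 11], [15, 15], [22, 21]]
    print(f"ICC(2,1) = {icc_2_1(scores):.2f}")
```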

Diagrams

Diagram 1: CAMARADES Protocol Development Workflow

Diagram 2: Biomaterial Study Blinding Workflow

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Preclinical Biomaterial Testing

| Item | Function in Experiment | Example/Supplier |
|---|---|---|
| Injectable Hydrogel (Test Article) | The biomaterial under investigation; provides scaffold for cell infiltration/tissue regeneration. | Custom-engineered PEG-based hydrogel. |
| Sham Control Vehicle | Inert carrier solution identical in appearance/viscosity to the test article; enables blinding. | Phosphate-Buffered Saline (PBS). |
| Buprenorphine SR | Extended-release analgesic for post-operative pain management, reducing animal stress and confounding. | ZooPharm, 1.0 mg/kg subcutaneous. |
| Isoflurane | Volatile inhalation anesthetic for induction and maintenance of surgical anesthesia. | Patterson Veterinary. |
| Safranin-O / Fast Green Stain | Histological dyes for proteoglycan (red) and collagen (green) visualization in cartilage/bone. | Sigma-Aldrich, Kit #S8884. |
| Micro-CT Imaging Agent | Contrast solution (e.g., iodine- or phosphotungstic acid-based) for enhanced visualization of soft biomaterial boundaries. | Imaged on a micro-CT system (e.g., Scanco Medical AG). |
| Blinding Kits | Opaque, numbered containers/syringes for allocating test/control materials. | Custom 3D-printed or commercial. |
| Statistical Power Analysis Software | To perform a priori sample size calculation (e.g., G*Power, PASS). | G*Power (Free). |

Technical Support Center: Troubleshooting Guides & FAQs

This support center addresses common experimental challenges in implementing the CAMARADES checklist items for Randomization and Blinding in preclinical biomaterial studies. These practices are critical for minimizing bias and enhancing the translational value of research.

FAQs on Randomization (Item 1)

Q1: How do I practically randomize animal subjects when testing a novel hydrogel for bone repair, given that litter, weight, and sex can all influence outcomes?

A: Use a stratified block randomization protocol. First, stratify your animal pool by critical confounding variables (e.g., sex, litter). Then, within each stratum, use computer-generated random number sequences to assign subjects to control or treatment groups in blocks. This ensures balanced group numbers and controls for known confounders.

- Troubleshooting: If the groups appear unbalanced on a key variable after allocation (e.g., mean weight), check whether that variable was included as a stratification factor and whether the strata were applied correctly. Use dedicated software (e.g., Research Randomizer, Excel RAND() with sorting) rather than manual methods.

Q2: What is the best method to randomize the surgical location (e.g., left vs. right femur) in a bilateral implant model?

A: Implement a pre-defined, computer-generated randomization schedule. The assignment (e.g., "Left leg: treatment hydrogel; Right leg: control") should be sealed in opaque envelopes opened by the surgeon only after the animal is anesthetized and prepared for surgery.

- Troubleshooting: Avoid alternating patterns (L, R, L, R...). If the surgeon knows the sequence, blinding is compromised. Always conceal the sequence until the last possible moment.

Q3: Our biomaterial is batch-dependent. How do we randomize across material batches?

A: Incorporate batch as a stratification factor. If possible, pre-mix batches to create a homogeneous supply. If not, ensure each treatment group receives material from every batch in equal proportion, as dictated by the randomization schedule.

FAQs on Blinding (Item 2)

Q4: How can we blind the surgeon if the control (sham surgery) and the test biomaterial implant look physically different?

A: Utilize a two-surgeon model. Surgeon A, unblinded, prepares the materials in identical, coded syringes or containers. Surgeon B, blinded, performs the procedure using the pre-prepared kit. The key is making the intraoperative presentation of treatment and control indistinguishable.

- Troubleshooting: If a two-surgeon model is impossible, use a neutral third party to prepare the coded kits. Validate blinding by asking the surgeon to guess the treatment at the end of each procedure; success is indicated by a guess rate at chance level (~50%).
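The blinding check in the troubleshooting note can be evaluated with an exact binomial test of the surgeon's correct guesses against the 50% expected by chance. This standard-library Python sketch is illustrative and the guess counts are hypothetical; with only a handful of procedures, a non-significant result is weak evidence that blinding held.

```python
from math import comb


def binomial_two_sided_p(correct, n, p=0.5):
    """Exact two-sided binomial test: probability of an outcome at least as unlikely as observed."""
    probs = [comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n + 1)]
    observed = probs[correct]
    # Small relative tolerance so outcomes with equal probability are not lost to rounding.
    return min(1.0, sum(q for q in probs if q <= observed * (1 + 1e-7)))


if __name__ == "__main__":
    # Hypothetical: surgeon guessed the allocation correctly in 14 of 20 procedures
    print(f"p = {binomial_two_sided_p(14, 20):.3f}")
```

For 14 correct guesses out of 20, the sketch gives p ≈ 0.115, so the guess rate is not distinguishable from chance at α = 0.05.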

Q5: What are effective strategies for blinding during histological scoring of tissue response to a polymer scaffold?

A: All identifying information (group ID, slide label) must be obscured. Use a lab member not involved in surgery or grouping to re-label all slides with a random numerical code. Use digital scanning and randomize the order of images for scoring. Ensure scoring criteria are strictly objective and defined in a protocol before analysis begins.

- Troubleshooting: If the biomaterial has a unique visual signature (e.g., fluorescent microparticles), consider alternative staining protocols that mask this signature for initial blinded scoring. A separate, confirmatory analysis can then assess material integration.

Q6: Who should remain blinded, and until when?

A: Blinding should ideally extend to all individuals involved in post-procedure care, outcome assessment (behavioral, histological, biochemical), and data analysis. The blinding code should be broken only after the dataset is locked and the final statistical analysis is complete.

- Troubleshooting: Maintain a secure, password-protected log of the randomization/blinding code. Physical copies should be in sealed, signed, and dated envelopes.

Summarized Data from Key Studies

Table 1: Impact of Randomization & Blinding on Effect Size in Preclinical Biomaterial Studies (Meta-Analysis Data)

| Study Type | Number of Studies Analyzed | Median Effect Size (Unblinded/Unrandomized) | Median Effect Size (Blinded/Randomized) | Reported Reduction in Effect Size |
|---|---|---|---|---|
| Bone Graft Substitute Efficacy | 127 | 2.1 (SMD*) | 1.4 (SMD*) | 33% |
| Nerve Conduit Performance | 58 | 1.8 (SMD*) | 1.2 (SMD*) | 34% |
| Drug-Eluting Stent Patency | 89 | 1.9 (Risk Ratio) | 1.5 (Risk Ratio) | 21% |

*SMD: Standardized Mean Difference. Data synthesized from systematic reviews adhering to CAMARADES criteria.

Table 2: Common Flaws and Solutions in Biomaterial Study Design

| CAMARADES Item | Common Flaw in Biomaterials Research | Practical Solution |
|---|---|---|
| Randomization | "Animals were randomly assigned" without detail. | Specify: "Stratified by weight, block size of 4, computer-generated." |
| Blinding | "Assessment was performed blinded." | Specify: "Histologic slides were coded by a technician not involved in surgery. The scorer was blinded to group identity until analysis was complete." |
| Sample Size Calc. | Not reported. | Perform a power analysis based on pilot data of primary outcome (e.g., new bone volume) and report parameters. |

Experimental Protocols

Protocol 1: Stratified Block Randomization for a Rat Calvarial Defect Study

Objective: To randomly assign rats to a new bioceramic graft material or a standard-of-care control graft.

- Stratification: List all rats by sex and weight (e.g., <300g, 300-350g, >350g).

- Block Generation: Using statistical software, generate a randomization sequence with a block size of 4 (e.g., AABB, ABAB, BBAA, etc., where A=Control, B=Treatment) for each stratum.

- Assignment: Sequentially assign each rat within a stratum to the next letter in that stratum's sequence upon enrollment.

- Concealment: Place group assignment (A/B) into sequentially numbered, opaque, sealed envelopes. The surgeon opens the envelope after anesthetic induction.
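The block-generation step can be scripted so each stratum gets its own reproducible sequence. This Python sketch assumes a block size of 4 with two A (control) and two B (treatment) entries per block, and hypothetical stratum labels combining sex and weight class. Shuffling the block makes each of the six possible arrangements (AABB, ABAB, ABBA, BAAB, BABA, BBAA) equally likely; whole blocks are generated, so entries beyond the last enrolled animal are simply left unused.

```python
import random


def stratified_block_sequences(strata_sizes, block=("A", "A", "B", "B"), seed=2024):
    """Per-stratum allocation sequences built from independently shuffled blocks.

    strata_sizes maps a stratum label to the number of animals expected in it.
    A = control, B = treatment. Whole blocks are generated, so a sequence may be
    slightly longer than the stratum; unused trailing entries are ignored.
    """
    rng = random.Random(seed)
    sequences = {}
    for stratum, n in strata_sizes.items():
        seq = []
        while len(seq) < n:
            b = list(block)
            rng.shuffle(b)  # one random arrangement per block, e.g. ABBA
            seq.extend(b)
        sequences[stratum] = seq
    return sequences


if __name__ == "__main__":
    # Hypothetical strata combining sex and weight class
    plan = stratified_block_sequences({"M_<300g": 8, "M_300-350g": 6, "F_<300g": 4})
    for stratum, seq in plan.items():
        print(stratum, "".join(seq))
```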

Protocol 2: Blinded Histomorphometric Analysis of Implant Integration

Objective: To quantify bone-implant contact (BIC%) without bias.

- Slide De-identification: After sectioning and staining, a researcher not involved in group allocation removes all original labels.

- Re-coding: Slides are labeled with a random numeric code (e.g., from a random number table).

- Digitization & Order Randomization: Slides are scanned. The digital image files are renamed using a separate random code and presented to the analyst in a randomized order.

- Analysis: The analyst, using image analysis software (e.g., ImageJ), measures BIC% according to a pre-defined, written protocol.

- Unblinding: The code is broken only after all measurements are recorded in the master database.
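The de-identification and order-randomization steps can be sketched as follows. The slide IDs, the `S` code prefix, and the five-digit code range are hypothetical. The returned key must be held by the person who re-coded the slides, not the analyst; calling the same function a second time on the slide codes produces the separate image-file code used after scanning.

```python
import random


def blind_slides(slide_ids, seed=None):
    """Re-code slides with random numeric codes and randomize the scoring order.

    Returns (key, scoring_order): `key` maps code -> original slide ID and is kept
    by the person who re-coded the slides; `scoring_order` goes to the analyst.
    """
    rng = random.Random(seed)
    numbers = rng.sample(range(10_000, 100_000), k=len(slide_ids))  # unique codes
    key = {f"S{num}": slide for num, slide in zip(numbers, slide_ids)}
    scoring_order = list(key)
    rng.shuffle(scoring_order)
    return key, scoring_order


if __name__ == "__main__":
    slides = [f"Rat{i:02d}_{side}" for i in range(1, 4) for side in ("L", "R")]
    slide_key, _ = blind_slides(slides, seed=11)
    # Second, independent code layer for the scanned image files
    file_key, order = blind_slides(list(slide_key), seed=29)
    print(order)
```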

Visualizations

Diagram 1: Blinded Experimental Workflow for Biomaterial Implantation

Diagram 2: Stratified Randomization Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Implementing Rigorous Randomization & Blinding

| Item | Function/Description | Example Product/Technique |
|---|---|---|
| Random Number Generator | Generates unpredictable allocation sequences. Critical for avoiding systematic bias. | Research Randomizer (website), =RAND() in Excel, MATLAB randperm, GraphPad QuickCalcs. |
| Opaque Sealed Envelopes | Physical concealment of allocation to maintain blinding until point of intervention. | Numbered, tamper-evident security envelopes. |
| Coding Labels/Syringes | Allows materials to be prepared by an unblinded party and used by a blinded party. | Pre-printed numeric labels, colored tape codes, identical sterile syringes. |
| Digital Slide Scanner | Enables blinding by removing physical slide identity and allowing image randomization. | Leica Aperio, Hamamatsu NanoZoomer, or other high-resolution slide scanners. |
| Image Analysis Software | Allows objective, quantifiable measurement of outcomes per pre-set thresholds. | ImageJ/Fiji, Visiopharm, Indica Labs HALO. |
| Blinding Audit Log | A secure document to record the blinding code, ensuring it can be retrieved but not viewed prematurely. | Password-protected Excel file or physical logbook stored separately. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ: General Concepts

Q1: Within the CAMARADES framework for biomaterial studies, what does Item 6 specifically require? A: Item 6 mandates the clear definition and reporting of primary and secondary outcome measures. It emphasizes the need to justify the choice of endpoint (e.g., functional recovery vs. histological assessment) as relevant to the clinical problem the biomaterial aims to address. The timing of outcome assessment must also be explicitly reported.

Q2: What is the core distinction between functional and histological endpoints? A: Functional endpoints measure the physiological or behavioral outcome of an intervention (e.g., limb grip strength, locomotor scoring, forced swim test). Histological endpoints provide morphological or structural data (e.g., lesion volume, cell count, fibrous capsule thickness, immunofluorescence for specific markers). Functional outcomes often reflect integrated system recovery, while histological outcomes offer mechanistic insight.

Q3: My study reports both functional and histological data. Which should be my primary outcome? A: The primary outcome should be the one most directly aligned with the primary objective of your study. If the biomaterial is intended to restore function (e.g., a nerve conduit), a functional measure should be primary. If it is designed to modulate a specific cellular response (e.g., reduce inflammation), a histological/immunohistochemical measure may be primary. The choice must be pre-defined and justified in the protocol.

Troubleshooting Guide: Common Experimental Issues

Issue 1: Discrepancy between positive histological results and poor functional outcomes.

- Possible Causes: 1) The biomaterial improves local histology but does not facilitate proper integration or system-level functional circuitry. 2) The functional test is not sensitive or specific to the anatomical area targeted. 3) The timing of functional assessment is too early or too late.

- Solutions:

- Correlate histological findings with multiple functional tests at different time points.

- Ensure functional tests are validated for your specific disease/injury model.

- Consider advanced functional measures (e.g., electrophysiology, gait analysis) for more granular data.

Issue 2: High variability in subjective functional scoring (e.g., Basso, Beattie, Bresnahan (BBB) scale).

- Possible Causes: Lack of blinding, insufficient rater training, inherent subjectivity of the scale.

- Solutions:

- Implement strict blinding procedures for all experimenters conducting assessments.

- Use multiple, trained raters and report inter-rater reliability scores.

- Supplement with objective functional measures (e.g., kinematic analysis, automated gait systems).

Issue 3: Quantitative histological analysis yields inconsistent results between researchers.

- Possible Causes: Inconsistent region of interest (ROI) selection, variable thresholding for image analysis, staining batch effects.

- Solutions:

- Develop a standard operating procedure (SOP) for ROI selection and provide diagrams.

- Use automated analysis with fixed, pre-defined thresholds applied identically to all images where possible, and report all parameters.

- Process all samples for a given stain in a single batch, or use internal controls across batches.

Data Presentation: Comparison of Endpoint Types

Table 1: Characteristics of Functional vs. Histological Endpoints

| Feature | Functional Endpoints | Histological Endpoints |
|---|---|---|
| What it Measures | Integrated physiological/behavioral recovery | Morphological, cellular, or molecular structure |
| Temporal Relevance | Often later time points (weeks-months) | Can be early (days) and late (weeks-months) |
| Key Advantage | High clinical relevance; measures "real-world" benefit | Provides mechanistic insight; high spatial resolution |
| Key Limitation | Can be influenced by compensatory mechanisms; may lack specificity | May not correlate with functional improvement; destructive to tissue |
| Common Examples | Limb grip strength test, Rotarod, Hot plate test, Walking track analysis (Sciatic Function Index) | Histomorphometry, Immunohistochemistry (IHC), Stereology for cell counts, Fibrosis/collagen quantification |
| Reporting Requirement (CAMARADES) | Specify test, equipment, parameters, timing, and blinding. | Specify stain, antibodies (clones, dilutions), quantification method, ROI, and blinding. |

Experimental Protocols

Protocol 1: Grip Strength Test (Functional Endpoint)

Objective: To assess limb muscle strength and recovery in rodent models of peripheral nerve or muscle injury treated with a biomaterial.

Materials: Grip strength meter, rodent, clear plexiglass enclosure.

Procedure:

- Calibrate the grip strength meter according to manufacturer instructions.

- Allow the animal to acclimatize to the testing room for 30 minutes.

- Gently hold the animal by the tail and allow it to grasp the metal grid or T-bar with its forelimbs (or fore- and hindlimbs).

- Pull the animal steadily backwards horizontally until its grip is released.

- Record the peak force (in grams or Newtons) displayed on the meter.

- Repeat for 3-5 trials per session, allowing ~1 minute rest between trials.

- Average the trials for a single session score. Perform tests weekly for the study duration.

- The experimenter must be blinded to the treatment groups.

Protocol 2: Quantitative Histomorphometry for Nerve Regeneration (Histological Endpoint)

Objective: To quantify axon count and myelination in regenerated nerves following biomaterial conduit implantation.

Materials: Fixed nerve segments, resin embedding supplies, ultramicrotome, toluidine blue stain, light microscope with digital camera, image analysis software (e.g., ImageJ, Fiji).

Procedure:

- Fix explanted nerve segments in glutaraldehyde, post-fix in osmium tetroxide, and embed in epoxy resin.

- Cut 1-µm-thick transverse sections using an ultramicrotome and stain with toluidine blue.

- Using a light microscope at 100x oil immersion, capture 5-8 non-overlapping, representative images from the mid-conduit region per sample.

- Import images to analysis software. Calibrate the scale (µm/pixel).

- For axon count: Apply consistent thresholding to identify myelinated axons. Use particle analysis to count total axons per image. Report mean axon density (axons/mm²).

- For myelination: For each myelinated fiber, measure the axon area and the fiber area (axon + myelin). Calculate the g-ratio (axon diameter / fiber diameter) for individual fibers, using equivalent-circle diameters derived from the measured areas. Report the average g-ratio per sample.

- All analyses must be performed on coded samples by a blinded researcher.
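The morphometric quantities in this protocol follow directly from the measured areas and counts. The Python sketch below assumes areas in µm² and converts each area to an equivalent-circle diameter, so the g-ratio equals the square root of the axon-to-fiber area ratio; function names and measurement values are illustrative.

```python
import math
from statistics import mean


def equivalent_diameter(area_um2):
    """Diameter (µm) of a circle with the same area as the measured profile."""
    return 2.0 * math.sqrt(area_um2 / math.pi)


def g_ratio(axon_area_um2, fiber_area_um2):
    """Axon diameter / fiber diameter (fiber = axon + myelin); equals sqrt(A_axon / A_fiber)."""
    return equivalent_diameter(axon_area_um2) / equivalent_diameter(fiber_area_um2)


def axon_density_per_mm2(axon_count, field_area_um2):
    """Axons per mm² for one image field (1 mm² = 1e6 µm²)."""
    return axon_count / (field_area_um2 / 1e6)


if __name__ == "__main__":
    # Placeholder measurements for three myelinated fibers: (axon area, fiber area) in µm²
    fibers = [(4.0, 9.0), (3.2, 7.5), (5.1, 10.8)]
    print(f"Mean g-ratio: {mean(g_ratio(a, f) for a, f in fibers):.2f}")
    print(f"Density: {axon_density_per_mm2(150, 10_000):.0f} axons/mm²")
```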

Visualizations

Diagram 1: Endpoint Selection Workflow

Diagram 2: Biomaterial Nerve Regeneration Assessment Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Outcome Assessment in Biomaterial Studies

| Item | Function in Experiment | Example/Notes |
|---|---|---|
| Automated Gait Analysis System (e.g., CatWalk, DigiGait) | Provides objective, quantitative data on locomotion, gait dynamics, and coordination. Reduces subjectivity. | Essential for spinal cord injury, osteoarthritis, and peripheral nerve studies. |
| Digital Grip Strength Meter | Quantifies limb muscle force generation. Standard for neuromuscular function. | Ensure proper calibration and consistent pulling force angle/speed. |
| Von Frey Filaments | Assesses mechanical allodynia (sensitivity) in pain models. A key functional sensory endpoint. | Use up-down method for threshold calculation. |
| Anti-Neurofilament Antibody (e.g., NF200, clone N52) | Labels axons in histological sections for regeneration assessment. | Use for immunofluorescence or bright-field IHC. Critical for nerve studies. |
| Anti-Iba1 / Anti-CD68 Antibodies | Labels macrophages/microglia. Quantifies inflammatory response to biomaterial. | Pan-macrophage markers; combine with phenotype markers (e.g., CD206 for M2) to distinguish M1 (pro-inflammatory) from M2 (pro-healing) phenotypes. |
| Masson's Trichrome Stain Kit | Differentiates collagen (blue/green) from muscle/cytoplasm (red). Quantifies fibrosis. | Standard for assessing foreign body response and fibrous capsule thickness. |
| Stereology Software (e.g., Stereo Investigator) | Provides unbiased, quantitative cell counting in 3D tissue volumes. Gold standard for histological cell counts. | Requires specific sampling protocols but minimizes bias. |
| Open-Source Image Analysis Software (e.g., ImageJ/Fiji, QuPath) | Performs quantitative analysis on histological images (cell count, area, intensity). | Use plugins like "Analyze Particles" and "Color Deconvolution" for reproducibility. |

FAQs & Troubleshooting

Q1: My biomaterial shows efficacy in a mouse model of myocardial infarction (MI), but fails in a later rat study. What could be the primary model-related issue? A: This is a classic issue of species-specific pathophysiology. Mice and rats have fundamental differences in cardiac electrophysiology, heart rate, and coronary artery anatomy. The common surgical (LAD) ligation model may not produce an equivalent infarct size or remodeling response. Furthermore, immune responses to your biomaterial (e.g., a hydrogel) can vary drastically between species due to differences in complement activation and macrophage polarization.

Q2: For a spinal cord injury study using a hydrogel scaffold, does the choice between a C57BL/6 and a BALB/c mouse strain matter? A: Yes, critically. C57BL/6 mice are Th1-biased and generally show a more robust inflammatory response post-injury. BALB/c mice are Th2-biased. Your biomaterial's integration and the subsequent glial scar formation will be significantly influenced by this. A biomaterial designed to modulate inflammation may have opposite effects in these strains. Always pilot your disease induction (e.g., contusion, compression) in the specific strain chosen.

Q3: I am inducing osteoarthritis (OA) in rats for a biomaterial implant study. The disease progression is highly variable between animals. How can I improve consistency? A: Variability often stems from the disease induction method. Chemical induction (e.g., mono-iodoacetate) is highly sensitive to dose and injection site. Surgical methods (e.g., medial meniscal tear) depend heavily on surgeon skill.

- Troubleshooting Guide:

- Precision Dosing: Use an ultrasound-guided injector for intra-articular chemical induction.

- Surgical Standardization: Implement a detailed, step-by-step surgical protocol with the same surgeon. Use a calibrated device for meniscal damage.

- Post-Op Monitoring: Standardize weight-bearing and activity levels across cages post-surgery.

- Validation: Use weekly gait analysis (e.g., CatWalk) to track progression, and stratify animals by functional deficit (not just time post-induction) before randomized allocation to treatment groups.

Q4: How do I justify my choice of a subcutaneous implantation model in a mouse for a bone regeneration biomaterial when the reviewer asks about clinical relevance? A: You must link the model's relevance to a specific research question within the CAMARADES framework. A subcutaneous model is not relevant for testing functional bone load-bearing. However, it is highly relevant for assessing ectopic osteogenesis and the intrinsic osteoinductive potential of your biomaterial in isolation from a bone marrow environment. Frame it as Item 7: "The subcutaneous model was selected specifically to isolate the material's osteoinductive properties, a key stage in the translational pipeline before testing in a critical-sized femoral defect model."

Experimental Protocols

Protocol 1: Consistent Induction of Myocardial Infarction in C57BL/6 Mice for Biomaterial Patch Testing

- Anesthesia: Induce with 4% isoflurane, maintain with 1.5-2% via nose cone on a warming pad.

- Intubation: Perform orotracheal intubation and connect to a mini-ventilator (120 breaths/min, tidal volume ~0.2ml).

- Thoracotomy: Make a left parasternal incision between the 3rd and 4th ribs. Gently retract the ribs to expose the heart.

- LAD Ligation: Identify the left anterior descending (LAD) coronary artery. Pass a 7-0 polypropylene suture under the artery 2-3mm from the tip of the left atrium. Place a 1mm section of PE-10 tubing on top of the artery, tie the suture over the tubing, and then remove the tubing to create a controlled ischemia. Visual blanching of the anterior wall confirms success.

- Biomaterial Application: Apply the biomaterial patch (e.g., fibrin-based hydrogel) directly onto the ischemic area.

- Closure: Close the chest in layers (ribs, muscle, skin). Administer analgesia (buprenorphine, 0.1 mg/kg) pre- and post-op.
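Weight-based doses such as the buprenorphine dose above must be converted into an injection volume for each animal. A minimal sketch follows; the 25 g body weight and the 0.01 mg/mL working dilution are hypothetical values chosen for illustration, so substitute the concentration of the solution you actually prepare.

```python
def injection_volume_ml(dose_mg_per_kg, body_weight_g, stock_mg_per_ml):
    """Return the volume (mL) of drug solution that delivers a weight-based dose."""
    if body_weight_g <= 0 or stock_mg_per_ml <= 0:
        raise ValueError("body weight and stock concentration must be positive")
    dose_mg = dose_mg_per_kg * (body_weight_g / 1000.0)  # convert g to kg
    return dose_mg / stock_mg_per_ml


# Buprenorphine 0.1 mg/kg for a hypothetical 25 g mouse,
# drawn from a hypothetical 0.01 mg/mL working dilution.
volume = injection_volume_ml(0.1, 25, 0.01)
print(f"Inject {volume:.3f} mL")  # 0.1 mg/kg x 0.025 kg = 0.0025 mg -> 0.25 mL
```

Weighing each animal on the day of surgery, rather than using a group average, keeps the delivered dose consistent.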

Protocol 2: Controlled Contusion Spinal Cord Injury (SCI) in Rats for Hydrogel Injection

- Preparation: Anesthetize adult Sprague-Dawley rats (e.g., 250 g) with ketamine/xylazine (80/10 mg/kg, i.p.). Shave and sterilize the T8-T10 dorsal area. Secure in a stereotaxic frame on a heating pad.

- Laminectomy: Make a midline incision over T8-T10. Carefully dissect muscle and perform a T9 laminectomy to expose the dura.

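- Contusion Injury: Use an Infinite Horizon or MASCIS impactor. Position the impactor tip centered over the exposed cord. Set the injury parameters for the chosen device (e.g., 200 kdyn force with a 1-second dwell time on the force-controlled Infinite Horizon impactor for a moderate injury; the MASCIS impactor is a weight-drop device and is set by drop height instead). Activate the device.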

- Biomaterial Delivery: Using a Hamilton syringe mounted on a microinjector, inject your hydrogel (e.g., 10 μL total) at multiple sites (e.g., 2 mm rostral and caudal to the epicenter, 1.5 mm depth) at a slow rate (1 μL/min).

- Closure: Irrigate the area with saline. Close muscle and skin in layers. Provide postoperative care (manual bladder expression BID, antibiotics, and analgesia).
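The delivery step involves simple arithmetic that is worth fixing in the protocol before surgery: how much hydrogel goes to each site and how long the infusion takes at the stated rate. The sketch below splits the total volume evenly across sites; the even split and the function name are assumptions, not part of the original protocol.

```python
def injection_plan(total_volume_ul, sites, rate_ul_per_min):
    """Split a total hydrogel volume evenly across injection sites.

    Returns a list of (site, volume_ul, minutes) tuples and the total infusion time.
    """
    if not sites or rate_ul_per_min <= 0:
        raise ValueError("need at least one site and a positive infusion rate")
    per_site_ul = total_volume_ul / len(sites)
    minutes_per_site = per_site_ul / rate_ul_per_min
    plan = [(site, per_site_ul, minutes_per_site) for site in sites]
    return plan, minutes_per_site * len(sites)


# Values from the protocol: 10 μL total, two sites, 1 μL/min.
plan, total_min = injection_plan(10, ["2 mm rostral", "2 mm caudal"], 1.0)
for site, vol, minutes in plan:
    print(f"{site}: {vol:.1f} μL over {minutes:.1f} min")
print(f"Total infusion time: {total_min:.1f} min")
```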

Data Presentation

Table 1: Comparison of Common Species & Strains for Biomaterial Studies in Disease Models

| Disease Area | Common Species/Strain | Key Relevance for Biomaterials | Potential Pitfall |
|---|---|---|---|
| Myocardial Infarction | C57BL/6 mouse | Well-characterized immune profile; good for studying the inflammatory phase of repair. | Small heart size limits physical biomaterial delivery. |
| | Sprague-Dawley rat | Larger size allows precise biomaterial application (patch, injection). | Higher cost; stronger adaptive immune response to some materials. |
| Spinal Cord Injury | C57BL/6 mouse | Extensive availability of transgenic lines to probe mechanisms. | Small lesion size makes the injected biomaterial volume critical. |
| | Lewis rat | Inbred strain with a consistent injury response. | Limited transgenic tools compared with mice; susceptible to induced autoimmune inflammation (e.g., EAE). |
| Osteoarthritis | Hartley guinea pig | Develops OA spontaneously; good for long-term biomaterial degradation studies. | Higher cost; fewer species-specific reagents. |
| | C57BL/6 mouse (DMM model) | Surgical destabilization of the medial meniscus (DMM) allows controlled induction timing. | Requires highly skilled microsurgery. |
| Bone Defect | SD rat (femoral defect) | Defect size is suitable for screening osteoconductive materials. | Non-weight-bearing model limits functional assessment. |
| | NZW rabbit (radial defect) | Larger, load-bearing defect for testing mechanical integration. | Stronger immune response to xenogeneic components. |


The Scientist's Toolkit: Research Reagent Solutions

- Isoflurane Inhalation System: For safe, adjustable, and reversible anesthesia during survival surgeries. Critical for maintaining animal welfare during disease induction and biomaterial implantation.

- Stereotaxic Frame with Microinjector: Provides precise, repeatable targeting for intracerebral, intraspinal, or intra-articular delivery of biomaterials (hydrogels, cells).

- In Vivo Imaging System (e.g., IVIS, MRI): Allows longitudinal, non-invasive tracking of biomaterial degradation (if labeled), donor cell survival, or disease progression (e.g., tumor size, luciferase activity).

- Gait Analysis System (e.g., CatWalk, DigiGait): Provides quantitative, objective functional outcome measures for neurological, musculoskeletal, or pain-related biomaterial studies; objective, automated readouts also make blinded assessment of outcome (CAMARADES Item 5) easier to achieve.

- Polypropylene Suture (e.g., 7-0, 8-0): For precise surgical procedures like vessel ligation (MI model) or nerve crush injuries. Size and material are critical to minimize foreign body reaction.

- Controlled Impactors (e.g., for SCI, TBI): Standardize the force, depth, and dwell time of traumatic injuries, reducing inter-animal variability and thereby improving statistical power.

- Species-Specific ELISA Kits: Essential for quantifying key inflammatory cytokines (IL-1β, TNF-α, IL-10) in serum or tissue homogenates to assess the host immune response to the implanted biomaterial.

Technical Support Center: Troubleshooting & FAQs

FAQ 1: My Systematic Review Identifies High Heterogeneity in Outcome Measurements. How Do I Report This in a CAMARADES-Compliant Manner?

- Answer: High heterogeneity is a common issue. Your methods section must detail your search strategy (databases, dates, keywords) and study selection process with a PRISMA-style flow diagram. Report the statistical methods used to assess heterogeneity (e.g., I², Q-statistic). In the results, present a table of study characteristics and a dedicated table for outcome measures. Discuss sources of heterogeneity (e.g., model species, timing of intervention) as a key limitation.
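The heterogeneity statistics named above can be computed directly from each study's effect size and its variance. The sketch below uses inverse-variance (fixed-effect) weights to compute Cochran's Q and I² = max(0, (Q - df)/Q) × 100%. The effect sizes are hypothetical standardized mean differences, and in practice a dedicated meta-analysis package would also report a random-effects estimate.

```python
def heterogeneity(effects, variances):
    """Return Cochran's Q, its degrees of freedom, and I^2 (%) for a set of studies."""
    if len(effects) != len(variances) or len(effects) < 2:
        raise ValueError("need at least two studies with matching variances")
    weights = [1.0 / v for v in variances]  # inverse-variance weights
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I^2: share of total variability attributed to between-study differences.
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return q, df, i2


q, df, i2 = heterogeneity([0.5, 1.0, 1.5], [0.1, 0.1, 0.1])
print(f"Q = {q:.2f}, df = {df}, I² = {i2:.0f}%")
```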

FAQ 2: What is the Correct Way to Report Randomization and Blinding in Animal Studies for the CAMARADES Checklist?

- Answer: Simply stating "animals were randomly assigned" is insufficient. You must specify the method (e.g., random number generator, coin toss). For blinding, state who was blinded (e.g., surgeon, outcome assessor, data analyst) and how blinding was achieved (e.g., coded cage cards, coded treatment solutions). If blinding was not possible for certain aspects, explicitly state this.
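One way to make the randomization method reportable in this level of detail is to generate the allocation list with a seeded, permuted-block scheme and then report the method, block size, and seed. The sketch below shows one such scheme (it is not a CAMARADES requirement); the group labels and seed are placeholders. Keeping the list with someone not involved in surgery or assessment preserves allocation concealment.

```python
import random


def block_randomization(n_animals, groups, block_size, seed):
    """Generate a permuted-block allocation list that can be reproduced from its seed."""
    if block_size % len(groups) != 0:
        raise ValueError("block size must be a multiple of the number of groups")
    rng = random.Random(seed)
    allocation = []
    while len(allocation) < n_animals:
        block = list(groups) * (block_size // len(groups))  # equal counts per block
        rng.shuffle(block)
        allocation.extend(block)
    return allocation[:n_animals]  # a final partial block may be unbalanced


schedule = block_randomization(12, ["sham", "vehicle", "hydrogel"], block_size=6, seed=20240501)
for animal_number, group in enumerate(schedule, start=1):
    print(animal_number, group)
```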

FAQ 3: How Should I Handle and Report Animals Excluded from the Analysis?

- Answer: All exclusions must be pre-defined in your protocol (e.g., mortality due to anesthesia, failure to establish disease model). Report the number of animals excluded at each stage of the experiment (from allocation to analysis) and the reasons in the results section. A CONSORT-style flow diagram for animal studies is highly recommended.
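Keeping a per-animal exclusion log makes the numbers for the flow diagram straightforward to derive. A minimal sketch follows, with hypothetical stage names and reasons; running it once per group gives group-level counts.

```python
from collections import Counter


def flow_summary(n_allocated, stages, exclusions):
    """Count exclusions and animals remaining after each experimental stage.

    stages:     ordered stage names, e.g. ["model induction", "follow-up", "analysis"]
    exclusions: one (stage, reason) tuple per excluded animal
    """
    undefined = {stage for stage, _ in exclusions} - set(stages)
    if undefined:
        raise ValueError(f"exclusions reference undefined stages: {sorted(undefined)}")
    remaining = n_allocated
    rows = []
    for stage in stages:
        reasons = Counter(reason for s, reason in exclusions if s == stage)
        excluded = sum(reasons.values())
        remaining -= excluded
        rows.append((stage, excluded, dict(reasons), remaining))
    return rows


log = [
    ("model induction", "death under anesthesia"),
    ("model induction", "model not established"),
    ("follow-up", "humane endpoint reached"),
]
print("Allocated: 30")
for stage, excluded, reasons, remaining in flow_summary(30, ["model induction", "follow-up", "analysis"], log):
    print(f"{stage}: excluded {excluded} {reasons}; remaining {remaining}")
```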

FAQ 4: My Biomaterial Study Involves Multiple Control Groups. How Do I Justify This and Present the Data Clearly?

- Answer: Justify each control group (e.g., sham surgery, vehicle control, positive drug control, untreated disease control) in the introduction or methods. Present comparative data in a clear table. Use statistical models appropriate for multiple comparisons (e.g., ANOVA with post-hoc correction) and state the correction method used.
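When the comparisons of interest are a handful of pairwise tests (e.g., the biomaterial group against each control), the correction should be named explicitly. The sketch below implements the Holm-Bonferroni step-down adjustment as one example; Tukey's or Dunnett's procedures are common alternatives after ANOVA and are usually run in statistical software. The p-values are hypothetical.

```python
def holm_adjust(p_values):
    """Holm-Bonferroni step-down adjusted p-values, returned in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices, smallest p first
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        candidate = min(1.0, (m - rank) * p_values[i])
        running_max = max(running_max, candidate)  # adjusted p-values never decrease
        adjusted[i] = running_max
    return adjusted


# Hypothetical raw p-values: test scaffold vs. empty defect, vs. hydroxyapatite, vs. BMP-2.
raw = [0.01, 0.04, 0.03]
for p, p_adj in zip(raw, holm_adjust(raw)):
    print(f"raw p = {p:.3f}, Holm-adjusted p = {p_adj:.3f}")
```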

Data Presentation & Protocols

Table 1: CAMARADES Checklist Items & Reporting Compliance in Published Biomaterial Studies (Hypothetical Analysis)

| CAMARADES Item | Percentage Reported (n = 50 hypothetical studies) | Common Deficiencies Noted |
|---|---|---|
| Peer-reviewed publication | 100% | N/A |
| Control of temperature | 45% | Ambient temperature not stated; no monitoring. |
| Random allocation to group | 78% | Method of randomization not described. |
| Blinded assessment of outcome | 62% | Unclear which specific procedures were blinded. |
| Sample size calculation | 18% | Often omitted; "n = 6 per group" stated without justification. |
| Compliance with animal welfare regulations | 92% | Ethical permit number sometimes missing. |
| Statement of potential conflicts of interest | 85% | Some statements were vague. |
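Sample size calculation is the least-reported item in this hypothetical analysis, yet a basic calculation is short. The sketch below uses the normal approximation for a two-sided, two-group comparison of means, n = 2 × ((z_{1-α/2} + z_{1-β}) / d)² per group, where d is the expected difference divided by the SD. The effect sizes shown are assumptions; t-based calculations return slightly larger n, and d should come from pilot or published data for your outcome.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Animals per group for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * ((z_{1-alpha/2} + z_{power}) / d) ** 2,
    rounded up; d = expected difference / standard deviation.
    """
    if effect_size_d <= 0:
        raise ValueError("effect size must be positive")
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


for d in (1.0, 1.5, 2.0):
    print(f"d = {d}: {n_per_group(d)} animals per group")
```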

Experimental Protocol: Assessing Biomaterial Integration in a Rodent Bone Defect Model

- Animal Model: Use 12-week-old Sprague-Dawley rats (n=10/group). Anesthetize with isoflurane.

- Surgery: Create a 3 mm critical-sized defect in the femoral condyle using a trephine drill.

- Intervention: Implant the test biomaterial scaffold into the defect. Control groups receive an empty defect (no implant) or a standard-of-care material (e.g., hydroxyapatite).

- Randomization & Blinding: Randomize animals to groups using a computer-generated list. The surgeon is not blinded to group allocation due to material handling differences, but all subsequent histological and radiographic analyses are performed by a researcher blinded to group codes.

- Outcome Assessment (8 weeks post-op):

- Micro-CT: Scan explanted femurs. Analyze bone volume/total volume (BV/TV) and trabecular number within the defect region.

- Histology: Decalcify, section, and stain with H&E and Masson's Trichrome. Score for new bone formation, fibrosis, and inflammation using a semi-quantitative scale (0-4).

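- Statistical Analysis: Analyze continuous outcomes (e.g., BV/TV) by one-way ANOVA with Tukey's post-hoc test and present them as mean ± SD. Because the semi-quantitative histology scores are ordinal, analyze them with a nonparametric test (e.g., Kruskal-Wallis with Dunn's post-hoc test) and present them as median with interquartile range. Consider p < 0.05 significant.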
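To make the ANOVA step concrete for a continuous outcome, the sketch below computes the one-way ANOVA F statistic and the mean ± SD summary using only the Python standard library. The BV/TV values are hypothetical. Obtaining the p-value and Tukey's post-hoc comparisons requires the F and studentized range distributions, which in practice come from a statistics package (e.g., SciPy or R) rather than hand-written code.

```python
from statistics import mean, stdev


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA on lists of values."""
    values = [x for g in groups for x in g]
    grand_mean = mean(values)
    k, n = len(groups), len(values)
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical BV/TV (%) values, n = 5 per group for brevity.
bv_tv = {
    "empty defect": [8.1, 9.4, 7.6, 10.2, 8.8],
    "hydroxyapatite": [15.3, 17.1, 14.2, 16.8, 15.9],
    "test scaffold": [19.6, 21.4, 18.3, 22.0, 20.1],
}
for name, values in bv_tv.items():
    print(f"{name}: {mean(values):.1f} ± {stdev(values):.1f}")  # mean ± SD
f_stat, df_b, df_w = one_way_anova_f(list(bv_tv.values()))
print(f"F({df_b}, {df_w}) = {f_stat:.1f}")
```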

Diagrams: CAMARADES Manuscript Workflow; Biomaterial Bone Healing Pathways

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Biomaterial/Preclinical Research |
|---|---|
| Hydroxyapatite (standard control) | A calcium phosphate ceramic providing a bioactive and osteoconductive reference material for bone defect studies. |
| Poly(lactic-co-glycolic acid) (PLGA) | A biodegradable polymer used as a scaffold material or for controlled drug delivery within defects. |
| Recombinant Bone Morphogenetic Protein-2 (BMP-2) | A potent osteoinductive growth factor used as a positive control to stimulate bone formation. |
| Isoflurane | A volatile inhalational anesthetic for maintaining surgical plane anesthesia in rodent models. |
| Paraformaldehyde (4%) | A fixative for preserving tissue architecture post-explantation for histological processing. |
| Masson's Trichrome Stain Kit | Used to differentiate collagen (blue/green) from muscle/cytoplasm (red) in bone histology. |
| Micro-CT Phantom | A calibration standard containing known mineral densities for quantitative bone analysis in micro-CT. |
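The micro-CT phantom entry implies a calibration step: the mean gray value of each phantom rod is regressed against its known mineral density, and the fitted line converts specimen gray values into density. A minimal least-squares sketch follows; the rod densities and gray values are hypothetical, and a linear relationship over the calibrated range is assumed. Scanner software usually performs this step itself, so the code only makes the arithmetic explicit.

```python
def fit_calibration(gray_values, densities):
    """Ordinary least-squares line: density = slope * gray + intercept."""
    if len(gray_values) != len(densities) or len(gray_values) < 2:
        raise ValueError("need at least two phantom rods with known densities")
    n = len(gray_values)
    mean_x = sum(gray_values) / n
    mean_y = sum(densities) / n
    sxx = sum((x - mean_x) ** 2 for x in gray_values)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(gray_values, densities))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def to_density(gray, slope, intercept):
    """Convert a gray value to mineral density (mg HA/cm^3) with the fitted line."""
    return slope * gray + intercept


# Hypothetical phantom: mean gray value measured in each rod vs. its nominal density.
rod_gray = [1000, 2000, 3000]
rod_density = [250, 750, 1250]  # mg HA/cm^3
slope, intercept = fit_calibration(rod_gray, rod_density)
print(f"{to_density(2500, slope, intercept):.0f} mg HA/cm^3")
```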

Solving Common Pitfalls: Troubleshooting and Optimizing Your Biomaterial Study with CAMARADES

Overcoming Blinding Challenges in Obvious Biomaterial Implantation Studies

Technical Support Center: Troubleshooting & FAQs

Q1: In our rodent bone defect model, the implanted biomaterial (e.g., a calcium phosphate ceramic) is visually obvious during histology analysis. How can we effectively blind the outcome assessor to prevent bias in scoring new bone formation?

A: Implement a multi-step, staged blinding protocol.

- Sample De-identification: After euthanasia and specimen retrieval, a lab member not involved in outcome assessment assigns a randomized alphanumeric code to each sample. All original identifiers (group, animal ID, treatment) are stored in a separate, password-protected log.

- Processing & Sectioning: Samples are processed, embedded, and sectioned in batches organized by the random code only.

- Masking of the Implant Site: Instruct the histotechnologist to stain (e.g., H&E, Masson's Trichrome) and coverslip all slides from a batch identically, so that processing differences cannot reveal group identity. The assessor then analyzes the slides under standardized conditions (same magnification, illumination, and field selection). If the implant's morphology remains distinguishable, use a physical mask on the microscope stage or a digital overlay in image analysis software to obscure only the immediate implant region, so that scoring focuses on integration in the surrounding tissue.

- Sequential Unblinding: Primary histological scores (e.g., osteointegration, inflammation score) are recorded in a database linked only to the random code. Statistical analysis is performed on the coded data. The group key is only merged after the analysis is finalized.
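The de-identification and unblinding steps above can be scripted so that the code key never travels with the samples. In the sketch below, random codes come from Python's `secrets` module, and scores are merged back to original identifiers only after the coded analysis is complete. The sample identifiers, code length, and function names are illustrative; storing the key in a separate, password-protected location is outside the scope of this sketch.

```python
import secrets
import string

ALPHABET = string.ascii_uppercase + string.digits


def assign_codes(sample_ids, length=6):
    """Map each original sample ID to a unique random alphanumeric code."""
    key, used = {}, set()
    for sample_id in sample_ids:
        code = "".join(secrets.choice(ALPHABET) for _ in range(length))
        while code in used:  # regenerate on the rare collision
            code = "".join(secrets.choice(ALPHABET) for _ in range(length))
        used.add(code)
        key[sample_id] = code
    return key


def unblind(scores_by_code, key):
    """Merge coded scores back to original IDs once the analysis is finalized."""
    code_to_id = {code: sample_id for sample_id, code in key.items()}
    return {code_to_id[code]: score for code, score in scores_by_code.items()}


key = assign_codes(["HA-R01", "HA-R02", "TEST-R03"])  # held by the non-assessor
# Simulates scores the blinded assessor recorded against codes only.
scores = {key["HA-R01"]: 2, key["HA-R02"]: 3, key["TEST-R03"]: 4}
print(unblind(scores, key))
```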

Q2: We use micro-CT to quantify bone ingrowth into a porous scaffold. The scaffold material itself has a different radiodensity than bone. Can automated analysis scripts be considered "blinded"?

A: Automated scripts are not inherently blinded; whoever configures the script and sets its segmentation thresholds must also be blinded to group allocation.

- Issue: The user setting grayscale thresholds to segment bone vs. scaffold may unconsciously bias thresholds if they know the treatment group.

- Solution:

- Blinded Threshold Calibration: Use a subset of images from all groups, fully de-identified, to establish standardized, reproducible threshold values. Document these thresholds in the protocol.

- Blinded Script Execution: The final analysis script, using the pre-defined thresholds, should be run by a researcher who only has access to the de-identified image files (named with random codes). They should not perform manual corrections that could introduce bias.

- Validation: Manually check a random subset of analyzed images from each group after analysis to ensure threshold consistency.
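The blinded-script workflow amounts to applying one pre-registered set of thresholds, unchanged, to every coded image. The sketch below shows that idea on flattened lists of voxel gray values from a defect region of interest. The threshold values are hypothetical, and it assumes the scaffold is denser than bone (as a calcium phosphate ceramic might be), so voxels at or above the upper threshold are excluded from the bone count; a polymer scaffold less dense than bone would need the window set differently.

```python
# Thresholds fixed during blinded calibration and recorded in the protocol.
# Gray levels are hypothetical: bone lies in [bone_low, bone_high); the
# (assumed denser) scaffold lies at or above bone_high.
THRESHOLDS = {"bone_low": 250, "bone_high": 800}


def bone_fraction(roi_voxels, thresholds=THRESHOLDS):
    """Fraction of ROI voxels classified as bone (a BV/TV-style ratio)."""
    if not roi_voxels:
        raise ValueError("empty region of interest")
    low, high = thresholds["bone_low"], thresholds["bone_high"]
    bone_voxels = sum(low <= v < high for v in roi_voxels)
    return bone_voxels / len(roi_voxels)


def analyze_coded_batch(coded_rois, thresholds=THRESHOLDS):
    """Apply identical thresholds to every image; keys are random codes only."""
    return {code: bone_fraction(voxels, thresholds) for code, voxels in coded_rois.items()}


batch = {"Q7XK2M": [100, 300, 500, 900, 50], "B4TR9Z": [320, 410, 880, 260, 700]}
print(analyze_coded_batch(batch))
```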

Q3: Our biomaterial releases a fluorescent tag. How do we blind assessments when the treatment group is literally glowing?

A: Separate the detection of the fluorescent signal (confirming presence) from the assessment of the biological outcome.

- Dual-Assessor Method: Assign one researcher to perform fluorescent imaging only to confirm implant location. They provide location coordinates or masked images to a second, fully blinded assessor.

- Spectral Unmixing & Channel Separation: If the fluorescent signal and histological stains (e.g., for immune cells) are in different channels, the blinded assessor reviews only the channels relevant to the biological outcome. The fluorescence channel is reviewed separately by a different individual.
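The channel-separation approach can be enforced when images are exported for scoring, by giving each assessor only the channels they need. The sketch below assumes each coded sample is stored as a dictionary of named channels; the channel and file names are placeholders. Dropping the tracer channel does not remove bleed-through of the tag into other channels, which is what spectral unmixing addresses.

```python
def split_for_assessors(coded_sample, tracer_channel):
    """Return (blinded_package, tracer_package) for one coded multichannel image.

    coded_sample: {"code": str, "channels": {channel_name: image_data}}
    """
    channels = coded_sample["channels"]
    if tracer_channel not in channels:
        raise KeyError(f"tracer channel {tracer_channel!r} not found")
    code = coded_sample["code"]
    # Outcome channels only: this package goes to the fully blinded assessor.
    blinded = {name: img for name, img in channels.items() if name != tracer_channel}
    # Tracer channel only: reviewed separately to confirm implant presence and location.
    tracer = {tracer_channel: channels[tracer_channel]}
    return {"code": code, "channels": blinded}, {"code": code, "channels": tracer}


sample = {"code": "K3P9XW", "channels": {"DAPI": "dapi.tif", "CD68": "cd68.tif", "FITC": "tag.tif"}}
blinded_pkg, tracer_pkg = split_for_assessors(sample, "FITC")
print(sorted(blinded_pkg["channels"]), sorted(tracer_pkg["channels"]))
```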