AI-Driven Precision: How Statistical Models and Deep Learning are Revolutionizing Implant Positioning in Modern Medicine

This article provides a comprehensive analysis of the latest methodologies for optimizing medical implant positioning, targeting researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive analysis of the latest methodologies for optimizing medical implant positioning, targeting researchers, scientists, and drug development professionals. We begin by exploring the foundational principles and challenges of traditional implant planning. We then detail the technical implementation and application of both statistical shape modeling and advanced deep learning architectures, including Convolutional Neural Networks (CNNs) and Generative Adversarial Networks (GANs) for 3D anatomical prediction. The discussion moves to troubleshooting common pitfalls, such as data scarcity and model interpretability, and strategies for optimizing algorithmic performance and clinical integration. Finally, we present a rigorous comparative analysis of validation frameworks, benchmark datasets, and the translational success of these computational methods versus conventional surgical planning. The conclusion synthesizes key takeaways and outlines future trajectories for personalized, data-driven implantology.

The Anatomy of Precision: Foundational Challenges and Statistical Principles in Implant Positioning

The precision of implant placement is a cornerstone of successful long-term outcomes in orthopedic and dental applications. Misalignment leads to biomechanical instability, accelerated wear, and inflammatory responses, culminating in premature failure. This technical support center provides resources for researchers developing and validating statistical and deep learning methods to optimize implant positioning.

Troubleshooting Guides & FAQs

Q1: Our biomechanical simulation model shows unrealistic stress concentrations at the bone-implant interface. What could be the cause? A: This is often due to inaccurate mesh generation or improper definition of material properties at the interface. Verify your finite element analysis (FEA) workflow:

- Ensure the implant surface mesh is conformal with the bone mesh.

- Check the assigned material properties for bone (often modeled as anisotropic or as a density-modulus relationship). Use site-specific values from µCT data.

- Validate the definition of the contact interface (e.g., bonded, frictional). A common error is over-constraining the model.

Q2: When training a deep learning model for predicting optimal implant position from CT scans, the model fails to generalize to new patient anatomies. How can we improve robustness? A: This indicates dataset bias or insufficient regularization.

- Solution 1: Augment your training data with synthetic anatomies generated using statistical shape models (SSMs). Apply random affine transformations (rotation, scaling, shear) and realistic noise models.

- Solution 2: Implement a hybrid architecture. Use a convolutional neural network (CNN) for feature extraction from the CT scan, then feed features into a graph neural network (GNN) that operates on a mesh representation of the bone, which better handles anatomical variability.

Q3: Our quantitative measurement of implant migration using Model-Based Radiostereometric Analysis (MBRSA) shows high intra-observer variance. What protocol adjustments are needed? A: High variance typically stems from inconsistent landmark identification. Follow this refined protocol:

| Step | Procedure | Details | Tolerance |

|---|---|---|---|

| 1 | Calibration | Use a biplanar calibration cage. Capture 10 empty calibration images. | Mean error < 0.03 mm |

| 2 | Radiograph Acquisition | Patient positioned per protocol. Acquire paired stereo radiographs at all time points (post-op, 3mo, 12mo, etc.). | Same tube angles (±0.5°) |

| 3 | Model Registration | Use a pre-defined CAD model of the implant. Automate initial pose estimation with edge detection. | - |

| 4 | Manual Refinement | Observer aligns model to radiograph edges. Use standardized contrast/brightness. Perform 3 independent trials. | Recommended |

| 5 | Migration Calculation | Software calculates 3D translation/rotation of implant from post-op baseline. Report as median of 3 trials. | - |

Q4: What are the key signaling pathways activated by micromotion due to suboptimal implant positioning, and how can we assay them in a preclinical model? A: Micromotion (>150µm) prevents osseointegration and induces a pro-inflammatory fibroblastic response. The key pathways involve mechanotransduction and inflammation.

Diagram Title: Key Pathways in Aseptic Loosening from Micromotion

Experimental Protocol: Assessing Peri-Implant Tissue Response in a Murine Model

- Implant: Use a titanium pin with a controlled, suboptimal fit in the femoral canal to induce 200µm micromotion.

- Groups: Sham surgery (tight fit), Micromotion group (loose fit), n=10/group.

- Harvest: Euthanize at 2 and 6 weeks. Extract implant with surrounding bone tissue.

- Analysis:

- Histology: Fix in 4% PFA, decalcify, section. Perform H&E staining for tissue morphology and Trichrome for collagen/fibrous tissue.

- Immunohistochemistry: Stain for TNF-α (macrophages) and RUNX2/OSX (osteoblast activity).

- qPCR: Grind tissue. Isolate RNA. Use primers for Tnfa, Il1b, Sost, Rankl, Col1a1, and housekeeper Gapdh.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Implant Positioning Research |

|---|---|

| µCT Scanner (e.g., Scanco µCT100) | Provides high-resolution 3D bone morphology and density data for constructing statistical shape models and validating implant fit. |

| Finite Element Analysis Software (e.g., Abaqus, FEBio) | Simulates biomechanical stresses and strains for different implant positions to predict risk of bone resorption or failure. |

| Deep Learning Framework (e.g., PyTorch, MONAI) | Used to build models for automated segmentation of anatomical structures and prediction of optimal implant pose from medical images. |

| Statistical Shape Model (SSM) Library (e.g., ShapeWorks) | Captures population-level anatomical variation to generate synthetic training data and define a "safe zone" for implant placement. |

| Radiostereometric Analysis (RSA) System | The gold standard for in vivo measurement of micromotion (<0.1mm accuracy) to validate long-term stability predictions. |

| Human Mesenchymal Stem Cells (hMSCs) & Osteogenic Media | For in vitro studies of how mechanical stimulation (mimicking implant loads) affects differentiation pathways. |

| Pro-inflammatory Cytokine Panel (TNF-α, IL-1β, IL-6 ELISA Kits) | To quantify the inflammatory response in tissue explants or cell culture models subjected to particulate wear debris or fluid shear stress. |

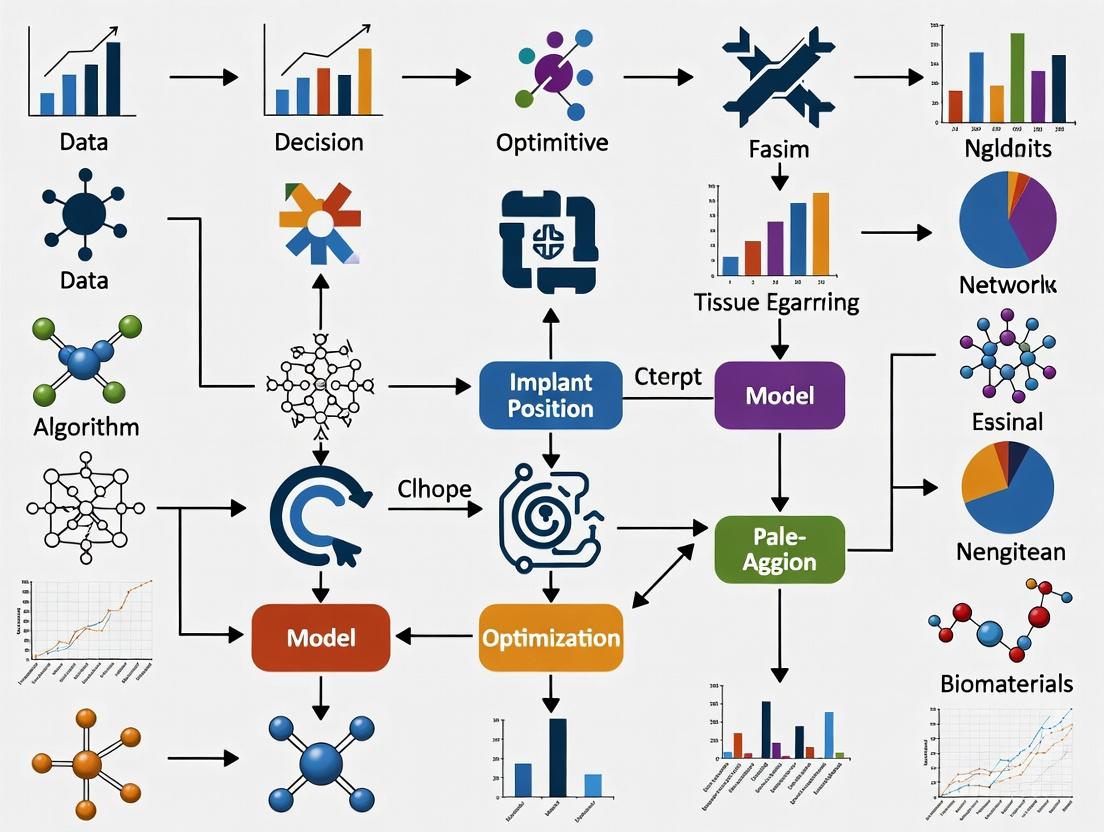

Diagram Title: Workflow for AI-Driven Implant Positioning

Troubleshooting Guides & FAQs

Q1: During manual implant planning on 2D radiographs, I observe significant positional variance (>2mm) between my planned and post-operative implant locations. What are the primary sources of this error? A: The variance is likely due to the cumulative limitations of 2D imaging and manual techniques.

- 2D Projection Error: A single radiograph compresses 3D anatomy into a 2D plane, losing depth information. Landmarks can be misregistered due to patient positioning (e.g., head tilt).

- Manual Landmarking Inconsistency: Visual identification of fiducials (e.g., the anterior commissure) is subjective and suffers from intra- and inter-rater variability.

- Lack of Soft Tissue Contrast: Conventional X-rays poorly differentiate soft tissue boundaries critical for targeting (e.g., subthalamic nucleus borders).

Troubleshooting Steps:

- Calibration Check: Ensure your radiographic scaling is correctly calibrated using the known size of an implantable fiducial marker (e.g., a 5mm sphere) in the image.

- Protocol Standardization: Implement a strict, documented protocol for patient head fixation and imaging angle (e.g., canthomeatal line alignment).

- Blinded Review: Have a second researcher perform planning on the same image set independently to quantify inter-observer error.

Q2: My 2D imaging-based planning fails to account for critical vasculature, leading to bleeding risk in preclinical models. How can I mitigate this with conventional tools? A: 2D imaging (like standard micro-CT projections) cannot reliably resolve complex 3D vascular networks.

- Root Cause: The limitation is fundamental. Overlapping vessels in a 2D projection are indistinguishable, and depth along the beam path is unknown.

- Workaround (Protocol): You must integrate a contrast-enhanced 3D imaging modality prior to final planning.

- Experimental Protocol: Ex Vivo Vascular Casting & Planar Radiography:

- Perfuse the subject (e.g., rodent) with a radio-opaque polymer (e.g., MICROFIL).

- Excise the target organ/tissue block.

- Acquire high-resolution digital radiographs from multiple angles (e.g., 0°, 45°, 90°).

- Manually trace suspected vessel paths by comparing angles to create a rough 3D mental map.

- Plan implant trajectory to avoid major trunks identified in Step 4.

- Limitation: This is destructive, time-consuming, and still approximate.

Q3: When using atlas overlay on a 2D image for targeting a deep brain structure, the atlas does not align with my subject's anatomy. What should I do? A: This misalignment stems from the use of a standardized atlas on a subject with unique brain geometry.

- Primary Issue: Conventional linear scaling of an atlas based on one or two external landmarks (e.g., Bregma, Lambda) does not account for non-uniform anatomical variation.

- Immediate Action:

- Identify More Landmarks: Use all visible internal landmarks (e.g., sinus borders, distinct ventricular edges) as anchor points.

- Perform Piecewise Scaling: Manually divide the atlas and image into sectors (anterior/posterior, dorsal/ventral) and scale each sector separately to improve local fit.

- Document Discrepancy: Note the regions of poorest fit quantitatively (see table below). This data is valuable for training statistical shape models.

Quantitative Data Summary: Error Magnitudes in Conventional 2D Planning

| Error Source | Typical Magnitude (in Preclinical Rodent Models) | Impact on Implant Positioning |

|---|---|---|

| 2D Projection (Depth Error) | 0.5 - 1.5 mm | Highest along the axis perpendicular to the imaging plane |

| Manual Landmarking (Inter-User) | 0.3 - 0.8 mm | Introduces translational shift in all planes |

| Atlas Registration Mismatch | 0.4 - 1.2 mm (region-dependent) | Causes target-specific bias (e.g., ventral vs. dorsal) |

| Surgical Drill Wander | 0.2 - 0.5 mm | Deviates from planned trajectory, error increases with depth |

Experimental Protocols

Protocol 1: Quantifying Intra-Operator Variance in Manual 2D Planning

Objective: To measure the consistency of a single researcher performing implant planning on identical 2D image sets over multiple sessions.

Materials: See "Research Reagent Solutions" table.

Methodology:

- Select a repository of 10 representative 2D radiographic images (e.g., lateral skull X-rays) with pre-defined, verifiable ground truth landmarks (e.g., implanted fiducials).

- The researcher performs complete implant trajectory planning (entry point and target point selection) on all 10 images in Session 1.

- All plans are saved as coordinate sets relative to a defined origin (e.g., Bregma).

- Steps 2-3 are repeated in two more sessions, spaced 48 hours apart, with the image order randomized.

- Analysis: For each image, calculate the Euclidean distance between the planned target coordinates from Session 1 vs. 2, Session 1 vs. 3, and Session 2 vs. 3. Compute the mean and standard deviation of these distances across all 10 images.
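The analysis step of Protocol 1 can be sketched in a few lines of NumPy. This is a minimal illustration, not a validated analysis script: the function name `pairwise_session_distances` and the coordinate values are hypothetical, and the mean and standard deviation are pooled over all session pairs and images, which is one reasonable reading of the protocol.

```python
import itertools
import numpy as np

def pairwise_session_distances(plans):
    """plans: (n_sessions, n_images, n_dims) planned target coordinates in mm.

    Returns an (n_pairs, n_images) array of Euclidean distances, one row per
    session pair (1 vs 2, 1 vs 3, 2 vs 3 when there are three sessions).
    """
    plans = np.asarray(plans, dtype=float)
    pairs = itertools.combinations(range(plans.shape[0]), 2)
    return np.stack([np.linalg.norm(plans[a] - plans[b], axis=1) for a, b in pairs])

# Hypothetical coordinates relative to Bregma: 3 sessions x 2 images x 2 axes.
plans = [
    [[0.0, 0.0], [1.0, 1.0]],
    [[3.0, 4.0], [1.0, 1.0]],
    [[0.0, 0.0], [1.0, 1.0]],
]
distances = pairwise_session_distances(plans)
mean_mm = distances.mean()        # pooled mean over pairs and images
sd_mm = distances.std(ddof=1)     # pooled sample standard deviation
```

Keeping the full `(n_pairs, n_images)` array makes it easy to inspect per-image consistency before pooling.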

Protocol 2: Assessing the Impact of Head Tilt on 2D Targeting Accuracy

Objective: To empirically determine how angular deviation in a subject's positioning affects the perceived location of a target in a 2D projection.

Methodology:

- Use a phantom skull containing a known, measurable 3D target point (e.g., a small metal bead at a known coordinate).

- Mount the phantom on a goniometric stage. Acquire a "perfect" reference radiograph at 0° tilt (frontal plane perpendicular to beam).

- Mark the target's apparent 2D location (`X_ref, Y_ref`) on this reference image.

- Systematically tilt the phantom stage to 5°, 10°, and 15° in the sagittal and coronal planes, acquiring a new radiograph at each angle.

- For each tilted image, mark the apparent 2D location of the same target (`X_tilt, Y_tilt`).

- Analysis: Plot the vector displacement (`√[(X_tilt - X_ref)² + (Y_tilt - Y_ref)²]`) against tilt angle. This curve visualizes the projection error introduced by non-standardized positioning.
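As a rough sketch of the Protocol 2 analysis, the displacement magnitude can be computed directly from the marked coordinates. The second function is an added assumption for comparison only: under an idealized parallel-beam geometry, a target sitting `depth_mm` from the tilt axis along the beam shifts by `depth_mm * sin(tilt)`. Real cone-beam systems add magnification effects, so treat it as a sanity check rather than a prediction. All names and values are hypothetical.

```python
import numpy as np

def projected_displacement(ref_xy, tilt_xy):
    """Euclidean distance between reference and tilted 2D target locations."""
    ref_xy = np.asarray(ref_xy, dtype=float)
    tilt_xy = np.asarray(tilt_xy, dtype=float)
    return np.linalg.norm(tilt_xy - ref_xy, axis=-1)

def ideal_parallel_beam_shift(depth_mm, tilt_deg):
    """Idealized shift for a target offset depth_mm from the tilt axis along the beam."""
    return depth_mm * np.sin(np.radians(tilt_deg))

# Hypothetical marked coordinates (mm) at 5, 10 and 15 degrees of tilt.
tilt_deg = np.array([5.0, 10.0, 15.0])
ref = (12.0, 8.0)
tilted = np.array([[12.3, 8.4], [12.6, 8.8], [13.2, 9.6]])
measured = projected_displacement(ref, tilted)
expected = ideal_parallel_beam_shift(depth_mm=6.0, tilt_deg=tilt_deg)
```

Plotting `measured` and `expected` against `tilt_deg` gives the error curve described in the analysis step.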

Visualizations

Title: Error Propagation in Conventional 2D Implant Planning

Title: Thesis Framework: Solving Conventional Limits with AI & Stats

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Context | Typical Specification / Example |

|---|---|---|

| Radio-Opaque Fiducial Markers | Provide ground truth landmarks for quantifying error in 2D imaging studies. | 100-500µm titanium spheres; MICROFIL (lead-based polymer) for vascular casting. |

| Stereotactic Frame System | Provides a coordinate system for translating 2D plans to 3D surgery, minimizing mechanical error. | Digital readout precision: ±10µm; Angular adjustment: ±1°. |

| Calibration Phantom | Validates scaling and geometric distortion of 2D imaging systems (X-ray, fluoroscope). | Acrylic grid with embedded metal markers at known spaced intervals (e.g., 1mm). |

| Histological Validation Dyes | Post-mortem verification of implant location, the gold standard for assessing planning error. | Fluorescent DiI tract tracing; Cresyl Violet for Nissl staining to locate electrode lesions. |

| 2D Image Analysis Software | Enables manual planning, coordinate extraction, and basic measurement. | ImageJ (Fiji) with custom coordinate logging macros; commercial surgical planning suites. |

| Statistical Shape Model Atlas | Advanced tool that moves beyond linear atlases by modeling population-based anatomical variance. | Built from >50 high-resolution 3D scans (µMRI, µCT) of the target population (e.g., C57BL/6J mice). |

This technical support center addresses common issues encountered when developing and applying Statistical Shape Models (SSMs) within research focused on optimizing implant position using statistical and deep learning methods.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: During SSM construction, my Procrustes alignment fails to converge, resulting in unstable mean shape calculation. What could be wrong? A: This is often caused by poor initialization or extreme outliers. Follow this protocol:

- Center & Scale: Ensure all shapes are centered to zero and scaled to unit size before alignment.

- Check Landmark Correspondence: Manually verify a subset of shapes for consistent landmark ordering. Use `meshalign` or `gparet` tools for validation.

- Outlier Removal: Calculate the Procrustes distance of each shape to the initial mean. Temporarily exclude shapes whose distance lies more than 3 standard deviations above the mean distance. Re-run alignment and reintroduce the excluded shapes after convergence.

- Iteration Limit: Increase the maximum iteration limit to 500+.
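The centering, scaling, iteration, and outlier-flagging steps above can be sketched with plain NumPy. This is a minimal generalized Procrustes loop under the assumption that every shape is a `(n_points, 3)` array with consistent point ordering. The function names are hypothetical and do not correspond to any particular library.

```python
import numpy as np

def center_and_scale(shape):
    """Move the centroid to the origin and scale to unit Frobenius norm."""
    centered = shape - shape.mean(axis=0)
    return centered / np.linalg.norm(centered)

def rotate_onto(shape, target):
    """Optimal rotation (Kabsch/SVD) of shape onto target, without reflection."""
    u, _, vt = np.linalg.svd(shape.T @ target)
    d = np.sign(np.linalg.det(u @ vt))
    correction = np.diag([1.0] * (shape.shape[1] - 1) + [d])
    return shape @ (u @ correction @ vt)

def generalized_procrustes(shapes, max_iter=500, tol=1e-10):
    aligned = np.stack([center_and_scale(s) for s in shapes])
    mean = aligned[0]                      # initial reference shape
    for _ in range(max_iter):
        aligned = np.stack([rotate_onto(s, mean) for s in aligned])
        new_mean = center_and_scale(aligned.mean(axis=0))
        converged = np.linalg.norm(new_mean - mean) < tol
        mean = new_mean
        if converged:
            break
    return aligned, mean

def flag_outliers(aligned, mean, n_sd=3.0):
    """True for shapes whose Procrustes distance exceeds mean + n_sd * SD."""
    dist = np.linalg.norm(aligned - mean, axis=(1, 2))
    return dist > dist.mean() + n_sd * dist.std()
```

A typical use is to call `generalized_procrustes`, drop the shapes flagged by `flag_outliers`, re-run the alignment, and then align the excluded shapes to the converged mean.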

Q2: My SSM shows poor generalization (high reconstruction error on held-out shapes) when tested on unseen data, limiting its use for implant simulation. How can I improve it? A: Poor generalization indicates the model is over-fitted to the training set or the training set lacks diversity.

- Solution 1: Increase Training Data Diversity. Ensure your dataset spans the full anatomical population (age, pathology, ethnicity) relevant to your implant cohort. Aim for n > 50 for initial models.

- Solution 2: Apply Dimensionality Reduction Correctly. Retain fewer principal components (PCs). Use the following metrics to choose the number of PCs k:

Table 1: Metrics for Selecting Principal Components in SSM

| Metric | Formula/Description | Target Threshold for Model Selection |

|---|---|---|

| Compactness | \( C(k) = \sum_{i=1}^{k} \lambda_i \) | Choose k that captures 95-98% of total variance. |

| Generalization | \( G(k) = \frac{1}{N} \sum_{i=1}^{N} \lVert \mathbf{x}_i - \hat{\mathbf{x}}_i(k) \rVert^2 \) | Look for the "elbow" point where error plateaus. |

| Specificity | \( S(k) = \frac{1}{M} \sum_{j=1}^{M} \lVert \mathbf{y}_j - \hat{\mathbf{y}}_j(k) \rVert^2 \) | Should remain low; a sharp rise indicates over-fitting. |

- Solution 3: Consider a Bayesian or Deep Learning Prior. For very small datasets (n < 30), integrate a deep shape prior (e.g., from a convolutional autoencoder) to regularize the SSM.
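The compactness and generalization metrics from Table 1 can be estimated with a short NumPy sketch. It assumes the training shapes are already aligned and stacked into a matrix `X` of shape `(N, 3V)`, and it evaluates generalization by leave-one-out reconstruction, which is a common choice but an assumption here. Function names are hypothetical.

```python
import numpy as np

def compactness(X):
    """Cumulative fraction of total variance captured by the first k modes."""
    s = np.linalg.svd(X - X.mean(axis=0), compute_uv=False)
    eigvals = s**2 / (X.shape[0] - 1)
    return np.cumsum(eigvals) / eigvals.sum()

def modes_for_variance(X, target=0.95):
    """Smallest k whose cumulative variance fraction reaches the target."""
    return int(np.searchsorted(compactness(X), target) + 1)

def generalization_error(X, k):
    """Leave-one-out mean squared reconstruction error using k modes."""
    errors = []
    for i in range(X.shape[0]):
        train = np.delete(X, i, axis=0)
        mean = train.mean(axis=0)
        _, _, vt = np.linalg.svd(train - mean, full_matrices=False)
        modes = vt[:k]                          # (k, 3V), orthonormal rows
        coeffs = (X[i] - mean) @ modes.T        # project held-out shape
        recon = mean + coeffs @ modes
        errors.append(np.sum((X[i] - recon) ** 2))
    return float(np.mean(errors))
```

Plotting `generalization_error(X, k)` for increasing `k` shows the elbow mentioned in the table; `modes_for_variance` gives the compactness-based cut-off for comparison.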

Q3: When integrating my SSM with a deep learning segmentation network (CNN/U-Net), the pipeline fails to output a statistically plausible shape for implant planning. How do I debug this? A: The issue likely lies in the coupling between the CNN output and the SSM fitting step.

- Verify CNN Output: Ensure your CNN is trained to predict dense point correspondences (not just a binary mask) that match the SSM's topology. Use a differentiable Procrustes layer or a spatial transformer network within the CNN.

- Check the Reconstruction Loss Weight: If using a combined loss (e.g., `L_total = L_image + β * L_shape`), the shape regularization weight `β` may be incorrectly set. Perform a sweep:

- β too low: Output shape is anatomically implausible.

- β too high: Output shape is overly rigid and ignores image data.

- Protocol for Optimal β:

- On a validation set, vary β logarithmically (e.g., 0.01, 0.1, 1, 10).

- For each β, compute the Mean Point-to-Mesh Error (in mm) and the Mahalanobis distance of the shape parameters to the SSM.

- Select β that minimizes Point Error while keeping the Mahalanobis distance below 3 (i.e., the shape parameters stay within roughly 3 standard deviations of the SSM mean).
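The selection rule in the β protocol can be written down as a small helper. This sketch assumes the sweep has already produced one record per β containing the validation point-to-mesh error and the Mahalanobis distance of the fitted shape parameters. The record keys, function names, and numbers are hypothetical.

```python
import numpy as np

def mahalanobis_distance(b, eigvals):
    """Distance of PCA shape coefficients b from the SSM mean, in SD units."""
    b = np.asarray(b, dtype=float)
    eigvals = np.asarray(eigvals, dtype=float)
    return float(np.sqrt(np.sum(b**2 / eigvals)))

def select_beta(results, max_mahalanobis=3.0):
    """Pick the beta with the lowest point error among plausible shapes."""
    plausible = [r for r in results if r["mahalanobis"] < max_mahalanobis]
    if not plausible:
        return None  # no beta satisfied the plausibility constraint
    return min(plausible, key=lambda r: r["point_error_mm"])["beta"]

# Hypothetical sweep results on a validation set.
sweep = [
    {"beta": 0.01, "point_error_mm": 0.8, "mahalanobis": 4.2},
    {"beta": 0.1, "point_error_mm": 0.9, "mahalanobis": 2.6},
    {"beta": 1.0, "point_error_mm": 1.4, "mahalanobis": 1.1},
    {"beta": 10.0, "point_error_mm": 2.5, "mahalanobis": 0.4},
]
best_beta = select_beta(sweep)
```

Here β = 0.01 has the lowest point error but is rejected as implausible, so the rule falls back to β = 0.1.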

Q4: I encounter "Missing Correspondence" errors when building a model from automated segmentations. What tools can establish correspondence? A: Correspondence is critical. Use this workflow:

Workflow Title: Dense Correspondence for SSM Building

Key Tools:

- MeshMonk: Robust, open-source non-rigid mesh registration.

- Deformetrica: Statistical shape modeling software with automatic correspondence.

- Open3D/ICP: For initial rigid alignment.

- Manual Landmarking (e.g., 3D Slicer): Still required for 5-10 initial landmarks to bootstrap automation.

Research Reagent Solutions

Table 2: Essential Toolkit for SSM-based Implant Optimization Research

| Item | Function in Research | Example Solutions / Software |

|---|---|---|

| Shape Dataset | Population cohort for training and validation. | Public: ADNI (brain), UK Biobank. Private: Institutional CT/MRI scans. |

| Segmentation Tool | Extract binary masks from medical images. | Manual: ITK-SNAP, 3D Slicer. Automated: nnU-Net, MONAI. |

| Correspondence Algorithm | Establish point-to-point matches across shapes. | MeshMonk, Deformetrica, MATLAB "pcregistercpd". |

| SSM Construction Library | Perform PCA and build the parametric model. | ShapeWorks (C++/Python), scikit-learn (PCA), MATLAB Stats Toolbox. |

| Deep Learning Framework | Integrate SSM priors into networks. | PyTorch, PyTorch3D, TensorFlow with Keras. |

| Biomechanical Simulator | Test implant performance across shape modes. | FEBio, ANSYS, Abaqus, SOFA. |

| Validation Metric Suite | Quantify model compactness, generalization, specificity. | Custom scripts implementing formulas in Table 1. |

Q5: How do I validate that my SSM is suitable for biomechanical simulation of implant loads? A: You must test beyond standard metrics. Use this Biomechanical Validation Protocol:

- Generate Extreme Shapes: Synthesize shapes at ±3σ along each of the first 3-5 modes of variation.

- Run Simulations: For each extreme shape and the mean shape, run a Finite Element Analysis (FEA) simulation applying your implant's standard load.

- Measure Key Outputs: Record maximum stress (implant and bone) and displacement.

- Establish Safe Envelope: If stress/displacement values exceed material yield strengths or clinical thresholds, define the allowable shape parameters (PCA scores) as a "safe operating envelope" for implant planning.
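The first step of this protocol, generating the extreme shapes, amounts to moving the mean shape along each mode by a multiple of that mode's standard deviation. The sketch below assumes the SSM is available as a flattened mean vector, a matrix of orthonormal mode vectors, and the matching eigenvalues (variances). The function name and dictionary layout are illustrative only.

```python
import numpy as np

def extreme_shapes(mean_shape, modes, eigvals, n_modes=3, n_sd=3.0):
    """Return {(mode_index, sign): shape} at +/- n_sd standard deviations.

    mean_shape: (3V,) flattened mean; modes: (k, 3V); eigvals: (k,) variances.
    """
    shapes = {}
    for m in range(n_modes):
        offset = n_sd * np.sqrt(eigvals[m]) * modes[m]
        shapes[(m, +1)] = mean_shape + offset
        shapes[(m, -1)] = mean_shape - offset
    return shapes

# Hypothetical toy model with two vertices (six coordinates) and three modes.
mean_shape = np.zeros(6)
modes = np.eye(6)[:3]
eigvals = np.array([4.0, 1.0, 0.25])
candidates = extreme_shapes(mean_shape, modes, eigvals)
vertices = candidates[(0, +1)].reshape(-1, 3)   # back to (V, 3) for meshing
```

Each entry in `candidates`, together with the mean shape, would then be meshed and passed to the FEA step.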

Workflow Title: Biomechanical Validation of SSM for Implants

Technical Support & Troubleshooting Center

This center addresses common issues in data acquisition and processing for research on Optimizing Implant Position Using Statistical and Deep Learning Methods.

FAQ 1: Image Preprocessing & Segmentation

- Q: Our CT scan segmentation for bone morphology yields noisy, inaccurate 3D models. What are the critical parameters to check?

- A: Inaccurate segmentation often stems from poor scan quality or suboptimal thresholding.

- Verify Scan Parameters: Ensure your source CT scans meet the minimum resolution. For bone surface morphology, isotropic voxel sizes ≤ 0.5 mm are recommended; resolving individual trabeculae requires µCT-scale resolution.

- Calibrate Hounsfield Units (HU): Use a phantom to calibrate HU scales across datasets. Consistent threshold ranges are vital.

- Apply Preprocessing Filters: Use a non-local means or Gaussian filter to reduce noise before thresholding.

- Threshold Selection: Do not rely on a single global value. Use adaptive or region-growing techniques, validated against manual segmentation by an expert.

- Q: How do we ensure consistent landmark identification across multiple raters for statistical shape modeling?

- A: Inter-rater reliability is crucial for generating a robust statistical shape model (SSM).

- Protocol Definition: Create a detailed, image-based guide with example slices for each landmark.

- Use a Landmarking Tool: Employ software (e.g., 3D Slicer) that allows visualization in coronal, sagittal, and axial planes simultaneously.

- Training & Validation: Have all raters annotate a small, common set (n=5-10 scans). Calculate intra-class correlation coefficients (ICC) for each landmark coordinate.

- Threshold: Only include landmarks with ICC > 0.75 in your final set. Consider using semi-automated landmarking algorithms after initial manual alignment.
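The ICC check can be computed without a statistics package. The text does not say which ICC form to use, so this sketch assumes the two-way random-effects, absolute-agreement, single-rater form, often written ICC(2,1). The input is one landmark coordinate measured on every scan by every rater, and the function name is hypothetical.

```python
import numpy as np

def icc_2_1(ratings):
    """Two-way random-effects, absolute-agreement, single-rater ICC.

    ratings: (n_subjects, n_raters) array, e.g. the x-coordinate of one
    landmark on each scan as placed by each rater.
    """
    y = np.asarray(ratings, dtype=float)
    n, k = y.shape
    grand = y.mean()
    row_means = y.mean(axis=1, keepdims=True)   # per-scan means
    col_means = y.mean(axis=0, keepdims=True)   # per-rater means
    ms_rows = k * np.sum((row_means - grand) ** 2) / (n - 1)
    ms_cols = n * np.sum((col_means - grand) ** 2) / (k - 1)
    residual = y - row_means - col_means + grand
    ms_err = np.sum(residual**2) / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (
        ms_rows + (k - 1) * ms_err + k * (ms_cols - ms_err) / n
    )
```

Running this per landmark and per axis, then dropping landmarks below the 0.75 threshold, implements the filtering rule described above.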

FAQ 2: Biomechanical Parameter Calculation

- Q: Our finite element analysis (FEA) results show unrealistic stress concentrations at the bone-implant interface. How to troubleshoot?

- A: This typically indicates issues with mesh quality or material property assignment.

- Mesh Convergence Test: Refine your mesh globally and at the interface until the maximum von Mises stress changes by <5%.

- Interface Definition: Ensure contact conditions between bone and implant are defined correctly (e.g., bonded, frictional). Verify there are no penetrating or gap elements.

- Material Properties: Assign heterogeneous material properties based on the CT-derived bone density (HU). Use a validated density-elasticity relationship formula.

- Q: What is the standard method to calculate the "center of rotation" or "helical axis" from dynamic motion capture data for joint biomechanics?

- A: The helical axis is calculated from the transformation matrix between two poses of a rigid body (e.g., femur).

- Data: You need the 3D positions of a minimum of three non-collinear markers on the segment at two time points.

- Method: Use the Spatial Helmert Transformation (or similar) to compute the rigid-body transformation matrix. The helical axis parameters (orientation, position, rotation, translation) are then derived from this matrix via eigenvalue decomposition.

- Tools: Implement using libraries like SciPy (Python) or custom scripts in MATLAB. Visualize the axis in your 3D coordinate system.
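A compact NumPy sketch of the helical axis computation follows. It estimates the rigid transform with an SVD-based least-squares fit (the Kabsch method), which is a stand-in for the Helmert-style fit mentioned above. It reads the axis direction from the skew-symmetric part of the rotation matrix rather than an explicit eigendecomposition; the two agree for rotation angles strictly between 0 and π. Function names are hypothetical.

```python
import numpy as np

def rigid_transform(p0, p1):
    """Least-squares R, d with p1 ≈ p0 @ R.T + d, from (n, 3) marker arrays."""
    c0, c1 = p0.mean(axis=0), p1.mean(axis=0)
    h = (p0 - c0).T @ (p1 - c1)
    u, _, vt = np.linalg.svd(h)
    sign = np.sign(np.linalg.det(vt.T @ u.T))      # guard against reflections
    r = vt.T @ np.diag([1.0, 1.0, sign]) @ u.T
    return r, c1 - r @ c0

def helical_axis(r, d):
    """Return unit axis, a point on the axis, rotation angle (rad), and shift."""
    angle = np.arccos(np.clip((np.trace(r) - 1.0) / 2.0, -1.0, 1.0))
    if np.isclose(angle, 0.0):
        raise ValueError("Pure translation: the helical axis is undefined")
    axis = np.array([r[2, 1] - r[1, 2], r[0, 2] - r[2, 0], r[1, 0] - r[0, 1]])
    axis = axis / (2.0 * np.sin(angle))
    shift = float(axis @ d)                        # translation along the axis
    # Points p on the axis satisfy (I - R) p = d - shift * axis; lstsq returns
    # the solution closest to the origin.
    point = np.linalg.lstsq(np.eye(3) - r, d - shift * axis, rcond=None)[0]
    return axis, point, angle, shift
```

For very small rotations or angles close to π the axis estimate becomes ill-conditioned, so in practice a minimum rotation threshold between the two poses is usually enforced.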

Experimental Protocol: Generating a Statistical Shape Model (SSM) for a Femur

- Data Acquisition: Collect N ≥ 50 high-resolution CT scans of the target bone (e.g., femur). Standardize positioning.

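- Segmentation: Semi-automatically segment each scan using a calibrated HU threshold for bone (for example, roughly 200 to 1500 HU; negative lower bounds such as -200 HU also capture fat and soft tissue, and dense cortical bone can exceed 1500 HU, so validate the range against a manual segmentation). Generate a 3D surface mesh (.stl).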

- Alignment: Perform Procrustes analysis to rigidly align all meshes to a common coordinate system.

- Correspondence Establishment: Use a mesh correspondence algorithm (e.g., coherent point drift, mesh morphing) to ensure each mesh has the same number of vertices and consistent landmark correspondence.

- Model Construction: Perform Principal Component Analysis (PCA) on the stacked vectors of all corresponding vertex coordinates. The output is the SSM, defined by a mean shape and modes of variation (eigenvectors).
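The segmentation step of this protocol can be prototyped with SciPy before moving to a dedicated tool. The sketch below denoises the volume with a Gaussian filter and then performs a simple connected-threshold region growing from a user-supplied seed voxel. The function name, the seed, and the HU bounds are assumptions to be replaced with calibrated values; surface meshing is left to a tool such as 3D Slicer.

```python
import numpy as np
from scipy import ndimage

def region_grow(volume_hu, seed, lower_hu, upper_hu, sigma=1.0):
    """Connected-threshold region growing on a smoothed CT volume.

    Returns a boolean mask of all voxels within [lower_hu, upper_hu] that are
    face-connected to the seed voxel.
    """
    smoothed = ndimage.gaussian_filter(volume_hu.astype(float), sigma=sigma)
    in_range = (smoothed >= lower_hu) & (smoothed <= upper_hu)
    labels, _ = ndimage.label(in_range)
    seed_label = labels[tuple(seed)]
    if seed_label == 0:
        raise ValueError("Seed voxel is outside the intensity range")
    return labels == seed_label
```

Because only the component connected to the seed is kept, neighbouring bones or calibration objects in the same intensity range are excluded as long as they do not touch the target bone in the thresholded image.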

Experimental Protocol: Finite Element Analysis of an Implanted Tibia

- Model Generation: Start with a segmented 3D model of a tibia from a CT scan.

- Implant Positioning: Position a 3D CAD model of the implant in the desired location using surgical planning software.

- Boolean Operation: Subtract the implant volume from the bone volume to create the implanted geometry.

- Meshing: Generate a volumetric tetrahedral mesh. Apply mesh refinement at the bone-implant interface.

- Material Assignment: Assign isotropic, linear elastic properties to the implant (e.g., Titanium, E=110 GPa, ν=0.3). Assign bone properties spatially using a formula (e.g., E = 1.92 * ρ^1.56, where ρ is apparent density from HU).

- Boundary Conditions & Loading: Fix the distal end. Apply a joint reaction force (e.g., 1000N) and muscle forces (e.g., patellar tendon force) at their physiological locations and directions based on gait analysis data.

- Solver & Output: Run a static structural analysis in an FEA solver (e.g., Abaqus, FEBio). Output von Mises stress in bone and implant, and micromotion at the interface.
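The material assignment step can be illustrated with a two-stage mapping from HU to apparent density and from density to elastic modulus. The power law uses the example coefficients quoted in the protocol; its units are not stated there, so they are left unspecified here. The linear HU-to-density calibration (`slope`, `intercept`) is purely hypothetical and must come from a phantom scan in practice.

```python
import numpy as np

def hu_to_density(hu, slope=0.0008, intercept=0.0, min_density=1e-3):
    """Hypothetical linear phantom calibration from HU to apparent density."""
    density = slope * np.asarray(hu, dtype=float) + intercept
    return np.clip(density, min_density, None)   # avoid non-positive densities

def density_to_modulus(density, a=1.92, b=1.56):
    """Power-law density-elasticity relationship E = a * rho**b."""
    return a * np.power(density, b)

# Example: per-element mean HU values sampled from the CT volume.
element_hu = np.array([200.0, 600.0, 1200.0])
element_modulus = density_to_modulus(hu_to_density(element_hu))
```

In most FEA packages the resulting moduli are binned into a limited number of material groups before assignment, which keeps the model size manageable.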

Key Datasets for Implant Optimization Research

| Dataset Name | Key Biometric Parameters | Modality | Sample Size (Typical) | Primary Use Case |

|---|---|---|---|---|

| The Osteoarthritis Initiative (OAI) | Knee joint space width, bone morphology, cartilage thickness | MRI, X-Ray | ~4,800 participants | Statistical shape modeling of knee; longitudinal studies |

| CASIA-B | Gait kinematics, silhouette | Video | 124 subjects | Pre-training deep learning models for pose estimation |

| NIH Chest CT | Thoracic bone structure (ribs, spine) | CT | 1,000+ patients | Developing bone segmentation algorithms |

| Private Biomechanics Lab Data | Joint reaction forces, EMG, motion capture trajectories | Force plates, EMG, Optitrack | Varies (n=10-50) | Validating simulated biomechanical loading in FEA |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Research |

|---|---|

| µCT Scanner | Provides high-resolution 3D images of bone microstructure for ex-vivo validation of in-silico models. |

| Optical Motion Capture System (e.g., Vicon, OptiTrack) | Captures high-fidelity kinematic data to define physiological loading conditions for FEA and validate implant kinematics. |

| 3D Slicer | Open-source platform for medical image visualization, segmentation, and 3D model generation from DICOM files. |

| FEA Software (e.g., Abaqus, ANSYS, FEBio) | Performs biomechanical simulations to assess implant stability, stress shielding, and bone remodeling potential. |

| Python Stack (NumPy, SciPy, PyTorch/TensorFlow, VTK) | Core environment for statistical analysis, building deep learning models (e.g., for landmark detection), and custom 3D data processing. |

| Statistical Shape Model Software (e.g., Deformetrica, ShapeWorks) | Specialized tools for building and analyzing SSMs from population-based 3D meshes. |

Visualization 1: Data Pipeline for Implant Optimization Research

Research Workflow: Data to Implant Design

Visualization 2: Finite Element Analysis Workflow

FEA Simulation & Validation Process

Technical Support Center: Troubleshooting for Digital Anatomy & Implant Planning Pipelines

This support center addresses common technical issues encountered when implementing computational anatomy workflows for the thesis: Optimizing implant position using statistical and deep learning methods. The guides below assume a pipeline involving medical image segmentation, statistical shape model (SSM) construction, and deep learning-based landmark detection or registration.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: During Statistical Shape Model (SSM) construction, my Procrustes alignment fails to converge or produces extreme scaling. What are the common causes? A: This is typically a data preprocessing issue.

- Root Cause 1: Incorrect Centroid Calculation. Ensure centroids are computed after masking all non-relevant voxels. Noise at image borders can skew the centroid.

- Root Cause 2: Inconsistent Meshing. Meshes derived from segmentations must have consistent topology (same number of vertices and connectivity). Verify you used a template-based mesh warping algorithm (e.g., from a reference to each subject) rather than independent meshing.

- Troubleshooting Protocol:

- Visualize the initial centroids of all aligned shapes.

- Check vertex counts across all meshes. They must be identical.

- Re-run alignment with scaling disabled. If results are stable, the issue is likely in the scaling step of your algorithm.

Q2: My deep learning model for landmark detection trains well but generalizes poorly to new clinical scans from a different scanner. How can I improve robustness? A: This indicates a domain shift between your training data and the scans from the new scanner.

- Root Cause: Lack of Intensity & Spatial Augmentation. The model has overfitted to the specific intensity distribution and limited anatomical variation in your training set.

- Troubleshooting Protocol:

- Augmentation Enhancement: Implement a robust augmentation pipeline during training. See Table 2.

- Input Normalization: Apply test-time normalization (e.g., Z-score) based on the statistics of the input scan itself, not the training set.

- Data Source: Incorporate multi-scanner, multi-protocol data into training, even if limited.

Q3: When integrating a deep learning output (e.g., a heatmap) into a subsequent biomechanical simulation, the implant position is physically implausible. How do I debug this? A: The issue lies in the post-processing of network outputs.

- Root Cause 1: Argmax vs. Weighted Average. Using a simple `argmax` on a heatmap can lead to voxel-locked, jumpy predictions. A weighted centroid calculation is more stable.

- Root Cause 2: Lack of Physiological Constraints. The network proposes a location that violates anatomical constraints (e.g., implant penetrating cortex).

- Troubleshooting Protocol:

- Visualize the network's confidence heatmap overlayed on the anatomy.

- Replace `argmax` with a function that computes the centroid of all voxels with values above 0.5 * max(heatmap).

- Implement a post-processing rule-based filter that checks the proposed position against a shape model's principal component bounds.
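The thresholded centroid described in the protocol can be sketched as follows. The function name and the `rel_threshold` parameter are hypothetical; the result is in voxel index coordinates and still needs to be mapped to physical space using the image spacing and origin.

```python
import numpy as np

def heatmap_centroid(heatmap, rel_threshold=0.5):
    """Intensity-weighted centroid of voxels above rel_threshold * max."""
    heatmap = np.asarray(heatmap, dtype=float)
    mask = heatmap > rel_threshold * heatmap.max()
    weights = np.where(mask, heatmap, 0.0)
    coords = np.indices(heatmap.shape).reshape(heatmap.ndim, -1)
    return coords @ weights.ravel() / weights.sum()

# Toy heatmap: two equally strong neighbouring voxels plus weak background.
heatmap = np.zeros((5, 5, 5))
heatmap[2, 2, 2] = 1.0
heatmap[2, 2, 3] = 1.0
heatmap[0, 0, 0] = 0.1
centroid = heatmap_centroid(heatmap)
```

In this toy case `argmax` would lock onto a single voxel, while the centroid lands halfway between the two peaks and ignores the weak background response.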

Data Presentation Tables

Table 1: Common Datasets for Computational Anatomy & Implant Planning Research

| Dataset Name | Modality | Primary Anatomy | Key Use-Case for Implant Planning | Sample Size (Typical) |

|---|---|---|---|---|

| CT-MICCAI 2015 | CT | Liver, Spleen | Abdominal implant/device planning | 30-40 training |

| OAI (Osteoarthritis Initiative) | MRI, X-Ray | Knee, Hip | Joint replacement & osteotomy planning | 4,796 subjects |

| UK Biobank | MRI (Brain, Cardiac) | Brain, Heart | Neurological & cardiovascular implants | 100,000+ subjects |

| Total Knee Replacement CT | CT | Femur, Tibia | Patient-specific knee implant positioning | 100-200 studies |

Table 2: Recommended Augmentation Strategies for Robust Deep Learning Models

| Augmentation Type | Parameters | Purpose in Digital Planning |

|---|---|---|

| Intensity | Gamma shift (±0.3), Gaussian noise (μ=0, σ=0.03) | Simulates scanner and protocol variability. |

| Spatial (Affine) | Rotation (±10°), Scaling (±0.1), Shear (±0.05) | Accounts for patient positioning differences. |

| Spatial (Elastic) | Alpha (10-15), Sigma (3-5) | Models soft tissue deformation and anatomical variability. |

| Cutout/Dropout | Random mask of 5-10% of voxels | Forces model to rely on multiple contextual features, not single points. |
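As a rough illustration of the intensity and cutout rows of Table 2, the sketch below uses plain NumPy. Several choices here are assumptions rather than part of the table: the gamma shift is read as a gamma exponent drawn from [0.7, 1.3], the input volume is taken to be scaled to [0, 1], and the cutout is implemented as a single cubic block rather than scattered voxels. The function names are hypothetical.

```python
import numpy as np

def augment_intensity(volume, rng, gamma_shift=0.3, noise_sigma=0.03):
    """Random gamma change plus additive Gaussian noise on a volume scaled to [0, 1]."""
    gamma = 1.0 + rng.uniform(-gamma_shift, gamma_shift)
    out = np.clip(volume, 0.0, 1.0) ** gamma
    out = out + rng.normal(0.0, noise_sigma, size=volume.shape)
    return np.clip(out, 0.0, 1.0)

def random_cutout(volume, rng, fraction=0.05):
    """Zero out one cubic block covering roughly `fraction` of the voxels."""
    out = volume.copy()
    side = [max(1, int(round(s * fraction ** (1 / 3)))) for s in volume.shape]
    start = [rng.integers(0, s - k + 1) for s, k in zip(volume.shape, side)]
    region = tuple(slice(a, a + k) for a, k in zip(start, side))
    out[region] = 0.0
    return out

rng = np.random.default_rng(0)
volume = rng.random((32, 32, 32))          # placeholder for a normalized CT patch
augmented = random_cutout(augment_intensity(volume, rng), rng, fraction=0.05)
```

In practice a library such as MONAI, already listed in the toolkit, provides tested versions of these transforms along with the affine and elastic ones.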

Experimental Protocols

Protocol 1: Building a Principal Component Analysis (PCA)-Based Statistical Shape Model

- Data Preparation: Segment the target anatomy (e.g., femur) from N aligned CT scans. Generate a consistent mesh for each using non-rigid registration to a chosen template.

- Alignment: Perform Generalized Procrustes Analysis (GPA) on all meshes to remove global translation, rotation, and scaling.

- Matrix Construction: Represent each shape as a vector of 3D vertex coordinates. Stack vectors to form a matrix X of size [N x (3*V)].

- PCA: Compute the mean shape. Perform Singular Value Decomposition (SVD) on the centered data matrix to obtain eigenvectors (modes of variation) and eigenvalues (variance explained).

- Model Validation: Use compactness (variance vs. modes), generalization, and specificity metrics.
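The matrix construction, PCA, and compactness steps of this protocol can be sketched with NumPy. The sketch assumes the shapes are already in correspondence and Procrustes-aligned; the synthetic data (20 shapes, 50 vertices, three latent modes) and the names `build_ssm` and `compactness` are illustrative assumptions.

```python
import numpy as np

def build_ssm(shapes):
    """PCA shape model from an (N, 3V) matrix of aligned, corresponding shapes."""
    X = np.asarray(shapes, dtype=float)
    mean_shape = X.mean(axis=0)
    centered = X - mean_shape
    # Thin SVD: rows of Vt are the modes of variation
    _, singular_values, Vt = np.linalg.svd(centered, full_matrices=False)
    # Eigenvalues of the sample covariance are s^2 / (N - 1)
    eigenvalues = singular_values ** 2 / (X.shape[0] - 1)
    return mean_shape, Vt, eigenvalues

def compactness(eigenvalues):
    """Cumulative fraction of total variance explained by the first k modes."""
    return np.cumsum(eigenvalues) / np.sum(eigenvalues)

# Synthetic stand-in: 20 shapes, 50 vertices, driven by 3 latent modes plus noise
rng = np.random.default_rng(0)
latent = rng.normal(size=(20, 3)) * np.array([5.0, 2.0, 1.0])
shapes = latent @ rng.normal(size=(3, 150)) + 0.01 * rng.normal(size=(20, 150))

mean_shape, modes, eigenvalues = build_ssm(shapes)
curve = compactness(eigenvalues)
print("variance explained by first 3 modes:", curve[2])
```

Working from the SVD of the centered data avoids forming the (3V x 3V) covariance matrix, which matters when V is in the tens of thousands.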

Protocol 2: Training a 3D Landmark Detection Network (e.g., Voxel Heatmap Regression)

- Input/Output: Input is a 3D image patch (e.g., 128x128x128 voxels). Output is a 3D Gaussian heatmap centered on the target landmark (e.g., femoral head center).

- Network Architecture: Use a 3D variant of a U-Net with residual connections. The final layer uses a sigmoid activation.

- Loss Function: Minimize Mean Squared Error (MSE) between predicted and ground-truth heatmaps.

- Training: Use Adam optimizer (lr=1e-4), batch size of 4-8 (memory dependent). Train for 50-100 epochs with the augmentations from Table 2.

- Inference: Feed full image in a sliding-window or resized manner. Aggregate heatmaps and compute landmark coordinates as the weighted centroid.
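To make the training target concrete, the sketch below builds a 3D Gaussian heatmap for a landmark and evaluates the MSE loss against a shifted prediction. The heatmap width `sigma`, the small 32-voxel patch, and the function names are assumptions chosen for the demo; the protocol itself does not fix them.

```python
import numpy as np

def gaussian_heatmap(shape, center, sigma=3.0):
    """Ground-truth heatmap: Gaussian of width sigma (in voxels) peaking at `center`."""
    grid = np.indices(shape).astype(float)
    sq_dist = sum((grid[d] - center[d]) ** 2 for d in range(len(shape)))
    return np.exp(-sq_dist / (2.0 * sigma ** 2))

def mse_loss(pred, target):
    """Mean squared error between predicted and ground-truth heatmaps."""
    return float(np.mean((pred - target) ** 2))

# Small patch for illustration; the protocol uses e.g. 128x128x128
target = gaussian_heatmap((32, 32, 32), center=(12, 16, 20))
shifted = gaussian_heatmap((32, 32, 32), center=(14, 16, 20))  # prediction 2 voxels off

print("loss for perfect prediction:", mse_loss(target, target))
print("loss for shifted prediction:", mse_loss(shifted, target))
```

Because the peak value is 1, the target is compatible with the sigmoid output layer named in the architecture step.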

Visualizations

Diagram 1: Workflow for Implant Optimization Pipeline

Diagram 2: Statistical Shape Model Construction & Use

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Computational Anatomy / Implant Planning |

|---|---|

| ITK-SNAP / 3D Slicer | Open-source software for manual segmentation, visualization, and mesh generation from medical images. Essential for creating ground truth data. |

| PyTorch / MONAI | Deep learning frameworks. MONAI provides domain-specific layers, losses, and metrics for 3D medical imaging, accelerating model development. |

| ShapeWorks | Specialized open-source toolkit for statistical shape modeling. Handles correspondence optimization and PCA on particle-based models. |

| VTK / PyVista | Visualization Toolkit. Used for 3D rendering, mesh processing, and creating interactive visualizations of shapes and implants. |

| FEBio / SOFA | Finite element analysis (FEA) and biomechanical simulation software. Critical for validating the mechanical feasibility of a planned implant position. |

| DICOM to NIfTI Converter (dcm2niix) | Robust tool for converting clinical DICOM files into the NIfTI format used by most research software, preserving metadata. |

| Elastix / SimpleITK | Toolkits for image registration. Used for atlas-based segmentation, inter-patient alignment, and non-rigid registration for template meshing. |

From Data to Deployment: Implementing Statistical and Deep Learning Models for Surgical Planning

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: My PCA model overfits to the training cohort and fails to generalize to unseen bone shapes from new patients. What could be the cause?

- A: This is often due to insufficient sample size or poor registration. Ensure your training dataset is large and diverse (e.g., >50-100 shapes). Re-examine your registration protocol; misaligned landmarks or surfaces will cause the PCA to model registration error rather than true biological shape variation. Consider using a groupwise registration approach prior to PCA.

Q2: How many principal components (PCs) should I retain for my statistical shape model (SSM) of the pelvis?

- A: There is no universal answer. Use objective criteria: retain enough PCs to explain ≥95-98% of the total cumulative variance. Always check the scree plot for an "elbow." Validate by reconstructing left-out shapes and measuring the accuracy. Insufficient PCs lose detail; too many introduce noise.

Q3: I get unstable PCs (mode swapping) when I rerun PCA on the same dataset. Why?

- A: PCA modes can become unstable when eigenvalues (variances) of consecutive PCs are very similar or equal. This indicates that the shape variation along those directions is not well-defined in your data. Use regularization or consider that these near-identical PCs represent a subspace of equivalent variation. For stability, you may bundle them together.

Q4: How can I integrate non-shape data (e.g., patient age, BMI) with my PCA shape model for implant optimization?

- A: After constructing the SSM, you can perform regression (e.g., Partial Least Squares Regression - PLSR) of the PC scores against the clinical parameters. This creates a predictive model linking demographics to shape, which can be used to generate patient-specific anatomies or stratify risk groups.

Troubleshooting Guides

Issue: Poor Compactness of the PCA Model

- Symptom: The model requires a large number of PCs to explain a modest amount of total variance.

- Diagnosis & Solution:

- Check Data Correspondence: Verify that all vertices/landmarks are in perfect anatomical correspondence across all samples. Poor registration is the leading cause.

- Check for Outliers: Run a leave-one-out analysis. Samples with very high reconstruction error may be outliers or registration failures. Visually inspect them.

- Increase Sample Size: The model may be trying to capture noise due to a small dataset.

Issue: Generated Shapes from the PCA Model are Unanatomical

- Symptom: Sampling along a PC (e.g., ±3√λ) produces shapes with impossible geometries.

- Diagnosis & Solution:

- Validate Parameter Ranges: The assumed Gaussian distribution of PC scores may not hold in the tails. Restrict sampling to observed ranges in the training data (e.g., min/max score for each PC).

- Incorporate Constraints: Use a more advanced model like a Probabilistic PCA (PPCA) or a Gaussian Process Morphable Model that can incorporate biomechanical constraints to ensure plausibility.

Key Experimental Protocol: Building a PCA-based Statistical Shape Atlas for the Femur

- Data Acquisition: Collect a cohort of N (>50 is recommended) 3D medical images (CT scans) of the target anatomy (e.g., femur) from a representative population.

- Segmentation & Surface Generation: Segment the bone from each image using thresholding or deep learning (e.g., a U-Net) to generate a 3D mesh.

- Establishing Correspondence:

- Select one mesh as the reference (template).

- Use a non-rigid iterative closest point (ICP) or model-based registration algorithm to deform the template mesh onto each target mesh in the cohort.

- This yields a set of K vertices for each of the N meshes, where each vertex k corresponds to the same anatomical location across all samples.

- Shape Vectorization: For each sample i, concatenate the (x, y, z) coordinates of all K vertices into a single shape vector s_i of length 3K.

- Data Alignment (Procrustes): Perform Generalized Procrustes Analysis (GPA) on the set of shape vectors to remove global translation, rotation, and scaling.

- Principal Component Analysis (PCA):

- Compute the mean shape: μ = (1/N) Σ_i s_i.

- Build the data matrix X = [s_1 - μ, s_2 - μ, ..., s_N - μ]^T.

- Compute the covariance matrix C = (1/(N-1)) X^T X.

- Perform eigenvalue decomposition: C = U Λ U^T.

- The columns of U are the principal components (modes of shape variation). Λ is a diagonal matrix of eigenvalues (variances).

- Model Generation: Any shape can now be approximated as: s = μ + U p, where p is a vector of PC scores (parameters).
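The PCA and model-generation steps can be followed literally in NumPy, as in the sketch below. The random placeholder data stands in for real aligned shape vectors, and the helper names `encode` and `decode` are assumptions introduced for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
N, K = 30, 40                        # hypothetical cohort size and vertex count
S = rng.normal(size=(N, 3 * K))      # placeholder for aligned shape vectors s_i

mu = S.mean(axis=0)                  # mean shape
X = S - mu                           # centered data matrix, shape (N, 3K)
C = X.T @ X / (N - 1)                # covariance matrix, shape (3K, 3K)

eigvals, U = np.linalg.eigh(C)       # eigh returns eigenvalues in ascending order
order = np.argsort(eigvals)[::-1]    # reorder so the largest variance comes first
eigvals, U = eigvals[order], U[:, order]

def encode(s, k):
    """PC scores p of shape s using the first k modes."""
    return U[:, :k].T @ (s - mu)

def decode(p):
    """Approximate shape s = mu + U p from PC scores p."""
    return mu + U[:, :len(p)] @ p

full = decode(encode(S[0], N - 1))   # N-1 modes span the centered training data
coarse = decode(encode(S[0], 5))     # truncated model loses detail
print("error with 5 modes:  ", np.linalg.norm(coarse - S[0]))
print("error with N-1 modes:", np.linalg.norm(full - S[0]))
```

Forming C explicitly is only practical for small K; for dense meshes the same modes are usually obtained from an SVD of X instead.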

Visualizations

Diagram Title: Workflow for Building a PCA-Based Statistical Shape Model

Diagram Title: PCA Shape Model Encoding, Decoding, and Variation Modes

Quantitative Data Summary

Table 1: Typical Variance Explained by First 10 PCs in a Pelvic SSM (Example Cohort, N=80)

| Principal Component | Eigenvalue (λ) | Variance Explained (%) | Cumulative Variance (%) |

|---|---|---|---|

| PC1 | 125.4 | 32.5% | 32.5% |

| PC2 | 89.7 | 23.2% | 55.7% |

| PC3 | 45.2 | 11.7% | 67.4% |

| PC4 | 22.1 | 5.7% | 73.1% |

| PC5 | 18.3 | 4.7% | 77.8% |

| PC6 | 12.8 | 3.3% | 81.1% |

| PC7 | 9.5 | 2.5% | 83.6% |

| PC8 | 7.1 | 1.8% | 85.4% |

| PC9 | 6.0 | 1.6% | 87.0% |

| PC10 | 4.9 | 1.3% | 88.3% |

Table 2: Reconstruction Accuracy vs. Number of PCs Used (Leave-One-Out Test)

| Number of PCs Retained | Explained Variance | Mean Surface Error (mm) | 95th Percentile Error (mm) |

|---|---|---|---|

| 5 | 77.8% | 1.8 mm | 4.5 mm |

| 10 | 88.3% | 1.1 mm | 2.8 mm |

| 15 | 93.5% | 0.7 mm | 1.9 mm |

| 20 | 96.8% | 0.4 mm | 1.2 mm |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for PCA-based Shape Modeling in Implant Research

| Item / Software / Library | Function & Purpose |

|---|---|

| 3D Slicer | Open-source platform for medical image visualization, segmentation, and initial mesh processing. |

| Python (SciKit-Learn, NumPy) | Core libraries for implementing PCA, data standardization, and matrix operations. |

| PyTorch / TensorFlow | Frameworks for developing deep learning segmentation models (the segmentation step of the protocol) and potential deep PCA variants. |

| MeshLab / PyMesh | Tools for cleaning, simplifying, and analyzing 3D surface meshes post-segmentation. |

| Deformable Registration Toolkit (e.g., ANTs, Elastix) | Software packages for performing the critical non-rigid registration to establish correspondence between meshes. |

| ShapeWorks | Dedicated open-source platform for building particle-based statistical shape models, an alternative/complement to PCA. |

| VTK / PyVista | Libraries for 3D visualization of mean shapes, principal modes, and implant-fit simulations. |

| Clinical CT/MRI Datasets | High-resolution, anonymized patient image databases (e.g., TCIA) for building the training cohort. |

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions (FAQs)

Q1: For our research on optimizing orthopedic implant position from CT scans, which architecture should I start with: a standard CNN, a U-Net, or a Vision Transformer? A: For segmentation tasks crucial to implant boundary delineation, U-Net is the established starting point due to its encoder-decoder structure and skip connections for precise localization. Use a CNN (e.g., ResNet) for initial classification tasks (e.g., assessing bone quality). Vision Transformers are promising for capturing global context but require significantly more data and computational resources; start with them only if you have a large, well-annotated dataset (>10,000 scans).

Q2: My U-Net model for segmenting the femoral canal is converging poorly—training loss is volatile. What are the first checks? A: Follow this checklist:

- Data & Labels: Verify annotation consistency across all training images. A single mislabeled slice can destabilize training.

- Learning Rate: It is likely too high. Reduce by an order of magnitude (e.g., from 1e-3 to 1e-4) and monitor.

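- Batch Size: Memory limits often force small batches (2, 4, 8) for medical volumes, but very small batches give noisy gradient estimates. If the loss remains volatile, increase the batch size or accumulate gradients over several steps.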

- Loss Function: For class imbalance (e.g., more background than canal voxels), switch from Dice Loss to a combined Dice + Cross-Entropy Loss.

Q3: When implementing a Vision Transformer for classifying implant stability from X-rays, I get "CUDA out of memory." How can I manage this? A: ViTs have high memory complexity (O(n²) for sequence length). Implement these strategies:

- Patch Size: Increase patch size (e.g., from 16x16 to 32x32) to reduce the sequence length.

- Gradient Accumulation: Use a smaller per-step batch size and accumulate gradients over multiple steps before updating weights. This preserves the effective batch size while lowering peak memory.

- Model Scaling: Start with a "Tiny" or "Small" ViT configuration (e.g., ViT-T/16) before scaling up.

- Mixed Precision: Use Automatic Mixed Precision (AMP) training to reduce memory footprint.
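The effect of patch size on the attention cost can be checked with simple arithmetic. The sketch below assumes a square 224x224 input, one extra class token, and 4-byte values; it counts the entries of a single attention matrix (one head, one layer), so it is an order-of-magnitude guide rather than a full memory model. The function name is hypothetical.

```python
def vit_attention_cost(image_size, patch_size, bytes_per_value=4):
    """Token count and size of one attention matrix (one head, one layer)."""
    tokens = (image_size // patch_size) ** 2 + 1   # +1 for the class token
    attn_entries = tokens ** 2                     # quadratic in sequence length
    return tokens, attn_entries, attn_entries * bytes_per_value

for patch in (16, 32):
    tokens, entries, nbytes = vit_attention_cost(224, patch)
    print(f"patch {patch}: {tokens} tokens, {entries} attention entries, {nbytes} bytes")
```

Going from 16x16 to 32x32 patches cuts the sequence from 197 to 50 tokens and the attention matrix from 38,809 to 2,500 entries, at the price of coarser spatial detail.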

Q4: How do I effectively combine statistical shape models (SSM) with a deep learning pipeline for implant positioning? A: A hybrid pipeline is effective. Use the U-Net to segment the target anatomy (e.g., pelvis). Then, fit a pre-computed Statistical Shape Model to the segmentation to obtain a statistically plausible 3D model. The SSM provides biomechanically constrained parameters (modes of variation) that serve as input to a final regression network (CNN) that predicts the optimal implant pose. This ensures predictions are anatomically realistic.

Q5: My dataset is small (~500 scans). How can I possibly train a deep model effectively? A: Leverage transfer learning and aggressive augmentation.

- Transfer Learning: Initialize your CNN encoder or ViT backbone with weights pre-trained on a large natural image dataset (ImageNet). Fine-tune on your medical data.

- Augmentation: Use domain-specific augmentations: random elastic deformations, simulated metal artifact noise, variations in contrast and brightness, and random rotations/translations within a physiologically plausible range.

Troubleshooting Guides

Issue: Model Overfitting on Small Medical Dataset Symptoms: Training loss decreases, but validation loss stagnates or increases after a few epochs. Performance on unseen patient data is poor. Step-by-Step Resolution:

- Increase Data Regularization:

- Apply stronger data augmentation (see Q5).

- Use spatial dropout (e.g., `SpatialDropout2D` in CNNs) instead of standard dropout.

- Increase Model Regularization:

- Add L2 weight regularization (kernel_regularizer) to convolutional layers.

- Increase dropout rates in the fully connected/classification heads.

- Reduce Model Capacity:

- Reduce the number of filters in CNN layers or the embedding dimension in ViTs.

- For U-Net, reduce the depth of the network.

- Apply Early Stopping: Halt training when validation loss has not improved for a defined number of epochs (patience=10-20).

- Use k-Fold Cross-Validation: Ensure your performance metric is stable across different data splits.
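The early-stopping rule in the list above is framework-independent and can be sketched in a few lines. The class name, the `min_delta` parameter, and the validation losses below are illustrative assumptions; a short patience of 3 is used only to keep the demo small.

```python
class EarlyStopping:
    """Stop when validation loss has not improved for `patience` consecutive epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Made-up validation curve: improves for three epochs, then drifts upward
val_losses = [0.90, 0.70, 0.60, 0.61, 0.62, 0.63, 0.64]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(loss):
        stopped_at = epoch
        break

print("stopped at epoch", stopped_at, "with best validation loss", stopper.best)
```

In a real run the model weights from the best epoch would also be saved and restored when training stops.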

Issue: Poor Boundary Accuracy in U-Net Segmentation Symptoms: Predicted implant region or bone segmentations are "blobby" and lack fine, crisp edges, reducing pose estimation accuracy. Step-by-Step Resolution:

- Inspect Loss Function: Switch to a boundary-aware loss like Boundary Loss or clDice, which penalizes misalignment of contours more heavily.

- Enhance Skip Connections: Ensure skip connections are copying feature maps correctly; consider using attention gates in skip connections to focus on relevant structures.

- Post-Processing: Apply a conditional random field (CRF) as a post-processing step to refine boundaries based on both the model's probability output and the original image's low-level features.

- Data Inspection: Check if your ground truth annotations themselves have poor boundary definition. Inconsistent labeling is a common root cause.

Issue: Vision Transformer Training is Slow and Unstable Symptoms: Training takes days, loss curves are jagged, and final performance is subpar. Step-by-Step Resolution:

- Optimizer & Scheduling: Use the AdamW optimizer (better weight decay handling) with a warmup learning rate schedule. Warm up for 5-10% of total epochs.

- Gradient Clipping: Clip gradients to a norm (e.g., 1.0) to prevent exploding gradients in deep ViT models.

- Layer Normalization (Pre-Norm): Ensure your ViT implementation uses Pre-Layer Normalization, not Post-Layer Normalization, for better gradient flow.

- Stochastic Depth: Implement stochastic depth (drop entire layers during training) for regularization and faster training.

- Linear Attention Approximation: For very high-resolution images, consider implementing a linear attention variant (e.g., Performer, Linformer) to reduce the O(n²) complexity.
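A warmup schedule of the kind recommended above can be written as a plain function of the epoch index. In this sketch the linear warmup covers 10% of training; the cosine decay that follows is an assumption added for illustration, since the text only specifies the warmup phase. The function name and default learning rate are hypothetical.

```python
import math

def lr_at_epoch(epoch, total_epochs, base_lr=1e-4, warmup_frac=0.1):
    """Linear warmup to base_lr, then cosine decay (the decay is an illustrative choice)."""
    warmup_epochs = max(1, int(round(total_epochs * warmup_frac)))
    if epoch < warmup_epochs:
        return base_lr * (epoch + 1) / warmup_epochs
    progress = (epoch - warmup_epochs) / max(1, total_epochs - warmup_epochs)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))

schedule = [lr_at_epoch(e, total_epochs=50) for e in range(50)]
print("first epochs:", schedule[:6])
print("last epoch:  ", schedule[-1])
```

With 50 epochs the rate ramps up over the first five epochs, peaks at `base_lr`, and then decays smoothly; the same function can be passed to a framework scheduler that accepts a per-epoch multiplier after dividing by `base_lr`.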

Quantitative Performance Comparison

Table 1: Typical Benchmark Performance of Architectures on Medical Imaging Tasks (Based on Recent Literature)

| Architecture | Typical Task | Key Metric (Avg. Range) | Data Requirement | Training Speed (Relative) | Key Strength for Implant Research |

|---|---|---|---|---|---|

| CNN (ResNet50) | Classification (Fracture Detection) | Accuracy: 88-94% | Medium (1k-10k images) | Fast | Excellent feature extraction for downstream tasks. |

| U-Net (with ResNet Encoder) | Segmentation (Bone/Organ) | Dice Score: 0.85-0.95 | Medium (500-5k images) | Moderate | Precise pixel-level segmentation for anatomy modeling. |

| Vision Transformer (ViT-B/16) | Classification/Detection | Accuracy: 90-96%* | Large (>10k images) | Slow | Captures long-range dependencies in full-body scans. |

| Hybrid (CNN + Transformer) | Segmentation/Registration | Dice Score: 0.88-0.96 | Medium-Large | Moderate-Slow | Balances local features (CNN) with global context (ViT). |

*When pre-trained on large datasets and fine-tuned effectively.

Experimental Protocols

Protocol 1: Training a U-Net for Automatic Femoral Canal Segmentation Objective: Generate accurate 3D masks of the femoral canal from CT to guide implant sizing and positioning. Methodology:

- Data Preprocessing: Clip Hounsfield Units (HU) to a fixed intensity window and rescale to the normalized range [0, 1]. Resample all CT volumes to isotropic voxel spacing (e.g., 1 mm). Extract coronal slices centered on the femur.

- Annotation: Use semi-automatic tools (ITK-SNAP) with manual correction by an expert radiologist to create ground truth masks.

- Augmentation: Apply on-the-fly, batch-wise augmentation: random rotation (±15°), scaling (±10%), elastic deformation (σ=3, α=50), and additive Gaussian noise.

- Model: Standard U-Net with 4 encoding/decoding levels, batch normalization, and ReLU activation.

- Training: Loss = 0.5 * Dice Loss + 0.5 * Binary Cross-Entropy. Optimizer: Adam (lr=1e-4). Batch size: 8. Train for 200 epochs with early stopping.

- Validation: 5-fold cross-validation. Primary metric: 3D Dice Similarity Coefficient (DSC) on a hold-out test set of unseen patients.
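The combined loss from the training step can be sketched in NumPy to show how the two terms behave. This is an illustration of the formula only, not a training-ready implementation (no gradients are computed); the smoothing constants, the function names, and the toy masks are assumptions.

```python
import numpy as np

def dice_loss(prob, target, eps=1e-6):
    """Soft Dice loss: 1 - 2|P.T| / (|P| + |T|), with eps to avoid division by zero."""
    intersection = np.sum(prob * target)
    return float(1.0 - (2.0 * intersection + eps) / (np.sum(prob) + np.sum(target) + eps))

def bce_loss(prob, target, eps=1e-7):
    """Binary cross-entropy averaged over voxels; probabilities are clipped for stability."""
    prob = np.clip(prob, eps, 1.0 - eps)
    return float(-np.mean(target * np.log(prob) + (1 - target) * np.log(1 - prob)))

def combined_loss(prob, target, w_dice=0.5, w_bce=0.5):
    """Loss = 0.5 * Dice Loss + 0.5 * Binary Cross-Entropy, as in the protocol."""
    return w_dice * dice_loss(prob, target) + w_bce * bce_loss(prob, target)

# Toy mask: a small foreground cube in a mostly empty volume (class imbalance)
target = np.zeros((16, 16, 16))
target[6:10, 6:10, 6:10] = 1.0
good = np.where(target == 1, 0.9, 0.1)   # confident, mostly correct prediction
bad = np.full_like(target, 0.5)          # uninformative prediction

print("good prediction:", combined_loss(good, target))
print("bad prediction: ", combined_loss(bad, target))
```

The Dice term is insensitive to the large number of background voxels, while the cross-entropy term gives smooth per-voxel gradients, which is why the two are often paired.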

Protocol 2: Fine-Tuning a Vision Transformer for Implant Loosening Detection Objective: Classify post-operative X-rays into "stable" vs. "loosening" categories. Methodology:

- Data Curation: Collect paired AP and lateral view X-rays. Label based on clinical follow-up and revision surgery records.

- Preprocessing: Apply histogram equalization, resize to 224x224, and normalize with ImageNet statistics (for pre-trained models).

- Model Initialization: Use a ViT-B/16 model pre-trained on ImageNet-21k. Replace the final classification head with a two-layer MLP for binary classification.

- Training Strategy:

- Phase 1: Freeze the transformer encoder, train only the new head for 20 epochs (lr=1e-3).

- Phase 2: Unfreeze the last 4 transformer blocks and the head, fine-tune for 50 epochs (lr=1e-5). Use linear warmup for the first 5 epochs.

- Evaluation: Report Accuracy, Precision, Recall, F1-Score, and ROC-AUC on a temporally separated test set (patients from a later time period).

Visualizations

Title: U-Net & SSM Pipeline for Implant Planning

Title: Vision Transformer (ViT) Architecture for X-ray Classification

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Libraries for Medical DL Research

| Item (Name & Version) | Category | Function/Benefit | Typical Use in Implant Research |

|---|---|---|---|

| MONAI (Medical Open Network for AI) | DL Framework | Domain-specific PyTorch extensions for healthcare imaging (losses, metrics, transforms). | Standardized 3D medical data loading, advanced augmentation (random cropping, simulated artifacts), and medical-specific metrics. |

| ITK-SNAP / 3D Slicer | Annotation & Visualization | Interactive software for semi-automatic and manual 3D image segmentation. | Creating high-quality ground truth labels for bones, implants, and anatomical regions of interest from CT/MRI. |

| SimpleITK / ITK | Image Processing | Comprehensive library for image registration, segmentation, and spatial transformation. | Preprocessing: resampling CT scans to isotropic voxels, aligning pre- and post-op scans, applying biomechanical transformations. |

| PyTorch / TensorFlow | Core DL Framework | Flexible deep learning libraries for building and training custom neural network models. | Implementing and experimenting with CNN, U-Net, and Transformer architectures. |

| NiBabel / PyDicom | Data I/O | Libraries for reading and writing neuroimaging (NIfTI) and DICOM file formats. | Loading clinical CT and MRI scans directly into Python for pipeline processing. |

| Elastix / ANTs | Advanced Registration | Toolboxes for robust, high-dimensional image registration. | Non-rigidly registering a statistical shape model atlas to a patient-specific scan for initialization. |

| TensorBoard / Weights & Biases | Experiment Tracking | Visualizing training metrics, model graphs, and hyperparameter comparisons. | Tracking loss/accuracy curves for different model architectures, comparing Dice scores across experiments. |

| scikit-learn | General ML | Tools for data splitting, statistical analysis, and traditional machine learning models. | Creating balanced train/val/test splits, performing principal component analysis (PCA) on shape model parameters. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During volumetric data pre-processing for landmark detection, I encounter severe artifacts and misalignment between my CT scan slices. What steps should I take? A: This is often due to patient movement or scanner calibration issues. Follow this protocol:

- Re-run rigid registration: Use a mutual information-based algorithm (e.g., as implemented in SimpleITK or ANTs) to align all slices to a reference middle slice.

- Apply intensity normalization: Use N4 bias field correction to correct for scanner intensity inhomogeneity.

- Filter artifacts: Apply a non-local means denoising filter (e.g., in OpenCV or ITK) to reduce noise while preserving edges.

- Validate: Check alignment by visualizing multiplanar reconstruction (MPR) views. The cortex and other rigid structures should be continuous.

Q2: My deep learning model for landmark segmentation converges but produces high false-positive predictions in areas of low bone density. How can I improve specificity? A: This indicates poor generalization to varied anatomical densities. Implement the following:

- Data Augmentation: Augment your training set with synthetic variations in Hounsfield Unit (HU) values to simulate osteopenia and osteoporosis.

- Loss Function: Switch from Dice Loss to a combined Dice + Focal Loss. Focal Loss reduces the weight of easy, background-negative examples, forcing the network to focus on harder, low-contrast regions.

- Post-Processing: Apply a connected-component analysis filter post-segmentation. Remove predicted blobs with a mean HU value (from the original scan) below a conservative threshold (e.g., 200 HU for trabecular bone).

Q3: The statistical shape model (SSM) fails to initialize correctly on atypical anatomies (e.g., severe dysplasia). How can I make the pipeline more robust? A: The SSM's mean shape may be too far from the target. Use a hybrid initialization:

- Coarse Deep Learning Detection: First, run a lightweight CNN (e.g., a U-Net) trained to predict a low-resolution, bounding box heatmap for the general region (e.g., entire pelvis).

- SSM Initialization: Use the centroid of this coarse segmentation as the initial pose for the SSM.

- Allow Greater Shape Deviation: In the first 10 iterations of SSM fitting, relax the allowed bound on the shape coefficients beyond the typical ±3√λ, then tighten it again for final refinement.

Q4: When integrating the landmark detection pipeline into the broader implant optimization workflow, runtime is too slow for clinical use. What are the key optimization points? A: Profile your pipeline. The bottlenecks are typically:

- Model Inference: Convert your trained PyTorch/TensorFlow models to ONNX and then to TensorRT (for NVIDIA GPUs) or OpenVINO (for Intel CPUs) for optimized inference.

- Data Loading: Use a dedicated data loader (e.g., PyTorch's DataLoader with multiple workers) and pre-cache all pre-processed volumes.

- Algorithmic: For the SSM, use a multi-resolution fitting approach—fit to a heavily downsampled volume first, then refine at full resolution.

Q5: How do I validate the accuracy of my automated landmarks against a manually annotated "gold standard" dataset, and what are acceptable error margins? A: Use standardized geometric error metrics and report them as per Table 1.

Table 1: Landmark Validation Metrics and Benchmarks

| Metric | Formula | Calculation Example | Typical Target for Pelvic Landmarks |

|---|---|---|---|

| Target Registration Error (TRE) | ‖L_auto - L_manual‖ in mm | Euclidean distance between corresponding points. | Mean TRE < 2.0 mm |

| Mean Absolute Error (MAE) | (1/n) * Σ ‖L_auto - L_manual‖ | Average TRE across all 'n' landmarks. | MAE < 1.5 mm |

| Standard Deviation (SD) | √[ Σ (x - μ)² / (n-1) ] | Spread of the TRE distribution. | SD < 1.2 mm |

| 95% Hausdorff Distance (HD95) | 95th percentile of max min-distance between point sets | Measures worst-case outliers. | HD95 < 4.0 mm |

Experimental Protocol for Validation:

- Dataset: Use at least 30 scans with landmarks annotated by 2+ experts.

- Compute Inter-observer Variability: Calculate MAE between expert annotations. This defines the "best possible" benchmark.

- Run Pipeline: Process all scans with your automated tool.

- Compute Metrics: Calculate TRE for each landmark, then aggregate MAE, SD, and HD95 as in Table 1.

- Statistical Test: Perform a paired t-test (or non-parametric equivalent) comparing your tool's error with the inter-observer variability. A non-significant result (p > 0.05) is consistent with comparable performance, although it does not by itself establish equivalence.
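The per-landmark error and its summary statistics can be computed as in the sketch below. The coordinates are made up for illustration, and the function name is an assumption. Note that the 95th percentile here is taken over landmark errors, which is a simpler summary than a surface-based HD95.

```python
import numpy as np

def landmark_metrics(auto_pts, manual_pts):
    """Per-landmark Euclidean error (mm) plus mean, sample SD, and 95th percentile."""
    errors = np.linalg.norm(np.asarray(auto_pts) - np.asarray(manual_pts), axis=-1)
    return {
        "per_landmark_mm": errors,
        "mean_mm": float(errors.mean()),
        "sd_mm": float(errors.std(ddof=1)),      # n-1 in the denominator, as in Table 1
        "p95_mm": float(np.percentile(errors, 95)),
    }

# Made-up coordinates (mm) for three landmarks
manual = np.array([[10.0, 20.0, 30.0], [40.0, 50.0, 60.0], [70.0, 80.0, 90.0]])
auto = manual + np.array([[1.0, 0.0, 0.0], [0.0, 2.0, 0.0], [0.0, 0.0, 3.0]])

metrics = landmark_metrics(auto, manual)
print(metrics)
```

Running the same function on two experts' annotations gives the inter-observer baseline called for in the protocol.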

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for 3D Landmark Detection Research

| Item / Solution | Function in Context | Example Product / Library |

|---|---|---|

| High-Resolution CT Scan Data | Raw 3D volumetric input for reconstruction and analysis. | Public: TCIA (Cancer Imaging Archive). Private: Institutional PACS. |

| Manual Annotation Software | Creating gold-standard landmark datasets for training & validation. | 3D Slicer, ITK-SNAP, Mimics. |

| Deep Learning Framework | Building, training, and deploying segmentation/landmark detection networks. | PyTorch, TensorFlow, MONAI. |

| Medical Image Processing Library | Core algorithms for registration, filtering, and metric calculation. | SimpleITK, ITK, NiBabel. |

| Statistical Shape Model Library | Building and fitting PCA-based shape models to new instances. | Deformetrica, ShapeWorks, SCALPEL. |

| Optimization & Analysis Suite | Statistical testing, data visualization, and script automation. | Python (SciPy, NumPy, Pandas, Matplotlib). |

| High-Performance Computing (HPC) | GPU clusters for training deep networks and processing large cohorts. | NVIDIA DGX Station, AWS EC2 (P3/G4 instances). |

Experimental Workflow Diagram

Title: Automated Landmark Detection Workflow

Statistical Shape Model Fitting Diagram

Title: SSM Fitting to Target Anatomy

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: During training of a probabilistic regression network for implant position prediction, my model outputs degenerate, overconfident distributions (near-zero variance). What is the cause and solution? A: This is often caused by numerical instability in the loss function or incorrect scaling of target variables.

- Cause: Using Negative Log-Likelihood (NLL) loss with an unstable implementation where the predicted variance appears in a denominator.

- Solution: Implement a numerically stable NLL. Ensure the model outputs the log variance (s = log(σ²)) instead of the variance or standard deviation directly. The loss is then: L = 0.5 * exp(-s) * (y_true - y_pred)² + 0.5 * s.

- Protocol: Modify your final network layer to have two outputs: [μ, log(σ²)]. Use the above stabilized loss. Also, standardize your target implant coordinates (e.g., translation in mm, rotation in degrees) to have zero mean and unit variance.
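The stabilized loss can be checked numerically with a few lines of NumPy. The sketch below only evaluates the formula (no network or gradients); the target values and the function name are assumptions for the demo.

```python
import numpy as np

def gaussian_nll(y_true, mu, log_var):
    """Mean of 0.5 * exp(-s) * (y - mu)^2 + 0.5 * s, where s = log(sigma^2)."""
    return float(np.mean(0.5 * np.exp(-log_var) * (y_true - mu) ** 2 + 0.5 * log_var))

y_true = np.array([0.0, 1.0, -1.0])          # standardized targets (made up)
mu = np.array([0.1, 0.8, -1.3])              # predicted means (made up)
residual_var = np.mean((y_true - mu) ** 2)   # empirical variance of the residuals

loss_matched = gaussian_nll(y_true, mu, np.log(residual_var))
loss_overconfident = gaussian_nll(y_true, mu, np.log(1e-6))

print("variance matched to residuals:", loss_matched)
print("near-zero variance:           ", loss_overconfident)
```

Because the variance never appears in a denominator, the loss stays finite, and a near-zero predicted variance is heavily penalized whenever the residuals are not also near zero.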

Q2: My Bayesian Neural Network (BNN) for uncertainty quantification in implant positioning is prohibitively slow to train and infer. Are there efficient alternatives? A: Yes, consider Monte Carlo (MC) Dropout or Deep Ensemble variants as efficient approximations.

- Cause: Full BNNs with variational inference or MCMC sampling have high computational overhead.

- Solution: Implement MC Dropout. Enable dropout at training and inference time. For each input, run 100-1000 forward passes with dropout active. The mean of the outputs is the final prediction; the standard deviation is the epistemic uncertainty.

- Protocol: After training a standard dropout-equipped regression network, perform inference using `model.train()` mode (in PyTorch) or `training=True` (in TensorFlow) to keep dropout active. Collect multiple samples into a tensor and compute statistics.

Q3: How do I integrate statistical shape models (SSM) of bone anatomy with deep learning regression networks effectively? A: Use the SSM parameters as a compact, physiologically meaningful input feature vector to the network.

- Cause: Raw 3D mesh or image data is high-dimensional and may obscure key morphological parameters relevant to implant fit.

- Solution: Pre-process your dataset by fitting the SSM to each patient's anatomy (e.g., CT scan). Extract the primary shape mode coefficients.

- Protocol:

- Align and scale all bone meshes in your dataset.

- Perform Principal Component Analysis (PCA) to build the SSM.

- For each patient mesh, project it onto the SSM to obtain a vector of k coefficients (e.g., [β₁, β₂, ..., βₖ]).

- Use this k-dimensional vector, concatenated with demographic data (age, BMI), as input to your regression network predicting optimal implant parameters.
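The projection and concatenation steps can be sketched as follows. The random shape matrix is a placeholder for aligned meshes, and the function name, the choice of k=5, and the demographic values are illustrative assumptions.

```python
import numpy as np

def ssm_features(shape_vec, mean_shape, modes, k, demographics):
    """First k shape coefficients of a mesh, concatenated with demographic values."""
    beta = modes[:k] @ (shape_vec - mean_shape)   # projection onto the first k modes
    return np.concatenate([beta, np.asarray(demographics, dtype=float)])

rng = np.random.default_rng(2)
shapes = rng.normal(size=(25, 90))                # placeholder for aligned shape vectors
mean_shape = shapes.mean(axis=0)
# Rows of `modes` are the principal components of the centered shapes
_, _, modes = np.linalg.svd(shapes - mean_shape, full_matrices=False)

# Hypothetical patient: age 63, BMI 27.4
features = ssm_features(shapes[0], mean_shape, modes, k=5, demographics=[63.0, 27.4])
print(features.shape)
```

Since shape coefficients and demographic values live on very different scales, standardizing each feature before it enters the regression network is usually worthwhile.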

Q4: When validating my model on a new clinical dataset, the probabilistic outputs show low uncertainty even for clearly erroneous predictions. What does this indicate? A: This indicates a mismatch between training and test data (domain shift) and that your model is poorly capturing epistemic (model) uncertainty.

- Cause: The model was likely trained only with Maximum Likelihood Estimation (MLE) which captures aleatoric (data) uncertainty but not epistemic uncertainty. It's overconfident on out-of-distribution samples.

- Solution: Incorporate methods that quantify epistemic uncertainty. Use Deep Ensembles or MC Dropout as in Q2.

- Protocol: Switch from a deterministic network to a Deep Ensemble. Train 5-10 independent models with different random weight initializations on the same data. At inference, compute the mean and variance across the ensemble's predictions. The variance of the member means reflects epistemic uncertainty; when the members are probabilistic networks, adding the average of their predicted variances gives the total uncertainty.

Experimental Protocols Cited in FAQs

Protocol 1: Stabilized Probabilistic Regression Training

- Data Preprocessing: Normalize input features (e.g., SSM coefficients) to [0,1]. Standardize target implant parameters (6-DOF pose) to zero mean, unit variance.

- Model Architecture: Design a fully connected network with final layer outputting two values: out_1 = μ, out_2 = log(σ²). Initialize bias for log(σ²) to a small value (e.g., -3).

- Loss Function: Implement stabilized NLL: Loss = 0.5 * exp(-out_2) * ||y_true - out_1||² + 0.5 * out_2.

- Training: Use Adam optimizer (lr=1e-4), batch size of 32, for 1000 epochs with early stopping.
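The loss in Protocol 1 can be written out in a few lines of plain Python. The sketch below is illustrative only: it assumes a single scalar log-variance shared across the target vector, as the formula is written, and the function name `stabilized_nll` and the example values are hypothetical. It also shows why predicting log(σ²) is convenient: the loss is minimized when the predicted variance equals the squared error, and no positivity constraint or division is needed.

```python
import math

def stabilized_nll(y_true, mu, log_var):
    """Gaussian negative log-likelihood (up to a constant) with log-variance output."""
    sq_err = sum((y - m) ** 2 for y, m in zip(y_true, mu))
    # exp(-log_var) equals 1 / sigma^2, so large errors are down-weighted
    # when the network predicts high variance; 0.5 * log_var penalizes that.
    return 0.5 * math.exp(-log_var) * sq_err + 0.5 * log_var

y_true = [1.0, 2.0]
mu = [1.0, 0.0]                      # squared error = 4
best_log_var = math.log(4.0)         # variance that matches the squared error

# Evaluate the loss for an overconfident, a neutral, the matching,
# and an underconfident variance prediction.
losses = {lv: stabilized_nll(y_true, mu, lv) for lv in (-3.0, 0.0, best_log_var, 3.0)}
lowest = min(losses, key=losses.get)
```

Here `lowest` is the matching log-variance: an overconfident prediction (log-variance of -3) is penalized far more heavily than an underconfident one.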

Protocol 2: MC Dropout for Efficient Uncertainty

- Model Modification: Insert Dropout layers (rate=0.1) after each hidden layer in a trained deterministic regression network.

- Stochastic Inference: Perform T=200 forward passes for a given input, with dropout layers active.

- Aggregation: Stack outputs into matrix P ∈ R^(T×6) (for 6 pose parameters). Compute predictive mean: μ_mc = mean(P, axis=0). Compute predictive variance (total uncertainty): σ²_total = var(P, axis=0).
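The stochastic inference and aggregation steps can be illustrated without a deep learning framework. In the sketch below, `toy_forward` is a hypothetical stand-in for a real network with one dropout layer, and its weights are arbitrary; only the aggregation logic (T passes, per-parameter mean and population variance) mirrors the protocol.

```python
import random
import statistics

def toy_forward(x, rng, rate=0.1):
    """Hypothetical stand-in for a regression network with one dropout layer."""
    hidden = [x * w for w in (0.5, -1.0, 2.0, 1.5)]
    # Inverted dropout: zero each unit with probability `rate`, rescale the rest.
    dropped = [0.0 if rng.random() < rate else h / (1.0 - rate) for h in hidden]
    total = sum(dropped)
    return [total * s for s in (1.0, 0.5, -0.5, 0.1, 0.2, 0.3)]  # 6 pose parameters

def mc_dropout_predict(forward, x, T=200, seed=0):
    """Run T stochastic passes and aggregate per pose parameter."""
    rng = random.Random(seed)
    passes = [forward(x, rng) for _ in range(T)]       # rows of P, shape T x 6
    columns = list(zip(*passes))                       # one column per parameter
    mean = [statistics.fmean(c) for c in columns]      # mean(P, axis=0)
    var = [statistics.pvariance(c) for c in columns]   # var(P, axis=0)
    return mean, var

mean, var = mc_dropout_predict(toy_forward, x=1.0)
```

With a framework such as PyTorch, the same pattern applies, provided the dropout layers are kept in training mode during inference.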

Protocol 3: Deep Ensemble for Robust Uncertainty Quantification

- Independent Training: Train M=5 identical probabilistic regression networks (from Protocol 1) from different random seeds.

- Inference: For each input, obtain M sets of parameters (μ_i, σ²_aleatoric_i), one from each network.

- Uncertainty Decomposition: Compute:

  - Predictive Mean: μ_* = (1/M) Σ μ_i

  - Total Variance: σ²_total = (1/M) Σ (σ²_aleatoric_i + μ_i²) - μ_*²

  - This separates epistemic (σ²_epistemic = σ²_total - (1/M) Σ σ²_aleatoric_i) and aleatoric uncertainty.
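The decomposition in Protocol 3 can be checked with a small worked example. The sketch below handles one scalar pose parameter; the function name `decompose` and the five member outputs are hypothetical values chosen for illustration.

```python
def decompose(mus, aleatoric_vars):
    """Combine M ensemble members' (mu_i, sigma^2_i) for one scalar output."""
    M = len(mus)
    mu_star = sum(mus) / M                               # predictive mean
    aleatoric = sum(aleatoric_vars) / M                  # mean predicted variance
    total = sum(v + m ** 2 for m, v in zip(mus, aleatoric_vars)) / M - mu_star ** 2
    epistemic = total - aleatoric                        # spread of the member means
    return mu_star, total, aleatoric, epistemic

# Hypothetical outputs of M=5 members for one pose parameter.
mus = [1.0, 2.0, 3.0, 2.0, 2.0]
aleatoric_vars = [0.5, 0.5, 0.5, 0.5, 0.5]
mu_star, total, aleatoric, epistemic = decompose(mus, aleatoric_vars)
```

For these inputs the predictive mean is 2.0, the aleatoric term is 0.5, and the epistemic term is approximately 0.4, which equals the population variance of the member means. Members that disagree on out-of-distribution inputs therefore raise the epistemic term even when each member is individually confident.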

Table 1: Comparison of Uncertainty Quantification Methods in Implant Position Prediction

| Method | Training Speed (rel.) | Inference Speed (rel.) | Captures Aleatoric Uncertainty? | Captures Epistemic Uncertainty? | Mean Absolute Error (mm/deg) |
|---|---|---|---|---|---|
| Deterministic NN | 1.0 (baseline) | 1.0 (fastest) | No | No | 1.21 |
| Probabilistic NN (MLE) | ~1.1 | 1.0 | Yes | No | 1.18 |
| MC Dropout | ~1.2 | 100x slower | Yes | Approximate | 1.15 |
| Deep Ensemble (M=5) | 5x slower | 5x slower | Yes | Yes | 1.10 |
| Full Bayesian NN | 50x slower | 1000x slower | Yes | Yes | 1.16 |

Table 2: Impact of Input Features on Model Performance for Femoral Implant Positioning

| Input Feature Set | RMSE (mm) | RMSE (deg) | Predictive Log-Likelihood ↑ |
|---|---|---|---|
| Raw Voxel (CT Scan) | 2.45 | 3.21 | -1.84 |
| Segmented Surface Mesh | 1.89 | 2.54 | -1.12 |
| SSM Coefficients (k=10) | 1.32 | 1.88 | -0.67 |
| SSM Coeff. + Patient Demographics | 1.28 | 1.79 | -0.61 |

Visualizations

[Figure: Implant Position Prediction Workflow]

[Figure: Deep Ensemble Uncertainty Quantification]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Software for Implant Optimization Research

| Item Name | Category | Function/Brief Explanation |
|---|---|---|
| PyTorch / TensorFlow Probability | Software Library | Core frameworks for building and training probabilistic neural networks with built-in probability distributions and loss functions. |
| VTK / SimpleITK | Software Library | For processing and visualizing 3D medical image data (CT/MRI) and performing mesh operations essential for SSM. |
| ShapeWorks | Software Toolkit | Open-source platform for building statistical shape models from segmented mesh populations. |
| 3D Slicer | Software | Clinical image computing platform for segmentation, registration, and 3D visualization of patient anatomy. |
| Synthetic Bone Dataset | Data | CT scans of synthetic femur/tibia models with known density and geometry, useful for controlled pilot studies. |
| Biomechanical Simulation Software (FEBio, ANSYS) | Software | Validates predicted implant positions by simulating stress, strain, and micromotion to check for biomechanical stability. |
| Automated Segmentation NN (e.g., nnU-Net) | Model/Software | Provides high-quality, consistent bone segmentations from CT scans as a prerequisite for SSM or direct learning. |
| Bayesian Optimization Library (Ax, BoTorch) | Software | For hyperparameter tuning of complex regression networks and optimizing acquisition functions in active learning loops. |

Technical Support Center: Troubleshooting & FAQs

This support center addresses common issues encountered when integrating digital twin, AR/VR, and CAD/CAM technologies into surgical workflows for research on optimizing implant position using statistical and deep learning methods.

Frequently Asked Questions (FAQs)

Q1: During the registration of a pre-operative digital twin to the intra-operative patient, the system reports a mean target registration error (mTRE) exceeding 2.5 mm. What are the primary troubleshooting steps?

A: High mTRE typically stems from issues in the 3D reconstruction or landmark correspondence. First, verify the fidelity of the pre-operative imaging (CT/MRI) segmentation. Ensure the surface mesh is watertight and free of artifacts. Second, check the intra-operative tracking system (e.g., optical or electromagnetic) for line-of-sight issues or metal interference. Re-calibrate all trackers. Third, validate the fiducial or surface landmark selection. Use a minimum of 4 non-coplanar fiducials. If using a surface scan, ensure the overlapping region with the digital twin is >70%. Consider switching from paired-point to a robust point-cloud algorithm (e.g., Iterative Closest Point with trimming) for better outlier rejection.
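To make the paired-point step concrete, the sketch below estimates a least-squares rigid transform from fiducials with the SVD-based (Kabsch) method and then evaluates mTRE on separate target points. It is an illustration under stated assumptions: the fiducial layout, the 0.3 mm localization noise, the true pose, and the function names are all hypothetical, and a real navigation system would use its own registration routine.

```python
import numpy as np

def rigid_register(src, dst):
    """Least-squares rigid transform (R, t) mapping paired points src -> dst."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)            # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))         # guard against reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t

def mean_tre(R, t, targets_src, targets_dst):
    """Mean target registration error over points not used for registration."""
    mapped = targets_src @ R.T + t
    return float(np.linalg.norm(mapped - targets_dst, axis=1).mean())

# Hypothetical setup: four non-coplanar fiducials (mm) and a known true pose.
angle = np.deg2rad(30.0)
R_true = np.array([[np.cos(angle), -np.sin(angle), 0.0],
                   [np.sin(angle),  np.cos(angle), 0.0],
                   [0.0, 0.0, 1.0]])
t_true = np.array([5.0, -3.0, 12.0])
fiducials = np.array([[0.0, 0.0, 0.0], [100.0, 0.0, 0.0],
                      [0.0, 100.0, 0.0], [0.0, 0.0, 100.0]])
targets = np.array([[50.0, 50.0, 50.0], [20.0, 70.0, 10.0]])

rng = np.random.default_rng(0)
measured = fiducials @ R_true.T + t_true + rng.normal(scale=0.3, size=fiducials.shape)
R, t = rigid_register(fiducials, measured)
mtre = mean_tre(R, t, targets, targets @ R_true.T + t_true)
```

Evaluating the error on targets that were not used as fiducials matters, because the residual at the fiducials themselves tends to understate the error at the surgical site.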

Q2: In our AR headset visualization, the holographic implant model appears unstable or "jitters" relative to the physical anatomy. How can this be minimized?

A: Jitter is primarily caused by latency and tracking inaccuracies in the AR system's simultaneous localization and mapping (SLAM). First, ensure the operating room environment has sufficient visual texture and stable lighting for the SLAM camera to track. Add passive QR code markers in fixed positions around the field as a stable global reference. Second, reduce computational latency by simplifying the holographic model's polygon count for the implant visualization. Third, implement a temporal filter (e.g., a Kalman filter) on the pose data stream to smooth the display. Check that the system's refresh rate is synchronized (ideally ≥ 90 Hz).
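The temporal filtering idea can be sketched with a one-dimensional Kalman filter applied to a single pose coordinate. This is a deliberately simplified illustration: it assumes a constant-position motion model, the noise variances are hypothetical tuning values, and a real AR pipeline would filter the full 6-DOF pose (with rotations handled separately, for example as quaternions).

```python
import random
import statistics

def kalman_smooth(measurements, process_var=1e-4, meas_var=0.25):
    """Filter a 1D stream assuming a (nearly) constant underlying position."""
    x, p = measurements[0], 1.0        # initial state estimate and its variance
    out = [x]
    for z in measurements[1:]:
        p += process_var               # predict: position unchanged, uncertainty grows
        k = p / (p + meas_var)         # Kalman gain
        x += k * (z - x)               # update with the new measurement
        p *= (1.0 - k)                 # reduced uncertainty after the update
        out.append(x)
    return out

# Hypothetical jittery x-coordinate (mm) of a static hologram anchor.
rng = random.Random(0)
raw = [10.0 + rng.gauss(0.0, 0.5) for _ in range(200)]
smoothed = kalman_smooth(raw)

raw_var = statistics.pvariance(raw[20:])
smoothed_var = statistics.pvariance(smoothed[20:])
```

A smaller `process_var` gives a steadier display at the cost of more lag when the anatomy actually moves, so the value is usually tuned against the expected motion.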

Q3: When exporting a statistically shaped implant from our deep learning framework to the CAD/CAM milling machine, the file fails with "non-manifold geometry" errors. What causes this and how is it fixed?

A: This error indicates the 3D mesh has edges shared by more than two faces, making it unsuitable for manufacturing. This is a common output issue from some neural network-based shape generators. The fix requires a post-processing pipeline:

- Import the generated STL/PLY file into a repair tool (e.g., MeshLab, Netfabb).

- Run automated repair scripts: "Remove Duplicate Faces," "Close Holes," and "Make Manifold."

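- Re-mesh the surface using Poisson surface reconstruction, which produces a single, closed, watertight surface. A ball-pivoting algorithm is an alternative, but it can leave holes where sampling is sparse, so re-check for open boundaries afterward.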

- Validate the mesh using the CAD software's analysis tool (should report "Manifold" and "No Self-Intersections").
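Before sending a generated mesh through the repair pipeline above, it can help to check it programmatically. The sketch below counts how many triangles share each edge: more than two indicates a non-manifold edge, exactly one indicates an open boundary. It is a minimal check on a triangle index list (the function names are hypothetical) and does not detect non-manifold vertices or self-intersections.

```python
from collections import Counter

def edge_face_counts(faces):
    """Count the number of triangles incident to each undirected edge."""
    counts = Counter()
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            counts[(min(u, v), max(u, v))] += 1
    return counts

def mesh_report(faces):
    """Summarize edge-level manifoldness of a triangle mesh."""
    counts = edge_face_counts(faces)
    non_manifold = sorted(e for e, n in counts.items() if n > 2)
    boundary = sorted(e for e, n in counts.items() if n == 1)
    return {
        "non_manifold_edges": non_manifold,
        "boundary_edges": boundary,
        "watertight": not non_manifold and not boundary,
    }

# A closed tetrahedron: every edge is shared by exactly two faces.
tetra = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
good = mesh_report(tetra)

# Adding a stray "fin" triangle makes edge (0, 1) shared by three faces.
bad = mesh_report(tetra + [(0, 1, 4)])
```

For the second mesh the report lists edge (0, 1) as non-manifold and the two new edges touching vertex 4 as boundary edges.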

Q4: Our deep learning model for predicting optimal implant position generalizes poorly to new patient demographics not well-represented in our training set. What data augmentation or model strategies are recommended?

A: This is a domain shift problem. Strategies include:

- Data Augmentation: Beyond spatial transforms, use simulation-based augmentation. Apply synthetic morphological variations to your training meshes using statistical shape model (SSM) principal component analysis (PCA) modes to generate plausible new anatomies.

- Transfer Learning: Pre-train your network on a larger, public dataset of general anatomical shapes (e.g., from public CT repositories) before fine-tuning on your specific implant dataset.

- Domain Adaptation: Incorporate a domain confusion loss or use adversarial training to learn features invariant to specific demographic descriptors.

- Algorithm Choice: Consider switching to or incorporating a Bayesian deep learning framework, which can provide uncertainty estimates. High uncertainty on novel demographics can flag cases for expert review.
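The SSM-based augmentation strategy can be sketched as sampling mode coefficients and reconstructing new shapes. The example below is illustrative only: the shape model is a random placeholder rather than a model fitted to real anatomy, the ±3 standard deviation clipping is a common plausibility convention assumed here, and `sample_shapes` is a hypothetical helper name.

```python
import numpy as np

def sample_shapes(mean_shape, modes, eigenvalues, n, max_sd=3.0, seed=0):
    """Draw n synthetic shapes by sampling PCA mode coefficients."""
    rng = np.random.default_rng(seed)
    sd = np.sqrt(eigenvalues)                      # std. dev. along each mode
    beta = rng.normal(size=(n, len(sd))) * sd      # beta_i ~ N(0, lambda_i)
    beta = np.clip(beta, -max_sd * sd, max_sd * sd)  # stay within plausible range
    shapes = mean_shape + beta @ modes             # (n, 3 * n_points)
    return shapes, beta

# Placeholder model: 30 points, 4 orthonormal modes with assumed variances.
n_points, k = 30, 4
rng = np.random.default_rng(1)
mean_shape = rng.normal(size=3 * n_points)
modes = np.linalg.qr(rng.normal(size=(3 * n_points, k)))[0].T   # orthonormal rows
eigenvalues = np.array([4.0, 2.0, 1.0, 0.5])

shapes, beta = sample_shapes(mean_shape, modes, eigenvalues, n=100)
```

Each row of `shapes` can be reshaped to (n_points, 3) and paired with a re-derived implant target before being added to the training set; the implant labels for synthetic anatomies still need a principled source, which this sketch does not address.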

Troubleshooting Guides

Guide 1: Resolving CAD/CAM Interface Incompatibility

Issue: The CAM software cannot interpret the curvature or tolerance specifications from the research-grade CAD file.

Solution Protocol:

- Re-export Geometry: From your research CAD/CAM interface (e.g., 3D Slicer, MITK), export the implant design as a high-resolution STEP file (AP214 or AP242 schema) in addition to STL. STEP files preserve precise boundary representation (B-Rep) data.

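- Define Tolerances Explicitly: In the CAD software, before export, set the chordal tolerance for any NURBS surfaces to ≤ 0.01 mm, and set the angular tolerance (which is specified in degrees, not millimeters) explicitly rather than relying on the export defaults.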

- Use Intermediate Software: Import the STEP file into an intermediate, manufacturing-focused CAD package (e.g., FreeCAD, Fusion 360). Use the "Heal Geometry" tool, then re-export as a new STEP file or directly generate G-code if a post-processor for your specific mill is available.

- Verification: Open the final file in the target CAM suite and run a toolpath simulation to verify no collisions or impossible milling angles exist.

Guide 2: Calibrating an Optical See-Through AR Headset for Metric Accuracy

Objective: To achieve a visualization error of < 1.5 mm at a working distance of 50 cm for implant positioning guidance.

Protocol:

- Setup: Mount the AR headset (e.g., HoloLens 2) securely on a fixture. Position a calibrated checkerboard pattern (square size: 2.5 cm) at 50 cm in the headset's field of view. Use a high-accuracy optical tracker (e.g., OptiTrack) as ground truth.

- Display Calibration Pattern: Render a virtual clone of the checkerboard in the headset's display.

- Data Collection: Using the headset's eye-tracking cameras (via research mode APIs), capture the perceived 2D positions of multiple virtual checkerboard corners. Simultaneously, record their true 3D positions from the optical tracker. Repeat for 20 different pattern poses.

- Compute Correction: Calculate the homography or a non-linear distortion map between the perceived 2D and the expected 2D projections of the true 3D points.

- Apply and Validate: Apply this correction matrix to all subsequent holographic renders. Validate by placing a new virtual object at a known distance and measuring its perceived position error against the physical tracker.
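For the homography option in the "Compute Correction" step, a direct linear transform (DLT) fit is one standard approach. The sketch below assumes noise-free 2D correspondences and a hypothetical ground-truth distortion; the function names and numeric values are illustrative, and a production calibration would normalize coordinates and use a robust estimator.

```python
import numpy as np

def fit_homography(src, dst):
    """Estimate H (3x3) such that dst ~ H @ src in homogeneous coordinates."""
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    # The solution is the right singular vector with the smallest singular value.
    _, _, Vt = np.linalg.svd(np.asarray(rows, dtype=float))
    H = Vt[-1].reshape(3, 3)
    return H / H[2, 2]

def apply_homography(H, pts):
    """Apply H to an (n, 2) array of points."""
    pts_h = np.hstack([pts, np.ones((len(pts), 1))])
    mapped = pts_h @ H.T
    return mapped[:, :2] / mapped[:, 2:3]

# Hypothetical perceived checkerboard corners (mm, 25 mm squares).
perceived = np.array([[25.0 * i, 25.0 * j] for i in range(5) for j in range(4)])
H_true = np.array([[1.02, 0.01, 3.0],
                   [-0.02, 0.98, -2.0],
                   [1e-4, 2e-4, 1.0]])
expected = apply_homography(H_true, perceived)     # where the corners should appear

H_est = fit_homography(perceived, expected)
corrected = apply_homography(H_est, perceived)
```

At least four correspondences with no three collinear are needed; using many corners across the 20 pattern poses makes the estimate less sensitive to measurement noise.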

Table 1: Comparison of Common Tracking Technologies for Surgical Workflow Integration

| Technology | Typical Static Accuracy | Latency | Key Advantage | Primary Limitation in OR | Best For |
|---|---|---|---|---|---|
| Optical (IR Passive) | 0.1 - 0.3 mm | 10 - 20 ms | Very High Accuracy | Line-of-sight required | Lab-based validation, high-precision CAD/CAM registration |
| Optical (IR Active) | 0.2 - 0.5 mm | 15 - 30 ms | No camera calibration drift | Wired tools, cost | Integrated navigation systems |
| Electromagnetic | 1.0 - 2.0 mm | 15 - 40 ms | No line-of-sight needed | Distorted by metal/electronics | ENT, biopsy with metal tools |
| Inside-Out (AR SLAM) | 1.0 - 5.0 mm (dynamic) | 30 - 50 ms | No external infrastructure | Drift over time, map memory | AR visualization, context-aware guidance |

Table 2: Performance Metrics for Implant Position Prediction Models (Hypothetical Data)

| Model Architecture | Mean ASD (mm) | 95% Hausdorff Distance (mm) | Inference Time (ms) | Computational Requirements (FLOPs) | Interpretability |
|---|---|---|---|---|---|
| 3D U-Net (Baseline) | 1.45 | 4.32 | 120 | ~15 G | Low |
| VoxelCNN with Attention | 1.28 | 3.95 | 85 | ~22 G | Medium |
| Statistical Shape Model (SSM) | 1.82 | 5.21 | 10 | <1 G | High |
| Hybrid SSM + Deep Network | 1.15 | 3.41 | 95 | ~18 G | Medium-High |

ASD: Average Surface Distance; FLOPs: Floating Point Operations.
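For readers reproducing metrics of this kind, the sketch below computes a symmetric average surface distance and a 95th-percentile Hausdorff distance between two sampled surfaces. Definitions vary between papers and toolkits; the pooling and percentile conventions used here are assumptions, the brute-force distance matrix only suits small point sets, and the function names are hypothetical.

```python
import numpy as np

def nearest_distances(a, b):
    """Distance from each point in a to its nearest neighbor in b."""
    d = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=2)
    return d.min(axis=1)

def surface_metrics(a, b):
    """Return (symmetric ASD, HD95) for two (n, 3) surface point sets in mm."""
    d_ab, d_ba = nearest_distances(a, b), nearest_distances(b, a)
    asd = (d_ab.sum() + d_ba.sum()) / (len(d_ab) + len(d_ba))
    # HD95: the larger of the two directed 95th-percentile distances.
    hd95 = max(np.percentile(d_ab, 95), np.percentile(d_ba, 95))
    return float(asd), float(hd95)

# Hypothetical surfaces: a planar grid and the same grid offset by 1 mm.
plane = np.array([[x, y, 0.0] for x in range(5) for y in range(5)], dtype=float)
offset = plane + np.array([0.0, 0.0, 1.0])

asd_same, hd95_same = surface_metrics(plane, plane)
asd_shift, hd95_shift = surface_metrics(plane, offset)
```

For the offset grid both metrics equal 1 mm, since every point's nearest neighbor lies directly across the gap; on real anatomy HD95 is typically well above ASD because it reflects the worst local deviations.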

Experimental Protocols

Protocol 1: Generating a Patient-Specific Digital Twin for Pre-Surgical Planning

Objective: To create a biomechanically simulated digital twin from patient CT data for implant stress analysis.

Methodology:

- Image Acquisition & Segmentation: Obtain high-resolution CT scans (slice thickness ≤ 0.625 mm). Use a deep learning segmentation tool (e.g., nnU-Net) to label bone tissue, creating a 3D binary mask.

- Mesh Generation & Processing: Convert the mask to a surface mesh (Marching Cubes). Apply smoothing and decimation to reduce polygons while preserving shape (target: 100k-200k faces). Ensure mesh is manifold and watertight.

- Material Property Assignment: Assign heterogeneous material properties based on CT Hounsfield Units (HU). Use a validated density-elasticity relationship (e.g., ρ = 0.001 * HU + 1.0 g/cm³; E = 3390 * ρ^1.2 MPa).

- Finite Element Model (FEM) Setup: Import mesh into FEM software (e.g., FEBio, Abaqus). Apply boundary conditions (fixed constraints at proximal ends) and load cases (joint contact forces from literature).