Uncertainty in Computational Biomechanics: A Complete Guide to Model Errors for Researchers

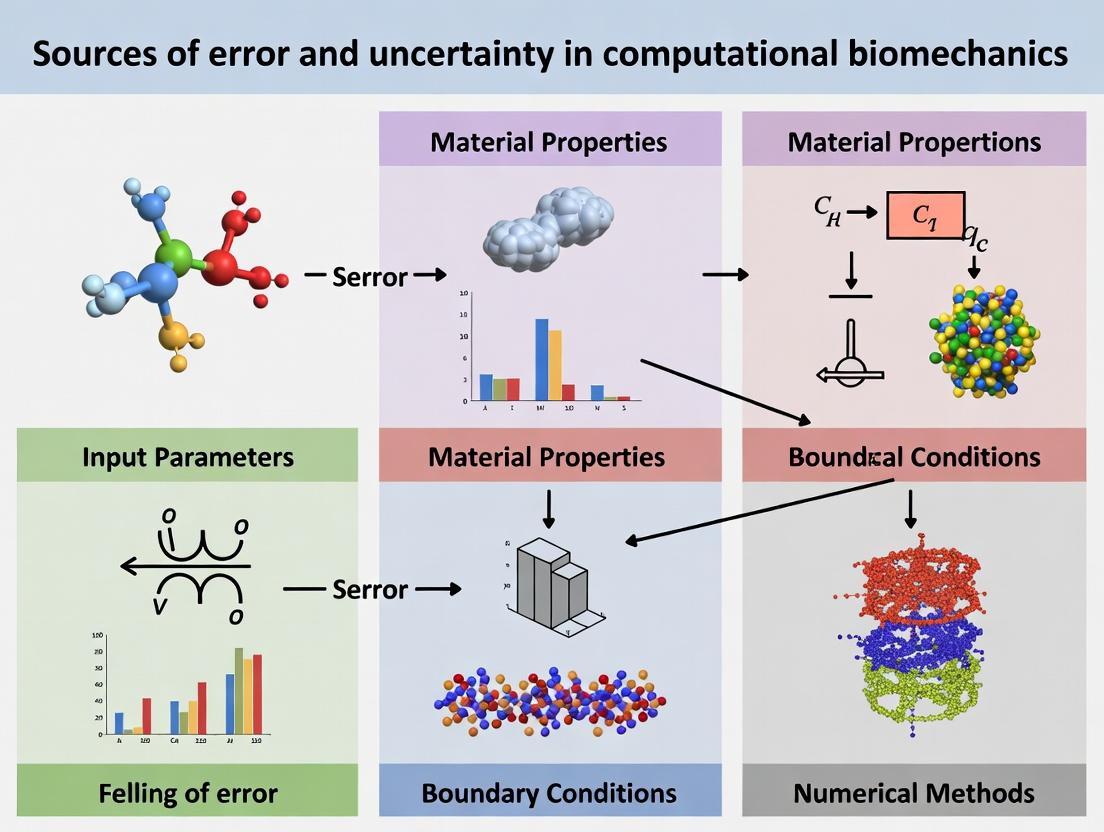

Abstract

This article provides a comprehensive analysis of error and uncertainty in computational biomechanics, targeting researchers and drug development professionals. It explores the foundational sources of error in biological modeling, examines methodological challenges and their impact on applications like implant design and surgical planning, offers strategies for troubleshooting and optimizing model robustness, and discusses the critical frameworks for validation and comparison of computational predictions against experimental data. The guide synthesizes current best practices for quantifying and managing uncertainty to enhance the reliability of simulations in biomedical research.

The Core Sources of Error in Biomechanical Models: From Biological Variability to Mathematical Assumptions

In computational biomechanics, the reliability of models predicting phenomena like bone remodeling, soft tissue mechanics, and drug delivery is paramount. The concepts of error (a measurable discrepancy between a model's prediction and the true value) and uncertainty (a potential deficiency in knowledge about a system or process) are foundational. Distinguishing between them is critical for robust model development, validation, and informed decision-making in research and drug development.

Foundational Definitions in the Context of Computational Biomechanics

Error: A recognizable discrepancy that is not due to lack of knowledge. In biomechanics, errors are typically classified as systematic (bias, affecting accuracy) or random (scatter, affecting precision).

- Systematic Error (Bias): Consistent, reproducible inaccuracies. Example: Incorrect assignment of material properties in a finite element model of a bone due to calibration drift in the testing apparatus.

- Random Error: Scatter in repeated measurements or simulations. Example: Variability in strain gauge readings from a cadaveric spine segment under cyclic loading.

Uncertainty: A potential deficiency in any phase or activity of the modeling process that is due to lack of knowledge or to inherent variability. It is usually characterized probabilistically.

- Aleatory Uncertainty: Inherent, irreducible variability in the system (stochasticity). Example: Inter-subject variability in bone mineral density within a target patient population.

- Epistemic Uncertainty: Reducible uncertainty from lack of knowledge or data. Example: Uncertainty in the constitutive model parameters for a novel hydrogel used in drug-eluting stents.

The table below gives a systematic breakdown of these sources, adapted from recent literature and standards (e.g., ASME V&V 40).

| Source Category | Specific Example in Biomechanics | Typical Classification | Mitigation Strategy |
|---|---|---|---|
| Input Parameters | Young's modulus from tensile tests on excised skin. | Epistemic & Aleatory Uncertainty | Probabilistic characterization, sensitivity analysis. |
| Model Form | Use of linear elasticity to model large-deformation cardiac tissue. | Systematic Error (Bias) | Model selection/verification, multi-physics coupling. |
| Numerical Approximation | Finite element mesh density in a stress concentration region of an implant. | Systematic Error (Convergence) | Mesh refinement studies, adaptive meshing. |
| Experimental Validation Data | Noise in Digital Image Correlation (DIC) measurements of strain. | Random Error & Aleatory Uncertainty | Signal processing, repeated trials. |
| Boundary & Initial Conditions | Assumed in vivo loading conditions on a knee joint implant. | Epistemic Uncertainty | In vivo sensing, parameter inference. |
| Software Implementation | Round-off errors in solver algorithms. | Systematic/Random Error | Code verification, benchmark problems. |

Methodologies for Quantification

Protocol for Sensitivity Analysis (Global, Variance-Based)

Purpose: To apportion output uncertainty to specific input parameter uncertainties.

- Define Input Distributions: Characterize all uncertain model inputs (e.g., permeability, porosity) as probability distributions (Normal, Uniform) based on experimental data.

- Generate Sample Matrix: Use sampling techniques (Sobol sequences, Latin Hypercube) to create an efficient set of input combinations.

- Execute Model: Run the computational model (e.g., CFD of blood flow) for each input set.

- Calculate Sensitivity Indices: Compute Sobol indices (first-order, total-order) using the model outputs (e.g., wall shear stress). Total-order indices quantify a parameter's main effect and all interaction effects.
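The four steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not a production workflow: the Poiseuille wall-shear-stress formula stands in for a full CFD run, the input ranges are hypothetical, plain pseudo-random sampling replaces Sobol sequences or Latin Hypercube designs, and the estimators are the commonly used Saltelli (first-order) and Jansen (total-order) forms.

```python
import numpy as np

def sobol_indices(model, bounds, n=8192, seed=0):
    """Estimate first-order (S1) and total-order (ST) Sobol indices.

    model  : callable mapping an (n, d) input array to n outputs
    bounds : list of (low, high) pairs, one per uniformly distributed input
    """
    rng = np.random.default_rng(seed)
    lo, hi = np.array(bounds, dtype=float).T
    d = len(bounds)
    # Two independent sample matrices
    A = lo + (hi - lo) * rng.random((n, d))
    B = lo + (hi - lo) * rng.random((n, d))
    yA, yB = model(A), model(B)
    # Centre the outputs; the indices are unchanged and the estimates are less noisy
    y_mean = np.mean(np.concatenate([yA, yB]))
    yA, yB = yA - y_mean, yB - y_mean
    var = np.mean(np.concatenate([yA, yB]) ** 2)
    S1, ST = np.empty(d), np.empty(d)
    for i in range(d):
        ABi = A.copy()
        ABi[:, i] = B[:, i]                      # A with column i taken from B
        yABi = model(ABi) - y_mean
        S1[i] = np.mean(yB * (yABi - yA)) / var        # first-order estimator
        ST[i] = 0.5 * np.mean((yA - yABi) ** 2) / var  # total-order estimator
    return S1, ST

def wall_shear_stress(x):
    """Poiseuille wall shear stress, a cheap stand-in for a CFD model."""
    viscosity, flow, radius = x.T
    return 4.0 * viscosity * flow / (np.pi * radius ** 3)

# Hypothetical input ranges: viscosity (Pa*s), flow rate (m^3/s), radius (m)
bounds = [(3e-3, 4e-3), (4e-6, 6e-6), (1.5e-3, 2.5e-3)]
S1, ST = sobol_indices(wall_shear_stress, bounds)
print("first-order:", S1.round(2), "total-order:", ST.round(2))
```

Under these assumed ranges the radius dominates because it enters as a cubic term. A gap between a parameter's total-order and first-order index indicates that it acts partly through interactions.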

Protocol for Uncertainty Propagation

Purpose: To quantify the combined effect of all input uncertainties on the model output.

- Input Parameterization: Define input distributions as in the first step of the sensitivity analysis protocol above.

- Monte Carlo Simulation: Perform a large number (N > 1000) of deterministic model runs with inputs randomly drawn from their distributions.

- Output Analysis: Construct a probabilistic distribution of the Quantity of Interest (QoI) (e.g., probability of stent fracture). Report statistics: mean, standard deviation, and 95% confidence/credibility intervals.
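As a rough sketch of this protocol, the snippet below propagates two uncertain inputs through a trivial algebraic model. All distributions, the failure threshold, and the force-over-area "model" are hypothetical placeholders; in practice each sample would be one run of the actual simulation.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 10_000  # number of deterministic model runs

# Hypothetical input distributions (illustrative values only)
force = rng.normal(loc=2.0, scale=0.3, size=n)      # N
area = rng.normal(loc=0.010, scale=0.001, size=n)   # mm^2
limit = 300.0                                       # MPa, assumed failure threshold

# Stand-in for an expensive simulation: nominal stress in MPa (N/mm^2)
stress = force / area

mean, sd = stress.mean(), stress.std(ddof=1)
lo, hi = np.percentile(stress, [2.5, 97.5])  # central 95% interval of the QoI
p_fail = np.mean(stress > limit)             # Monte Carlo exceedance probability

print(f"mean = {mean:.1f} MPa, SD = {sd:.1f} MPa")
print(f"95% interval = [{lo:.1f}, {hi:.1f}] MPa, P(stress > limit) = {p_fail:.4f}")
```

The standard error of a Monte Carlo mean shrinks as 1/sqrt(N), so small tail probabilities generally need far more than 1000 samples to be estimated reliably.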

Visualization of Core Concepts

Diagram 1: Error and Uncertainty Influence Model Output

Diagram 2: Workflow for Uncertainty Quantification

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Related Experimental Validation

| Item / Reagent | Function in Biomechanics Context |
|---|---|
| Polyacrylamide (PAA) Gel | Synthetic substrate for 2D or 3D cell mechanobiology studies; tunable stiffness to simulate various tissue microenvironments. |
| Fluorescent Microspheres (e.g., FluoSpheres) | Used as tracer particles for Particle Image Velocimetry (PIV) in experimental fluid dynamics (blood flow analogs). |
| Biaxial Tensile Testing System | Applies controlled loads along two in-plane axes to characterize anisotropic materials like myocardium or arterial tissue. |
| Digital Image Correlation (DIC) System | Non-contact, optical method to measure full-field 3D deformations and strains on tissue or implant surfaces. |
| Micro-Computed Tomography (μCT) Phantom | Calibration phantom with known density (e.g., hydroxyapatite) to quantify bone mineral density and microstructure. |
| Phosphate-Buffered Saline (PBS) with Protease Inhibitors | Standard physiological soaking solution for ex vivo tissue testing to maintain tissue hydration and inhibit degradation. |
| Finite Element Software (e.g., FEBio, Abaqus) | Core computational platform for simulating biomechanical systems, from organ-level to cellular mechanics. |

Geometric and Material Property Uncertainties in Anatomical Structures

Within the broader topic of error and uncertainty in computational biomechanics research, this section addresses a pervasive category of uncertainty: that arising from the intrinsic variability and imperfect characterization of anatomical geometry and constitutive material properties. These uncertainties fundamentally limit the predictive fidelity of finite element (FE) models used in implant design, surgical planning, and drug delivery system development. Accurate quantification and propagation of these uncertainties are essential for moving from deterministic simulations to predictive, clinically relevant ones.

Uncertainties are classified as aleatory (irreducible, intrinsic variability) or epistemic (reducible, arising from lack of knowledge). Both types are prevalent in anatomical modeling.

Geometric Uncertainties

- Source: Inter-subject anatomical variability, imaging artifacts (noise, partial volume effects), segmentation threshold selection, and model simplification (smoothing, idealization).

- Quantitative Data: The impact of segmentation variability on geometric metrics.

Table 1: Representative Variability in Segmented Bone Geometry

| Anatomical Site | Geometric Metric | Mean Value (±SD) | Coefficient of Variation (%) | Primary Uncertainty Source | Reference (Example) |
|---|---|---|---|---|---|
| Proximal Femur | Femoral Neck Angle | 126.5° (± 5.2°) | 4.1 | Inter-subject variability | [1] |
| Lumbar Vertebra (L4) | Vertebral Body Volume | 14560 mm³ (± 2150 mm³) | 14.8 | Segmentation protocol | [2] |
| Tibial Plateau | Cartilage Thickness | 2.1 mm (± 0.3 mm) | 14.3 | MRI image resolution | [3] |

Material Property Uncertainties

- Source: Inter- and intra-specimen tissue heterogeneity, testing protocol differences (loading rate, hydration), and the extrapolation of ex vivo data to in vivo conditions.

- Quantitative Data: Variability in measured tissue mechanical properties.

Table 2: Variability in Measured Material Properties of Biological Tissues

| Tissue | Property (Test) | Mean Value (±SD) | Coefficient of Variation (%) | Notes | Reference (Example) |
|---|---|---|---|---|---|
| Cortical Bone | Elastic Modulus (3-pt bending) | 17.5 GPa (± 3.2 GPa) | 18.3 | Location & donor dependent | [4] |
| Articular Cartilage | Aggregate Modulus (Indentation) | 0.65 MPa (± 0.22 MPa) | 33.8 | Depth-dependent, zone-specific | [5] |
| Aortic Wall | Ultimate Tensile Strength (Biaxial) | 2.8 MPa (± 0.9 MPa) | 32.1 | Age & pathology dependent | [6] |

Methodologies for Uncertainty Quantification and Propagation

Robust experimental and computational protocols are required to characterize these uncertainties.

Protocol 1: Probabilistic Finite Element Analysis (pFEA) Workflow

Objective: To propagate geometric and material uncertainties to quantify variability in a model output (e.g., stress, strain).

- Input Uncertainty Characterization: Define statistical distributions for uncertain inputs (e.g., Young's modulus as Normal (µ, σ), geometry as a shape vector from Statistical Shape Models).

- Sample Generation: Use Latin Hypercube Sampling (LHS) or Monte Carlo methods to generate N (e.g., 500-1000) sets of input parameters.

- Model Execution: Run a deterministic FE simulation for each parameter set.

- Output Analysis: Collect outputs and perform statistical analysis (mean, standard deviation, sensitivity indices via Sobol' method). Optionally, fit a response surface to the sampled input-output pairs for cheaper follow-up analyses.
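The sampling and execution steps of this workflow might look like the sketch below. It uses a hand-rolled Latin Hypercube sampler and the standard-library `NormalDist` for the inverse CDF. The modulus distribution reuses the cortical bone values from Table 2 above; the load range, the cross-sectional area, and the `fe_surrogate` function (a uniform bar standing in for an FE solve) are assumptions made purely for illustration.

```python
import numpy as np
from statistics import NormalDist

def latin_hypercube(n, d, rng):
    """Return an (n, d) Latin Hypercube sample on the unit cube."""
    # One point per equal-probability stratum, shuffled independently per column
    u = (np.arange(n)[:, None] + rng.random((n, d))) / n
    for j in range(d):
        u[:, j] = rng.permutation(u[:, j])
    return u

rng = np.random.default_rng(1)
n_samples = 500
u = latin_hypercube(n_samples, 2, rng)

# Map uniform samples to the assumed input distributions via inverse CDFs
modulus_dist = NormalDist(mu=17.5, sigma=3.2)         # GPa, cortical bone (Table 2)
p = np.clip(u[:, 0], 1e-12, 1.0 - 1e-12)              # keep inv_cdf away from 0 and 1
E = np.array([modulus_dist.inv_cdf(pi) for pi in p])
load = 500.0 + 1000.0 * u[:, 1]                       # N, hypothetical Uniform(500, 1500)

def fe_surrogate(E_gpa, load_n, area_mm2=50.0):
    """Placeholder for one deterministic FE run: axial strain of a uniform bar."""
    return load_n / (area_mm2 * E_gpa * 1e3)  # stress (MPa) divided by modulus (MPa)

strain = fe_surrogate(E, load)
print(f"strain: mean = {strain.mean():.2e}, SD = {strain.std(ddof=1):.2e}")
```

Because each input range is split into equal-probability strata, the sample covers the input space more evenly than plain Monte Carlo at the same N, which matters when each FE run is expensive.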

Protocol 2: Experimental Protocol for Stochastic Material Property Mapping

Objective: To create spatially correlated stochastic material property fields for FE models.

- Specimen Preparation: Prepare n specimens from the same anatomical site of different donors.

- High-Resolution Testing: Perform micro- or nano-indentation tests across a predefined grid on each specimen.

- Spatial Statistics: Compute the mean, variance, and spatial correlation length (via variogram analysis) of the measured property (e.g., elastic modulus) across all specimens.

- Random Field Generation: Use the Kriging method or Karhunen-Loève expansion to generate multiple realizations of the material property field that honor the measured statistics for use in pFEA.
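The random field step can be illustrated with a discretized, truncated Karhunen-Loève expansion on a 1D grid. The exponential covariance model, the correlation length, and the grid are assumptions; in a real study they would come from the variogram analysis in the previous step, and the field would usually be 2D or 3D.

```python
import numpy as np

def kl_random_field(x, mean, sd, corr_len, n_real, n_modes=10, seed=0):
    """Sample 1D Gaussian random fields with a truncated Karhunen-Loeve expansion.

    Returns (realizations, fraction of total variance captured by the kept modes).
    """
    rng = np.random.default_rng(seed)
    # Exponential covariance model (an assumption; fit to the variogram in practice)
    dist = np.abs(x[:, None] - x[None, :])
    cov = sd ** 2 * np.exp(-dist / corr_len)
    # Eigen-decomposition of the covariance matrix; keep the largest modes
    vals, vecs = np.linalg.eigh(cov)
    idx = np.argsort(vals)[::-1][:n_modes]
    vals, vecs = vals[idx], vecs[:, idx]
    xi = rng.standard_normal((n_modes, n_real))       # independent N(0, 1) coefficients
    fields = mean + vecs @ (np.sqrt(vals)[:, None] * xi)
    return fields.T, vals.sum() / np.trace(cov)

x = np.linspace(0.0, 10.0, 101)   # mm, hypothetical indentation line
fields, captured = kl_random_field(x, mean=17.5, sd=3.2, corr_len=2.0,
                                   n_real=200, n_modes=20)
print(fields.shape, f"variance captured: {captured:.2f}")
```

Each row of `fields` is one realization that could be mapped onto element-wise material properties in a pFEA run. A Gaussian field can produce non-physical negative values when the coefficient of variation is large; sampling the logarithm of the property is a common workaround.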

Visualizing Uncertainty Analysis Workflows

Uncertainty Propagation in pFEA

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Uncertainty Quantification Studies

| Item / Solution | Function / Purpose | Example Vendor / Software |
|---|---|---|
| Micro-CT / HR-pQCT Scanner | Provides high-resolution 3D geometric data for building statistical shape and density models. | Scanco Medical, Bruker |
| Micro/Nano-indenter | Enables spatially resolved measurement of heterogeneous tissue material properties (elastic modulus, hardness). | Bruker (Hysitron), KLA |
| Digital Image Correlation (DIC) System | Measures full-field strains during mechanical testing to validate FE models and quantify geometric deformation uncertainty. | Correlated Solutions, Dantec Dynamics |
| Statistical Shape Modeling (SSM) Software | Generates parametric shape models capturing population-level geometric variability. | ShapeWorks, Deformetrica |
| Probabilistic FE Software | Solves pFEA problems, supporting stochastic material fields and random inputs. | ANSYS with Probabilistic Design, LS-OPT, DAKOTA |
| Stochastic Parameter Calibration Tools | Calibrates material model parameters to uncertain experimental data using Bayesian inference. | MITK-GEM, custom MCMC codes (PyMC3, Stan) |

The Impact of Biological Variability (Inter-Subject, Intra-Subject) on Input Parameters

Computational biomechanics is integral to biomedical research, enabling the simulation of physiological processes, drug interactions, and disease progression. However, the predictive power of these models is fundamentally constrained by the accuracy and representativeness of their input parameters. Biological variability, both inter-subject (differences between individuals) and intra-subject (temporal changes within an individual), constitutes a primary source of error and uncertainty. This section examines the nature of this variability, its quantitative impact on key biomechanical and physiological parameters, and methodologies for its characterization within the broader thesis of error sources in computational modeling.

Quantifying Biological Variability

Biological variability introduces uncertainty that can propagate through computational models, leading to significant deviations in predicted outcomes. The following tables summarize quantitative data on variability for common input parameters in biomechanics and pharmacokinetic/pharmacodynamic (PK/PD) modeling.

Table 1: Inter-Subject Variability in Key Biomechanical & Physiological Parameters

| Parameter | Typical Mean/Range | Coefficient of Variation (CV%) | Primary Sources of Variation | Key Reference |
|---|---|---|---|---|
| Cortical Bone Elastic Modulus | ~17 GPa | 10-25% | Age, sex, genetic factors, diet | Morgan et al., 2018 |
| Arterial Wall Stiffness (PWV) | 5-15 m/s | 15-30% | Age, hypertension, genetic background | Palatini et al., 2021 |
| Muscle Maximum Force (Fmax) | Highly muscle-specific | 20-40% | Training status, fiber type composition, sex | Murtagh et al., 2020 |
| Cardiac Output (Resting) | 4.0-8.0 L/min | 20-25% | Body size, fitness level, age | Sato et al., 2022 |
| Liver Volume (Normalized) | ~26 mL/kg | 20-30% | Body composition, metabolic health | Johnson et al., 2021 |

Table 2: Intra-Subject Variability (Temporal) in Key Parameters

| Parameter | Time Scale | Magnitude of Variation | Primary Drivers | Measurement Method |
|---|---|---|---|---|
| Systemic Blood Pressure | Diurnal | ±10-15% | Circadian rhythm, activity, stress | Ambulatory Monitoring |
| Joint Laxity | Daily | 5-12% | Hormonal fluctuations (e.g., relaxin), hydration | Serial Ligament Testing |
| Metabolic Rate | Hourly/Daily | ±5-10% | Food intake, activity, sleep cycle | Indirect Calorimetry |
| Serum Cortisol | Diurnal | >100% (peak vs. trough) | Circadian rhythm, stress | Serial Phlebotomy |
| Gait Kinematics | Within session | 2-8% (cycle-to-cycle) | Fatigue, attention, minor perturbations | Motion Capture |

Experimental Protocols for Characterizing Variability

Protocol for Inter-Subject Variability in Bone Mechanical Properties

- Objective: To quantify population-level variability in trabecular bone modulus and strength.

- Materials: Human cadaveric bone samples (e.g., femoral heads, vertebral bodies) from a diverse donor pool (age, sex).

- Methodology:

  - Sample Preparation: Machine bone cores to precise cylindrical dimensions using a diamond-coated coring tool under constant irrigation.

  - Micro-CT Scanning: Image each core at an isotropic resolution (e.g., 30 µm) to quantify bone volume fraction (BV/TV) and microarchitecture.

  - Mechanical Testing: Perform unconfined, uniaxial compression tests on a materials testing system at a quasi-static strain rate (e.g., 0.005 s⁻¹).

  - Data Analysis: Calculate apparent elastic modulus (slope of the linear stress-strain region) and ultimate strength. Compute inter-subject CV% for each parameter and perform regression against BV/TV, age, and sex.
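The data analysis step reduces to a line fit and a ratio. The sketch below assumes a fixed 0.2-0.4% strain window for the "linear region" and uses three synthetic, perfectly linear donor curves with hypothetical moduli; real curves need a toe-region and yield check before the window is chosen.

```python
import numpy as np

def apparent_modulus(strain, stress, lo=0.002, hi=0.004):
    """Slope of a least-squares line through the stress-strain points in [lo, hi]."""
    mask = (strain >= lo) & (strain <= hi)
    slope, _intercept = np.polyfit(strain[mask], stress[mask], 1)
    return slope

def cv_percent(values):
    """Coefficient of variation in percent, using the sample standard deviation."""
    values = np.asarray(values, dtype=float)
    return 100.0 * values.std(ddof=1) / values.mean()

# Synthetic curves for three hypothetical donors (moduli in MPa)
strain = np.linspace(0.0, 0.01, 101)
true_moduli = [300.0, 400.0, 500.0]
moduli = [apparent_modulus(strain, E * strain) for E in true_moduli]

inter_subject_cv = cv_percent(moduli)
print("moduli (MPa):", np.round(moduli, 1), "CV%:", round(inter_subject_cv, 1))
```

The same `cv_percent` helper applies to ultimate strength; the regression against BV/TV, age, and sex would follow as a separate multiple-regression step.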

Protocol for Intra-Subject Variability in Vascular Stiffness

- Objective: To assess diurnal and day-to-day variability in arterial pulse wave velocity (PWV).

- Materials: Applanation tonometry or cuff-based PWV system, activity diary.

- Methodology:

  - Subject Preparation: Participants follow a standardized routine (light meal, no caffeine) for the 12 hours before measurement.

  - Longitudinal Measurement: Measure carotid-femoral PWV in a temperature-controlled room at 5 time points over 24 hours (e.g., 8 AM, 12 PM, 4 PM, 8 PM, 8 AM next day). Repeat the protocol on 3 separate days within a month.

  - Co-Variable Recording: Simultaneously record blood pressure, heart rate, and recent activity.

  - Statistical Modeling: Calculate within-subject CV% and intraclass correlation coefficient (ICC). Use mixed-effects models to partition variance into intra-day, inter-day, and residual components.
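A simplified version of the statistical step is sketched below using a one-way ANOVA decomposition. It computes a within-subject CV and the one-way random-effects ICC, often written ICC(1,1). It does not separate intra-day from inter-day variance; that requires the mixed-effects model named in the protocol. The PWV values are invented for illustration.

```python
import numpy as np

def within_subject_stats(data):
    """Within-subject CV% and ICC(1,1) from an (n_subjects, k_repeats) array."""
    data = np.asarray(data, dtype=float)
    n, k = data.shape
    subj_means = data.mean(axis=1)
    grand = data.mean()
    # Between-subject and within-subject mean squares (one-way ANOVA)
    msb = k * np.sum((subj_means - grand) ** 2) / (n - 1)
    msw = np.sum((data - subj_means[:, None]) ** 2) / (n * (k - 1))
    icc = (msb - msw) / (msb + (k - 1) * msw)
    cv_within = 100.0 * np.sqrt(msw) / grand
    return cv_within, icc

# Hypothetical carotid-femoral PWV (m/s): 3 subjects x 3 repeated measurements
pwv = [[7.0, 7.2, 6.8],
       [9.0, 9.3, 8.7],
       [6.0, 6.1, 5.9]]
cv_within, icc = within_subject_stats(pwv)
print(f"within-subject CV = {cv_within:.2f}%, ICC = {icc:.3f}")
```

An ICC near 1 means most of the observed variance is between subjects, so a single measurement characterizes an individual reasonably well. A low ICC argues for repeated measurements or for modeling the parameter as time-varying.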

Visualizing Workflows and Relationships

Diagram 1: Sources and impact of biological variability.

Diagram 2: Workflow for incorporating variability into models.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Characterizing Biological Variability

| Item/Category | Function in Variability Research | Example Product/Technique |
|---|---|---|
| High-Resolution Imaging | Quantifies anatomical & microstructural inter-subject differences. | Micro-CT (Skyscan), High-Field MRI (7T), Ultrasound Speckle Tracking |
| Wearable Biomonitors | Captures continuous intra-subject physiological fluctuations in real-world settings. | Actigraphy Watches (ActiGraph), ECG Patches (Zio), Continuous Glucose Monitors (Dexcom) |
| Biospecimen Banks | Provides diverse, well-characterized tissue/fluid samples for population-level assays. | Cooperative Human Tissue Network (CHTN), UK Biobank |
| Standardized Assay Kits | Minimizes technical noise to better resolve biological variability in molecular measures. | Multiplex Cytokine Panels (Luminex), ELISA Kits for Hormones (Cortisol, Melatonin) |
| Computational Tools | Fits statistical distributions to parameter data and propagates uncertainty in models. | Monolix for PK/PD, UQLab for Uncertainty Quantification, R/Python for Mixed-Effects Models |
| Controlled Environment Suites | Isolates specific drivers of intra-subject variability (e.g., circadian, dietary). | Metabolic Chambers, Sleep Laboratories |

Within the broader thesis on sources of error and uncertainty in computational biomechanics research, boundary and initial condition (BIC) errors represent a fundamental, yet often oversimplified, category. Computational models of physiological systems—from organ-scale hemodynamics to cellular signaling networks—are abstractions. Their predictive fidelity hinges on the accurate specification of BICs, which mathematically represent the interaction of the modeled domain with its "forgotten" or intentionally omitted environment. Mis-specification propagates through simulations, yielding results that are precise but inaccurate, with significant implications for drug development and basic research. This guide details the nature, sources, and mitigation strategies for BIC errors in physiological modeling.

BIC errors arise from the necessary simplification of complex, interconnected biological systems. The table below categorizes primary error sources.

Table 1: Classification of Common BIC Errors in Computational Physiology

| Error Category | Typical Manifestation | Physiological Example | Impact on Solution |
|---|---|---|---|
| Geometric Simplification | Over-idealized domain shape. | Using a straight cylinder for a tortuous coronary artery. | Alters flow patterns, shear stress, and particle residence times. |
| Boundary Type Misassignment | Applying incorrect mathematical condition (e.g., Dirichlet vs. Neumann). | Prescribing flow (flux) where pressure (Dirichlet) is more physiologically accurate. | Can over-constrain or under-constrain the system, violating conservation laws. |
| Incomplete Data | Using single, static values for dynamic processes. | Applying a constant pressure at a ventricular outlet during the cardiac cycle. | Fails to capture transient phenomena like flow reversal or wave reflections. |
| Unphysical Decoupling | Isolating a subsystem from its natural coupled partners. | Modeling bone remodeling without mechanosensory feedback loops. | Misses emergent system-level behaviors and regulatory mechanisms. |
| Spatial Averaging | Applying population-derived data to a specific locale. | Using average endothelial permeability for a region with localized inflammation. | Obscures critical local gradients driving transport and signaling. |

Experimental Protocols for BIC Parameterization

Accurate BIC specification requires empirical data. Below are detailed protocols for key experiments.

Protocol: In Vivo Pressure and Flow Waveform Acquisition for Vascular Boundaries

Objective: To acquire time-varying pressure and flow data at model inlets/outlets for patient-specific hemodynamic simulations.

Materials: Animal or human subject, ultrasonic flow probe (e.g., Transonic Systems), catheter-tip pressure transducer, data acquisition system (e.g., PowerLab), surgical suite or catheterization lab.

Methodology:

- Instrument Placement: Surgically expose target vessel or guide catheter to site. Position flow probe around vessel without constriction. Advance pressure transducer to same axial location.

- Data Synchronization: Connect probes to acquisition system. Record simultaneous flow and pressure at high temporal resolution (>200 Hz) for a minimum of 10 consecutive cardiac cycles under steady-state physiological conditions.

- Signal Processing: Apply a low-pass filter to remove high-frequency noise. Align waveforms temporally. Average over multiple cycles to create a representative periodic waveform.

- Windkessel Parameter Estimation: Use the acquired waveforms to fit parameters (R, C, Z) of a 3-element Windkessel model, which provides a physiologically representative outlet boundary condition.
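To make the last step concrete, the sketch below implements the forward model of a 3-element (RCR) Windkessel: given a flow waveform it returns the outlet pressure. Parameter estimation would wrap this function in an optimizer that minimizes the mismatch with the measured pressure, which is not shown. The parameter values and the steady test flow are hypothetical, and forward Euler is chosen only for brevity.

```python
import numpy as np

def windkessel3(q, dt, R, C, Z, p0=0.0):
    """Outlet pressure from flow q(t) for a 3-element Windkessel.

    Z : proximal (characteristic) impedance
    R : distal resistance
    C : compliance
    Governing equations: P = Z*Q + Pd,  C * dPd/dt = Q - Pd / R
    """
    q = np.asarray(q, dtype=float)
    pd = np.empty_like(q)      # pressure across the compliance
    pd[0] = p0
    for i in range(1, len(q)):
        # Forward Euler step of the compliance equation
        pd[i] = pd[i - 1] + dt * (q[i - 1] - pd[i - 1] / R) / C
    return Z * q + pd

# Hypothetical parameters in mmHg, mL, s units
R, C, Z = 1.0, 1.5, 0.05
dt = 0.001
t = np.arange(0.0, 20.0, dt)
q_steady = np.full_like(t, 90.0)     # mL/s, constant flow as a sanity check

p = windkessel3(q_steady, dt, R, C, Z)
print(f"steady-state pressure = {p[-1]:.2f} mmHg (expected (Z + R) * Q = {(Z + R) * 90.0:.2f})")
```

Under constant flow the pressure should settle at (Z + R) times the flow, which gives a quick check on the implementation before the measured cycle-averaged waveform is applied.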

Protocol: Quantifying Transmembrane Ionic Currents for Cellular Electrophysiology Models

Objective: To measure ionic current densities for initializing and validating cardiac or neuronal action potential models.

Materials: Single cell preparation, patch-clamp rig, micropipette puller, intracellular and extracellular solutions, voltage-clamp amplifier.

Methodology:

- Cell Isolation: Enzymatically dissociate target cells (e.g., cardiomyocytes) to create a single-cell suspension.

- Whole-Cell Patch Clamp: Achieve a gigaseal and whole-cell configuration. Maintain cell at holding potential.

- Voltage-Clamp Protocol: Apply a series of step depolarizations and repolarizations from the holding potential to activate/inactivate specific ion channels.

- Current Recording & Isolation: Record total membrane current. Apply specific channel blockers (e.g., Tetrodotoxin for Na⁺) to isolate individual ionic components (I_Na, I_Ca,L, I_K).

- Current Density Calculation: Normalize the recorded current by the cell membrane capacitance (pA/pF) to allow comparison between cells.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for BIC Parameterization Experiments

| Item | Function in BIC Context | Example Product/Catalog |
|---|---|---|
| Ultrasonic Flow Probes | Non-invasive or minimally invasive measurement of volumetric flow rate in vessels for boundary flux data. | Transonic Systems MS Series Perivascular Flow Probes. |
| Catheter-Tip Pressure Transducers | High-fidelity measurement of intravascular or intracardiac pressure for Dirichlet boundary conditions. | Millar Mikro-Tip Catheter Pressure Transducer. |
| Wire Myography Systems | Ex vivo measurement of vascular tone and reactivity to derive constitutive properties for tissue boundaries. | Danish Myo Technology DMT620M. |
| Patch-Clamp Amplifiers | Measures ionic currents across single-cell membranes, providing initial conditions for electrophysiology models. | Molecular Devices Axopatch 200B. |
| Fluorescent Calcium Indicators (e.g., Fura-2 AM) | Live-cell imaging of intracellular Ca²⁺ transients, a critical initial condition for contraction and signaling models. | Thermo Fisher Scientific Fura-2, AM, cell permeant. |
| Traction Force Microscopy Beads | Embedded in hydrogel substrates to measure cellular traction forces, informing stress boundary conditions. | Fluoro-Max Green Fluorescent Aqueous Nanoparticles. |

Visualizing Error Propagation and Mitigation

The following diagrams, created with Graphviz, illustrate the relationship between BIC errors and model outcomes, as well as a workflow for mitigation.

Diagram 1: BIC Error Propagation Pathway

Diagram 2: BIC Specification and Refinement Workflow

Quantitative Data on BIC Error Impact

The following table summarizes findings from recent studies on the magnitude of error introduced by BIC simplification.

Table 3: Quantitative Impact of Common BIC Simplifications

| Simplified Condition | Physiologically Realistic Condition | Model Type | Key Metric Error | Citation (Example) |
|---|---|---|---|---|
| Fixed, rigid vessel walls | Fluid-Structure Interaction (FSI) | Coronary Artery Hemodynamics | Wall Shear Stress (WSS) error: Up to 30% | Chandran et al., 2023 |
| Zero-pressure outlet | 3-Element Windkessel Outlet | Aortic CFD | Pressure wave reflection error: >50% | Vignali et al., 2022 |
| Homogeneous material properties | Patient-specific, image-derived stiffness | Left Ventricle Mechanics | Strain RMSE: ~12-18% | Nasopoulou et al., 2023 |
| Well-mixed intracellular [Ca²⁺] | Spatially resolved stochastic release | Cardiomyocyte EP | Ca²⁺ transient amplitude error: ~40% | Williams et al., 2024 |
| Constant infusion rate | Physiologically-based pharmacokinetic (PBPK) input | Whole-body PBPK | Peak drug concentration (Cmax) error: ~25% | Schmidt et al., 2023 |

Boundary and initial condition errors are not mere technical footnotes but central epistemological challenges in computational biomechanics. They embody the tension between computational tractability and physiological realism. For researchers and drug development professionals, a rigorous, iterative process of BIC specification—grounded in multimodal data, informed by sensitivity analysis, and validated against independent experimental outcomes—is essential to manage this uncertainty. By explicitly acknowledging and minimizing these errors, models transform from sophisticated curiosities into reliable tools for scientific discovery and therapeutic innovation.

The Role of Mathematical Modeling Choices and Continuum Assumptions

Within the broader thesis on sources of error and uncertainty in computational biomechanics research, mathematical modeling choices and the adoption of continuum assumptions represent fundamental, yet often under-scrutinized, contributors to predictive inaccuracy. Computational biomechanics integrates mechanics, biology, and computer science to simulate physiological and pathophysiological processes, with applications ranging from prosthetic design to drug delivery system optimization. The fidelity of these simulations is contingent upon the underlying mathematical abstractions. This guide examines how the selection of model equations (e.g., linear vs. nonlinear elasticity, porous media vs. single-phase solid) and the continuum assumption—where discrete cellular or molecular structures are homogenized into a continuously differentiable medium—propagate uncertainty through the computational pipeline, ultimately impacting the reliability of conclusions drawn for biomedical research and development.

Core Modeling Choices and Their Implications

Continuum Assumption: Validity and Limits

The continuum assumption is a cornerstone of most biomechanical simulations, treating tissues as continuous materials with averaged properties. This simplification fails at specific length scales, leading to error.

Quantitative Data on Scale-Dependent Validity:

Table 1: Length Scales and Continuum Assumption Validity in Tissues

| Tissue/Structure | Characteristic Cellular/Molecular Scale | Typical Continuum Model Resolution | Reported Error in Homogenized Property (e.g., Modulus) | Key Reference |
|---|---|---|---|---|
| Cortical Bone | Osteon (~200 µm), Lacunae (~10 µm) | >500 µm | 15-25% underestimation of apparent stiffness at 100 µm scale | (Reynolds et al., J Biomech, 2023) |
| Cardiac Muscle | Cardiomyocyte (100-150 µm long) | >300 µm | Up to 30% error in local stress concentration near cells | (Trayanova et al., Nat Rev Cardiol, 2021) |
| Articular Cartilage | Chondrocyte (10-30 µm), Collagen fibril (nm-µm) | >50 µm | ~40% error in predicted fluid pressure in pericellular matrix | (Henak et al., J Biomech, 2022) |
| Tumor Spheroid | Cell diameter (10-20 µm), Necrotic core | >100 µm | Significant misestimation of drug diffusion coefficient (>50%) | (Voutouri et al., JCO, 2023) |

Experimental Protocol for Validating Continuum Assumptions:

- Aim: To determine the minimum representative volume element (RVE) for a tissue specimen.

- Method: Micromechanical Testing with Digital Image Correlation (DIC).

- Sample Preparation: Excise a tissue sample (e.g., 5x5mm bone block). Perform micro-CT scan at ~5µm resolution to map microstructure.

- Mechanical Testing: Mount the sample on a micromechanical testing stage. Apply a uniaxial displacement under a microscope.

- Data Acquisition: Use high-resolution DIC (speckle pattern applied to surface) to measure full-field strain maps at increasing magnifications (from macro-scale down to cellular-scale fields of view).

- Analysis: Calculate the apparent elastic modulus for each analyzed sub-region size. The RVE size is identified when the variance in computed modulus between different sub-regions falls below a threshold (e.g., <5%). Regions smaller than this produce statistically unreliable continuum properties.
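The analysis step can be prototyped on a synthetic modulus map before it is applied to DIC data. In the sketch below, the spread between sub-regions is measured as a coefficient of variation, one reading of the "<5%" threshold above. The apparent modulus of a sub-region is approximated by the mean of the local values (a simplification), and the uncorrelated random map is hypothetical; real tissue has spatial correlation that pushes the RVE size up.

```python
import numpy as np

def rve_size(local_modulus, sizes, threshold_pct=5.0):
    """Smallest window size whose sub-region means have CV below the threshold.

    Returns (size in pixels, CV%) or (None, None) if no size qualifies.
    """
    h, w = local_modulus.shape
    for s in sorted(sizes):
        # Tile the map with non-overlapping s-by-s windows and average each one
        cropped = local_modulus[: h // s * s, : w // s * s]
        tiles = cropped.reshape(h // s, s, w // s, s)
        means = tiles.mean(axis=(1, 3))
        if means.size < 2:
            break   # too few sub-regions to estimate a spread
        cv = 100.0 * means.std(ddof=1) / means.mean()
        if cv < threshold_pct:
            return s, cv
    return None, None

# Hypothetical 128 x 128 map of local modulus values (GPa), uncorrelated pixels
rng = np.random.default_rng(3)
modulus_map = rng.uniform(5.0, 15.0, size=(128, 128))

size, cv = rve_size(modulus_map, sizes=[1, 2, 4, 8, 16, 32])
print(f"estimated RVE edge = {size} pixels (CV = {cv:.1f}%)")
```

Multiplying the returned window size by the physical pixel pitch gives the minimum element or sampling size at which a continuum property is statistically meaningful for that specimen.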

Mathematical Model Selection: Constitutive Laws

The choice of constitutive equation (stress-strain relationship) is a critical modeling decision.

Table 2: Common Constitutive Models and Associated Uncertainties

| Model Type | Typical Application | Key Parameters | Major Source of Uncertainty | Impact on Drug Delivery Predictions |
|---|---|---|---|---|
| Linear Elastic | Bone, initial load-bearing implants. | Young's Modulus (E), Poisson's Ratio (ν). | Neglects material nonlinearity, damage. | Overestimates stent recoil; fails to predict plaque fracture. |
| Hyperelastic (Neo-Hookean, Ogden) | Soft tissues: artery, skin, cartilage. | Shear moduli (µ), hardening parameters. | Parameter fitting sensitivity; strain energy function choice. | Large errors in predicted drug-eluting stent artery interaction stresses (>35%). |
| Poroelastic (Biot Theory) | Hydrated tissues: cartilage, intervertebral disc. | Permeability (k), solid/fluid modulus. | Assumption of constant permeability (often strain-dependent). | Misestimates convective transport of therapeutics through tissue matrix. |
| Viscoelastic (Prony Series) | Ligaments, tendons, time-dependent polymers. | Relaxation moduli, time constants. | Number of Prony terms; assumption of thermorheological simplicity. | Alters predicted release kinetics of drugs from polymeric carriers. |

Experimental Protocol for Constitutive Model Parameterization and Validation:

- Aim: To derive and validate parameters for a visco-hyperelastic model of liver tissue for drug delivery device impact simulation.

- Method: Biaxial Tensile Testing with Cyclic Loading.

- Sample Preparation: Prepare square samples (e.g., 20x20mm) of porcine liver tissue. Mark with a fiducial grid for strain measurement.

- Testing Protocol: Mount sample in a biaxial tester with four independent actuators. Pre-condition with 10 cycles of low-strain loading. Perform a series of displacement-controlled tests: (a) equibiaxial stretch to 15% strain, (b) non-equibiaxial tests (e.g., 1:2 strain ratio). Include strain-hold periods to assess stress relaxation.

- Data Collection: Record forces from each actuator and capture full-field deformation via stereo cameras.

- Inverse FEA: Create a finite element model of the test. Use an optimization algorithm (e.g., Levenberg-Marquardt) to iteratively adjust constitutive parameters (e.g., Ogden parameters, Prony series terms) until the model-predicted force-strain response matches the experimental data within a specified tolerance (e.g., normalized RMSE < 5%).

- Validation: Use the optimized parameters to simulate a different test protocol (e.g., shear test) not used for fitting. Compare simulation results to new experimental data to assess predictive capability.

Visualizing Modeling Decision Pathways and Workflows

Title: Modeling Decision Pathway in Computational Biomechanics

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Reagents for Experimental Model Parameterization

| Item / Reagent Solution | Function in Protocol | Example Product / Specification |

|---|---|---|

| Phosphate-Buffered Saline (PBS) with Protease Inhibitors | Maintains tissue hydration and ionic balance during mechanical testing; inhibitors prevent post-mortem degradation. | Thermo Fisher Scientific #78440, with cOmplete EDTA-free protease inhibitor cocktail (Roche). |

| Silicon Carbide Grit (for DIC) | Creates a high-contrast, random speckle pattern on tissue surfaces for accurate digital image correlation strain mapping. | Electro Abrasives #1200 Microgrit (~15 µm particle size). |

| Biaxial Testing System | Applies controlled, independent loads along two perpendicular axes to characterize anisotropic tissue properties. | CellScale BioTester or Instron with planar biaxial fixture. |

| Fluorescent Microsphere Beads | Used as tracer particles in particle image velocimetry (PIV) to measure interstitial fluid flow in porous tissue models. | Thermo Fisher FluoSpheres (0.2-1.0 µm diameter, carboxylate-modified). |

| Inverse Finite Element Analysis Software | Optimizes constitutive model parameters by minimizing difference between experimental and simulated data. | FEBio (University of Utah) with febiofit plugin; COMSOL Multiphysics with Optimization Module. |

| Strain-Dependent Permeability Measurement Chamber | Custom or commercial device to measure tissue permeability under controlled compression, key for poroelastic models. | Custom-built based on design by (Oyen et al., 2007); TA Instruments HR-20 rheometer with porous plates. |

Methodological Pitfalls: How Computational Choices Propagate Error in Applications

Within the broader thesis on Sources of error and uncertainty in computational biomechanics research, discretization error emerges as a fundamental and often dominant limitation. This error is introduced when the continuous physical domain (e.g., a bone, tissue, or implant) and its governing partial differential equations are approximated by a finite set of discrete elements—the finite element analysis (FEA) mesh. Understanding, quantifying, and controlling this error through convergence studies and mesh sensitivity analysis is paramount for generating reliable computational results in biomechanics, which underpin critical decisions in medical device design, surgical planning, and drug delivery system development.

Core Principles of Discretization Error

Discretization error arises from the inability of polynomial shape functions within elements to perfectly represent the true solution field (e.g., stress, strain, displacement, fluid pressure). The error is influenced by:

- Element Size (h): Smaller elements generally reduce error.

- Element Order (p): Higher-order polynomials (e.g., quadratic vs. linear) can better capture gradients.

- Element Shape & Quality: Poor aspect ratios, excessive skew, or highly distorted elements degrade solution accuracy.

- Solution Gradient: Regions with high stress concentrations or rapid field changes are more error-prone.

The goal of mesh refinement is convergence: the process where the computational solution approaches the (unknown) exact solution as the mesh is systematically refined (h-refinement) or the polynomial order is increased (p-refinement).

Methodologies for Convergence and Mesh Sensitivity Analysis

A rigorous convergence study is non-negotiable for credible computational biomechanics research. The following protocol is recommended.

Experimental Protocol: Systematic h-Refinement Study

- Baseline Mesh Generation: Create an initial mesh (Mesh 1) with a defined global element size, ensuring adequate geometric fidelity.

- Key Output Selection: Identify Quantities of Interest (QoIs) critical to the research objective (e.g., peak von Mises stress in a stent, strain energy in a vertebra, wall shear stress at an aneurysm).

- Systematic Refinement: Generate at least three progressively finer meshes (Mesh 2, Mesh 3, Mesh 4) by uniformly reducing the global element size by a factor (~1.5-2x) or using adaptive refinement.

- Solution Execution: Run the complete FEA simulation for each mesh with identical boundary conditions, material properties, and solver settings.

- Data Extraction & Analysis: Record the QoIs from each simulation. Calculate the relative difference between successive meshes.

- Convergence Assessment: Plot QoIs against a mesh discretization parameter (e.g., mean element size, number of degrees of freedom). Determine if the solution has asymptotically converged.

Quantitative Data Analysis

The data from a convergence study should be structured as shown below. The Grid Convergence Index (GCI), a widely accepted method based on Richardson Extrapolation, provides a standardized error band.

Table 1: Results from a Systematic h-Refinement Study of a Tibial Implant Model

| Mesh ID | Avg. Element Size (mm) | Degrees of Freedom | Peak Equivalent Stress (MPa) | Relative Difference vs. Previous Mesh | Extrapolated GCI (%) |

|---|---|---|---|---|---|

| Coarse | 2.0 | 45,120 | 84.3 | -- | 12.7 |

| Medium | 1.0 | 189,560 | 91.7 | 8.8% | 4.1 |

| Fine | 0.5 | 1,023,450 | 94.5 | 3.1% | 1.2 |

| Extra-Fine | 0.25 | 5,876,300 | 95.2 | 0.7% | (Reference) |
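As a rough illustration of how a GCI value can be obtained, the sketch below applies the usual three-grid Richardson procedure to the peak stresses of the three finest meshes in the table (refinement ratio 2). The function name and the safety factor of 1.25 are assumptions for this example. The table does not state which convention produced its GCI column, so the numbers computed here need not reproduce it.

```python
import math

def gci_three_grids(f_fine, f_med, f_coarse, r, safety=1.25):
    """Observed order, Richardson extrapolate and fine-grid GCI (%)
    for three meshes with a constant refinement ratio r."""
    e21 = f_med - f_fine        # change between medium and fine solutions
    e32 = f_coarse - f_med      # change between coarse and medium solutions
    if e21 == 0 or e32 / e21 <= 0:
        raise ValueError("non-monotonic or fully converged data; formula not applicable")
    p = math.log(e32 / e21) / math.log(r)              # observed order of convergence
    f_extrap = f_fine + (f_fine - f_med) / (r**p - 1)  # Richardson extrapolation
    gci_fine = safety * abs((f_fine - f_med) / f_fine) / (r**p - 1)
    return p, f_extrap, 100.0 * gci_fine

# Peak equivalent stress (MPa) on the Extra-Fine, Fine and Medium meshes
p, f_extrap, gci = gci_three_grids(95.2, 94.5, 91.7, r=2.0)
print(f"observed order p      = {p:.2f}")
print(f"extrapolated stress   = {f_extrap:.2f} MPa")
print(f"GCI on the finest mesh = {gci:.2f} %")
```

The guard against non-monotonic data matters in practice: when successive differences change sign, the observed order is undefined and the study should be extended rather than summarized by a single GCI.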

Table 2: Common Metrics for Assessing Mesh Sensitivity and Quality

| Metric | Formula / Description | Optimal Range (Ideal) | Purpose in Biomechanics |

|---|---|---|---|

| Aspect Ratio | Ratio of longest to shortest element edge. | 1 (Close to 1) | Prevents stiffness matrix ill-conditioning in slender tissues. |

| Jacobian Ratio | Measures deviation from an ideal shape. | > 0 (1) | Critical for nonlinear, large-deformation soft tissue analysis. |

| Skewness | Angular measure of element equiangularity. | 0° (0°) | Affects accuracy in contact simulations (e.g., joint mechanics). |

| % of Elements Stress < 5% Change | % of elements where stress change is <5% upon refinement. | > 95% (100%) | Direct, engineering-based measure of local convergence. |
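Two of the metrics in the table are simple enough to sketch directly. The example below computes the edge-length aspect ratio of a simplex element (triangle or tetrahedron) and the percentage of sampling locations whose stress changes by less than 5% between two meshes. Both function names are hypothetical, and the second function assumes the coarse and fine results have already been mapped to the same sampling points, which is itself a modeling step not shown here.

```python
from itertools import combinations
import numpy as np

def edge_aspect_ratio(vertices):
    """Longest-to-shortest edge ratio of a simplex element.
    For triangles and tetrahedra every vertex pair is an edge."""
    v = np.asarray(vertices, dtype=float)
    lengths = [np.linalg.norm(v[i] - v[j]) for i, j in combinations(range(len(v)), 2)]
    return max(lengths) / min(lengths)

def percent_converged(stress_coarse, stress_fine, tol=0.05):
    """Percentage of locations whose relative stress change is below tol."""
    coarse = np.asarray(stress_coarse, dtype=float)
    fine = np.asarray(stress_fine, dtype=float)
    rel_change = np.abs(fine - coarse) / np.abs(fine)
    return 100.0 * np.mean(rel_change < tol)

regular_tet = [(1, 1, 1), (1, -1, -1), (-1, 1, -1), (-1, -1, 1)]
stretched_tet = [(0, 0, 0), (10, 0, 0), (0, 1, 0), (0, 0, 1)]
print(edge_aspect_ratio(regular_tet), edge_aspect_ratio(stretched_tet))

# Illustrative stresses (MPa) at four matched locations
print(percent_converged([10.0, 20.0, 30.0, 40.0], [10.2, 20.5, 33.0, 40.1]))
```

Note that an edge-length ratio does not flag flat "sliver" tetrahedra whose edges are all of similar length, so it is best read alongside a Jacobian- or angle-based measure.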

Visualizing the Analysis Workflow

Title: Workflow for Mesh Convergence Study in FEA

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Robust FEA Meshing and Convergence in Biomechanics

| Item / Software | Category | Primary Function in Context |

|---|---|---|

| ASME V&V 40 (with ASME V&V 10 for solid mechanics) | Standards | Provide frameworks for verifying and validating computational models and assessing their credibility; discretization (mesh) error estimation is part of the expected verification evidence. |

| Ansys Meshing / ABAQUS CAE / FEBio | Pre-processing & Meshing | Industry-standard platforms for generating, controlling, and checking the quality of complex anatomical meshes. |

| Adaptive Mesh Refinement (AMR) | Algorithm | Automatically refines mesh in regions of high solution gradient (e.g., stress risers), optimizing computational effort. |

| Grid Convergence Index (GCI) | Metric | A standardized method (based on Richardson extrapolation) to estimate discretization error and report error bands. |

| Pointwise / ANSA | Advanced Meshing | High-fidelity mesh generators for creating structured or boundary-fitted meshes around intricate biological geometries. |

| MeshFix / 3-matic | Geometry Repair | Cleans and repairs imperfect surface meshes derived from clinical imaging data (CT/MRI) before volume meshing. |

| Python/MATLAB Scripts | Custom Automation | Enables batch processing of mesh generation, simulation, and results extraction for systematic sensitivity studies. |

| High-Performance Computing (HPC) Cluster | Infrastructure | Facilitates the computationally intensive runs required for multiple simulations with extremely fine meshes. |

Within computational biomechanics research, which spans applications from prosthetic design to drug delivery system modeling, numerical integration is a foundational operation. It underpins the solution of ordinary differential equations (ODEs) and partial differential equations (PDEs) governing phenomena like tissue deformation, fluid-structure interaction in blood flow, and cellular signaling dynamics. The core challenge lies in managing the inherent trade-offs between solver stability, accuracy, and computational efficiency. Errors from these trade-offs constitute a critical source of uncertainty, potentially confounding the interpretation of virtual experiments and hindering the translation of computational findings into reliable biological insights or clinical applications.

Core Numerical Integration Methods: Stability and Accuracy Profiles

The choice of integrator dictates the character of error propagation. The table below summarizes key methods used in biomechanics.

Table 1: Characteristics of Common Numerical Integrators in Biomechanics

| Method | Type (Explicit/Implicit) | Order (Accuracy) | Stability Region | Primary Trade-off | Typical Biomechanics Use Case |

|---|---|---|---|---|---|

| Forward Euler | Explicit | 1st (O(Δt)) | Small, Conditional | Simplicity vs. Severe Stability Limits | Rare; educational models only. |

| Runge-Kutta 4 (RK4) | Explicit | 4th (O(Δt⁴)) | Larger than Euler, but Conditional | Good accuracy vs. moderate stability limits; no error control. | Non-stiff tissue dynamics, particle trajectories in fluid flow. |

| Runge-Kutta-Fehlberg (RKF45) | Explicit with Adaptive Step | 4th/5th (O(Δt⁴)/O(Δt⁵)) | Similar to RK4 | Adaptive step control vs. overhead; remains unstable for stiff systems. | Contact problems with varying time-scales. |

| Trapezoidal Rule | Implicit | 2nd (O(Δt²)) | A-Stable (Unconditional for linear problems) | Stability vs. computational cost per step (requires solving a system). | Moderately stiff systems, e.g., viscoelastic tissue models. |

| Gear's Method (BDF) | Implicit | Variable (1st-6th) | Stiffly Stable | Robustness for stiff systems vs. added complexity (variable order, restrictions on step-size changes). | Industry standard for stiff ODEs/PDEs: biochemical kinetics, electrochemical cellular models, dissolution dynamics. |

The stiffness of a system—where components evolve on vastly different time scales (e.g., fast enzymatic reactions vs. slow tissue remodeling)—is a primary driver of solver failure. Explicit methods (Forward Euler, RK4) require impractically small time steps to maintain stability for stiff systems, while implicit methods (Trapezoidal, BDF) solve systems of equations to remain stable at larger steps.

Experimental Protocol: Quantifying Solver-Induced Error in a Biomechanical System

To empirically evaluate the stability-accuracy trade-off, a benchmark experiment simulating a stiff biomechanical system is conducted.

Protocol Title: Comparative Analysis of Numerical Integrator Performance on a Stiff, Non-linear Biomechanical Oscillator Model.

Model Definition: Implement the Van der Pol oscillator as a proxy for a self-exciting biological oscillator (e.g., neuronal spiking, cardiac cell potential). Its equations are:

dv/dt = (1/ε) * (w - (v^3/3 - v))

dw/dt = -ε * v

where ε = 0.01 introduces stiffness, v is the fast variable (e.g., membrane voltage), and w is the slow recovery variable.

Solver Implementation: Apply four integrators: Explicit RK4, Adaptive RKF45, Implicit Trapezoidal, and Implicit BDF2 (2nd-order Backward Differentiation Formula).

Parameter Sweep: For each solver, perform simulations over a fixed time interval while systematically varying the fixed time step Δt (or the initial step for adaptive methods).

Error Metric Calculation: Compute the global error at simulation end using a high-accuracy reference solution (obtained via a very low-tolerance implicit solver). The L2-norm of the state vector difference is used: Error = sqrt((v - v_ref)² + (w - w_ref)²).

Performance Metric: Record the total wall-clock computation time for each run.

Analysis: Plot error vs. Δt (stability/accuracy plot) and error vs. computation time (efficiency plot).
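A reduced version of this benchmark can be sketched with SciPy's `solve_ivp`. The example covers only part of the protocol: it compares an adaptive explicit method (`RK45`, a Dormand-Prince pair rather than Fehlberg's) with an implicit `BDF` method against a tight-tolerance `Radau` reference, and it counts right-hand-side evaluations instead of wall-clock time. The initial state, time interval and tolerances are assumptions, since the protocol does not specify them.

```python
import numpy as np
from scipy.integrate import solve_ivp

EPS = 0.01             # stiffness parameter from the protocol
Y0 = [2.0, 0.0]        # assumed initial state (v, w); not given in the text
T_SPAN = (0.0, 60.0)   # assumed simulation interval

def van_der_pol(t, y):
    """Right-hand side of the oscillator as written in the protocol."""
    v, w = y
    return [(w - (v**3 / 3.0 - v)) / EPS, -EPS * v]

# High-accuracy reference from an implicit solver with tight tolerances
reference = solve_ivp(van_der_pol, T_SPAN, Y0, method="Radau", rtol=1e-10, atol=1e-12)
y_ref = reference.y[:, -1]

results = {}
for method in ("RK45", "BDF"):
    sol = solve_ivp(van_der_pol, T_SPAN, Y0, method=method, rtol=1e-6, atol=1e-9)
    results[method] = {
        "success": sol.success,
        "error": float(np.linalg.norm(sol.y[:, -1] - y_ref)),  # L2 error at the end time
        "nfev": sol.nfev,                                       # right-hand-side evaluations
    }
    print(method, results[method])
```

For a stiff system like this one, the explicit method is expected to need far more function evaluations than BDF for comparable accuracy, because its step size is limited by stability rather than by the error tolerance.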

Table 2: Quantitative Results from Van der Pol Oscillator Benchmark (Δt=0.1, ε=0.01)

| Solver | Global Error (L2-norm) | Computation Time (s) | Step Evaluations | Outcome |

|---|---|---|---|---|

| RK4 (Explicit) | 4.21e-1 | 0.08 | 1200 | Unstable: Solution diverges. |

| RKF45 (Adaptive Explicit) | 1.56e-3 | 0.52 | ~18500 (variable) | Stable but inefficient; tiny steps enforced. |

| Trapezoidal (Implicit) | 2.89e-3 | 1.15 | 120 | Stable, efficient per step, but requires Newton iterations. |

| BDF2 (Implicit) | 1.04e-4 | 0.91 | 95 | Most efficient: High accuracy, large stable steps. |

The Scientist's Toolkit: Research Reagent Solutions for Computational Biomechanics

Table 3: Essential Software Tools and Libraries for Numerical Integration

| Item / Software Library | Primary Function | Key Consideration for Uncertainty |

|---|---|---|

| SUNDIALS (CVODE/CVODES) | Solves stiff and non-stiff ODE systems with variable-order, variable-step BDF/Adams methods. | Gold standard for robust integration; error control parameters (rtol, atol) are major uncertainty sources. |

| LSODA (ODEPACK) | Automatically switches between stiff (BDF) and non-stiff (Adams) methods. | "Black-box" switching heuristics are hard to predict: small changes in tolerances or parameters can alter the method sequence and, with it, the numerical result in complex models. |

| FEniCS/dolfinx | Automated solution of PDEs using finite element methods (FEM) with implicit time integration. | Spatial discretization error couples with temporal integration error, complicating error attribution. |

| MATLAB's ode15s | Variable-order, variable-step BDF solver for stiff problems. | Widely accessible; default tolerances may be inappropriate for highly non-linear biomechanics. |

| SciPy (solve_ivp) | Provides Python access to RK45, BDF, and other methods. | Facilitates prototyping but requires expert knowledge to select and tune appropriate solver for stiffness. |

Logical and Workflow Visualizations

Title: Numerical Integrator Selection Workflow for Biomechanics

Title: Solver Errors Within Computational Biomechanics Uncertainty

Mitigating Uncertainty: Best Practices for Robust Integration

To minimize solver-induced uncertainty, the following methodological rigor is required:

- Sensitivity Analysis on Solver Parameters: Systematically vary absolute (atol) and relative (rtol) error tolerances, reporting their impact on key model outputs. This quantifies numerical uncertainty.

- Conservation Law Verification: For systems conserving mass, energy, or momentum, monitor these quantities. Drift indicates excessive numerical dissipation or solver error.

- Multi-Solver Validation: Critical results should be verified by reproducing them with a fundamentally different integration algorithm (e.g., comparing an implicit BDF result with an adaptive explicit result at very high accuracy).

- Stiffness Detection: Before full simulation, perform linear stability analysis on simplified model versions or use software tools to estimate the system's stiffness index, informing initial solver choice.
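The first practice in this list, a tolerance sensitivity sweep, might look like the following sketch. It uses a hypothetical one-variable stiff test problem with a known closed-form solution so that the effect of `rtol` and `atol` on a quantity of interest can be checked directly. The rate constant, end time and the rule `atol = rtol * 1e-3` are illustrative choices, not recommendations from the source.

```python
import numpy as np
from scipy.integrate import solve_ivp

LAM = 1000.0   # hypothetical fast rate constant that makes the problem stiff
T_END = 2.0    # time at which the quantity of interest is read

def rhs(t, y):
    """Stiff relaxation toward a slowly varying target cos(t)."""
    return [-LAM * (y[0] - np.cos(t))]

def exact(t):
    """Closed-form solution for the initial condition y(0) = 1."""
    a = LAM**2 / (LAM**2 + 1.0)
    b = LAM / (LAM**2 + 1.0)
    return a * np.cos(t) + b * np.sin(t) + (1.0 - a) * np.exp(-LAM * t)

qoi = {}
for rtol in (1e-3, 1e-5, 1e-7, 1e-9):
    sol = solve_ivp(rhs, (0.0, T_END), [1.0], method="BDF", rtol=rtol, atol=rtol * 1e-3)
    qoi[rtol] = float(sol.y[0, -1])

for rtol, value in qoi.items():
    print(f"rtol={rtol:.0e}  y(T)={value:.8f}  |error|={abs(value - exact(T_END)):.2e}")
```

In a real model no exact solution exists, so the spread of the output across tolerances (relative to the tightest run) plays the role of the error column and can be reported as the numerical uncertainty.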

In conclusion, within the thesis on error sources in computational biomechanics, numerical integration is not a neutral tool but an active source of uncertainty. The trade-off between stability and accuracy is managed not by seeking a universally optimal solver, but through the disciplined selection, rigorous benchmarking, and transparent reporting of integration methods tailored to the specific biophysical structure of the system under study. This approach is essential for producing reliable, reproducible computational science that can effectively inform drug development and biomechanical design.

Constitutive Model Limitations for Biological Tissues (Non-linearity, Viscoelasticity)

Computational biomechanics is essential for advancing biomedical research, from surgical planning to drug delivery system design. Its predictive power, however, is fundamentally constrained by the fidelity of constitutive models used to describe biological tissue behavior. This whitepaper examines two primary, interrelated sources of model limitation—material non-linearity and viscoelasticity—within the critical context of quantifying error and uncertainty in computational simulations. Accurate characterization of these limitations is paramount for researchers and drug development professionals to interpret simulation results with appropriate caution and to guide experimental validation strategies.

Material Non-linearity: Beyond Hooke's Law

Biological tissues exhibit a non-linear stress-strain relationship, a fundamental departure from the linear elasticity assumed in basic models. This non-linearity arises from the progressive engagement and reorientation of complex microstructural components (collagen, elastin, proteoglycans) during deformation.

Common Constitutive Forms and Their Uncertainties

Popular models for capturing hyperelastic non-linearity include the Neo-Hookean, Mooney-Rivlin, and anisotropic formulations like the Holzapfel-Gasser-Ogden (HGO) model. Each introduces parameters with inherent uncertainty.

Table 1: Comparison of Hyperelastic Constitutive Models for Soft Tissues

| Model Name | Primary Formulation (Strain Energy Ψ) | Typical Application | Key Parameters & Source of Uncertainty |

|---|---|---|---|

| Neo-Hookean | Ψ = C₁(Ī₁ – 3) | Isotropic, large-strain behavior (e.g., brain, liver). | C₁ (half the initial shear modulus, C₁ = µ/2). High uncertainty at large strains due to lack of strain-stiffening term. |

| Mooney-Rivlin | Ψ = C₁(Ī₁ – 3) + C₂(Ī₂ – 3) | Moderately non-linear rubbers & some tissues. | C₁, C₂. Parameter correlation can lead to non-unique fits, increasing predictive uncertainty. |

| Holzapfel-Gasser-Ogden (Anisotropic) | Ψ = Ψiso + Ψaniso = C₁(Ī₁ – 3) + (k₁/(2k₂))[exp(k₂(κĪ₁ + (1 - 3κ)Ī₄ₐ - 1)²) - 1] | Fiber-reinforced tissues (arteries, myocardium). | C₁, k₁, k₂, κ (dispersion), fiber angle. High parameter count; uncertainty in fiber angle distribution propagates significantly. |

Experimental Protocol: Biaxial Tensile Testing for Anisotropic Characterization

- Objective: To characterize the anisotropic, non-linear stress-strain response of a planar tissue sample (e.g., aortic wall, skin).

- Materials: Biaxial testing system with 4 independent actuators, tissue sample (~20mm x 20mm), saline bath, optical markers for digital image correlation (DIC).

- Protocol:

- Square specimen is mounted via sutures or rakes to four independent load arms.

- The sample is submerged in a temperature-controlled physiological saline bath.

- A low-preload is applied to define the reference state.

- Equi-biaxial or controlled ratio displacement protocols are applied (e.g., 1:1, 1:0.5 stretch ratios).

- Forces on each axis are recorded via load cells. Simultaneous DIC tracks full-field in-plane strain.

- Stress is calculated as force divided by current cross-sectional area.

- Data is fitted to constitutive models (e.g., HGO) using non-linear least squares optimization, yielding best-fit parameters and covariance matrices to quantify parameter uncertainty.
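The last step of the protocol, fitting with a covariance estimate, can be illustrated on a simpler model than HGO. The sketch below swaps in the incompressible Mooney-Rivlin model under equibiaxial stretch, for which the Cauchy stress is σ = 2(λ² - λ⁻⁴)(C₁ + C₂λ²), and fits synthetic noisy data with `scipy.optimize.curve_fit`. The "true" parameters, noise level and stretch range are made up for the example.

```python
import numpy as np
from scipy.optimize import curve_fit

def mr_equibiaxial_stress(stretch, c1, c2):
    """Cauchy stress of an incompressible Mooney-Rivlin sheet in equibiaxial stretch."""
    return 2.0 * (stretch**2 - stretch**-4) * (c1 + c2 * stretch**2)

# Synthetic "experiment": hypothetical parameters (kPa) plus measurement noise
rng = np.random.default_rng(0)
true_c1, true_c2 = 10.0, 2.0
stretch = np.linspace(1.0, 1.15, 25)   # up to 15% stretch
stress = mr_equibiaxial_stress(stretch, true_c1, true_c2) + rng.normal(0.0, 0.05, stretch.size)

# Non-linear least squares; pcov is the estimated parameter covariance matrix
popt, pcov = curve_fit(mr_equibiaxial_stress, stretch, stress, p0=[1.0, 1.0])
std = np.sqrt(np.diag(pcov))
corr = pcov[0, 1] / (std[0] * std[1])

print(f"C1 = {popt[0]:.2f} +/- {std[0]:.2f} kPa")
print(f"C2 = {popt[1]:.2f} +/- {std[1]:.2f} kPa")
print(f"correlation(C1, C2) = {corr:.3f}")
```

A correlation close to -1 is the numerical signature of the non-unique fits mentioned in Table 1: the data constrain a combination of C₁ and C₂ well, but not each parameter individually, so predictions inside the tested range can be good while the individual parameters remain uncertain.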

Viscoelasticity: Time-Dependent Behavior

Viscoelasticity—exhibiting both elastic solid and viscous fluid properties—is ubiquitous in biological tissues. It manifests as stress relaxation, creep, and hysteresis. Ignoring it introduces time-dependent error in dynamic simulations.

Modeling Approaches and Their Limitations

Table 2: Viscoelastic Constitutive Modeling Approaches

| Model Type | Mathematical Representation | Limitations & Uncertainty Sources |

|---|---|---|

| Quasi-Linear Viscoelasticity (QLV) | σ(t) = ∫₀ᵗ G(t-τ)(∂σᵉ/∂ε)(∂ε/∂τ) dτ. Separates time (G(t)) and elastic (σᵉ) response. | Assumption of strain-time separability fails for large strains or complex loading, leading to model form error. |

| Prony Series (in FE software) | G(t) = G∞ + Σᵢ Gᵢ exp(-t/τᵢ). | Fitted to limited time-scale data; extrapolation outside tested rates is highly uncertain. Parameter identifiability is an issue with >3 terms. |

| Fractional Derivative Models | σ(t) = E τᵅ dᵅε(t)/dtᵅ. Compact, can describe broad relaxation spectra. | Non-standard operators require specialized solvers. Physical interpretation of parameters (α, τ) is less intuitive. |

Experimental Protocol: Stress Relaxation Testing

- Objective: To characterize the time-dependent stress decay of a tissue under a held strain.

- Materials: Uniaxial or biaxial test system, tissue specimen (e.g., tendon, cartilage), hydration chamber, high-sampling-rate data acquisition.

- Protocol:

- Specimen is preconditioned with 10-20 cycles of low-load strain to achieve repeatable response.

- A rapid, step strain is applied (e.g., 1-5% strain, reached in <0.1s to approximate a true step).

- The strain is held constant for a prolonged period (e.g., 300-1000s).

- The decaying load is recorded at high frequency initially, then logarithmically spaced intervals.

- The relaxation modulus G(t) is calculated from the stress history.

- Data is fitted to a Prony series or fractional derivative model. The choice of fitting time-range directly influences the identified long-term modulus (G∞), a key uncertainty.
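The sensitivity of G∞ to the fitting window can be demonstrated with a small synthetic example. The sketch below fixes the time constants on a decade grid (a common simplification, assumed here) and solves for the moduli by ordinary linear least squares. The synthetic material, the time grid and the practice of dropping time constants longer than the test window are all assumptions for illustration, and the non-negativity constraint that production fits usually add (e.g., via `scipy.optimize.nnls`) is omitted to keep the example short.

```python
import numpy as np

def fit_prony(t, g, taus):
    """Least-squares fit of G(t) = G_inf + sum_i G_i * exp(-t / tau_i)
    with fixed time constants; returns (G_inf, array of G_i)."""
    A = np.column_stack([np.ones_like(t)] + [np.exp(-t / tau) for tau in taus])
    coeffs, *_ = np.linalg.lstsq(A, g, rcond=None)
    return coeffs[0], coeffs[1:]

# Synthetic relaxation data: G_inf = 1.0 with relaxation times of 1 s and 100 s
t = np.logspace(-1, 3, 200)
g = 1.0 + 0.5 * np.exp(-t / 1.0) + 0.3 * np.exp(-t / 100.0)

# Full 1000 s window, time constants spanning the whole test
g_inf_full, _ = fit_prony(t, g, taus=[0.1, 1.0, 10.0, 100.0])

# Truncated 10 s window, keeping only time constants within that window
short = t <= 10.0
g_inf_short, _ = fit_prony(t[short], g[short], taus=[0.1, 1.0, 10.0])

print(f"G_inf from full window : {g_inf_full:.3f}")
print(f"G_inf from 10 s window : {g_inf_short:.3f}")
```

In the truncated fit, relaxation that has not yet occurred within the window is absorbed into G∞, so the long-term modulus is overestimated. This is the mechanism behind the uncertainty noted in the final protocol step.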

Integration & The Scientist's Toolkit

The combined non-linear and viscoelastic response must often be captured for predictive simulation, typically via finite element (FE) implementation of complex constitutive laws. This integration magnifies parameter sensitivity and computational cost.

Diagram: Workflow for Constitutive Model Development & Validation

Research Reagent & Essential Materials Table

| Item | Function in Experimental Characterization |

|---|---|

| Biaxial/Tensile Testing System | Precision application of multi-axial loads/displacements; core for mechanical testing. |

| Digital Image Correlation (DIC) System | Non-contact, full-field strain measurement critical for heterogeneous tissues. |

| Second Harmonic Generation (SHG) Microscopy | Label-free imaging of collagen fiber architecture to inform anisotropic models. |

| Temperature-Controlled Hydration Chamber | Maintains tissue viability and physiological mechanical state during testing. |

| Prony Series Fitting Software (e.g., MATLAB tools, FE package optimizers) | Converts relaxation data into time constants/moduli for implementation in FE codes. |

| Finite Element Software with UMAT/VUMAT capability (e.g., Abaqus, FEBio) | Allows implementation of custom constitutive models for complex simulation. |

The non-linear and viscoelastic nature of biological tissues presents fundamental challenges to constitutive modeling, directly contributing to the epistemic uncertainty in computational biomechanics. While sophisticated models exist, their parameters are often poorly identifiable, sensitive to experimental protocols, and non-unique. A rigorous workflow integrating multi-modal experimental data, explicit uncertainty quantification, and independent validation is not merely best practice but a necessity. For researchers and drug developers, acknowledging these model limitations is crucial for interpreting in silico predictions, particularly when translating results to clinical or regulatory decision-making. Future progress hinges on developing novel experimental methods that better inform model microstructure and adopting robust Bayesian frameworks for uncertainty propagation.

Error Propagation in Multiscale and Multiphysics Simulations

Within the broader thesis on Sources of Error and Uncertainty in Computational Biomechanics Research, error propagation in multiscale and multiphysics simulations presents a paramount challenge. These simulations, essential for modeling complex physiological systems—from cellular drug interactions to whole-organ mechanics—inherently integrate disparate spatial and temporal scales coupled through biophysical laws. The propagation and amplification of errors across these scales can fundamentally compromise predictive credibility, directly impacting scientific conclusions and drug development decisions. This guide provides a technical dissection of error sources, quantification methodologies, and mitigation strategies.

Errors originate at each scale and are transmitted during information exchange.

Table 1: Primary Error Sources by Simulation Scale

| Scale | Physics/Process | Typical Numerical Method | Dominant Error Sources | Impact on Next Scale |

|---|---|---|---|---|

| Molecular (Å-µm) | Protein-ligand binding, mechanotransduction | Molecular Dynamics (MD), Brownian Dynamics | Force-field inaccuracy, sampling limitation, stochastic noise | Biased kinetic parameters, incorrect binding affinities |

| Cellular (µm) | Contraction, adhesion, signaling | Finite Element (FE), Agent-Based Models | Homogenization error, constitutive model idealism, boundary condition uncertainty | Incorrect cellular force generation and phenotypic response |

| Tissue (mm-cm) | Heterogeneous material behavior, perfusion | Continuum FE, CFD | Material property variability, geometric simplification, mesh dependency | Flawed tissue-level stress/strain and diffusion fields |

| Organ (cm-m) | Whole-organ function (e.g., heart, lung) | Coupled FE-CFD, Electromechanics | Boundary condition error, reduced-order model inaccuracy, solver convergence | Invalid clinical output (e.g., ejection fraction, pressure gradients) |

Quantifying Propagation: Methodologies and Protocols

Sensitivity Analysis (Local & Global)

Protocol: Sobol' Global Variance-Based Method

- Define Input Space: For a coupled MD-FE simulation of a drug affecting cardiac myocyte contraction, identify k uncertain inputs (e.g., ligand dissociation constant K_d, sarcomere stiffness, ion channel rate).

- Generate Quasi-Random Sample Matrix: Using a Sobol sequence, create N*(2k+2) model evaluation points, where N is large (e.g., 1,000-10,000).

- Compute Model Output: Run the multiscale simulation for each sample, recording a key output Y (e.g., peak cellular stress).

- Calculate Indices: Use variance decomposition to compute first-order (S_i) and total-effect (S_Ti) Sobol indices for each input i.

- Interpretation: High S_Ti indicates a parameter whose uncertainty (and error) propagates strongly to the output.
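The index calculation can be sketched without a dedicated UQ library. The example below uses a scrambled Sobol sequence from `scipy.stats.qmc` and the Saltelli/Jansen estimators for first-order and total-effect indices. Because only those two index types are computed, it needs N*(k+2) model runs rather than the N*(2k+2) quoted above, which also covers second-order indices. The Ishigami function is a standard analytic test case standing in for the multiscale simulation; it is not the cardiac model from the text.

```python
import numpy as np
from scipy.stats import qmc

def ishigami(x, a=7.0, b=0.1):
    """Analytic benchmark used here in place of an expensive simulation."""
    return np.sin(x[:, 0]) + a * np.sin(x[:, 1])**2 + b * x[:, 2]**4 * np.sin(x[:, 0])

def sobol_indices(model, lower, upper, m=12, seed=0):
    """First-order (S) and total-effect (ST) indices with N = 2**m base samples."""
    lower, upper = np.asarray(lower, float), np.asarray(upper, float)
    k = lower.size
    unit = qmc.Sobol(d=2 * k, scramble=True, seed=seed).random_base2(m)
    A = qmc.scale(unit[:, :k], lower, upper)   # first independent sample matrix
    B = qmc.scale(unit[:, k:], lower, upper)   # second independent sample matrix
    f_A, f_B = model(A), model(B)
    var = np.var(np.concatenate([f_A, f_B]))
    S, ST = np.empty(k), np.empty(k)
    for i in range(k):
        AB = A.copy()
        AB[:, i] = B[:, i]                     # A with column i taken from B
        f_AB = model(AB)
        S[i] = np.mean(f_B * (f_AB - f_A)) / var       # Saltelli (2010) estimator
        ST[i] = 0.5 * np.mean((f_A - f_AB)**2) / var   # Jansen estimator
    return S, ST

S, ST = sobol_indices(ishigami, lower=[-np.pi] * 3, upper=[np.pi] * 3)
for i, (s, st) in enumerate(zip(S, ST), start=1):
    print(f"x{i}: S = {s:.3f}, ST = {st:.3f}")
```

For this benchmark the third input has a first-order index of zero but a clearly non-zero total-effect index, which shows why S_Ti rather than S_i is the quantity to inspect when screening for inputs that matter through interactions.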

Table 2: Example Sobol' Indices for a Coupled Ion Channel – Myocyte Model

| Uncertain Input Parameter | Nominal Value | Range (±) | First-Order Index (S_i) | Total-Effect Index (S_Ti) |

|---|---|---|---|---|

| Max. Na+ Channel Conductance | 16 mS/µF | 20% | 0.12 | 0.18 |

| SERCA Pump Affinity (K_m) | 0.3 µM | 30% | 0.45 | 0.67 |

| Cross-Bridge Cycling Rate | 100 s⁻¹ | 25% | 0.21 | 0.31 |

| Drug-Troponin C K_d | 5.0 nM | 50% | 0.09 | 0.22 |

Forward Uncertainty Propagation (Monte Carlo)

Protocol: Non-Intrusive Stochastic Sampling

- Define Input Distributions: Characterize each critical input from Table 2 not as a range, but as a probability distribution (e.g., Gaussian for K_d, Uniform for geometric parameters).

- Sampling: Draw M (≥ 10³) random samples from the joint input distribution.

- Ensemble Simulation: Execute M multiscale simulations. Due to computational cost, this often requires a surrogate model (e.g., Gaussian Process, Polynomial Chaos) trained on a subset of runs.

- Construct Output Distribution: Analyze the M outputs to define the output uncertainty (e.g., mean ± 2 SD of predicted tissue strain).
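A minimal sketch of this sampling loop is shown below. The function `toy_tissue_strain` is a made-up algebraic stand-in for the multiscale model (or a trained surrogate), and the distribution parameters are illustrative assumptions rather than values from the text. Inputs are sampled independently, which a real study would need to justify.

```python
import numpy as np

def toy_tissue_strain(k_d, thickness):
    """Hypothetical stand-in for the multiscale simulation or its surrogate."""
    return 0.1 * thickness / (1.0 + k_d / 5.0)

rng = np.random.default_rng(42)
M = 10_000                                        # number of Monte Carlo samples

# Input distributions (illustrative): Gaussian K_d, uniform geometric parameter
k_d = rng.normal(loc=5.0, scale=0.5, size=M)      # dissociation constant, nM
thickness = rng.uniform(0.9, 1.1, size=M)         # normalized wall thickness

# Ensemble evaluation and summary of the output distribution
strain = toy_tissue_strain(k_d, thickness)
mean, sd = strain.mean(), strain.std(ddof=1)
low, high = np.percentile(strain, [2.5, 97.5])

print(f"mean strain     = {mean:.4f}")
print(f"mean +/- 2 SD   = [{mean - 2 * sd:.4f}, {mean + 2 * sd:.4f}]")
print(f"95% percentiles = [{low:.4f}, {high:.4f}]")
```

Reporting percentiles next to mean ± 2 SD is a cheap check on whether the output distribution is close enough to symmetric for the SD-based interval to be meaningful.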

Error Metric Calculation at Interfaces

Protocol: For a coupled cellular-to-tissue simulation:

- Isolate Interface: At the coupling interface (e.g., cellular tractions applied to tissue matrix), define a validation quantity Q (e.g., total force vector).

- Benchmarking: Compute Q_ref using a high-fidelity, fully resolved (but computationally prohibitive) benchmark model.

- Comparison: Compute Q_coupled from the practical multiscale simulation.

- Quantify: Calculate relative error: ε_interface = ||Q_ref - Q_coupled|| / ||Q_ref||. Track ε over simulated time.
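The interface metric is straightforward to compute once both force histories are available on the same time grid. The sketch below evaluates ε_interface per time step for arrays of shape (n_steps, 3); the function name and the force values are placeholders.

```python
import numpy as np

def interface_error(q_ref, q_coupled):
    """Relative error ||Q_ref - Q_coupled|| / ||Q_ref|| at each time step.
    Both inputs have shape (n_steps, n_components)."""
    q_ref = np.asarray(q_ref, dtype=float)
    q_coupled = np.asarray(q_coupled, dtype=float)
    return np.linalg.norm(q_ref - q_coupled, axis=-1) / np.linalg.norm(q_ref, axis=-1)

# Placeholder total-force vectors (e.g., in nN) at three time steps
q_ref = [[3.0, 4.0, 0.0], [6.0, 8.0, 0.0], [9.0, 12.0, 0.0]]
q_coupled = [[3.0, 4.0, 0.5], [6.0, 8.0, 0.5], [9.0, 12.0, 3.0]]

eps = interface_error(q_ref, q_coupled)
print("eps per step:", eps)
print("max over time:", eps.max())
```

Tracking the whole series rather than a single end-time value makes it visible whether the interface error stays bounded or grows as the coupled simulation advances.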

Visualization of Error Pathways and Workflows

Title: Error Sources in Multiscale Biomechanics Pipeline

Title: Error Propagation Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Error Analysis

| Tool/Reagent Category | Specific Example/Software | Primary Function in Error Propagation Analysis |

|---|---|---|

| Multiscale Coupling Engines | preCICE, MUSCLE3, AMBER/NAMD with OpenMM | Manages data exchange and time-stepping between scale-specific solvers, a primary source of interface error. |

| Uncertainty Quantification (UQ) Libraries | UQLab, Dakota, Chaospy | Provides robust, tested algorithms for sensitivity analysis (Sobol'), forward propagation, and surrogate modeling. |

| Surrogate Modeling | Gaussian Process (GP) tools (GPyTorch, scikit-learn), Polynomial Chaos Expansion | Creates computationally cheap emulators of expensive multiscale models to enable large Monte Carlo studies. |

| Benchmark Datasets | Living Heart Project, SPARC Portal, Protein Data Bank (PDB) | Provides reference data for validation at specific scales, enabling quantification of model error against experiment. |

| High-Performance Computing (HPC) | SLURM workload manager, MPI, CUDA | Enables the ensemble runs required for statistical error analysis through massive parallelism. |

| Visualization & Analysis | ParaView, matplotlib/seaborn, TensorBoard | Critical for interpreting complex, high-dimensional output distributions and error fields across scales. |

Mitigation Strategies

- Intrusive UQ: Employ stochastic Galerkin or collocation methods to solve for uncertain outputs directly within the solver, reducing sampling needs.

- Adaptive Mesh/Model Refinement: Use error estimators to dynamically increase resolution (spatial/temporal) or model fidelity only where needed.

- Machine Learning-Augmented Coupling: Train neural networks to predict fine-scale behavior from coarse-scale inputs, replacing expensive fine-scale solvers at the interface after rigorous validation.

- Strong Validation Hierarchies: Establish "gold-standard" results at each scale (molecular, cellular, tissue) to prevent error accumulation, using the toolkit in Table 3.

In computational biomechanics, trust in predictions for drug efficacy or surgical planning hinges on rigorous characterization of error propagation. A systematic approach—combining sensitivity analysis, forward propagation with surrogate modeling, and strategic mitigation—is non-optional. By integrating the protocols and tools outlined here, researchers can bound uncertainties, improving the reliability of multiscale and multiphysics simulations as a decisive tool in biomedical research and development.

This technical guide explores critical sources of error and uncertainty within computational biomechanics, framed by three case studies. These errors, if unquantified, can significantly compromise the predictive power of models used in pharmaceutical, medical device, and clinical applications.

Error in Targeted Drug Delivery: Nanoparticle Transport in Tumoral Vasculature

Targeted drug delivery via nanoparticles relies on computational fluid dynamics (CFD) and particle-tracking models to predict deposition efficiency. Key uncertainties arise from geometrical and biophysical assumptions.

Key Experimental Protocol: In Silico-In Vitro Validation of Nanoparticle Adhesion

- Geometry Reconstruction: Generate a 3D model of tumoral vasculature from segmented micro-CT scans of a mouse xenograft tumor.

- Mesh Sensitivity Analysis: Create three computational meshes (coarse: ~500k elements; medium: ~2M elements; fine: ~8M elements). Solve for blood flow (laminar, non-Newtonian) and record wall shear stress (WSS) at five critical bifurcations.

- Particle Tracking: Inject 10,000 ligand-coated nanoparticle models (100 nm diameter) upstream. Model adhesion using a stochastic adhesion model (Bell's kinetic model) with parameters for ligand-receptor binding affinity (k_on, k_off).

- Uncertainty Propagation: Vary critical input parameters (blood viscosity ±15%, ligand density ±20%, receptor density ±30%) using a Latin Hypercube Sampling of 100 runs.

- In Vitro Validation: Use a microfluidic device with endothelial cells expressing target receptors. Perfuse fluorescent nanoparticles under controlled flow. Measure adhesion density via fluorescence microscopy for comparison.
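The uncertainty propagation step above can be sketched with a small Latin Hypercube sampler. This is a minimal standard-library illustration, not the sampling code used in the protocol: the function name `latin_hypercube` is hypothetical, inputs are assumed to be uniformly distributed, and each sample is expressed as a multiplier on the baseline value.

```python
import random

def latin_hypercube(n_runs, bounds, seed=0):
    """Return n_runs parameter sets. Each input range is split into n_runs
    equal strata, and every stratum is sampled exactly once per input."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        strata = list(range(n_runs))
        rng.shuffle(strata)  # random pairing of strata across inputs
        width = (high - low) / n_runs
        columns.append([low + (s + rng.random()) * width for s in strata])
    # Transpose so that each row is one simulation's parameter set.
    return [list(row) for row in zip(*columns)]

# Multipliers on baseline values, using the ranges stated in the protocol.
bounds = [
    (0.85, 1.15),  # blood viscosity, +/-15%
    (0.80, 1.20),  # ligand density, +/-20%
    (0.70, 1.30),  # receptor density, +/-30%
]
samples = latin_hypercube(100, bounds)
print(len(samples), "runs; first parameter set:", samples[0])
```

Each row would then be passed to one CFD and particle-tracking run. Compared with plain random sampling, the stratification guarantees that all 100 sub-intervals of every input are visited, which is why a modest run count can still cover the input space.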

| Uncertainty Source | Baseline Value | Tested Range | Resulting Variation in Predicted Adhesion Density (%) | Key Reference (Example) |

|---|---|---|---|---|

| Vascular Geometry Segmentation | N/A | 3 different segmentation thresholds | ± 45% | Smith et al. (2023)* |

| Computational Mesh Density | 2 million elements | 0.5M to 8M elements | ± 22% (WSS), ± 31% (adhesion) | - |

| Ligand-Receptor Binding Off-rate (k_off) | 1.0 s⁻¹ | 0.5 - 2.0 s⁻¹ | ± 210% | - |

| Blood Rheology (Viscosity Model) | Carreau model | Newtonian vs. Carreau | ± 18% (WSS) | - |

| Tumor Interstitial Fluid Pressure | 15 mmHg | 5 - 30 mmHg | ± 60% (Transvascular flow) | Jain & Stylianopoulos (2022) |

Note: Example references are illustrative.

Figure: Error Propagation in Nanoparticle Delivery Simulation
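The mesh-density entry in the table above compares solutions on 0.5M, 2M, and 8M element meshes. One common way to turn three such solutions into a discretization error estimate is Richardson extrapolation combined with Roache's grid convergence index (GCI). The sketch below is an illustration under stated assumptions: the WSS values are hypothetical placeholders rather than results from this study, the refinement ratio is taken as constant, and convergence is assumed to be monotonic.

```python
import math

def grid_convergence_index(f_coarse, f_medium, f_fine, refinement_ratio,
                           safety_factor=1.25):
    """Observed order, Richardson-extrapolated value, and fine-mesh GCI
    for three solutions on meshes with a constant refinement ratio."""
    d_coarse = f_coarse - f_medium
    d_fine = f_medium - f_fine
    if d_fine == 0 or d_coarse / d_fine <= 1:
        raise ValueError("requires monotonic convergence toward a limit")
    order = math.log(d_coarse / d_fine) / math.log(refinement_ratio)
    factor = refinement_ratio ** order - 1.0
    extrapolated = f_fine + (f_fine - f_medium) / factor
    gci_fine = safety_factor * abs((f_medium - f_fine) / f_fine) / factor
    return order, extrapolated, gci_fine

# In 3D, the effective refinement ratio is the cube root of the element ratio.
ratio = (8e6 / 2e6) ** (1.0 / 3.0)  # same for 2M/0.5M

# Hypothetical wall shear stress values (Pa) at one bifurcation.
wss = {"coarse": 1.80, "medium": 1.95, "fine": 2.00}
order, wss_extrapolated, gci = grid_convergence_index(
    wss["coarse"], wss["medium"], wss["fine"], ratio)
print(f"observed order = {order:.2f}")
print(f"extrapolated WSS = {wss_extrapolated:.3f} Pa, GCI = {100 * gci:.2f}%")
```

The GCI is a relative error band on the fine-mesh result; reporting it alongside each WSS value keeps numerical error separate from the parametric uncertainties in the rest of the table.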

Research Reagent Solutions Toolkit

| Item | Function in Research |

|---|---|

| Poly(lactic-co-glycolic acid) (PLGA) Nanoparticles | Biodegradable, FDA-approved polymer for controlled drug release; surface can be conjugated with targeting ligands. |

| RGD Peptide Conjugates | Ligand targeting αvβ3 integrins overexpressed on tumor endothelial cells. |

| Microfluidic Tumor-on-a-Chip Devices | In vitro platform with endothelialized channels for validating flow and adhesion predictions under controlled parameters. |

| Fluorescent Dye (e.g., Cy5.5, DiR) | Encapsulated or conjugated to nanoparticles for quantitative tracking via fluorescence microscopy or IVIS imaging. |

| Shear-Responsive Cell Culture Media | Media formulations designed to maintain cell phenotype under the fluid shear stress conditions used in flow adhesion assays. |

Error in Patient-Specific Prosthesis Design: Cementless Hip Stem Primary Stability

Finite Element Analysis (FEA) predicts bone-implant micromotion and stress shielding. Errors in material properties and boundary conditions directly impact predictions of osseointegration or risk of aseptic loosening.

Key Experimental Protocol: Ex Vivo Biomechanical Testing for FEA Validation

- Specimen & Imaging: Obtain n=6 paired human cadaveric femora. Perform QCT scans at 90 µm resolution.

- Material Mapping: Convert QCT Hounsfield Units to bone mineral density (BMD), then to heterogeneous, orthotropic elastic material properties using validated density-elasticity relationships.

- FEA Modeling: Generate patient-specific FEA models. Apply a simulated stair-climbing load (~2500 N, 15° adduction). Simulate press-fit implantation with a nominal 50 µm interference fit. Calculate bone-implant interface micromotion.

- Experimental Testing: Implant the same stem design in the paired cadaveric bone. Mount in a materials testing system. Apply the identical stair-climbing load. Measure micromotion at 4 critical locations (e.g., proximal-medial, distal-lateral) using digital image correlation (DIC) or linear variable differential transformers (LVDTs).

- Error Quantification: Statistically compare predicted vs. measured micromotion (mean absolute error, correlation coefficient). Perform a sensitivity analysis on interference fit (±25 µm), friction coefficient (0.2-0.8), and trabecular bone modulus (±30%).
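The error quantification step above can be sketched as follows. This is a minimal standard-library illustration: the function name `validation_metrics` is hypothetical, and the micromotion values are made-up placeholders chosen only to show the arithmetic, not data from the cadaveric tests.

```python
import math

def validation_metrics(predicted, measured):
    """Mean absolute error, root mean square error, and Pearson correlation
    between paired predicted and measured values."""
    n = len(measured)
    errors = [p - m for p, m in zip(predicted, measured)]
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    mean_p = sum(predicted) / n
    mean_m = sum(measured) / n
    cov = sum((p - mean_p) * (m - mean_m) for p, m in zip(predicted, measured))
    ss_p = sum((p - mean_p) ** 2 for p in predicted)
    ss_m = sum((m - mean_m) ** 2 for m in measured)
    r = cov / math.sqrt(ss_p * ss_m)
    return mae, rmse, r

# Hypothetical micromotion values (micrometres) at four measurement sites.
fea_predicted = [30.0, 45.0, 60.0, 80.0]
ex_vivo_measured = [35.0, 40.0, 70.0, 75.0]

mae, rmse, r = validation_metrics(fea_predicted, ex_vivo_measured)
print(f"MAE = {mae:.2f} um, RMSE = {rmse:.2f} um, r = {r:.3f}, r^2 = {r * r:.3f}")
```

MAE and RMSE describe the size of the disagreement in physical units, while the correlation only describes whether predictions and measurements rise and fall together, so a high correlation can coexist with a large systematic offset. Reporting both avoids that blind spot.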

| Parameter | Nominal Value | Physiologic/Manufacturing Range | Effect on Peak Micromotion | Validation Discrepancy (FEA vs. Ex Vivo) |

|---|---|---|---|---|

| Bone-Implant Friction Coefficient | 0.5 | 0.2 - 0.8 | -35% to +50% | Root Mean Square Error: ~25 µm |

| Interference Fit | 50 µm | 25 - 75 µm | -40% to +65% | - |

| Trabecular Bone Elastic Modulus | Site-specific from QCT | ± 30% (Density-Elasticity law uncertainty) | ± 20% | Correlation (R²): 0.71 |

| Cortical Bone Thickness | From QCT segmentation | ± 1 voxel (±90 µm) | ± 15% | - |

| Loading Magnitude & Direction | 2500 N, 15° adduction | ± 10% Force, ± 5° direction | ± 30% | - |

Figure: Uncertainty Sources in Hip Stem FEA Workflow

Error in Surgical Simulation: Soft Tissue Deformation for Liver Resection Planning

Real-time surgical simulation for training and planning requires balancing computational speed with biomechanical accuracy. Errors in constitutive model selection and parameter identification affect the fidelity of force feedback and visual deformation.

Key Experimental Protocol: Constitutive Model Calibration via Biaxial Testing

- Tissue Harvest: Obtain fresh porcine liver tissue (n=10). Cut square samples (20 × 20 mm) with known fiber orientation (parallel to capsule).

- Mechanical Testing: Mount sample in a biaxial testing system. Pre-condition with 10 cycles. Perform equibiaxial and non-equibiaxial stretch protocols (up to 15% strain). Record force on both axes and full-field strain via DIC.

- Model Fitting: Fit three hyperelastic constitutive models (Neo-Hookean, Fung orthotropic, Ogden) to the stress-strain data using nonlinear least squares optimization. Compare goodness-of-fit (R², AIC).

- Real-Time Simulation: Implement the best-performing model in a Mass-Spring-Damper (MSD) and a Finite Element (FE) framework within a real-time simulation engine (e.g., SOFA, Unity+GPU). Simulate a probe indentation to 10 mm depth.

- Validation: Compare simulated reaction force and deformation field against an ex vivo indentation test on a whole liver using a robotic arm with a force sensor.
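The model fitting and comparison step above can be illustrated with a deliberately reduced example. The sketch assumes an incompressible material under equibiaxial stretch, for which a one-term Ogden model gives the Cauchy stress σ = μ(λ^α − λ^(−2α)) and the neo-Hookean model is the special case α = 2. The stress data are synthetic, the function names are hypothetical, and a grid search over α with a closed-form μ stands in for a general nonlinear least-squares optimizer. AIC is computed in its least-squares form, n·ln(RSS/n) + 2k.

```python
import math

def fit_one_term(stretches, stresses, alpha):
    """Least-squares mu for sigma = mu * (lam**alpha - lam**(-2*alpha));
    the model is linear in mu, so the optimum is closed-form."""
    g = [lam ** alpha - lam ** (-2.0 * alpha) for lam in stretches]
    mu = sum(s * gi for s, gi in zip(stresses, g)) / sum(gi * gi for gi in g)
    rss = sum((s - mu * gi) ** 2 for s, gi in zip(stresses, g))
    return mu, rss

def score(rss, stresses, n_params):
    """Return (R^2, AIC) for a fit with the given residual sum of squares."""
    n = len(stresses)
    mean = sum(stresses) / n
    tss = sum((s - mean) ** 2 for s in stresses)
    return 1.0 - rss / tss, n * math.log(rss / n) + 2 * n_params

# Synthetic equibiaxial data (kPa) up to 15% stretch, with small alternating noise.
stretches = [1.0 + 0.015 * i for i in range(1, 11)]
stresses = [0.5 * (lam ** 12 - lam ** -24) + 0.005 * (-1) ** i
            for i, lam in enumerate(stretches)]

# Neo-Hookean: alpha fixed at 2, one free parameter (mu).
mu_nh, rss_nh = fit_one_term(stretches, stresses, alpha=2.0)
r2_nh, aic_nh = score(rss_nh, stresses, n_params=1)

# One-term Ogden: grid search over alpha, two free parameters (mu, alpha).
candidates = [0.5 * j for j in range(2, 41)]  # 1.0, 1.5, ..., 20.0
alpha_og, (mu_og, rss_og) = min(
    ((a, fit_one_term(stretches, stresses, a)) for a in candidates),
    key=lambda item: item[1][1])
r2_og, aic_og = score(rss_og, stresses, n_params=2)

print(f"Neo-Hookean: mu={mu_nh:.3f}, R2={r2_nh:.4f}, AIC={aic_nh:.1f}")
print(f"Ogden (1 term): mu={mu_og:.3f}, alpha={alpha_og}, R2={r2_og:.4f}, AIC={aic_og:.1f}")
```

Because AIC penalizes each extra parameter, the lower value indicates the preferred model only when the improvement in fit outweighs the added complexity, which is the reason for reporting it next to R².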

| Constitutive Model | Number of Parameters | Goodness-of-Fit (R²) | Computation Time for Real-Time Step (ms) | Force Feedback Error vs. Experiment |

|---|---|---|---|---|

| Neo-Hookean | 2 | 0.67 | 0.5 | > 45% |

| Fung Orthotropic | 6 | 0.92 | 8.2 | < 15% |

| Ogden (3rd order) | 6 | 0.94 | 12.7 | < 12% |

| Quasi-Linear Viscoelastic (QLV) | 9+ | 0.96 | > 50 (Not Real-Time) | < 8% |

Figure: Accuracy-Speed Trade-off in Surgical Simulation

The Scientist's Toolkit: Surgical Simulation & Validation

| Item | Function in Research |

|---|---|

| Biaxial Testing System | Applies controlled, independent loads along two perpendicular axes to characterize anisotropic soft tissue properties. |

| Digital Image Correlation (DIC) System | Non-contact optical method to measure full-field 3D deformation and strain on tissue surface during testing. |

| Hyperelastic Constitutive Model Libraries | Pre-implemented models (e.g., Neo-Hookean, Mooney-Rivlin, Ogden) in FEA software (Abaqus, FEBio) for fitting to experimental data. |

| Real-Time Physics Engines (SOFA, Unity with NVIDIA FleX) | Software frameworks optimized for simulating deformable bodies and collisions at haptic refresh rates (>500 Hz). |

| Robotic Actuator with 6-DOF Force/Torque Sensor | Provides precise, repeatable mechanical indentation and force measurement for validating simulated force feedback. |

Strategies for Robust Models: Troubleshooting and Reducing Computational Uncertainty

Within computational biomechanics research, models aim to predict physiological responses to mechanical forces, implant performance, or drug delivery dynamics. However, predictions are inherently affected by sources of error and uncertainty. These include parametric uncertainty (e.g., tissue material properties), model structure error (simplified geometry or physics), and numerical error (discretization, solver tolerance). Sensitivity Analysis (SA) is the primary methodology to quantify how uncertainty in model inputs contributes to uncertainty in outputs, thereby identifying dominant error sources. This guide details local and global SA techniques tailored for computational biomechanics.

Theoretical Foundation: Local vs. Global Sensitivity

Local Sensitivity Analysis evaluates the effect of small perturbations of an input parameter around a nominal value, typically computed via partial derivatives (e.g., \( S_i = \frac{\partial y}{\partial x_i} \)). It is computationally efficient but only valid within a localized region of the input space.

Global Sensitivity Analysis apportions the output variance to the input uncertainties across their entire possible ranges. Key methods include:

- Variance-Based Methods (Sobol' Indices): Compute first-order (\(S_i\)) and total-order (\(S_{Ti}\)) indices. \(S_i\) measures the contribution of input \(x_i\) alone, while \(S_{Ti}\) includes all variance caused by \(x_i\) and its interactions with other inputs.

- Regression-Based Methods: Use standardized regression coefficients (SRC) on data from a sampling design.

- Elementary Effect Tests (Morris Method): A screening method to rank parameter importance at moderate computational cost.

The choice between local and global SA depends on the model's linearity, computational expense, and study objectives (screening vs. quantitative variance apportionment).
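As an illustration of the screening end of this spectrum, the sketch below computes elementary effects in the spirit of the Morris method. It is a simplified variant under stated assumptions: it uses a radial one-at-a-time design from random base points rather than Morris's original trajectory design, the function name `screen_elementary_effects` is hypothetical, and the toy model stands in for an expensive biomechanical simulation.

```python
import random
import statistics

def screen_elementary_effects(model, bounds, n_repeats=20, delta=0.1, seed=0):
    """Return (mu_star, sigma) per input: the mean absolute elementary effect
    and the standard deviation of the effects, in unit-cube scaled inputs."""
    rng = random.Random(seed)
    k = len(bounds)

    def scale(unit):
        return [lo + u * (hi - lo) for u, (lo, hi) in zip(unit, bounds)]

    effects = [[] for _ in range(k)]
    for _ in range(n_repeats):
        # Base point chosen so that a +delta step stays inside the unit cube.
        base = [rng.uniform(0.0, 1.0 - delta) for _ in range(k)]
        y_base = model(scale(base))
        for i in range(k):
            stepped = list(base)
            stepped[i] += delta  # perturb one input at a time
            effects[i].append((model(scale(stepped)) - y_base) / delta)
    mu_star = [statistics.mean([abs(e) for e in ee]) for ee in effects]
    sigma = [statistics.stdev(ee) for ee in effects]
    return mu_star, sigma

def toy_model(x):
    # Hypothetical response: linear in x[0], nonlinear in x[1], independent of x[2].
    return 10.0 * x[0] + x[1] ** 2

mu_star, sigma = screen_elementary_effects(toy_model, [(0.0, 1.0)] * 3)
for i, (m, s) in enumerate(zip(mu_star, sigma)):
    print(f"x{i}: mu* = {m:.3f}, sigma = {s:.3f}")
```

A large mu* flags an influential input, while a large sigma relative to mu* suggests nonlinearity or interactions. Inputs with mu* near zero are candidates for fixing at nominal values before a more expensive variance-based analysis.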

Error sources can be categorized as follows:

| Category | Specific Source | Typical Magnitude/Range (Example) | Impact on Output |

|---|---|---|---|

| Parametric | Young's Modulus of Bone | Cortical: 10-20 GPa (±30% variability) | High impact on stress/strain fields. |

| Parametric | Soft Tissue Hyperelastic Constants (e.g., Mooney-Rivlin C1, C2) | Can vary >100% across specimens | Critical for large deformation analysis. |

| Parametric | Boundary Conditions (Load magnitude/direction) | Often ±10-20% of estimated in vivo load | Directly alters model response. |

| Model Structure | Geometric Simplification (e.g., omitting trabeculae) | Qualitative/Non-quantifiable | Alters stress concentrations and pathways. |

| Model Structure | Material Model Choice (Linear vs. Poroelastic) | Model-form error | Affects time-dependent responses. |

| Numerical | Finite Element Mesh Density | Solution change <2% for 10x elements | Convergence required for reliability. |

| Numerical | Solver Tolerance/Time Step | Energy error <0.1% for dynamic analysis | Affects stability and accuracy. |

Experimental Protocols for SA in Biomechanics

Protocol 4.1: Local SA via Finite Difference

- Define Nominal Model: Establish a converged, validated baseline simulation.

- Select Parameters: Choose n parameters of interest (e.g., E1, E2, load P).

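- Perturb: For each parameter \(x_i\), run simulations at \(x_i \pm \Delta x_i\) (e.g., \(\Delta x_i = 1\%\) of \(x_i\)), holding others constant.

- Compute Sensitivity: For a scalar output \(Q\) (e.g., max principal strain), calculate \( \frac{\partial Q}{\partial x_i} \approx \frac{Q(x_i + \Delta x_i) - Q(x_i)}{\Delta x_i} \). Because both perturbed runs are available, the central difference \( \frac{Q(x_i + \Delta x_i) - Q(x_i - \Delta x_i)}{2 \Delta x_i} \) can be used instead; it is second-order accurate in \(\Delta x_i\).

- Normalize: Compute normalized sensitivity coefficients: \( S_i^{\text{norm}} = \frac{\partial Q}{\partial x_i} \cdot \frac{x_i}{Q} \).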
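Protocol 4.1 can be sketched in a few lines. As a stand-in for a finite element run, the example uses the closed-form tip deflection of a cantilever beam, δ = PL³/(3EI), so the result can be checked by hand. The function names and nominal values are hypothetical, and the derivative is estimated with a central difference built from the plus and minus runs of the perturbation step.

```python
def tip_deflection(p):
    """Cantilever tip deflection, delta = P L^3 / (3 E I).
    Stand-in for an expensive simulation returning a scalar output Q."""
    return p["P"] * p["L"] ** 3 / (3.0 * p["E"] * p["I"])

def normalized_sensitivities(model, nominal, rel_step=0.01):
    """Normalized local sensitivity (dQ/dx_i) * (x_i / Q) for each input,
    perturbing one parameter at a time by +/- rel_step of its nominal value."""
    q0 = model(nominal)
    result = {}
    for name, x0 in nominal.items():
        dx = rel_step * x0
        up = dict(nominal, **{name: x0 + dx})
        down = dict(nominal, **{name: x0 - dx})
        dq_dx = (model(up) - model(down)) / (2.0 * dx)  # central difference
        result[name] = dq_dx * x0 / q0
    return result

# Hypothetical nominal values: load (N), length (m), modulus (Pa), inertia (m^4).
nominal = {"P": 100.0, "L": 0.2, "E": 17e9, "I": 1.0e-9}
sens = normalized_sensitivities(tip_deflection, nominal)
for name, s in sens.items():
    print(f"{name}: {s:+.3f}")
```

The normalized coefficients come out close to +1 for P, +3 for L, and −1 for E and I, matching the exponents in the formula. A normalized sensitivity of 3 means a 1% error in length produces roughly a 3% error in deflection, which makes the ranking directly comparable across inputs with different units.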

Protocol 4.2: Global SA via Sobol' Indices (Using Monte Carlo)

- Define Input Distributions: Assign probability distributions (e.g., uniform, normal) to each uncertain input parameter based on experimental data.

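- Generate Sample Matrices: Create two \(N \times k\) random sample matrices A and B (N = 1000-10000, k = number of parameters). Using Saltelli's extension, generate a set of \(N(2k+2)\) sample points (one model evaluation each), which also supports second-order indices; \(N(k+2)\) evaluations suffice if only first- and total-order indices are needed.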

- Run Ensemble Simulations: Execute the computational model (e.g., FE solver) for each sample row to compute the output quantity of interest.

- Calculate Indices: Use the model outputs to compute:

- First-Order Sobol' Index: \( S_i = V[E(Q \mid x_i)] / V(Q) \)

- Total-Order Index: \( S_{Ti} = 1 - V[E(Q \mid x_{\sim i})] / V(Q) \), where \(V\) denotes variance, \(E\) the expectation, and \(x_{\sim i}\) all inputs except \(x_i\).

- Identify Dominant Sources: Parameters with high \(S_i\) or \(S_{Ti}\) are dominant variance contributors.
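Protocol 4.2 can be sketched with the standard library alone. The example below is an illustration, not a replacement for a dedicated SA library: it draws plain pseudo-random samples instead of a quasi-random sequence, uses the Saltelli (2010) estimator for first-order indices and the Jansen estimator for total-order indices (both choices are assumptions of this sketch), and requires N(k+2) model evaluations. The linear toy model is hypothetical and was chosen because its indices are known analytically.

```python
import random

def sobol_indices(model, bounds, n=20000, seed=0):
    """Monte Carlo estimates of first-order and total-order Sobol' indices
    for independent, uniformly distributed inputs."""
    rng = random.Random(seed)
    k = len(bounds)

    def draw():
        return [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n)]

    A, B = draw(), draw()
    f_a = [model(x) for x in A]
    f_b = [model(x) for x in B]
    pooled = f_a + f_b
    mean = sum(pooled) / len(pooled)
    variance = sum((y - mean) ** 2 for y in pooled) / (len(pooled) - 1)

    first, total = [], []
    for i in range(k):
        # A with column i taken from B: only input i differs from the A rows.
        f_ab = [model(a[:i] + [b[i]] + a[i + 1:]) for a, b in zip(A, B)]
        v_first = sum(fb * (fab - fa) for fa, fb, fab in zip(f_a, f_b, f_ab)) / n
        v_total = sum((fa - fab) ** 2 for fa, fab in zip(f_a, f_ab)) / (2 * n)
        first.append(v_first / variance)
        total.append(v_total / variance)
    return first, total

def toy_model(x):
    # Hypothetical additive model; analytic indices are 16/21, 4/21, 1/21.
    return 4.0 * x[0] + 2.0 * x[1] + 1.0 * x[2]

first, total = sobol_indices(toy_model, [(-1.0, 1.0)] * 3)
for i, (s, st) in enumerate(zip(first, total)):
    print(f"x{i}: S = {s:.3f}, S_T = {st:.3f}")
```

For this additive model the first-order and total-order indices coincide; in a real biomechanical model, a total-order index noticeably larger than the first-order index indicates that the parameter acts mainly through interactions with other inputs.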

Workflow Diagram: Integrating SA into Biomechanics Research

Figure: SA Workflow for Biomechanics Error Source Identification

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in SA for Computational Biomechanics |

|---|---|

| Finite Element Software (FEBio, ABAQUS, COMSOL) | Core platform for executing biomechanical simulations. Enables parametric scripting for batch runs. |

| SA Dedicated Libraries (SALib, Dakota, UQLab) | Provide off-the-shelf implementations of Sobol', Morris, and other SA methods for sample generation and index calculation. |

| High-Performance Computing (HPC) Cluster | Essential for running the thousands of simulations required for global SA of complex FE models. |